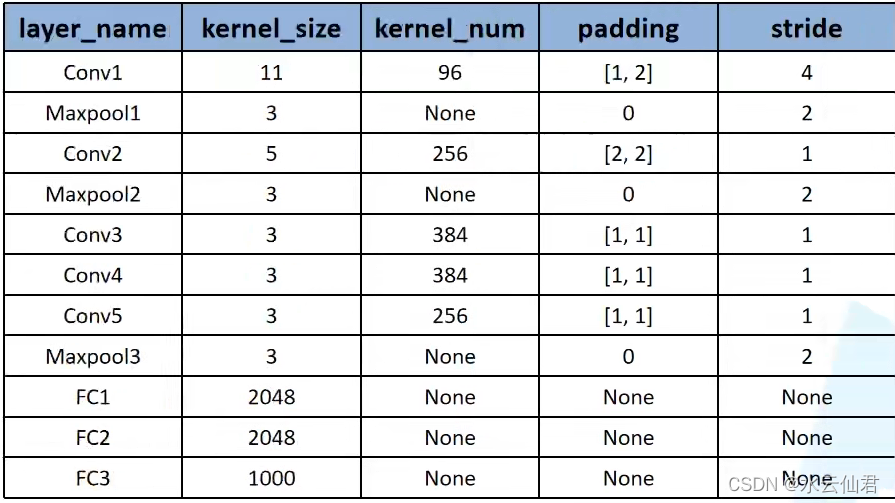

AlexNet Explained

model.py

'''
python3.7
-*- coding: UTF-8 -*-
@Project -> File :pythonProject -> model
@IDE :PyCharm
@Author :YangShouWei
@USER: 296714435
@Date :2022/2/19 18:20:28
@LastEditor:
'''

import torch
import torch.nn as nn


class AlexNet(nn.Module):
    def __init__(self, num_classes=1000, init_weights=False):
        super(AlexNet, self).__init__()
        # Feature extractor: five conv layers with three max-pooling stages.
        self.features = nn.Sequential(
            nn.Conv2d(3, 48, kernel_size=11, stride=4, padding=2),  # input [3, 224, 224], output [48, 55, 55]
            nn.ReLU(inplace=True),
            nn.MaxPool2d(kernel_size=3, stride=2),                  # output [48, 27, 27]
            nn.Conv2d(48, 128, kernel_size=5, padding=2),           # output [128, 27, 27]
            nn.ReLU(inplace=True),
            nn.MaxPool2d(kernel_size=3, stride=2),                  # output [128, 13, 13]
            nn.Conv2d(128, 192, kernel_size=3, padding=1),          # output [192, 13, 13]
            nn.ReLU(inplace=True),
            nn.Conv2d(192, 192, kernel_size=3, padding=1),          # output [192, 13, 13]
            nn.ReLU(inplace=True),
            nn.Conv2d(192, 128, kernel_size=3, padding=1),          # output [128, 13, 13]
            nn.ReLU(inplace=True),
            nn.MaxPool2d(kernel_size=3, stride=2),                  # output [128, 6, 6]
        )
        # Classifier: three fully connected layers with dropout before the first two.
        self.classifier = nn.Sequential(
            nn.Dropout(p=0.5),
            nn.Linear(128 * 6 * 6, 2048),
            nn.ReLU(inplace=True),
            nn.Dropout(p=0.5),
            nn.Linear(2048, 2048),
            nn.ReLU(inplace=True),
            nn.Linear(2048, num_classes),
        )
        if init_weights:
            self._initialize_weights()

    def forward(self, x):
        x = self.features(x)
        # Flatten [batch, channel, height, width] starting at dim 1 (channel):
        # C, H and W collapse into one vector; the batch dimension is left unchanged.
        x = torch.flatten(x, start_dim=1)
        x = self.classifier(x)
        return x

    def _initialize_weights(self):
        # self.modules() is inherited from nn.Module and returns an iterator
        # over the modules of the network, including every layer defined above.
        for m in self.modules():
            if isinstance(m, nn.Conv2d):
                # Convolutional layer: initialize the weights with Kaiming normal.
                nn.init.kaiming_normal_(m.weight, mode='fan_out', nonlinearity='relu')
                if m.bias is not None:
                    nn.init.constant_(m.bias, 0)
            elif isinstance(m, nn.Linear):
                # Fully connected layer: normal weights (mean 0, std 0.01), zero bias.
                nn.init.normal_(m.weight, 0, 0.01)
                nn.init.constant_(m.bias, 0)
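The shape comments in `self.features` can be checked by hand with the usual output-size formula for convolution and pooling, `floor((size + 2 * padding - kernel) / stride) + 1`. The sketch below is only an illustration in plain Python and does not need PyTorch. The names `conv_out`, `LAYERS`, and `trace_shapes` are hypothetical helpers that are not part of the original `model.py`. It assumes a dilation of 1 and floor rounding, which are the PyTorch defaults for `nn.Conv2d` and `nn.MaxPool2d`.

```python
def conv_out(size: int, kernel: int, stride: int = 1, padding: int = 0) -> int:
    """Spatial output size of a conv or pooling layer (dilation 1, floor rounding)."""
    return (size + 2 * padding - kernel) // stride + 1


# Hypothetical table: (name, out_channels or None for pooling, kernel, stride, padding),
# copied by hand from the layers in self.features.
LAYERS = [
    ("conv1", 48, 11, 4, 2),
    ("pool1", None, 3, 2, 0),
    ("conv2", 128, 5, 1, 2),
    ("pool2", None, 3, 2, 0),
    ("conv3", 192, 3, 1, 1),
    ("conv4", 192, 3, 1, 1),
    ("conv5", 128, 3, 1, 1),
    ("pool3", None, 3, 2, 0),
]


def trace_shapes(channels: int = 3, size: int = 224):
    """Return (name, channels, height, width) after each layer for a square input."""
    shapes = []
    for name, out_channels, kernel, stride, padding in LAYERS:
        if out_channels is not None:  # pooling keeps the channel count
            channels = out_channels
        size = conv_out(size, kernel, stride, padding)
        shapes.append((name, channels, size, size))
    return shapes


for name, c, h, w in trace_shapes():
    print(f"{name}: [{c}, {h}, {w}]")

# Number of features entering the first nn.Linear after torch.flatten.
_, c, h, w = trace_shapes()[-1]
flattened = c * h * w
print("flattened features:", flattened)
```

The last value is the `128 * 6 * 6` used as the input size of the first `nn.Linear`. Feeding a different input resolution into `trace_shapes` shows why that layer would have to change if the images were not 224 x 224.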
