Test environment:

1. Flink version: 1.13.0

2. Oracle version: 12c

3. Oracle CDC connector: 2.1.0

Core dependency

<dependency>

<groupId>com.ververica</groupId>

<artifactId>flink-connector-oracle-cdc</artifactId>

<version>2.1.0</version>

</dependency>

Full pom.xml

<?xml version="1.0" encoding="UTF-8"?>

<project xmlns="http://maven.apache.org/POM/4.0.0"

xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance"

xsi:schemaLocation="http://maven.apache.org/POM/4.0.0 http://maven.apache.org/xsd/maven-4.0.0.xsd">

<modelVersion>4.0.0</modelVersion>

<groupId>flinkTest</groupId>

<artifactId>flinkTest</artifactId>

<version>1.0-SNAPSHOT</version>

<properties>

<flink.version>1.13.0</flink.version>

<scala.version>2.12</scala.version>

</properties>

<dependencies>

<dependency>

<groupId>org.apache.flink</groupId>

<artifactId>flink-java</artifactId>

<version>${flink.version}</version>

</dependency>

<!-- https://mvnrepository.com/artifact/com.ververica/flink-connector-mysql-cdc -->

<dependency>

<groupId>com.ververica</groupId>

<artifactId>flink-connector-mysql-cdc</artifactId>

<version>2.1.0</version>

<exclusions>

<exclusion>

<artifactId>mysql-connector-java</artifactId>

<groupId>mysql</groupId>

</exclusion>

<exclusion>

<artifactId>slf4j-api</artifactId>

<groupId>org.slf4j</groupId>

</exclusion>

<!--<exclusion>

<artifactId>kafka-clients</artifactId>

<groupId>org.apache.kafka</groupId>

</exclusion>-->

<exclusion>

<artifactId>jackson-databind</artifactId>

<groupId>com.fasterxml.jackson.core</groupId>

</exclusion>

<exclusion>

<artifactId>jackson-jaxrs-json-provider</artifactId>

<groupId>com.fasterxml.jackson.jaxrs</groupId>

</exclusion>

<exclusion>

<artifactId>jaxb-api</artifactId>

<groupId>javax.xml.bind</groupId>

</exclusion>

<exclusion>

<artifactId>jetty-servlet</artifactId>

<groupId>org.eclipse.jetty</groupId>

</exclusion>

</exclusions>

</dependency>

<dependency>

<groupId>org.apache.flink</groupId>

<artifactId>flink-streaming-java_${scala.version}</artifactId>

<version>${flink.version}</version>

<exclusions>

<exclusion>

<artifactId>slf4j-api</artifactId>

<groupId>org.slf4j</groupId>

</exclusion>

</exclusions>

</dependency>

<dependency>

<groupId>org.apache.flink</groupId>

<artifactId>flink-clients_${scala.version}</artifactId>

<version>${flink.version}</version>

</dependency>

<!-- table API -->

<dependency>

<groupId>org.apache.flink</groupId>

<artifactId>flink-table-api-java-bridge_${scala.version}</artifactId>

<version>${flink.version}</version>

</dependency>

<dependency>

<groupId>org.apache.hudi</groupId>

<artifactId>hudi-flink-bundle_2.11</artifactId>

<version>0.8.0</version>

</dependency>

<dependency>

<groupId>org.apache.flink</groupId>

<artifactId>flink-hadoop-compatibility_2.11</artifactId>

<version>1.11.0</version>

</dependency>

<dependency>

<groupId>org.apache.hadoop</groupId>

<artifactId>hadoop-client</artifactId>

<version>3.1.1</version>

</dependency>

<dependency>

<groupId>org.apache.flink</groupId>

<artifactId>flink-connector-jdbc_2.12</artifactId>

<version>1.13.0</version>

</dependency>

<dependency>

<groupId>org.apache.flink</groupId>

<artifactId>flink-table-planner-blink_${scala.version}</artifactId>

<version>${flink.version}</version>

</dependency>

<dependency>

<groupId>org.slf4j</groupId>

<artifactId>slf4j-simple</artifactId>

<version>1.7.26</version>

</dependency>

<!-- connector -->

<!-- https://mvnrepository.com/artifact/org.apache.flink/flink-connector-kafka -->

<!-- <dependency>

<groupId>org.apache.flink</groupId>

<artifactId>flink-connector-kafka_2.12</artifactId>

<version>1.14.1</version>

<exclusions>

<exclusion>

<artifactId>slf4j-api</artifactId>

<groupId>org.slf4j</groupId>

</exclusion>

<exclusion>

<artifactId>snappy-java</artifactId>

<groupId>org.xerial.snappy</groupId>

</exclusion>

</exclusions>

</dependency>-->

<!-- https://mvnrepository.com/artifact/com.ververica/flink-connector-oracle-cdc -->

<dependency>

<groupId>com.ververica</groupId>

<artifactId>flink-connector-oracle-cdc</artifactId>

<version>2.1.0</version>

</dependency>

<dependency>

<groupId>org.apache.flink</groupId>

<artifactId>flink-sql-avro</artifactId>

<version>1.12.3</version>

</dependency>

<dependency>

<groupId>org.apache.flink</groupId>

<artifactId>flink-connector-hive_2.11</artifactId>

<version>1.13.0</version>

<exclusions>

<exclusion>

<artifactId>flink-connector-base</artifactId>

<groupId>org.apache.flink</groupId>

</exclusion>

</exclusions>

</dependency>

<dependency>

<groupId>mysql</groupId>

<artifactId>mysql-connector-java</artifactId>

<version>8.0.23</version>

</dependency>

<!-- other -->

<dependency>

<groupId>org.apache.flink</groupId>

<artifactId>flink-csv</artifactId>

<version>${flink.version}</version>

</dependency>

<dependency>

<groupId>org.apache.flink</groupId>

<artifactId>flink-json</artifactId>

<version>1.13.0</version>

</dependency>

<dependency>

<groupId>org.apache.flink</groupId>

<artifactId>flink-jdbc_2.12</artifactId>

<version>1.10.3</version>

<exclusions>

<exclusion>

<artifactId>force-shading</artifactId>

<groupId>org.apache.flink</groupId>

</exclusion>

</exclusions>

</dependency>

</dependencies>

<build>

<plugins>

<plugin>

<groupId>org.apache.maven.plugins</groupId>

<artifactId>maven-shade-plugin</artifactId>

<version>3.1.0</version>

</plugin>

<plugin>

<groupId>org.apache.maven.plugins</groupId>

<artifactId>maven-jar-plugin</artifactId>

<version>2.6</version>

<configuration>

<archive>

<manifest>

<addClasspath>true</addClasspath>

<classpathPrefix>lib/</classpathPrefix>

<mainClass>com.testCdC</mainClass>

</manifest>

</archive>

</configuration>

</plugin>

<plugin>

<groupId>org.apache.maven.plugins</groupId>

<artifactId>maven-dependency-plugin</artifactId>

<version>2.10</version>

<executions>

<execution>

<id>copy-dependencies</id>

<phase>package</phase>

<goals>

<goal>copy-dependencies</goal>

</goals>

<configuration>

<outputDirectory>${project.build.directory}/lib</outputDirectory>

</configuration>

</execution>

</executions>

</plugin>

<plugin>

<groupId>org.apache.maven.plugins</groupId>

<artifactId>maven-compiler-plugin</artifactId>

<configuration>

<source>8</source>

<target>8</target>

</configuration>

</plugin>

</plugins>

</build>

</project>

Main code

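// Package and class name follow the <mainClass>com.testCdC</mainClass> entry in the pom.
package com;

import org.apache.flink.streaming.api.environment.StreamExecutionEnvironment;
import org.apache.flink.table.api.TableResult;
import org.apache.flink.table.api.bridge.java.StreamTableEnvironment;

public class testCdC {

    public static void main(String[] args) throws Exception {
        StreamExecutionEnvironment env = StreamExecutionEnvironment.getExecutionEnvironment();
        StreamTableEnvironment tableEnv = StreamTableEnvironment.create(env);

        // Register the Oracle table as a CDC source.
        tableEnv.executeSql("CREATE TABLE flink (\n" +
                " id STRING,\n" +
                " name STRING,\n" +
                " age STRING,\n" +
                " sex STRING\n" +
                ") WITH (\n" +
                " 'connector' = 'oracle-cdc',\n" +
                " 'hostname' = '127.0.0.1',\n" +
                " 'port' = '6045',\n" +
                " 'username' = 'liuyun',\n" +
                " 'password' = '123456',\n" +
                " 'database-name' = 'xe',\n" +
                " 'schema-name' = 'LIUYUN',\n" +
                " 'debezium.log.mining.continuous.mine'='true',\n" +
                " 'debezium.log.mining.strategy'='online_catalog',\n" +
                " 'table-name' = 'FLINK')");

        // executeSql submits the query as its own job; print() blocks and prints change rows as they arrive.
        TableResult tableResult = tableEnv.executeSql("select * from flink");
        tableResult.print();
        // env.execute() is not called: no DataStream operators are defined here,
        // so it would fail with "No operators defined in streaming topology".
    }
}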

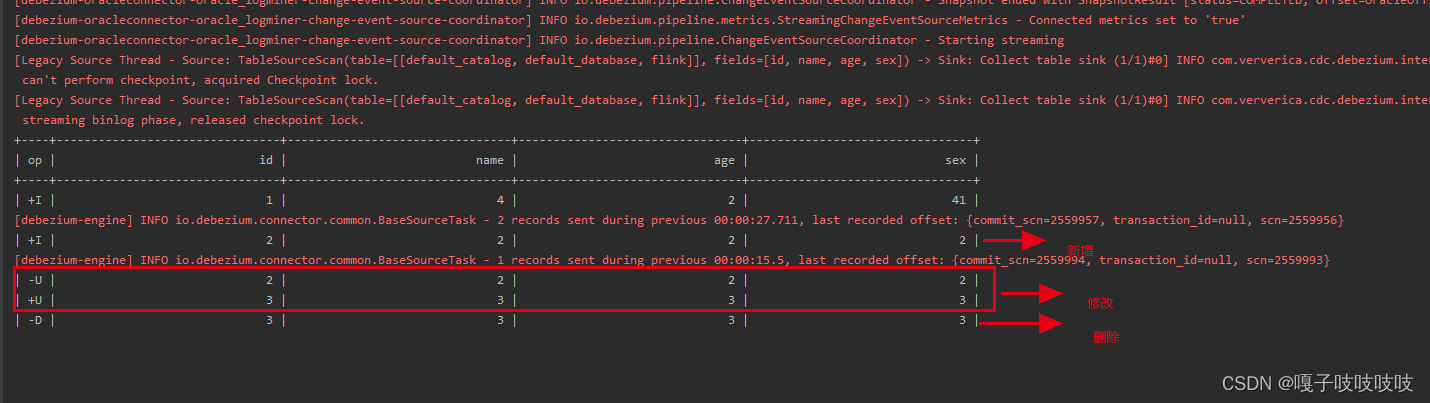

Run result

Troubleshooting

Connector documentation: https://ververica.github.io/flink-cdc-connectors/release-2.1/content/connectors/oracle-cdc.html

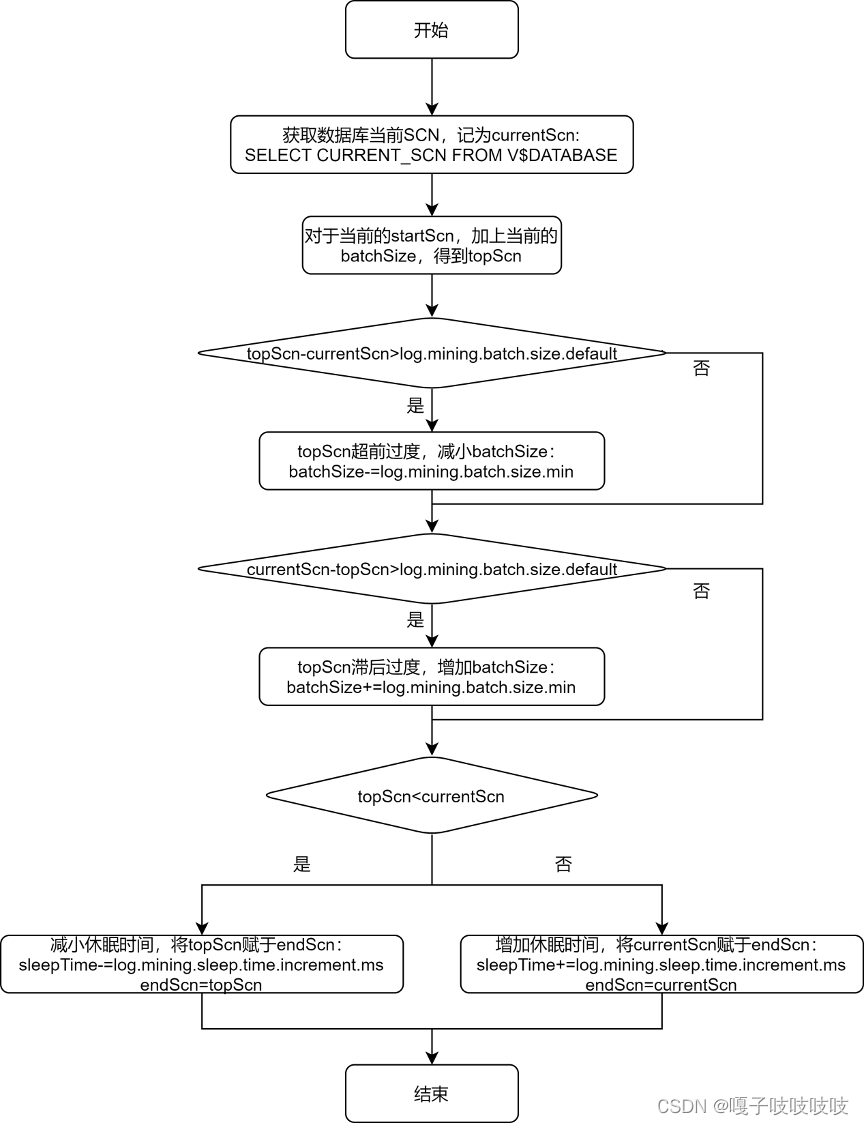

1. Data is read with a delay

Add the following two options to the WITH clause of the CREATE TABLE statement:

'debezium.log.mining.strategy'='online_catalog',

'debezium.log.mining.continuous.mine'='true'

How Debezium adjusts the mined SCN range and the sleep time between mining rounds:

For a flow chart, see:

https://mp.weixin.qq.com/s/IQiK7enF5fX0ighRE_i2sg
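
As a rough illustration of that adjustment (a simplified model, not Debezium's actual code), the sketch below shows one way a LogMiner-style reader could adapt its SCN batch size and sleep time. When the reader lags behind the database's current SCN, it mines a larger range and sleeps less. Once it has caught up, it shrinks the range and sleeps longer. The class name `MiningWindow`, its fields, and all numeric limits are assumptions chosen for illustration.

```java
/** Simplified, hypothetical model of adaptive LogMiner batching; not Debezium source code. */
final class MiningWindow {
    // Illustrative limits (assumed values, not connector defaults).
    static final long BATCH_MIN = 1_000, BATCH_MAX = 100_000, BATCH_STEP = 1_000;
    static final long SLEEP_MIN_MS = 0, SLEEP_MAX_MS = 3_000, SLEEP_STEP_MS = 200;

    long batchSize = 20_000; // number of SCNs mined per round
    long sleepMs = 1_000;    // pause between rounds

    /** Returns the end SCN of the next mining range and adjusts batch size and sleep time. */
    long nextEndScn(long startScn, long currentScn) {
        long candidate = startScn + batchSize;
        if (candidate < currentScn) {
            // Lagging behind: mine more per round and sleep less.
            batchSize = Math.min(BATCH_MAX, batchSize + BATCH_STEP);
            sleepMs = Math.max(SLEEP_MIN_MS, sleepMs - SLEEP_STEP_MS);
            return candidate;
        }
        // Caught up: mine only up to the current SCN, shrink the batch, sleep longer.
        batchSize = Math.max(BATCH_MIN, batchSize - BATCH_STEP);
        sleepMs = Math.min(SLEEP_MAX_MS, sleepMs + SLEEP_STEP_MS);
        return currentScn;
    }

    public static void main(String[] args) {
        MiningWindow w = new MiningWindow();
        long end = w.nextEndScn(0, 500_000);  // reader is far behind
        System.out.println("end=" + end + " batch=" + w.batchSize + " sleepMs=" + w.sleepMs);
        end = w.nextEndScn(end, end + 10);    // reader has caught up
        System.out.println("end=" + end + " batch=" + w.batchSize + " sleepMs=" + w.sleepMs);
    }
}
```

In the real connector the corresponding knobs are Debezium options such as `log.mining.batch.size.*` and `log.mining.sleep.time.*.ms`, passed with the `debezium.` prefix. Check the Debezium documentation for the version bundled with the connector before relying on specific names or defaults.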

2. Table not found

[ERROR] Could not execute SQL statement. Reason:

io.debezium.DebeziumException: Supplemental logging not configured for table LIUYUN.flink Use command: ALTER TABLE LIUYUN.flink ADD SUPPLEMENTAL LOG DATA (ALL) COLUMNS

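Note that the error names the table in lower case (LIUYUN.flink), although it was declared as FLINK in the CREATE statement.

See the Oracle documentation: https://docs.oracle.com/cd/E11882_01/server.112/e41084/sql_elements008.htm

To keep the table name from being lower-cased, add the following option to the CREATE statement: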

'debezium.database.tablename.case.insensitive'='false',
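
If supplemental logging really is missing for the table, the command suggested in the error message has to be run once against Oracle. The sketch below is one hedged way to do that over JDBC. The class name `SupplementalLogging` and the JDBC URL format in the comment are assumptions, the Oracle JDBC driver must be on the classpath, and the connecting user needs the privilege to alter the table.

```java
import java.sql.Connection;
import java.sql.DriverManager;
import java.sql.SQLException;
import java.sql.Statement;
import java.util.Locale;

/** Hypothetical helper that runs the command suggested by the Debezium error message. */
final class SupplementalLogging {

    /** Builds the ALTER TABLE statement; names are upper-cased, as Oracle does for unquoted identifiers. */
    static String alterTableSql(String schema, String table) {
        return "ALTER TABLE " + schema.toUpperCase(Locale.ROOT) + "." + table.toUpperCase(Locale.ROOT)
                + " ADD SUPPLEMENTAL LOG DATA (ALL) COLUMNS";
    }

    /** Executes the statement over an existing JDBC connection. */
    static void enable(Connection conn, String schema, String table) throws SQLException {
        try (Statement st = conn.createStatement()) {
            st.execute(alterTableSql(schema, table));
        }
    }

    public static void main(String[] args) throws SQLException {
        if (args.length < 3) {
            // Without connection arguments, only print the statement that would be run.
            System.out.println(alterTableSql("LIUYUN", "flink"));
            return;
        }
        // args: JDBC URL (assumed form: jdbc:oracle:thin:@host:port:sid), user, password
        try (Connection conn = DriverManager.getConnection(args[0], args[1], args[2])) {
            enable(conn, "LIUYUN", "flink");
        }
    }
}
```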

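3. Dependency conflicts

These mostly show up as conflicts between Kafka-related packages.

Resolve them in Maven, for example with <exclusions> entries like the ones in the pom above.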
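
Before adding exclusions, it can help to see which jar a conflicting class is actually loaded from at runtime. The following is a small diagnostic sketch using only the JDK; the class name `WhichJar` is hypothetical, and the Kafka class mentioned in the comment is just an example of a name you might pass in.

```java
import java.security.CodeSource;

/** Hypothetical diagnostic: reports which jar (or directory) a class was loaded from. */
final class WhichJar {

    static String locate(Class<?> type) {
        CodeSource source = type.getProtectionDomain().getCodeSource();
        // Classes that belong to the JDK itself typically have no code source.
        if (source == null || source.getLocation() == null) {
            return "(JDK / bootstrap class path)";
        }
        return source.getLocation().toString();
    }

    public static void main(String[] args) throws ClassNotFoundException {
        // Pass fully qualified class names, e.g. org.apache.kafka.clients.producer.KafkaProducer
        for (String name : args) {
            System.out.println(name + " -> " + locate(Class.forName(name)));
        }
    }
}
```

Running `mvn dependency:tree` shows which dependency pulls in each of the jars reported here, which tells you where to place the exclusion.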
