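I recently got hooked on the "Douluo Dalu" (斗罗大陆) anime, but new episodes come out too slowly, so I used what I had learned about the bs4 module to scrape the full text of the novel and read that instead. The code is as follows: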

import requests

from bs4 import BeautifulSoup

if __name__=='__main__':

#对首页的页面数据进行爬取

url='http://www.147xs.org/book/3722/'

headers={

'User-Agent':'Mozilla/5.0 (Windows NT 10.0; WOW64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/72.0.3626.81 Safari/537.36 SE 2.X MetaSr 1.0'

}

page_text=requests.get(url=url,headers=headers).text

#1.实例化BeautifulSoup对象,需要将页面源码数据加载到该对象中

soup=BeautifulSoup(page_text,'lxml')

#print(soup)

#解析章节标题和详情页url

dd_list=soup.select('.box_con>div>dl>dd')

#print(dd_list)

fp=open('./douluodalu.txt','w',encoding='utf-8')

for dd in dd_list:

title=dd.a.string

detail_url='http://www.147xs.org'+dd.a['href']

#对详情页发起请求、解析出章节内容

detail_page=requests.get(url=detail_url,headers=headers).text

#解析详情页中相关章节的内容

detail_soup=BeautifulSoup(detail_page,'lxml')

detail_tag=detail_soup.find('div',id='content')

#解析出了章节内容

content=detail_tag.text

#存储

fp.write(title+':'+content+'\n')

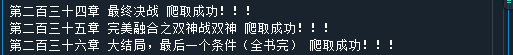

print(title,'爬取成功!!!')

运行结果:
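The script above builds each detail-page URL by prepending the site root to the `href`, which only works if every `href` is root-relative. A minimal sketch of a more tolerant approach, assuming the standard library's `urljoin` is acceptable here: the helper name `build_detail_url` and the sample `href` values are made up for illustration and are not read from the site.

```python
from urllib.parse import urljoin

# Index page URL taken from the script above.
INDEX_URL = 'http://www.147xs.org/book/3722/'


def build_detail_url(href, index_url=INDEX_URL):
    """Resolve a chapter link against the index page URL (hypothetical helper)."""
    return urljoin(index_url, href)


if __name__ == '__main__':
    # Made-up sample hrefs: root-relative, page-relative, and already absolute.
    samples = (
        '/book/3722/1.html',
        '1.html',
        'http://www.147xs.org/book/3722/1.html',
    )
    for href in samples:
        print(build_detail_url(href))
```

In the loop, `detail_url = build_detail_url(dd.a['href'])` would take the place of the string concatenation; all three sample forms resolve to the same absolute URL.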
