1. The Cloudera Manager login page (http://192.168.201.128:7180/cmf/login) cannot be opened

Many solutions to this problem can be found online (e.g. https://www.cnblogs.com/zlslch/p/7078119.html). If none of them solves your problem, try the approach below.

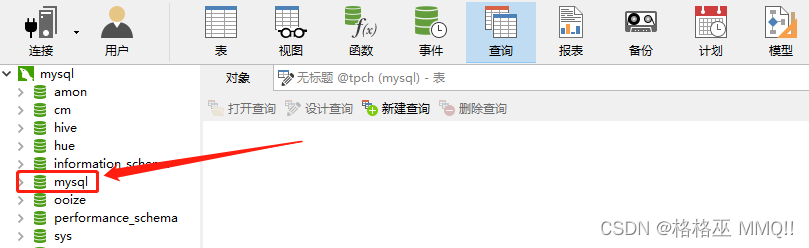

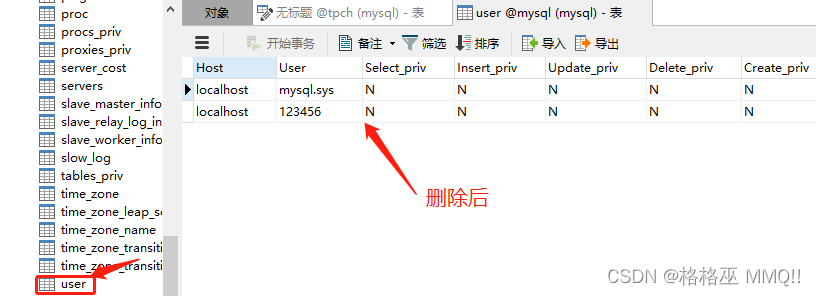

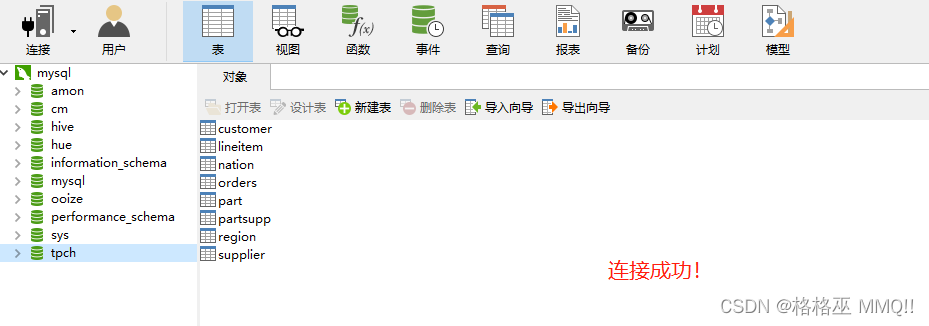

Log in to MySQL (or connect with Navicat). You will find a database named mysql (see the figure below) that contains a table named user. Delete the two records where User="root":

use mysql;

select * from user;

delete from user where User='root';

flush privileges; -- reload the grant tables so the deletion takes effect

Open http://192.168.201.128:7180/cmf/login again; the login now succeeds.

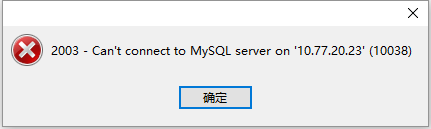

2. Connecting to MySQL with Navicat fails with: Can't connect to MySQL server on 'xxxxx' (10038)

Solution:

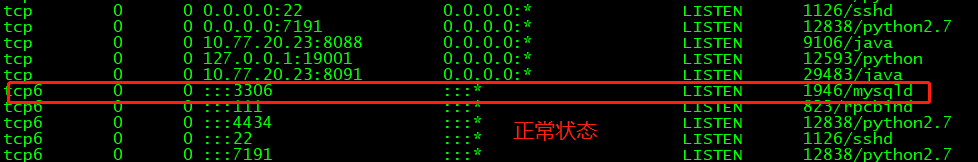

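Check the listening ports with netstat -ntpl. The figure shows the normal state; if your output differs, continue with the steps below. If netstat is not installed, install it on CentOS 7 with yum -y install net-tools.

Inspect the firewall rules. Here, traffic to port 3306 is being dropped: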

iptables -vnL

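Flush the rules from the firewall chains (this removes every rule in the filter table, not only the one for port 3306):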

iptables -F

Connect to MySQL again; the connection now succeeds.
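Before and after changing the firewall, it can help to confirm from the client machine whether the MySQL port is reachable at all. The following is a minimal sketch, not part of the original fix: the function name `port_is_reachable` and the 3-second default timeout are assumptions chosen for illustration.

```python
import socket


def port_is_reachable(host: str, port: int, timeout: float = 3.0) -> bool:
    """Return True if a TCP connection to host:port succeeds within the timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts (for example dropped packets)
        # and unreachable hosts.
        return False
```

Calling `port_is_reachable("192.168.201.128", 3306)` from the Navicat machine should return False while the firewall drops the traffic and True once the port is open. A timeout rather than an immediate refusal usually points at packets being dropped along the way.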

3. The NameNode cannot be started, and the log shows the following error


Exception in thread "main" org.apache.hadoop.ipc.RemoteException(org.apache.hadoop.hdfs.server.namenode.SafeModeException): Cannot delete /tmp/hadoop-yarn/staging/hadoop/.staging/job_1490689337938_0001. Name node is in safe mode.

The reported blocks 48 needs additional 5 blocks to reach the threshold 0.9990 of total blocks 53.

The number of live datanodes 2 has reached the minimum number 0. Safe mode will be turned off automatically once the thresholds have been reached.

at org.apache.hadoop.hdfs.server.namenode.FSNamesystem.checkNameNodeSafeMode(FSNamesystem.java:1327)

at org.apache.hadoop.hdfs.server.namenode.FSNamesystem.delete(FSNamesystem.java:3713)

at org.apache.hadoop.hdfs.server.namenode.NameNodeRpcServer.delete(NameNodeRpcServer.java:953)

at org.apache.hadoop.hdfs.protocolPB.ClientNamenodeProtocolServerSideTranslatorPB.delete(ClientNamenodeProtocolServerSideTranslatorPB.java:611)

at org.apache.hadoop.hdfs.protocol.proto.ClientNamenodeProtocolProtos$ClientNamenodeProtocol$2.callBlockingMethod(ClientNamenodeProtocolProtos.java)

at org.apache.hadoop.ipc.ProtobufRpcEngine$Server$ProtoBufRpcInvoker.call(ProtobufRpcEngine.java:616)

at org.apache.hadoop.ipc.RPC$Server.call(RPC.java:982)

at org.apache.hadoop.ipc.Server$Handler$1.run(Server.java:2049)

at org.apache.hadoop.ipc.Server$Handler$1.run(Server.java:2045)

at java.security.AccessController.doPrivileged(Native Method)

at javax.security.auth.Subject.doAs(Subject.java:422)

at org.apache.hadoop.security.UserGroupInformation.doAs(UserGroupInformation.java:1698)

at org.apache.hadoop.ipc.Server$Handler.run(Server.java:2045)


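What is safe mode?

Safe mode is a special state of HDFS in which the file system accepts only read requests and rejects changes such as deletes and modifications. When the NameNode starts, HDFS first enters safe mode. As each DataNode starts, it reports its available blocks and other state to the NameNode, and once the whole system meets the safety criteria, HDFS leaves safe mode automatically. While HDFS is in safe mode, blocks cannot be replicated, so whether the minimum replica count is met is judged from the state the DataNodes report at startup; no replication is performed during startup to reach that minimum. Source: https://blog.youkuaiyun.com/bingduanlbd/article/details/51900512.

1. Enter safe mode manually, for example during a cluster upgrade or maintenance: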

hadoop dfsadmin -safemode enter

2. Leave safe mode:

hadoop dfsadmin -safemode leave

3. Report whether safe mode is currently on:

hadoop dfsadmin -safemode get

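So when the NameNode is stuck in safe mode and will not start, run hadoop dfsadmin -safemode leave to leave safe mode, then restart the NameNode. On Hadoop 2 and later, the same subcommand is also available as hdfs dfsadmin -safemode, which avoids the deprecation warning printed by hadoop dfsadmin.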
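To see how far the cluster is from leaving safe mode on its own, the numbers in the log message can be read directly. The following is a small sketch that parses the "reported blocks" line from the error above; the function name and the returned dictionary keys are illustrative assumptions, and the regular expression is written only against the message format shown in this post.

```python
import re

SAFE_MODE_PATTERN = re.compile(
    r"The reported blocks (\d+) needs additional (\d+) blocks "
    r"to reach the threshold ([\d.]+) of total blocks (\d+)"
)


def parse_safe_mode_message(message: str) -> dict:
    """Extract the block counts from a NameNode safe-mode log message."""
    match = SAFE_MODE_PATTERN.search(message)
    if match is None:
        raise ValueError("not a safe-mode block report message")
    reported, additional, threshold, total = match.groups()
    reported, total = int(reported), int(total)
    return {
        "reported": reported,
        "additional": int(additional),
        "threshold": float(threshold),
        "total": total,
        # Fraction of all blocks that the DataNodes have reported so far.
        "reported_ratio": reported / total,
    }
```

For the message in this post, 48 of 53 blocks have been reported, which is about 90.6% and well below the 99.9% threshold. If the DataNodes holding the remaining blocks never report in, the NameNode stays in safe mode until it is told to leave.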

4. INFO hdfs.DFSClient: Exception in createBlockOutputStream java.net.NoRouteToHostException: No route to host


16/07/27 01:29:26 INFO hdfs.DFSClient: Exception in createBlockOutputStream

java.net.NoRouteToHostException: No route to host

at sun.nio.ch.SocketChannelImpl.checkConnect(Native Method)

at sun.nio.ch.SocketChannelImpl.finishConnect(SocketChannelImpl.java:717)

at org.apache.hadoop.net.SocketIOWithTimeout.connect(SocketIOWithTimeout.java:206)

at org.apache.hadoop.net.NetUtils.connect(NetUtils.java:531)

at org.apache.hadoop.hdfs.DFSOutputStream.createSocketForPipeline(DFSOutputStream.java:1537)

at org.apache.hadoop.hdfs.DFSOutputStream$DataStreamer.createBlockOutputStream(DFSOutputStream.java:1313)

at org.apache.hadoop.hdfs.DFSOutputStream$DataStreamer.nextBlockOutputStream(DFSOutputStream.java:1266)

at org.apache.hadoop.hdfs.DFSOutputStream$DataStreamer.run(DFSOutputStream.java:449)

16/07/27 01:29:26 INFO hdfs.DFSClient: Abandoning BP-555863411-172.16.95.100-1469590594354:blk_1073741825_1001

16/07/27 01:29:26 INFO hdfs.DFSClient: Excluding datanode DatanodeInfoWithStorage[172.16.95.101:50010,DS-ee00e1f8-5143-4f06-9ef8-b0f862fce649,DISK]

16/07/27 01:29:26 INFO hdfs.DFSClient: Exception in createBlockOutputStream

java.net.NoRouteToHostException: No route to host

at sun.nio.ch.SocketChannelImpl.checkConnect(Native Method)

at sun.nio.ch.SocketChannelImpl.finishConnect(SocketChannelImpl.java:717)

at org.apache.hadoop.net.SocketIOWithTimeout.connect(SocketIOWithTimeout.java:206)

at org.apache.hadoop.net.NetUtils.connect(NetUtils.java:531)

at org.apache.hadoop.hdfs.DFSOutputStream.createSocketForPipeline(DFSOutputStream.java:1537)

at org.apache.hadoop.hdfs.DFSOutputStream$DataStreamer.createBlockOutputStream(DFSOutputStream.java:1313)

at org.apache.hadoop.hdfs.DFSOutputStream$DataStreamer.nextBlockOutputStream(DFSOutputStream.java:1266)

at org.apache.hadoop.hdfs.DFSOutputStream$DataStreamer.run(DFSOutputStream.java:449)

16/07/27 01:29:26 INFO hdfs.DFSClient: Abandoning BP-555863411-172.16.95.100-1469590594354:blk_1073741826_1002

16/07/27 01:29:26 INFO hdfs.DFSClient: Excluding datanode DatanodeInfoWithStorage[172.16.95.102:50010,DS-eea51eda-0a07-4583-9eee-acd7fc645859,DISK]

16/07/27 01:29:26 WARN hdfs.DFSClient: DataStreamer Exception

org.apache.hadoop.ipc.RemoteException(java.io.IOException): File /wc/mytemp/123.COPYING could only be replicated to 0 nodes instead of minReplication (=1). There are 2 datanode(s) running and 2 node(s) are excluded in this operation.

at org.apache.hadoop.hdfs.server.blockmanagement.BlockManager.chooseTarget4NewBlock(BlockManager.java:1547)

at org.apache.hadoop.hdfs.server.namenode.FSNamesystem.getNewBlockTargets(FSNamesystem.java:3107)

at org.apache.hadoop.hdfs.server.namenode.FSNamesystem.getAdditionalBlock(FSNamesystem.java:3031)

at org.apache.hadoop.hdfs.server.namenode.NameNodeRpcServer.addBlock(NameNodeRpcServer.java:724)

at org.apache.hadoop.hdfs.protocolPB.ClientNamenodeProtocolServerSideTranslatorPB.addBlock(ClientNamenodeProtocolServerSideTranslatorPB.java:492)

at org.apache.hadoop.hdfs.protocol.proto.ClientNamenodeProtocolProtos$ClientNamenodeProtocol$2.callBlockingMethod(ClientNamenodeProtocolProtos.java)

at org.apache.hadoop.ipc.ProtobufRpcEngine$Server$ProtoBufRpcInvoker.call(ProtobufRpcEngine.java:616)

at org.apache.hadoop.ipc.RPC$Server.call(RPC.java:969)

at org.apache.hadoop.ipc.Server$Handler$1.run(Server.java:2049)

at org.apache.hadoop.ipc.Server$Handler$1.run(Server.java:2045)

at java.security.AccessController.doPrivileged(Native Method)

at javax.security.auth.Subject.doAs(Subject.java:422)

at org.apache.hadoop.security.UserGroupInformation.doAs(UserGroupInformation.java:1657)

at org.apache.hadoop.ipc.Server$Handler.run(Server.java:2043)

at org.apache.hadoop.ipc.Client.call(Client.java:1475)

at org.apache.hadoop.ipc.Client.call(Client.java:1412)

at org.apache.hadoop.ipc.ProtobufRpcEngine$Invoker.invoke(ProtobufRpcEngine.java:229)

at com.sun.proxy.$Proxy9.addBlock(Unknown Source)

at org.apache.hadoop.hdfs.protocolPB.ClientNamenodeProtocolTranslatorPB.addBlock(ClientNamenodeProtocolTranslatorPB.java:418)

at sun.reflect.NativeMethodAccessorImpl.invoke0(Native Method)

at sun.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:62)

at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43)

at java.lang.reflect.Method.invoke(Method.java:497)

at org.apache.hadoop.io.retry.RetryInvocationHandler.invokeMethod(RetryInvocationHandler.java:191)

at org.apache.hadoop.io.retry.RetryInvocationHandler.invoke(RetryInvocationHandler.java:102)

at com.sun.proxy.$Proxy10.addBlock(Unknown Source)

at org.apache.hadoop.hdfs.DFSOutputStream$DataStreamer.locateFollowingBlock(DFSOutputStream.java:1459)

at org.apache.hadoop.hdfs.DFSOutputStream$DataStreamer.nextBlockOutputStream(DFSOutputStream.java:1255)

at org.apache.hadoop.hdfs.DFSOutputStream$DataStreamer.run(DFSOutputStream.java:449)

put: File /wc/mytemp/123.COPYING could only be replicated to 0 nodes instead of minReplication (=1). There are 2 datanode(s) running and 2 node(s) are excluded in this operation.

[hadoop@master bin]$ service firewall

The service command supports only basic LSB actions (start, stop, restart, try-restart, reload, force-reload, status). For other actions, please try to use systemctl.


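Check whether the firewall is stopped; if it is not, stop it on every node. On CentOS 6 use service iptables stop; on CentOS 7 use systemctl stop firewalld (service firewalld stop is redirected to the same command).

On every host, open /etc/selinux/config and set SELINUX=disabled (the change takes effect after a reboot).

Retry the operation; the problem should now be resolved. To keep the firewall from starting again at boot, run systemctl disable firewalld.service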
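The log above already names the DataNodes the client gave up on, which tells you which hosts to check first. As a rough sketch, the helper below pulls those addresses out of a DFSClient log; the function name `excluded_datanodes` is hypothetical, and the pattern assumes the "Excluding datanode DatanodeInfoWithStorage[ip:port,...]" format with IPv4 addresses shown in this post.

```python
import re

EXCLUDED_PATTERN = re.compile(
    r"Excluding datanode DatanodeInfoWithStorage\[(\d+\.\d+\.\d+\.\d+):(\d+),"
)


def excluded_datanodes(log_text: str) -> list:
    """Return (ip, port) pairs for each excluded DataNode, in log order, without duplicates."""
    seen = []
    for ip, port in EXCLUDED_PATTERN.findall(log_text):
        pair = (ip, int(port))
        if pair not in seen:
            seen.append(pair)
    return seen
```

Applied to the log in this section, it yields 172.16.95.101 and 172.16.95.102, both on port 50010. Those are the hosts whose firewall is most likely blocking the DataNode transfer port.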

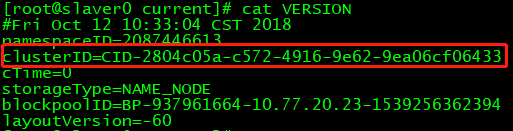

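5. Hadoop fails at run time because the clusterID values do not match

Go to /dfs/nn/current and run cat VERSION to check that the clusterID is identical on every node. On DataNodes the VERSION file sits under the DataNode data directory instead (for example /dfs/dn/current in a default Cloudera layout; adjust the path to your configuration).

If the values differ, copy the clusterID from the master node, edit the VERSION file on each mismatched worker node so that it uses the same value, then save and exit. This resolves the problem.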
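With more than a few nodes, comparing the files by eye gets tedious. The sketch below assumes you have already collected the contents of each node's VERSION file (for example over ssh); the function names and the node names used in the usage note are hypothetical.

```python
def read_cluster_id(version_text: str) -> str:
    """Return the clusterID value from the contents of a VERSION file."""
    for line in version_text.splitlines():
        key, sep, value = line.strip().partition("=")
        if sep and key == "clusterID":
            return value
    raise ValueError("no clusterID entry found")


def mismatched_nodes(master_id: str, node_ids: dict) -> list:
    """Return the sorted names of nodes whose clusterID differs from the master's."""
    return sorted(name for name, cid in node_ids.items() if cid != master_id)
```

For instance, `mismatched_nodes(master_id, {"slave1": id1, "slave2": id2})` returns only the node names whose VERSION file still needs to be edited.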

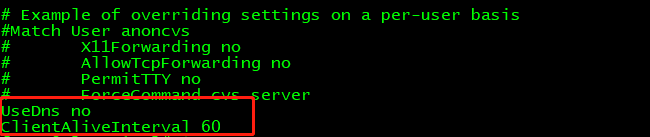

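6. Slow connections to Linux from SecureCRT and similar clients (sometimes with the error "The semaphore timeout period has expired")

Edit the sshd_config file: vi /etc/ssh/sshd_config

Add the following lines to sshd_config, save and exit, then restart the SSH service with service sshd restart, or reboot the machine.

The two symptoms are similar, so both settings are applied together to keep the problem from recurring.

UseDNS no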

ClientAliveInterval 60
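A quick way to confirm the edit took is to read the file back and check which values are in effect. The following is a simplified sketch, not a full sshd_config parser: it handles only whitespace-separated "keyword value" lines, ignores Match blocks, and relies on sshd using the first value it finds for a keyword. The function names are illustrative assumptions.

```python
def effective_sshd_options(config_text: str) -> dict:
    """Map lowercase keyword -> value, keeping the first occurrence of each keyword."""
    options = {}
    for raw in config_text.splitlines():
        line = raw.strip()
        if not line or line.startswith("#"):
            continue  # skip blank lines and comments
        parts = line.split(None, 1)
        if len(parts) != 2:
            continue  # keyword without a value; ignored in this sketch
        keyword, value = parts[0].lower(), parts[1].strip()
        # Keywords are case-insensitive, and the first value obtained wins.
        options.setdefault(keyword, value)
    return options


def has_recommended_settings(config_text: str) -> bool:
    """Check that UseDNS is 'no' and ClientAliveInterval is '60'."""
    opts = effective_sshd_options(config_text)
    return opts.get("usedns") == "no" and opts.get("clientaliveinterval") == "60"
```

Note that a commented-out line such as "#UseDNS no" does not count, which is a common reason the change appears to have no effect.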

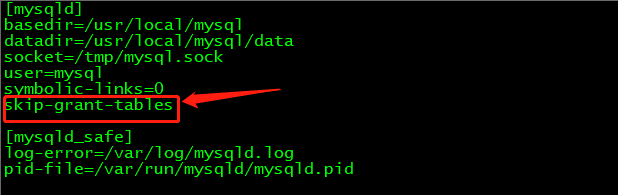

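7. Logging in to MySQL 5.7 fails with ERROR 1045 (28000): Access denied for user 'root'@'localhost'

Open the MySQL configuration file with vi /etc/my.cnf, add the line skip-grant-tables under the [mysqld] section, then save and exit.

Restart the MySQL service with service mysql restart (the service may be named mysqld, depending on how MySQL was installed).

Back in the terminal, run mysql -u root -p and press Enter. When prompted for a password, press Enter again to log in without one. Then reset the root password (123456 is only an example):

update mysql.user set authentication_string=password('123456') where user='root';

Open the configuration file again, remove the skip-grant-tables line, and restart the MySQL service so the change takes effect.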
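Because skip-grant-tables disables authentication entirely, it is worth making sure the line is removed once the password has been reset. The sketch below shows the two edits to the my.cnf text as plain string transformations. The function names are hypothetical, and it assumes the option is written exactly as skip-grant-tables on its own line under a [mysqld] header.

```python
def add_skip_grant_tables(cnf_text: str) -> str:
    """Insert 'skip-grant-tables' right after the [mysqld] header if it is not already present."""
    lines = cnf_text.splitlines()
    if any(line.strip() == "skip-grant-tables" for line in lines):
        return cnf_text
    out = []
    inserted = False
    for line in lines:
        out.append(line)
        if not inserted and line.strip() == "[mysqld]":
            out.append("skip-grant-tables")
            inserted = True
    if not inserted:
        raise ValueError("no [mysqld] section found")
    return "\n".join(out) + "\n"


def remove_skip_grant_tables(cnf_text: str) -> str:
    """Drop every 'skip-grant-tables' line and leave the rest untouched."""
    kept = [line for line in cnf_text.splitlines() if line.strip() != "skip-grant-tables"]
    return "\n".join(kept) + "\n"
```

Either function would be applied to the contents of /etc/my.cnf and the result written back before restarting MySQL; keeping a backup copy of the file first is a sensible precaution.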

If that still does not work, see https://www.cnblogs.com/yanqr/p/9753445.html
