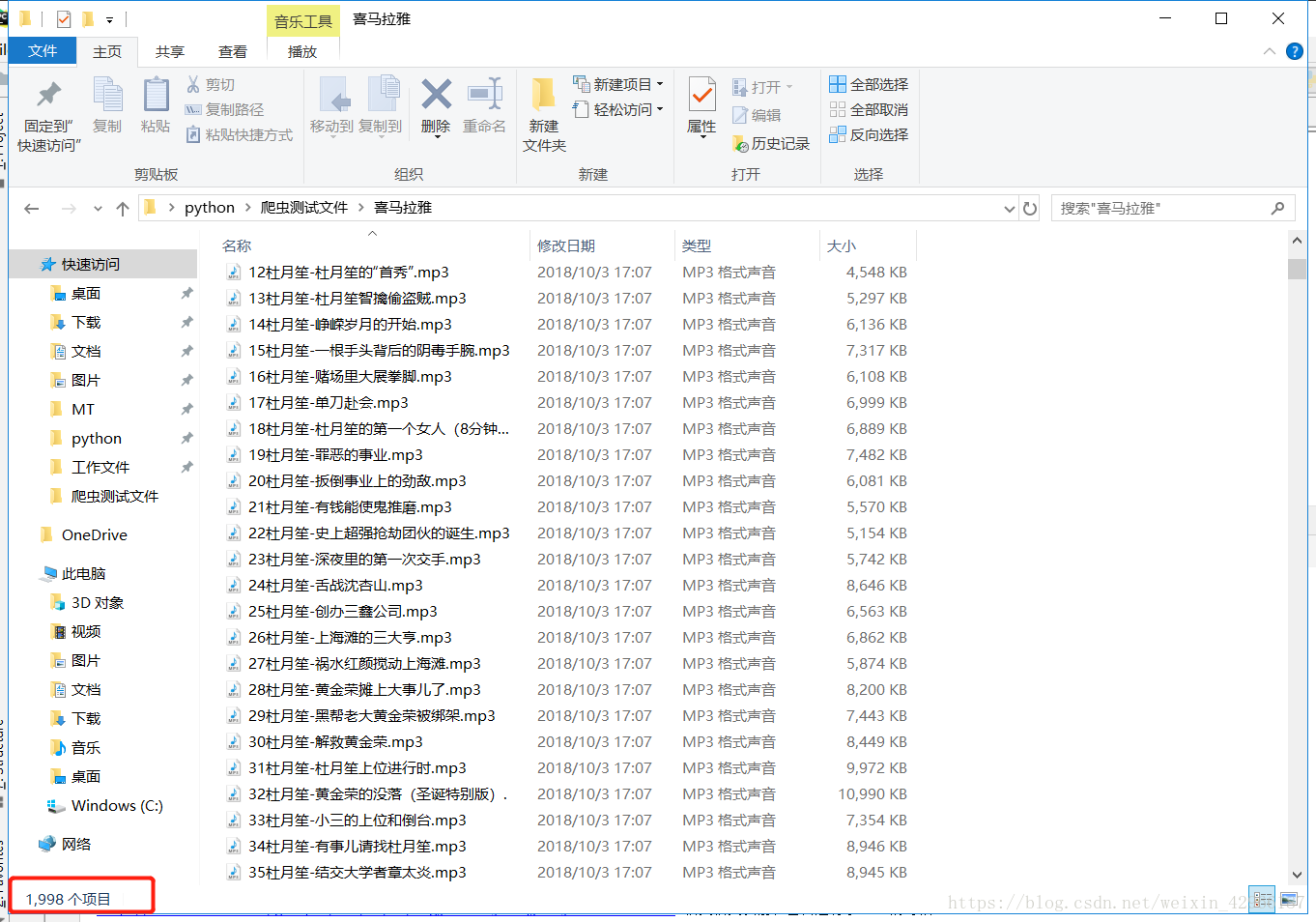

先上图,只爬了20分钟左右。保存了2000个音频

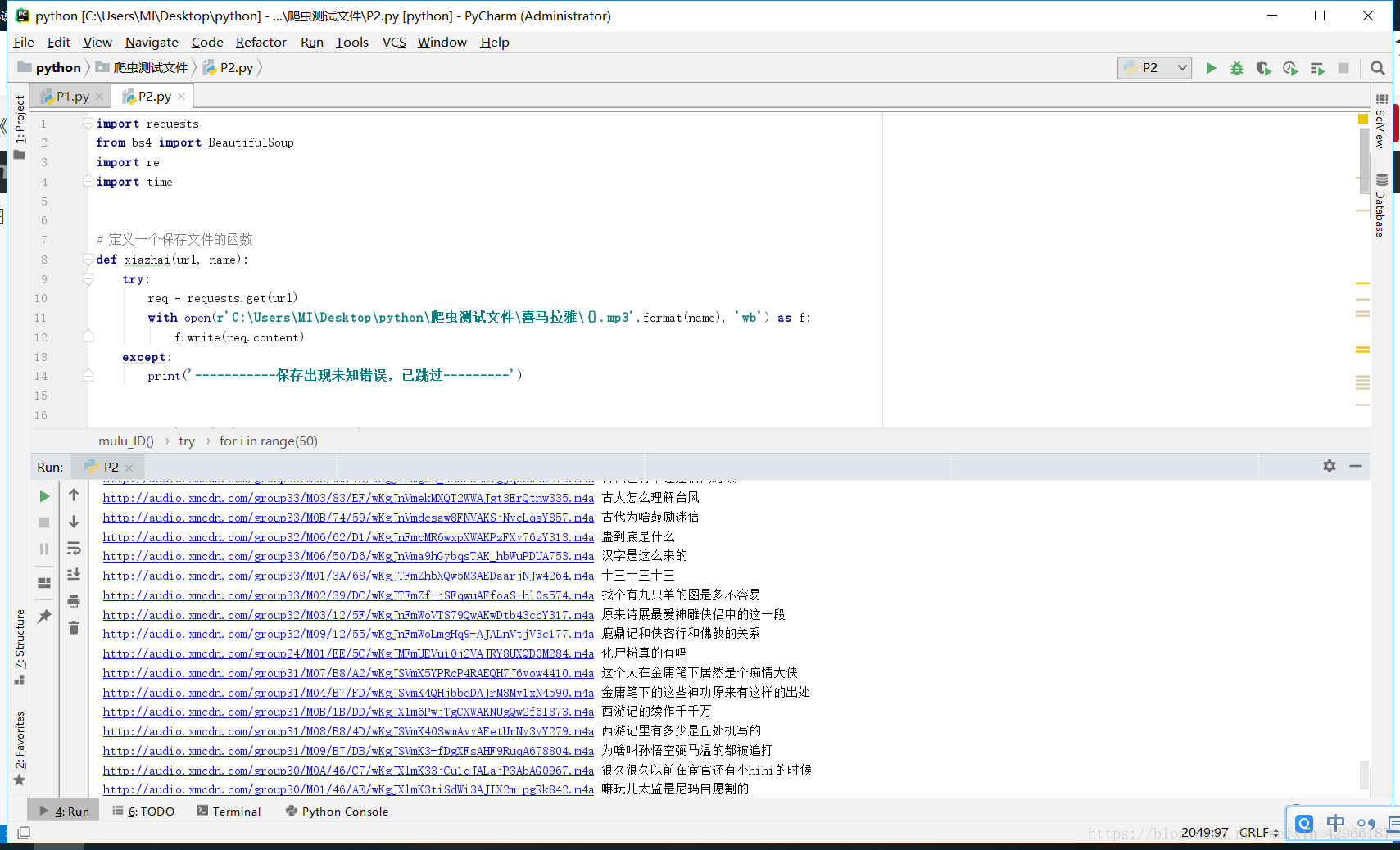

完整代码如下,,,直接拿去就可以用,

报错的话更改一下Cookie

import requests

from bs4 import BeautifulSoup

import re

import time

# 定义一个保存文件的函数

def xiazhai(url, name):

try:

req = requests.get(url)

with open(r'C:\Users\MI\Desktop\python\爬虫测试文件\喜马拉雅\{}.mp3'.format(name), 'wb') as f:

f.write(req.content)

except:

print('-----------保存出现未知错误,已跳过---------')

# 定义一个获取下载链接的函数

def huoqu_url(mulu):

for pn in range(1, 31): # 每个书有30页数据

headers = {

'Cookie': 'Hm_lvt_4a7d8ec50cfd6af753c4f8aee3425070=1538547574; Hm_lpvt_4a7d8ec50cfd6af753c4f8aee3425070=1538547574',

'User-Agent': 'Mozilla/5.0 (Windows NT 10.0; Win64; x64; rv:62.0) Gecko/20100101 Firefox/62.0',

'Host': 'www.ximalaya.com',

'Referer': 'https: // www.ximalaya.com / xiangsheng / {} /'.format(mulu)

}

data = {

'albumId': mulu,

'pageNum': pn,

'sort': ' -1',

'pageSize': ' 30'

}

url1 = 'https://www.ximalaya.com/revision/play/album?albumId={}&pageNum={}&sort=-1&pageSize=30'.format(mulu, pn)

try:

r = requests.get(url1, headers=headers, data=data)

r = r.json()

for i in range(30):

r1 = r['data']['tracksAudioPlay'][i]['src'] # 获取到的url

r2 = r['data']['tracksAudioPlay'][i]['trackName']

r2 = re.sub(r'《|》|?|!|。|,|:|;|:|;| ', '', r2) # 正则后的名称

xiazhai(r1, r2)

print(r1, r2)

time.sleep(1)

except:

print('----------获取下载链接出现未知错误,已跳过---------')

#定义一个获取排行榜音频ID

def mulu_ID():

headers = {

'User-Agent': 'Mozilla/5.0 (Windows NT 10.0; Win64; x64; rv:62.0) Gecko/20100101 Firefox/62.0'

}

url1 = 'https://www.ximalaya.com/lishi/top/'

try:

r = requests.get(url1, headers=headers)

soup = BeautifulSoup(r.text, 'lxml')

r = soup.find_all('div', attrs={'class': 'rankpage-content clearfix', 'class': 'RZ7r right-rank-content',

'class': 'RZ7r rrc-list'})

soup = BeautifulSoup(str(r), 'lxml')

r_1 = soup.find_all('a')

for i in range(50): # 排行榜第一页有50个数据

r1 = str(r_1[i])

r = r1[16:24]

id = re.sub(r'/|<|>\"|"|>','',r)

print('-----------------------这是第{}本书--------------------'.format(i+1),'图书ID:',id)

huoqu_url(id)#调用获取下载链接的函数

except:

print('-----------获取书本目录出现出现未知错误,已跳过---------')

mulu_ID()

926

926

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?