Installing the CPU version of TensorFlow is very simple: just run pip install tensorflow. The rest of this post covers installing the GPU version of TensorFlow.

1. Install CUDA

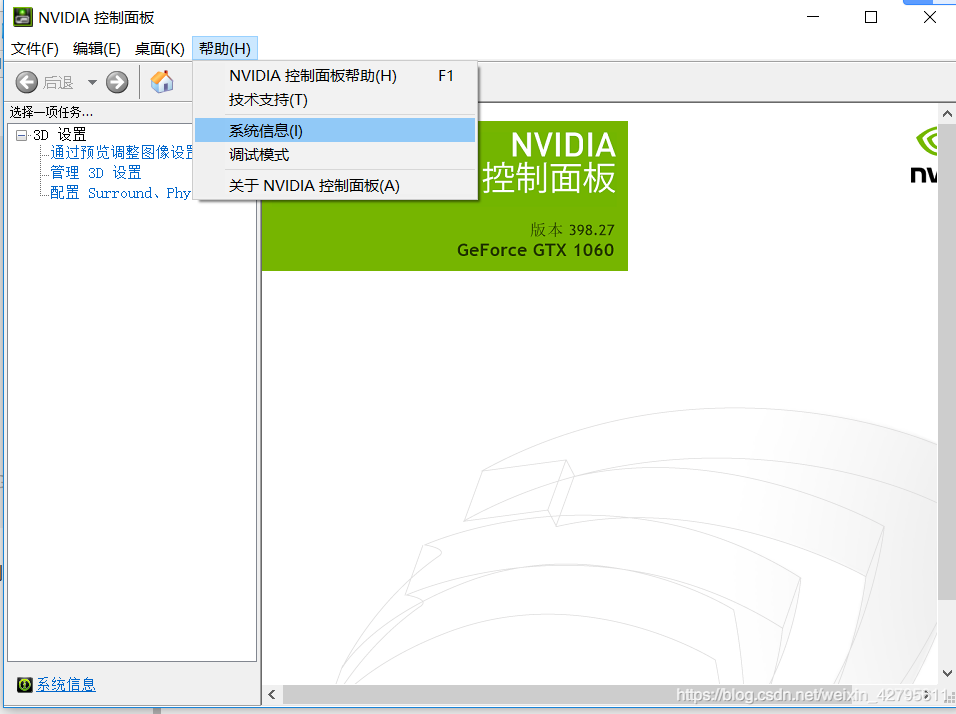

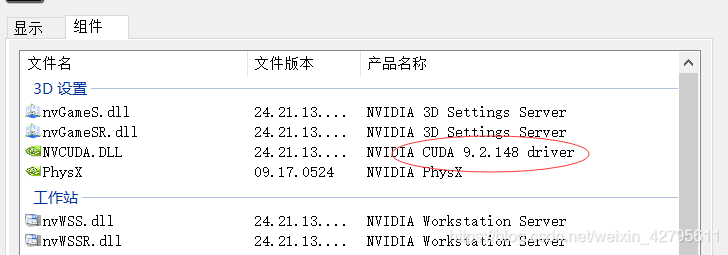

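Before installing CUDA, make sure your graphics driver is installed correctly, and download the CUDA version that matches it. Open the NVIDIA Control Panel, click Help, then System Information, then Components; on my machine this shows CUDA 9.2. Many online tutorials skip this step, and it took me a full day to work out: you have to download the version that matches the graphics card and driver in your own computer. Once the download finishes, run the installer and accept the default path. Then download cuDNN v7.4.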
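As a cross-check on what the NVIDIA Control Panel reports, the driver can also be queried from Python. This is only a sketch: it assumes the nvidia-smi tool that ships with the NVIDIA driver is on your PATH, which is not always the case on Windows. The function name driver_info is made up for illustration. nvidia-smi prints the driver version, and newer drivers also print the highest CUDA version they support.

```python
import subprocess


def driver_info():
    """Return the text printed by nvidia-smi, or None if it cannot be run.

    Assumption: nvidia-smi is on PATH. If it is not, fall back to the
    NVIDIA Control Panel as described above.
    """
    try:
        out = subprocess.check_output(["nvidia-smi"])
    except (OSError, subprocess.CalledProcessError):
        # OSError: the executable was not found.
        # CalledProcessError: it ran but reported a failure.
        return None
    return out.decode("utf-8", "replace")


info = driver_info()
if info is None:
    print("nvidia-smi is not available; check the NVIDIA Control Panel instead")
else:
    print(info)
```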

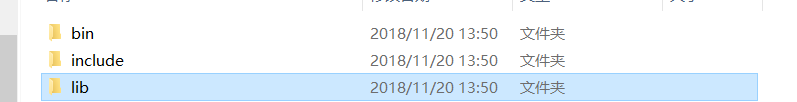

After downloading cuDNN, unzip it to get three folders.

The three folders contain the following files:

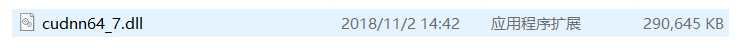

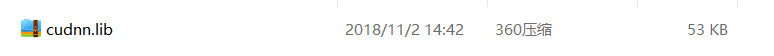

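Copy the contents of bin, include, and lib into the matching directories of your own CUDA installation. The default CUDA directory is C:\Program Files\NVIDIA GPU Computing Toolkit\CUDA\v9.2 for CUDA 9.2 (the last folder name follows your CUDA version), so the files you just unzipped go into the bin, include, and lib folders under that directory. For example, cudnn64_7.dll is copied into the bin directory.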

At this point, CUDA is installed.
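The cuDNN copy step above can also be scripted instead of done by hand. The following is a minimal sketch, not an official installer: copy_cudnn is a hypothetical helper, and both paths in the usage comment are placeholders that you would replace with your own unzip location and CUDA directory. Writing into Program Files normally requires an administrator prompt.

```python
import os
import shutil


def copy_cudnn(cudnn_root, cuda_root):
    """Copy every file under cudnn_root/bin, include and lib into the same
    relative location under cuda_root. Returns the destination paths."""
    copied = []
    for sub in ("bin", "include", "lib"):
        src_dir = os.path.join(cudnn_root, sub)
        if not os.path.isdir(src_dir):
            continue  # this folder is missing from the unzipped archive
        for dirpath, _dirs, files in os.walk(src_dir):
            # Keep nested folders such as lib/x64 intact.
            rel = os.path.relpath(dirpath, cudnn_root)
            dst_dir = os.path.join(cuda_root, rel)
            if not os.path.isdir(dst_dir):
                os.makedirs(dst_dir)
            for name in files:
                dst = os.path.join(dst_dir, name)
                shutil.copy2(os.path.join(dirpath, name), dst)
                copied.append(dst)
    return copied


# Example call (placeholder paths, adjust to your machine):
# copy_cudnn(r"D:\downloads\cudnn\cuda",
#            r"C:\Program Files\NVIDIA GPU Computing Toolkit\CUDA\v9.2")
```

The function only adds or overwrites files with the same name; it never deletes anything from the CUDA directory.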

2. Install Anaconda2

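I installed the Python 2.7 version. If your computer has Python 3.6, that works too; there is an article on installing Python 3 and Python 2 side by side on Windows 10. The installation is straightforward, so I won't describe it here.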

3. Install tensorflow-gpu

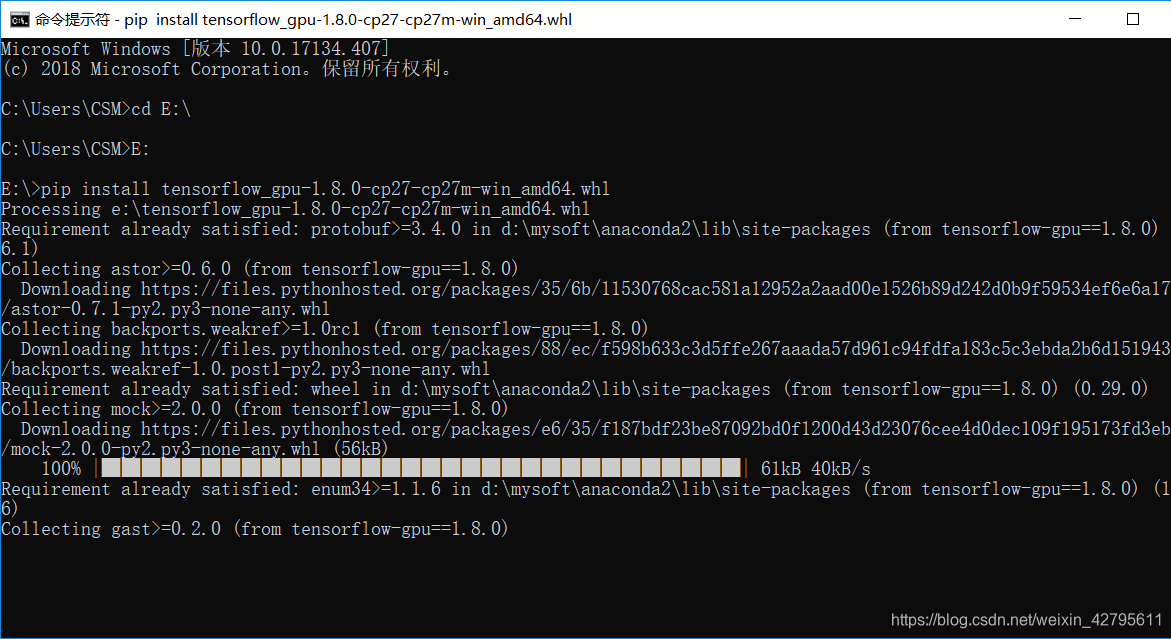

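Open a command prompt and run pip install tensorflow-gpu. You will find that this is slow and fails easily, so I use a different method here: download the file tensorflow_gpu-1.8.0-cp27-cp27m-win_amd64.whl. This wheel can only be installed with Python 2.7. Download links for other versions are easy to find online, so I won't list them here.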

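Once the download finishes, change to the directory that contains tensorflow_gpu-1.8.0-cp27-cp27m-win_amd64.whl in the command prompt. Make sure the path is entirely in English and contains no Chinese characters. Then run pip install tensorflow_gpu-1.8.0-cp27-cp27m-win_amd64.whl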

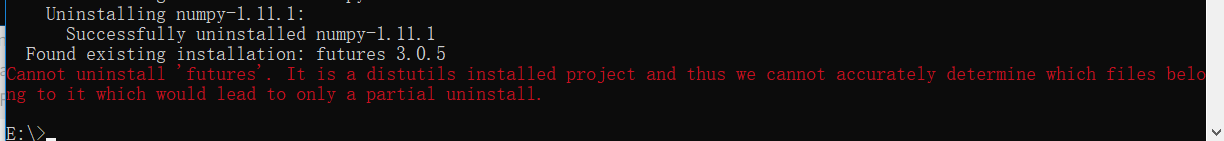
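The two constraints in this step (the wheel only fits Python 2.7, and the path must not contain Chinese characters) can be checked before running pip. This is a rough sketch under simple assumptions: the helper names are made up, the filename is assumed to follow the usual name-version-python-abi-platform wheel pattern, and only CPython tags such as cp27 or cp36 are handled.

```python
import sys

WHEEL = "tensorflow_gpu-1.8.0-cp27-cp27m-win_amd64.whl"


def wheel_tags(filename):
    """Split a wheel filename into (name, version, python, abi, platform)."""
    stem = filename[:-len(".whl")] if filename.endswith(".whl") else filename
    parts = stem.split("-")
    # The last three fields are always the python, abi and platform tags.
    return parts[0], parts[1], parts[-3], parts[-2], parts[-1]


def matches_interpreter(filename, version_info=sys.version_info):
    """True if the wheel's python tag equals cp<major><minor> of the interpreter."""
    expected = "cp%d%d" % (version_info[0], version_info[1])
    return wheel_tags(filename)[2] == expected


def is_ascii(path):
    """True if the path contains only ASCII characters."""
    try:
        path.encode("ascii")
    except (UnicodeEncodeError, UnicodeDecodeError):
        return False
    return True


print(wheel_tags(WHEEL))
print("matches this Python:", matches_interpreter(WHEEL))
```

If matches_interpreter returns False, pip will typically reject the file as not supported on this platform, so it is worth looking for a wheel whose tag matches your Python first.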

4. You may hit the following error at this point

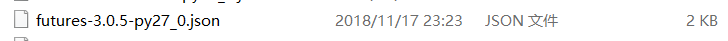

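This error is caused by an outdated version of futures, which needs to be reinstalled. However, you will find that even uninstalling futures fails. Here is a small trick: go to the site-packages folder of your Python installation, D:\mysoft\Anaconda2\Lib\site-packages on my machine, and delete the futures files there.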

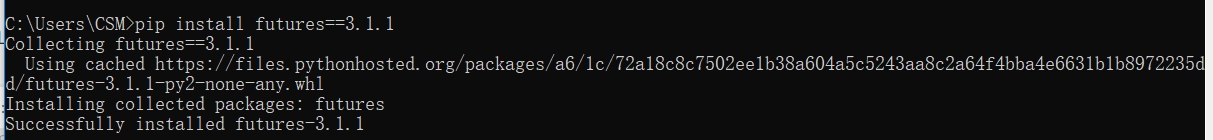

Then reopen the command prompt and run pip install futures==3.1.1

Once that succeeds, repeat step 3 to reinstall TensorFlow. That completes the installation.

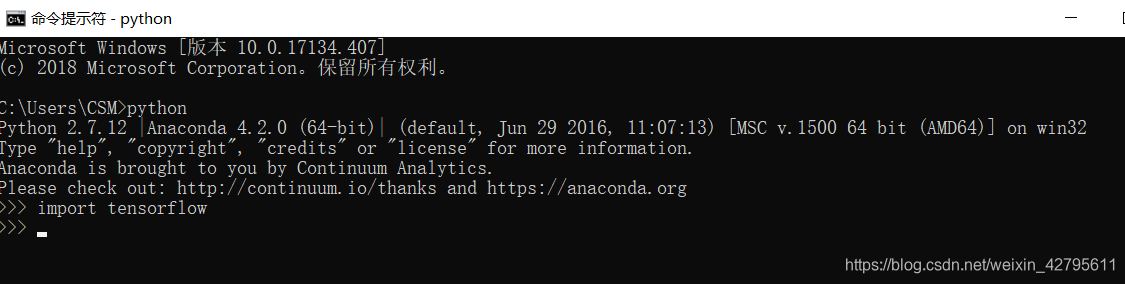
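Before deleting anything by hand in step 4, it can help to list what is actually in site-packages under the futures name. The sketch below only lists entries and deletes nothing. find_futures_entries is a hypothetical helper, and matching on names that start with "futures" is an assumption about how the package metadata is named on your machine.

```python
import os
import sysconfig


def find_futures_entries(site_packages=None):
    """Return the paths in site-packages whose names start with 'futures'.

    If no directory is given, use the current interpreter's site-packages.
    """
    if site_packages is None:
        site_packages = sysconfig.get_paths()["purelib"]
    if not os.path.isdir(site_packages):
        return []
    return sorted(
        os.path.join(site_packages, name)
        for name in os.listdir(site_packages)
        if name.lower().startswith("futures")
    )


for entry in find_futures_entries():
    print(entry)
```

Run it with the same Python that pip installs into, so that the directory it inspects is the one from step 4.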

5. Test whether TensorFlow installed successfully

Reopen the command prompt, run python, and then enter import tensorflow. If no error is reported, the installation succeeded.
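A slightly more thorough check than a bare import is to print the version and the devices TensorFlow can see. This is a sketch rather than an official test: check_tensorflow is a made-up name, and device_lib.list_local_devices comes from tensorflow.python.client, an internal module that is available in TensorFlow 1.x but may not stay the same across releases.

```python
import importlib


def check_tensorflow():
    """Try to import tensorflow and report its version and visible devices."""
    try:
        tf = importlib.import_module("tensorflow")
    except Exception as exc:  # a missing CUDA/cuDNN DLL also surfaces here
        return {"installed": False, "error": str(exc)}

    report = {"installed": True, "version": tf.__version__}
    try:
        # Assumption: this internal helper exists in the installed release.
        from tensorflow.python.client import device_lib
        report["devices"] = [d.name for d in device_lib.list_local_devices()]
    except Exception as exc:
        report["devices_error"] = str(exc)
    return report


print(check_tensorflow())
```

If the GPU build is working, the device list should include a GPU entry alongside the CPU. If only the CPU shows up, recheck the CUDA and cuDNN steps.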
