spring-cloud-netflix-eureka source code analysis:

This article analyzes the source code of spring-cloud-starter-netflix-eureka-server 2.2.2.RELEASE, as managed by spring-cloud-dependencies Hoxton.SR4.

Readers who have not worked with Eureka before can refer to https://www.cnblogs.com/wuzhenzhao/p/9466752.html for the basics.

The analysis covers the following topics:

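- Entry point of service registration

- How the registration request is initiated

- How Eureka Server handles a registration request

- Synchronization of service information between Eureka Servers

- Eureka's multi-level cache design

- Service renewal

- How Eureka Server handles a renewal heartbeat request

- Service discovery

- Eureka's self-preservation mechanism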

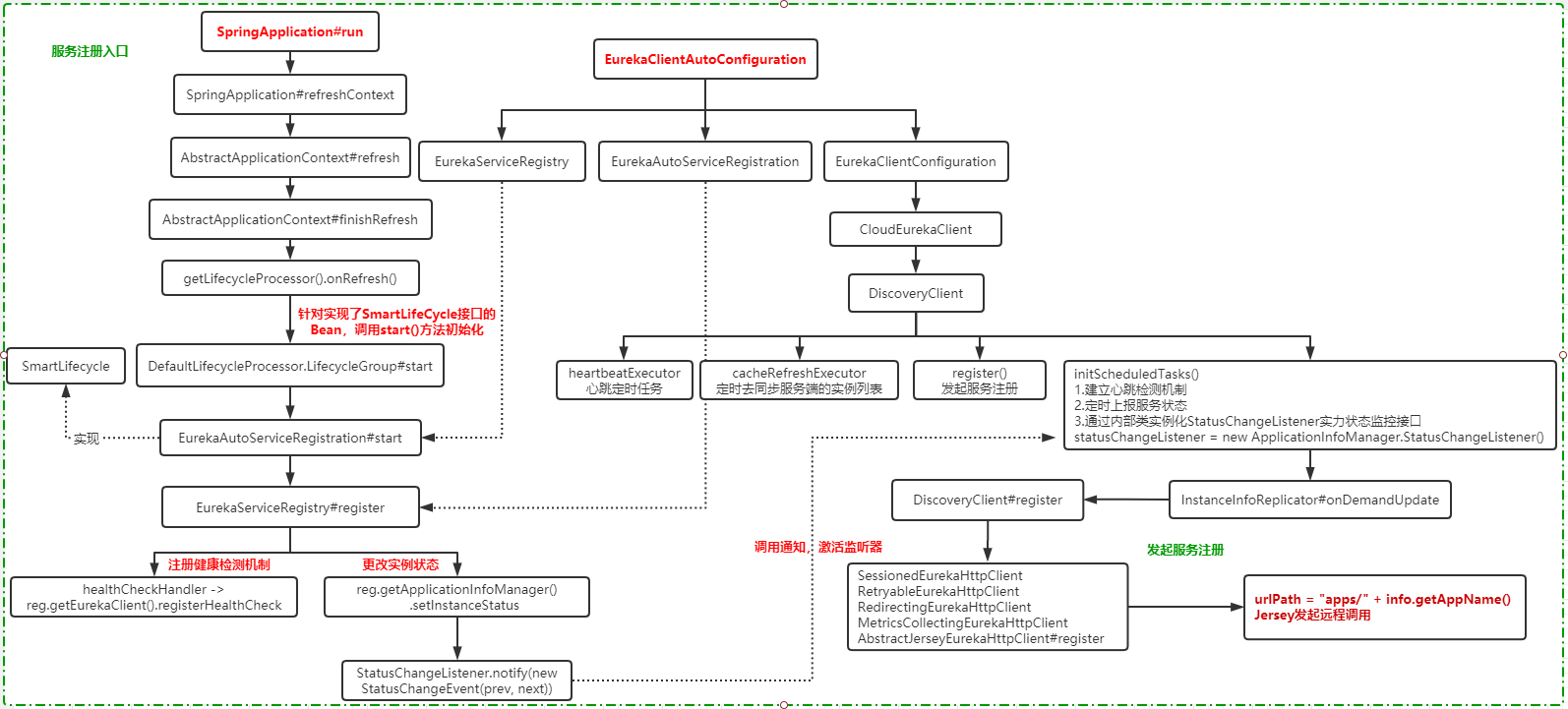

Entry point of service registration:

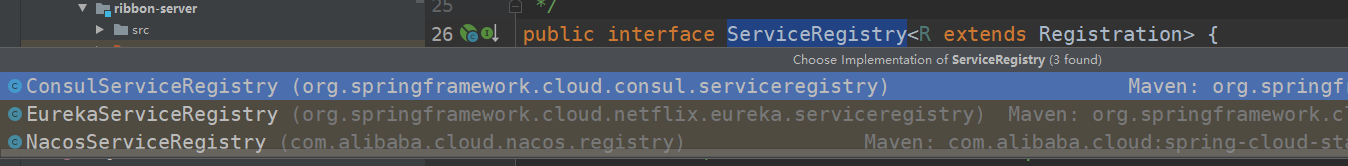

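Service registration is initiated while the Spring Boot application starts. Spring Cloud is an ecosystem that defines a set of standards which different components can implement, covering service registration/discovery, circuit breaking, load balancing and so on. In the spring-cloud-commons package, under org.springframework.cloud.client.serviceregistry, there is an interface named ServiceRegistry, which is Spring Cloud's abstraction for service registration. One of its implementations is EurekaServiceRegistry, which uses Eureka Server as the registry. My local project also pulls in Consul and Nacos, so several implementations show up: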

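When should registration be initiated? It is not hard to guess: a service can only be registered once it has actually started. Spring Boot therefore waits until the Spring container has started and all configuration is complete before registering, and this happens inside refreshContext, which is called from Spring Boot's startup method.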

refreshContext always ends up in AbstractApplicationContext#finishRefresh. You can trace the exact flow yourself, starting from SpringApplication.run().

Let's look at finishRefresh. As the name suggests, it performs the post-processing that runs once the refresh has completed. It mainly does the following:

protected void finishRefresh() {

// Clear context-level resource caches (such as ASM metadata from scanning).

// Clear the resource caches

clearResourceCaches();

// Initialize lifecycle processor for this context.

// Initialize a LifecycleProcessor, which starts beans when Spring starts and stops them when Spring shuts down

initLifecycleProcessor();

// Propagate refresh to lifecycle processor first.

// Call the LifecycleProcessor's onRefresh method to start beans that implement the Lifecycle interface

getLifecycleProcessor().onRefresh();

// Publish the final event.

// Publish a ContextRefreshedEvent

publishEvent(new ContextRefreshedEvent(this));

// Participate in LiveBeansView MBean, if active.

// Register an MBean so the context can be monitored and managed through JMX

LiveBeansView.registerApplicationContext(this);

}

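In this method the call to focus on is getLifecycleProcessor().onRefresh(). It invokes the lifecycle processor's onRefresh method, which finds all implementations of the SmartLifecycle interface and calls their start method.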

The subsequent call chain is: DefaultLifecycleProcessor.startBeans -> start() -> doStart() -> bean.start()

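SmartLifecycle is an interface. Once the Spring container has loaded and initialized all beans, it calls back the corresponding methods (for example start) on every class that implements SmartLifecycle.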

Eureka's startup relies on exactly this mechanism. The class involved is EurekaAutoServiceRegistration which, as its name suggests, performs automatic service registration, and it does implement SmartLifecycle.
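The callback mechanism described above can be illustrated with a minimal, Spring-free sketch. The names MiniLifecycle, MiniAutoRegistration and MiniLifecycleProcessor are hypothetical stand-ins invented for this example; they are not Spring or Eureka classes, and the real DefaultLifecycleProcessor does considerably more (phase groups, dependencies, auto-startup checks).

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.Comparator;
import java.util.List;

// Hypothetical stand-in for Spring's SmartLifecycle (not the real interface).
interface MiniLifecycle {
    void start();
    boolean isRunning();
    int getPhase();
}

// Hypothetical stand-in for EurekaAutoServiceRegistration.
class MiniAutoRegistration implements MiniLifecycle {
    private final List<String> log;
    private boolean running;

    MiniAutoRegistration(List<String> log) { this.log = log; }

    @Override
    public void start() {
        if (!running) {            // register only once
            log.add("register");
            running = true;
        }
    }

    @Override public boolean isRunning() { return running; }
    @Override public int getPhase() { return 0; }
}

// Plays the role of the lifecycle processor's onRefresh step.
class MiniLifecycleProcessor {
    static void onRefresh(List<MiniLifecycle> beans) {
        List<MiniLifecycle> sorted = new ArrayList<>(beans);
        sorted.sort(Comparator.comparingInt(MiniLifecycle::getPhase)); // lower phase starts first
        for (MiniLifecycle bean : sorted) {
            if (!bean.isRunning()) {
                bean.start();
            }
        }
    }
}

class MiniLifecycleDemo {
    static List<String> run() {
        List<String> log = new ArrayList<>();
        List<MiniLifecycle> beans = Arrays.asList(new MiniAutoRegistration(log));
        MiniLifecycleProcessor.onRefresh(beans);
        MiniLifecycleProcessor.onRefresh(beans); // second refresh is a no-op
        return log;
    }
}
```

The running flag is what keeps a repeated refresh from registering twice, which mirrors the this.running check in the start method of the real class.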

Service registration:

With SmartLifecycle covered, the bean.start() call here may well be the start method of EurekaAutoServiceRegistration, since it implements the SmartLifecycle interface.

@Override

public void start() {

// only set the port if the nonSecurePort or securePort is 0 and this.port != 0

if (this.port.get() != 0) {

if (this.registration.getNonSecurePort() == 0) {

this.registration.setNonSecurePort(this.port.get());

}

if (this.registration.getSecurePort() == 0 && this.registration.isSecure()) {

this.registration.setSecurePort(this.port.get());

}

}

// only initialize if nonSecurePort is greater than 0 and it isn't already running

// because of containerPortInitializer below

if (!this.running.get() && this.registration.getNonSecurePort() > 0) {

this.serviceRegistry.register(this.registration);

this.context.publishEvent(new InstanceRegisteredEvent<>(this,

this.registration.getInstanceConfig()));

this.running.set(true);

}

}

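In start we can see the call to this.serviceRegistry.register, which is what initiates service registration. At this point this.serviceRegistry should be an EurekaServiceRegistry instance, because it is assigned in the constructor of EurekaAutoServiceRegistration, and that constructor is invoked in the auto-configuration class EurekaClientAutoConfiguration. (Tip: if you cannot find where a class is instantiated, use Find Usages in IDEA.)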

this.serviceRegistry.register(this.registration); ends up calling the register method of EurekaServiceRegistry to perform the registration.

@Override

public void register(EurekaRegistration reg) {

maybeInitializeClient(reg);

if (log.isInfoEnabled()) {

log.info("Registering application "

+ reg.getApplicationInfoManager().getInfo().getAppName()

+ " with eureka with status "

+ reg.getInstanceConfig().getInitialStatus());

}

// Set the status of the current instance. When the status changes, the registered listener is notified and triggers service registration.

reg.getApplicationInfoManager()

.setInstanceStatus(reg.getInstanceConfig().getInitialStatus());

// Register the health check handler

reg.getHealthCheckHandler().ifAvailable(healthCheckHandler -> reg

.getEurekaClient().registerHealthCheck(healthCheckHandler));

}

As the code above shows, register does not actually call Eureka to perform the registration. It only sets a status and registers a health check handler.

Next I wanted to find out what reg.getApplicationInfoManager() in EurekaServiceRegistry.register returns. ApplicationInfoManager is a property of EurekaRegistration, and EurekaRegistration is in turn handed to EurekaAutoServiceRegistration. That made me suspect the auto-configuration was doing some of the work. Sure enough, EurekaClientAutoConfiguration declares several beans, including EurekaClient, ApplicationInfoManager and EurekaRegistration.

@Configuration(proxyBeanMethods = false)

@ConditionalOnMissingRefreshScope

protected static class EurekaClientConfiguration {

@Autowired

private ApplicationContext context;

@Autowired

private AbstractDiscoveryClientOptionalArgs<?> optionalArgs;

@Bean(destroyMethod = "shutdown")

@ConditionalOnMissingBean(value = EurekaClient.class,

search = SearchStrategy.CURRENT)

public EurekaClient eurekaClient(ApplicationInfoManager manager,

EurekaClientConfig config) {

return new CloudEurekaClient(manager, config, this.optionalArgs,

this.context);

}

@Bean

@ConditionalOnMissingBean(value = ApplicationInfoManager.class,

search = SearchStrategy.CURRENT)

public ApplicationInfoManager eurekaApplicationInfoManager(

EurekaInstanceConfig config) {

InstanceInfo instanceInfo = new InstanceInfoFactory().create(config);

return new ApplicationInfoManager(config, instanceInfo);

}

@Bean

@ConditionalOnBean(AutoServiceRegistrationProperties.class)

@ConditionalOnProperty(

value = "spring.cloud.service-registry.auto-registration.enabled",

matchIfMissing = true)

public EurekaRegistration eurekaRegistration(EurekaClient eurekaClient,

CloudEurekaInstanceConfig instanceConfig,

ApplicationInfoManager applicationInfoManager, @Autowired(

required = false) ObjectProvider<HealthCheckHandler> healthCheckHandler) {

return EurekaRegistration.builder(instanceConfig).with(applicationInfoManager)

.with(eurekaClient).with(healthCheckHandler).build();

}

}

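One important bean stands out among those created at startup: CloudEurekaClient. Judging by its name, it is a client-side utility class that handles communication with the Eureka server. A lot of source code follows the same pattern: either the constructor does a great deal of initialization and kicks off background work, or the work is driven by asynchronous events.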

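Next, let's look at how CloudEurekaClient is initialized. Its constructor calls the parent constructor through super, which is the DiscoveryClient constructor. The final DiscoveryClient constructor is very long. Much of it can be skipped, since most of it is initialization work, such as setting up several scheduled tasks: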

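- heartbeatExecutor: the heartbeat scheduled task

- cacheRefreshExecutor: periodically synchronizes the instance list from the server

- initScheduledTasks()

  - sets up the heartbeat mechanism

  - periodically reports the service status

  - instantiates the StatusChangeListener instance-status listener through an anonymous inner class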

@Inject

DiscoveryClient(ApplicationInfoManager applicationInfoManager, EurekaClientConfig config, AbstractDiscoveryClientOptionalArgs args,

Provider<BackupRegistry> backupRegistryProvider, EndpointRandomizer endpointRandomizer) {

// ....... build this service instance's information, and so on

// Whether to fetch service address information from the Eureka server

if (config.shouldFetchRegistry()) {

this.registryStalenessMonitor = new ThresholdLevelsMetric(this, METRIC_REGISTRY_PREFIX + "lastUpdateSec_", new long[]{15L, 30L, 60L, 120L, 240L, 480L});

} else {

this.registryStalenessMonitor = ThresholdLevelsMetric.NO_OP_METRIC;

}

// Whether to register with the Eureka server

if (config.shouldRegisterWithEureka()) {

this.heartbeatStalenessMonitor = new ThresholdLevelsMetric(this, METRIC_REGISTRATION_PREFIX + "lastHeartbeatSec_", new long[]{15L, 30L, 60L, 120L, 240L, 480L});

} else {

this.heartbeatStalenessMonitor = ThresholdLevelsMetric.NO_OP_METRIC;

}

logger.info("Initializing Eureka in region {}", clientConfig.getRegion());

// If the client neither registers nor fetches the registry, initialization ends here

if (!config.shouldRegisterWithEureka() && !config.shouldFetchRegistry()) {

logger.info("Client configured to neither register nor query for data.");

scheduler = null;

heartbeatExecutor = null;

cacheRefreshExecutor = null;

eurekaTransport = null;

instanceRegionChecker = new InstanceRegionChecker(new PropertyBasedAzToRegionMapper(config), clientConfig.getRegion());

// This is a bit of hack to allow for existing code using DiscoveryManager.getInstance()

// to work with DI'd DiscoveryClient

DiscoveryManager.getInstance().setDiscoveryClient(this);

DiscoveryManager.getInstance().setEurekaClientConfig(config);

initTimestampMs = System.currentTimeMillis();

logger.info("Discovery Client initialized at timestamp {} with initial instances count: {}",

initTimestampMs, this.getApplications().size());

return; // no need to setup up an network tasks and we are done

}

try {

// default size of 2 - 1 each for heartbeat and cacheRefresh

// Create the scheduler thread pool, one thread each for the two tasks below

scheduler = Executors.newScheduledThreadPool(2,

new ThreadFactoryBuilder()

.setNameFormat("DiscoveryClient-%d")

.setDaemon(true)

.build());

heartbeatExecutor = new ThreadPoolExecutor(

1, clientConfig.getHeartbeatExecutorThreadPoolSize(), 0, TimeUnit.SECONDS,

new SynchronousQueue<Runnable>(),

new ThreadFactoryBuilder()

.setNameFormat("DiscoveryClient-HeartbeatExecutor-%d")

.setDaemon(true)

.build()

); // use direct handoff

cacheRefreshExecutor = new ThreadPoolExecutor(

1, clientConfig.getCacheRefreshExecutorThreadPoolSize(), 0, TimeUnit.SECONDS,

new SynchronousQueue<Runnable>(),

new ThreadFactoryBuilder()

.setNameFormat("DiscoveryClient-CacheRefreshExecutor-%d")

.setDaemon(true)

.build()

); // use direct handoff

eurekaTransport = new EurekaTransport();

scheduleServerEndpointTask(eurekaTransport, args);

AzToRegionMapper azToRegionMapper;

if (clientConfig.shouldUseDnsForFetchingServiceUrls()) {

azToRegionMapper = new DNSBasedAzToRegionMapper(clientConfig);

} else {

azToRegionMapper = new PropertyBasedAzToRegionMapper(clientConfig);

}

if (null != remoteRegionsToFetch.get()) {

azToRegionMapper.setRegionsToFetch(remoteRegionsToFetch.get().split(","));

}

instanceRegionChecker = new InstanceRegionChecker(azToRegionMapper, clientConfig.getRegion());

} catch (Throwable e) {

throw new RuntimeException("Failed to initialize DiscoveryClient!", e);

}

if (clientConfig.shouldFetchRegistry() && !fetchRegistry(false)) {

fetchRegistryFromBackup();

}

// call and execute the pre registration handler before all background tasks (inc registration) is started

if (this.preRegistrationHandler != null) {

this.preRegistrationHandler.beforeRegistration();

}

// If the client registers with the Eureka server and forced registration at init is enabled, call register() to register now; this is disabled by default

if (clientConfig.shouldRegisterWithEureka() && clientConfig.shouldEnforceRegistrationAtInit()) {

try {

if (!register() ) {

throw new IllegalStateException("Registration error at startup. Invalid server response.");

}

} catch (Throwable th) {

logger.error("Registration error at startup: {}", th.getMessage());

throw new IllegalStateException(th);

}

}

// finally, init the schedule tasks (e.g. cluster resolvers, heartbeat, instanceInfo replicator, fetch

initScheduledTasks();

try {

Monitors.registerObject(this);

} catch (Throwable e) {

logger.warn("Cannot register timers", e);

}

// This is a bit of hack to allow for existing code using DiscoveryManager.getInstance()

// to work with DI'd DiscoveryClient

DiscoveryManager.getInstance().setDiscoveryClient(this);

DiscoveryManager.getInstance().setEurekaClientConfig(config);

initTimestampMs = System.currentTimeMillis();

logger.info("Discovery Client initialized at timestamp {} with initial instances count: {}",

initTimestampMs, this.getApplications().size());

}

As the code above shows, the constructor finally calls initScheduledTasks to start the scheduled tasks.

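- If fetching the registry is enabled, the cacheRefreshExecutor scheduled task is started.

- If registering with Eureka is enabled, several things need to be done:

  - Set up the heartbeat mechanism.

  - Instantiate the StatusChangeListener instance-status listener through an anonymous inner class. This is where the reg.getApplicationInfoManager().setInstanceStatus call we saw earlier during startup takes effect, because it invokes the listener's notify method.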

private void initScheduledTasks() {

// If fetching the registry is enabled, start the cacheRefreshExecutor scheduled task

if (clientConfig.shouldFetchRegistry()) {

// registry cache refresh timer

int registryFetchIntervalSeconds = clientConfig.getRegistryFetchIntervalSeconds();

int expBackOffBound = clientConfig.getCacheRefreshExecutorExponentialBackOffBound();

cacheRefreshTask = new TimedSupervisorTask(

"cacheRefresh",

scheduler,

cacheRefreshExecutor,

registryFetchIntervalSeconds,

TimeUnit.SECONDS,

expBackOffBound,

new CacheRefreshThread()

);

scheduler.schedule(

cacheRefreshTask,

registryFetchIntervalSeconds, TimeUnit.SECONDS);

}

// If registering with Eureka is enabled, several things need to be done

if (clientConfig.shouldRegisterWithEureka()) {

int renewalIntervalInSecs = instanceInfo.getLeaseInfo().getRenewalIntervalInSecs();

int expBackOffBound = clientConfig.getHeartbeatExecutorExponentialBackOffBound();

logger.info("Starting heartbeat executor: " + "renew interval is: {}", renewalIntervalInSecs);

// Heartbeat timer: start the heartbeat task

heartbeatTask = new TimedSupervisorTask(

"heartbeat",

scheduler,

heartbeatExecutor,

renewalIntervalInSecs,

TimeUnit.SECONDS,

expBackOffBound,

new HeartbeatThread()

);

scheduler.schedule(

heartbeatTask,

renewalIntervalInSecs, TimeUnit.SECONDS);

// InstanceInfo replicator: replicates instance information

instanceInfoReplicator = new InstanceInfoReplicator(

this,

instanceInfo,

clientConfig.getInstanceInfoReplicationIntervalSeconds(),

2); // burstSize

statusChangeListener = new ApplicationInfoManager.StatusChangeListener() {

@Override

public String getId() {

return "statusChangeListener";

}

@Override

public void notify(StatusChangeEvent statusChangeEvent) {

if (InstanceStatus.DOWN == statusChangeEvent.getStatus() ||

InstanceStatus.DOWN == statusChangeEvent.getPreviousStatus()) {

// log at warn level if DOWN was involved

logger.warn("Saw local status change event {}", statusChangeEvent);

} else {

logger.info("Saw local status change event {}", statusChangeEvent);

}

instanceInfoReplicator.onDemandUpdate();

}

};

// Register the listener for instance status changes

if (clientConfig.shouldOnDemandUpdateStatusChange()) {

applicationInfoManager.registerStatusChangeListener(statusChangeListener);

}

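// Start the instance info replicator: a scheduled task that first runs after an initial delay (40 seconds by default) and then periodically (every 30 seconds by default), re-registering the instance if its information has changed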

instanceInfoReplicator.start(clientConfig.getInitialInstanceInfoReplicationIntervalSeconds());

} else {

logger.info("Not registering with Eureka server per configuration");

}

}
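The listener wiring in initScheduledTasks can be sketched in isolation as follows. MiniInfoManager and its StatusListener interface are hypothetical simplifications made up for this example; the sketch assumes, as the real code appears to, that listeners are notified only when the status actually changes.

```java
import java.util.Map;
import java.util.concurrent.ConcurrentHashMap;
import java.util.concurrent.atomic.AtomicInteger;

// Hypothetical, simplified model of a status holder with change listeners.
class MiniInfoManager {
    interface StatusListener {
        void onChange(String previous, String current);
    }

    private final Map<String, StatusListener> listeners = new ConcurrentHashMap<>();
    private String status = "STARTING";

    void registerListener(String id, StatusListener listener) {
        listeners.put(id, listener);
    }

    synchronized void setInstanceStatus(String next) {
        String previous = status;
        if (previous.equals(next)) {
            return;                       // nothing changed, so no event is fired
        }
        status = next;
        for (StatusListener listener : listeners.values()) {
            listener.onChange(previous, next);
        }
    }
}

class StatusListenerDemo {
    // Counts how many times the listener would trigger an on-demand update.
    static int countUpdates() {
        AtomicInteger onDemandUpdates = new AtomicInteger();
        MiniInfoManager manager = new MiniInfoManager();
        manager.registerListener("statusChangeListener",
                (previous, current) -> onDemandUpdates.incrementAndGet());
        manager.setInstanceStatus("UP");
        manager.setInstanceStatus("UP");   // unchanged, ignored
        manager.setInstanceStatus("DOWN");
        return onDemandUpdates.get();
    }
}
```

In this model StatusListenerDemo.countUpdates() counts two updates, one for each real transition; the listener fires for a transition to DOWN as well, matching the notify code above, which only changes the log level when DOWN is involved.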

instanceInfoReplicator.onDemandUpdate(): when the instance data has changed, this method pushes the update to the service registry.

public boolean onDemandUpdate() {

// Rate limiting check

if (rateLimiter.acquire(burstSize, allowedRatePerMinute)) {

if (!scheduler.isShutdown()) {

// Submit a task

scheduler.submit(new Runnable() {

@Override

public void run() {

logger.debug("Executing on-demand update of local InstanceInfo");

// Fetch the previously scheduled task (the periodic update submitted from start, or rescheduled by run) and cancel it if it has not completed yet

Future latestPeriodic = scheduledPeriodicRef.get();

if (latestPeriodic != null && !latestPeriodic.isDone()) {

logger.debug("Canceling the latest scheduled update, it will be rescheduled at the end of on demand update");

latestPeriodic.cancel(false); // cancel without interrupting the task if it is already running

}

// Call run() directly to replicate now; its finally block schedules the next periodic run

InstanceInfoReplicator.this.run();

}

});

return true;

} else {

logger.warn("Ignoring onDemand update due to stopped scheduler");

return false;

}

} else {

logger.warn("Ignoring onDemand update due to rate limiter");

return false;

}

}

Next we step into InstanceInfoReplicator#run:

public void run() {

try {

discoveryClient.refreshInstanceInfo();

Long dirtyTimestamp = instanceInfo.isDirtyWithTime();

if (dirtyTimestamp != null) {

discoveryClient.register();

instanceInfo.unsetIsDirty(dirtyTimestamp);

}

} catch (Throwable t) {

logger.warn("There was a problem with the instance info replicator", t);

} finally {

Future next = scheduler.schedule(this, replicationIntervalSeconds, TimeUnit.SECONDS);

scheduledPeriodicRef.set(next);

}

}
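The reason run() passes the observed timestamp back into unsetIsDirty can be shown with a small model. DirtyFlag is a hypothetical class written for this example, and its comparison rule (clear the flag only if no newer change has been recorded) is an assumption about the intent of the real InstanceInfo methods.

```java
// Hypothetical model of a dirty flag that carries the time of the last change.
class DirtyFlag {
    private boolean dirty;
    private long lastDirtyTimestamp;

    synchronized void markDirty(long now) {
        dirty = true;
        lastDirtyTimestamp = now;
    }

    // Returns the timestamp of the last change if dirty, otherwise null.
    synchronized Long isDirtyWithTime() {
        return dirty ? lastDirtyTimestamp : null;
    }

    // Clears the flag only if nothing changed after the observed timestamp.
    synchronized void unsetIsDirty(long observedTimestamp) {
        if (lastDirtyTimestamp <= observedTimestamp) {
            dirty = false;
        }
    }

    synchronized boolean isDirty() {
        return dirty;
    }
}

class DirtyFlagDemo {
    // A change that arrives while registration is in flight must not be lost.
    static boolean staysDirtyAfterConcurrentChange() {
        DirtyFlag flag = new DirtyFlag();
        flag.markDirty(100L);
        Long seen = flag.isDirtyWithTime(); // replicator reads 100 and starts registering
        flag.markDirty(200L);               // instance changes again meanwhile
        flag.unsetIsDirty(seen);            // 200 > 100, so the flag stays set
        return flag.isDirty();
    }

    static boolean clearedWhenNoNewChange() {
        DirtyFlag flag = new DirtyFlag();
        flag.markDirty(100L);
        Long seen = flag.isDirtyWithTime();
        flag.unsetIsDirty(seen);
        return !flag.isDirty();
    }
}
```

With a plain boolean the second change would be wiped out by the reset, and the next periodic run would skip a registration that is still needed.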

At last we have found where registration actually happens: DiscoveryClient.register.

boolean register() throws Throwable {

logger.info(PREFIX + "{}: registering service...", appPathIdentifier);

EurekaHttpResponse<Void> httpResponse;

try {

httpResponse = eurekaTransport.registrationClient.register(instanceInfo);

} catch (Exception e) {

logger.warn(PREFIX + "{} - registration failed {}", appPathIdentifier, e.getMessage(), e);

throw e;

}

if (logger.isInfoEnabled()) {

logger.info(PREFIX + "{} - registration status: {}", appPathIdentifier, httpResponse.getStatusCode());

}

return httpResponse.getStatusCode() == Status.NO_CONTENT.getStatusCode();

}

At this point we need to know what eurekaTransport.registrationClient actually is. To answer that, we first need to know what eurekaTransport.transportClientFactory is.

The DiscoveryClient constructor calls scheduleServerEndpointTask, and that method initializes eurekaTransport:

private void scheduleServerEndpointTask(EurekaTransport eurekaTransport,

AbstractDiscoveryClientOptionalArgs args) {

// .......

// Ignore the raw types warnings since the client filter interface changed between jersey 1/2

@SuppressWarnings("rawtypes")

TransportClientFactories transportClientFactories = argsTransportClientFactories == null

? new Jersey1TransportClientFactories()

: argsTransportClientFactories;

Optional<SSLContext> sslContext = args == null

? Optional.empty()

: args.getSSLContext();

Optional<HostnameVerifier> hostnameVerifier = args == null

? Optional.empty()

: args.getHostnameVerifier();

// If the transport factory was not supplied with args, assume they are using jersey 1 for passivity

eurekaTransport.transportClientFactory = providedJerseyClient == null

? transportClientFactories.newTransportClientFactory(clientConfig, additionalFilters, applicationInfoManager.getInfo(), sslContext, hostnameVerifier)

: transportClientFactories.newTransportClientFactory(additionalFilters, providedJerseyClient);

// .........

}

Here argsTransportClientFactories is null at first, so a Jersey1TransportClientFactories is created, and Jersey1TransportClientFactories.newTransportClientFactory is then called:

@Override

public TransportClientFactory newTransportClientFactory(EurekaClientConfig clientConfig,

Collection<ClientFilter> additionalFilters, InstanceInfo myInstanceInfo, Optional<SSLContext> sslContext,

Optional<HostnameVerifier> hostnameVerifier) {

final TransportClientFactory jerseyFactory = JerseyEurekaHttpClientFactory.create(

clientConfig,

additionalFilters,

myInstanceInfo,

new EurekaClientIdentity(myInstanceInfo.getIPAddr()),

sslContext,

hostnameVerifier

);

final TransportClientFactory metricsFactory = MetricsCollectingEurekaHttpClient.createFactory(jerseyFactory);

return new TransportClientFactory() {

@Override

public EurekaHttpClient newClient(EurekaEndpoint serviceUrl) {

return metricsFactory.newClient(serviceUrl);

}

@Override

public void shutdown() {

metricsFactory.shutdown();

jerseyFactory.shutdown();

}

};

}

This in turn calls MetricsCollectingEurekaHttpClient.createFactory(jerseyFactory):

public static TransportClientFactory createFactory(final TransportClientFactory delegateFactory) {

final Map<RequestType, EurekaHttpClientRequestMetrics> metricsByRequestType = initializeMetrics();

final ExceptionsMetric exceptionMetrics = new ExceptionsMetric(EurekaClientNames.METRIC_TRANSPORT_PREFIX + "exceptions");

return new TransportClientFactory() {

@Override

public EurekaHttpClient newClient(EurekaEndpoint endpoint) {

return new MetricsCollectingEurekaHttpClient(

delegateFactory.newClient(endpoint), // note: this delegateFactory is the JerseyEurekaHttpClientFactory passed in above

metricsByRequestType,

exceptionMetrics,

false

);

}

@Override

public void shutdown() {

shutdownMetrics(metricsByRequestType);

exceptionMetrics.shutdown();

}

};

}

So eurekaTransport.transportClientFactory is the anonymous object shown below, and its metricsFactory is the anonymous inner class returned by the createFactory code above:

new TransportClientFactory() {

@Override

public EurekaHttpClient newClient(EurekaEndpoint serviceUrl) {

return metricsFactory.newClient(serviceUrl);

}

@Override

public void shutdown() {

metricsFactory.shutdown();

jerseyFactory.shutdown();

}

};

Now back to another part of scheduleServerEndpointTask:

if (clientConfig.shouldRegisterWithEureka()) {

EurekaHttpClientFactory newRegistrationClientFactory = null;

EurekaHttpClient newRegistrationClient = null;

try {

newRegistrationClientFactory = EurekaHttpClients.registrationClientFactory(

eurekaTransport.bootstrapResolver,

eurekaTransport.transportClientFactory,

transportConfig

);

newRegistrationClient = newRegistrationClientFactory.newClient();

} catch (Exception e) {

logger.warn("Transport initialization failure", e);

}

eurekaTransport.registrationClientFactory = newRegistrationClientFactory;

eurekaTransport.registrationClient = newRegistrationClient;

}

EurekaHttpClients.registrationClientFactory also returns an anonymous inner class like the ones above, and newRegistrationClientFactory.newClient() then yields a SessionedEurekaHttpClient.

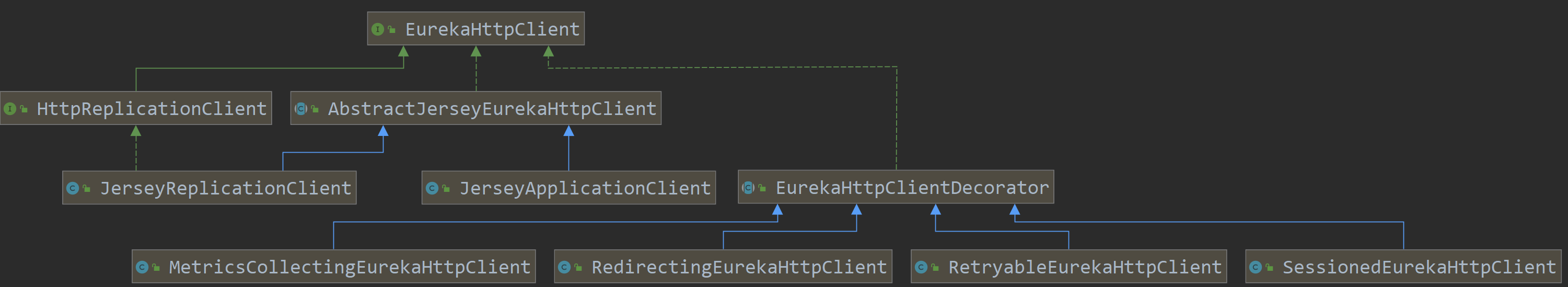

Back in DiscoveryClient#register, we find that SessionedEurekaHttpClient does not define register itself, so we need to look at its class diagram:

Its parent class is EurekaHttpClientDecorator, so let's step into that:

@Override

public EurekaHttpResponse<Void> register(final InstanceInfo info) {

return execute(new RequestExecutor<Void>() {

@Override

public EurekaHttpResponse<Void> execute(EurekaHttpClient delegate) {

return delegate.register(info);

}

@Override

public RequestType getRequestType() {

return RequestType.Register;

}

});

}

The call passes through this method several times, once per decorator, in the order SessionedEurekaHttpClient ---> RetryableEurekaHttpClient ---> RedirectingEurekaHttpClient ---> MetricsCollectingEurekaHttpClient, and finally reaches AbstractJerseyEurekaHttpClient#register.
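The layering just described is an instance of the decorator pattern, and a stripped-down sketch makes the call order easier to see. MiniHttpClient and NamedDecorator are hypothetical types invented for this example; the real decorators add session rotation, retries, redirect handling and metrics, none of which is modeled here.

```java
import java.util.ArrayList;
import java.util.List;

// Hypothetical minimal client interface; the real EurekaHttpClient has many more methods.
interface MiniHttpClient {
    int register(String instanceId);
}

// Records its own name, then forwards the call to the client it wraps.
class NamedDecorator implements MiniHttpClient {
    private final String name;
    private final MiniHttpClient delegate;
    private final List<String> trace;

    NamedDecorator(String name, MiniHttpClient delegate, List<String> trace) {
        this.name = name;
        this.delegate = delegate;
        this.trace = trace;
    }

    @Override
    public int register(String instanceId) {
        trace.add(name);
        return delegate.register(instanceId);
    }
}

class DecoratorChainDemo {
    static List<String> trace() {
        List<String> trace = new ArrayList<>();
        // Innermost client: stands in for the Jersey-based HTTP call.
        MiniHttpClient jersey = instanceId -> {
            trace.add("Jersey");
            return 204;
        };
        // Wrap from the inside out, so the last wrapper is the first one called.
        MiniHttpClient client = jersey;
        String[] layers = {"MetricsCollecting", "Redirecting", "Retryable", "Sessioned"};
        for (String layer : layers) {
            client = new NamedDecorator(layer, client, trace);
        }
        int status = client.register("app-1");
        trace.add("status=" + status);
        return trace;
    }
}
```

The recorded trace lists the outermost wrapper first and the Jersey stand-in last, which is the same order a debugger shows when stepping from DiscoveryClient#register down to the HTTP call.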

You really need to trace this part yourself; if you get stuck, step through it with breakpoints:

@Override

public EurekaHttpResponse<Void> register(InstanceInfo info) {

String urlPath = "apps/" + info.getAppName();

ClientResponse response = null;

try {

Builder resourceBuilder = jerseyClient.resource(serviceUrl).path(urlPath).getRequestBuilder();

addExtraHeaders(resourceBuilder);

response = resourceBuilder

.header("Accept-Encoding", "gzip")

.type(MediaType.APPLICATION_JSON_TYPE)

.accept(MediaType.APPLICATION_JSON)

.post(ClientResponse.class, info);

return anEurekaHttpResponse(response.getStatus()).headers(headersOf(response)).build();

} finally {

if (logger.isDebugEnabled()) {

logger.debug("Jersey HTTP POST {}/{} with instance {}; statusCode={}", serviceUrl, urlPath, info.getId(),

response == null ? "N/A" : response.getStatus());

}

if (response != null) {

response.close();

}

}

}

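Clearly this issues an HTTP request to the Eureka Server's apps/${APP_NAME} endpoint, sending the current instance's information to Eureka Server to be stored. We now have a fairly complete picture of how Spring Cloud Eureka registers service information with Eureka Server at startup.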

To summarize, there are two places where the Eureka Client performs registration:

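- When Spring Boot starts, auto-configuration puts CloudEurekaClient into the container and runs its constructor. The constructor starts a scheduled task that first runs after an initial delay (40 seconds by default) and then periodically (every 30 seconds by default), checking whether the instance information has changed and, if so, starting the registration flow (InstanceInfoReplicator#start).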

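- When Spring Boot starts, the refresh method eventually leads to StatusChangeListener.notify, which listens for service status changes. When the listener receives the event, it performs the registration (InstanceInfoReplicator#onDemandUpdate).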

The flow of the registration entry point and of initiating the registration is shown below:

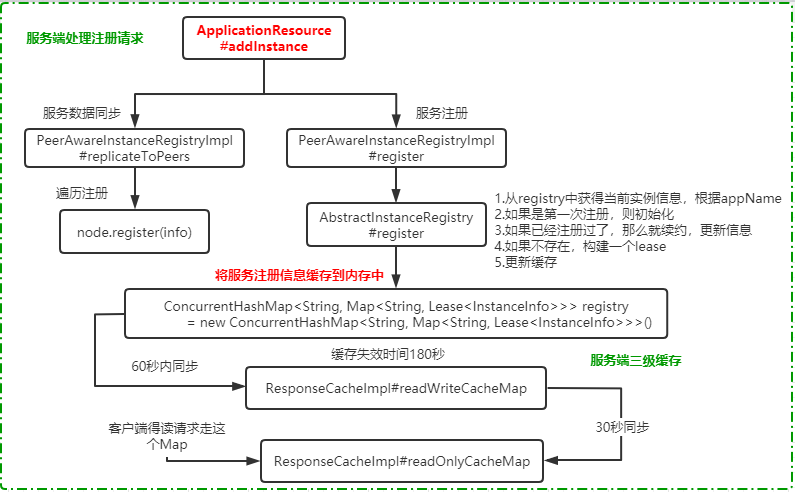

How Eureka Server handles the request:

The server must store the instance data it receives, so let's look at the handling on the Eureka Server side. The request entry point is com.netflix.eureka.resources.ApplicationResource.addInstance().

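Note that the REST service here is implemented with Jersey. Jersey implements the JAX-RS standard and provides support for building REST services; if you are interested, you can explore it on your own.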

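When the Eureka Client calls register, ApplicationResource.addInstance is invoked on the server. In other words, registration is a POST request carrying the current instance information, handled by the addInstance method of ApplicationResource.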

@POST

@Consumes({"application/json", "application/xml"})

public Response addInstance(InstanceInfo info,

@HeaderParam(PeerEurekaNode.HEADER_REPLICATION) String isReplication) {

logger.debug("Registering instance {} (replication={})", info.getId(), isReplication);

// ........ code omitted

registry.register(info, "true".equals(isReplication));

return Response.status(204).build(); // 204 to be backwards compatible

}

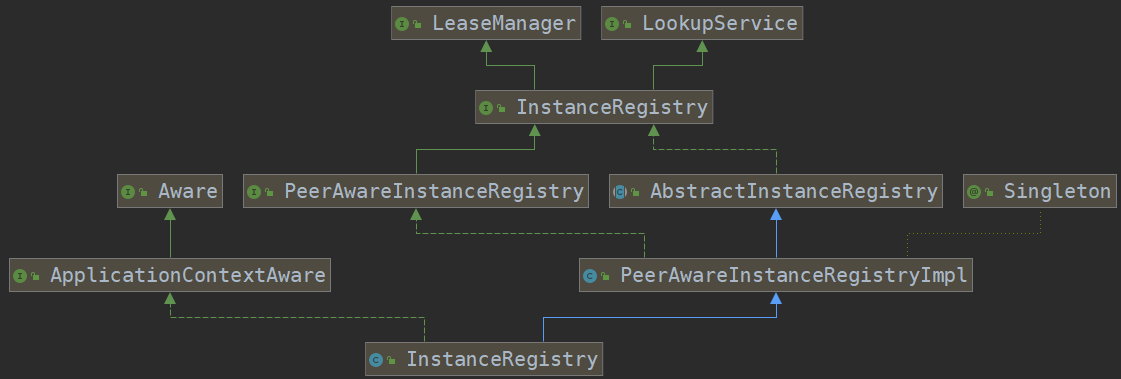

registry.register has several implementations, so let's look at the class diagram:

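The class diagram shows that the top-level interfaces of PeerAwareInstanceRegistry are LeaseManager and LookupService. LookupService defines the basic behavior for discovering instances, while LeaseManager defines how client registration, renewal, cancellation and similar operations are handled.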

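addInstance ends up calling PeerAwareInstanceRegistryImpl.register:

- leaseDuration is the lease expiration time, 90s by default: if the server receives no heartbeat from a client for more than 90s, it evicts that instance

- super.register is called to register the instance

- The information is replicated to the other machines in the Eureka Server cluster. The synchronization is simple: get all nodes in the cluster and register with each of them in turn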

@Override

public void register(final InstanceInfo info, final boolean isReplication) {

int leaseDuration = Lease.DEFAULT_DURATION_IN_SECS;

if (info.getLeaseInfo() != null && info.getLeaseInfo().getDurationInSecs() > 0) {

leaseDuration = info.getLeaseInfo().getDurationInSecs(); // use the client's own lease duration if it defines one

}

// Register the instance

super.register(info, leaseDuration, isReplication);

// Replicate to the other nodes in the Eureka Server cluster

replicateToPeers(Action.Register, info.getAppName(), info.getId(), info, null, isReplication);

}

AbstractInstanceRegistry#register: put simply, registration on the Eureka Server stores the instance data sent by the client in a ConcurrentHashMap held by the server.

public void register(InstanceInfo registrant, int leaseDuration, boolean isReplication) {

try {

read.lock();

// Look up the instances registered under this appName in the registry

Map<String, Lease<InstanceInfo>> gMap = registry.get(registrant.getAppName());

REGISTER.increment(isReplication); // add the registration to the monitoring counters

if (gMap == null) { // first registration for this appName: initialize a ConcurrentHashMap

final ConcurrentHashMap<String, Lease<InstanceInfo>> gNewMap = new ConcurrentHashMap<String, Lease<InstanceInfo>>();

gMap = registry.putIfAbsent(registrant.getAppName(), gNewMap);

if (gMap == null) {

gMap = gNewMap;

}

}

// Look up the existing Lease in gMap. A Lease wraps the service provider's instance information and provides lease management for that instance

Lease<InstanceInfo> existingLease = gMap.get(registrant.getId());

// Retain the last dirty timestamp without overwriting it, if there is already a lease

// If the instance already exists, compare it with the instance sent by the client; the one with the newer timestamp is the valid instance information

if (existingLease != null && (existingLease.getHolder() != null)) {

Long existingLastDirtyTimestamp = existingLease.getHolder().getLastDirtyTimestamp();

Long registrationLastDirtyTimestamp = registrant.getLastDirtyTimestamp();

logger.debug("Existing lease found (existing={}, provided={}", existingLastDirtyTimestamp, registrationLastDirtyTimestamp);

// this is a > instead of a >= because if the timestamps are equal, we still take the remote transmitted

// InstanceInfo instead of the server local copy.

if (existingLastDirtyTimestamp > registrationLastDirtyTimestamp) {

logger.warn("There is an existing lease and the existing lease's dirty timestamp {} is greater" +

" than the one that is being registered {}", existingLastDirtyTimestamp, registrationLastDirtyTimestamp);

logger.warn("Using the existing instanceInfo instead of the new instanceInfo as the registrant");

registrant = existingLease.getHolder();

}

} else {

// The lease does not exist and hence it is a new registration

synchronized (lock) { // the lease does not exist yet, so this branch is taken

if (this.expectedNumberOfClientsSendingRenews > 0) {

// Since the client wants to register it, increase the number of clients sending renews

this.expectedNumberOfClientsSendingRenews = this.expectedNumberOfClientsSendingRenews + 1;

updateRenewsPerMinThreshold();

}

}

logger.debug("No previous lease information found; it is new registration");

}

// Build a lease

Lease<InstanceInfo> lease = new Lease<InstanceInfo>(registrant, leaseDuration);

if (existingLease != null) {

// If a Lease already existed, keep its serviceUpTimestamp so the service-up time always stays that of the first registration

lease.setServiceUpTimestamp(existingLease.getServiceUpTimestamp());

}

gMap.put(registrant.getId(), lease);

recentRegisteredQueue.add(new Pair<Long, String>( // add to the queue of recent registrations

System.currentTimeMillis(),

registrant.getAppName() + "(" + registrant.getId() + ")"));

// This is where the initial state transfer of overridden status happens

// Check whether an overridden status exists for the instance; if so, apply it over the original status

if (!InstanceStatus.UNKNOWN.equals(registrant.getOverriddenStatus())) {

logger.debug("Found overridden status {} for instance {}. Checking to see if needs to be add to the "

+ "overrides", registrant.getOverriddenStatus(), registrant.getId());

if (!overriddenInstanceStatusMap.containsKey(registrant.getId())) {

logger.info("Not found overridden id {} and hence adding it", registrant.getId());

overriddenInstanceStatusMap.put(registrant.getId(), registrant.getOverriddenStatus());

}

}

InstanceStatus overriddenStatusFromMap = overriddenInstanceStatusMap.get(registrant.getId());

if (overriddenStatusFromMap != null) {

logger.info("Storing overridden status {} from map", overriddenStatusFromMap);

registrant.setOverriddenStatus(overriddenStatusFromMap);

}

// Set the status based on the overridden status rules

InstanceStatus overriddenInstanceStatus = getOverriddenInstanceStatus(registrant, existingLease, isReplication);

registrant.setStatusWithoutDirty(overriddenInstanceStatus);

// Get the instanceStatus and check whether it is UP

// If the lease is registered with UP status, set lease service up timestamp

if (InstanceStatus.UP.equals(registrant.getStatus())) {

lease.serviceUp();

}

// Set the action type to ADDED

registrant.setActionType(ActionType.ADDED);

// Queue of recent lease changes: records every change to an instance and is used for incremental (delta) registry fetches

recentlyChangedQueue.add(new RecentlyChangedItem(lease));

registrant.setLastUpdatedTimestamp();

// Invalidate the cache

invalidateCache(registrant.getAppName(), registrant.getVIPAddress(), registrant.getSecureVipAddress());

logger.info("Registered instance {}/{} with status {} (replication={})",

registrant.getAppName(), registrant.getId(), registrant.getStatus(), isReplication);

} finally {

read.unlock();

}

}

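That completes the detailed analysis of how registration is handled on both the client and the server. On the Eureka Server side, the client's address information is stored in a ConcurrentHashMap. In addition, a heartbeat mechanism is set up between the service provider and the registry to monitor the provider's health.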
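The two core ideas of the server-side register method, the nested map created with putIfAbsent and the "newer dirty timestamp wins" rule, can be condensed into a small sketch. MiniRegistry is a hypothetical class for illustration: it stores only a timestamp per instance where the real registry stores a Lease<InstanceInfo>, and it leaves out locking, overridden statuses, queues and cache invalidation.

```java
import java.util.Map;
import java.util.concurrent.ConcurrentHashMap;

// Hypothetical, heavily reduced model of the server-side registry.
class MiniRegistry {
    // appName -> (instanceId -> lastDirtyTimestamp)
    private final ConcurrentHashMap<String, Map<String, Long>> registry = new ConcurrentHashMap<>();

    // Registers an instance and returns the timestamp that ends up stored.
    long register(String appName, String instanceId, long lastDirtyTimestamp) {
        Map<String, Long> gMap = registry.get(appName);
        if (gMap == null) {
            // First registration for this app: create the inner map without
            // overwriting one that another thread may have just inserted.
            Map<String, Long> gNewMap = new ConcurrentHashMap<>();
            gMap = registry.putIfAbsent(appName, gNewMap);
            if (gMap == null) {
                gMap = gNewMap;
            }
        }
        Long existing = gMap.get(instanceId);
        // Strictly greater: on equal timestamps the incoming copy is taken.
        long winner = (existing != null && existing > lastDirtyTimestamp)
                ? existing
                : lastDirtyTimestamp;
        gMap.put(instanceId, winner);
        return winner;
    }

    int instanceCount(String appName) {
        Map<String, Long> gMap = registry.get(appName);
        return gMap == null ? 0 : gMap.size();
    }
}
```

For example, registering "order-1" with timestamp 200 and then again with 100 keeps 200, because the server-side copy is newer than the one being registered.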

Data synchronization between Eureka Server nodes:

After handling a registration, Eureka Server has to synchronize the data to the other nodes. Since Eureka has no notion of a leader, let's see how this is done:

private void replicateToPeers(Action action, String appName, String id,

InstanceInfo info /* optional */,

InstanceStatus newStatus /* optional */, boolean isReplication) {

Stopwatch tracer = action.getTimer().start();

try {

if (isReplication) {

numberOfReplicationsLastMin.increment();

}

// If it is a replication already, do not replicate again as this will create a poison replication

if (peerEurekaNodes == Collections.EMPTY_LIST || isReplication) {

return;

}

// The Eureka Server knows the other nodes from its configuration; iterate over them

for (final PeerEurekaNode node : peerEurekaNodes.getPeerEurekaNodes()) {

// If the url represents this host, do not replicate to yourself.

if (peerEurekaNodes.isThisMyUrl(node.getServiceUrl())) {

continue; // this is the current node itself, skip it

}

replicateInstanceActionsToPeers(action, appName, id, info, newStatus, node);

}

} finally {

tracer.stop();

}

}

Put plainly, synchronizing service information just means iterating over the peers and registering with each one.
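The role of the isReplication flag is easiest to see in a tiny in-memory cluster. MiniPeerNode is a hypothetical class made up for this sketch; real peers talk over HTTP and batch their replication tasks, which is not modeled here.

```java
import java.util.ArrayList;
import java.util.HashSet;
import java.util.List;
import java.util.Set;

// Hypothetical in-memory model of one Eureka Server node.
class MiniPeerNode {
    final String url;
    final Set<String> instances = new HashSet<>();
    final List<MiniPeerNode> peers = new ArrayList<>();
    int replicationsReceived;

    MiniPeerNode(String url) {
        this.url = url;
    }

    void register(String instanceId, boolean isReplication) {
        instances.add(instanceId);
        if (isReplication) {
            replicationsReceived++;
            return;                         // already a replica: never forward again
        }
        for (MiniPeerNode peer : peers) {
            if (peer.url.equals(url)) {
                continue;                   // do not replicate to yourself
            }
            peer.register(instanceId, true);
        }
    }
}

class ReplicationDemo {
    // Builds three nodes that all know the full peer list (including themselves)
    // and sends one client registration to the first node.
    static MiniPeerNode[] cluster() {
        MiniPeerNode[] nodes = {
            new MiniPeerNode("http://peer1"),
            new MiniPeerNode("http://peer2"),
            new MiniPeerNode("http://peer3")
        };
        for (MiniPeerNode node : nodes) {
            for (MiniPeerNode other : nodes) {
                node.peers.add(other);
            }
        }
        nodes[0].register("order-1", false);
        return nodes;
    }
}
```

Without the early return for replicated requests, peer2 would forward the registration back to peer1 and peer3, and the nodes would keep re-sending it to each other indefinitely.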

Registration storage on Eureka Server (multi-level cache design):

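Eureka Server keeps service registration information in three variables: registry, readWriteCacheMap and readOnlyCacheMap. By default, a scheduled task copies readWriteCacheMap into readOnlyCacheMap every 30s, and another evicts instances whose lease has not been renewed for more than 90s every 60s. Eureka Clients refresh their registry information from readOnlyCacheMap every 30s, whereas client registrations are written to registry. This avoids concurrency-related problems and gives an in-memory separation of reads and writes.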

registry stores all registered instance information. It lives in the AbstractInstanceRegistry class, as shown below:

public abstract class AbstractInstanceRegistry implements InstanceRegistry {

// cache of registered instances

private final ConcurrentHashMap<String, Map<String, Lease<InstanceInfo>>> registry

= new ConcurrentHashMap<String, Map<String, Lease<InstanceInfo>>>();

}

readWriteCacheMap and readOnlyCacheMap, the in-memory stores that separate reads from writes, live in the ResponseCacheImpl class:

public class ResponseCacheImpl implements ResponseCache {

// read-only cache

private final ConcurrentMap<Key, Value> readOnlyCacheMap = new ConcurrentHashMap<Key, Value>();

// read-write cache

private final LoadingCache<Key, Value> readWriteCacheMap;

// .......

}

Why a multi-level cache:

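Why design a multi-level cache here? The reason is simple: under large-scale registration and updates, having every read and write hit a single ConcurrentHashMap would cause lock contention and hurt performance. Eureka is an AP system and only needs eventual consistency, so it uses a multi-level cache to separate reads from writes. A registration writes directly to the in-memory registry and then proactively invalidates the read-write cache. The fetch-registry endpoint reads from the read-only cache first; on a miss it falls back to the read-write cache, and on a miss there it loads from the in-memory registry (this is more than a plain lookup and is somewhat involved). The read-write cache is also periodically written back to the read-only cache.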

- responseCacheUpdateIntervalMs: the interval of the timer that refreshes readOnlyCacheMap, 30 seconds by default

- responseCacheAutoExpirationInSeconds: the expiration time of readWriteCacheMap entries, 180 seconds by default.
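The read path and the invalidation rule described above can be sketched with three plain maps. MiniResponseCache is a hypothetical class for illustration only: it uses strings as payloads, omits expiration of the read-write cache, and exposes the periodic sync as a method to be called by hand instead of a timer.

```java
import java.util.Map;
import java.util.concurrent.ConcurrentHashMap;

// Hypothetical three-level store: registry -> read-write cache -> read-only cache.
class MiniResponseCache {
    private final Map<String, String> registry = new ConcurrentHashMap<>();       // source of truth
    private final Map<String, String> readWriteCache = new ConcurrentHashMap<>(); // loaded on demand
    private final Map<String, String> readOnlyCache = new ConcurrentHashMap<>();  // served to clients

    // Write path: update the registry, then invalidate the read-write cache entry.
    void register(String app, String payload) {
        registry.put(app, payload);
        readWriteCache.remove(app);
    }

    // Read path: read-only cache first, then read-write cache, then the registry.
    String get(String app) {
        String value = readOnlyCache.get(app);
        if (value == null) {
            value = readWriteCache.computeIfAbsent(app, registry::get);
            if (value != null) {
                readOnlyCache.put(app, value);
            }
        }
        return value;
    }

    // Stand-in for the periodic task that refreshes the read-only cache.
    void syncReadOnly() {
        for (String app : readOnlyCache.keySet()) {
            String fresh = readWriteCache.computeIfAbsent(app, registry::get);
            if (fresh != null && !fresh.equals(readOnlyCache.get(app))) {
                readOnlyCache.put(app, fresh);
            }
        }
    }
}
```

In this model a client that reads right after a second registration still receives the old payload until syncReadOnly runs, which is the staleness window that the 30-second refresh interval bounds in the real server.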

Cache invalidation on registration:

At the end of AbstractInstanceRegistry.register, invalidateCache(registrant.getAppName(), registrant.getVIPAddress(), registrant.getSecureVipAddress()); is called to invalidate the read-write cache.

Periodic cache synchronization:

The ResponseCacheImpl constructor starts a scheduled task that periodically checks the read-write cache for changes and updates the read-only cache accordingly.

ResponseCacheImpl(EurekaServerConfig serverConfig, ServerCodecs serverCodecs, AbstractInstanceRegistry registry) {

// .......

//

this.readWriteCacheMap =

CacheBuilder.newBuilder().initialCapacity(serverConfig.getInitialCapacityOfResponseCache())

.expireAfterWrite(serverConfig.getResponseCacheAutoExpirationInSeconds(), TimeUnit.SECONDS)

.removalListener(new RemovalListener<Key, Value>() {

@Override

public void onRemoval(RemovalNotification<Key, Value> notification) {

Key removedKey = notification.getKey();

if (removedKey.hasRegions()) {

Key cloneWithNoRegions = removedKey.cloneWithoutRegions();

regionSpecificKeys.remove(cloneWithNoRegions, removedKey);

}

}

})

.build(new CacheLoader<Key, Value>() {

@Override

public Value load(Key key) throws Exception {

if (key.hasRegions()) {

Key cloneWithNoRegions = key.cloneWithoutRegions();

regionSpecificKeys.put(cloneWithNoRegions, key);

}

Value value = generatePayload(key);

return value;

}

});

if (shouldUseReadOnlyResponseCache) {

timer.schedule(getCacheUpdateTask(),

new Date(((System.currentTimeMillis() / responseCacheUpdateIntervalMs) * responseCacheUpdateIntervalMs)

+ responseCacheUpdateIntervalMs),

responseCacheUpdateIntervalMs);

}

// .......

}
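
The Date passed to timer.schedule above is computed so that the first run lands on a multiple of the update interval. The helper below isolates that arithmetic; AlignedSchedule and firstRunMillis are hypothetical names used only for this sketch.

```java
/** Hypothetical helper that reproduces the first-run arithmetic of the cache update timer. */
class AlignedSchedule {
    /**
     * Returns the next multiple of intervalMs that lies strictly after nowMs.
     * Integer division drops the remainder, so the result is aligned to the interval.
     */
    static long firstRunMillis(long nowMs, long intervalMs) {
        return (nowMs / intervalMs) * intervalMs + intervalMs;
    }
}
```

With a 30s interval, a server that starts 1s after an aligned boundary waits 29s for the first sync, and later runs stay on the same 30s grid.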

Finally, the flowchart below summarizes how the server handles a registration request:

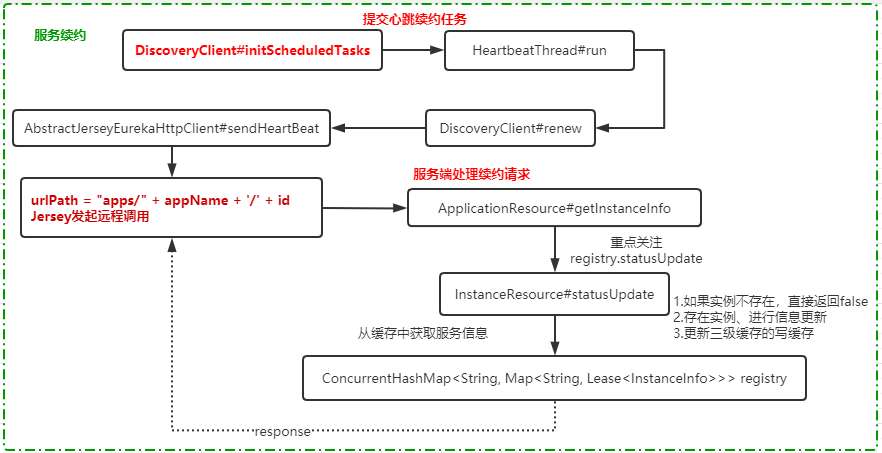

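Service renewal:

Service renewal is essentially a heartbeat mechanism: the client periodically sends a heartbeat to renew its lease. During client initialization, the DiscoveryClient constructor calls initScheduledTasks, which creates the heartbeat timer task: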

// Heartbeat timer

heartbeatTask = new TimedSupervisorTask(

"heartbeat",

scheduler,

heartbeatExecutor,

renewalIntervalInSecs, // 30

TimeUnit.SECONDS,

expBackOffBound, // 10

new HeartbeatThread()

);

scheduler.schedule(

heartbeatTask,

renewalIntervalInSecs, // 30

TimeUnit.SECONDS);

HeartbeatThread: the timer task runs a HeartbeatThread, which calls renew() on each run to renew the lease.

/**

* The heartbeat task that renews the lease in the given intervals.

*/

private class HeartbeatThread implements Runnable {

public void run() {

if (renew()) {

lastSuccessfulHeartbeatTimestamp = System.currentTimeMillis();

}

}

}

boolean renew() {

EurekaHttpResponse<InstanceInfo> httpResponse;

try {

httpResponse = eurekaTransport.registrationClient.sendHeartBeat(instanceInfo.getAppName(), instanceInfo.getId(), instanceInfo, null);

logger.debug(PREFIX + "{} - Heartbeat status: {}", appPathIdentifier, httpResponse.getStatusCode());

if (httpResponse.getStatusCode() == Status.NOT_FOUND.getStatusCode()) {

REREGISTER_COUNTER.increment();

logger.info(PREFIX + "{} - Re-registering apps/{}", appPathIdentifier, instanceInfo.getAppName());

long timestamp = instanceInfo.setIsDirtyWithTime();

boolean success = register();

if (success) {

instanceInfo.unsetIsDirty(timestamp);

}

return success;

}

return httpResponse.getStatusCode() == Status.OK.getStatusCode();

} catch (Throwable e) {

logger.error(PREFIX + "{} - was unable to send heartbeat!", appPathIdentifier, e);

return false;

}

}

@Override

public EurekaHttpResponse<InstanceInfo> sendHeartBeat(String appName, String id, InstanceInfo info, InstanceStatus overriddenStatus) {

String urlPath = "apps/" + appName + '/' + id;

ClientResponse response = null;

try {

WebResource webResource = jerseyClient.getClient().resource(serviceUrl)

.path(urlPath)

.queryParam("status", info.getStatus().toString())

.queryParam("lastDirtyTimestamp", info.getLastDirtyTimestamp().toString());

if (overriddenStatus != null) {

webResource = webResource.queryParam("overriddenstatus", overriddenStatus.name());

}

Builder requestBuilder = webResource.getRequestBuilder();

addExtraHeaders(requestBuilder);

response = requestBuilder.accept(MediaType.APPLICATION_JSON_TYPE).put(ClientResponse.class);

InstanceInfo infoFromPeer = null;

if (response.getStatus() == Status.CONFLICT.getStatusCode() && response.hasEntity()) {

infoFromPeer = response.getEntity(InstanceInfo.class);

}

return anEurekaHttpResponse(response.getStatus(), infoFromPeer).type(MediaType.APPLICATION_JSON_TYPE).build();

} finally {

if (logger.isDebugEnabled()) {

logger.debug("[heartbeat] Jersey HTTP PUT {}; statusCode={}", urlPath, response == null ? "N/A" : response.getStatus());

}

if (response != null) {

response.close();

}

}

}
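
The branching in renew() comes down to a mapping from the HTTP status of the heartbeat to the client's next step. The sketch below restates that mapping; HeartbeatOutcome, Action, and decide are hypothetical names for illustration and do not exist in Eureka.

```java
/** Hypothetical restatement of how renew() reacts to the heartbeat status code. */
class HeartbeatOutcome {
    enum Action { RENEWED, RE_REGISTER, FAILED }

    static Action decide(int statusCode) {
        if (statusCode == 404) {
            // The server does not know this instance: register again.
            return Action.RE_REGISTER;
        }
        if (statusCode == 200) {
            // The lease was renewed.
            return Action.RENEWED;
        }
        // Any other status counts as a failed heartbeat.
        return Action.FAILED;
    }
}
```

The 404 branch is what lets a client recover on its own after the server has evicted it or has restarted with an empty registry.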

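How the server handles a heartbeat request:

As the code above shows, the heartbeat is a PUT request to the path "apps/" + appName + '/' + id. ApplicationResource.getInstanceInfo returns an InstanceResource for that instance. Within InstanceResource, a PUT on this exact path is mapped to renewLease, which renews the lease through registry.renew; the same resource also defines statusUpdate on the status sub-path for updating an instance's status.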

@Path("{id}")

public InstanceResource getInstanceInfo(@PathParam("id") String id) {

return new InstanceResource(this, id, serverConfig, registry);

}

InstanceResource.statusUpdate(): the call to focus on here is registry.statusUpdate, which invokes AbstractInstanceRegistry.statusUpdate to update the information the server stores for the given service provider.

@PUT

@Path("status")

public Response statusUpdate(

@QueryParam("value") String newStatus,

@HeaderParam(PeerEurekaNode.HEADER_REPLICATION) String isReplication,

@QueryParam("lastDirtyTimestamp") String lastDirtyTimestamp) {

try { // check that the instance exists in the registry

if (registry.getInstanceByAppAndId(app.getName(), id) == null) {

logger.warn("Instance not found: {}/{}", app.getName(), id);

return Response.status(Status.NOT_FOUND).build();

} // update the status

boolean isSuccess = registry.statusUpdate(app.getName(), id,

InstanceStatus.valueOf(newStatus), lastDirtyTimestamp,

"true".equals(isReplication));

if (isSuccess) {

logger.info("Status updated: {} - {} - {}", app.getName(), id, newStatus);

return Response.ok().build();

} else {

logger.warn("Unable to update status: {} - {} - {}", app.getName(), id, newStatus);

return Response.serverError().build();

}

} catch (Throwable e) {

logger.error("Error updating instance {} for status {}", id,

newStatus);

return Response.serverError().build();

}

}

AbstractInstanceRegistry.statusUpdate: this method looks up the lease of the instance under the given application and then calls Lease.renew() to renew it.

@Override

public boolean statusUpdate(String appName, String id,

InstanceStatus newStatus, String lastDirtyTimestamp,

boolean isReplication) {

try {

read.lock();

// count this status update (metrics)

STATUS_UPDATE.increment(isReplication);

// look up the instance's lease in the local registry

Map<String, Lease<InstanceInfo>> gMap = registry.get(appName);

Lease<InstanceInfo> lease = null;

if (gMap != null) {

lease = gMap.get(id);

}

// the instance does not exist: return false to signal failure

if (lease == null) {

return false;

} else {

// renew the lease, which mainly refreshes the instance's last-update timestamp

lease.renew();

// get the instance info held by the lease

InstanceInfo info = lease.getHolder();

// Lease is always created with its instance info object.

// This log statement is provided as a safeguard, in case this invariant is violated.

if (info == null) {

logger.error("Found Lease without a holder for instance id {}", id);

}

// the instance info exists and its status has changed

if ((info != null) && !(info.getStatus().equals(newStatus))) {

// Mark service as UP if needed

if (InstanceStatus.UP.equals(newStatus)) {

// the new status is UP: call serviceUp(), which records when the service came up

lease.serviceUp();

}

// This is NAC overriden status

// record the instance id -> status mapping in the overridden-status map

overriddenInstanceStatusMap.put(id, newStatus);

// Set it for transfer of overridden status to replica on

// replica start up

// store the overridden status on the instance info

info.setOverriddenStatus(newStatus);

long replicaDirtyTimestamp = 0;

info.setStatusWithoutDirty(newStatus);

if (lastDirtyTimestamp != null) {

replicaDirtyTimestamp = Long.valueOf(lastDirtyTimestamp);

}

// If the replication's dirty timestamp is more than the existing one, just update

// it to the replica's (that is, when replicaDirtyTimestamp is newer than the instance's getLastDirtyTimestamp()).

if (replicaDirtyTimestamp > info.getLastDirtyTimestamp()) {

info.setLastDirtyTimestamp(replicaDirtyTimestamp);

}

info.setActionType(ActionType.MODIFIED);

recentlyChangedQueue.add(new RecentlyChangedItem(lease));

info.setLastUpdatedTimestamp();

// invalidate the read-write cache entries for this instance

invalidateCache(appName, info.getVIPAddress(), info.getSecureVipAddress());

}

return true;

}

} finally {

read.unlock();

}

}
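
What lease.renew() achieves is easier to see on a stripped-down lease. The class below is a simplified sketch under stated assumptions: SimpleLease and its fields are hypothetical, time is passed in explicitly to keep the example deterministic, and Eureka's own Lease class tracks more state than this.

```java
/** Simplified, hypothetical lease: renewed by heartbeats, expired once it goes unrenewed too long. */
class SimpleLease {
    private final long durationMs;
    private volatile long lastRenewedMs;

    SimpleLease(long durationMs, long nowMs) {
        this.durationMs = durationMs;
        this.lastRenewedMs = nowMs;
    }

    /** Called on every heartbeat: remember when the instance was last heard from. */
    void renew(long nowMs) {
        lastRenewedMs = nowMs;
    }

    /** A lease is expired once more than durationMs has passed since the last renewal. */
    boolean isExpired(long nowMs) {
        return nowMs > lastRenewedMs + durationMs;
    }
}
```

With a 90s duration and a heartbeat every 30s, an instance has to miss three consecutive heartbeats before its lease can expire in this model.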

That completes the analysis of heartbeat renewal. The flowchart:

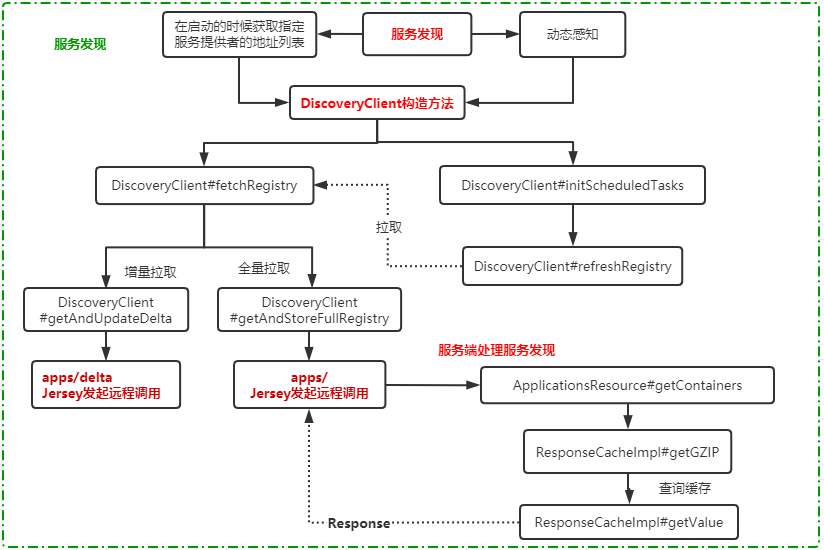

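Service discovery:

Next we look at service discovery. The client has to support two things:

- Fetch the address list of the service providers at startup

- Detect changes dynamically when the addresses registered on the Eureka server change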

Fetching in the DiscoveryClient constructor: if fetchRegistry is enabled for the client (it is by default), the constructor pulls the registry from eureka-server.

@Inject

DiscoveryClient(ApplicationInfoManager applicationInfoManager, EurekaClientConfig config, AbstractDiscoveryClientOptionalArgs args,

Provider<BackupRegistry> backupRegistryProvider, EndpointRandomizer endpointRandomizer) {

// ........

if (clientConfig.shouldFetchRegistry() && !fetchRegistry(false)) {

fetchRegistryFromBackup();

}

// ......

}

DiscoveryClient.fetchRegistry:

private boolean fetchRegistry(boolean forceFullRegistryFetch) {

Stopwatch tracer = FETCH_REGISTRY_TIMER.start();

try {

// If the delta is disabled or if it is the first time, get all

// applications. getApplications() reads the local cache, localRegionApps.get()

Applications applications = getApplications();

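// 1. delta fetching is disabled;

// 2. the client refreshes the registry for a single VIP address only;

// 3. the caller forces a full fetch through the argument;

// 4. no valid service list is cached locally yet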

if (clientConfig.shouldDisableDelta()

|| (!Strings.isNullOrEmpty(clientConfig.getRegistryRefreshSingleVipAddress()))

|| forceFullRegistryFetch

|| (applications == null) // no local service list yet

|| (applications.getRegisteredApplications().size() == 0)

|| (applications.getVersion() == -1)) //Client application does not have latest library supporting delta

{

logger.info("Disable delta property : {}", clientConfig.shouldDisableDelta());

logger.info("Single vip registry refresh property : {}", clientConfig.getRegistryRefreshSingleVipAddress());

logger.info("Force full registry fetch : {}", forceFullRegistryFetch);

logger.info("Application is null : {}", (applications == null));

logger.info("Registered Applications size is zero : {}",

(applications.getRegisteredApplications().size() == 0));

logger.info("Application version is -1: {}", (applications.getVersion() == -1));

getAndStoreFullRegistry(); // full fetch

} else {

getAndUpdateDelta(applications); // delta fetch

}

applications.setAppsHashCode(applications.getReconcileHashCode());

logTotalInstances();

} catch (Throwable e) {

logger.error(PREFIX + "{} - was unable to refresh its cache! status = {}", appPathIdentifier, e.getMessage(), e);

return false;

} finally {

if (tracer != null) {

tracer.stop();

}

}

// Notify about cache refresh before updating the instance remote status

onCacheRefreshed(); // fire the cache-refreshed event

// Update remote status based on refreshed data held in the cache

updateInstanceRemoteStatus(); // update this instance's status as seen by the server

// registry was fetched successfully, so return true

return true;

}
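
The long condition at the top of fetchRegistry decides between a full fetch and a delta fetch. The sketch below restates it as a pure function so each clause can be checked in isolation; FetchModeDecision and needsFullFetch are hypothetical names, and the parameters stand in for the values the real method reads from clientConfig and the local cache.

```java
/** Hypothetical helper mirroring the full-versus-delta condition in fetchRegistry. */
class FetchModeDecision {
    /**
     * @param localAppCount number of locally cached applications, or null when
     *                      nothing has been cached yet
     */
    static boolean needsFullFetch(boolean deltaDisabled,
                                  String singleVipAddress,
                                  boolean forceFullFetch,
                                  Integer localAppCount,
                                  long localVersion) {
        return deltaDisabled
                || (singleVipAddress != null && !singleVipAddress.isEmpty())
                || forceFullFetch
                || localAppCount == null
                || localAppCount == 0
                || localVersion == -1;
    }
}
```

The first fetch after startup always takes the full path in this model because the local cache is still empty, and later refreshes fall through to the delta path unless one of the other clauses applies.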

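Following these calls further leads to the server endpoints exposed by the ApplicationResource and ApplicationsResource classes, which are covered below.

A timer task refreshes the local address list every 30s. As analyzed earlier, the DiscoveryClient constructor initializes several tasks; one of them, cacheRefreshTask, keeps the local service address list up to date. It ultimately runs CacheRefreshThread periodically. Let's look at the details.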

private void initScheduledTasks() {

if (clientConfig.shouldFetchRegistry()) {

// registry cache refresh timer

int registryFetchIntervalSeconds = clientConfig.getRegistryFetchIntervalSeconds();

int expBackOffBound = clientConfig.getCacheRefreshExecutorExponentialBackOffBound();

cacheRefreshTask = new TimedSupervisorTask(

"cacheRefresh",

scheduler,

cacheRefreshExecutor,

registryFetchIntervalSeconds,//30

TimeUnit.SECONDS,

expBackOffBound,

new CacheRefreshThread()

);

scheduler.schedule(

cacheRefreshTask,

registryFetchIntervalSeconds,// 30

TimeUnit.SECONDS);

}

//.......

}

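TimedSupervisorTask: overall, TimedSupervisorTask is a periodic task with a fixed interval. When a run times out, the interval before the next run is doubled; on consecutive timeouts it keeps doubling until it reaches the configured upper bound; once a run completes without timing out, the interval resets to its initial value. The design is worth learning from.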

@Override

public void run() {

Future<?> future = null;

try {

// submitting through a Future lets the current thread bound how long it waits for the worker thread

future = executor.submit(task);

threadPoolLevelGauge.set((long) executor.getActiveCount());

// maximum time to wait for the worker thread

future.get(timeoutMillis, TimeUnit.MILLISECONDS); // block until done or timeout

delay.set(timeoutMillis); // every successful run resets delay to its initial value; delay is used again below

threadPoolLevelGauge.set((long) executor.getActiveCount());

successCounter.increment();

} catch (TimeoutException e) {

logger.warn("task supervisor timed out", e);

timeoutCounter.increment();

long currentDelay = delay.get();

long newDelay = Math.min(maxDelay, currentDelay * 2);

// publish the new delay; CAS is used because several threads may touch it

delay.compareAndSet(currentDelay, newDelay);

} catch (RejectedExecutionException e) {

// the executor rejected the task (for example its queue is full or it is shutting down): log and count it

if (executor.isShutdown() || scheduler.isShutdown()) {

logger.warn("task supervisor shutting down, reject the task", e);

} else {

logger.warn("task supervisor rejected the task", e);

}

rejectedCounter.increment();

} catch (Throwable e) { // any other failure is logged and counted

if (executor.isShutdown() || scheduler.isShutdown()) {

logger.warn("task supervisor shutting down, can't accept the task");

} else {

logger.warn("task supervisor threw an exception", e);

}

throwableCounter.increment();

} finally {

if (future != null) { // whether the task finished or failed, cancel the future to clean up

future.cancel(true);

}

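// as long as the scheduler is not shut down, run the same task again after the current delay

if (!scheduler.isShutdown()) {

// this is what makes the task periodic: each run schedules a one-shot task delay.get() ms later.

// Suppose the timeout is 30 seconds and expBackOffBound is 10, so maxDelay = 30 s * 10 = 300 s.

// If the last run did not time out, the next run starts after 30 seconds.

// If it timed out, the delay doubles (60 s, 120 s, 240 s, ...) and is capped at 300 s.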

scheduler.schedule(this, delay.get(), TimeUnit.MILLISECONDS);

}

}

}
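
The delay handling in run() reduces to one rule: double on timeout up to a cap, reset on success. The helper below isolates that rule; BackoffDelay and nextDelayMs are hypothetical names for this sketch, and the 300s cap used in the note assumes a 30s timeout with a back-off bound of 10.

```java
/** Hypothetical helper that isolates the delay rule used by the supervisor task. */
class BackoffDelay {
    /**
     * @param currentDelayMs delay used for the run that just finished
     * @param timeoutMs      initial delay, restored after a successful run
     * @param maxDelayMs     upper bound for the delay
     * @param timedOut       whether the run that just finished timed out
     */
    static long nextDelayMs(long currentDelayMs, long timeoutMs, long maxDelayMs, boolean timedOut) {
        return timedOut ? Math.min(maxDelayMs, currentDelayMs * 2) : timeoutMs;
    }
}
```

Starting from 30s with a 300s cap, consecutive timeouts produce delays of 60s, 120s, 240s, and then 300s, and a single successful run brings the delay back to 30s.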

The CacheRefreshThread submitted through executor.submit(task) ends up in refreshRegistry, which does two main things:

- Check whether remoteRegions has changed

- Call fetchRegistry to refresh the local service address cache

@VisibleForTesting

void refreshRegistry() {

try {

boolean isFetchingRemoteRegionRegistries = isFetchingRemoteRegionRegistries();

boolean remoteRegionsModified = false;

// This makes sure that a dynamic change to remote regions to fetch is honored.

// when deployed on AWS, compare the latest configured remote regions with the current ones and update if they differ

String latestRemoteRegions = clientConfig.fetchRegistryForRemoteRegions();

if (null != latestRemoteRegions) {

String currentRemoteRegions = remoteRegionsToFetch.get();

if (!latestRemoteRegions.equals(currentRemoteRegions)) { // the configured remote regions changed

// Both remoteRegionsToFetch and AzToRegionMapper.regionsToFetch need to be in sync

synchronized (instanceRegionChecker.getAzToRegionMapper()) {

if (remoteRegionsToFetch.compareAndSet(currentRemoteRegions, latestRemoteRegions)) {

String[] remoteRegions = latestRemoteRegions.split(",");

remoteRegionsRef.set(remoteRegions);

instanceRegionChecker.getAzToRegionMapper().setRegionsToFetch(remoteRegions);

remoteRegionsModified = true;

} else {

logger.info("Remote regions to fetch modified concurrently," +

" ignoring change from {} to {}", currentRemoteRegions, latestRemoteRegions);

}

}

} else {

// Just refresh mapping to reflect any DNS/Property change

instanceRegionChecker.getAzToRegionMapper().refreshMapping();

}

}

// fetch the registry

boolean success = fetchRegistry(remoteRegionsModified);

if (success) {

registrySize = localRegionApps.get().size();

lastSuccessfulRegistryFetchTimestamp = System.currentTimeMillis();

}

if (logger.isDebugEnabled()) {

StringBuilder allAppsHashCodes = new StringBuilder();

allAppsHashCodes.append("Local region apps hashcode: ");

allAppsHashCodes.append(localRegionApps.get().getAppsHashCode());

allAppsHashCodes.append(", is fetching remote regions? ");

allAppsHashCodes.append(isFetchingRemoteRegionRegistries);

for (Map.Entry<String, Applications> entry : remoteRegionVsApps.entrySet()) {

allAppsHashCodes.append(", Remote region: ");

allAppsHashCodes.append(entry.getKey());

allAppsHashCodes.append(" , apps hashcode: ");

allAppsHashCodes.append(entry.getValue().getAppsHashCode());

}

logger.debug("Completed cache refresh task for discovery. All Apps hash code is {} ",

allAppsHashCodes);

}

} catch (Throwable e) {

logger.error("Cannot fetch registry from server", e);

}

}

Both the fetch at startup and the periodic refresh go through com.netflix.discovery.DiscoveryClient#fetchRegistry. As shown above, the client queries service addresses in one of two ways: a full fetch or a delta fetch. It retrieves the registry from the Eureka server, then updates the local cache localRegionApps.

- A full fetch calls ApplicationsResource.getContainers on the Eureka server (GET apps/).

- A delta fetch calls ApplicationsResource.getContainerDifferential (GET apps/delta).

ApplicationsResource.getContainers: handles the client's request for the full registry

@GET

public Response getContainers(@PathParam("version") String version,

@HeaderParam(HEADER_ACCEPT) String acceptHeader,

@HeaderParam(HEADER_ACCEPT_ENCODING) String acceptEncoding,

@HeaderParam(EurekaAccept.HTTP_X_EUREKA_ACCEPT) String eurekaAccept,

@Context UriInfo uriInfo,

@Nullable @QueryParam("regions") String regionsStr) {

boolean isRemoteRegionRequested = null != regionsStr && !regionsStr.isEmpty();

String[] regions = null;

if (!isRemoteRegionRequested) {

EurekaMonitors.GET_ALL.increment();

} else {

regions = regionsStr.toLowerCase().split(",");

Arrays.sort(regions); // So we don't have different caches for same regions queried in different order.

EurekaMonitors.GET_ALL_WITH_REMOTE_REGIONS.increment();

}

// Check if the server allows the access to the registry. The server can

// restrict access if it is not

// ready to serve traffic depending on various reasons.

// the server is not ready to serve the registry: return 403

if (!registry.shouldAllowAccess(isRemoteRegionRequested)) {

return Response.status(Status.FORBIDDEN).build();

}

CurrentRequestVersion.set(Version.toEnum(version));

KeyType keyType = Key.KeyType.JSON; // response format, JSON by default

String returnMediaType = MediaType.APPLICATION_JSON;

if (acceptHeader == null || !acceptHeader.contains(HEADER_JSON_VALUE)) {

keyType = Key.KeyType.XML; // the Accept header does not ask for JSON: respond with XML

returnMediaType = MediaType.APPLICATION_XML;

}

// build the cache key

Key cacheKey = new Key(Key.EntityType.Application,

ResponseCacheImpl.ALL_APPS,

keyType, CurrentRequestVersion.get(), EurekaAccept.fromString(eurekaAccept), regions

);

// the cache lookup is essentially the same for every encoding; only the returned representation differs

Response response;

if (acceptEncoding != null && acceptEncoding.contains(HEADER_GZIP_VALUE)) {

response = Response.ok(responseCache.getGZIP(cacheKey))

.header(HEADER_CONTENT_ENCODING, HEADER_GZIP_VALUE)

.header(HEADER_CONTENT_TYPE, returnMediaType)

.build();

} else { // read from the server-side response cache described above

response = Response.ok(responseCache.get(cacheKey))

.build();

}

CurrentRequestVersion.remove();

return response;

}
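
One detail in getContainers is easy to miss: the regions parameter is lowercased, split, and sorted before it becomes part of the cache key, so the same set of regions always maps to the same key. The sketch below shows just that normalization; RegionKey and normalize are hypothetical names, and Locale.ROOT is added here only to keep the example locale-independent.

```java
import java.util.Arrays;
import java.util.Locale;

/** Hypothetical helper showing how a regions parameter can be normalized for use in a cache key. */
class RegionKey {
    /** Returns the sorted, lowercased region list, or null when no regions were requested. */
    static String[] normalize(String regionsStr) {
        if (regionsStr == null || regionsStr.isEmpty()) {
            return null;
        }
        String[] regions = regionsStr.toLowerCase(Locale.ROOT).split(",");
        // Sorting makes "a,b" and "b,a" produce the same key.
        Arrays.sort(regions);
        return regions;
    }
}
```

Without the sort, two clients asking for the same regions in a different order would each populate their own cache entry.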

The service information is then returned to the client, which ends the flow. The flowchart:

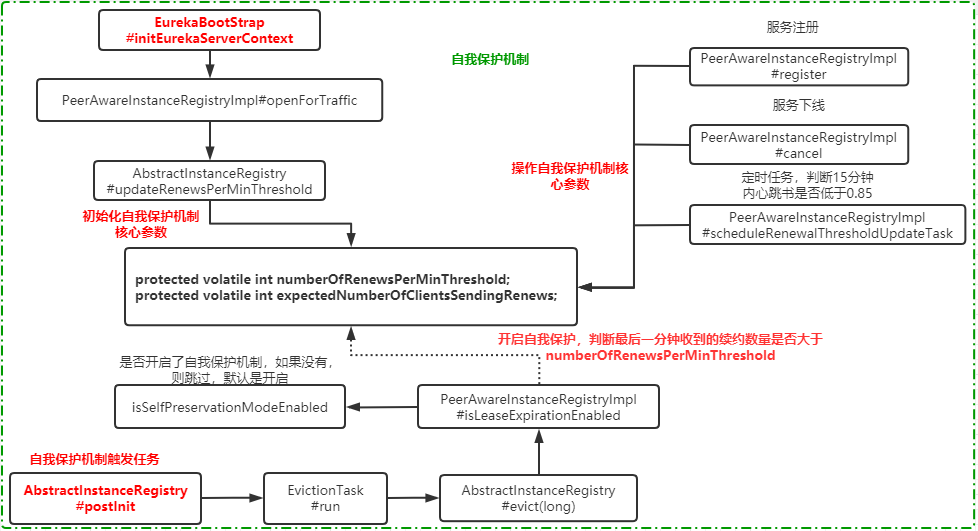

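Eureka self-preservation:

With the registration and discovery code covered, let's go back and look at how Eureka's self-preservation mechanism is implemented.

Self-preservation revolves around two important variables, both defined in the AbstractInstanceRegistry class: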

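protected volatile int numberOfRenewsPerMinThreshold; // minimum number of renewals expected per minute

protected volatile int expectedNumberOfClientsSendingRenews; // number of clients expected to send renewals, driven by the number of instances registered on the eureka server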

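numberOfRenewsPerMinThreshold is the minimum number of renewals per minute, that is, the threshold for the total number of renewals Eureka Server expects to receive from client instances each minute. If the renewals actually received do not exceed this threshold, self-preservation is triggered. It is assigned in the following code: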

protected void updateRenewsPerMinThreshold() {

this.numberOfRenewsPerMinThreshold = (int) (this.expectedNumberOfClientsSendingRenews

* (60.0 / serverConfig.getExpectedClientRenewalIntervalSeconds())

* serverConfig.getRenewalPercentThreshold());

}

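// self-preservation threshold = expected client count * renewals per client per minute (60s / client renewal interval) * renewal percent threshold (0.85 by default)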

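- getExpectedClientRenewalIntervalSeconds: the client renewal interval, 30s by default

- getRenewalPercentThreshold: the self-preservation renewal percent threshold, 0.85 by default. In other words, the renewals received per minute must exceed 85% of the expected number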
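
A worked example makes the formula concrete. The helper below repeats the arithmetic of updateRenewsPerMinThreshold with the inputs passed in explicitly; RenewalThreshold and renewsPerMinThreshold are hypothetical names used only for this sketch.

```java
/** Hypothetical helper repeating the threshold arithmetic with explicit inputs. */
class RenewalThreshold {
    static int renewsPerMinThreshold(int expectedClients,
                                     int renewalIntervalSeconds,
                                     double percentThreshold) {
        // expected clients * renewals per client per minute * percent threshold, truncated to int
        return (int) (expectedClients * (60.0 / renewalIntervalSeconds) * percentThreshold);
    }
}
```

With the defaults (30s interval, 0.85), 10 registered instances are expected to send 20 renewals per minute, so the threshold is 17; with 3 instances the product is 5.1, which the cast truncates to 5.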

Updating the two variables: note that both are updated dynamically, in four places.

1. Eureka Server initialization: the EurekaBootstrap class has an initEurekaServerContext method

protected void initEurekaServerContext() throws Exception {

EurekaServerConfig eurekaServerConfig = new DefaultEurekaServerConfig();

// ......

registry.openForTraffic(applicationInfoManager, registryCount);

// Register all monitoring statistics.

EurekaMonitors.registerAllStats();

}

public void openForTraffic(ApplicationInfoManager applicationInfoManager, int count) {

// Renewals happen every 30 seconds and for a minute it should be a factor of 2.

this.expectedNumberOfClientsSendingRenews = count; // initialize from the instance count passed in

updateRenewsPerMinThreshold(); // recompute numberOfRenewsPerMinThreshold

logger.info("Got {} instances from neighboring DS node", count);

logger.info("Renew threshold is: {}", numberOfRenewsPerMinThreshold);

this.startupTime = System.currentTimeMillis();

if (count > 0) {

this.peerInstancesTransferEmptyOnStartup = false;

}

DataCenterInfo.Name selfName = applicationInfoManager.getInfo().getDataCenterInfo().getName();

boolean isAws = Name.Amazon == selfName;

if (isAws && serverConfig.shouldPrimeAwsReplicaConnections()) {

logger.info("Priming AWS connections for all replicas..");

primeAwsReplicas(applicationInfoManager);

}

logger.info("Changing status to UP");

applicationInfoManager.setInstanceStatus(InstanceStatus.UP);

super.postInit();

}

2. PeerAwareInstanceRegistryImpl.cancel: when a service provider goes offline voluntarily, Eureka Server removes its address, and the renewal threshold has to change accordingly. The update happens along the call path PeerAwareInstanceRegistryImpl.cancel -> AbstractInstanceRegistry.cancel -> internalCancel

protected boolean internalCancel(String appName, String id, boolean isReplication) {

try {

read.lock();

//.......

synchronized (lock) {

if (this.expectedNumberOfClientsSendingRenews > 0) {

// Since the client wants to cancel it, reduce the number of clients to send renews.

this.expectedNumberOfClientsSendingRenews = this.expectedNumberOfClientsSendingRenews - 1;

updateRenewsPerMinThreshold();

}

}

return true;

}

3. PeerAwareInstanceRegistryImpl.register: when a new service provider registers with eureka-server, the number of clients expected to renew has to grow, so the register method handles it: register -> super.register (AbstractInstanceRegistry)

public void register(InstanceInfo registrant, int leaseDuration, boolean isReplication) {

//.......

// The lease does not exist and hence it is a new registration

synchronized (lock) {

if (this.expectedNumberOfClientsSendingRenews > 0) {

// Since the client wants to register it, increase the number of clients sending renews

this.expectedNumberOfClientsSendingRenews = this.expectedNumberOfClientsSendingRenews + 1;

updateRenewsPerMinThreshold();

}

}

logger.debug("No previous lease information found; it is new registration");

}

//........

}

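4. PeerAwareInstanceRegistryImpl.scheduleRenewalThresholdUpdateTask: runs every 15 minutes, recounts the registered instances, and refreshes the expected client count when the new count exceeds 85% of the current expectation or when self-preservation is disabled. It is started from DefaultEurekaServerContext ---> initialize(), annotated with @PostConstruct ---> init()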

private void updateRenewalThreshold() {

try {

Applications apps = eurekaClient.getApplications();

int count = 0;

for (Application app : apps.getRegisteredApplications()) {

for (InstanceInfo instance : app.getInstances()) {

if (this.isRegisterable(instance)) {

++count;

}

}

}

synchronized (lock) {

// Update threshold only if the threshold is greater than the

// current expected threshold or if self preservation is disabled.

if ((count) > (serverConfig.getRenewalPercentThreshold() * expectedNumberOfClientsSendingRenews)

|| (!this.isSelfPreservationModeEnabled())) {

this.expectedNumberOfClientsSendingRenews = count;

updateRenewsPerMinThreshold();

}

}

logger.info("Current renewal threshold is : {}", numberOfRenewsPerMinThreshold);

} catch (Throwable e) {

logger.error("Cannot update renewal threshold", e);

}

}
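
The guard inside the synchronized block decides whether the periodic task may overwrite the expected client count. The sketch below restates that guard as a pure function; ThresholdUpdateGuard and shouldUpdate are hypothetical names chosen for illustration.

```java
/** Hypothetical restatement of the guard used by the periodic threshold update. */
class ThresholdUpdateGuard {
    static boolean shouldUpdate(int currentCount,
                                double percentThreshold,
                                int expectedClients,
                                boolean selfPreservationEnabled) {
        // Accept the new count if it stays above the percent threshold of the current
        // expectation, or unconditionally when self-preservation is turned off.
        return currentCount > percentThreshold * expectedClients || !selfPreservationEnabled;
    }
}
```

The effect is that a sharp drop in the counted instances, which may be caused by a network partition, does not lower the threshold while self-preservation is enabled.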

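The task that triggers self-preservation:

The postInit method of AbstractInstanceRegistry starts an EvictionTask. Each run first checks whether self-preservation is in effect and evicts expired instances only if it is not.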

protected void postInit() {

renewsLastMin.start();

if (evictionTaskRef.get() != null) {

evictionTaskRef.get().cancel();

}

evictionTaskRef.set(new EvictionTask());

evictionTimer.schedule(evictionTaskRef.get(),

serverConfig.getEvictionIntervalTimerInMs(),

serverConfig.getEvictionIntervalTimerInMs());

}

EvictionTask is the task that is ultimately executed:

@Override

public void run() {

try {

long compensationTimeMs = getCompensationTimeMs();

logger.info("Running the evict task with compensationTime {}ms", compensationTimeMs);

evict(compensationTimeMs);

} catch (Throwable e) {

logger.error("Could not run the evict task", e);

}

}

public void evict(long additionalLeaseMs) {

logger.debug("Running the evict task");

// if self-preservation is in effect, return immediately and skip eviction

if (!isLeaseExpirationEnabled()) {

logger.debug("DS: lease expiration is currently disabled.");

return;

}

// the code below takes instances with expired leases offline

}

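isLeaseExpirationEnabled:

- If self-preservation is disabled (it is enabled by default), leases are always allowed to expire

- Otherwise, decide whether protection is needed by checking whether the renewals received in the last minute exceed numberOfRenewsPerMinThreshold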

public boolean isLeaseExpirationEnabled() {

if (!isSelfPreservationModeEnabled()) {

// The self preservation mode is disabled, hence allowing the instances to expire.

return true;

}

return numberOfRenewsPerMinThreshold > 0 && getNumOfRenewsInLastMin() > numberOfRenewsPerMinThreshold;

}
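
The same decision can be exercised with concrete numbers. The sketch below copies the boolean logic of isLeaseExpirationEnabled into a pure function; SelfPreservationCheck and leaseExpirationEnabled are hypothetical names, and the parameters replace the fields the real method reads.

```java
/** Hypothetical pure-function version of the lease expiration check. */
class SelfPreservationCheck {
    static boolean leaseExpirationEnabled(boolean selfPreservationEnabled,
                                          int renewsPerMinThreshold,
                                          long renewsInLastMin) {
        if (!selfPreservationEnabled) {
            // Self-preservation is switched off: instances may always expire.
            return true;
        }
        // Eviction is allowed only while renewals stay above the threshold.
        return renewsPerMinThreshold > 0 && renewsInLastMin > renewsPerMinThreshold;
    }
}
```

With a threshold of 17, receiving 18 renewals in the last minute keeps eviction on, while receiving 17 or fewer puts the server into self-preservation and stops eviction.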

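The logic is clear at a glance. The self-preservation flow is summarized in a flowchart:

The source for these Eureka flows is fairly convoluted, but if you take your time and read it through a few times, it is manageable. For more information, see the official documentation.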

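This article walks through the service registration and discovery mechanisms of Spring Cloud Eureka, covering source-level analysis of registration, renewal, discovery, and self-preservation.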
