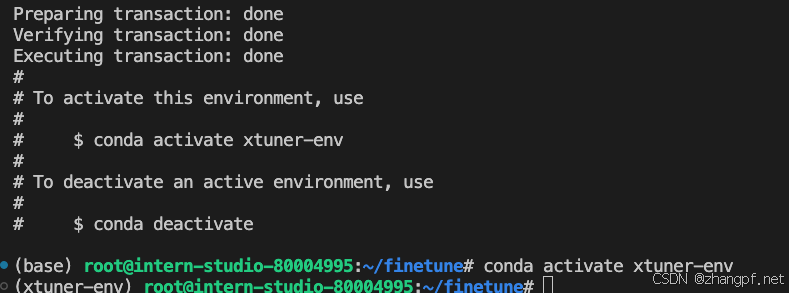

1. Set up the environment

The commands are as follows:

cd ~

# Clone this repo

git clone https://github.com/InternLM/Tutorial.git -b camp4

mkdir -p /root/finetune && cd /root/finetune

conda create -n xtuner-env python=3.10 -y

conda activate xtuner-env

2. Install XTuner and PyTorch

The commands are as follows:

cd /root/Tutorial/docs/L1/XTuner

pip install -r requirements.txt

pip install torch==2.4.1 torchvision==0.19.1 torchaudio==2.4.1 --index-url https://download.pytorch.org/whl/cu121

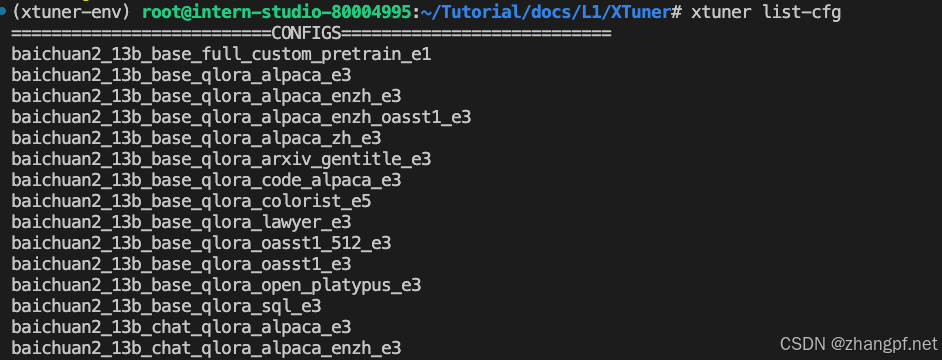

3. Verify that XTuner is installed correctly

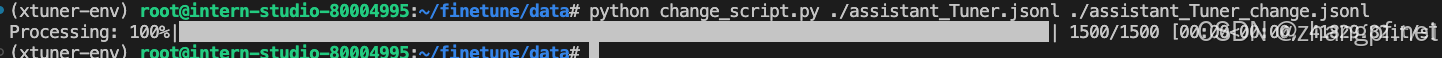
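As a complement to the screenshot, the check can also be done from Python. This is a minimal sketch, assuming the distributions are named `xtuner`, `torch`, `torchvision` and `torchaudio` and that the `xtuner` command should be on `PATH` inside the activated environment; the helper names are illustrative and not part of XTuner.

```python
import shutil
from importlib import metadata


def installed_version(package):
    """Return the installed version of a distribution, or None if absent."""
    try:
        return metadata.version(package)
    except metadata.PackageNotFoundError:
        return None


def environment_report(packages=("xtuner", "torch", "torchvision", "torchaudio")):
    """Collect package versions plus the location of the xtuner CLI, if any."""
    report = {name: installed_version(name) for name in packages}
    report["xtuner_cli"] = shutil.which("xtuner")  # None when not on PATH
    return report
```

Calling `environment_report()` inside `xtuner-env` should show torch 2.4.1, torchvision 0.19.1 and torchaudio 2.4.1 if the pinned install above succeeded; a `None` entry points at the package that still needs installing.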

4. Prepare the data

mkdir -p /root/finetune/data && cd /root/finetune/data

cp -r /root/Tutorial/data/assistant_Tuner.jsonl /root/finetune/data
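Before changing anything, it can be useful to look at how the records in the copied file are structured. The sketch below makes no assumption about the schema: it only assumes the file is JSONL (one JSON object per line). `peek_jsonl` and `describe` are hypothetical helper names.

```python
import json
from itertools import islice


def peek_jsonl(path, n=1):
    """Return the first n non-empty records of a JSONL file as Python objects."""
    with open(path, "r", encoding="utf-8") as f:
        lines = (line for line in f if line.strip())
        return [json.loads(line) for line in islice(lines, n)]


def describe(obj, depth=0, max_depth=3):
    """Summarise nested dict/list structure without dumping full strings."""
    if isinstance(obj, dict):
        if depth >= max_depth:
            return "{...}"
        return {k: describe(v, depth + 1, max_depth) for k, v in obj.items()}
    if isinstance(obj, list):
        if depth >= max_depth or not obj:
            return f"list[{len(obj)}]"
        # Only the first element is expanded; the rest is summarised by count.
        return [describe(obj[0], depth + 1, max_depth), f"... {len(obj)} item(s)"]
    return type(obj).__name__
```

For example, `describe(peek_jsonl("/root/finetune/data/assistant_Tuner.jsonl")[0])` prints the key layout of the first record, which shows where the text to be replaced lives.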

Use Python to modify the fine-tuning data. Create a file named change_script.py in the data directory with the following content:

import json
import argparse
from tqdm import tqdm


def process_line(line, old_text, new_text):
    # Parse the JSON line
    data = json.loads(line)

    # Recursive helper that walks nested dicts and lists
    def replace_text(obj):
        if isinstance(obj, dict):
            return {k: replace_text(v) for k, v in obj.items()}
        elif isinstance(obj, list):
            return [replace_text(item) for item in obj]
        elif isinstance(obj, str):
            return obj.replace(old_text, new_text)
        else:
            return obj

    # Process the whole JSON object
    processed_data = replace_text(data)

    # Serialize the processed object back to a JSON string
    return json.dumps(processed_data, ensure_ascii=False)


def main(input_file, output_file, old_text, new_text):
    with open(input_file, 'r', encoding='utf-8') as infile, \
            open(output_file, 'w', encoding='utf-8') as outfile:
        # Count the lines so the progress bar knows the total
        total_lines = sum(1 for _ in infile)
        infile.seek(0)  # Reset the file pointer to the beginning

        # Use tqdm to show a progress bar
        for line in tqdm(infile, total=total_lines, desc="Processing"):
            processed_line = process_line(line.strip(), old_text, new_text)
            outfile.write(processed_line + '\n')


if __name__ == "__main__":
    parser = argparse.ArgumentParser(description="Replace text in a JSONL file.")
    parser.add_argument("input_file", help="Input JSONL file to process")
    parser.add_argument("output_file", help="Output file for processed JSONL")
    parser.add_argument("--old_text", default="尖米", help="Text to be replaced")
    parser.add_argument("--new_text", default="大千", help="Text to replace with")
    args = parser.parse_args()

    main(args.input_file, args.output_file, args.old_text, args.new_text)

Run the script:

cd ~/finetune/data

python change_script.py ./assistant_Tuner.jsonl ./assistant_Tuner_change.jsonl
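After the script finishes, a quick sanity check is to count the old and new names in both files. The sketch below is one way to do that, assuming the default `--old_text` and `--new_text` values were used; it parses each line as JSON and counts only inside string values, which mirrors what the script replaces (dictionary keys are left untouched). The function names are illustrative.

```python
import json


def count_in_obj(obj, text):
    """Count occurrences of text inside all string values of a JSON object."""
    if isinstance(obj, dict):
        return sum(count_in_obj(v, text) for v in obj.values())
    if isinstance(obj, list):
        return sum(count_in_obj(v, text) for v in obj)
    if isinstance(obj, str):
        return obj.count(text)
    return 0


def count_in_jsonl(path, text):
    """Sum count_in_obj over every non-empty line of a JSONL file."""
    total = 0
    with open(path, "r", encoding="utf-8") as f:
        for line in f:
            if line.strip():
                total += count_in_obj(json.loads(line), text)
    return total


def check_replacement(src, dst, old_text="尖米", new_text="大千"):
    """Summarise how often each name appears before and after the rewrite."""
    return {
        "old_in_source": count_in_jsonl(src, old_text),
        "old_in_output": count_in_jsonl(dst, old_text),
        "new_in_output": count_in_jsonl(dst, new_text),
    }
```

A successful run should report `old_in_output` as 0. `new_in_output` is expected to be at least `old_in_source`; it can be larger if the new name already appeared in the source data.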

5. Start training

Create a symlink so that the model already provided by InternStudio appears under the finetune/models directory:

mkdir /root/finetune/models

ln -s /root/share/new_models/Shanghai_AI_Laboratory/internlm2_5-7b-chat /root/finetune/models/internlm2_5-7b-chat
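A dangling symlink only surfaces later as a model-loading error, so it can be worth checking the link right away. This is a small sketch under the assumption that a HuggingFace-format model directory contains a `config.json`; `check_model_link` is a hypothetical helper.

```python
import os


def check_model_link(link_path, required=("config.json",)):
    """Report whether link_path is a symlink that resolves to a usable directory."""
    resolved = os.path.realpath(link_path)
    return {
        "is_symlink": os.path.islink(link_path),
        "target": resolved,
        "target_exists": os.path.isdir(resolved),
        # Files from `required` that are not present in the resolved directory
        "missing_files": [
            name for name in required
            if not os.path.isfile(os.path.join(resolved, name))
        ],
    }
```

For this tutorial the call would be `check_model_link("/root/finetune/models/internlm2_5-7b-chat")`; `target_exists` being `False` means the shared model path is not available on the machine.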

Get the official ready-made config:

cd /root/finetune

mkdir ./config

cd config

xtuner copy-cfg internlm2_5_chat_7b_qlora_alpaca_e3 ./

Edit the config file; the final content is as follows:

# Copyright (c) OpenMMLab. All rights reserved.
import torch
from datasets import load_dataset
from mmengine.dataset import DefaultSampler
from mmengine.hooks import (CheckpointHook, DistSamplerSeedHook, IterTimerHook,
                            LoggerHook, ParamSchedulerHook)
from mmengine.optim import AmpOptimWrapper, CosineAnnealingLR, LinearLR
from peft import LoraConfig
from torch.optim import AdamW
from transformers import (AutoModelForCausalLM, AutoTokenizer,
                          BitsAndBytesConfig)

from xtuner.dataset import process_hf_dataset
from xtuner.dataset.collate_fns import default_collate_fn
from xtuner.dataset.map_fns import alpaca_map_fn, template_map_fn_factory
from xtuner.engine.hooks import (DatasetInfoHook, EvaluateChatHook,
                                 VarlenAttnArgsToMessageHubHook)
from xtuner.engine.runner import TrainLoop
from xtuner.model import SupervisedFinetune
from xtuner.parallel.sequence import SequenceParallelSampler
from xtuner.utils import PROMPT_TEMPLATE, SYSTEM_TEMPLATE

#######################################################################
#                          PART 1  Settings                           #
#######################################################################
# Model
pretrained_model_name_or_path = '/root/finetune/models/internlm2_5-7b-chat'
use_varlen_attn = False

# Data
alpaca_en_path = '/root/finetune/data/assistant_Tuner_change.jsonl'
prompt_template = PROMPT_TEMPLATE.internlm2_chat
max_length = 2048
pack_to_max_length = True

# parallel
sequence_parallel_size = 1

# Scheduler & Optimizer
batch_size = 1  # per_device
accumulative_counts = 1
accumulative_counts *= sequence_parallel_size
dataloader_num_workers = 0
max_epochs = 30
optim_type = AdamW
lr = 2e-4
betas = (0.9, 0.999)
weight_decay = 0
max_norm = 1  # grad clip
warmup_ratio = 0.03

# Save
save_steps = 10
save_total_limit = 2  # Maximum checkpoints to keep (-1 means unlimited)

# Evaluate the generation performance during the training
evaluation_freq = 10
SYSTEM = SYSTEM_TEMPLATE.alpaca
evaluation_inputs = [
    '请介绍一下你自己', 'Please introduce yourself'
]

#######################################################################
#                      PART 2  Model & Tokenizer                      #
#######################################################################
tokenizer = dict(
    type=AutoTokenizer.from_pretrained,
    pretrained_model_name_or_path=pretrained_model_name_or_path,
    trust_remote_code=True,
    padding_side='right')

model = dict(
    type=SupervisedFinetune,
    use_varlen_attn=use_varlen_attn,
    llm=dict(
        type=AutoModelForCausalLM.from_pretrained,
        pretrained_model_name_or_path=pretrained_model_name_or_path,
        trust_remote_code=True,
        torch_dtype=torch.float16,
        quantization_config=dict(
            type=BitsAndBytesConfig,
            load_in_4bit=True,
            load_in_8bit=False,
            llm_int8_threshold=6.0,
            llm_int8_has_fp16_weight=False,
            bnb_4bit_compute_dtype=torch.float16,
            bnb_4bit_use_double_quant=True,
            bnb_4bit_quant_type='nf4')),
    lora=dict(
        type=LoraConfig,
        r=64,
        lora_alpha=16,
        lora_dropout=0.1,
        bias='none',
        task_type='CAUSAL_LM'))

#######################################################################
#                      PART 3  Dataset & Dataloader                   #
#######################################################################
alpaca_en = dict(
    type=process_hf_dataset,
    dataset=dict(type=load_dataset, path='json', data_files=dict(train=alpaca_en_path)),
    tokenizer=tokenizer,
    max_length=max_length,
    dataset_map_fn=None,
    template_map_fn=dict(
        type=template_map_fn_factory, template=prompt_template),
    remove_unused_columns=True,
    shuffle_before_pack=True,
    pack_to_max_length=pack_to_max_length,
    use_varlen_attn=use_varlen_attn)

sampler = SequenceParallelSampler \
    if sequence_parallel_size > 1 else DefaultSampler

train_dataloader = dict(
    batch_size=batch_size,
    num_workers=dataloader_num_workers,
    dataset=alpaca_en,
    sampler=dict(type=sampler, shuffle=True),
    collate_fn=dict(type=default_collate_fn, use_varlen_attn=use_varlen_attn))

#######################################################################
#                    PART 4  Scheduler & Optimizer                    #
#######################################################################
# optimizer
optim_wrapper = dict(
    type=AmpOptimWrapper,
    optimizer=dict(
        type=optim_type, lr=lr, betas=betas, weight_decay=weight_decay),
    clip_grad=dict(max_norm=max_norm, error_if_nonfinite=False),
    accumulative_counts=accumulative_counts,
    loss_scale='dynamic',
    dtype='float16')

# learning policy
# More information: https://github.com/open-mmlab/mmengine/blob/main/docs/en/tutorials/param_scheduler.md  # noqa: E501
param_scheduler = [
    dict(
        type=LinearLR,
        start_factor=1e-5,
        by_epoch=True,
        begin=0,
        end=warmup_ratio * max_epochs,
        convert_to_iter_based=True),
    dict(
        type=CosineAnnealingLR,
        eta_min=0.0,
        by_epoch=True,
        begin=warmup_ratio * max_epochs,
        end=max_epochs,
        convert_to_iter_based=True)
]

# train, val, test setting
train_cfg = dict(type=TrainLoop, max_epochs=max_epochs)

#######################################################################
#                           PART 5  Runtime                           #
#######################################################################
# Log the dialogue periodically during the training process, optional
custom_hooks = [
    dict(type=DatasetInfoHook, tokenizer=tokenizer),
    dict(
        type=EvaluateChatHook,
        tokenizer=tokenizer,
        every_n_iters=evaluation_freq,
        evaluation_inputs=evaluation_inputs,
        system=SYSTEM,
        prompt_template=prompt_template)
]

if use_varlen_attn:
    custom_hooks += [dict(type=VarlenAttnArgsToMessageHubHook)]

# configure default hooks
default_hooks = dict(
    # record the time of every iteration.
    timer=dict(type=IterTimerHook),
    # print log every 10 iterations.
    logger=dict(type=LoggerHook, log_metric_by_epoch=False, interval=10),
    # enable the parameter scheduler.
    param_scheduler=dict(type=ParamSchedulerHook),
    # save checkpoint per `save_steps`.
    checkpoint=dict(
        type=CheckpointHook,
        by_epoch=False,
        interval=save_steps,
        max_keep_ckpts=save_total_limit),
    # set sampler seed in distributed environment.
    sampler_seed=dict(type=DistSamplerSeedHook),
)

# configure environment
env_cfg = dict(
    # whether to enable cudnn benchmark
    cudnn_benchmark=False,
    # set multi process parameters
    mp_cfg=dict(mp_start_method='fork', opencv_num_threads=0),
    # set distributed parameters
    dist_cfg=dict(backend='nccl'),
)

# set visualizer
visualizer = None

# set log level
log_level = 'INFO'

# load from which checkpoint
load_from = None

# whether to resume training from the loaded checkpoint
resume = False

# Defaults to use random seed and disable `deterministic`
randomness = dict(seed=None, deterministic=False)

# set log processor
log_processor = dict(by_epoch=False)
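The scheduler section of the config combines a linear warmup with cosine annealing. With `warmup_ratio = 0.03` and `max_epochs = 30`, warmup covers 0.03 × 30 = 0.9 epochs, after which the learning rate decays from `lr = 2e-4` towards `eta_min = 0.0`. The sketch below is a closed-form approximation of that shape, not MMEngine's actual implementation; `total_iters` (epochs times iterations per epoch) is a hypothetical input that depends on the dataset size.

```python
import math


def lr_at(iteration, total_iters, base_lr=2e-4, warmup_ratio=0.03,
          start_factor=1e-5, eta_min=0.0):
    """Approximate learning rate at a given iteration (closed form)."""
    warmup_iters = int(warmup_ratio * total_iters)
    if iteration < warmup_iters:
        # Linear ramp of the multiplier from start_factor up to 1.0
        progress = iteration / warmup_iters
        return base_lr * (start_factor + (1.0 - start_factor) * progress)
    # Cosine decay from base_lr down to eta_min over the remaining iterations
    decay_iters = max(total_iters - warmup_iters, 1)
    progress = min((iteration - warmup_iters) / decay_iters, 1.0)
    return eta_min + 0.5 * (base_lr - eta_min) * (1.0 + math.cos(math.pi * progress))
```

Sampling `lr_at(i, total_iters)` at a few iterations gives a feel for how short the warmup is relative to the whole run, which helps when reading the learning rate column in the training log.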

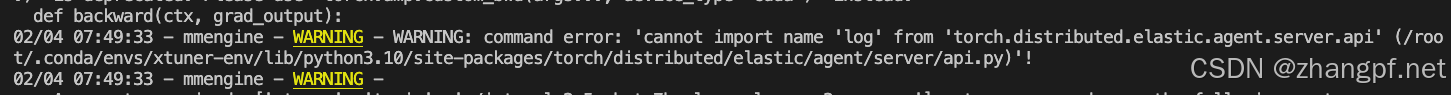

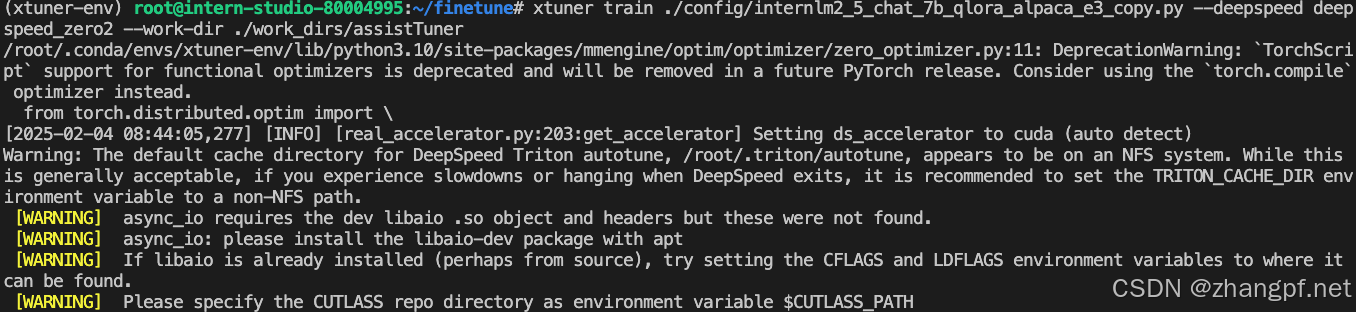

Start fine-tuning:

cd /root/finetune

xtuner train ./config/internlm2_5_chat_7b_qlora_alpaca_e3_copy.py --deepspeed deepspeed_zero2 --work-dir ./work_dirs/assistTuner

If training fails with an error at this point, installing deepspeed==0.14.4 can resolve it:

pip install deepspeed==0.14.4

Restart fine-tuning:

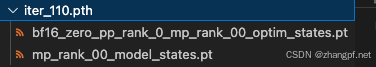

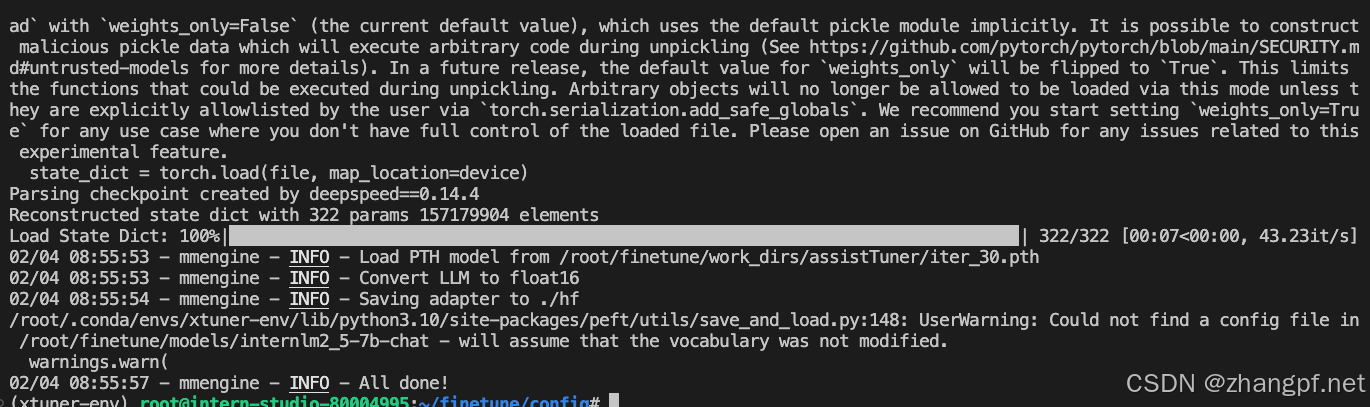

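The weight files were produced by PyTorch training and need to be converted to HuggingFace format.

Conversion commands (the relative paths assume the current directory is /root/finetune/work_dirs/assistTuner, where the training run is expected to have saved a copy of the config):

# First get the most recently saved .pth file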

pth_file=`ls -t /root/finetune/work_dirs/assistTuner/*.pth | head -n 1 | sed 's/:$//'`

export MKL_SERVICE_FORCE_INTEL=1

export MKL_THREADING_LAYER=GNU

xtuner convert pth_to_hf ./internlm2_5_chat_7b_qlora_alpaca_e3_copy.py ${pth_file} ./hf
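The shell pipeline above picks the newest checkpoint by modification time; the `sed` step strips the trailing colon that `ls` prints when the matched entries are directories. The same selection can be sketched in Python, which sidesteps the output parsing. `latest_checkpoint` is a hypothetical helper, and the `*.pth` pattern is taken from the command above.

```python
import glob
import os


def latest_checkpoint(work_dir, pattern="*.pth"):
    """Return the most recently modified checkpoint path, or None if none exist."""
    candidates = glob.glob(os.path.join(work_dir, pattern))
    if not candidates:
        return None
    # getmtime works for both files and directories
    return max(candidates, key=os.path.getmtime)
```

`latest_checkpoint("/root/finetune/work_dirs/assistTuner")` returns the path to pass to `xtuner convert pth_to_hf`, or `None` if training has not saved a checkpoint yet.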

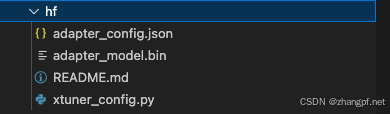

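Files after conversion:

6. Merge the model

The converted output is not a complete model on its own. These weights still have to be merged with the base model; only the merged result is a complete model that can be used directly.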

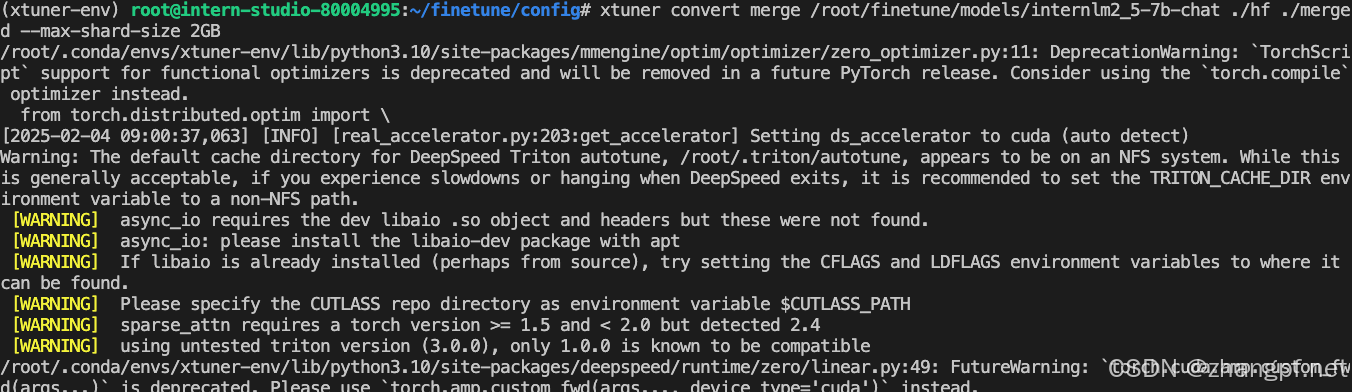

The XTuner merge command is xtuner convert merge.

xtuner convert merge /root/finetune/models/internlm2_5-7b-chat ./hf ./merged --max-shard-size 2GB
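Conceptually, merging a LoRA adapter folds the low-rank update back into each adapted weight matrix: W' = W + (lora_alpha / r) · B·A. With the config values `r = 64` and `lora_alpha = 16`, the scale is 16 / 64 = 0.25. The sketch below illustrates that arithmetic on plain Python lists for a single layer; it is not how `xtuner convert merge` is implemented, and the function names are illustrative.

```python
def matmul(a, b):
    """Multiply two matrices given as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def merge_lora(weight, lora_a, lora_b, lora_alpha, r):
    """Return W + (lora_alpha / r) * (B @ A) for one linear layer.

    weight: out_features x in_features
    lora_a: r x in_features
    lora_b: out_features x r
    """
    scale = lora_alpha / r
    delta = matmul(lora_b, lora_a)  # out_features x in_features
    return [[w + scale * d for w, d in zip(w_row, d_row)]
            for w_row, d_row in zip(weight, delta)]
```

Because the merged matrix has the same shape as the original, the result loads like an ordinary model with no adapter attached, which is why the merged directory can be used directly.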

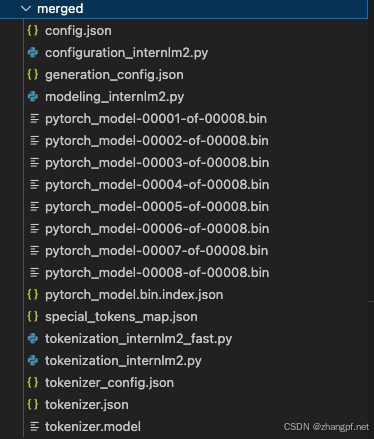

Directory structure after merging:

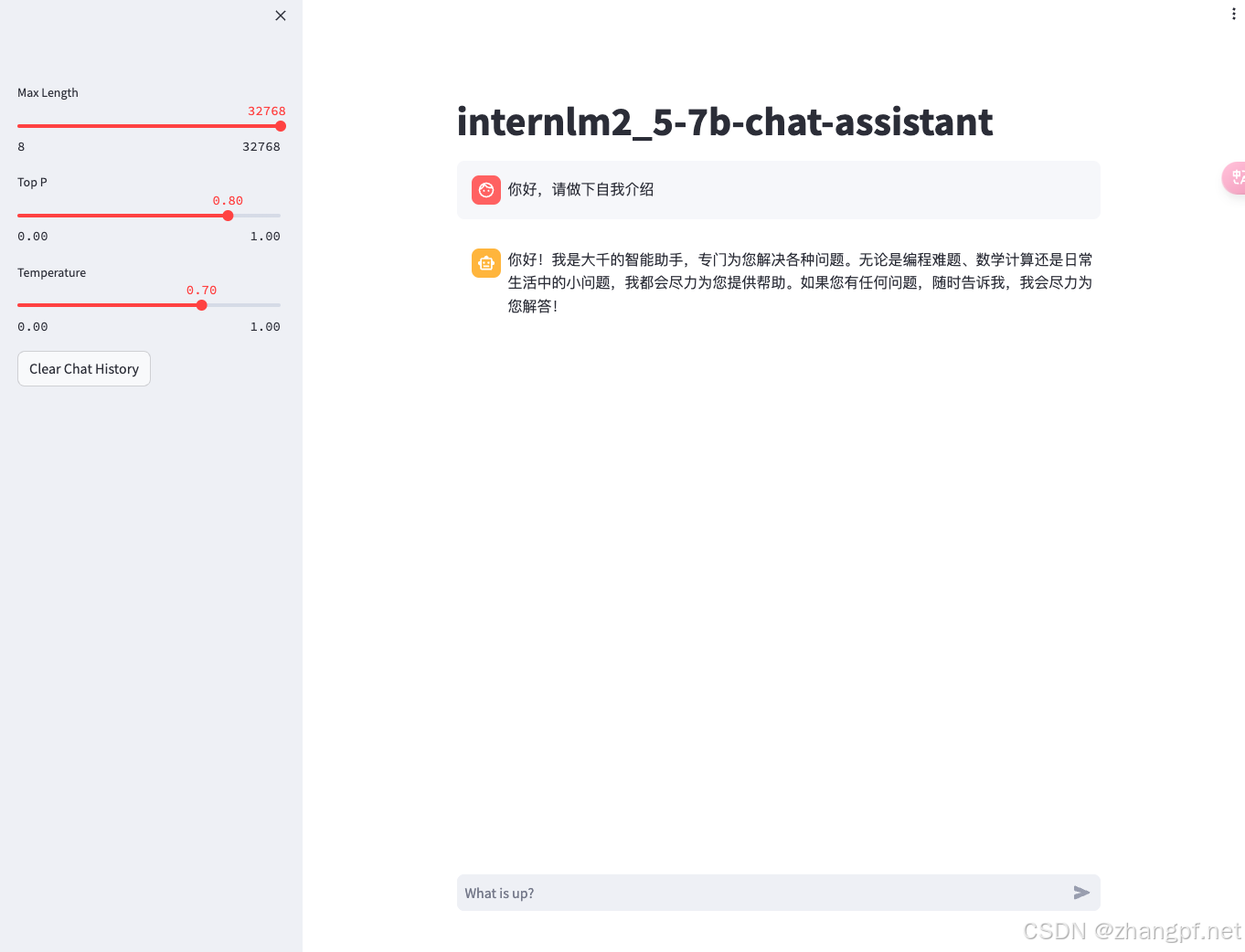

7. Chat with the merged model on the web

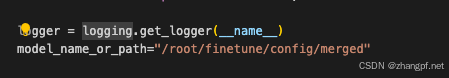

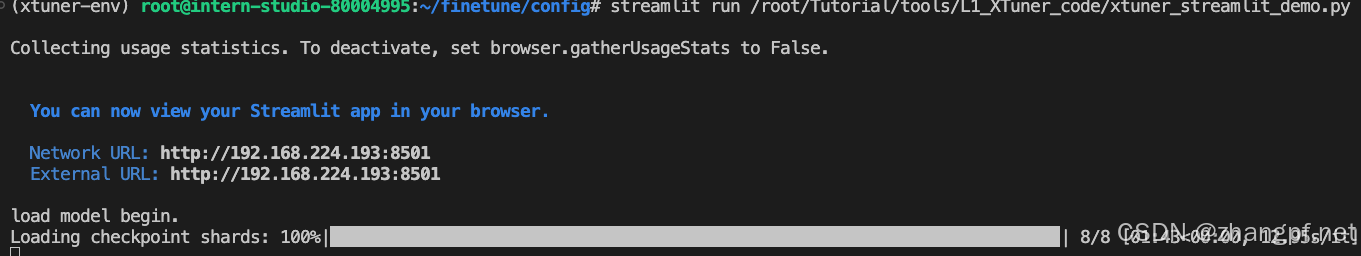

Edit /root/Tutorial/tools/L1_XTuner_code/xtuner_streamlit_demo.py so that its model path points to the model we just merged, then run it.

Use port forwarding to map port 8501 on the server to your local machine:

ssh -CNg -L 8501:127.0.0.1:8501 root@ssh.intern-ai.org.cn

Then open 127.0.0.1:8501 in a browser to chat with the merged model.
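If the page does not load, a quick way to tell whether the tunnel or the demo is at fault is to test the forwarded port from the local machine. This is a minimal sketch using only the standard library; `port_is_open` is a hypothetical helper, and 8501 is the port used in the ssh command above.

```python
import socket


def port_is_open(host="127.0.0.1", port=8501, timeout=1.0):
    """Return True if a TCP connection to host:port succeeds within timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        return False
```

`port_is_open()` returning `False` usually means the ssh forwarding is not running. `True` only shows that something is listening locally, so a blank page at that point suggests the demo on the server side has not started.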
