Check-in

import mindspore
from mindspore import nn
from mindspore.dataset import vision, transforms
from mindspore.dataset import MnistDataset
from download import download

# Download and unpack the MNIST dataset into ./MNIST_Data
url = "https://mindspore-website.obs.cn-north-4.myhuaweicloud.com/" \
      "notebook/datasets/MNIST_Data.zip"
path = download(url, "./", kind="zip", replace=True)
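If the third-party `download` package is not installed, the download-and-unzip step can be approximated with the Python standard library. This is a minimal sketch, not the `download` package's implementation: `fetch_and_unzip` and `unzip_to` are hypothetical helper names, and the demo at the end extracts a locally built archive instead of fetching the real MNIST zip, so it runs offline.

```python
import os
import tempfile
import urllib.request
import zipfile


def unzip_to(archive_path, dest_dir):
    """Extract every member of a zip archive into dest_dir (created if missing)."""
    os.makedirs(dest_dir, exist_ok=True)
    with zipfile.ZipFile(archive_path) as zf:
        zf.extractall(dest_dir)
        return zf.namelist()


def fetch_and_unzip(url, dest_dir="./", archive_name="MNIST_Data.zip"):
    """Hypothetical stand-in for download(url, dest_dir, kind="zip", replace=True)."""
    os.makedirs(dest_dir, exist_ok=True)
    archive_path = os.path.join(dest_dir, archive_name)
    urllib.request.urlretrieve(url, archive_path)  # overwrites an existing file
    return unzip_to(archive_path, dest_dir)


# Offline demo: build a tiny zip locally and extract it (no network access needed)
with tempfile.TemporaryDirectory() as tmp:
    archive = os.path.join(tmp, "demo.zip")
    with zipfile.ZipFile(archive, "w") as zf:
        zf.writestr("MNIST_Data/train/README.txt", "placeholder")
    members = unzip_to(archive, os.path.join(tmp, "out"))
    extracted_ok = os.path.isfile(
        os.path.join(tmp, "out", "MNIST_Data", "train", "README.txt"))

print(members, extracted_ok)
```

With network access, `fetch_and_unzip(url)` would fetch the archive from the URL above and unpack it next to the script.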

def datapipe(path, batch_size):
    # Scale pixels to [0, 1], normalize with the MNIST mean/std, then convert HWC to CHW
    image_transforms = [
        vision.Rescale(1.0 / 255.0, 0),
        vision.Normalize(mean=(0.1307,), std=(0.3081,)),
        vision.HWC2CHW()
    ]
    # Cast labels to int32 class indices
    label_transform = transforms.TypeCast(mindspore.int32)

    dataset = MnistDataset(path)
    dataset = dataset.map(image_transforms, 'image')
    dataset = dataset.map(label_transform, 'label')
    dataset = dataset.batch(batch_size)
    return dataset

train_dataset = datapipe('MNIST_Data/train', batch_size=64)
test_dataset = datapipe('MNIST_Data/test', batch_size=64)
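The image transforms boil down to per-pixel arithmetic plus an axis reorder. The pure-Python sketch below reproduces that math on a toy 2×2 grayscale image, assuming `Rescale` computes `pixel * rescale + shift` and `Normalize` computes `(value - mean) / std`; the helper names are illustrative, not MindSpore APIs.

```python
MEAN, STD = 0.1307, 0.3081


def rescale(pixel, scale=1.0 / 255.0, shift=0.0):
    # Rescale: output = pixel * scale + shift, mapping 0..255 to 0.0..1.0
    return pixel * scale + shift


def normalize(value, mean=MEAN, std=STD):
    # Normalize: output = (value - mean) / std, per channel
    return (value - mean) / std


def hwc_to_chw(image):
    # Reorder nested lists from image[h][w][c] to out[c][h][w]
    height, width, channels = len(image), len(image[0]), len(image[0][0])
    return [[[image[h][w][c] for w in range(width)] for h in range(height)]
            for c in range(channels)]


def preprocess(image):
    # Apply rescale then normalize to every value, then move channels first
    scaled = [[[normalize(rescale(v)) for v in pixel] for pixel in row] for row in image]
    return hwc_to_chw(scaled)


toy = [[[0], [255]],
       [[128], [64]]]  # 2 x 2 grayscale image in HWC layout (1 channel)
chw = preprocess(toy)
print(len(chw), len(chw[0]), len(chw[0][0]))  # 1 2 2
print(round(chw[0][0][0], 4))  # -0.4242, i.e. (0 - 0.1307) / 0.3081
```

The values 0.1307 and 0.3081 are the commonly used MNIST mean and standard deviation on the [0, 1] scale, which is why `Rescale` has to run before `Normalize`.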

class Network(nn.Cell):
    def __init__(self):
        super().__init__()
        self.flatten = nn.Flatten()
        # Three fully connected layers: 784 -> 512 -> 512 -> 10 class logits
        self.dense_relu_sequential = nn.SequentialCell(
            nn.Dense(28*28, 512),
            nn.ReLU(),
            nn.Dense(512, 512),
            nn.ReLU(),
            nn.Dense(512, 10)
        )

    def construct(self, x):
        x = self.flatten(x)  # (N, 1, 28, 28) -> (N, 784)
        logits = self.dense_relu_sequential(x)
        return logits
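To make the shapes in `Network` concrete, the sketch below assumes a batch of 64 single-channel 28×28 images (the layout produced by the pipeline above) and counts the weights and biases of the three `nn.Dense` layers, assuming each layer keeps its default bias. It is plain Python arithmetic, not a MindSpore call.

```python
LAYERS = [(28 * 28, 512), (512, 512), (512, 10)]  # (in_features, out_features)


def dense_params(in_features, out_features, has_bias=True):
    # Weight matrix has in_features * out_features entries; bias adds out_features
    return in_features * out_features + (out_features if has_bias else 0)


def flatten_shape(shape):
    # Flatten keeps the batch axis and collapses all remaining axes
    batch, *rest = shape
    size = 1
    for dim in rest:
        size *= dim
    return (batch, size)


flat = flatten_shape((64, 1, 28, 28))
total = sum(dense_params(i, o) for i, o in LAYERS)
print(flat)   # (64, 784)
print(total)  # 669706
```

Almost all of the roughly 670k trainable parameters sit in the first layer (401,920), since it connects every one of the 784 pixels to 512 hidden units.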

model = Network()

# Hyperparameters (batch_size only records the value already passed to datapipe above)
epochs = 3
batch_size = 64
learning_rate = 1e-2

loss_fn = nn.CrossEntropyLoss()
optimizer = nn.SGD(model.trainable_params(), learning_rate=learning_rate)

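# Forward pass: return the loss, plus the logits as an auxiliary output
def forward_fn(data, label):
    logits = model(data)
    loss = loss_fn(logits, label)
    return loss, logits

# Differentiate forward_fn w.r.t. the optimizer's parameters; with has_aux=True only
# the first output (loss) is differentiated and the logits are passed through
grad_fn = mindspore.value_and_grad(forward_fn, None, optimizer.parameters, has_aux=True)

# One optimization step: compute loss and gradients, then update the parameters
def train_step(data, label):
    (loss, _), grads = grad_fn(data, label)
    optimizer(grads)
    return loss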
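`grad_fn` returns `((loss, logits), grads)`, and `optimizer(grads)` applies the update. As a framework-free illustration of the same pattern, the sketch below swaps in a hypothetical one-feature linear model with squared-error loss and hand-derived gradients; the update `p - lr * g` assumes plain SGD with no momentum or weight decay (the `nn.SGD` defaults). None of these functions are MindSpore APIs.

```python
def forward_fn(params, x, y):
    # Toy model y_hat = w * x + b with squared-error loss; pred is the auxiliary output
    w, b = params
    pred = w * x + b
    loss = (pred - y) ** 2
    return loss, pred


def value_and_grad(params, x, y):
    # Returns ((loss, aux), grads); only the loss is differentiated, like has_aux=True
    loss, pred = forward_fn(params, x, y)
    dloss_dpred = 2.0 * (pred - y)
    grads = (dloss_dpred * x, dloss_dpred)  # (dL/dw, dL/db)
    return (loss, pred), grads


def sgd_update(params, grads, learning_rate):
    # Plain SGD without momentum or weight decay: p <- p - lr * g
    return tuple(p - learning_rate * g for p, g in zip(params, grads))


def train_step(params, x, y, learning_rate):
    (loss, _), grads = value_and_grad(params, x, y)
    return sgd_update(params, grads, learning_rate), loss


params = (0.0, 0.0)
data = [(1.0, 3.0), (2.0, 5.0), (3.0, 7.0)]  # points on the line y = 2x + 1
for epoch in range(500):
    for x, y in data:
        params, loss = train_step(params, x, y, learning_rate=0.05)

print(params)  # approaches (2.0, 1.0)
```

Unpacking `(loss, _), grads` in `train_step` mirrors the MindSpore version: the auxiliary prediction is available but ignored during training.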

def train_loop(model, dataset):
    size = dataset.get_dataset_size()  # number of batches per epoch
    model.set_train()
    for batch, (data, label) in enumerate(dataset.create_tuple_iterator()):
        loss = train_step(data, label)
        if batch % 100 == 0:
            loss, current = loss.asnumpy(), batch
            print(f"loss: {loss:>7f} [{current:>3d}/{size:>3d}]")
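On a batched dataset, `get_dataset_size()` reports the number of batches, so the progress counter in `train_loop` counts batches, not images. The sketch below reproduces that count for the 60,000-image MNIST training split, assuming batches of 64 with the partial last batch kept (the behavior of `batch` unless `drop_remainder=True`), and lists which batch indices the `batch % 100 == 0` check logs. The loss value in the formatted line is an arbitrary placeholder.

```python
import math


def num_batches(num_samples, batch_size, drop_remainder=False):
    # A partial final batch is kept unless drop_remainder is True
    if drop_remainder:
        return num_samples // batch_size
    return math.ceil(num_samples / batch_size)


size = num_batches(60000, 64)  # MNIST training split: 60,000 images
logged_batches = [batch for batch in range(size) if batch % 100 == 0]
sample_line = f"loss: {2.302806:>7f} [{0:>3d}/{size:>3d}]"

print(size)                 # 938
print(len(logged_batches))  # 10 log lines per epoch: batches 0, 100, ..., 900
print(sample_line)          # loss: 2.302806 [  0/938]
```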

def test_loop(model, dataset, loss_fn):
    num_batches = dataset.get_dataset_size()
    model.set_train(False)
    total, test_loss, correct = 0, 0, 0
    for data, label in dataset.create_tuple_iterator():
        pred = model(data)
        total += len(data)
        test_loss += loss_fn(pred, label).asnumpy()
        correct += (pred.argmax(1) == label).asnumpy().sum()
    test_loss /= num_batches  # average of the per-batch mean losses
    correct /= total          # fraction of correctly classified samples
    print(f"Test: \n Accuracy: {(100*correct):>0.1f}%, Avg loss: {test_loss:>8f} \n")
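`test_loop` tracks two different averages: accuracy is correct predictions over all samples, while the reported loss is the mean of per-batch mean losses. The pure-Python sketch below reproduces both on hand-made logits; `cross_entropy` assumes the mean-reduced softmax cross-entropy that `nn.CrossEntropyLoss` computes by default, and all helper names are illustrative.

```python
import math


def cross_entropy(logits, labels):
    # Mean over the batch of -log(softmax(row)[label]), computed stably via log-sum-exp
    total = 0.0
    for row, label in zip(logits, labels):
        m = max(row)
        log_sum_exp = m + math.log(sum(math.exp(v - m) for v in row))
        total += log_sum_exp - row[label]
    return total / len(logits)


def argmax(row):
    # Index of the largest logit, i.e. the predicted class
    return max(range(len(row)), key=lambda i: row[i])


def evaluate(batches):
    # Mirrors test_loop: sum per-batch mean losses, count correct predictions
    total, test_loss, correct = 0, 0.0, 0
    for logits, labels in batches:
        total += len(logits)
        test_loss += cross_entropy(logits, labels)
        correct += sum(argmax(row) == label for row, label in zip(logits, labels))
    return correct / total, test_loss / len(batches)


# Two toy batches of 2-class logits; the second is smaller, like a final partial batch
batches = [
    ([[2.0, 0.0], [0.0, 2.0]], [0, 0]),
    ([[0.0, 3.0]], [1]),
]
accuracy, avg_loss = evaluate(batches)
print(f"Accuracy: {100 * accuracy:>0.1f}%, Avg loss: {avg_loss:>8f}")
```

Because the summed batch means are divided by the number of batches, a smaller final batch weighs as much as a full one. For the 10,000-image MNIST test split with batches of 64, the last batch holds only 16 images, so the effect on the reported average loss is small.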


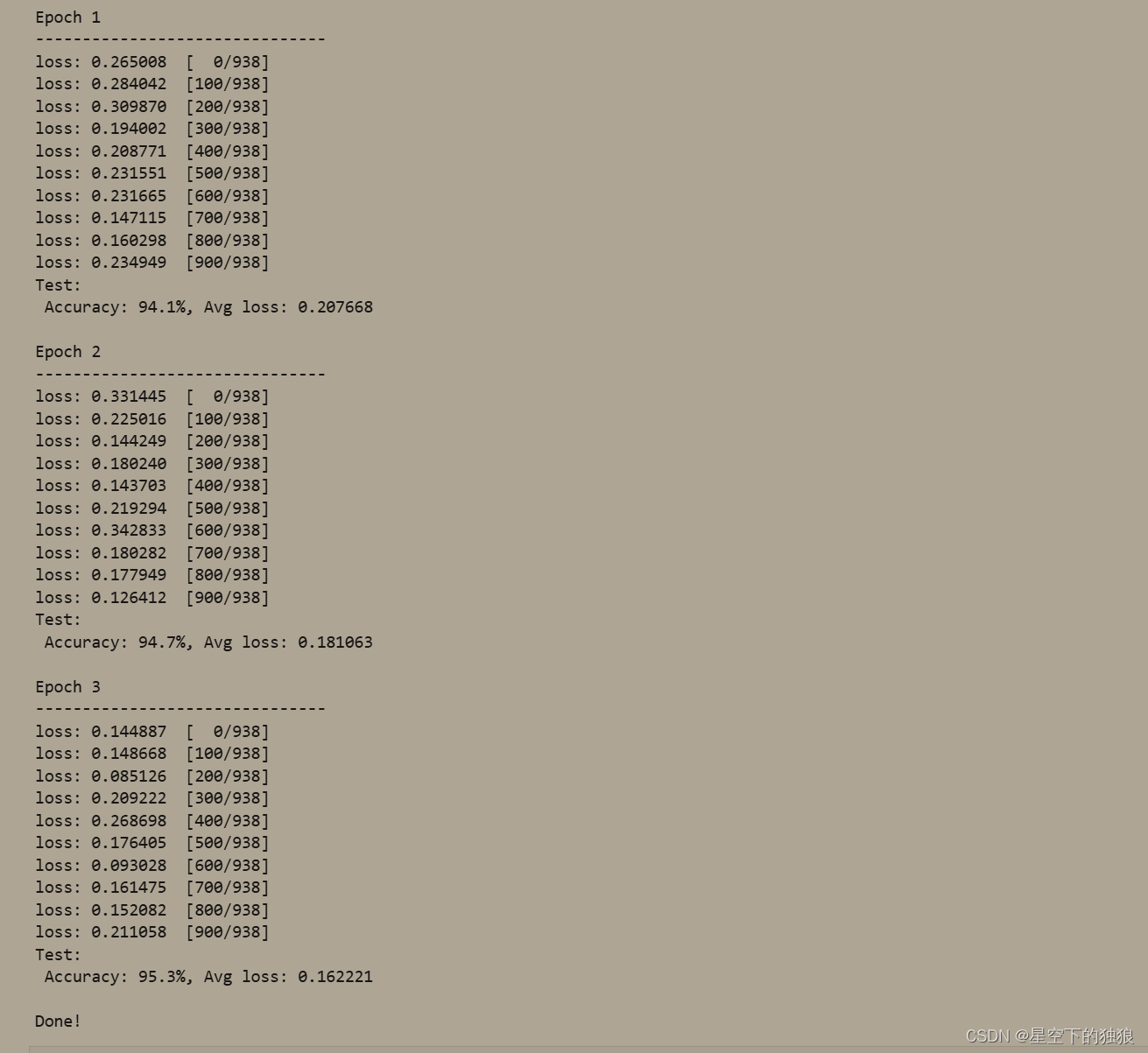

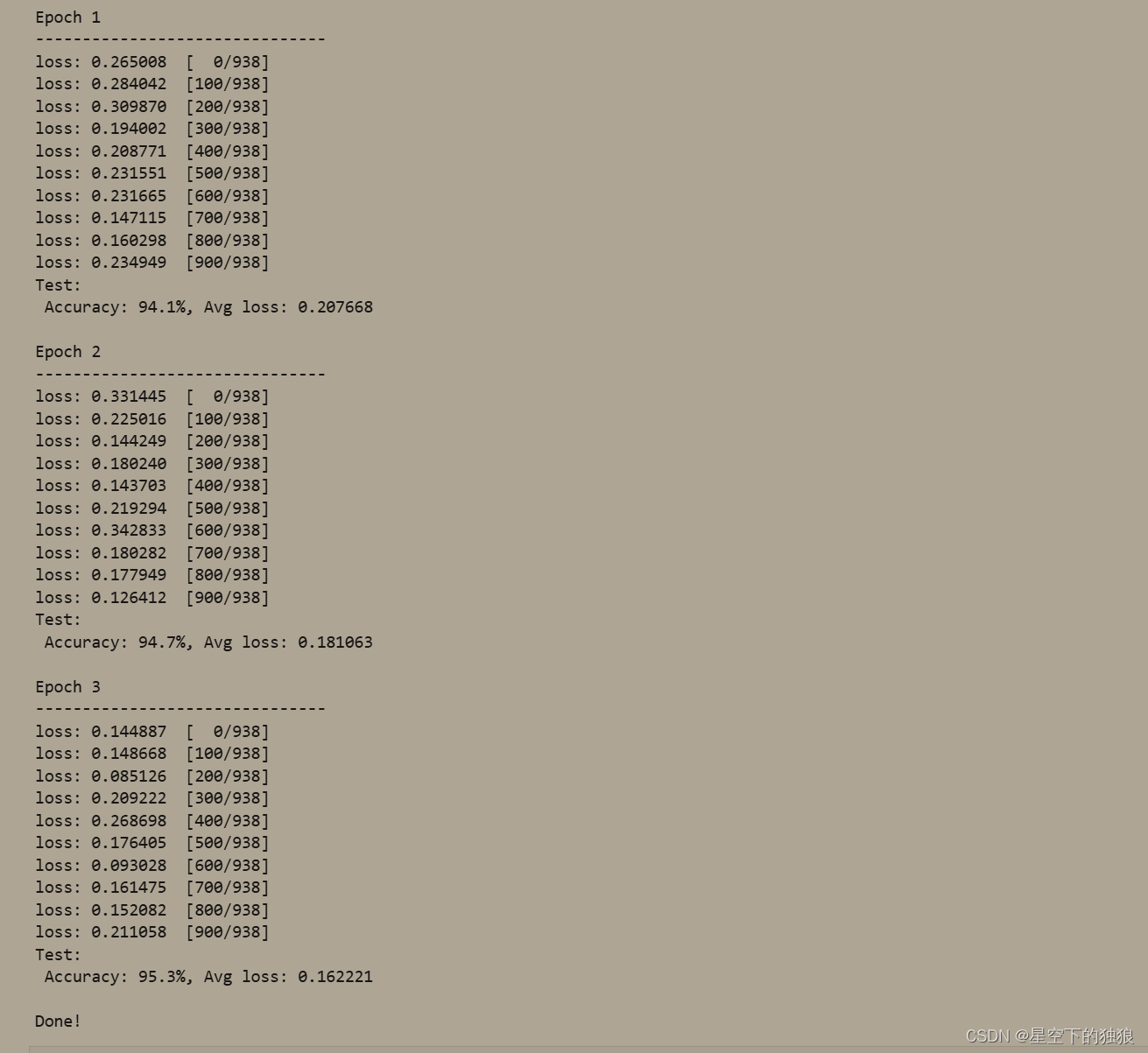

for t in range(epochs):
    print(f"Epoch {t+1}\n------------------------------")
    train_loop(model, train_dataset)
    test_loop(model, test_dataset, loss_fn)
print("Done!")

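Output: this run produced no results because the machine ran out of memory, so the output was taken from a run on another machine.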
