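In FFmpeg, image and video codecs generally work with YUV pixel formats, where Y is the luma (brightness, i.e. the grayscale image) and U and V carry the chroma. When U and V carry no color information, a color picture turns black-and-white.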

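The Y, U and V components of a frame read by FFmpeg are quantized, that is, mapped into a fixed range (0 to 255 for 8-bit formats). U and V are stored with an offset of 128, so for the chroma planes it is the value 128, not 0, that means the component is truly absent. This applies to U and V only: Y = 128 is simply a mid-gray luma value.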
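To make the role of 128 concrete, here is a minimal sketch that converts one YUV sample to RGB. It assumes the full-range BT.601 (JFIF) equations; the matrix a real stream uses depends on its colorspace and range, and the names `Rgb`, `ClampToByte`, `YuvToRgb` and `DemoNeutralChroma` are hypothetical helpers, not FFmpeg APIs.

```cpp
#include <algorithm>
#include <cassert>
#include <cmath>
#include <cstdint>

struct Rgb { uint8_t r, g, b; };

// Clamp a floating-point value into 0..255 and round it to the nearest byte.
static uint8_t ClampToByte(double v) {
    return static_cast<uint8_t>(std::lround(std::min(255.0, std::max(0.0, v))));
}

// Assumption: full-range BT.601 (JFIF) equations. U and V are stored with an
// offset of 128, so the offset is removed before the chroma terms are applied.
Rgb YuvToRgb(uint8_t y, uint8_t u, uint8_t v) {
    const double cb = u - 128.0;
    const double cr = v - 128.0;
    return { ClampToByte(y + 1.402 * cr),
             ClampToByte(y - 0.344136 * cb - 0.714136 * cr),
             ClampToByte(y + 1.772 * cb) };
}

// Usage check: with neutral chroma, every channel reproduces the luma value.
inline void DemoNeutralChroma() {
    const Rgb gray = YuvToRgb(100, 128, 128);
    assert(gray.r == 100 && gray.g == 100 && gray.b == 100);
}
```

With U = V = 128 both chroma terms vanish and R = G = B = Y, which is a gray pixel. Filling the chroma planes with 0 instead would not remove the color: under these equations it pushes the pixel strongly toward green.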

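So each frame that is read is processed as follows. The byte count width * height / 4 assumes an 8-bit planar 4:2:0 format such as AV_PIX_FMT_YUV420P with no padding at the end of each row:

    memset(pFrameVideoA->data[1], 128, m_pReadCodecCtx_VideoA->width * m_pReadCodecCtx_VideoA->height / 4);
    memset(pFrameVideoA->data[2], 128, m_pReadCodecCtx_VideoA->width * m_pReadCodecCtx_VideoA->height / 4);

This effectively clears the chroma information, leaving a grayscale picture.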
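The two memset calls above treat each chroma plane as one contiguous block of width * height / 4 bytes. A decoded frame may carry padding at the end of each row (its linesize can be larger than the visible width), in which case a single memset leaves the last chroma rows untouched. The following is a minimal sketch of a row-by-row variant, assuming an 8-bit 4:2:0 planar frame with positive line sizes. `PlanarFrameView` is a hypothetical stand-in for the `data`, `linesize`, `width` and `height` members of `AVFrame`, so that the example compiles without the FFmpeg headers.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <cstring>

// Hypothetical stand-in for the AVFrame members used here; with FFmpeg you
// would read the same values from the AVFrame itself.
struct PlanarFrameView {
    uint8_t *data[3];   // Y, U, V plane pointers
    int linesize[3];    // bytes per row of each plane, padding included
    int width;          // visible luma width in pixels
    int height;         // visible luma height in pixels
};

// Fill the U and V planes of an 8-bit YUV 4:2:0 planar frame with 128.
void ClearChroma420(const PlanarFrameView &frame) {
    assert(frame.data[1] != nullptr && frame.data[2] != nullptr);
    // Chroma planes are half the luma size in each dimension, rounded up.
    const int chromaWidth = (frame.width + 1) / 2;
    const int chromaHeight = (frame.height + 1) / 2;
    for (int plane = 1; plane <= 2; ++plane) {
        for (int row = 0; row < chromaHeight; ++row) {
            // Step by linesize, not by width, so row padding is skipped.
            uint8_t *line = frame.data[plane] +
                            static_cast<std::ptrdiff_t>(row) * frame.linesize[plane];
            std::memset(line, 128, static_cast<std::size_t>(chromaWidth));
        }
    }
}
```

In the article's code this would correspond to passing the plane pointers and line sizes of pFrameVideoA. The Y plane is left alone, so the brightness of the picture is unchanged.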

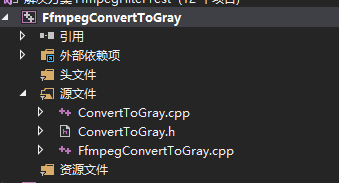

The code is structured as follows:

FfmpegConvertToGray.cpp contains the following code:

    #include <iostream>

    #include "ConvertToGray.h"

    // Link against the FFmpeg import libraries (MSVC-specific pragma).
    #pragma comment(lib, "avcodec.lib")
    #pragma comment(lib, "avformat.lib")
    #pragma comment(lib, "avutil.lib")
    #pragma comment(lib, "avdevice.lib")
    #pragma comment(lib, "avfilter.lib")
    #pragma comment(lib, "postproc.lib")
    #pragma comment(lib, "swresample.lib")
    #pragma comment(lib, "swscale.lib")

    int main()
    {
        CConvertToGray cConvertToGray;

        // Input video and the grayscale output file
        const char *pFileA = "E:\\learn\\ffmpeg\\FfmpegFilterTest\\x64\\Release\\in-vs.mp4";
        const char *pFileOut = "E:\\learn\\ffmpeg\\FfmpegFilterTest\\x64\\Release\\out-gray.mp4";

        // Start the conversion, then block until it has finished
        cConvertToGray.StartConvertToGray(pFileA, pFileOut);
        cConvertToGray.WaitFinish();

        return 0;
    }

ConvertToGray.h contains the following code:

    #pragma once

    #include <Windows.h>

    // FFmpeg is a C library, so its headers need C linkage when included from C++.
    #ifdef __cplusplus
    extern "C"
    {
    #endif
    #include "libavcodec/avcodec.h"
    #include "libavformat/avformat.h"
    #include "libswscale/swscale.h"
    #include "libswresample/swresample.h"
    #include "libavdevice/avdevice.h"
    #include "libavutil/audio_fifo.h"
    #include "libavutil/avutil.h"
    #include "libavutil/fifo.h"
    #include "libavutil/frame.h"
    #include "libavutil/imgutils.h"
    #include "libavfilter/avfilter.h"
    #include "libavfilter/buffersink.h"
    #include "libavfilter/buffersrc.h"
    #ifdef __cplusplus
    }
    #endif

    class CConvertToGray
    {
    public:
        CConvertToGray();
        ~CConvertToGray();

    public:
        int StartConvertToGray(const char *pFileA, const char *pFileOut);
        int WaitFinish();

    private:
        int OpenFileA(const char *pFileA);
        int OpenOutPut(const char *pFileOut);

    private:
        // Static functions with the Win32 thread-procedure signature,
        // each declared next to a member function with the same name stem
        static DWORD WINAPI VideoAReadProc(LPVOID lpParam);
        void VideoARead();

        static DWORD WINAPI VideoConvertToGrayProc(LPVOID lpParam);
        void VideoConvertToGray();

    private:
        AVFormatContext *m_pFormatCtx_FileA
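The header declares one read routine and one convert routine, each with a static function matching the Win32 thread-procedure signature, plus a Start/Wait pair in the public interface. The listing stops before the implementation, so the following is only a sketch of how such a two-thread pipeline could be arranged. It swaps in portable `std::thread` for the Win32 thread API, and the queue between the threads, the class `GrayPipelineSketch` and the struct `ChromaFrame` are all assumptions made for illustration, not the author's code.

```cpp
#include <algorithm>
#include <cassert>
#include <condition_variable>
#include <cstdint>
#include <mutex>
#include <queue>
#include <thread>
#include <utility>
#include <vector>

// Hypothetical, simplified frame: only the two chroma planes matter here.
struct ChromaFrame {
    std::vector<uint8_t> u, v;
};

class GrayPipelineSketch {
public:
    // Launch the reader and converter threads (the role of StartConvertToGray).
    void Start(int frameCount) {
        assert(!m_reader.joinable() && !m_converter.joinable());
        m_reader = std::thread(&GrayPipelineSketch::ReadLoop, this, frameCount);
        m_converter = std::thread(&GrayPipelineSketch::ConvertLoop, this);
    }
    // Block until both threads have finished (the role of WaitFinish).
    void WaitFinish() {
        if (m_reader.joinable()) m_reader.join();
        if (m_converter.joinable()) m_converter.join();
    }
    // Only safe to call after WaitFinish has returned.
    const std::vector<ChromaFrame> &Output() const { return m_output; }

private:
    // Producer: stands in for decoding; pushes frames with non-neutral chroma.
    void ReadLoop(int frameCount) {
        for (int i = 0; i < frameCount; ++i) {
            ChromaFrame f{std::vector<uint8_t>(4, 200), std::vector<uint8_t>(4, 30)};
            { std::lock_guard<std::mutex> lock(m_mutex); m_queue.push(std::move(f)); }
            m_cv.notify_one();
        }
        { std::lock_guard<std::mutex> lock(m_mutex); m_done = true; }
        m_cv.notify_one();
    }
    // Consumer: neutralizes chroma; stands in for convert-and-encode.
    void ConvertLoop() {
        for (;;) {
            std::unique_lock<std::mutex> lock(m_mutex);
            m_cv.wait(lock, [this] { return !m_queue.empty() || m_done; });
            if (m_queue.empty()) return;  // reader finished and queue drained
            ChromaFrame f = std::move(m_queue.front());
            m_queue.pop();
            lock.unlock();
            std::fill(f.u.begin(), f.u.end(), 128);
            std::fill(f.v.begin(), f.v.end(), 128);
            m_output.push_back(std::move(f));
        }
    }

    std::thread m_reader, m_converter;
    std::mutex m_mutex;
    std::condition_variable m_cv;
    std::queue<ChromaFrame> m_queue;
    std::vector<ChromaFrame> m_output;
    bool m_done = false;
};
```

Usage follows the same shape as main() in FfmpegConvertToGray.cpp: construct the object, call Start, then WaitFinish. Separating reading from converting lets decoding and encoding overlap, at the cost of a queue that has to be protected by a mutex.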

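This article showed how to read and process YUV frames in FFmpeg: clearing the chroma information (setting the U and V components to 128) converts the picture to grayscale, and the listings show the key memory operation and the surrounding encoding flow.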
