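I recently downloaded Spark and wanted to run a small Hello World demo to get started. I was using local mode and running WordCount in IDEA when the problem above appeared. Following advice online, I put hadoop.dll and winutils.exe into Hadoop's bin directory, and also into C:/Windows/System32. I ran the example full of hope, and the same problem came up again. I was stumped. Then I saw others suggest changing the access0 method in the NativeIO class source so that it simply does return true. I did that too, and the good news is that it finally worked. Happy!!!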

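Later I wanted to try submitting the job with spark-submit, and a problem appeared again. I figured the example I had compiled might be at fault, so I wrote the demo directly in the terminal with spark-shell. As soon as it reached an RDD operation, the same problem appeared. I was crushed...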

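Leaving this unsolved just felt awful. I wondered whether it was a version problem: my Spark is 3.1.1, Scala is 2.12.10, and Hadoop is 3.2, which meets the requirements according to the official site. Then I suspected that hadoop.dll and winutils.exe did not match my Hadoop version. I searched online, and the newest build of these two files I could find was for 3.1, so I made do with that. I ran it again with high hopes, and the problem was still there...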

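After several more breakdowns, I found a post online saying that the missing hadoop.dll comes down to it not having been compiled. I tried calling System.load("D://hadoop.dll") directly, and the resulting error pointed to a Java version problem. I reinstalled Java, and that finally solved it completely!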
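The System.load check described above can be sketched as a tiny standalone program. This is only a minimal sketch: the class name NativeLoadCheck and the helper tryLoad are hypothetical names made up for illustration, and the default path D:/hadoop.dll is simply the location used in this post. It loads the DLL outside of Spark so that the JVM's own error message is visible instead of being buried in a Spark stack trace.

```java
public class NativeLoadCheck {

    /**
     * Tries to load a native library from an absolute path.
     * Returns a short status string instead of throwing, so the
     * underlying JVM message can be printed and inspected.
     * (Hypothetical helper, not part of Spark or Hadoop.)
     */
    public static String tryLoad(String absolutePath) {
        try {
            System.load(absolutePath);
            return "OK: loaded " + absolutePath;
        } catch (UnsatisfiedLinkError e) {
            // Thrown when the file is missing, the path is not absolute,
            // or the library does not match the JVM's architecture.
            return "FAILED: " + e.getMessage();
        }
    }

    public static void main(String[] args) {
        // Assumption: the DLL sits at D:/hadoop.dll unless a path is passed in.
        String path = args.length > 0 ? args[0] : "D:/hadoop.dll";
        System.out.println("java.version = " + System.getProperty("java.version"));
        System.out.println("os.arch      = " + System.getProperty("os.arch"));
        System.out.println(tryLoad(path));
    }
}
```

If the file exists at that path and the load still fails, the printed message is the place to look. In my case it was what pointed at the Java installation rather than at Spark or Hadoop.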

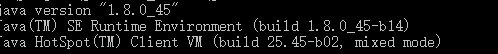

My previous Java installation was 32-bit, but my machine is 64-bit.

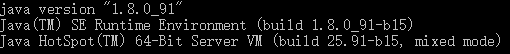

So I reinstalled a 64-bit Java.
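To confirm which kind of JVM is actually being picked up after the reinstall, a quick check along these lines may help. It is a rough sketch with hypothetical names (JvmBitness, is64Bit). It relies on the sun.arch.data.model system property, which is specific to HotSpot-style JVMs and may be absent elsewhere, so it falls back to os.arch, which reports the architecture of the JVM rather than of Windows itself.

```java
public class JvmBitness {

    /**
     * Decides whether a JVM is 64-bit from two system property values.
     * dataModel: value of "sun.arch.data.model" ("32", "64", "unknown", or null).
     * osArch:    value of "os.arch" (for example "amd64" or "x86").
     * (Hypothetical helper written for this post.)
     */
    public static boolean is64Bit(String dataModel, String osArch) {
        if ("64".equals(dataModel)) {
            return true;
        }
        if ("32".equals(dataModel)) {
            return false;
        }
        // Property missing or "unknown": fall back to the architecture name.
        return osArch != null && osArch.contains("64");
    }

    public static void main(String[] args) {
        String dataModel = System.getProperty("sun.arch.data.model");
        String osArch = System.getProperty("os.arch");
        System.out.println("sun.arch.data.model = " + dataModel);
        System.out.println("os.arch             = " + osArch);
        System.out.println(is64Bit(dataModel, osArch)
                ? "This JVM looks 64-bit."
                : "This JVM looks 32-bit; a 64-bit hadoop.dll will not load in it.");
    }
}
```

Run it with the same java that IDEA, spark-shell and spark-submit use, since several Java installations can coexist on one machine and the one on PATH is not necessarily the one just installed.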

Practice is the sole criterion for testing truth!

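The author ran into a problem while trying to run a WordCount demo in Spark local mode. Placing the hadoop.dll and winutils.exe files and modifying the source code eventually got the local run working. However, a new error appeared when submitting the job with spark-submit. After some investigation, the cause turned out to be a 32-bit Java installation on a 64-bit system. After switching to 64-bit Java, the problem was solved. The post stresses the importance of matching versions and of hands-on practice.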
