In this tutorial series, I will try to recreate an artificial neural network as described in the book Neural Networks from Scratch by Harrison Kinsley, using Cangjie instead of Python. We will start with code for a single neuron, move on to layers of neurons, implement batching, activation and loss functions, and backpropagation, and finally train the network on actual data.

Introduction

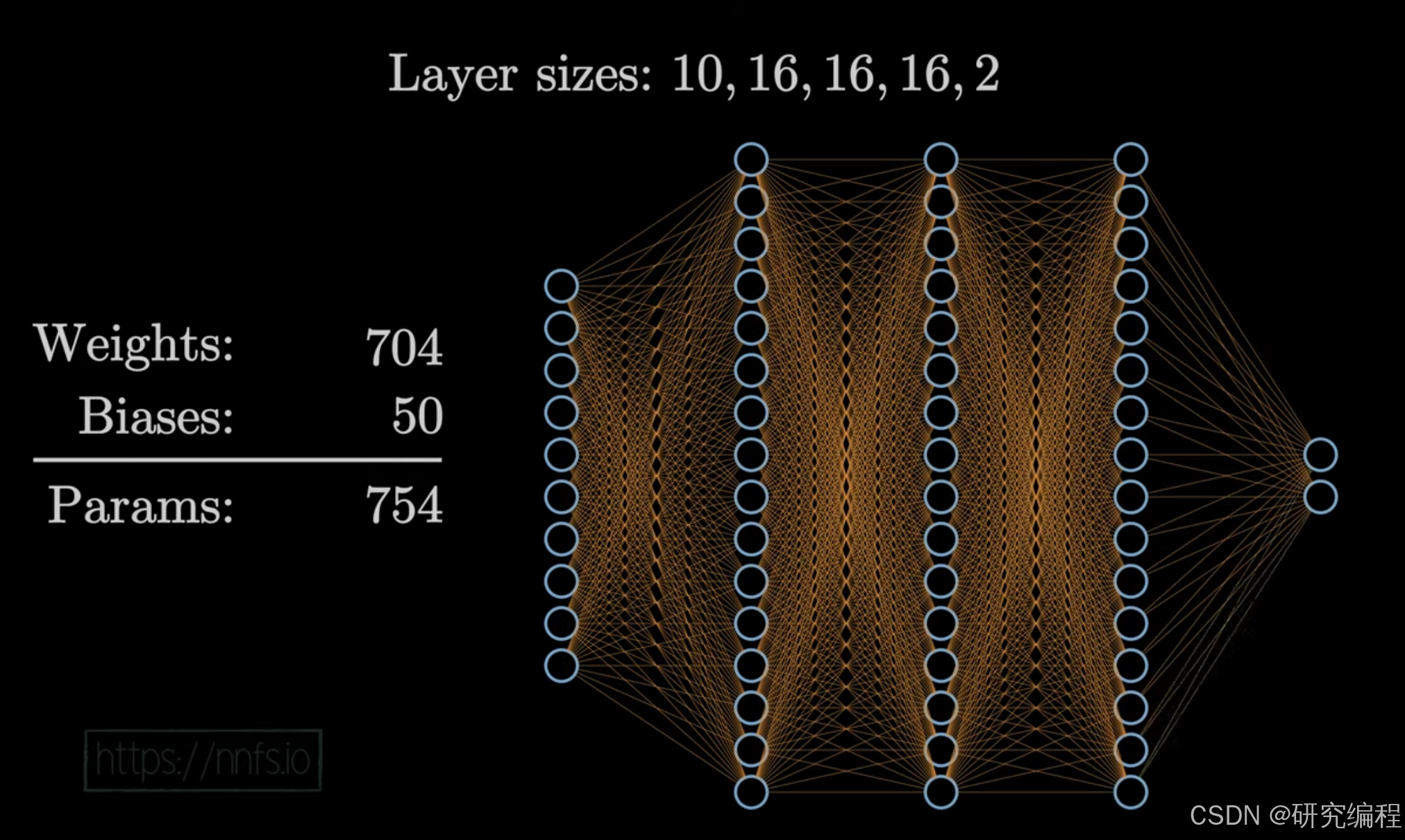

I am sure many of you are familiar with the idea of neural networks (NN). Below is an example of a typical NN for binary classification. Here we have 5 layers. The first layer is the input layer of size 10 - your data (also called `features` or `X`).

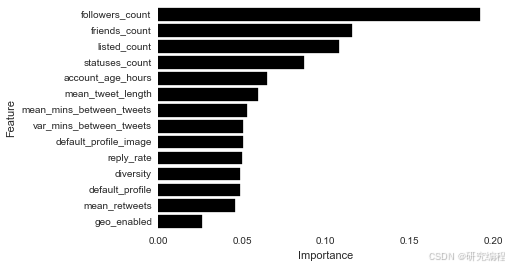

Each input node corresponds to one feature of a data point. For example, in a Twitter bot dataset, these features could be follower count, friends count, account age in hours, mean tweet length, etc. - all of which are important indicators of whether an account is a bot (1 or True) or human (0 or False). That label is your `y` (the value to be predicted).

Source: Bot or Not: an end-to-end data analysis in Python

It is important to note that the values of X, that is, the values of the input layer, need to be numeric or at least represented as numbers. For example, the `diversity` feature in the image above refers to lexical diversity - the ratio of unique tokens to total tokens in a document - and not to the actual unique words.
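To make the idea of turning text into a number concrete, here is a minimal sketch of the lexical diversity ratio described above. It is written in Python purely for illustration (the series itself targets Cangjie), and the function name `lexical_diversity` and the whitespace-based tokenization are assumptions of this sketch, not how the source dataset was actually built.

```python
def lexical_diversity(text: str) -> float:
    """Ratio of unique tokens to total tokens; returns 0.0 for empty text."""
    # Assumption: lowercasing plus whitespace splitting stands in for a real tokenizer.
    tokens = text.lower().split()
    if not tokens:
        return 0.0
    return len(set(tokens)) / len(tokens)


# "the" appears twice, so there are 5 unique tokens out of 6 in total.
sample = "the cat sat on the mat"
print(lexical_diversity(sample))
```

The result is a single float per document, which is the kind of value an input node can accept.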

Layers 2, 3, and 4, each of size 16, are hidden layers (also referred to as `dense`).
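As a rough sketch of what these sizes imply, the snippet below lists the weight and bias shapes for the input layer and the three hidden layers. It is in Python for illustration only, and it assumes that each hidden layer is fully connected to the previous one and that weights are stored as (inputs, neurons). The helper names are hypothetical, and the output layer is left out because its size is not given in the text.

```python
# Layer sizes from the text: 10 inputs, then three hidden layers of 16 neurons.
# The output layer is omitted because its size is not stated.
layer_sizes = [10, 16, 16, 16]


def dense_shapes(sizes):
    """Return (weights_shape, biases_shape) for each consecutive pair of layers."""
    # Assumption: weights are stored as (n_inputs, n_neurons), one bias per neuron.
    return [((n_in, n_out), (n_out,)) for n_in, n_out in zip(sizes, sizes[1:])]


def parameter_count(sizes):
    """Total number of weights and biases across the listed layers."""
    return sum(n_in * n_out + n_out for n_in, n_out in zip(sizes, sizes[1:]))


for layer_number, (w_shape, b_shape) in enumerate(dense_shapes(layer_sizes), start=2):
    print(f"layer {layer_number}: weights {w_shape}, biases {b_shape}")

print("trainable parameters:", parameter_count(layer_sizes))
```

Under these assumptions, the first hidden layer has 10 × 16 weights plus 16 biases, and each of the next two has 16 × 16 weights plus 16 biases.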
