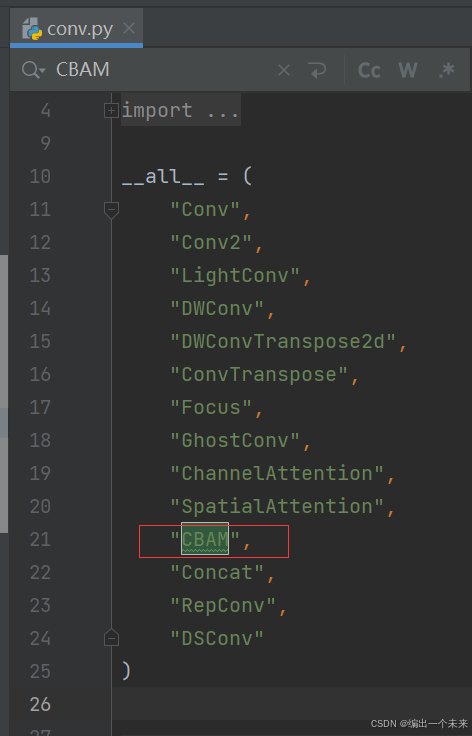

1 Add the CBAM code to `ultralytics/nn/modules/conv.py`, and add the new keywords to the export list at the top of that file (`__all__`).

    # Relies on `import torch` and `import torch.nn as nn` at the top of conv.py.


    class ChannelAttention(nn.Module):
        """Channel-attention module https://github.com/open-mmlab/mmdetection/tree/v3.0.0rc1/configs/rtmdet."""

        def __init__(self, channels: int) -> None:
            """Initialize the pooling, 1x1 convolution, and sigmoid used for channel attention."""
            super().__init__()
            self.pool = nn.AdaptiveAvgPool2d(1)  # global average pool to (B, C, 1, 1)
            self.fc = nn.Conv2d(channels, channels, 1, 1, 0, bias=True)  # 1x1 conv over channels
            self.act = nn.Sigmoid()

        def forward(self, x: torch.Tensor) -> torch.Tensor:
            """Scale each channel of x by a weight in (0, 1) computed from its global average."""
            return x * self.act(self.fc(self.pool(x)))


    class SpatialAttention(nn.Module):
        """Spatial-attention module."""

        def __init__(self, kernel_size=7):
            """Initialize Spatial-attention module with kernel size argument."""
            super().__init__()
            assert kernel_size in {3, 7}, "kernel size must be 3 or 7"
            padding = 3 if kernel_size == 7 else 1  # keeps the spatial size unchanged
            self.cv1 = nn.Conv2d(2, 1, kernel_size, padding=padding, bias=False)
            self.act = nn.Sigmoid()

        def forward(self, x):
            """Scale each spatial location of x by a weight computed from the channel-wise mean and max."""
            return x * self.act(self.cv1(torch.cat([torch.mean(x, 1, keepdim=True), torch.max(x, 1, keepdim=True)[0]], 1)))


    class CBAM(nn.Module):
        """Convolutional Block Attention Module."""

        def __init__(self, c1, kernel_size=7):
            """Initialize CBAM with given input channel (c1) and kernel size."""
            super().__init__()
            self.channel_attention = ChannelAttention(c1)
            self.spatial_attention = SpatialAttention(kernel_size)

        def forward(self, x):
            """Apply channel attention first, then spatial attention."""
            return self.spatial_attention(self.channel_attention(x))

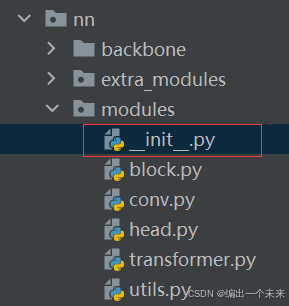

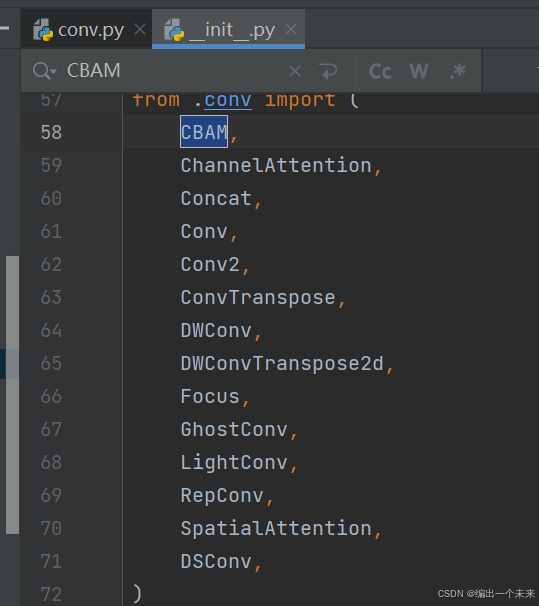

2 In `ultralytics/nn/modules/__init__.py`, add the `CBAM` keyword as well.

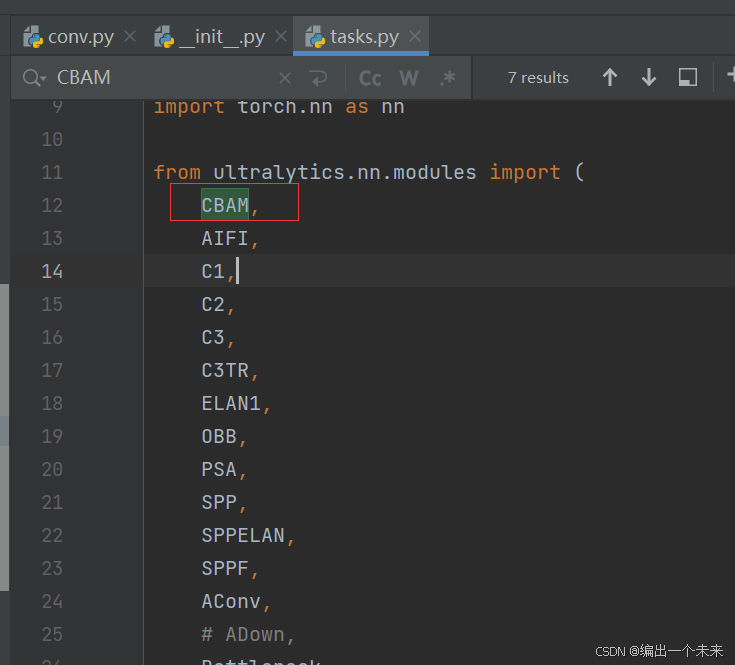

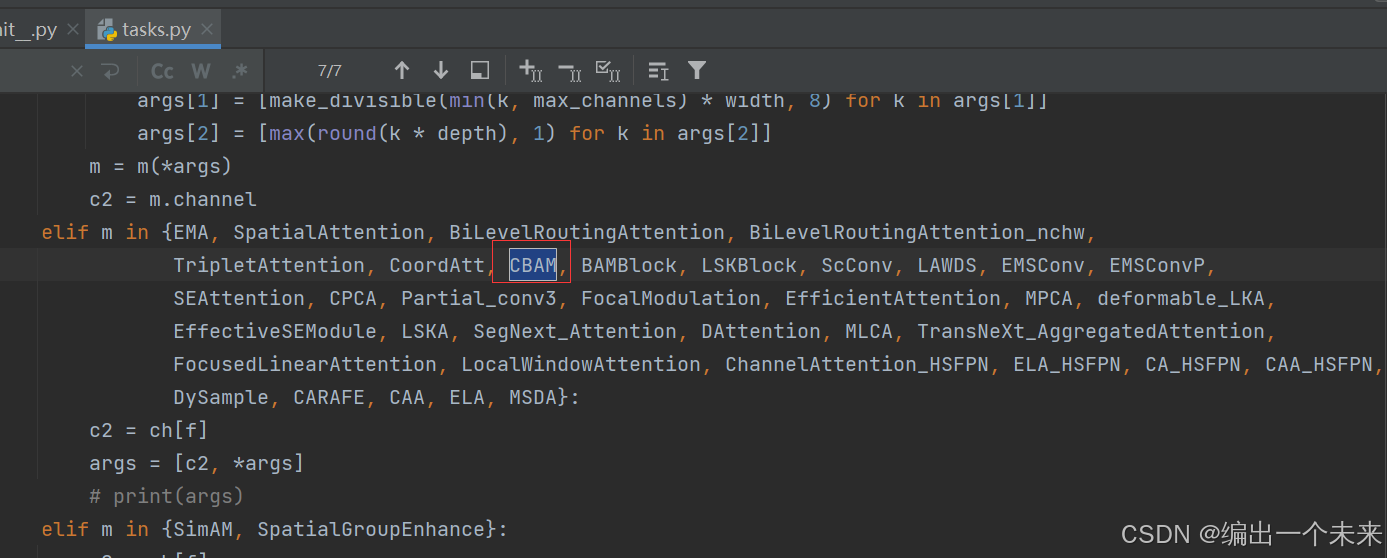

3 In `ultralytics/nn/tasks.py`, import `CBAM`.

4 In the `parse_model` function in `tasks.py`, add the keyword at the position shown in the figure below.
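
The exact edit depends on the screenshot, so the following is only a toy sketch of the idea, not the Ultralytics source. It illustrates why a YAML row with empty args such as `[-1, 1, CBAM, []]` can still build a `CBAM(c1)`: the parser has to prepend the input channel count taken from the previous layer. The function `resolve_args` and its branches are hypothetical, and width scaling is left out.

```python
# Toy stand-in for the argument-resolution step of a model parser.
# Hypothetical helper for illustration only; not the real parse_model.

def resolve_args(module_name, from_idx, args, ch):
    """Return (constructor_args, out_channels) for one YAML row.

    ch holds the output channels of the layers built so far.
    """
    c1 = ch[from_idx]  # channels produced by the layer this row reads from
    if module_name == "CBAM":
        # CBAM keeps the channel count, so only c1 is prepended:
        # an empty YAML args list [] becomes [c1].
        return [c1, *args], c1
    if module_name == "Conv":
        c2 = args[0]  # first YAML arg is the requested output width
        return [c1, c2, *args[1:]], c2
    raise ValueError(f"unhandled module: {module_name}")


ch = [256]  # pretend the previous layer outputs 256 channels
cbam_args, c2 = resolve_args("CBAM", -1, [], ch)
print(cbam_args, c2)
```

If CBAM were instead handled like `Conv` here, the empty args list would fail at `args[0]`, which is why the branch the keyword goes into matters.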

5 Write the network-structure YAML file.

    # Ultralytics YOLO 🚀, AGPL-3.0 license
    # YOLOv8 object detection model with P3-P5 outputs. For Usage examples see https://docs.ultralytics.com/tasks/detect

    # Parameters
    nc: 80 # number of classes
    scales: # model compound scaling constants, i.e. 'model=yolov8n.yaml' will call yolov8.yaml with scale 'n'
      # [depth, width, max_channels]
      n: [0.33, 0.25, 1024] # YOLOv8n summary: 225 layers, 3157200 parameters, 3157184 gradients, 8.9 GFLOPs
      s: [0.33, 0.50, 1024] # YOLOv8s summary: 225 layers, 11166560 parameters, 11166544 gradients, 28.8 GFLOPs
      m: [0.67, 0.75, 768] # YOLOv8m summary: 295 layers, 25902640 parameters, 25902624 gradients, 79.3 GFLOPs
      l: [1.00, 1.00, 512] # YOLOv8l summary: 365 layers, 43691520 parameters, 43691504 gradients, 165.7 GFLOPs
      x: [1.00, 1.25, 512] # YOLOv8x summary: 365 layers, 68229648 parameters, 68229632 gradients, 258.5 GFLOPs

    # YOLOv8.0n backbone
    backbone:
      # [from, repeats, module, args]
      - [-1, 1, Conv, [64, 3, 2]] # 0-P1/2
      - [-1, 1, Conv, [128, 3, 2]] # 1-P2/4
      - [-1, 3, C2f, [128, True]]
      - [-1, 1, Conv, [256, 3, 2]] # 3-P3/8
      - [-1, 6, C2f, [256, True]]
      - [-1, 1, Conv, [512, 3, 2]] # 5-P4/16
      - [-1, 6, C2f, [512, True]]
      - [-1, 1, Conv, [1024, 3, 2]] # 7-P5/32
      - [-1, 3, C2f, [1024, True]]
      - [-1, 1, SPPF, [1024, 5]] # 9
      - [-1, 1, CBAM, []] # 10

    # YOLOv8.0n head
    head:
      - [-1, 1, nn.Upsample, [None, 2, "nearest"]]
      - [[-1, 6], 1, Concat, [1]] # cat backbone P4
      - [-1, 3, C2f, [512]] # 13

      - [-1, 1, nn.Upsample, [None, 2, "nearest"]]
      - [[-1, 4], 1, Concat, [1]] # cat backbone P3
      - [-1, 3, C2f, [256]] # 16 (P3/8-small)

      - [-1, 1, Conv, [256, 3, 2]]
      - [[-1, 13], 1, Concat, [1]] # cat head P4
      - [-1, 3, C2f, [512]] # 19 (P4/16-medium)

      - [-1, 1, Conv, [512, 3, 2]]
      - [[-1, 10], 1, Concat, [1]] # cat head P5
      - [-1, 3, C2f, [1024]] # 22 (P5/32-large)

      - [[16, 19, 22], 1, Detect, [nc]] # Detect(P3, P4, P5)
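
Non-negative `from` values in the head are absolute layer indices, so inserting CBAM at index 10 shifts every later layer by one compared with the stock YOLOv8 file. A quick way to double-check the references is to list the rows in order and resolve each multi-input `from` field. The sketch below is plain Python with the rows copied by hand from the YAML above; the helper `absolute_sources` is a hypothetical name, not part of Ultralytics.

```python
# (from, module) pairs in file order; list position == layer index.
layers = [
    (-1, "Conv"), (-1, "Conv"), (-1, "C2f"), (-1, "Conv"), (-1, "C2f"),   # 0-4
    (-1, "Conv"), (-1, "C2f"), (-1, "Conv"), (-1, "C2f"), (-1, "SPPF"),   # 5-9
    (-1, "CBAM"),                                                         # 10
    (-1, "nn.Upsample"), ([-1, 6], "Concat"), (-1, "C2f"),                # 11-13
    (-1, "nn.Upsample"), ([-1, 4], "Concat"), (-1, "C2f"),                # 14-16
    (-1, "Conv"), ([-1, 13], "Concat"), (-1, "C2f"),                      # 17-19
    (-1, "Conv"), ([-1, 10], "Concat"), (-1, "C2f"),                      # 20-22
    ([16, 19, 22], "Detect"),                                             # 23
]


def absolute_sources(index, frm):
    """Resolve a 'from' field to absolute indices (negative values are relative)."""
    refs = frm if isinstance(frm, list) else [frm]
    return [index + r if r < 0 else r for r in refs]


# Print every multi-input layer together with the modules it reads from.
for i, (frm, name) in enumerate(layers):
    if isinstance(frm, list):
        sources = absolute_sources(i, frm)
        print(i, name, [(s, layers[s][1]) for s in sources])
```

The P5 `Concat` should read from the CBAM output (layer 10), and `Detect` should read from the three `C2f` outputs at 16, 19, and 22.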

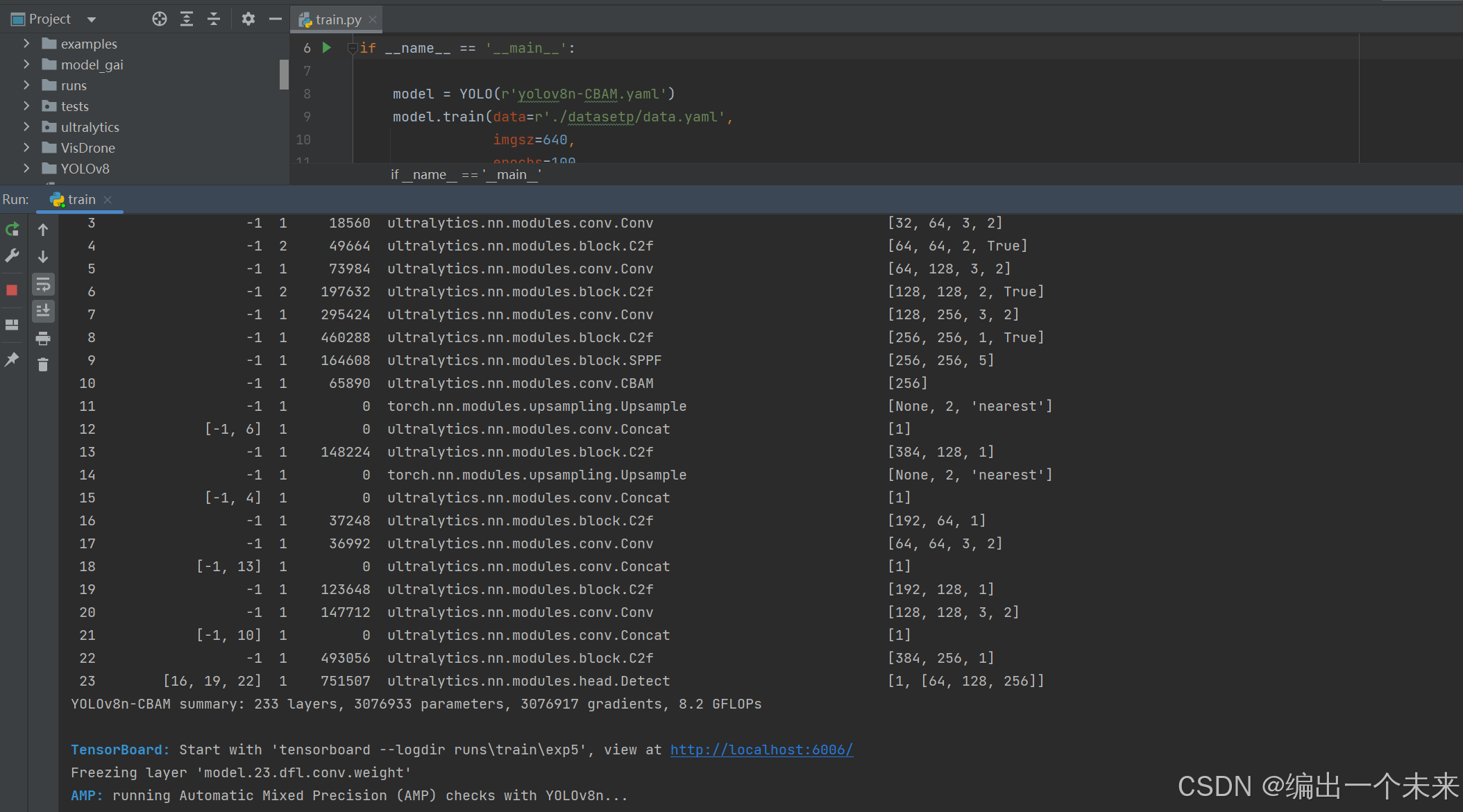

6 Then run the training code as usual.
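
For reference, a minimal training script might look like the sketch below. It assumes the YAML from step 5 was saved as `yolov8-cbam.yaml` (a hypothetical filename) and uses the small `coco8.yaml` sample dataset; the epoch count and image size are placeholder values, not settings from this post.

```python
def train_cbam(cfg="yolov8-cbam.yaml", data="coco8.yaml", epochs=100, imgsz=640):
    """Build the model from the custom YAML and start training.

    cfg is an assumed filename for the YAML written in step 5;
    data, epochs, and imgsz are placeholder values.
    """
    # Imported lazily: requires the modified ultralytics package from steps 1-4.
    from ultralytics import YOLO

    model = YOLO(cfg)  # build the network from the YAML definition
    return model.train(data=data, epochs=epochs, imgsz=imgsz)
```

Calling `train_cbam()` starts training. The model summary printed at startup should list a `CBAM` row at index 10, which is a quick way to confirm the module was registered.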
