Hadoop

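- Hadoop implements a distributed file system, the Hadoop Distributed File System (HDFS). HDFS is highly fault tolerant and is designed to run on low-cost hardware. It provides high-throughput access to application data, which suits applications with very large data sets. HDFS relaxes some POSIX requirements so that file system data can be read as a stream (streaming access).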

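How Hadoop works

- HDFS main modules: NameNode, DataNode

- YARN main modules: ResourceManager, NodeManager

HDFS main modules and how they work:

1) NameNode:

- Function: the management node of the whole file system. It maintains the directory tree, the metadata of every file and directory, and the list of data blocks that make up each file. It also receives client requests.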

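2) DataNode:

- Function: stores the actual data blocks of files on local disk and serves read and write requests for them.

3) SecondaryNameNode:

- Function: keeps a checkpoint copy of the NameNode metadata as a backup image. It is sometimes described as an HA (high availability) solution, but it does not provide hot standby.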
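
To make "the list of data blocks for each file" a bit more concrete, here is a rough arithmetic sketch. It assumes the default HDFS block size of 128 MB (the `dfs.blocksize` default in Hadoop 2.x and 3.x) and a made-up 300 MB file. The helper names `block_count` and `raw_storage_mb` are illustrative only and are not Hadoop commands.

```shell
# Number of HDFS blocks needed for a file: ceil(file_size / block_size).
# Sizes are in MB; block_count is a hypothetical helper, not a Hadoop command.
block_count() {
    file_mb=$1
    block_mb=${2:-128}   # assumed default dfs.blocksize of 128 MB
    echo $(( (file_mb + block_mb - 1) / block_mb ))
}

# Raw disk consumed across the cluster: file size times replication factor.
raw_storage_mb() {
    file_mb=$1
    replication=$2
    echo $(( file_mb * replication ))
}

block_count 300          # a 300 MB file is split into 3 blocks (128 + 128 + 44)
raw_storage_mb 300 2     # with dfs.replication=2 it occupies 600 MB of raw disk
```

The NameNode only tracks which blocks belong to which file and where they live; the DataNodes hold the block contents.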

I. Hadoop standalone test

1. Create the user and set a password

[root@server1 ~]# useradd -u 1000 hadoop

[root@server1 ~]# passwd hadoop

2. Install and configure Hadoop

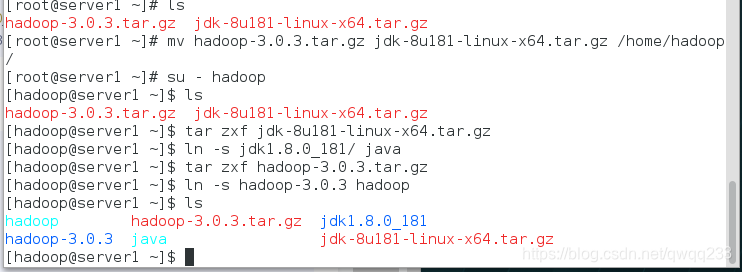

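Move the archives into the hadoop user's home directory, then switch to the hadoop user and unpack them. Hadoop is installed under the hadoop account.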

[root@server1 ~]# mv hadoop-3.0.3.tar.gz jdk-8u181-linux-x64.tar.gz /home/hadoop

[root@server1 ~]# su - hadoop

[hadoop@server1 ~]$ ls

hadoop-3.0.3.tar.gz jdk-8u181-linux-x64.tar.gz

[hadoop@server1 ~]$ tar zxf jdk-8u181-linux-x64.tar.gz

[hadoop@server1 ~]$ ln -s jdk1.8.0_181/ java

[hadoop@server1 ~]$ tar zxf hadoop-3.0.3.tar.gz

[hadoop@server1 ~]$ ln -s hadoop-3.0.3 hadoop

[hadoop@server1 ~]$ ls

hadoop hadoop-3.0.3.tar.gz jdk1.8.0_181

hadoop-3.0.3 java jdk-8u181-linux-x64.tar.gz

3. Configure environment variables

[hadoop@server1 hadoop]$ pwd

/home/hadoop/hadoop/etc/hadoop

[hadoop@server1 hadoop]$ vim hadoop-env.sh

export JAVA_HOME=/home/hadoop/java

[hadoop@server1 ~]$ vim .bash_profile

PATH=$PATH:$HOME/.local/bin:$HOME/bin:$HOME/java/bin

[hadoop@server1 ~]$ source .bash_profile

[hadoop@server1 ~]$ jps ## runs once the PATH is configured correctly

2518 Jps

4. Test

[hadoop@server1 hadoop]$ pwd

/home/hadoop/hadoop

[hadoop@server1 hadoop]$ mkdir input

[hadoop@server1 hadoop]$ cp etc/hadoop/*.xml input/

[hadoop@server1 hadoop]$ bin/hadoop jar share/hadoop/mapreduce/hadoop-mapreduce-examples-3.0.3.jar grep input output 'dfs[a-z.]+'

[hadoop@server1 hadoop]$ cd output/

[hadoop@server1 output]$ ls

part-r-00000 _SUCCESS

[hadoop@server1 output]$ cat *

1 dfsadmin
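
The `grep` example counts how often each string matching the regular expression appears in the input files. A rough local approximation with ordinary Unix tools might look like the sketch below. The sample file content is invented for illustration (it is not taken from the Hadoop configuration), and `count_matches` is a hypothetical helper name.

```shell
# Local approximation of the MapReduce grep example, using ordinary Unix tools.
# The sample file below is invented for illustration only.
workdir=$(mktemp -d)
printf '%s\n' \
    '<name>dfs.replication</name>' \
    '<description>see dfsadmin and dfs.replication</description>' \
    > "$workdir/sample.xml"

count_matches() {
    # -o prints each match on its own line, -h hides file names, -E enables '+'
    grep -ohE 'dfs[a-z.]+' "$1"/*.xml |
        sort | uniq -c |               # count identical matches
        sort -k1,1nr -k2,2 |           # highest count first, then alphabetical
        awk '{print $1 "\t" $2}'       # "count<TAB>match", like part-r-00000
}

count_matches "$workdir"
```

On this sample, `dfs.replication` is counted twice and `dfsadmin` once. The real job does the same counting with a map phase and a reduce phase, which is why the untouched default configuration files yield only the single `dfsadmin` match.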

II. Pseudo-distributed mode

1. Edit the configuration files

[hadoop@server1 hadoop]$ pwd

/home/hadoop/hadoop/etc/hadoop

[hadoop@server1 hadoop]$ vim core-site.xml

<configuration>

<property>

<name>fs.defaultFS</name>

<value>hdfs://localhost:9000</value>

</property>

</configuration>

[hadoop@server1 hadoop]$ vim hdfs-site.xml

<configuration>

<property>

<name>dfs.replication</name>

<value>1</value> <!-- single node: this host is the only DataNode, so keep one replica -->

</property>

</configuration>
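
If you would rather script these two edits than open vim, something like the following could work. It is only a sketch: `write_site_configs` and `CONF_DIR` are names made up here, and the demo writes into a temporary directory so it cannot overwrite a real installation. Review the generated files before copying them into `etc/hadoop`.

```shell
# Write minimal core-site.xml and hdfs-site.xml for a pseudo-distributed setup.
# write_site_configs and CONF_DIR are hypothetical names used for this sketch.
write_site_configs() {
    conf_dir=$1       # target directory, e.g. a copy of etc/hadoop
    namenode=$2       # host:port used in fs.defaultFS
    replication=$3    # value for dfs.replication

    cat > "$conf_dir/core-site.xml" <<EOF
<configuration>
    <property>
        <name>fs.defaultFS</name>
        <value>hdfs://$namenode</value>
    </property>
</configuration>
EOF

    cat > "$conf_dir/hdfs-site.xml" <<EOF
<configuration>
    <property>
        <name>dfs.replication</name>
        <value>$replication</value>
    </property>
</configuration>
EOF
}

CONF_DIR=$(mktemp -d)
write_site_configs "$CONF_DIR" localhost:9000 1
cat "$CONF_DIR/core-site.xml"
```

Passing the NameNode address and replication factor as arguments means the same helper could be reused later with a different address and a higher replication value.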

2. Generate an SSH key for passwordless login

[hadoop@server1 hadoop]$ ssh-keygen

[hadoop@server1 hadoop]$ ssh-copy-id localhost

[hadoop@server1 hadoop]$ ssh localhost ## verify that no password is requested

[hadoop@server1 ~]$ exit

logout

Connection to localhost closed.

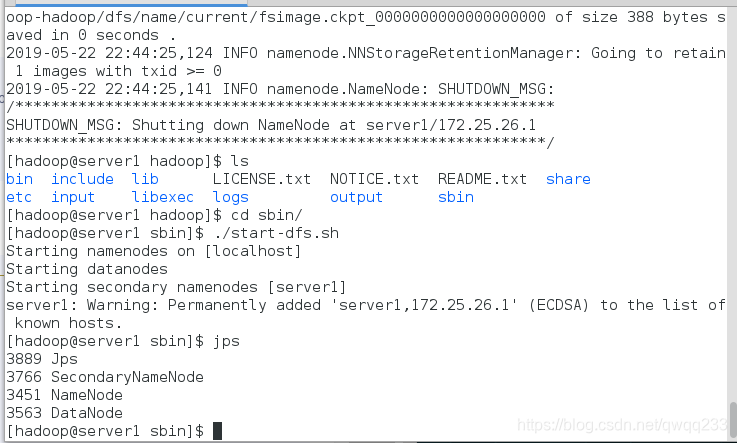

3. Format the NameNode and start the services

[hadoop@server1 hadoop]$ bin/hdfs namenode -format

[hadoop@server1 hadoop]$ pwd

/home/hadoop/hadoop

[hadoop@server1 hadoop]$ cd sbin/

[hadoop@server1 sbin]$ ./start-dfs.sh

[hadoop@server1 sbin]$ jps

3889 Jps

3766 SecondaryNameNode

3451 NameNode

3563 DataNode
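
Reading the `jps` listing by eye is fine for one host, but a small check can be handy when you repeat the setup. The sketch below assumes the usual `PID Name` output format shown above. `missing_daemons` is a hypothetical helper, not something shipped with Hadoop or the JDK.

```shell
# Report which expected daemons are absent from `jps`-style output.
# missing_daemons is a hypothetical helper; it reads "PID Name" lines on stdin.
missing_daemons() {
    jps_output=$(cat)
    for daemon in "$@"; do
        # Compare the second column exactly, so that "NameNode" does not
        # accidentally match "SecondaryNameNode".
        if ! printf '%s\n' "$jps_output" |
            awk -v d="$daemon" '$2 == d {found=1} END {exit !found}'; then
            echo "$daemon"
        fi
    done
}

# On a live node you would pipe the real command in:
#   jps | missing_daemons NameNode DataNode SecondaryNameNode
# Here the listing from the step above is replayed instead.
printf '%s\n' '3889 Jps' '3766 SecondaryNameNode' '3451 NameNode' '3563 DataNode' |
    missing_daemons NameNode DataNode SecondaryNameNode
```

With all three daemons present the helper prints nothing. Any name it does print is a daemon that failed to start, and the files under `logs/` are the place to look next.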

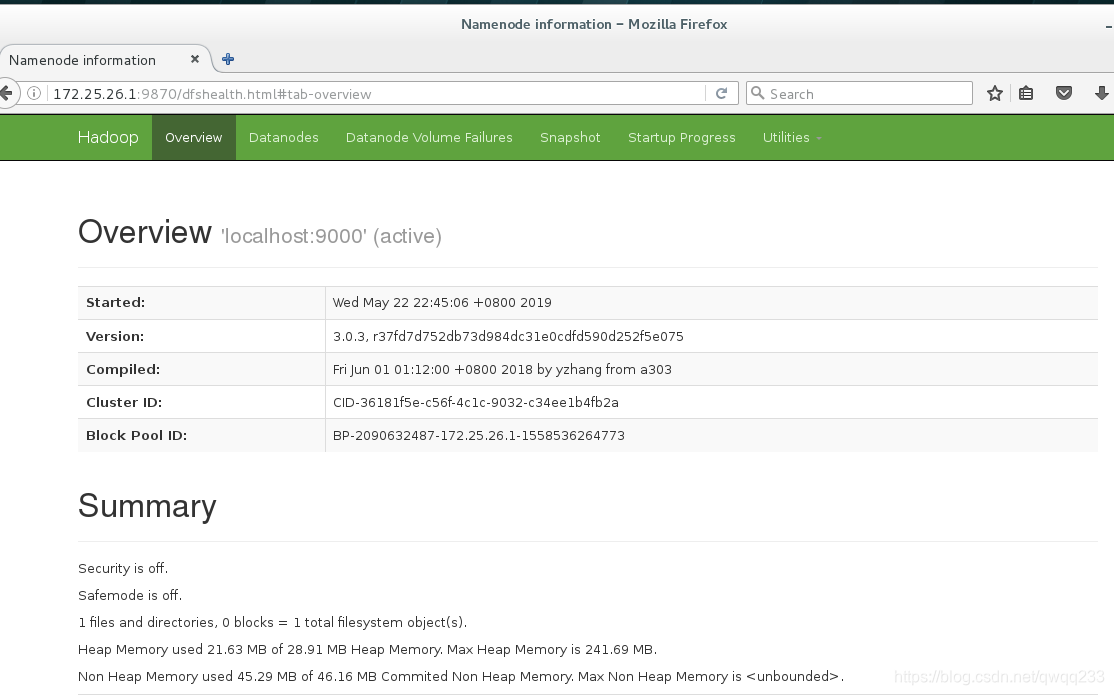

4. View the web UI in a browser at http://172.25.26.1:9870

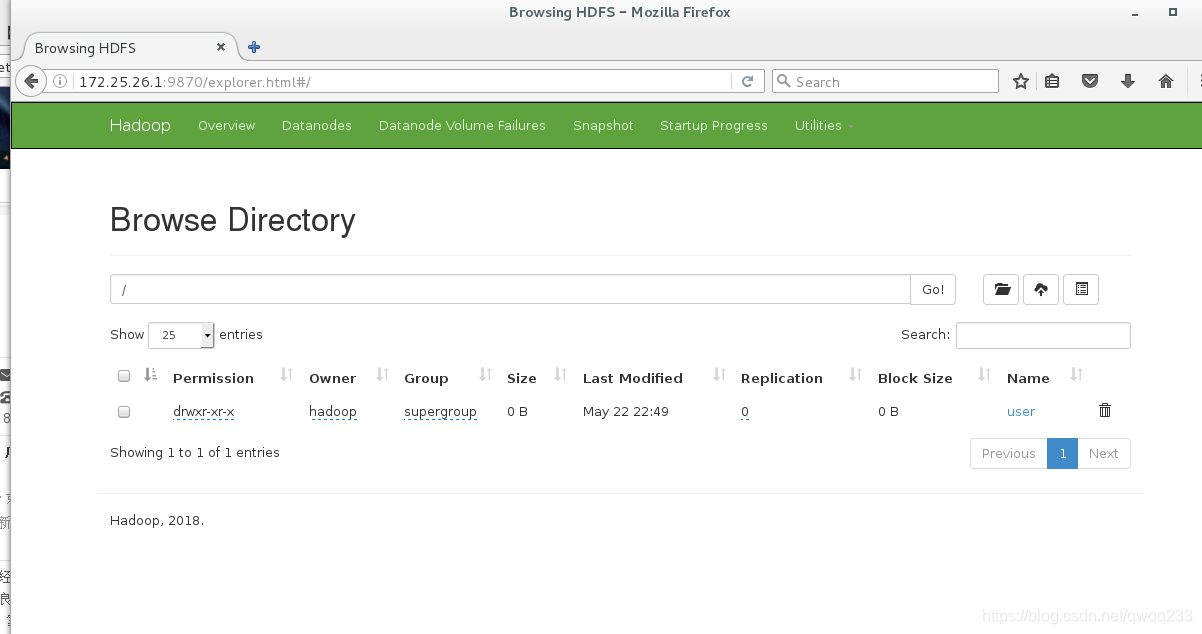

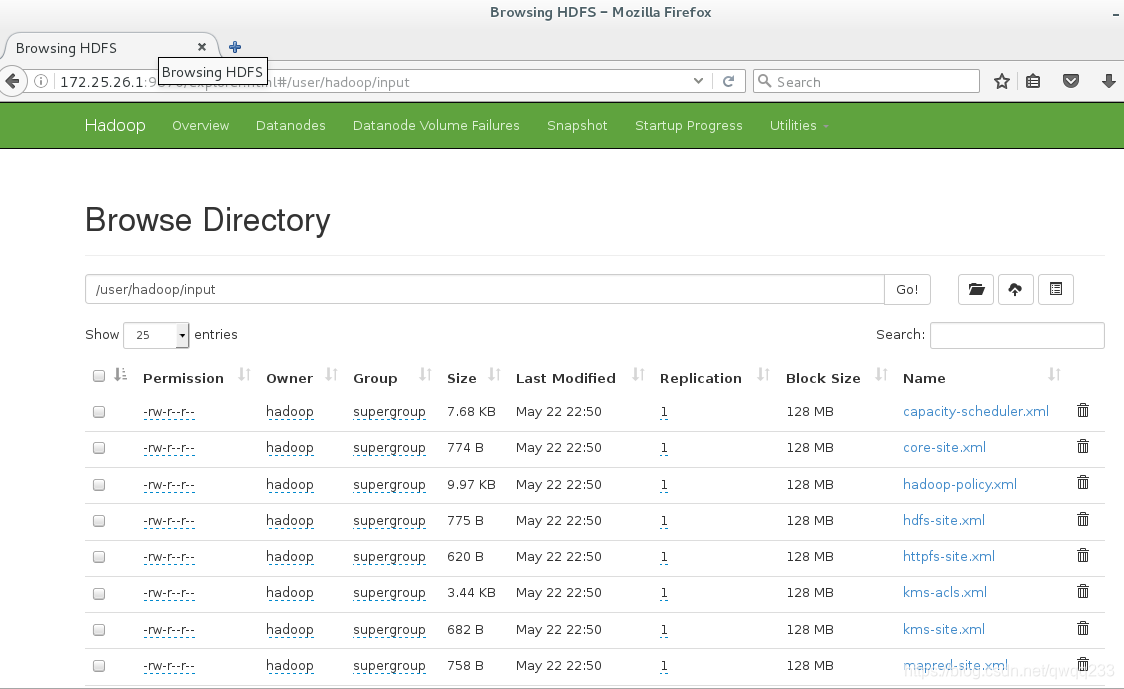

5. Test: create a directory and upload files

[hadoop@server1 hadoop]$ pwd

/home/hadoop/hadoop

[hadoop@server1 hadoop]$ bin/hdfs dfs -mkdir -p /user/hadoop

[hadoop@server1 hadoop]$ bin/hdfs dfs -ls

[hadoop@server1 hadoop]$

[hadoop@server1 hadoop]$ bin/hdfs dfs -put input

[hadoop@server1 hadoop]$ bin/hdfs dfs -ls

Found 1 items

drwxr-xr-x - hadoop supergroup 0 2019-05-22 22:50 input
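
The reason `/user/hadoop` has to exist first is that HDFS resolves relative paths such as `input` against the user's home directory, which by default is `/user/<username>`. The sketch below mimics that rule locally. `resolve_hdfs_path` is a hypothetical helper written for this post, not part of the `hdfs` CLI.

```shell
# Mimic how HDFS resolves a relative path against the user's home directory.
# resolve_hdfs_path is a hypothetical helper, not part of the hdfs CLI.
resolve_hdfs_path() {
    path=$1
    user=${2:-$(id -un)}                    # defaults to the current login name
    case $path in
        /*) echo "$path" ;;                 # absolute paths are used as-is
        *)  echo "/user/$user/$path" ;;     # relative paths live under /user/<name>
    esac
}

resolve_hdfs_path input hadoop       # /user/hadoop/input
resolve_hdfs_path /tmp/data hadoop   # /tmp/data
```

So `bin/hdfs dfs -put input` run as the hadoop user ends up at `/user/hadoop/input`, and a plain `bin/hdfs dfs -ls` lists `/user/hadoop`.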

Delete the local input and output directories, then run the job again

[hadoop@server1 hadoop]$ rm -fr input/ output/

[hadoop@server1 hadoop]$ bin/hadoop jar share/hadoop/mapreduce/hadoop-mapreduce-examples-3.0.3.jar grep input output 'dfs[a-z.]+'

[hadoop@server1 hadoop]$ ls

bin etc include lib libexec LICENSE.txt logs NOTICE.txt README.txt sbin share

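**This time input and output do not appear in the current directory. The job reads from and writes to the distributed file system, and both directories are visible in the web UI.**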

[hadoop@server1 hadoop]$ bin/hdfs dfs -cat output/*

1 dfsadmin

[hadoop@server1 hadoop]$ bin/hdfs dfs -get output ## download the output directory from HDFS

[hadoop@server1 hadoop]$ cd output/

[hadoop@server1 output]$ ls

part-r-00000 _SUCCESS

[hadoop@server1 output]$ cat *

1 dfsadmin

III. Fully distributed mode

1. Stop the services and clear the old data

[hadoop@server1 hadoop]$ sbin/stop-dfs.sh

[hadoop@server1 hadoop]$ jps

13867 Jps

[hadoop@server1 ~]$ cd /tmp

[hadoop@server1 tmp]$ ls

hadoop hadoop-hadoop hsperfdata_hadoop

[hadoop@server1 tmp]$ rm -fr *

2. Start two more virtual machines to act as nodes

## Create the user

[root@server2 ~]# useradd -u 1000 hadoop

[root@server3 ~]# useradd -u 1000 hadoop

## Install nfs-utils

[root@server1 ~]# yum install -y nfs-utils

[root@server2 ~]# yum install -y nfs-utils

[root@server3 ~]# yum install -y nfs-utils

[root@server1 ~]# systemctl start rpcbind

[root@server2 ~]# systemctl start rpcbind

[root@server3 ~]# systemctl start rpcbind

3. Start the NFS service on server1 and configure the export

[root@server1 ~]# systemctl start nfs-server

[root@server1 ~]# vim /etc/exports

/home/hadoop *(rw,anonuid=1000,anongid=1000)

[root@server1 ~]# exportfs -rv

exporting *:/home/hadoop

[root@server1 ~]# showmount -e

Export list for server1:

/home/hadoop *

4. Mount the export on server2 and server3

[root@server2 ~]# mount 172.25.26.1:/home/hadoop /home/hadoop

[root@server2 ~]# df

Filesystem 1K-blocks Used Available Use% Mounted on

/dev/mapper/rhel-root 17811456 1097752 16713704 7% /

devtmpfs 497292 0 497292 0% /dev

tmpfs 508264 0 508264 0% /dev/shm

tmpfs 508264 13128 495136 3% /run

tmpfs 508264 0 508264 0% /sys/fs/cgroup

/dev/sda1 1038336 141508 896828 14% /boot

tmpfs 101656 0 101656 0% /run/user/0

172.25.26.1:/home/hadoop 17811456 2797184 15014272 16% /home/hadoop

[root@server3 ~]# mount 172.25.26.1:/home/hadoop /home/hadoop

5. Passwordless login now works between the nodes, because the NFS-mounted home directory shares the same ~/.ssh keys

[root@server1 ~]# su - hadoop

Last login: Sat Apr 6 10:12:17 CST 2019 from localhost on pts/1

[hadoop@server1 ~]$ ssh 172.25.26.2

[hadoop@server2 ~]$ logout

Connection to 172.25.26.2 closed.

[hadoop@server1 ~]$ ssh 172.25.26.3

[hadoop@server3 ~]$ logout

Connection to 172.25.26.3 closed.

6. Edit the configuration files again

[hadoop@server1 hadoop]$ pwd

/home/hadoop/hadoop/etc/hadoop

[hadoop@server1 hadoop]$ vim core-site.xml

<configuration>

<property>

<name>fs.defaultFS</name>

<value>hdfs://172.25.26.1:9000</value>

</property>

</configuration>

[hadoop@server1 hadoop]$ vim hdfs-site.xml

<configuration>

<property>

<name>dfs.replication</name>

<value>2</value> <!-- two DataNodes now, so keep two replicas -->

</property>

</configuration>

[hadoop@server1 hadoop]$ vim workers

[hadoop@server1 hadoop]$ cat workers

172.25.26.2

172.25.26.3

## Edit the file in one place and every node sees it, since the directory is shared over NFS

[root@server2 ~]# su - hadoop

Last login: Sat Apr 6 11:32:41 CST 2019 from server1 on pts/1

[hadoop@server2 ~]$ cd hadoop/etc/hadoop/

[hadoop@server2 hadoop]$ cat workers

172.25.26.2

172.25.26.3

[root@server3 ~]# su - hadoop

Last login: Sat Apr 6 11:32:41 CST 2019 from server1 on pts/1

[hadoop@server3 ~]$ cd hadoop/etc/hadoop/

[hadoop@server3 hadoop]$ cat workers

172.25.26.2

172.25.26.3
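
The replication factor and the workers file need to agree: if `dfs.replication` is larger than the number of DataNodes, HDFS has nowhere to put the extra copies and blocks stay under-replicated. A quick sanity check could look like this sketch. `check_replication` is a hypothetical helper, and the demo uses a temporary copy of the workers list rather than the real file.

```shell
# Compare dfs.replication with the number of hosts listed in a workers file.
# check_replication is a hypothetical helper written for this sketch.
check_replication() {
    workers_file=$1
    replication=$2
    # Count lines that contain at least one non-blank character.
    workers=$(grep -c '[^[:space:]]' "$workers_file")
    if [ "$replication" -gt "$workers" ]; then
        echo "replication $replication exceeds $workers DataNode(s)"
        return 1
    fi
    echo "replication $replication fits $workers DataNode(s)"
}

demo_dir=$(mktemp -d)
printf '%s\n' 172.25.26.2 172.25.26.3 > "$demo_dir/workers"
check_replication "$demo_dir/workers" 2
```

Against the real installation the first argument would be `etc/hadoop/workers`. The non-zero return status makes the helper usable in an `if` or before `start-dfs.sh` in a wrapper script.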

7. Format the NameNode and start the services

[hadoop@server1 hadoop]$ bin/hdfs namenode -format

[hadoop@server1 hadoop]$ sbin/start-dfs.sh

Starting namenodes on [server1]

Starting datanodes

Starting secondary namenodes [server1]

[hadoop@server1 hadoop]$ jps

14085 NameNode

14424 Jps

14303 SecondaryNameNode ## SecondaryNameNode runs on server1

## The DataNode process is visible on the worker nodes

[hadoop@server2 ~]$ jps

11959 DataNode

12046 Jps

[hadoop@server3 ~]$ jps

2616 DataNode

2702 Jps

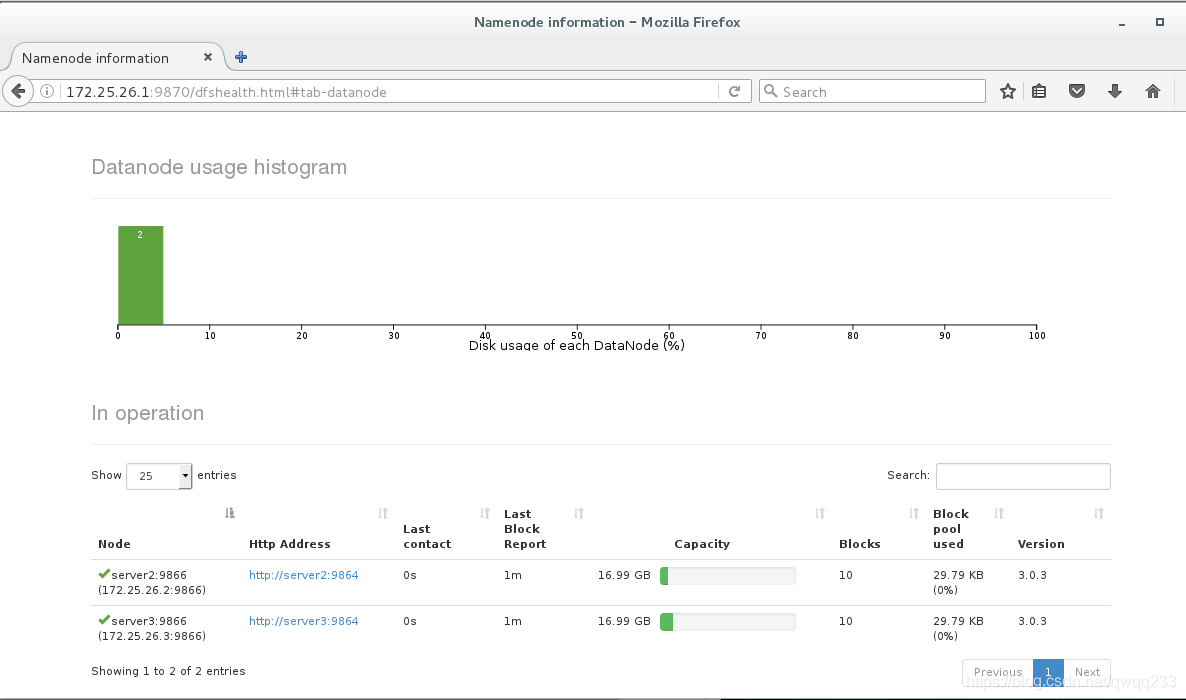

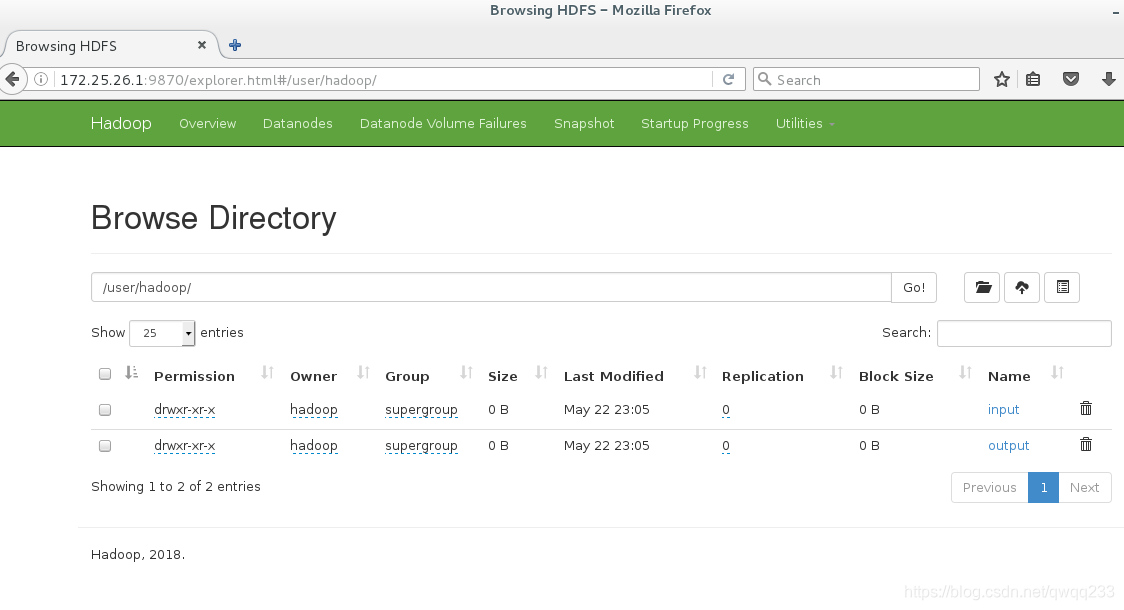

8. Test

[hadoop@server1 hadoop]$ bin/hdfs dfs -mkdir -p /user/hadoop

[hadoop@server1 hadoop]$ bin/hdfs dfs -mkdir input

[hadoop@server1 hadoop]$ bin/hdfs dfs -put etc/hadoop/*.xml input

[hadoop@server1 hadoop]$ bin/hadoop jar share/hadoop/mapreduce/hadoop-mapreduce-examples-3.0.3.jar grep input output 'dfs[a-z.]+'

The web UI now shows two nodes, and the data has been uploaded

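This article covers the Hadoop standalone test, pseudo-distributed mode, and a fully distributed deployment: creating the user, installing and configuring Hadoop, and setting up each node, along with the roles of NameNode and DataNode and the main modules of HDFS and YARN. The end result is a multi-node Hadoop cluster on which a test job runs successfully.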
