Producer command-line operations

1. View the producer command's parameters

bin/kafka-console-producer.sh

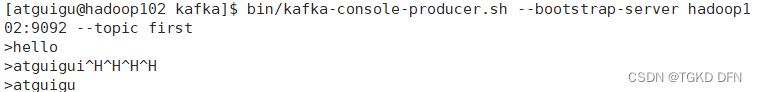

2. Send messages

bin/kafka-console-producer.sh --bootstrap-server hadoop102:9092 --topic first

Consumer command-line operations

1. View the consumer command's parameters

bin/kafka-console-consumer.sh

2. Consume messages

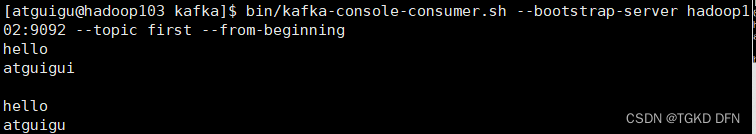

2.1 Consume data from the first topic

bin/kafka-console-consumer.sh --bootstrap-server hadoop102:9092 --topic first

2.2 Read all the data in the topic (including historical data)

bin/kafka-console-consumer.sh --bootstrap-server hadoop102:9092 --from-beginning --topic first

Asynchronous send API

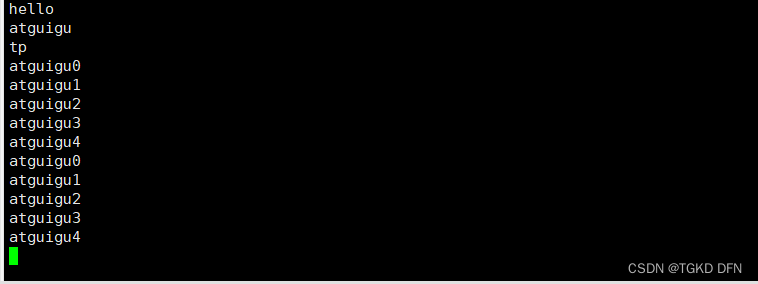

1. Plain asynchronous send API

package com.atguigu.kafka.producer;

import org.apache.kafka.clients.producer.KafkaProducer;

import org.apache.kafka.clients.producer.ProducerConfig;

import org.apache.kafka.clients.producer.ProducerRecord;

import org.apache.kafka.common.serialization.StringSerializer;

import java.util.Properties;

public class CustomProducer {

public static void main(String[] args) {

// 1. Create the producer configuration
Properties properties = new Properties();

// 2. Broker addresses used to bootstrap the connection to the cluster
properties.put(ProducerConfig.BOOTSTRAP_SERVERS_CONFIG,"hadoop102:9092,hadoop103:9092");

// 3. Serializers for the record key and value
properties.put(ProducerConfig.KEY_SERIALIZER_CLASS_CONFIG, StringSerializer.class.getName());

properties.put(ProducerConfig.VALUE_SERIALIZER_CLASS_CONFIG,StringSerializer.class.getName());

// 4. Create the producer
KafkaProducer<String, String> kafkaProducer = new KafkaProducer<>(properties);

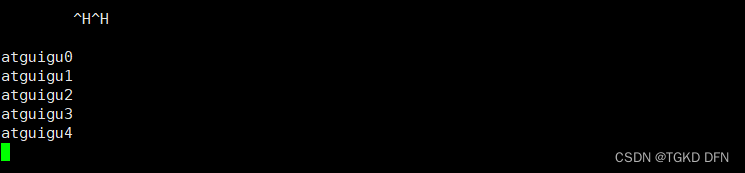

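// 5. Send five records; send() returns without waiting for the broker's acknowledgement
for (int i = 0; i < 5; i++) {

kafkaProducer.send(new ProducerRecord<>("first","atguigu"+i));

}

// 6. Close the producer; this waits for pending sends to complete and releases resources
kafkaProducer.close();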

}

}

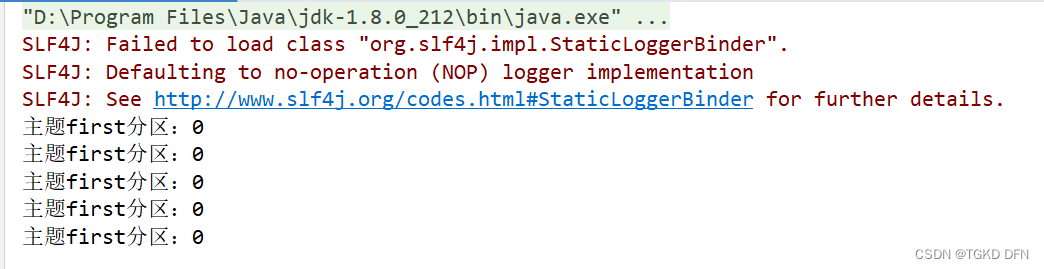
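The producer classes in this section each repeat the same three configuration lines. As a minimal sketch, that setup could be pulled into one helper. The class name `ProducerProps` and the method `forBrokers` are hypothetical, introduced only for illustration. The string keys are the literal values behind the `ProducerConfig` constants used above, so the sketch runs without the Kafka client on the classpath.

```java
import java.util.Properties;

class ProducerProps {
    // Fully qualified name of StringSerializer, i.e. what StringSerializer.class.getName() returns.
    static final String STRING_SERIALIZER =
            "org.apache.kafka.common.serialization.StringSerializer";

    /** Hypothetical helper: builds the minimal producer configuration for a broker list. */
    static Properties forBrokers(String bootstrapServers) {
        Properties properties = new Properties();
        // Literal values of BOOTSTRAP_SERVERS_CONFIG, KEY_SERIALIZER_CLASS_CONFIG
        // and VALUE_SERIALIZER_CLASS_CONFIG in ProducerConfig.
        properties.put("bootstrap.servers", bootstrapServers);
        properties.put("key.serializer", STRING_SERIALIZER);
        properties.put("value.serializer", STRING_SERIALIZER);
        return properties;
    }

    public static void main(String[] args) {
        Properties properties = forBrokers("hadoop102:9092,hadoop103:9092");
        properties.forEach((key, value) -> System.out.println(key + " = " + value));
    }
}
```

The returned `Properties` object could then be passed to `new KafkaProducer<>(...)` exactly as in the class above.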

2. Asynchronous send with a callback

package com.atguigu.kafka.producer;

import org.apache.kafka.clients.producer.*;

import org.apache.kafka.common.serialization.StringSerializer;

import java.util.Properties;

public class CustomProducerCallback {

public static void main(String[] args) {

// 1. Create the producer configuration
Properties properties = new Properties();

// 2. Broker addresses used to bootstrap the connection to the cluster
properties.put(ProducerConfig.BOOTSTRAP_SERVERS_CONFIG,"hadoop102:9092,hadoop103:9092");

// 3. Serializers for the record key and value
properties.put(ProducerConfig.KEY_SERIALIZER_CLASS_CONFIG, StringSerializer.class.getName());

properties.put(ProducerConfig.VALUE_SERIALIZER_CLASS_CONFIG,StringSerializer.class.getName());

// 4. Create the producer
KafkaProducer<String, String> kafkaProducer = new KafkaProducer<>(properties);

for (int i = 0; i < 5; i++) {

kafkaProducer.send(new ProducerRecord<>("first", "atguigu" + i), new Callback() {

@Override

public void onCompletion(RecordMetadata metadata, Exception exception) {

// exception is null when the send succeeded
if(exception == null){

System.out.println("Topic: " + metadata.topic() + ", partition: " + metadata.partition());

}

}

});

}

kafkaProducer.close();

}

}
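In `onCompletion`, the `exception` argument is `null` when the send succeeded, so that check decides which branch runs. The sketch below isolates this branching in a plain method so it can be run without a broker. `CallbackOutcomeSketch`, `Metadata` and `describe` are hypothetical names, and `Metadata` is only a stand-in for Kafka's `RecordMetadata`, reduced to the two fields printed above.

```java
class CallbackOutcomeSketch {
    /** Hypothetical stand-in for RecordMetadata: only topic and partition. */
    static final class Metadata {
        final String topic;
        final int partition;

        Metadata(String topic, int partition) {
            this.topic = topic;
            this.partition = partition;
        }
    }

    /** Mirrors the shape of onCompletion: a null exception means the send succeeded. */
    static String describe(Metadata metadata, Exception exception) {
        if (exception == null) {
            return "sent to topic " + metadata.topic + ", partition " + metadata.partition;
        }
        // On failure, report the cause and do not read the metadata.
        return "send failed: " + exception.getMessage();
    }

    public static void main(String[] args) {
        System.out.println(describe(new Metadata("first", 0), null));
        System.out.println(describe(null, new IllegalStateException("broker unavailable")));
    }
}
```

In a real callback the same two branches would sit inside `onCompletion`, with the failure branch logging or otherwise handling the exception instead of returning a string.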

Synchronous send API

package com.atguigu.kafka.producer;

import org.apache.kafka.clients.producer.KafkaProducer;

import org.apache.kafka.clients.producer.ProducerConfig;

import org.apache.kafka.clients.producer.ProducerRecord;

import org.apache.kafka.common.serialization.StringSerializer;

import java.util.Properties;

import java.util.concurrent.ExecutionException;

public class CustomProducerSync {

public static void main(String[] args) throws ExecutionException, InterruptedException {

// 1. Create the producer configuration
Properties properties = new Properties();

// 2. Broker addresses used to bootstrap the connection to the cluster
properties.put(ProducerConfig.BOOTSTRAP_SERVERS_CONFIG,"hadoop102:9092,hadoop103:9092");

// 3. Serializers for the record key and value
properties.put(ProducerConfig.KEY_SERIALIZER_CLASS_CONFIG, StringSerializer.class.getName());

properties.put(ProducerConfig.VALUE_SERIALIZER_CLASS_CONFIG,StringSerializer.class.getName());

// 4. Create the producer
KafkaProducer<String, String> kafkaProducer = new KafkaProducer<>(properties);

for (int i = 0; i < 5; i++) {

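// get() blocks on the returned Future until this record is acknowledged, which makes the send synchronous
kafkaProducer.send(new ProducerRecord<>("first","atguigu"+i)).get();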

}

kafkaProducer.close();

}

}
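The only difference from the asynchronous version is the `.get()` on the `Future` that `send` returns. The sketch below illustrates that blocking behavior with the standard library alone. `fakeSend` is a hypothetical stand-in for `KafkaProducer.send`, and it acknowledges each record on a background thread instead of talking to a broker.

```java
import java.util.ArrayList;
import java.util.Collections;
import java.util.List;
import java.util.concurrent.CompletableFuture;
import java.util.concurrent.ExecutionException;
import java.util.concurrent.Future;

class SyncSendSketch {
    /** Hypothetical stand-in for KafkaProducer.send: completes on another thread. */
    static Future<String> fakeSend(String value, List<String> log) {
        return CompletableFuture.supplyAsync(() -> {
            log.add("acked " + value);
            return value;
        });
    }

    /** Sends count records one at a time, waiting for each acknowledgement. */
    static List<String> sendSync(int count) throws ExecutionException, InterruptedException {
        List<String> log = Collections.synchronizedList(new ArrayList<>());
        for (int i = 0; i < count; i++) {
            String value = "atguigu" + i;
            log.add("sending " + value);
            // get() blocks until the background task finishes, so the next
            // record is not sent before this one is acknowledged.
            fakeSend(value, log).get();
        }
        return new ArrayList<>(log);
    }

    public static void main(String[] args) throws ExecutionException, InterruptedException {
        sendSync(3).forEach(System.out::println);
    }
}
```

Because every `get()` waits for its own record, each "sending" entry is followed directly by the matching "acked" entry. Dropping the `.get()` call would let the loop finish before any acknowledgement arrives, which is the asynchronous behavior shown earlier.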
