Image Augmentation

%matplotlib inline

import matplotlib

import torch

import torchvision

import torch.nn as nn

import d2l.torch as d2l

d2l.set_figsize((4,5))

img = d2l.Image.open('../3.img/cat1.jpg')

d2l.plt.imshow(img)

def apply(img, aug, num_rows=2, num_cols=4, scale=1.5):
    # Apply the augmentation num_rows * num_cols times and show the results in a grid
    Y = [aug(img) for _ in range(num_rows * num_cols)]
    d2l.show_images(Y, num_rows, num_cols, scale=scale)

- Flipping and cropping

apply(img, torchvision.transforms.RandomHorizontalFlip())

apply(img, torchvision.transforms.RandomVerticalFlip())

shape_aug = torchvision.transforms.RandomResizedCrop((200, 200), scale=(0.1, 1), ratio=(0.5, 2))

apply(img, shape_aug)
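To make the `scale` and `ratio` arguments concrete, here is a minimal pure-Python sketch of how a crop size could be sampled. `scale=(0.1, 1)` bounds the crop area as a fraction of the original image, and `ratio=(0.5, 2)` bounds the width-to-height ratio. The helper `sample_crop_size` is hypothetical and is not a torchvision API. Its fallback is simplified to "use the whole image", and the 400x500 image size is made up for illustration.

```python
import math
import random

def sample_crop_size(height, width, scale=(0.1, 1.0), ratio=(0.5, 2.0),
                     rng=random, max_tries=10):
    """Pick a crop (h, w) whose area fraction lies in `scale` and whose
    width/height ratio lies in `ratio`. Hypothetical helper for illustration."""
    area = height * width
    log_lo, log_hi = math.log(ratio[0]), math.log(ratio[1])
    for _ in range(max_tries):
        target_area = area * rng.uniform(scale[0], scale[1])
        # Sample the aspect ratio (width / height) uniformly in log space
        aspect = math.exp(rng.uniform(log_lo, log_hi))
        w = round(math.sqrt(target_area * aspect))
        h = round(math.sqrt(target_area / aspect))
        if 0 < w <= width and 0 < h <= height:
            return h, w
    # Simplified fallback when no sample fits: keep the whole image
    return height, width

rng = random.Random(0)
for _ in range(3):
    print(sample_crop_size(400, 500, rng=rng))
```

Whatever size is sampled, the crop is then resized to the requested output size, which is (200, 200) in the call above.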

- Changing colors

color_aug = torchvision.transforms.ColorJitter(brightness=0.5, contrast=0.5, saturation=0.5, hue=0.5)

apply(img, color_aug)
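As a rough illustration of what the arguments mean: with `brightness=0.5`, `ColorJitter` draws a brightness factor uniformly from [0.5, 1.5], and likewise for contrast and saturation, while `hue=0.5` draws a hue shift from [-0.5, 0.5]. The sketch below shows only the brightness case on a few 8-bit pixel values. `jitter_range` and `adjust_brightness` are hypothetical helpers, not torchvision functions.

```python
import random

def jitter_range(value, center=1.0):
    """Range a factor is drawn from when brightness/contrast/saturation=value."""
    return (max(0.0, center - value), center + value)

def adjust_brightness(pixels, factor):
    """Scale 8-bit pixel values by `factor`, clipping the result to [0, 255]."""
    return [min(255, max(0, round(p * factor))) for p in pixels]

low, high = jitter_range(0.5)          # (0.5, 1.5)
factor = random.uniform(low, high)     # a new factor is drawn for every call
print(factor, adjust_brightness([0, 100, 200], factor))
```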

- Combining multiple image augmentation methods

augs = torchvision.transforms.Compose([torchvision.transforms.RandomHorizontalFlip(), color_aug, shape_aug])

apply(img, augs)
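Conceptually, `Compose` just calls each transform in order and passes the result on to the next one. The class below is a hypothetical stand-in (`SimpleCompose`), written to show that idea with plain functions. It is not the torchvision implementation.

```python
class SimpleCompose:
    """Hypothetical stand-in that chains callables the way Compose does."""

    def __init__(self, transforms):
        self.transforms = list(transforms)

    def __call__(self, x):
        # Feed the output of each transform into the next one
        for t in self.transforms:
            x = t(x)
        return x

pipeline = SimpleCompose([lambda v: v + 1, lambda v: v * 10])
print(pipeline(2))  # (2 + 1) * 10 = 30
```

Order matters: in the pipeline above, the image is flipped first, then color-jittered, then cropped and resized.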

Model Training

# 1. Download the dataset

all_images = torchvision.datasets.CIFAR10(root='../data/', download=True)

d2l.show_images([all_images[i][0] for i in range(32)], 4, 8, scale=1)

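# 2. Use a ToTensor instance to convert images into the format the deep learning framework requires: 32-bit floating-point values in the range 0 to 1. ToTensor turns each image into a (channels, height, width) tensor, and the DataLoader then stacks them into batches of shape (batch size, channels, height, width).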

train_augs = torchvision.transforms.Compose([torchvision.transforms.RandomHorizontalFlip(),torchvision.transforms.ToTensor()])

test_augs = torchvision.transforms.Compose([torchvision.transforms.ToTensor()])

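# 3. Load the dataset

def load_data(is_train, augs, batch_size, num_workers):
    # train=is_train selects the training or the test split; without it,
    # CIFAR10 defaults to the training split for both iterators
    data_set = torchvision.datasets.CIFAR10(root='../data/', train=is_train, transform=augs, download=True)
    # Shuffle only the training data; drop_last=True discards the final incomplete batch
    data_loader = torch.utils.data.DataLoader(data_set, batch_size=batch_size, shuffle=is_train, num_workers=num_workers, drop_last=True)
    return data_loader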
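The effect of `drop_last=True` on the number of batches is easy to check by hand. The sketch below uses a hypothetical helper, `num_batches`, with the 50,000 images of the CIFAR-10 training split and the batch size of 256 used later in this post.

```python
import math

def num_batches(num_examples, batch_size, drop_last):
    """Number of batches a loader yields per epoch (hypothetical helper)."""
    if drop_last:
        return num_examples // batch_size
    return math.ceil(num_examples / batch_size)

n = 50_000  # size of the CIFAR-10 training split
print(num_batches(n, 256, drop_last=True))   # 195
print(num_batches(n, 256, drop_last=False))  # 196
print(n - 195 * 256)                         # 80 images are skipped each epoch
```

Because the training data is reshuffled every epoch, a different set of 80 images is left out each time.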

# 4. Train the model

def train_batch_ch13(net, X, y, loss, trainer, devices):
    # Train on one minibatch, using multiple GPUs when available
    if isinstance(X, list):
        X = [x.to(devices[0]) for x in X]
    else:
        X = X.to(devices[0])
    y = y.to(devices[0])
    net.train()
    trainer.zero_grad()
    pred = net(X)
    # loss is expected to return one value per example (reduction="none"),
    # so the values are summed before backpropagation
    ls = loss(pred, y)
    ls.sum().backward()
    trainer.step()
    train_loss_sum = ls.sum()
    train_acc_sum = d2l.accuracy(pred, y)
    return train_loss_sum, train_acc_sum
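The training step relies on the loss returning one value per example, which is what `nn.CrossEntropyLoss(reduction="none")` does later in this post. As a minimal pure-Python sketch of that behavior, the hypothetical helper below computes softmax cross-entropy for each example and then the sum and mean. The logits and labels are made up.

```python
import math

def cross_entropy_per_example(logits, labels):
    """Softmax cross-entropy for each (row of logits, label) pair."""
    losses = []
    for row, y in zip(logits, labels):
        m = max(row)  # subtract the max for numerical stability
        log_sum_exp = m + math.log(sum(math.exp(v - m) for v in row))
        losses.append(log_sum_exp - row[y])
    return losses

logits = [[2.0, 0.0], [0.0, 0.0]]
labels = [0, 1]
per_example = cross_entropy_per_example(logits, labels)
loss_sum = sum(per_example)
loss_mean = loss_sum / len(per_example)
print(per_example, loss_sum, loss_mean)
```

Backpropagating the sum gives a gradient that is the batch size times the gradient of the mean, so the two choices differ only by a constant scale.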

def train_ch13(net, train_iter, test_iter, loss, trainer, num_epochs, devices):
    # len(train_iter) is the number of batches per epoch, not the batch size
    timer, num_batches = d2l.Timer(), len(train_iter)
    animator = d2l.Animator(xlabel='epoch', xlim=[1, num_epochs], ylim=[0, 1],
                            legend=['train_loss', 'train_acc', 'test_acc'])
    net = nn.DataParallel(net, device_ids=devices).to(devices[0])
    for epoch in range(num_epochs):
        # Four accumulators: sum of training loss, number of correct predictions,
        # number of examples, number of labels
        metric = d2l.Accumulator(4)
        for i, (features, labels) in enumerate(train_iter):
            timer.start()
            l, acc = train_batch_ch13(net, features, labels, loss, trainer, devices)
            metric.add(l, acc, labels.shape[0], labels.numel())
            timer.stop()
            # Plot the running training metrics about five times per epoch
            if (i + 1) % (num_batches // 5) == 0 or i == num_batches - 1:
                animator.add(epoch + (i + 1) / num_batches,
                             (metric[0] / metric[2], metric[1] / metric[3], None))
        test_acc = d2l.evaluate_accuracy_gpu(net, test_iter)
        animator.add(epoch + 1, (None, None, test_acc))
    print(f'loss {metric[0] / metric[2]:.3f}, train acc '
          f'{metric[1] / metric[3]:.3f}, test acc {test_acc:.3f}')
    print(f'{metric[2] * num_epochs / timer.sum():.1f} examples/sec on '
          f'{str(devices)}')
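The running averages in the loop come from dividing accumulated sums. The class below is a hypothetical, simplified stand-in for `d2l.Accumulator` that shows the bookkeeping. The numbers for the two batches are made up.

```python
class SimpleAccumulator:
    """Hypothetical, simplified stand-in for d2l.Accumulator."""

    def __init__(self, n):
        self.data = [0.0] * n

    def add(self, *args):
        # Add each new value to the running total in the same position
        self.data = [a + float(b) for a, b in zip(self.data, args)]

    def __getitem__(self, idx):
        return self.data[idx]

metric = SimpleAccumulator(4)
# (loss sum, correct predictions, examples, labels) for two made-up batches
metric.add(150.0, 64, 128, 128)
metric.add(90.0, 96, 128, 128)
avg_loss = metric[0] / metric[2]    # 240 / 256
train_acc = metric[1] / metric[3]   # 160 / 256
print(avg_loss, train_acc)
```

For single-label classification, the third and fourth slots hold the same count, so either could serve as the denominator.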

batch_size, devices, net = 256, d2l.try_all_gpus(), d2l.resnet18(10, 3)

def init_weights(m):
    # Xavier-initialize the weights of linear and convolutional layers
    if type(m) in [nn.Linear, nn.Conv2d]:
        nn.init.xavier_uniform_(m.weight)

net.apply(init_weights)
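Xavier uniform initialization draws weights from U(-a, a) with a = gain * sqrt(6 / (fan_in + fan_out)). The sketch below computes that bound with a hypothetical helper, `xavier_uniform_bound`. The layer sizes are examples chosen for illustration, not values read from the network above.

```python
import math

def xavier_uniform_bound(fan_in, fan_out, gain=1.0):
    """Bound a of the uniform distribution U(-a, a) used by Xavier init."""
    return gain * math.sqrt(6.0 / (fan_in + fan_out))

# Example: a linear layer with 512 inputs and 10 outputs
print(round(xavier_uniform_bound(512, 10), 4))

# Example: a 3x3 convolution from 3 to 64 channels, where
# fan_in = in_channels * 3 * 3 and fan_out = out_channels * 3 * 3
print(round(xavier_uniform_bound(3 * 9, 64 * 9), 4))
```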

def train_with_data_aug(train_augs, test_augs, net, lr=0.001):
    train_iter = load_data(True, train_augs, batch_size, 0)
    test_iter = load_data(False, test_augs, batch_size, 0)
    # reduction="none" keeps one loss value per example
    loss = nn.CrossEntropyLoss(reduction="none")
    trainer = torch.optim.Adam(net.parameters(), lr=lr)
    train_ch13(net, train_iter, test_iter, loss, trainer, 10, devices)

train_with_data_aug(train_augs, test_augs, net)

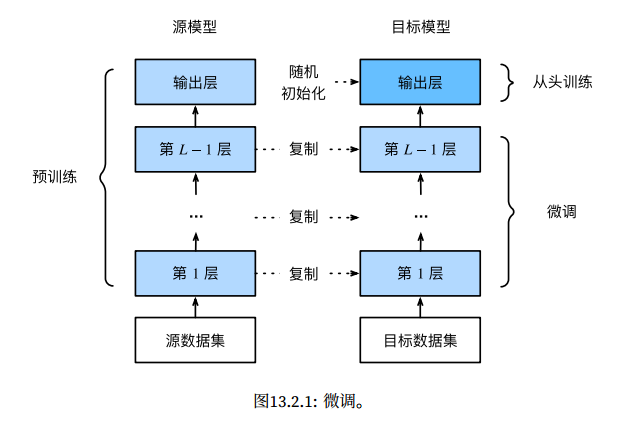

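A Common Transfer Learning Technique: Fine-Tuning

Because the number of training examples is limited, the accuracy of the trained model may not meet practical requirements.

Solution 1: An obvious solution is to collect more data.

Solution 2: Another solution is to apply transfer learning, which transfers the knowledge learned from a source dataset to the target dataset.

Transfer learning steps

- Pretrain a neural network model, the source model, on a source dataset (e.g., ImageNet).

- Create a new neural network model, the target model. It copies the source model's entire design and all of its parameters except the output layer. We assume that these parameters contain the knowledge learned from the source dataset and that this knowledge also applies to the target dataset. We also assume that the source model's output layer is closely tied to the labels of the source dataset, so it is not used in the target model.

- Add an output layer to the target model whose number of outputs equals the number of classes in the target dataset, then randomly initialize that layer's parameters.

- Train the target model on the target dataset (e.g., a chair dataset). The output layer is trained from scratch, while the parameters of all other layers are fine-tuned starting from the source model's parameters.

When the target dataset is much smaller than the source dataset, fine-tuning helps improve the model's generalization ability.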
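The steps above can be sketched in PyTorch as follows. This is a toy illustration under stated assumptions, not a recipe from the original post. The "source model" is a tiny randomly initialized network standing in for a real pretrained one. The layer sizes, `num_target_classes`, `base_lr`, and the choice of a 10x larger learning rate for the new output layer are all made up for the example.

```python
import copy

import torch
from torch import nn

# Stand-in "source model". In practice this would be a network pretrained
# on a large dataset such as ImageNet; the sizes here are made up.
source = nn.Sequential(
    nn.Flatten(),
    nn.Linear(3 * 8 * 8, 32), nn.ReLU(),
    nn.Linear(32, 1000),            # output layer tied to the source labels
)

num_target_classes = 5              # assumption for illustration
base_lr = 5e-5                      # assumption for illustration

# Copy every layer except the output layer, then add a new output layer
# sized for the target dataset and initialize it randomly.
features = copy.deepcopy(source[:-1])
new_head = nn.Linear(32, num_target_classes)
nn.init.xavier_uniform_(new_head.weight)
target = nn.Sequential(features, new_head)

# Fine-tune the copied layers with a small learning rate and train the new
# output layer, which starts from scratch, with a larger one.
trainer = torch.optim.SGD(
    [{'params': features.parameters()},
     {'params': new_head.parameters(), 'lr': 10 * base_lr}],
    lr=base_lr)

# One training step on random stand-in data
X = torch.randn(4, 3, 8, 8)
y = torch.randint(0, num_target_classes, (4,))
loss = nn.CrossEntropyLoss()
trainer.zero_grad()
loss(target(X), y).backward()
trainer.step()
print(target(X).shape)
```

Giving the new output layer its own parameter group keeps the copied layers close to their pretrained values while still letting the randomly initialized layer learn quickly.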
