1. Optimization method
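
The training code in section 2 updates its parameters with mini-batch gradient descent (the `gradient_descent` method). As a sketch of that update, write $\alpha$ for the learning rate, $B$ for the current mini-batch, and $C_x$ for the cost on a single sample $x$. The symbols $B$ and $C_x$ are notation introduced here for illustration and are not names from the code.

$$
W^{l} \leftarrow W^{l} - \frac{\alpha}{|B|} \sum_{x \in B} \frac{\partial C_x}{\partial W^{l}},
\qquad
b^{l} \leftarrow b^{l} - \frac{\alpha}{|B|} \sum_{x \in B} \frac{\partial C_x}{\partial b^{l}}
$$

With a mini-batch size of 1 this reduces to stochastic gradient descent, and with a mini-batch equal to the whole training set it becomes batch gradient descent.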

2. Training and testing the neural network
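
The listing below trains a fully connected sigmoid network with backpropagation. The form of `cost_deviation` suggests a quadratic cost $C_x = \tfrac{1}{2}\lVert a^{L} - y \rVert^{2}$ per sample; that cost is an inference from the code, not something the post states. Under that assumption, and writing $L$ for the output layer, $z^{l}$ for the weighted input and $a^{l} = \sigma(z^{l})$ for the activation of layer $l$, the quantities computed by `cost_deviation` and `backprop` can be sketched as:

$$
\begin{aligned}
\delta^{L} &= (a^{L} - y) \odot \sigma'(z^{L}) \\
\delta^{l} &= \big((W^{l+1})^{\top} \delta^{l+1}\big) \odot \sigma'(z^{l}) \\
\frac{\partial C_x}{\partial W^{l}} &= \delta^{l} (a^{l-1})^{\top},
\qquad
\frac{\partial C_x}{\partial b^{l}} = \delta^{l}
\end{aligned}
$$

Here $\odot$ is the element-wise product and $\sigma'(z) = \sigma(z)\,(1 - \sigma(z))$, which is what `sigmoid_derivative` returns.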

import numpy as np

import random

import warnings

warnings.filterwarnings("ignore", category=Warning)

def load_data():
    # Load the MNIST dataset from a local mnist.npz file
    dataset = np.load('./mnist.npz', allow_pickle=True)
    print(dataset.files)
    # print(len(dataset.files))
    # print(dataset['x_train'])
    x_train = dataset['x_train']
    labels_train = dataset['y_train']
    x_test = dataset['x_test']
    labels_test = dataset['y_test']

    # Split the original training data into a training set and a validation set
    total_num = len(x_train)
    split_valid = 0.2  # fraction of the training data held out for validation
    train_num = int(total_num * (1 - split_valid))

    # Training set
    train_x = x_train[:train_num]
    train_labels = labels_train[:train_num]
    # Validation set
    valid_x = x_train[train_num:]
    valid_labels = labels_train[train_num:]
    # Test set
    test_x = x_test
    test_labels = labels_test
    return (train_x, train_labels, valid_x, valid_labels, test_x, test_labels)

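def load_data_wrapper():
    tr_d, tr_l, va_d, va_l, te_d, te_l = load_data()
    # Each element of tr_d is one image x (784 pixels); it is reshaped to a 784x1 column vector
    # Each element of tr_l is the matching label y, a digit from 0 to 9
    # Note: pixel values are passed through unchanged; no scaling is applied here
    training_inputs = [np.reshape(x, (784, 1)) for x in tr_d]
    training_results = [vectorized_result(y) for y in tr_l]  # one-hot targets for training
    training_data = zip(training_inputs, training_results)
    validation_inputs = [np.reshape(x, (784, 1)) for x in va_d]
    validation_data = zip(validation_inputs, va_l)  # labels stay as plain digits
    test_inputs = [np.reshape(x, (784, 1)) for x in te_d]
    test_data = zip(test_inputs, te_l)  # labels stay as plain digits
    return (training_data, validation_data, test_data)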

def vectorized_result(j):
    """Return a 10x1 one-hot column vector with 1.0 at index j."""
    v = np.zeros((10, 1))
    v[j] = 1.0
    return v

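class Network(object):

    def __init__(self, sizes):
        """Initialize the weights and biases.

        :param sizes: number of neurons in each layer, as a list
        weights: one weight matrix per pair of adjacent layers
        biases: one bias column vector per non-input layer
        """
        self.sizes = sizes
        self.num_layers = len(sizes)
        # dtype=object is needed because the per-layer arrays have different shapes;
        # recent NumPy versions refuse to build such a ragged array without it
        self.weights = np.array([np.random.randn(x, y) for x, y in zip(sizes[1:], sizes[:-1])], dtype=object)
        self.biases = np.array([np.random.randn(y, 1) for y in sizes[1:]], dtype=object)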

    def feedforward(self, a):
        """Run the input a through every layer and return the network output."""
        for w, b in zip(self.weights, self.biases):
            a = sigmoid(np.dot(w, a) + b)
        return a

    def gradient_descent(self, training_data, epochs, mini_batch_size, alpha, test_data=None):
        """Mini-batch gradient descent (MBGD): update the parameters once per mini-batch.

        :param training_data: training samples as (x, y) pairs; a zip object is accepted
        :param epochs: number of passes over the training data
        :param mini_batch_size: number of samples in each mini-batch
        :param alpha: learning rate
        :param test_data: optional test samples as (x, label) pairs
        """
        training_data = list(training_data)
        n = len(training_data)
        # test_data is optional, so only materialize it when it was passed in
        if test_data is not None:
            test_data = list(test_data)
            total_test = len(test_data)
        for i in range(epochs):
            random.shuffle(training_data)
            mini_batches = [training_data[k:k+mini_batch_size] for k in range(0, n, mini_batch_size)]
            for mini_batch in mini_batches:
                # Gradient accumulators; dtype=object because layer shapes differ
                init_ws_derivative = np.array([np.zeros(w.shape) for w in self.weights], dtype=object)
                init_bs_derivative = np.array([np.zeros(b.shape) for b in self.biases], dtype=object)
                for x, y in mini_batch:
                    activations, zs = self.forwardprop(x)  # forward pass
                    delta = self.cost_deviation(activations[-1], zs[-1], y)  # error at the output layer
                    # backward pass: partial derivatives of the cost with respect to w and b
                    ws_derivative, bs_derivative = self.backprop(activations, zs, delta)
                    init_ws_derivative = init_ws_derivative + ws_derivative
                    init_bs_derivative = init_bs_derivative + bs_derivative
                # Step along the gradient averaged over the mini-batch
                self.weights = self.weights - alpha / len(mini_batch) * init_ws_derivative
                self.biases = self.biases - alpha / len(mini_batch) * init_bs_derivative
            if test_data:
                correct = self.evaluate(test_data)  # evaluate once per epoch and reuse the count
                print("Epoch {} : {} / {}".format(i, correct, total_test))  # correct predictions / test set size
                print("accuracy:%.2f%%" % (correct / float(total_test) * 100))
            else:
                print("Epoch {} complete".format(i))

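    def forwardprop(self, x):
        """Forward pass: return the activations and weighted inputs of every layer."""
        activation = x
        activations = [x]  # activations[0] is the input itself
        zs = []            # weighted inputs z = w.a + b, one per non-input layer
        for w, b in zip(self.weights, self.biases):
            z = np.dot(w, activation) + b
            zs.append(z)
            activation = sigmoid(z)
            activations.append(activation)
        return (activations, zs)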

    def cost_deviation(self, output, z, y):
        """Compute the error (delta) at the output layer."""
        return (output - y) * sigmoid_derivative(z)

    def backprop(self, activations, zs, delta):
        """Backward pass: return the gradients of the cost with respect to weights and biases."""
        # dtype=object because the per-layer arrays have different shapes
        ws_derivative = np.array([np.zeros(w.shape) for w in self.weights], dtype=object)
        bs_derivative = np.array([np.zeros(b.shape) for b in self.biases], dtype=object)
        # Output layer
        ws_derivative[-1] = np.dot(delta, activations[-2].transpose())
        bs_derivative[-1] = delta
        # Remaining layers, from the second-to-last back to the first hidden layer
        for l in range(2, self.num_layers):
            z = zs[-l]
            delta = np.dot((self.weights[-l+1]).transpose(), delta) * sigmoid_derivative(z)
            ws_derivative[-l] = np.dot(delta, activations[-l-1].transpose())
            bs_derivative[-l] = delta
        return (ws_derivative, bs_derivative)

    def evaluate(self, test_data):
        """Return the number of samples whose predicted digit matches the label."""
        test_results = [(np.argmax(self.feedforward(x)), y) for (x, y) in test_data]
        return sum(int(output == y) for (output, y) in test_results)

def sigmoid(z):
    """Sigmoid activation function."""
    return 1.0 / (1.0 + np.exp(-z))

def sigmoid_derivative(z):
    """Derivative of the sigmoid function."""
    return sigmoid(z) * (1 - sigmoid(z))

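if __name__ == '__main__':
    training_data, validation_data, test_data = load_data_wrapper()
    net = Network([784, 30, 10])  # 784 = 28*28 input pixels, 30 hidden neurons, 10 output classes
    # 50 epochs, mini-batch size 100, learning rate 0.5
    net.gradient_descent(training_data, 50, 100, 0.5, test_data=test_data)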
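
One way to gain confidence in a hand-written `backprop` like the one above is a gradient check: compare the analytic gradients with central finite differences on a tiny network. The sketch below is self-contained and does not import the listing. It assumes a quadratic cost (inferred from `cost_deviation`), and the helper names `forward`, `quadratic_cost`, `backprop_weight_grads` and `numeric_weight_grads`, as well as the layer sizes `[4, 3, 2]`, are hypothetical and chosen only for illustration.

```python
import numpy as np


def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))


def sigmoid_derivative(z):
    s = sigmoid(z)
    return s * (1.0 - s)


def forward(weights, biases, x):
    """Return all activations and weighted inputs for one sample."""
    activations, zs = [x], []
    for w, b in zip(weights, biases):
        zs.append(np.dot(w, activations[-1]) + b)
        activations.append(sigmoid(zs[-1]))
    return activations, zs


def quadratic_cost(weights, biases, x, y):
    """Assumed cost: half the squared distance between output and target."""
    output = forward(weights, biases, x)[0][-1]
    return 0.5 * float(np.sum((output - y) ** 2))


def backprop_weight_grads(weights, biases, x, y):
    """Analytic gradients of the cost with respect to each weight matrix."""
    activations, zs = forward(weights, biases, x)
    delta = (activations[-1] - y) * sigmoid_derivative(zs[-1])
    grads = [None] * len(weights)
    grads[-1] = np.dot(delta, activations[-2].T)
    for l in range(2, len(weights) + 1):
        delta = np.dot(weights[-l + 1].T, delta) * sigmoid_derivative(zs[-l])
        grads[-l] = np.dot(delta, activations[-l - 1].T)
    return grads


def numeric_weight_grads(weights, biases, x, y, eps=1e-5):
    """Central finite differences, perturbing one weight at a time."""
    grads = [np.zeros_like(w) for w in weights]
    for k, w in enumerate(weights):
        for idx in np.ndindex(*w.shape):
            original = w[idx]
            w[idx] = original + eps
            cost_plus = quadratic_cost(weights, biases, x, y)
            w[idx] = original - eps
            cost_minus = quadratic_cost(weights, biases, x, y)
            w[idx] = original  # restore the weight before moving on
            grads[k][idx] = (cost_plus - cost_minus) / (2 * eps)
    return grads


rng = np.random.default_rng(0)
sizes = [4, 3, 2]  # hypothetical tiny network, small enough to check every weight
weights = [rng.standard_normal((n_out, n_in)) for n_out, n_in in zip(sizes[1:], sizes[:-1])]
biases = [rng.standard_normal((n_out, 1)) for n_out in sizes[1:]]
x = rng.standard_normal((4, 1))
y = np.array([[1.0], [0.0]])

analytic = backprop_weight_grads(weights, biases, x, y)
numeric = numeric_weight_grads(weights, biases, x, y)
max_diff = max(float(np.max(np.abs(a - n))) for a, n in zip(analytic, numeric))
print("largest difference between analytic and numeric gradients:", max_diff)
```

If the two gradients agree to within a small tolerance, the backward pass is very likely consistent with the cost; a large difference usually points to an indexing or transpose mistake. The check is far too slow to run on the full 784-30-10 network, so it is best kept to small layer sizes like the ones here.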

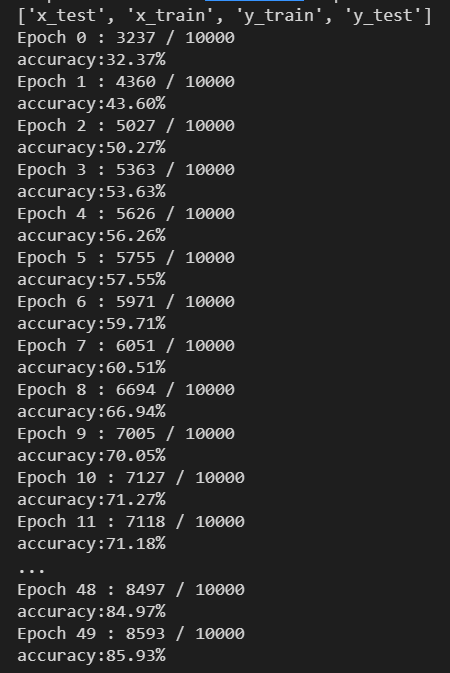

Training results:
