import torch

import torch.nn as nn

import torchvision

import torchvision.transforms as transforms

# Device configuration: torch.device is an object representing the device on which a torch.Tensor is allocated.

device = torch.device('cuda' if torch.cuda.is_available() else 'cpu')

# Hyper-parameters

input_size = 784

hidden_size = 500

num_classes = 10

num_epochs = 5

batch_size = 100

learning_rate = 0.001

# MNIST dataset

train_dataset = torchvision.datasets.MNIST(root='../data', train=True, transform=transforms.ToTensor(), download=True)

test_dataset = torchvision.datasets.MNIST(root='../data', train=False, transform=transforms.ToTensor())

# Data loader

train_loader = torch.utils.data.DataLoader(dataset=train_dataset, batch_size=batch_size, shuffle=True)

test_loader = torch.utils.data.DataLoader(dataset=test_dataset, batch_size=batch_size, shuffle=False)

# Fully connected neural network with one hidden layer (define your own model)

class NeuralNet(nn.Module):
    def __init__(self, input_size, hidden_size, num_classes):
        super(NeuralNet, self).__init__()
        # Hidden layer: input_size -> hidden_size
        self.fc1 = nn.Linear(input_size, hidden_size)
        self.relu = nn.ReLU()
        # Output layer: hidden_size -> num_classes (raw logits, no softmax)
        self.fc2 = nn.Linear(hidden_size, num_classes)

    def forward(self, x):
        out = self.fc1(x)
        out = self.relu(out)
        out = self.fc2(out)
        return out

model = NeuralNet(input_size, hidden_size, num_classes).to(device)

# Loss and optimizer

criterion = nn.CrossEntropyLoss()

optimizer = torch.optim.Adam(model.parameters(), lr=learning_rate)

# Train the model

total_step = len(train_loader)

for epoch in range(num_epochs):

for i, (images, labels) in enumerate(train_loader):

# Move tensors to the configured device

images = images.reshape(-1,28 * 28).to(device)

labels = labels.to(device) #???

# Forward pass

outputs = model(images)

loss = criterion(outputs, labels)

# Backward and optimize

optimizer.zero_grad()

loss.backward()

optimizer.step()

if (i+1) % 100 == 0:

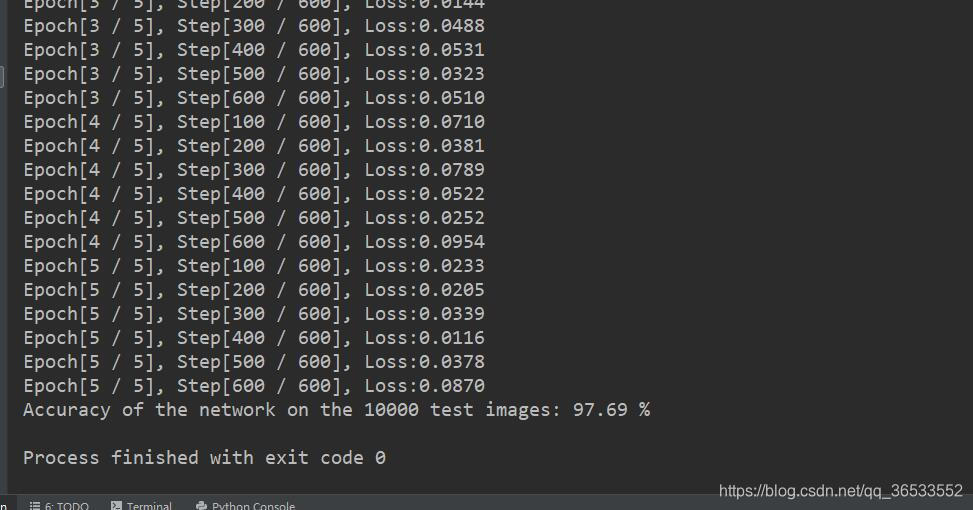

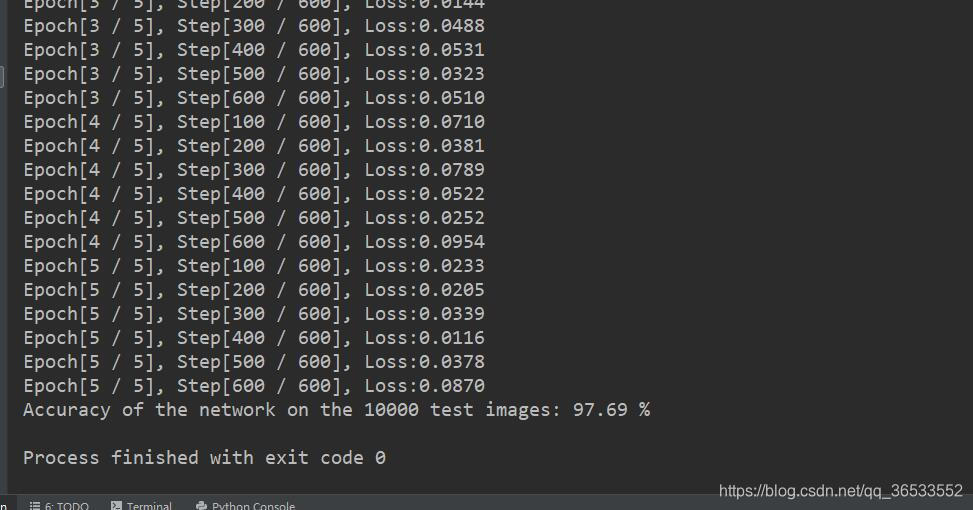

print('Epoch[{} / {}], Step[{} / {}], Loss:{:.4f}'.format(epoch+1, num_epochs, i+1, total_step, loss.item()))

# Test the model

# In test phase, we don't need to compute gradients (for memory efficiency)

with torch.no_grad():
    correct = 0
    total = 0
    for images, labels in test_loader:
        images = images.reshape(-1, 28 * 28).to(device)
        labels = labels.to(device)
        outputs = model(images)
        # The index of the largest output along dim 1 is the predicted class
        _, predicted = torch.max(outputs.data, 1)
        total += labels.size(0)
        correct += (predicted == labels).sum().item()

    print('Accuracy of the network on the 10000 test images: {} %'.format(100 * correct / total))

# Save the model checkpoint

torch.save(model.state_dict(), 'model.ckpt')
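As a quick sanity check on the size of the saved model, the number of trainable parameters can be counted by hand from the layer sizes used in the script (784 → 500 → 10). The sketch below is plain Python and does not touch PyTorch; the helper `linear_param_count` is a hypothetical name introduced only for illustration, and it assumes each linear layer carries a bias term, which is the default for `nn.Linear`.

```python
# Layer sizes taken from the hyper-parameters in the script above.
input_size, hidden_size, num_classes = 784, 500, 10


def linear_param_count(in_features, out_features):
    """Hypothetical helper: weights (out x in) plus one bias per output unit."""
    return in_features * out_features + out_features


fc1_params = linear_param_count(input_size, hidden_size)   # hidden layer
fc2_params = linear_param_count(hidden_size, num_classes)  # output layer
total_params = fc1_params + fc2_params

print(fc1_params, fc2_params, total_params)
```

Almost all of the parameters sit in the first layer, so changing `hidden_size` is the main lever on model size in this architecture.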

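This article walks through building a simple fully connected neural network with PyTorch and training and testing it on the MNIST handwritten digit dataset. The model has a single hidden layer with a ReLU activation, and its parameters are updated with the Adam optimizer and a cross-entropy loss.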
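The model's last layer returns raw scores (logits) rather than probabilities, because the cross-entropy loss applies the softmax itself. A minimal pure-Python sketch of that computation for a single sample is shown here; the function names and the example logits are made up for illustration, and the real `nn.CrossEntropyLoss` additionally averages over the batch by default.

```python
import math


def softmax(logits):
    """Turn raw scores into probabilities (shifted by the max for numerical stability)."""
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    s = sum(exps)
    return [e / s for e in exps]


def cross_entropy(logits, label):
    """Negative log-probability assigned to the true class."""
    return -math.log(softmax(logits)[label])


# Hypothetical logits for a 3-class problem; the true class is 0.
logits = [2.0, 0.5, -1.0]
label = 0

probs = softmax(logits)
loss = cross_entropy(logits, label)

# The predicted class is the index of the largest logit, as in the test loop.
predicted = max(range(len(logits)), key=lambda k: logits[k])

print(probs, loss, predicted)
```

Because softmax preserves the ordering of the scores, taking the argmax of the logits gives the same prediction as taking the argmax of the probabilities, which is why the test loop can work on the raw outputs.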
