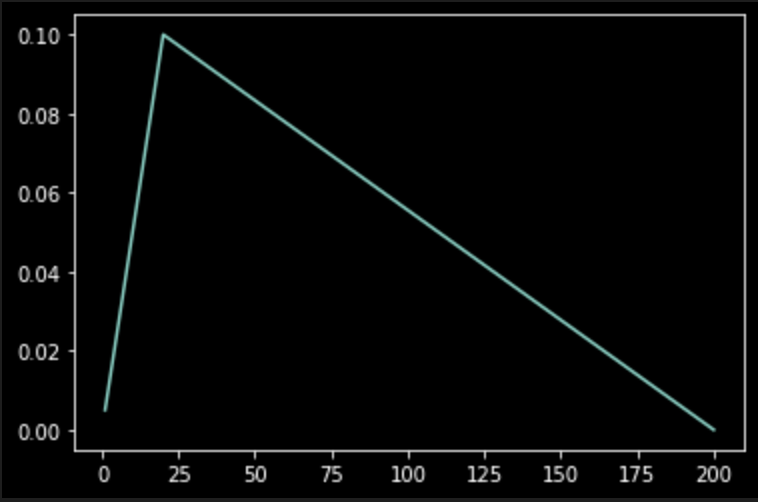

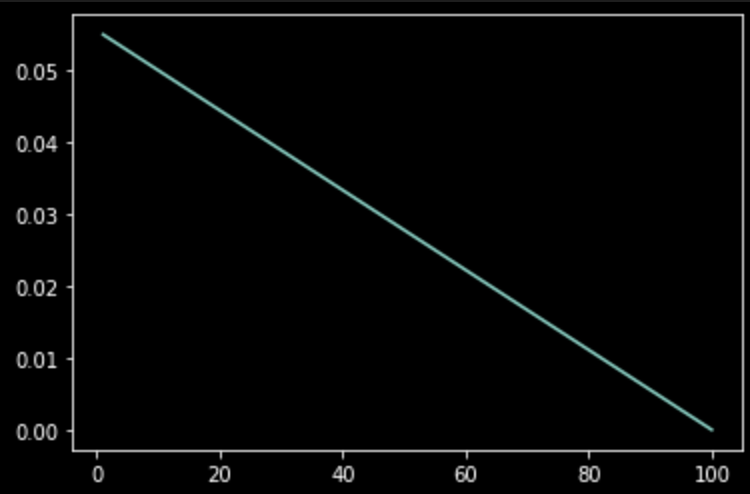

Left: the learning rate (lr) schedule when training from scratch. Right: the lr schedule when training resumes from epoch 100.


🙋‍♂️ Zhang, zhangruiyuan@zju.edu.cn. Please contact me if you have any questions.

Part 1. Import the required packages

    import os
    import sys
    import pandas as pd
    import numpy as np
    from tqdm import tqdm, trange
    from matplotlib import pyplot as plt
    import seaborn as sns
    import json
    import pathlib
    from pathlib import Path
    import torch
    from torch import nn, einsum
    import torch.nn.functional as F
    from einops import rearrange, repeat
    from einops.layers.torch import Rearrange
    from transformers import get_scheduler

Part 2. Define the model

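    class MyModal(nn.Module):
        def __init__(self) -> None:
            super().__init__()
            # batch_first=True so that inputs are laid out as (batch, seq_len, d_model)
            self.tf = nn.TransformerEncoderLayer(d_model=512, nhead=8, batch_first=True)
            self.linear = nn.Linear(512, 10)

        def forward(self, x):
            x = self.tf(x)
            # Classify each sequence from its first token
            x = self.linear(x[:, 0])
            # Return raw logits: nn.CrossEntropyLoss applies log-softmax internally,
            # so an extra softmax layer here would distort the loss
            return x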

Part 3. Training code

    model = MyModal()
    max_train_steps = 200
    optimizer = torch.optim.AdamW(model.parameters(), lr=0.1)
    lr_scheduler = get_scheduler(
        name='linear',
        optimizer=optimizer,
        num_warmup_steps=20,
        num_training_steps=max_train_steps,
    )
    criterion = nn.CrossEntropyLoss()
    lrs = []
    # Step to resume from: 0 means training from scratch
    start_step = 0
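
To make the shape of the curve concrete, here is a minimal pure-Python sketch of a linear warmup followed by linear decay. The function `linear_lr` and its parameter `peak_lr` are hypothetical names introduced for illustration, and the formula reflects my understanding of the multiplier behind the `'linear'` schedule rather than the library's actual code, so treat it as an assumption and check it against the plotted values.

```python
def linear_lr(step, peak_lr=0.1, num_warmup_steps=20, num_training_steps=200):
    """Learning rate in effect after `step` scheduler updates (illustrative helper)."""
    if step < num_warmup_steps:
        # Warmup: ramp linearly from 0 up to peak_lr
        factor = step / max(1, num_warmup_steps)
    else:
        # Decay: fall linearly from peak_lr down to 0 at num_training_steps
        remaining = num_training_steps - step
        factor = max(0.0, remaining / max(1, num_training_steps - num_warmup_steps))
    return peak_lr * factor


if __name__ == "__main__":
    # Print the lr at a few representative steps
    for step in (0, 10, 20, 100, 200):
        print(step, linear_lr(step))
```

Under this assumption, a run resumed at step 100 should pick up at `linear_lr(100)`, partway down the decay, instead of going through the warmup again.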

    # Fast-forward the scheduler to start_step without doing any training
    for i in range(start_step):
        optimizer.step()  # no gradients exist yet, so the weights are not changed
        lr_scheduler.step()  # update the lr stored in the optimizer
        optimizer.zero_grad()  # reset the gradients to zero

    # Train for the remaining steps and record the lr after every update
    for i in range(max_train_steps - start_step):
        src = torch.rand(10, 32, 512)
        labels = torch.randint(0, 10, [10])  # bs = 10
        output = model(src)
        loss = criterion(output, labels)
        loss.backward()
        optimizer.step()
        lr_scheduler.step()  # update the lr stored in the optimizer
        optimizer.zero_grad()  # reset the gradients to zero
        # https://blog.youkuaiyun.com/qq_41375318/article/details/115540896
        lrs.append(optimizer.state_dict()['param_groups'][0]['lr'])

    x = np.arange(1, len(lrs) + 1)
    plt.plot(x, lrs)
    plt.show()
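
Replaying `lr_scheduler.step()` in a loop works, but a possible alternative is to save and restore the optimizer and scheduler state. The sketch below is not the code used for the plots above. To stay self-contained it swaps in `torch.optim.lr_scheduler.LambdaLR` with a hand-written linear lambda in place of `get_scheduler`, and `build`, `advance` and `linear_lambda` are hypothetical helpers introduced only for this example.

```python
import torch
from torch import nn
from torch.optim.lr_scheduler import LambdaLR

WARMUP_STEPS, TOTAL_STEPS, PEAK_LR = 20, 200, 0.1


def linear_lambda(step):
    # Assumed shape of the schedule: linear warmup, then linear decay to 0
    if step < WARMUP_STEPS:
        return step / max(1, WARMUP_STEPS)
    return max(0.0, (TOTAL_STEPS - step) / max(1, TOTAL_STEPS - WARMUP_STEPS))


def build():
    # A tiny stand-in model; only the optimizer and scheduler matter here
    model = nn.Linear(4, 2)
    optimizer = torch.optim.AdamW(model.parameters(), lr=PEAK_LR)
    scheduler = LambdaLR(optimizer, linear_lambda)
    return model, optimizer, scheduler


def advance(optimizer, scheduler, n_steps):
    # Step both objects n_steps times (no gradients, so weights stay unchanged)
    lrs = []
    for _ in range(n_steps):
        optimizer.step()
        scheduler.step()
        lrs.append(optimizer.param_groups[0]["lr"])
    return lrs


# Reference: one uninterrupted run
_, opt_ref, sched_ref = build()
reference_lrs = advance(opt_ref, sched_ref, TOTAL_STEPS)

# Interrupted run: stop after 100 steps and keep both state dicts
_, opt_a, sched_a = build()
advance(opt_a, sched_a, 100)
checkpoint = {"optimizer": opt_a.state_dict(), "lr_scheduler": sched_a.state_dict()}

# Resume: build fresh objects first, then load the optimizer and scheduler state
_, opt_b, sched_b = build()
opt_b.load_state_dict(checkpoint["optimizer"])
sched_b.load_state_dict(checkpoint["lr_scheduler"])
resumed_lrs = advance(opt_b, sched_b, TOTAL_STEPS - 100)
```

Here `resumed_lrs` should match the second half of `reference_lrs`. In a real training job the `checkpoint` dictionary would be written with `torch.save` together with the model weights.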
