Mycat 简介

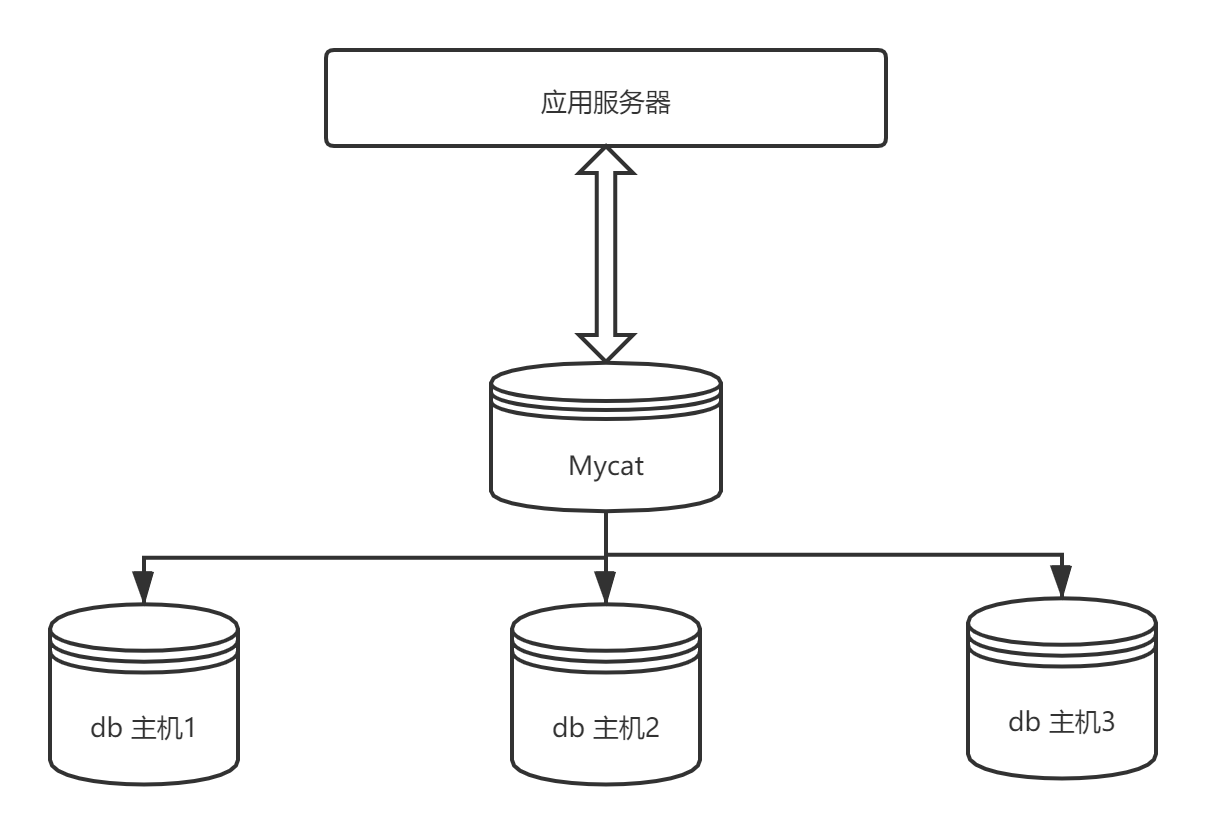

Mycat 的前身是Ali 的Cobar,核心的功能时提供数据库的分片和读写分离。Mycat 并不是完全意义上的分布式数据库,而是介于数据库和应用之间的中间件服务;

Mycat 核心概念

-

逻辑库

Mycat 作为一个中间件,对于使用者来说,无需知道中间件的存在,所以数据库中间件可以被当做一个或者多个数据库集群构成的逻辑库; -

逻辑表

逻辑表和逻辑库相似,对于应用来说,读写数据表就是逻辑表。逻辑表可以是数据切分后分布在一个或者多个分片库中,也可以是单独的一个表; -

分片表

分片表是将原有的大表根据规则切分到多个数据的表。每个分片都有一部分数据,所以分片构成完整的数据 -

非分片表

非分片表指未切分分片的表。 -

ER 表

ER 表是用来保证子表的记录和所关联的父表记录能够存放在同一个数据分片上,好处就是关联查询时,不会出现join 跨库操作; -

全局表

全局表在业务系统中,对于一些变动很少的表,比如字典表,当这类表数据量大到需要切片时,那么可以通过每个数据分片都冗余一份,来解决这类表的join 关联查询。 -

分片节点

数据被切分后,分布到不同的数据库上面。每个表分片所在的数据库分就是分片节点 -

节点主机

一个或者多个分片节点所占用的主机称为节点主机 -

分片规则

分片规则是数据切分时,切分遵循的规则称为分片规则

Mycat 配置文件详解

schema.xm

<?xml version="1.0"?>

<!DOCTYPE mycat:schema SYSTEM "schema.dtd">

<mycat:schema xmlns:mycat="http://io.mycat/">

<!--schema 标签用来定义逻辑库name:逻辑库的名称,checkSQLschema为true 时,执行sql 语句时会将schema name 去除,sqlMaxLimit 最大返回结果集行数 -->

<schema name="TESTDB" checkSQLschema="true" sqlMaxLimit="100" randomDataNode="dn1">

<!-- auto sharding by id (long) -->

<!--splitTableNames 启用<table name 属性使用逗号分割配置多个表,即多个表使用这个配置-->

<!--fetchStoreNodeByJdbc 启用ER表使用JDBC方式获取DataNode-->

<!--primaryKey 字段主要用作mycat 主键缓存机制,分片规则使用非主键进行分片时,那么第一次根据主键查询时,mycat 会对所有的datanode 节点进行广播式查询,并对结果主键进行分析,确定主键位于哪个dataNode节点上,及完成了主键id和分片id的缓存。下次在根据主键查询时不需要广播式查询,而是可以到缓存的分片id进行查询-->

<table name="customer" primaryKey="id" dataNode="dn1,dn2" rule="sharding-by-intfile" autoIncrement="true" fetchStoreNodeByJdbc="true">

<!--childTable用于定义ER分片的子表;joinKey 子表中的字段,插入数据时根据joinKey 查找父表的存储节点;parentKey 父级表的字段名称,查询连接条件是childtable.joinKey = parenttable.parentKey时,不会跨库查询 -->

<childTable name="customer_addr" primaryKey="id" joinKey="customer_id" parentKey="id"> </childTable>

</table>

<!-- <table name="oc_call" primaryKey="ID" dataNode="dn1$0-743" rule="latest-month-calldate"

/> -->

</schema>

<!-- <dataNode name="dn1$0-743" dataHost="localhost1" database="db$0-743"

/> -->

<dataNode name="dn1" dataHost="localhost1" database="db1" />

<dataNode name="dn2" dataHost="localhost1" database="db2" />

<dataNode name="dn3" dataHost="localhost1" database="db3" />

<!--dataHost 指定了数据的具体实例;

balance =0:不开启读写分离;balance=1: 开启读写分离,所有写操作落在当前的writeHost上,读操作落在对应的readHost 节点-->

<!--switchType: 服务是否自动切换,-1 不自动切换,1自动切换,2基于mysql 同步状态决定是否切换,3 基于mysql galar cluster 的自动切换机制(适用于集群)-->

<dataHost name="localhost1" maxCon="1000" minCon="10" balance="0"

writeType="0" dbType="mysql" dbDriver="jdbc" switchType="1" slaveThreshold="100">

<!--heartbeat心跳检查-->

<heartbeat>select user()</heartbeat>

<!-- can have multi write hosts -->

<writeHost host="hostM1" url="jdbc:mysql://localhost:3306" user="root"

password="root">

<readHost host="hostS1" url="jdbc:mysql://localhost:3306" user="root" password="root"/>

</writeHost>

<!-- <writeHost host="hostM2" url="localhost:3316" user="root" password="123456"/> -->

</dataHost>

<!--

<dataHost name="sequoiadb1" maxCon="1000" minCon="1" balance="0" dbType="sequoiadb" dbDriver="jdbc">

<heartbeat> </heartbeat>

<writeHost host="hostM1" url="sequoiadb://1426587161.dbaas.sequoialab.net:11920/SAMPLE" user="jifeng" password="jifeng"></writeHost>

</dataHost>

<dataHost name="oracle1" maxCon="1000" minCon="1" balance="0" writeType="0" dbType="oracle" dbDriver="jdbc"> <heartbeat>select 1 from dual</heartbeat>

<connectionInitSql>alter session set nls_date_format='yyyy-mm-dd hh24:mi:ss'</connectionInitSql>

<writeHost host="hostM1" url="jdbc:oracle:thin:@127.0.0.1:1521:nange" user="base" password="123456" > </writeHost> </dataHost>

<dataHost name="jdbchost" maxCon="1000" minCon="1" balance="0" writeType="0" dbType="mongodb" dbDriver="jdbc">

<heartbeat>select user()</heartbeat>

<writeHost host="hostM" url="mongodb://192.168.0.99/test" user="admin" password="123456" ></writeHost> </dataHost>

<dataHost name="sparksql" maxCon="1000" minCon="1" balance="0" dbType="spark" dbDriver="jdbc">

<heartbeat> </heartbeat>

<writeHost host="hostM1" url="jdbc:hive2://feng01:10000" user="jifeng" password="jifeng"></writeHost> </dataHost> -->

<!-- <dataHost name="jdbchost" maxCon="1000" minCon="10" balance="0" dbType="mysql"

dbDriver="jdbc"> <heartbeat>select user()</heartbeat> <writeHost host="hostM1"

url="jdbc:mysql://localhost:3306" user="root" password="123456"> </writeHost>

</dataHost> -->

</mycat:schema>Server.xml

<?xml version="1.0" encoding="UTF-8"?>

<!-- - - Licensed under the Apache License, Version 2.0 (the "License");

- you may not use this file except in compliance with the License. - You

may obtain a copy of the License at - - http://www.apache.org/licenses/LICENSE-2.0

- - Unless required by applicable law or agreed to in writing, software -

distributed under the License is distributed on an "AS IS" BASIS, - WITHOUT

WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied. - See the

License for the specific language governing permissions and - limitations

under the License. -->

<!DOCTYPE mycat:server SYSTEM "server.dtd">

<mycat:server xmlns:mycat="http://io.mycat/">

<system>

<property name="nonePasswordLogin">0</property> <!-- 0为需要密码登陆、1为不需要密码登陆 ,默认为0,设置为1则需要指定默认账户-->

<property name="ignoreUnknownCommand">0</property><!-- 0遇上没有实现的报文(Unknown command:),就会报错、1为忽略该报文,返回ok报文。

在某些mysql客户端存在客户端已经登录的时候还会继续发送登录报文,mycat会报错,该设置可以绕过这个错误-->

<property name="useHandshakeV10">1</property>

<property name="removeGraveAccent">1</property>

<property name="useSqlStat">0</property> <!-- 1为开启实时统计、0为关闭 -->

<property name="useGlobleTableCheck">0</property> <!-- 1为开启全加班一致性检测、0为关闭 -->

<property name="sqlExecuteTimeout">300</property> <!-- SQL 执行超时 单位:秒-->

<property name="sequenceHandlerType">1</property>

<!--<property name="sequnceHandlerPattern">(?:(\s*next\s+value\s+for\s*MYCATSEQ_(\w+))(,|\)|\s)*)+</property>

INSERT INTO `travelrecord` (`id`,user_id) VALUES ('next value for MYCATSEQ_GLOBAL',"xxx");

-->

<!--必须带有MYCATSEQ_或者 mycatseq_进入序列匹配流程 注意MYCATSEQ_有空格的情况-->

<property name="sequnceHandlerPattern">(?:(\s*next\s+value\s+for\s*MYCATSEQ_(\w+))(,|\)|\s)*)+</property>

<property name="subqueryRelationshipCheck">false</property> <!-- 子查询中存在关联查询的情况下,检查关联字段中是否有分片字段 .默认 false -->

<property name="sequenceHanlderClass">io.mycat.route.sequence.handler.HttpIncrSequenceHandler</property>

<!-- <property name="useCompression">1</property>--> <!--1为开启mysql压缩协议-->

<!-- <property name="fakeMySQLVersion">5.6.20</property>--> <!--设置模拟的MySQL版本号-->

<!-- <property name="processorBufferChunk">40960</property> -->

<!--

<property name="processors">1</property>

<property name="processorExecutor">32</property>

-->

<!--默认为type 0: DirectByteBufferPool | type 1 ByteBufferArena | type 2 NettyBufferPool -->

<property name="processorBufferPoolType">0</property>

<!--默认是65535 64K 用于sql解析时最大文本长度 -->

<!--<property name="maxStringLiteralLength">65535</property>-->

<!--<property name="sequenceHandlerType">0</property>-->

<!--<property name="backSocketNoDelay">1</property>-->

<!--<property name="frontSocketNoDelay">1</property>-->

<!--<property name="processorExecutor">16</property>-->

<!--

<property name="serverPort">8066</property> <property name="managerPort">9066</property>

<property name="idleTimeout">300000</property> <property name="bindIp">0.0.0.0</property>

<property name="dataNodeIdleCheckPeriod">300000</property> 5 * 60 * 1000L; //连接空闲检查

<property name="frontWriteQueueSize">4096</property> <property name="processors">32</property> -->

<!--分布式事务开关,0为不过滤分布式事务,1为过滤分布式事务(如果分布式事务内只涉及全局表,则不过滤),2为不过滤分布式事务,但是记录分布式事务日志-->

<property name="handleDistributedTransactions">0</property>

<!--

off heap for merge/order/group/limit 1开启 0关闭

-->

<property name="useOffHeapForMerge">0</property>

<!--

单位为m

-->

<property name="memoryPageSize">64k</property>

<!--

单位为k

-->

<property name="spillsFileBufferSize">1k</property>

<property name="useStreamOutput">0</property>

<!--

单位为m

-->

<property name="systemReserveMemorySize">384m</property>

<!--是否采用zookeeper协调切换 -->

<property name="useZKSwitch">false</property>

<!-- XA Recovery Log日志路径 -->

<!--<property name="XARecoveryLogBaseDir">./</property>-->

<!-- XA Recovery Log日志名称 -->

<!--<property name="XARecoveryLogBaseName">tmlog</property>-->

<!--如果为 true的话 严格遵守隔离级别,不会在仅仅只有select语句的时候在事务中切换连接-->

<property name="strictTxIsolation">false</property>

<!--如果为0的话,涉及多个DataNode的catlet任务不会跨线程执行-->

<property name="parallExecute">0</property>

</system>

<!-- 全局SQL防火墙设置 -->

<!--白名单可以使用通配符%或着*-->

<!--例如<host host="127.0.0.*" user="root"/>-->

<!--例如<host host="127.0.*" user="root"/>-->

<!--例如<host host="127.*" user="root"/>-->

<!--例如<host host="1*7.*" user="root"/>-->

<!--这些配置情况下对于127.0.0.1都能以root账户登录-->

<!--

<firewall>

<whitehost>

<host host="1*7.0.0.*" user="root"/>

</whitehost>

<blacklist check="false">

</blacklist>

</firewall>

-->

<user name="root" defaultAccount="true">

<property name="password">123456</property>

<property name="schemas">TESTDB</property>

<property name="defaultSchema">TESTDB</property>

<!--No MyCAT Database selected 错误前会尝试使用该schema作为schema,不设置则为null,报错 -->

<!-- 表级 DML 权限设置 -->

<!--

<privileges check="false">

<schema name="TESTDB" dml="0110" >

<table name="tb01" dml="0000"></table>

<table name="tb02" dml="1111"></table>

</schema>

</privileges>

-->

</user>

<user name="user">

<property name="password">user</property>

<property name="schemas">TESTDB</property>

<property name="readOnly">true</property>

<property name="defaultSchema">TESTDB</property>

</user>

</mycat:server>

rule.xml

<?xml version="1.0" encoding="UTF-8"?>

<!-- - - Licensed under the Apache License, Version 2.0 (the "License");

- you may not use this file except in compliance with the License. - You

may obtain a copy of the License at - - http://www.apache.org/licenses/LICENSE-2.0

- - Unless required by applicable law or agreed to in writing, software -

distributed under the License is distributed on an "AS IS" BASIS, - WITHOUT

WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied. - See the

License for the specific language governing permissions and - limitations

under the License. -->

<!DOCTYPE mycat:rule SYSTEM "rule.dtd">

<mycat:rule xmlns:mycat="http://io.mycat/">

<tableRule name="rule1">

<rule>

<columns>id</columns>

<algorithm>func1</algorithm>

</rule>

</tableRule>

<tableRule name="sharding-by-date">

<rule>

<columns>createTime</columns>

<algorithm>partbyday</algorithm>

</rule>

</tableRule>

<tableRule name="rule2">

<rule>

<columns>user_id</columns>

<algorithm>func1</algorithm>

</rule>

</tableRule>

<tableRule name="sharding-by-intfile">

<rule>

<columns>sharding_id</columns>

<algorithm>hash-int</algorithm>

</rule>

</tableRule>

<tableRule name="auto-sharding-long">

<rule>

<columns>id</columns>

<algorithm>rang-long</algorithm>

</rule>

</tableRule>

<tableRule name="mod-long">

<rule>

<columns>id</columns>

<algorithm>mod-long</algorithm>

</rule>

</tableRule>

<tableRule name="sharding-by-murmur">

<rule>

<columns>id</columns>

<algorithm>murmur</algorithm>

</rule>

</tableRule>

<tableRule name="crc32slot">

<rule>

<columns>id</columns>

<algorithm>crc32slot</algorithm>

</rule>

</tableRule>

<tableRule name="sharding-by-month">

<rule>

<columns>create_time</columns>

<algorithm>partbymonth</algorithm>

</rule>

</tableRule>

<tableRule name="latest-month-calldate">

<rule>

<columns>calldate</columns>

<algorithm>latestMonth</algorithm>

</rule>

</tableRule>

<tableRule name="auto-sharding-rang-mod">

<rule>

<columns>id</columns>

<algorithm>rang-mod</algorithm>

</rule>

</tableRule>

<tableRule name="jch">

<rule>

<columns>id</columns>

<algorithm>jump-consistent-hash</algorithm>

</rule>

</tableRule>

<function name="murmur"

class="io.mycat.route.function.PartitionByMurmurHash">

<property name="seed">0</property><!-- 默认是0 -->

<property name="count">2</property><!-- 要分片的数据库节点数量,必须指定,否则没法分片 -->

<property name="virtualBucketTimes">160</property><!-- 一个实际的数据库节点被映射为这么多虚拟节点,默认是160倍,也就是虚拟节点数是物理节点数的160倍 -->

<!-- <property name="weightMapFile">weightMapFile</property> 节点的权重,没有指定权重的节点默认是1。以properties文件的格式填写,以从0开始到count-1的整数值也就是节点索引为key,以节点权重值为值。所有权重值必须是正整数,否则以1代替 -->

<!-- <property name="bucketMapPath">/etc/mycat/bucketMapPath</property>

用于测试时观察各物理节点与虚拟节点的分布情况,如果指定了这个属性,会把虚拟节点的murmur hash值与物理节点的映射按行输出到这个文件,没有默认值,如果不指定,就不会输出任何东西 -->

</function>

<function name="crc32slot"

class="io.mycat.route.function.PartitionByCRC32PreSlot">

<property name="count">2</property><!-- 要分片的数据库节点数量,必须指定,否则没法分片 -->

</function>

<function name="hash-int"

class="io.mycat.route.function.PartitionByFileMap">

<property name="mapFile">partition-hash-int.txt</property>

</function>

<function name="rang-long"

class="io.mycat.route.function.AutoPartitionByLong">

<property name="mapFile">autopartition-long.txt</property>

</function>

<function name="mod-long" class="io.mycat.route.function.PartitionByMod">

<!-- how many data nodes -->

<property name="count">3</property>

</function>

<function name="func1" class="io.mycat.route.function.PartitionByLong">

<property name="partitionCount">8</property>

<property name="partitionLength">128</property>

</function>

<function name="latestMonth"

class="io.mycat.route.function.LatestMonthPartion">

<property name="splitOneDay">24</property>

</function>

<function name="partbymonth"

class="io.mycat.route.function.PartitionByMonth">

<property name="dateFormat">yyyy-MM-dd</property>

<property name="sBeginDate">2015-01-01</property>

</function>

<function name="partbyday"

class="io.mycat.route.function.PartitionByDate">

<property name="dateFormat">yyyy-MM-dd</property>

<property name="sNaturalDay">0</property>

<property name="sBeginDate">2014-01-01</property>

<property name="sEndDate">2014-01-31</property>

<property name="sPartionDay">10</property>

</function>

<function name="rang-mod" class="io.mycat.route.function.PartitionByRangeMod">

<property name="mapFile">partition-range-mod.txt</property>

</function>

<function name="jump-consistent-hash" class="io.mycat.route.function.PartitionByJumpConsistentHash">

<property name="totalBuckets">3</property>

</function>

</mycat:rule>

1109

1109

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?