1. Create a Maven project

2. pom.xml

<project xmlns="http://maven.apache.org/POM/4.0.0" xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance" xsi:schemaLocation="http://maven.apache.org/POM/4.0.0 http://maven.apache.org/maven-v4_0_0.xsd">
  <modelVersion>4.0.0</modelVersion>
  <groupId>com.imooc.spark</groupId>
  <artifactId>sparktrain</artifactId>
  <version>1.0</version>
  <inceptionYear>2008</inceptionYear>

  <properties>
    <scala.version>2.11.8</scala.version>
    <kafka.version>0.10.2.2</kafka.version>
  </properties>

  <dependencies>
    <dependency>
      <groupId>org.scala-lang</groupId>
      <artifactId>scala-library</artifactId>
      <version>${scala.version}</version>
    </dependency>
    <!-- Kafka dependency -->
    <dependency>
      <groupId>org.apache.kafka</groupId>
      <artifactId>kafka_2.11</artifactId>
      <version>${kafka.version}</version>
    </dependency>
  </dependencies>

  <build>
    <sourceDirectory>src/main/scala</sourceDirectory>
    <testSourceDirectory>src/test/scala</testSourceDirectory>
    <plugins>
      <plugin>
        <groupId>org.scala-tools</groupId>
        <artifactId>maven-scala-plugin</artifactId>
        <executions>
          <execution>
            <goals>
              <goal>compile</goal>
              <goal>testCompile</goal>
            </goals>
          </execution>
        </executions>
        <configuration>
          <scalaVersion>${scala.version}</scalaVersion>
          <args>
            <arg>-target:jvm-1.5</arg>
          </args>
        </configuration>
      </plugin>
      <plugin>
        <groupId>org.apache.maven.plugins</groupId>
        <artifactId>maven-eclipse-plugin</artifactId>
        <configuration>
          <downloadSources>true</downloadSources>
          <buildcommands>
            <buildcommand>ch.epfl.lamp.sdt.core.scalabuilder</buildcommand>
          </buildcommands>
          <additionalProjectnatures>
            <projectnature>ch.epfl.lamp.sdt.core.scalanature</projectnature>
          </additionalProjectnatures>
          <classpathContainers>
            <classpathContainer>org.eclipse.jdt.launching.JRE_CONTAINER</classpathContainer>
            <classpathContainer>ch.epfl.lamp.sdt.launching.SCALA_CONTAINER</classpathContainer>
          </classpathContainers>
        </configuration>
      </plugin>
    </plugins>
  </build>

  <reporting>
    <plugins>
      <plugin>
        <groupId>org.scala-tools</groupId>
        <artifactId>maven-scala-plugin</artifactId>
        <configuration>
          <scalaVersion>${scala.version}</scalaVersion>
        </configuration>
      </plugin>
    </plugins>
  </reporting>
</project>

2. Common Kafka configuration class: KafkaProperties

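/**
 * Common Kafka configuration constants.
 */
public class KafkaProperties {
    // ZooKeeper address, used by the consumer
    public static final String ZK = "192.168.218.129:2181";
    // Topic that the producer writes to and the consumer reads from
    public static final String TOPIC = "hello_topic";
    // Kafka broker address, used by the producer
    public static final String BROKER_LIST = "192.168.218.129:9092";
    // Consumer group id
    public static final String GROUP_ID = "test_group1";
}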

3. Producer

import kafka.javaapi.producer.Producer;
import kafka.producer.KeyedMessage;
import kafka.producer.ProducerConfig;

import java.util.Properties;

/**
 * Kafka producer: sends one message every two seconds.
 */
public class KafkaProducer extends Thread {

    private String topic;
    private Producer<Integer, String> producer;

    public KafkaProducer(String topic) {
        this.topic = topic;

        Properties properties = new Properties();
        properties.put("metadata.broker.list", KafkaProperties.BROKER_LIST);
        properties.put("serializer.class", "kafka.serializer.StringEncoder");
        properties.put("request.required.acks", "1");

        producer = new Producer<Integer, String>(new ProducerConfig(properties));
    }

    @Override
    public void run() {
        int messageNo = 1;
        while (true) {
            String message = "message_" + messageNo;
            producer.send(new KeyedMessage<Integer, String>(topic, message));
            System.out.println("Sent: " + message);
            messageNo++;

            try {
                Thread.sleep(2000);
            } catch (InterruptedException e) {
                // Restore the interrupt flag and stop sending
                Thread.currentThread().interrupt();
                break;
            }
        }
        producer.close();
    }
}

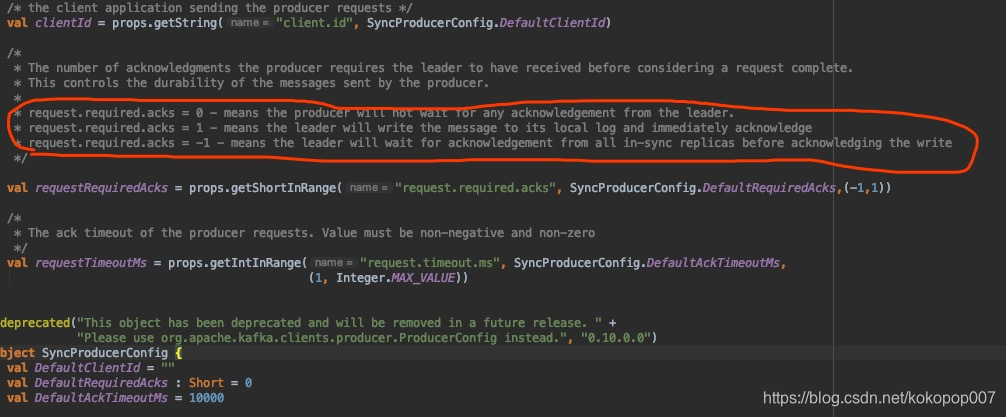

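request.required.acks = 0: the producer does not wait for any acknowledgment from the leader.

request.required.acks = 1: the leader writes the message to its local log and acknowledges immediately, without waiting for the followers.

request.required.acks = -1: the leader waits for acknowledgment from all in-sync replicas before it acknowledges the write.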
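To make the three modes concrete, here is a minimal sketch of a helper that builds the producer settings for a chosen mode. The class `ProducerAcks` and the method `producerProperties` are hypothetical names introduced for illustration; only the property keys come from the producer code in this post. It uses nothing beyond the JDK, so it can be tried without a running broker.

```java
import java.util.Properties;

/** Hypothetical helper, not part of the original post. */
final class ProducerAcks {
    private ProducerAcks() {}

    /**
     * Builds the settings that the producer in this post passes to ProducerConfig,
     * with the acknowledgment mode supplied by the caller.
     */
    static Properties producerProperties(String brokerList, int acks) {
        // Only the three modes described above are accepted here (an assumption of this sketch)
        if (acks < -1 || acks > 1) {
            throw new IllegalArgumentException("acks must be 0, 1 or -1, got " + acks);
        }
        Properties properties = new Properties();
        properties.put("metadata.broker.list", brokerList);
        properties.put("serializer.class", "kafka.serializer.StringEncoder");
        properties.put("request.required.acks", String.valueOf(acks));
        return properties;
    }

    public static void main(String[] args) {
        // -1: wait for all in-sync replicas, the most durable of the three modes
        Properties properties = producerProperties("192.168.218.129:9092", -1);
        System.out.println(properties.getProperty("request.required.acks")); // prints "-1"
    }
}
```

Roughly speaking, 0 favors throughput, -1 favors durability, and 1 sits in between; the producer in this post uses 1.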

4. Test

/**
 * Kafka Java API test.
 */
public class KafkaClientApp {
    public static void main(String[] args) {
        new KafkaProducer(KafkaProperties.TOPIC).start();
        // Uncomment once the consumer below is in place:
        // new KafkaConsumer(KafkaProperties.TOPIC).start();
    }
}

5. Consumer

import kafka.consumer.Consumer;
import kafka.consumer.ConsumerConfig;
import kafka.consumer.ConsumerIterator;
import kafka.consumer.KafkaStream;
import kafka.javaapi.consumer.ConsumerConnector;

import java.nio.charset.StandardCharsets;
import java.util.HashMap;
import java.util.List;
import java.util.Map;
import java.util.Properties;

/**
 * Kafka consumer.
 */
public class KafkaConsumer extends Thread {

    private String topic;

    public KafkaConsumer(String topic) {
        this.topic = topic;
    }

    private ConsumerConnector createConnector() {
        Properties properties = new Properties();
        properties.put("zookeeper.connect", KafkaProperties.ZK);
        properties.put("group.id", KafkaProperties.GROUP_ID);
        return Consumer.createJavaConsumerConnector(new ConsumerConfig(properties));
    }

    @Override
    public void run() {
        ConsumerConnector consumer = createConnector();

        // Key: topic name; value: number of streams to create for that topic
        Map<String, Integer> topicCountMap = new HashMap<String, Integer>();
        topicCountMap.put(topic, 1);
        // topicCountMap.put(topic2, 1);
        // topicCountMap.put(topic3, 1);

        // String: topic
        // List<KafkaStream<byte[], byte[]>>: the data streams for that topic
        Map<String, List<KafkaStream<byte[], byte[]>>> messageStream = consumer.createMessageStreams(topicCountMap);

        // get(0): the only stream, since one stream was requested for this topic
        KafkaStream<byte[], byte[]> stream = messageStream.get(topic).get(0);

        ConsumerIterator<byte[], byte[]> iterator = stream.iterator();
        // hasNext() blocks until the next message arrives
        while (iterator.hasNext()) {
            // StringEncoder on the producer side encodes as UTF-8 by default
            String message = new String(iterator.next().message(), StandardCharsets.UTF_8);
            System.out.println("rec: " + message);
        }
    }
}
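The consumer receives every message as a raw byte[], so turning it into text depends on a charset. The following is a minimal sketch of decoding a payload explicitly as UTF-8, assuming the producer used kafka.serializer.StringEncoder with its default UTF-8 encoding. The class `MessageDecoder` and the method `decode` are hypothetical names, and the payload in `main` is simulated rather than read from Kafka.

```java
import java.nio.charset.StandardCharsets;

/** Hypothetical helper, not part of the original post. */
final class MessageDecoder {
    private MessageDecoder() {}

    /** Decodes a raw message payload as UTF-8 text. */
    static String decode(byte[] payload) {
        return new String(payload, StandardCharsets.UTF_8);
    }

    public static void main(String[] args) {
        // Simulates the bytes a producer using StringEncoder would send
        byte[] payload = "message_1".getBytes(StandardCharsets.UTF_8);
        System.out.println("rec: " + decode(payload)); // prints "rec: message_1"
    }
}
```

Naming the charset keeps the result independent of the JVM's platform default, which matters once messages contain non-ASCII text.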

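This post shows how to set up a Maven project for programming against the Kafka Java API: it defines the KafkaProperties constants, covers the three modes of the producer setting request.required.acks, provides a test entry point, and finishes with the consumer implementation.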
