Deploying a Single-Master Kubernetes 1.18 Cluster on CentOS 7

Prerequisites:

- At least two servers, each with 2 CPU cores and 4 GB of memory

- CentOS 7.6 / 7.7 / 7.8

Software versions after installation:

https://www.kuboard.cn/install/history-k8s/install-k8s-1.18.x.html

- Kubernetes v1.18.9

- calico 3.13.1

- nginx-ingress 1.5.5

- Docker 19.03.8

Docker image versions

master

REPOSITORY TAG

registry.aliyuncs.com/k8sxio/kube-proxy v1.18.9

registry.aliyuncs.com/k8sxio/kube-apiserver v1.18.9

registry.aliyuncs.com/k8sxio/kube-controller-manager v1.18.9

registry.aliyuncs.com/k8sxio/kube-scheduler v1.18.9

quay.io/coreos/etcd v3.4.13

calico/node v3.13.1

calico/pod2daemon-flexvol v3.13.1

calico/cni v3.13.1

calico/kube-controllers v3.13.1

registry.aliyuncs.com/k8sxio/pause 3.2

registry.aliyuncs.com/k8sxio/coredns 1.6.7

registry.aliyuncs.com/k8sxio/etcd 3.4.3-0

node

REPOSITORY TAG

registry.aliyuncs.com/k8sxio/kube-proxy v1.18.9

quay.io/coreos/etcd v3.4.13

calico/node v3.13.1

calico/pod2daemon-flexvol v3.13.1

calico/cni v3.13.1

registry.aliyuncs.com/k8sxio/pause 3.2

1. Change the hostname

If you need to change the hostname, run the following commands:

# Change the hostname

hostnamectl set-hostname your-new-host-name

# Check the result

hostnamectl status

# Make the hostname resolvable

echo "127.0.0.1 $(hostname)" >> /etc/hosts

2. Check the network

The IP address used by kubelet

[root@demo-master-a-1 ~]$ ip route show

default via 172.21.0.1 dev eth0

169.254.0.0/16 dev eth0 scope link metric 1002

172.21.0.0/20 dev eth0 proto kernel scope link src 172.21.0.12

[root@demo-master-a-1 ~]$ ip address

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group default qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

2: eth0: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc pfifo_fast state UP group default qlen 1000

link/ether 00:16:3e:12:a4:1b brd ff:ff:ff:ff:ff:ff

inet 172.17.216.80/20 brd 172.17.223.255 scope global dynamic eth0

valid_lft 305741654sec preferred_lft 305741654sec

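- The output of ip route show identifies the machine's default network interface, usually eth0, for example: default via 172.21.0.1 dev eth0

- The output of ip address shows the IP address of that default interface, for example 172.17.216.80; Kubernetes uses this address to communicate with the other nodes in the cluster

- The IP addresses used by Kubernetes on all nodes must be mutually reachable (no NAT mapping, no security group or firewall isolation)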
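As a small sketch of the check described above, the two helper functions below (hypothetical names, not part of the original guide) pull the default interface out of ip route show output and the IPv4 address of that interface out of ip -o -4 address show output. They only parse text read from stdin, so they can be tried on saved command output as well.

```shell
# Print the interface that follows "dev" on the first default route line (stdin).
default_iface() {
  awk '$1 == "default" { for (i = 2; i < NF; i++) if ($i == "dev") { print $(i + 1); exit } }'
}

# Print the first IPv4 address (without the prefix length) of interface $1,
# reading one-line-per-address output as produced by `ip -o -4 address show`.
iface_ipv4() {
  awk -v ifc="$1" '$2 == ifc && $3 == "inet" { split($4, a, "/"); print a[1]; exit }'
}

# Usage on a real node:
#   iface=$(ip route show | default_iface)
#   ip -o -4 address show | iface_ipv4 "$iface"
```

The address printed this way is the one that has to be reachable from every other node.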

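3. Install Docker and kubelet

Checklist before you start:

①、Every node runs CentOS 7.6, 7.7 or 7.8

②、Every node has at least 2 CPU cores and at least 4 GB of memory

③、No node's hostname is localhost, and no hostname contains underscores, dots or uppercase letters

④、Every node has a fixed internal IP address

⑤、Every node has only one network interface; if more are needed for a special purpose, they can be added after the K8S installation is complete

⑥、The IP addresses used by kubelet on all nodes can reach each other (without NAT mapping) and are not separated by a firewall or security group

⑦、No node runs containers directly with docker run or docker-compose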
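Items ② and ③ of this checklist can be verified mechanically. The following is a minimal sketch with hypothetical function names; the memory threshold of 3500 MiB is an assumption, chosen because a machine sold as "4 GB" usually reports slightly less than 4 GiB in /proc/meminfo.

```shell
# Return 0 if the hostname satisfies item ③: not "localhost" and made up only of
# lowercase letters, digits and hyphens (so no underscore, dot or uppercase letter).
valid_hostname() {
  local name="$1"
  [ -n "$name" ] || return 1
  [ "$name" != "localhost" ] || return 1
  case "$name" in
    *[!abcdefghijklmnopqrstuvwxyz0123456789-]*) return 1 ;;
  esac
  return 0
}

# Return 0 if the CPU count ($1) and total memory in kB ($2) satisfy item ②.
# Assumption: 3500 MiB is accepted as "4 GB" to allow for reserved memory.
enough_resources() {
  local cpus="$1" mem_kb="$2"
  [ "$cpus" -ge 2 ] && [ "$mem_kb" -ge $((3500 * 1024)) ]
}

# Usage on a real node:
#   valid_hostname "$(hostname)" || echo "hostname does not meet the requirements"
#   enough_resources "$(nproc)" "$(awk '/^MemTotal:/ { print $2 }' /proc/meminfo)" \
#     || echo "not enough CPU or memory"
```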

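Add a registry mirror; choose one according to your situation:

# Run on both the master node and the worker nodes

# The last parameter, 1.18.9, specifies the Kubernetes version; every 1.18.x version is supported

# Tencent Cloud Docker Hub mirror

# export REGISTRY_MIRROR="https://mirror.ccs.tencentyun.com"

# DaoCloud mirror

# export REGISTRY_MIRROR="http://f1361db2.m.daocloud.io"

# Alibaba Cloud Docker Hub mirror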

export REGISTRY_MIRROR=https://registry.cn-hangzhou.aliyuncs.com
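REGISTRY_MIRROR is consumed later by the set_mirror.sh script, whose contents are not shown in this article. As an assumption about what such a step typically amounts to, a mirror can be configured through the registry-mirrors key of /etc/docker/daemon.json; the hypothetical helper below only prints the JSON so it can be reviewed before being written anywhere.

```shell
# Print a minimal daemon.json that points Docker at the given registry mirror.
# Assumption: no other daemon.json settings need to be preserved.
render_daemon_json() {
  local mirror="$1"
  cat <<EOF
{
  "registry-mirrors": ["${mirror}"]
}
EOF
}

# Usage (review the output first, then write it and restart Docker):
#   render_daemon_json "$REGISTRY_MIRROR" | sudo tee /etc/docker/daemon.json
#   sudo systemctl restart docker
```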

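1. Install Docker

Note: it is best to relocate Docker's storage directory; decide based on your own situation.

#!/bin/bash

# Run on both the master node and the worker nodes

# Install Docker

# Reference documentation:

# https://docs.docker.com/install/linux/docker-ce/centos/

# https://docs.docker.com/install/linux/linux-postinstall/

# Remove old versions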

sudo yum remove -y docker \

docker-client \

docker-client-latest \

docker-ce-cli \

docker-common \

docker-latest \

docker-latest-logrotate \

docker-logrotate \

docker-selinux \

docker-engine-selinux \

docker-engine

# Set up the yum repository

sudo yum install -y yum-utils \

device-mapper-persistent-data \

lvm2

sudo yum-config-manager --add-repo http://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo

# Install and start Docker

sudo yum install -y docker-ce-19.03.8 docker-ce-cli-19.03.8 containerd.io

sudo systemctl enable docker

sudo systemctl start docker

# Relocate the Docker data directory (optional, depending on your needs)

sudo systemctl stop docker

sudo mkdir -p /var/opt/docker-lib

sudo mv /var/lib/docker /var/opt/docker-lib

sudo ln -s /var/opt/docker-lib/docker /var/lib/docker

sudo systemctl start docker

2. Configure the base environment and install kubelet

#!/bin/bash

# Install nfs-utils

# nfs-utils must be installed before NFS network storage can be mounted

sudo yum install -y nfs-utils

sudo yum install -y wget

# Disable the firewall

sudo systemctl stop firewalld

sudo systemctl disable firewalld

# Disable SELinux

sudo setenforce 0

sudo sed -i "s/SELINUX=enforcing/SELINUX=disabled/g" /etc/selinux/config

# Disable swap (back up /etc/fstab, then drop every line that mentions swap)

sudo swapoff -a

sudo cp -f /etc/fstab /etc/fstab_bak

sudo bash -c "grep -v swap /etc/fstab_bak > /etc/fstab"

# Edit /etc/sysctl.conf

# If a setting already exists, update it

sed -i "s#^net.ipv4.ip_forward.*#net.ipv4.ip_forward=1#g" /etc/sysctl.conf

sed -i "s#^net.bridge.bridge-nf-call-ip6tables.*#net.bridge.bridge-nf-call-ip6tables=1#g" /etc/sysctl.conf

sed -i "s#^net.bridge.bridge-nf-call-iptables.*#net.bridge.bridge-nf-call-iptables=1#g" /etc/sysctl.conf

sed -i "s#^net.ipv6.conf.all.disable_ipv6.*#net.ipv6.conf.all.disable_ipv6=1#g" /etc/sysctl.conf

sed -i "s#^net.ipv6.conf.default.disable_ipv6.*#net.ipv6.conf.default.disable_ipv6=1#g" /etc/sysctl.conf

sed -i "s#^net.ipv6.conf.lo.disable_ipv6.*#net.ipv6.conf.lo.disable_ipv6=1#g" /etc/sysctl.conf

sed -i "s#^net.ipv6.conf.all.forwarding.*#net.ipv6.conf.all.forwarding=1#g" /etc/sysctl.conf

# The setting may not exist yet, so append it

sudo bash -c "echo 'net.ipv4.ip_forward = 1' >> /etc/sysctl.conf"

sudo bash -c "echo 'net.bridge.bridge-nf-call-ip6tables = 1' >> /etc/sysctl.conf"

sudo bash -c "echo 'net.bridge.bridge-nf-call-iptables = 1' >> /etc/sysctl.conf"

sudo bash -c "echo 'net.ipv6.conf.all.disable_ipv6 = 1' >> /etc/sysctl.conf"

sudo bash -c "echo 'net.ipv6.conf.default.disable_ipv6 = 1' >> /etc/sysctl.conf"

sudo bash -c "echo 'net.ipv6.conf.lo.disable_ipv6 = 1' >> /etc/sysctl.conf"

sudo bash -c "echo 'net.ipv6.conf.all.forwarding = 1' >> /etc/sysctl.conf"

# Apply the settings

sudo sysctl -p

# Configure the Kubernetes yum repository

sudo bash -c "cat > /etc/yum.repos.d/kubernetes.repo" << EOF

[kubernetes]

name=Kubernetes

baseurl=http://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64

enabled=1

gpgcheck=0

repo_gpgcheck=0

gpgkey=http://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg

http://mirrors.aliyun.com/kubernetes/yum/doc/rpm-package-key.gpg

EOF

# Remove old versions

sudo yum remove -y kubelet kubeadm kubectl

# Install kubelet, kubeadm and kubectl

# Replace ${1} with the Kubernetes version number, for example 1.17.2

# yum install -y kubelet-${1} kubeadm-${1} kubectl-${1}

sudo yum install -y kubelet-1.18.9 kubeadm-1.18.9 kubectl-1.18.9

# Change the Docker cgroup driver to systemd

# # In /usr/lib/systemd/system/docker.service, change the line ExecStart=/usr/bin/dockerd -H fd:// --containerd=/run/containerd/containerd.sock

# # to ExecStart=/usr/bin/dockerd -H fd:// --containerd=/run/containerd/containerd.sock --exec-opt native.cgroupdriver=systemd

# Without this change, you may see the following warning when adding worker nodes

# [WARNING IsDockerSystemdCheck]: detected "cgroupfs" as the Docker cgroup driver. The recommended driver is "systemd".

# Please follow the guide at https://kubernetes.io/docs/setup/cri/

sudo sed -i "s#^ExecStart=/usr/bin/dockerd.*#ExecStart=/usr/bin/dockerd -H fd:// --containerd=/run/containerd/containerd.sock --exec-opt native.cgroupdriver=systemd#g" /usr/lib/systemd/system/docker.service

# Configure the Docker registry mirror to make image downloads faster and more reliable

# If your access to https://hub.docker.com is fast and stable, you can skip this step

curl -sSL https://kuboard.cn/install-script/set_mirror.sh | sh -s ${REGISTRY_MIRROR}

# Restart Docker and start kubelet

sudo systemctl daemon-reload

sudo systemctl restart docker

sudo systemctl enable kubelet && sudo systemctl start kubelet

docker version
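The script above first rewrites existing sysctl keys with sed and then appends every key unconditionally, so /etc/sysctl.conf can end up with duplicate lines when the script is run more than once. A possible idempotent alternative is sketched below; set_sysctl is a hypothetical helper, and it relies on GNU sed's -i option, which is what CentOS 7 ships.

```shell
# Set "key = value" in a sysctl-style file: rewrite the line if the key is
# already present, otherwise append it. Running it twice leaves a single line.
set_sysctl() {
  local key="$1" value="$2" file="$3"
  local pattern
  # Escape the dots in the key so they match literally in the regular expressions.
  pattern=$(printf '%s' "$key" | sed 's/\./\\./g')
  if grep -Eq "^[[:space:]]*${pattern}[[:space:]]*=" "$file"; then
    sed -i -E "s#^[[:space:]]*${pattern}[[:space:]]*=.*#${key} = ${value}#" "$file"
  else
    printf '%s = %s\n' "$key" "$value" >> "$file"
  fi
}

# Usage (as root):
#   set_sysctl net.ipv4.ip_forward 1 /etc/sysctl.conf
#   set_sysctl net.bridge.bridge-nf-call-iptables 1 /etc/sysctl.conf
#   sysctl -p
```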

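4. Initialize the master node

About the environment variables used during initialization:

- APISERVER_NAME must not be the hostname of the master

- APISERVER_NAME may contain only lowercase letters, digits and dots; it must not contain hyphens

- The network segment used for POD_SUBNET must not overlap with the segment of the master or worker nodes. The value is a CIDR; if you are not yet familiar with CIDR notation, simply run export POD_SUBNET=10.100.0.1/16 unchanged

# Run on the master node only

# Replace x.x.x.x with the internal IP of the master node

# export only takes effect in the current shell session; if you continue the installation in a new shell window, run these export commands again

export MASTER_IP=x.x.x.x

# Replace apiserver.demo with the DNS name you want

export APISERVER_NAME=apiserver.demo

# The network segment for Kubernetes pods; it is created by Kubernetes after installation and does not exist in your physical network beforehand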

export POD_SUBNET=10.100.0.1/16

sudo bash -c "echo '${MASTER_IP} ${APISERVER_NAME}' >> /etc/hosts"
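The constraints on APISERVER_NAME and POD_SUBNET listed at the start of this step can be checked before running kubeadm. The sketch below uses hypothetical helper names and handles IPv4 only: two CIDR blocks overlap exactly when they agree on the network bits of the shorter of the two prefixes.

```shell
# Return 0 if $1 is a usable APISERVER_NAME: non-empty, different from the
# master hostname $2, and made up only of lowercase letters, digits and dots.
valid_apiserver_name() {
  local name="$1" host="$2"
  [ -n "$name" ] || return 1
  [ "$name" != "$host" ] || return 1
  case "$name" in
    *[!abcdefghijklmnopqrstuvwxyz0123456789.]*) return 1 ;;
  esac
  return 0
}

# Convert a dotted IPv4 address to a 32-bit integer.
ip_to_int() {
  local old_ifs="$IFS"
  IFS=.
  set -- $1
  IFS="$old_ifs"
  echo $(( ($1 << 24) | ($2 << 16) | ($3 << 8) | $4 ))
}

# Return 0 if the two IPv4 CIDR blocks $1 and $2 overlap.
cidr_overlap() {
  local ip1="${1%/*}" len1="${1#*/}" ip2="${2%/*}" len2="${2#*/}"
  local len mask n1 n2
  len=$(( len1 < len2 ? len1 : len2 ))
  mask=$(( len == 0 ? 0 : (0xFFFFFFFF << (32 - len)) & 0xFFFFFFFF ))
  n1=$(ip_to_int "$ip1")
  n2=$(ip_to_int "$ip2")
  [ $(( n1 & mask )) -eq $(( n2 & mask )) ]
}

# Usage, with 172.21.0.0/20 standing in for the node network:
#   valid_apiserver_name "$APISERVER_NAME" "$(hostname)" || echo "bad APISERVER_NAME"
#   cidr_overlap "$POD_SUBNET" 172.21.0.0/20 && echo "POD_SUBNET overlaps the node network"
```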

calico-3.13.1.yaml

---

# Source: calico/templates/calico-config.yaml

# This ConfigMap is used to configure a self-hosted Calico installation.

kind: ConfigMap

apiVersion: v1

metadata:

name: calico-config

namespace: kube-system

data:

# Typha is disabled.

typha_service_name: "none"

# Configure the backend to use.

calico_backend: "bird"

# Configure the MTU to use

veth_mtu: "1440"

# The CNI network configuration to install on each node. The special

# values in this config will be automatically populated.

cni_network_config: |-

{

"name": "k8s-pod-network",

"cniVersion": "0.3.1",

"plugins": [

{

"type": "calico",

"log_level": "info",

"datastore_type": "kubernetes",

"nodename": "__KUBERNETES_NODE_NAME__",

"mtu": __CNI_MTU__,

"ipam": {

"type": "calico-ipam"

},

"policy": {

"type": "k8s"

},

"kubernetes": {

"kubeconfig": "__KUBECONFIG_FILEPATH__"

}

},

{

"type": "portmap",

"snat": true,

"capabilities": {"portMappings": true}

},

{

"type": "bandwidth",

"capabilities": {"bandwidth": true}

}

]

}

---

# Source: calico/templates/kdd-crds.yaml

apiVersion: apiextensions.k8s.io/v1beta1

kind: CustomResourceDefinition

metadata:

name: bgpconfigurations.crd.projectcalico.org

spec:

scope: Cluster

group: crd.projectcalico.org

version: v1

names:

kind: BGPConfiguration

plural: bgpconfigurations

singular: bgpconfiguration

---

apiVersion: apiextensions.k8s.io/v1beta1

kind: CustomResourceDefinition

metadata:

name: bgppeers.crd.projectcalico.org

spec:

scope: Cluster

group: crd.projectcalico.org

version: v1

names:

kind: BGPPeer

plural: bgppeers

singular: bgppeer

---

apiVersion: apiextensions.k8s.io/v1beta1

kind: CustomResourceDefinition

metadata:

name: blockaffinities.crd.projectcalico.org

spec:

scope: Cluster

group: crd.projectcalico.org

version: v1

names:

kind: BlockAffinity

plural: blockaffinities

singular: blockaffinity

---

apiVersion: apiextensions.k8s.io/v1beta1

kind: CustomResourceDefinition

metadata:

name: clusterinformations.crd.projectcalico.org

spec:

scope: Cluster

group: crd.projectcalico.org

version: v1

names:

kind: ClusterInformation

plural: clusterinformations

singular: clusterinformation

---

apiVersion: apiextensions.k8s.io/v1beta1

kind: CustomResourceDefinition

metadata:

name: felixconfigurations.crd.projectcalico.org

spec:

scope: Cluster

group: crd.projectcalico.org

version: v1

names:

kind: FelixConfiguration

plural: felixconfigurations

singular: felixconfiguration

---

apiVersion: apiextensions.k8s.io/v1beta1

kind: CustomResourceDefinition

metadata:

name: globalnetworkpolicies.crd.projectcalico.org

spec:

scope: Cluster

group: crd.projectcalico.org

version: v1

names:

kind: GlobalNetworkPolicy

plural: globalnetworkpolicies

singular: globalnetworkpolicy

---

apiVersion: apiextensions.k8s.io/v1beta1

kind: CustomResourceDefinition

metadata:

name: globalnetworksets.crd.projectcalico.org

spec:

scope: Cluster

group: crd.projectcalico.org

version: v1

names:

kind: GlobalNetworkSet

plural: globalnetworksets

singular: globalnetworkset

---

apiVersion: apiextensions.k8s.io/v1beta1

kind: CustomResourceDefinition

metadata:

name: hostendpoints.crd.projectcalico.org

spec:

scope: Cluster

group: crd.projectcalico.org

version: v1

names:

kind: HostEndpoint

plural: hostendpoints

singular: hostendpoint

---

apiVersion: apiextensions.k8s.io/v1beta1

kind: CustomResourceDefinition

metadata:

name: ipamblocks.crd.projectcalico.org

spec:

scope: Cluster

group: crd.projectcalico.org

version: v1

names:

kind: IPAMBlock

plural: ipamblocks

singular: ipamblock

---

apiVersion: apiextensions.k8s.io/v1beta1

kind: CustomResourceDefinition

metadata:

name: ipamconfigs.crd.projectcalico.org

spec:

scope: Cluster

group: crd.projectcalico.org

version: v1

names:

kind: IPAMConfig

plural: ipamconfigs

singular: ipamconfig

---

apiVersion: apiextensions.k8s.io/v1beta1

kind: CustomResourceDefinition

metadata:

name: ipamhandles.crd.projectcalico.org

spec:

scope: Cluster

group: crd.projectcalico.org

version: v1

names:

kind: IPAMHandle

plural: ipamhandles

singular: ipamhandle

---

apiVersion: apiextensions.k8s.io/v1beta1

kind: CustomResourceDefinition

metadata:

name: ippools.crd.projectcalico.org

spec:

scope: Cluster

group: crd.projectcalico.org

version: v1

names:

kind: IPPool

plural: ippools

singular: ippool

---

apiVersion: apiextensions.k8s.io/v1beta1

kind: CustomResourceDefinition

metadata:

name: networkpolicies.crd.projectcalico.org

spec:

scope: Namespaced

group: crd.projectcalico.org

version: v1

names:

kind: NetworkPolicy

plural: networkpolicies

singular: networkpolicy

---

apiVersion: apiextensions.k8s.io/v1beta1

kind: CustomResourceDefinition

metadata:

name: networksets.crd.projectcalico.org

spec:

scope: Namespaced

group: crd.projectcalico.org

version: v1

names:

kind: NetworkSet

plural: networksets

singular: networkset

---

---

# Source: calico/templates/rbac.yaml

# Include a clusterrole for the kube-controllers component,

# and bind it to the calico-kube-controllers serviceaccount.

kind: ClusterRole

apiVersion: rbac.authorization.k8s.io/v1

metadata:

name: calico-kube-controllers

rules:

# Nodes are watched to monitor for deletions.

- apiGroups: [""]

resources:

- nodes

verbs:

- watch

- list

- get

# Pods are queried to check for existence.

- apiGroups: [""]

resources:

- pods

verbs:

- get

# IPAM resources are manipulated when nodes are deleted.

- apiGroups: ["crd.projectcalico.org"]

resources:

- ippools

verbs:

- list

- apiGroups: ["crd.projectcalico.org"]

resources:

- blockaffinities

- ipamblocks

- ipamhandles

verbs:

- get

- list

- create

- update

- delete

# Needs access to update clusterinformations.

- apiGroups: ["crd.projectcalico.org"]

resources:

- clusterinformations

verbs:

- get

- create

- update

---

kind: ClusterRoleBinding

apiVersion: rbac.authorization.k8s.io/v1

metadata:

name: calico-kube-controllers

roleRef:

apiGroup: rbac.authorization.k8s.io

kind: ClusterRole

name: calico-kube-controllers

subjects:

- kind: ServiceAccount

name: calico-kube-controllers

namespace: kube-system

---

# Include a clusterrole for the calico-node DaemonSet,

# and bind it to the calico-node serviceaccount.

kind: ClusterRole

apiVersion: rbac.authorization.k8s.io/v1

metadata:

name: calico-node

rules:

# The CNI plugin needs to get pods, nodes, and namespaces.

- apiGroups: [""]

resources:

- pods

- nodes

- namespaces

verbs:

- get

- apiGroups: [""]

resources:

- endpoints

- services

verbs:

# Used to discover service IPs for advertisement.

- watch

- list

# Used to discover Typhas.

- get

# Pod CIDR auto-detection on kubeadm needs access to config maps.

- apiGroups: [""]

resources:

- configmaps

verbs:

- get

- apiGroups: [""]

resources:

- nodes/status

verbs:

# Needed for clearing NodeNetworkUnavailable flag.

- patch

# Calico stores some configuration information in node annotations.

- update

# Watch for changes to Kubernetes NetworkPolicies.

- apiGroups: ["networking.k8s.io"]

resources:

- networkpolicies

verbs:

- watch

- list

# Used by Calico for policy information.

- apiGroups: [""]

resources:

- pods

- namespaces

- serviceaccounts

verbs:

- list

- watch

# The CNI plugin patches pods/status.

- apiGroups: [""]

resources:

- pods/status

verbs:

- patch

# Calico monitors various CRDs for config.

- apiGroups: ["crd.projectcalico.org"]

resources:

- globalfelixconfigs

- felixconfigurations

- bgppeers

- globalbgpconfigs

- bgpconfigurations

- ippools

- ipamblocks

- globalnetworkpolicies

- globalnetworksets

- networkpolicies

- networksets

- clusterinformations

- hostendpoints

- blockaffinities

verbs:

- get

- list

- watch

# Calico must create and update some CRDs on startup.

- apiGroups: ["crd.projectcalico.org"]

resources:

- ippools

- felixconfigurations

- clusterinformations

verbs:

- create

- update

# Calico stores some configuration information on the node.

- apiGroups: [""]

resources:

- nodes

verbs:

- get

- list

- watch

# These permissions are only required for upgrade from v2.6, and can

# be removed after upgrade or on fresh installations.

- apiGroups: ["crd.projectcalico.org"]

resources:

- bgpconfigurations

- bgppeers

verbs:

- create

- update

# These permissions are required for Calico CNI to perform IPAM allocations.

- apiGroups: ["crd.projectcalico.org"]

resources:

- blockaffinities

- ipamblocks

- ipamhandles

verbs:

- get

- list

- create

- update

- delete

- apiGroups: ["crd.projectcalico.org"]

resources:

- ipamconfigs

verbs:

- get

# Block affinities must also be watchable by confd for route aggregation.

- apiGroups: ["crd.projectcalico.org"]

resources:

- blockaffinities

verbs:

- watch

# The Calico IPAM migration needs to get daemonsets. These permissions can be

# removed if not upgrading from an installation using host-local IPAM.

- apiGroups: ["apps"]

resources:

- daemonsets

verbs:

- get

---

apiVersion: rbac.authorization.k8s.io/v1

kind: ClusterRoleBinding

metadata:

name: calico-node

roleRef:

apiGroup: rbac.authorization.k8s.io

kind: ClusterRole

name: calico-node

subjects:

- kind: ServiceAccount

name: calico-node

namespace: kube-system

---

# Source: calico/templates/calico-node.yaml

# This manifest installs the calico-node container, as well

# as the CNI plugins and network config on

# each master and worker node in a Kubernetes cluster.

kind: DaemonSet

apiVersion: apps/v1

metadata:

name: calico-node

namespace: kube-system

labels:

k8s-app: calico-node

spec:

selector:

matchLabels:

k8s-app: calico-node

updateStrategy:

type: RollingUpdate

rollingUpdate:

maxUnavailable: 1

template:

metadata:

labels:

k8s-app: calico-node

annotations:

# This, along with the CriticalAddonsOnly toleration below,

# marks the pod as a critical add-on, ensuring it gets

# priority scheduling and that its resources are reserved

# if it ever gets evicted.

scheduler.alpha.kubernetes.io/critical-pod: ''

spec:

nodeSelector:

kubernetes.io/os: linux

hostNetwork: true

tolerations:

# Make sure calico-node gets scheduled on all nodes.

- effect: NoSchedule

operator: Exists

# Mark the pod as a critical add-on for rescheduling.

- key: CriticalAddonsOnly

operator: Exists

- effect: NoExecute

operator: Exists

serviceAccountName: calico-node

# Minimize downtime during a rolling upgrade or deletion; tell Kubernetes to do a "force

# deletion": https://kubernetes.io/docs/concepts/workloads/pods/pod/#termination-of-pods.

terminationGracePeriodSeconds: 0

priorityClassName: system-node-critical

initContainers:

# This container performs upgrade from host-local IPAM to calico-ipam.

# It can be deleted if this is a fresh installation, or if you have already

# upgraded to use calico-ipam.

- name: upgrade-ipam

image: calico/cni:v3.13.1

command: ["/opt/cni/bin/calico-ipam", "-upgrade"]

env:

- name: KUBERNETES_NODE_NAME

valueFrom:

fieldRef:

fieldPath: spec.nodeName

- name: CALICO_NETWORKING_BACKEND

valueFrom:

configMapKeyRef:

name: calico-config

key: calico_backend

volumeMounts:

- mountPath: /var/lib/cni/networks

name: host-local-net-dir

- mountPath: /host/opt/cni/bin

name: cni-bin-dir

securityContext:

privileged: true

# This container installs the CNI binaries

# and CNI network config file on each node.

- name: install-cni

image: calico/cni:v3.13.1

command: ["/install-cni.sh"]

env:

# Name of the CNI config file to create.

- name: CNI_CONF_NAME

value: "10-calico.conflist"

# The CNI network config to install on each node.

- name: CNI_NETWORK_CONFIG

valueFrom:

configMapKeyRef:

name: calico-config

key: cni_network_config

# Set the hostname based on the k8s node name.

- name: KUBERNETES_NODE_NAME

valueFrom:

fieldRef:

fieldPath: spec.nodeName

# CNI MTU Config variable

- name: CNI_MTU

valueFrom:

configMapKeyRef:

name: calico-config

key: veth_mtu

# Prevents the container from sleeping forever.

- name: SLEEP

value: "false"

volumeMounts:

- mountPath: /host/opt/cni/bin

name: cni-bin-dir

- mountPath: /host/etc/cni/net.d

name: cni-net-dir

securityContext:

privileged: true

# Adds a Flex Volume Driver that creates a per-pod Unix Domain Socket to allow Dikastes

# to communicate with Felix over the Policy Sync API.

- name: flexvol-driver

image: calico/pod2daemon-flexvol:v3.13.1

volumeMounts:

- name: flexvol-driver-host

mountPath: /host/driver

securityContext:

privileged: true

containers:

# Runs calico-node container on each Kubernetes node. This

# container programs network policy and routes on each

# host.

- name: calico-node

image: calico/node:v3.13.1

env:

# Use Kubernetes API as the backing datastore.

- name: DATASTORE_TYPE

value: "kubernetes"

# Wait for the datastore.

- name: WAIT_FOR_DATASTORE

value: "true"

# Set based on the k8s node name.

- name: NODENAME

valueFrom:

fieldRef:

fieldPath: spec.nodeName

# Choose the backend to use.

- name: CALICO_NETWORKING_BACKEND

valueFrom:

configMapKeyRef:

name: calico-config

key: calico_backend

# Cluster type to identify the deployment type

- name: CLUSTER_TYPE

value: "k8s,bgp"

- name: IP_AUTODETECTION_METHOD

value: "interface=ens.*"

# Auto-detect the BGP IP address.

- name: IP

value: "autodetect"

# Enable IPIP

- name: CALICO_IPV4POOL_IPIP

value: "Always"

# Set MTU for tunnel device used if ipip is enabled

- name: FELIX_IPINIPMTU

valueFrom:

configMapKeyRef:

name: calico-config

key: veth_mtu

# The default IPv4 pool to create on startup if none exists. Pod IPs will be

# chosen from this range. Changing this value after installation will have

# no effect. This should fall within `--cluster-cidr`.

# - name: CALICO_IPV4POOL_CIDR

# value: "192.168.0.0/16"

# Disable file logging so `kubectl logs` works.

- name: CALICO_DISABLE_FILE_LOGGING

value: "true"

# Set Felix endpoint to host default action to ACCEPT.

- name: FELIX_DEFAULTENDPOINTTOHOSTACTION

value: "ACCEPT"

# Disable IPv6 on Kubernetes.

- name: FELIX_IPV6SUPPORT

value: "false"

# Set Felix logging to "info"

- name: FELIX_LOGSEVERITYSCREEN

value: "info"

- name: FELIX_HEALTHENABLED

value: "true"

securityContext:

privileged: true

resources:

requests:

cpu: 250m

livenessProbe:

exec:

command:

- /bin/calico-node

- -felix-live

- -bird-live

periodSeconds: 10

initialDelaySeconds: 10

failureThreshold: 6

readinessProbe:

exec:

command:

- /bin/calico-node

- -felix-ready

- -bird-ready

periodSeconds: 10

volumeMounts:

- mountPath: /lib/modules

name: lib-modules

readOnly: true

- mountPath: /run/xtables.lock

name: xtables-lock

readOnly: false

- mountPath: /var/run/calico

name: var-run-calico

readOnly: false

- mountPath: /var/lib/calico

name: var-lib-calico

readOnly: false

- name: policysync

mountPath: /var/run/nodeagent

volumes:

# Used by calico-node.

- name: lib-modules

hostPath:

path: /lib/modules

- name: var-run-calico

hostPath:

path: /var/run/calico

- name: var-lib-calico

hostPath:

path: /var/lib/calico

- name: xtables-lock

hostPath:

path: /run/xtables.lock

type: FileOrCreate

# Used to install CNI.

- name: cni-bin-dir

hostPath:

path: /opt/cni/bin

- name: cni-net-dir

hostPath:

path: /etc/cni/net.d

# Mount in the directory for host-local IPAM allocations. This is

# used when upgrading from host-local to calico-ipam, and can be removed

# if not using the upgrade-ipam init container.

- name: host-local-net-dir

hostPath:

path: /var/lib/cni/networks

# Used to create per-pod Unix Domain Sockets

- name: policysync

hostPath:

type: DirectoryOrCreate

path: /var/run/nodeagent

# Used to install Flex Volume Driver

- name: flexvol-driver-host

hostPath:

type: DirectoryOrCreate

path: /usr/libexec/kubernetes/kubelet-plugins/volume/exec/nodeagent~uds

---

apiVersion: v1

kind: ServiceAccount

metadata:

name: calico-node

namespace: kube-system

---

# Source: calico/templates/calico-kube-controllers.yaml

# See https://github.com/projectcalico/kube-controllers

apiVersion: apps/v1

kind: Deployment

metadata:

name: calico-kube-controllers

namespace: kube-system

labels:

k8s-app: calico-kube-controllers

spec:

# The controllers can only have a single active instance.

replicas: 1

selector:

matchLabels:

k8s-app: calico-kube-controllers

strategy:

type: Recreate

template:

metadata:

name: calico-kube-controllers

namespace: kube-system

labels:

k8s-app: calico-kube-controllers

annotations:

scheduler.alpha.kubernetes.io/critical-pod: ''

spec:

nodeSelector:

kubernetes.io/os: linux

tolerations:

# Mark the pod as a critical add-on for rescheduling.

- key: CriticalAddonsOnly

operator: Exists

- key: node-role.kubernetes.io/master

effect: NoSchedule

serviceAccountName: calico-kube-controllers

priorityClassName: system-cluster-critical

containers:

- name: calico-kube-controllers

image: calico/kube-controllers:v3.13.1

env:

# Choose which controllers to run.

- name: ENABLED_CONTROLLERS

value: node

- name: DATASTORE_TYPE

value: kubernetes

readinessProbe:

exec:

command:

- /usr/bin/check-status

- -r

---

apiVersion: v1

kind: ServiceAccount

metadata:

name: calico-kube-controllers

namespace: kube-system

---

# Source: calico/templates/calico-etcd-secrets.yaml

---

# Source: calico/templates/calico-typha.yaml

---

# Source: calico/templates/configure-canal.yaml

master_run.sh

#!/bin/bash

# Run on the master node only

# Abort the script on error

set -e

if [ ${#POD_SUBNET} -eq 0 ] || [ ${#APISERVER_NAME} -eq 0 ]; then

echo -e "\033[31;1mMake sure the environment variables POD_SUBNET and APISERVER_NAME are set \033[0m"

echo "Current POD_SUBNET=$POD_SUBNET"

echo "Current APISERVER_NAME=$APISERVER_NAME"

exit 1

fi

# Full list of configuration options: https://godoc.org/k8s.io/kubernetes/cmd/kubeadm/app/apis/kubeadm/v1beta2

rm -f ./kubeadm-config.yaml

sudo bash -c "cat > ./kubeadm-config.yaml" <<EOF

apiVersion: kubeadm.k8s.io/v1beta2

kind: ClusterConfiguration

kubernetesVersion: v1.18.9

imageRepository: registry.aliyuncs.com/k8sxio

controlPlaneEndpoint: "${APISERVER_NAME}:6443"

networking:

serviceSubnet: "10.96.0.0/16"

podSubnet: "${POD_SUBNET}"

dnsDomain: "cluster.local"

EOF

# kubeadm init

# Depending on your server's network speed, this takes 3 to 10 minutes

sudo kubeadm init --config=kubeadm-config.yaml --upload-certs

# Configure kubectl

sudo rm -rf /root/.kube/

sudo mkdir /root/.kube/

sudo cp -i /etc/kubernetes/admin.conf /root/.kube/config

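# Install the calico network plugin

# Reference: https://docs.projectcalico.org/v3.13/getting-started/kubernetes/self-managed-onprem/onpremises

echo "Installing calico-3.13.1"

# Either download the manifest or use the calico-3.13.1.yaml shown above, saved next to this script.

# The downloaded manifest has problems on hosts with multiple network interfaces, so choose according to your setup.

# Delete the local file only when downloading a fresh copy; otherwise kubectl apply has nothing to apply:

# sudo rm -f calico-3.13.1.yaml

# wget https://kuboard.cn/install-script/calico/calico-3.13.1.yaml

sudo kubectl apply -f calico-3.13.1.yaml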

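Check the result of the master initialization:

# Run on the master node only

# Run the following command and wait 3 to 10 minutes until all pods are in the Running state

sudo watch kubectl get pod -n kube-system -o wide

# Check the result of the master node initialization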

sudo kubectl get nodes -o wide
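Instead of watching the pod list by eye, the STATUS column can be counted in a script. The helper below is a hypothetical sketch that assumes the default table layout of kubectl get pod -n kube-system, where STATUS is the third column; with -A the namespace column comes first and the column index would have to change.

```shell
# Count pods whose STATUS is neither "Running" nor "Completed",
# reading `kubectl get pod -n kube-system` table output (with header) from stdin.
count_not_ready() {
  awk 'NR > 1 && $3 != "Running" && $3 != "Completed" { n++ } END { print n + 0 }'
}

# Usage on the master node: poll until every pod has settled.
#   while [ "$(sudo kubectl get pod -n kube-system | count_not_ready)" -gt 0 ]; do
#     sleep 10
#   done
```

kubectl also has a built-in alternative, kubectl wait --for=condition=Ready pod --all -n kube-system --timeout=600s, which may be simpler when no custom logic is needed.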

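1. Initialize the worker nodes: obtain the join command

Run on the master node:

# Run on the master node only

sudo kubeadm token create --print-join-command

Validity period

By default a token created this way is valid for 24 hours (kubeadm's default TTL); during that time you can use it to join any number of worker nodes.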

2. Initialize the workers

Run on every worker node:

# Run on the worker nodes only

# Replace x.x.x.x with the internal IP of the master node

export MASTER_IP=x.x.x.x

# Replace apiserver.demo with the APISERVER_NAME used when initializing the master node

export APISERVER_NAME=apiserver.demo

sudo bash -c "echo '${MASTER_IP} ${APISERVER_NAME}' >> /etc/hosts"

# Replace the next line with the output of the kubeadm token create command on the master node

kubeadm join apiserver.demo:6443 --token mpfjma.4vjjg8flqihor4vt --discovery-token-ca-cert-hash sha256:6f7a8e40a810323672de5eee6f4d19aa2dbdb38411845a1bf5dd63485c43d303
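Appending to /etc/hosts with echo adds a new line on every run, which matters if the worker setup is repeated. A possible idempotent variant is sketched below; add_host_entry is a hypothetical helper that appends the mapping only when the name is not already present on a non-comment line.

```shell
# Append "ip name" to a hosts-style file unless the name is already mapped there.
add_host_entry() {
  local ip="$1" name="$2" file="$3"
  # Look for the name as an exact field (columns 2 and later) on non-comment lines.
  if awk -v n="$name" '$1 !~ /^#/ { for (i = 2; i <= NF; i++) if ($i == n) found = 1 } END { exit !found }' "$file"; then
    return 0
  fi
  printf '%s %s\n' "$ip" "$name" >> "$file"
}

# Usage (as root):
#   add_host_entry "$MASTER_IP" "$APISERVER_NAME" /etc/hosts
```

Note that this sketch does not update an existing entry whose IP has changed; that case still needs a manual edit.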

5. Check the initialization result

Run on the master node:

# Run on the master node only

sudo kubectl get nodes -o wide

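Notes:

1. Calico reports an error on startup

# kubectl describe pod calico-node-jj7sn -n kube-system

Warning Unhealthy 3m12s kubelet Readiness probe failed: calico/node is not ready: BIRD is not ready: BGP not established with 10.100.1.1,10.100.2.1

2021-12-20 03:06:59.963 [INFO][146] health.go 156: Number of node(s) with BGP peering established = 0

Fix:

- Adjust how the Calico network plugin discovers the network interface by changing the value of IP_AUTODETECTION_METHOD. The official YAML file does not configure the IP detection method, so it defaults to first-found; this can register an address from an unusable network as the node IP, which breaks the node-to-node mesh. Switching to the can-reach or interface method fixes this: can-reach, for example, selects the correct address by trying to reach the IP of a node that is already Ready.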

Add the following two lines to calico.yaml:

- name: IP_AUTODETECTION_METHOD
  value: "interface=ens.*" # adjust ens to the prefix of your actual network interface name; regular expressions are supported

The result looks like this:

# Cluster type to identify the deployment type
- name: CLUSTER_TYPE
  value: "k8s,bgp"
- name: IP_AUTODETECTION_METHOD
  value: "interface=ens.*"
# Auto-detect the BGP IP address.
- name: IP
  value: "autodetect"
# Enable IPIP
- name: CALICO_IPV4POOL_IPIP
  value: "Always"

Re-apply the manifest for the change to take effect:

sudo kubectl apply -f calico-3.13.1.yaml

Check the result:

sudo kubectl get pod -o wide -A
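The interface=... value has to match the interface names actually present on the nodes (the ip address output earlier in this article shows eth0, while the manifest uses ens.*). As a rough sketch, the hypothetical helper below derives the value from an interface name by keeping everything before the first digit; whether that prefix is specific enough for your hosts is an assumption you should verify.

```shell
# Build an IP_AUTODETECTION_METHOD value from an interface name by stripping
# everything from the first digit onward, e.g. ens192 -> interface=ens.*
autodetect_value() {
  local iface="$1"
  local prefix="${iface%%[0-9]*}"
  printf 'interface=%s.*\n' "$prefix"
}

# Usage: pass the default interface of the node.
#   autodetect_value eth0
```

The can-reach method mentioned above (can-reach=DESTINATION in Calico's documentation) avoids the dependency on interface names altogether, which can be preferable when nodes use different naming schemes.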

Default Kuboard username and password

Kuboard: 146.11.1.1:80

Username: admin

Password: Kuboard123

Example pod deployment templates

Deploying the pod service (query)

Deployment:

#Deployment
apiVersion: apps/v1
kind: Deployment
metadata:
  name: query-deploy
  namespace: leotest
  labels:
    name: query-deploy
spec:
  replicas: 1
  selector:
    matchLabels:
      name: query-deploy-pod
  template:
    metadata:
      labels:
        name: query-deploy-pod
    spec:
      terminationGracePeriodSeconds: 1
      containers:
      - name: "query"
        image: query:latest
        imagePullPolicy: IfNotPresent
        ports:
        - containerPort: 5000
        volumeMounts:
        - name: query-volume
          mountPath: /var/opt
        command: ["python","/home/run.py"]
        env:
        # Pass the host node's IP to the container as the MY_NODE_IP environment variable
        - name: MY_NODE_IP
          valueFrom:
            fieldRef:
              fieldPath: status.hostIP
      volumes:
      - name: "query-volume"
        hostPath:
          path: /var/opt/query-project

Service:

#Service
apiVersion: v1
kind: Service
metadata:
  name: static
  namespace: leotest
  labels:
    name: query-static
spec:
  selector:
    name: query-deploy-pod
  sessionAffinity: ClientIP
  type: NodePort
  ports:
  - name: "tcp"
    port: 5000
    targetPort: 5000
    nodePort: 31000

Deploying the import pod

#Deployment
apiVersion: apps/v1
kind: Deployment
metadata:
  name: import-deploy
  namespace: leotest
  labels:
    name: cimport-deploy
spec:
  replicas: 2
  selector:
    matchLabels:
      name: import-deploy-pod
  template:
    metadata:
      labels:
        name: import-deploy-pod
    spec:
      terminationGracePeriodSeconds: 1
      nodeSelector:
        import: "true"
      containers:
      - name: "import"
        image: import:latest
        imagePullPolicy: IfNotPresent
        ports:
        - containerPort: 9000
        volumeMounts:
        - name: import-volume
          mountPath: /var/opt
        env:
        - name: MY_NODE_IP
          valueFrom:
            fieldRef:
              fieldPath: status.hostIP
        #command: [ "sh","-c","env >/etc/default/locale&& cat" ]
        command: [ "sh","-c" ]
        #args: [ "env >>/etc/default/locale;cat" ]
        args: ["env >> /etc/default/locale;/etc/init.d/cron start;while true;do echo hello;sleep 30;done;"]
        #args: ["env >> /etc/default/locale;sleep 61;cat;"]
      volumes:
      - name: "import-volume"
        hostPath:
          path: /var/opt/import-project

1. Kubernetes pod fails with CrashLoopBackOff

This is usually caused by a malformed command. To run several commands, separate them with ; rather than &&.

# Using the top command

command: ["top","-b"]

or

command: ["top"]

args: ["-b"]

# Using a shell loop to keep the container from restarting

command: ["/bin/sh"]

args: ["-c","while true;do echo hello;sleep 1;done"]

or

command: ["/bin/sh","-c","while true;do echo hello;sleep 1;done"]
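One plausible reason for the advice about ; and && is how the shell treats a failing step: with && the rest of the chain is skipped, so a long-running final command never starts and the container's main process exits. The short demonstration below uses false as a stand-in for a setup step that fails.

```shell
# With ";" the second command runs regardless of the first command's exit status.
with_semi=$(sh -c 'false; echo started')

# With "&&" the second command runs only if the first one succeeded,
# so nothing is printed here; "|| true" keeps this demo script going.
with_and=$(sh -c 'false && echo started') || true

echo "with ';'  : '${with_semi}'"
echo "with '&&' : '${with_and}'"
```

The first line of output shows started and the second shows an empty string. In a pod whose last command is a keep-alive loop, the && form would therefore exit immediately whenever an earlier step fails.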

2、docker中crontab无法获取系统环境变量

/etc/init.d/cron start用于启动crontab服务,但这样启动的crontab服务中配置的定时命令是没有Dockerfile中设置的环境变量的。因此还需要在这之前执行env >> /etc/default/locale,这样Dockerfile中通过ENV设置的环境变量,在crontab中就可以正常读取了。

#!/bin/bash

set -x

# 保存环境变量,开启crontab服务

env >> /etc/default/locale

/etc/init.d/cron start

1、2、皆在pod模板中引用

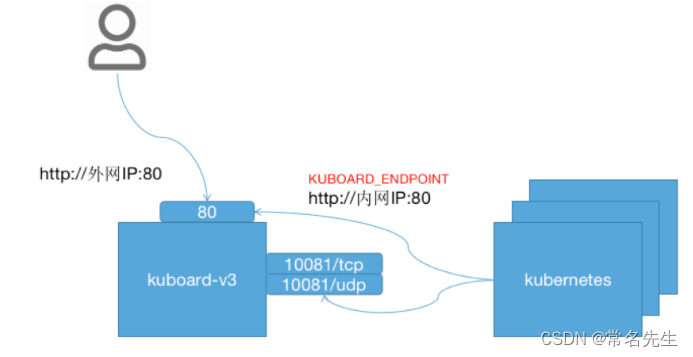

kuboard

sudo docker run -d \

--restart=unless-stopped \

--name=kuboard \

-p 80:80/tcp \

-p 10081:10081/tcp \

-e KUBOARD_ENDPOINT="http://<internal-IP>:80" \

-e KUBOARD_AGENT_SERVER_TCP_PORT="10081" \

-v /root/kuboard-data:/data \

eipwork/kuboard:v3

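# The image swr.cn-east-2.myhuaweicloud.com/kuboard/kuboard:v3 can be used instead for a faster download.

# Do not use 127.0.0.1 or localhost as the internal IP.

# Kuboard does not need to be in the same network segment as K8S; the Kuboard Agent can even reach the Kuboard Server through a proxy.

Enter http://your-host-ip:80 in a browser to open the Kuboard v3.x UI. Log in with:

- Username: admin

- Password: Kuboard123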

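Notes:

1. Adding the --upload-certs flag to kubeadm init temporarily uploads the control-plane certificates to a Secret in the cluster. Note that this Secret expires automatically after 2 hours.

2. To let a non-root user run kubectl, run the following commands, which are also part of the kubeadm init output: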

mkdir -p $HOME/.kube

sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

sudo chown $(id -u):$(id -g) $HOME/.kube/config

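3. Randomly generated certificate key at init time

This command prints a secure, randomly generated certificate key that can be used with the "init" command.

You can also run kubeadm init --upload-certs without specifying a certificate key; the command then generates and prints one for you.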
