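Scope: audio glitches caused by performance problems on the path from the app to audioserver.

Producer/consumer model

At its core, a glitch means that a producer failed to deliver data in time. Glitches are hard to debug because audio data is handed over many times, and a consumer is often a producer as well. The deep-buffer thread, for example, is a consumer with respect to an AudioTrack but a producer with respect to the HAL. Consumers also differ in what they do when data runs short. Some must keep consuming, so they insert a buffer of zeros when data is missing; others simply stop consuming and resume once data is available. Android generally uses the following models: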

App-driven write():

Callback mechanism:

FastMixer mechanism:

Duplicating mechanism

Secondary output mechanism

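Possible causes of glitches:

- In write mode, the render thread delivers data late. (This is rare: the producer generates data quickly, the shared buffer is usually full, and the app is usually blocked in obtainBuffer. Some apps, however, write data on their own fixed period, and glitches can appear if that timing is handled badly.)

- In callback mode, the callback thread delivers data late. (Also rare, because by design the callback thread should not be blocked by the app. It does happen, though, and an example follows later.)

- The native thread delivers data late. (Either a performance problem where the thread is not scheduled in time, or the app runs a time-consuming operation on the native thread, which blocks data production.)

- The callback thread calls back too slowly, so the native thread fills the app's internal buffer and then overwrites it. (This kind of glitch is common in screen recording.)

- The output thread has a scheduling problem and does not get to run.

This article analyzes the causes of the first four cases and the corresponding systrace captures. Before looking at specific problems, we need to understand how producers and consumers behave in Android.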

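Consumer behavior

An output thread mainly faces the three situations below. We first walk through the threadLoop source, then analyze the behavior in each situation.

- No active track

- There is an active track, but it does not have enough data

- There are active tracks, and at least one of them has enough data

The analysis below focuses on the deep-buffer thread; for direct and fast outputs only the important differences are described.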

bool AudioFlinger::PlaybackThread::threadLoop()

{

mStandbyTimeNs = systemTime();

processConfigEvents_l();

for (;;) {

// step1: handle standby logic

if ((mActiveTracks.isEmpty() && systemTime() > mStandbyTimeNs) || isSuspended()) {

if (mActiveTracks.isEmpty() && mConfigEvents.isEmpty()) {

mWaitWorkCV.wait(mLock);

mMixerStatus = MIXER_IDLE;

mMixerStatusIgnoringFastTracks = MIXER_IDLE;

mBytesWritten = 0;

mBytesRemaining = 0;

checkSilentMode_l();

mStandbyTimeNs = systemTime() + mStandbyDelayNs;

mSleepTimeUs = mIdleSleepTimeUs;

if (mType == MIXER) {

sleepTimeShift = 0;

}

continue;

}

}

//step2 prepareTracks

mMixerStatus = prepareTracks_l(&tracksToRemove);

//step3: no active tracks, onIdle

if (mMixerStatus == MIXER_IDLE && !mActiveTracks.size()) {

onIdleMixer();

}

//step4: send metadata that has changed

updateMetadata_l();

if (mBytesRemaining == 0) {

if (mMixerStatus == MIXER_TRACKS_READY) {

// step5: previous data fully written and at least one track has data, so mix

threadLoop_mix();

} else if ((mMixerStatus != MIXER_DRAIN_TRACK)

&& (mMixerStatus != MIXER_DRAIN_ALL)) {

//step6: data is not ready, update the sleep time

threadLoop_sleepTime();

if (mSleepTimeUs == 0) {

mCurrentWriteLength = mSinkBufferSize;

}

}//end if mixerStatus

mBytesRemaining = mCurrentWriteLength;

}

if (!waitingAsyncCallback()) {

if (mSleepTimeUs == 0) {

if (mBytesRemaining) {

//step7: write data to the HAL

ret = threadLoop_write();

mBytesRemaining -= ret;

} else if ((mMixerStatus == MIXER_DRAIN_TRACK) ||

(mMixerStatus == MIXER_DRAIN_ALL)) {

threadLoop_drain();

}

{

//step8: throttle logic

}

} else {

//step9 sleep

if (!mSignalPending && mConfigEvents.isEmpty() && !exitPending()) {

mWaitWorkCV.waitRelative(mLock, microseconds((nsecs_t)mSleepTimeUs));

}

}//end if (mSleepTimeUs == 0)

}

//step10: remove inactive tracks

threadLoop_removeTracks(tracksToRemove);

tracksToRemove.clear();

}//end for

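step1: If there are no active tracks and the standby timeout has expired, call standby on the lower layer and then go to sleep.

step2: Call prepareTracks_l(). This function adds the tracks that should take part in mixing to the AudioMixer and returns the mixer state.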

enum mixer_state {

MIXER_IDLE, // no active tracks

MIXER_TRACKS_ENABLED, // at least one active track, but no track has any data ready

MIXER_TRACKS_READY, // at least one active track, and at least one track has data

MIXER_DRAIN_TRACK, // drain currently playing track

MIXER_DRAIN_ALL, // fully drain the hardware

// standby mode does not have an enum value

// suspend by audio policy manager is orthogonal to mixer state

};

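MIXER_IDLE: there are no active tracks. This is also the state right after leaving standby.

MIXER_TRACKS_ENABLED: there is at least one active track, but not enough data. A track is added to the active track list as soon as the client calls AudioTrack.start().

MIXER_TRACKS_READY: there is at least one active track and it has enough data.

Condition for a track to count as ready: on a MIXER-type thread, the track needs at least as much data as the mixer thread's mix buffer holds. The nRdy counter shown in systrace is also updated here: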

// prepareTracks_l() must be called with ThreadBase::mLock held

AudioFlinger::PlaybackThread::mixer_state AudioFlinger::MixerThread::prepareTracks_l(

Vector< sp<Track> > *tracksToRemove)

{

mixer_state mixerStatus = MIXER_IDLE;

size_t count = mActiveTracks.size();

for (size_t i=0 ; i<count ; i++) {

const sp<Track> t = mActiveTracks[i];

Track* const track = t.get();

if (track->isFastTrack()) {

...

++fastTracks;

}

desiredFrames = sourceFramesNeededWithTimestretch(

sampleRate, mNormalFrameCount, mSampleRate, playbackRate.mSpeed);

// TODO: ONLY USED FOR LEGACY RESAMPLERS, remove when they are removed.

// add frames already consumed but not yet released by the resampler

// because mAudioTrackServerProxy->framesReady() will include these frames

desiredFrames += mAudioMixer->getUnreleasedFrames(trackId);

uint32_t minFrames = 1;

if ((track->sharedBuffer() == 0) && !track->isStopped() && !track->isPausing() &&

(mMixerStatusIgnoringFastTracks == MIXER_TRACKS_READY)) {

minFrames = desiredFrames;

}

size_t framesReady = track->framesReady();

if (ATRACE_ENABLED()) {

// I wish we had formatted trace names

std::string traceName("nRdy");

traceName += std::to_string(trackId);

ATRACE_INT(traceName.c_str(), framesReady);

}

if ((framesReady >= minFrames) && track->isReady() &&

!track->isPaused() && !track->isTerminated())

{

ALOGVV("track(%d) s=%08x [OK] on thread %p", trackId, cblk->mServer, this);

mixedTracks++;

if (mMixerStatusIgnoringFastTracks != MIXER_TRACKS_READY ||

mixerStatus != MIXER_TRACKS_ENABLED) {

mixerStatus = MIXER_TRACKS_READY;

}

} else {

if (framesReady < desiredFrames && !track->isStopped() && !track->isPaused()) {

ALOGV("track(%d) underrun, track state %s framesReady(%zu) < framesDesired(%zd)",

trackId, track->getTrackStateAsString(), framesReady, desiredFrames);

}

if ((track->sharedBuffer() != 0) || track->isTerminated() ||

track->isStopped() || track->isPaused()) {

tracksToRemove->add(track);

track->disable();

} else {

if (--(track->mRetryCount) <= 0) {

tracksToRemove->add(track);

}else if (mMixerStatusIgnoringFastTracks == MIXER_TRACKS_READY ||

mixerStatus != MIXER_TRACKS_READY) {

mixerStatus = MIXER_TRACKS_ENABLED;

}

}

}

} //end for

if ((mBytesRemaining == 0) && ((mixedTracks != 0 && mixedTracks == tracksWithEffect) ||

(mixedTracks == 0 && fastTracks > 0))) {

// FIXME as a performance optimization, should remember previous zero status

if (mMixerBufferValid) {

memset(mMixerBuffer, 0, mMixerBufferSize);

// TODO: In testing, mSinkBuffer below need not be cleared because

// the PlaybackThread::threadLoop() copies mMixerBuffer into mSinkBuffer

// after mixing.

//

// To enforce this guarantee:

// ((mixedTracks != 0 && mixedTracks == tracksWithEffect) ||

// (mixedTracks == 0 && fastTracks > 0))

// must imply MIXER_TRACKS_READY.

// Later, we may clear buffers regardless, and skip much of this logic.

}

// FIXME as a performance optimization, should remember previous zero status

memset(mSinkBuffer, 0, mNormalFrameCount * mFrameSize);

}

// if any fast tracks, then status is ready

mMixerStatusIgnoringFastTracks = mixerStatus;

if (fastTracks > 0) {

mixerStatus = MIXER_TRACKS_READY;

}

return mixerStatus;

}

When a track is first added, it normally has to wait until the shared buffer has been filled before it takes part in mixing:

bool AudioFlinger::PlaybackThread::Track::isReady() const {

if (mFillingUpStatus != FS_FILLING || isStopped() || isPausing()) {

return true;

}

if (isStopping()) {

if (framesReady() > 0) {

mFillingUpStatus = FS_FILLED;

}

return true;

}

size_t bufferSizeInFrames = mServerProxy->getBufferSizeInFrames();

// Note: mServerProxy->getStartThresholdInFrames() is clamped.

const size_t startThresholdInFrames = mServerProxy->getStartThresholdInFrames();

const size_t framesToBeReady = std::clamp( // clamp again to validate client values.

std::min(startThresholdInFrames, bufferSizeInFrames), size_t(1), mFrameCount);

if (framesReady() >= framesToBeReady || (mCblk->mFlags & CBLK_FORCEREADY)) {

ALOGV("%s(%d): consider track ready with %zu/%zu, target was %zu)",

__func__, mId, framesReady(), bufferSizeInFrames, framesToBeReady);

mFillingUpStatus = FS_FILLED;

android_atomic_and(~CBLK_FORCEREADY, &mCblk->mFlags);

return true;

}

return false;

}

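framesReady is the amount of valid data in the track. minFrames is derived from mNormalFrameCount, which typically corresponds to 40 ms for deep buffer and 20 ms for the normal mixer thread of the low-latency output.

Whether a track is ready also depends on the track state, not only on the amount of data.

If the track is stopped, minFrames = 1, so it is mixed as long as it has any data. If the track is paused, it is not mixed no matter how much data it has. In other words, AudioTrack->pause() pauses, while AudioTrack->stop() quickly plays out what is left. When an active track does not have enough data, it gets kMaxTrackRetries retries; if there is still no data after that, it is added to tracksToRemove.

Finally, if a fast track is ready but the normal tracks have no data, the sink buffer is zeroed and MIXER_TRACKS_READY is returned. An example of this can be given later.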
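The state decision described above can be condensed into a small sketch. This is a simplified model, not the AOSP implementation: `TrackView` and `evaluateTracks` are hypothetical names, `filled` stands in for `Track::isReady()`, and fast tracks, retry counts and track removal are left out. The two inner conditions mirror the ones in the `prepareTracks_l()` excerpt.

```cpp
#include <cstddef>
#include <vector>

enum MixerState { MIXER_IDLE, MIXER_TRACKS_ENABLED, MIXER_TRACKS_READY };

// Hypothetical, reduced view of a track (not the real Track class).
struct TrackView {
    std::size_t framesReady;    // valid frames in the shared buffer
    std::size_t desiredFrames;  // frames needed for one full mix period
    bool stopped;
    bool paused;
    bool filled;                // stands in for Track::isReady()
};

// previous: mixer state computed on the previous loop iteration
// (mMixerStatusIgnoringFastTracks in the excerpt).
MixerState evaluateTracks(const std::vector<TrackView>& tracks, MixerState previous) {
    MixerState state = MIXER_IDLE;
    for (const TrackView& t : tracks) {
        // A full mix period is only required once the mixer was already READY.
        std::size_t minFrames = 1;
        if (!t.stopped && previous == MIXER_TRACKS_READY) {
            minFrames = t.desiredFrames;
        }
        if (t.framesReady >= minFrames && t.filled && !t.paused) {
            if (previous != MIXER_TRACKS_READY || state != MIXER_TRACKS_ENABLED) {
                state = MIXER_TRACKS_READY;
            }
        } else if (!t.stopped && !t.paused) {
            // Underrun on an active track.
            if (previous == MIXER_TRACKS_READY || state != MIXER_TRACKS_READY) {
                state = MIXER_TRACKS_ENABLED;
            }
        }
    }
    return state;
}
```

With a single track holding 100 frames out of 1920 desired, the sketch returns MIXER_TRACKS_READY when the previous state was ENABLED (minFrames is 1), but MIXER_TRACKS_ENABLED when the previous state was READY, which is the underrun case that later leads to zeros being written.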

step3: This is reached when there are no active tracks and the standby timeout has not yet expired.

onIdleMixer();

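For threads without a FastMixer, this only prints a log line.

step4

Send metadata that has changed. The HAL can use the metadata for some of its decisions, and in systrace it marks the point where the output thread detects that an active track was added.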

/** Metadata of a playback track for an in stream. */

typedef struct playback_track_metadata {

audio_usage_t usage;

audio_content_type_t content_type;

float gain; // Normalized linear volume. 0=silence, 1=0dbfs...

} playback_track_metadata_t;

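Before step5 and step6, two variables need an introduction.

mBytesRemaining: the amount of data not yet written to the HAL. Its initial value is mCurrentWriteLength = mSinkBufferSize, that is, the size of the mixer buffer. It is reset to that value after every mix and reduced by the amount written after every write.

mSleepTimeUs: how long the thread should sleep (0 means no sleep). A sleep time is only computed when the mixer state is not MIXER_TRACKS_READY.

step 5

If mBytesRemaining == 0, the data from the previous mix has been fully written and a new mix is needed.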

void AudioFlinger::MixerThread::threadLoop_mix()

{

// mix buffers...

mAudioMixer->process();

mCurrentWriteLength = mSinkBufferSize;

// increase sleep time progressively when application underrun condition clears.

// Only increase sleep time if the mixer is ready for two consecutive times to avoid

// that a steady state of alternating ready/not ready conditions keeps the sleep time

// such that we would underrun the audio HAL.

if ((mSleepTimeUs == 0) && (sleepTimeShift > 0)) {

sleepTimeShift--;

}

mSleepTimeUs = 0;

mStandbyTimeNs = systemTime() + mStandbyDelayNs;

//TODO: delay standby when effects have a tail

}

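This function modifies several variables:

mCurrentWriteLength: the length of data to write this time.

mSleepTimeUs: set to 0, because data that has just been mixed must be written to the HAL without sleeping.

mStandbyTimeNs: the standby deadline is pushed back.

sleepTimeShift: decremented, so the sleep time grows back step by step once the underrun condition has cleared.

One more important point in this function: inside AudioMixer->process(), the mixer thread acts as the consumer. It fetches the valid data with obtainBuffer, mixes it, and then updates the server's read index with releaseBuffer. This is covered in detail later.

The mix logic differs somewhat between thread types, for example: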

void AudioFlinger::DuplicatingThread::threadLoop_mix()

{

// mix buffers...

if (outputsReady(outputTracks)) {

mAudioMixer->process();

} else {

if (mMixerBufferValid) {

memset(mMixerBuffer, 0, mMixerBufferSize);

} else {

memset(mSinkBuffer, 0, mSinkBufferSize);

}

}

mSleepTimeUs = 0;

writeFrames = mNormalFrameCount;

mCurrentWriteLength = mSinkBufferSize;

mStandbyTimeNs = systemTime() + mStandbyDelayNs;

}

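The duplicating thread requires the threads that host its output tracks to be ready (out of standby). Otherwise it does not mix; it zeroes the sink buffer and writes that instead. This comes up again in the analysis of screen-recording glitches.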

void AudioFlinger::DirectOutputThread::threadLoop_mix()

{

size_t frameCount = mFrameCount;

int8_t *curBuf = (int8_t *)mSinkBuffer;

// output audio to hardware

while (frameCount) {

AudioBufferProvider::Buffer buffer;

buffer.frameCount = frameCount;

status_t status = mActiveTrack->getNextBuffer(&buffer);

if (status != NO_ERROR || buffer.raw == NULL) {

// no need to pad with 0 for compressed audio

if (audio_has_proportional_frames(mFormat)) {

memset(curBuf, 0, frameCount * mFrameSize);

}

break;

}

memcpy(curBuf, buffer.raw, buffer.frameCount * mFrameSize);

frameCount -= buffer.frameCount;

curBuf += buffer.frameCount * mFrameSize;

mActiveTrack->releaseBuffer(&buffer);

}

mCurrentWriteLength = curBuf - (int8_t *)mSinkBuffer;

mSleepTimeUs = 0;

mStandbyTimeNs = systemTime() + mStandbyDelayNs;

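mActiveTrack.clear();

}

A direct output can have only one active track. It does no mixing: data is fetched from the active track and copied straight into the sink buffer.

step6

If there is not enough data, the sleep time is updated to decide whether to sleep or to write zeros to the HAL. The logic differs between output thread types.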

void AudioFlinger::MixerThread::threadLoop_sleepTime()

{

// If no tracks are ready, sleep once for the duration of an output

// buffer size, then write 0s to the output

if (mSleepTimeUs == 0) {

if (mMixerStatus == MIXER_TRACKS_ENABLED) {

if (mPipeSink.get() != nullptr && mPipeSink == mNormalSink) {

} else {

mSleepTimeUs = mActiveSleepTimeUs >> sleepTimeShift;

if (mSleepTimeUs < kMinThreadSleepTimeUs) {

mSleepTimeUs = kMinThreadSleepTimeUs;

}

// reduce sleep time in case of consecutive application underruns to avoid

// starving the audio HAL. As activeSleepTimeUs() is larger than a buffer

// duration we would end up writing less data than needed by the audio HAL if

// the condition persists.

if (sleepTimeShift < kMaxThreadSleepTimeShift) {

sleepTimeShift++;

}

}

} else {

mSleepTimeUs = mIdleSleepTimeUs + mIdleTimeOffsetUs;

}

} else if (mBytesWritten != 0 || (mMixerStatus == MIXER_TRACKS_ENABLED)) {

// clear out mMixerBuffer or mSinkBuffer, to ensure buffers are cleared

// before effects processing or output.

if (mMixerBufferValid) {

memset(mMixerBuffer, 0, mMixerBufferSize);

} else {

memset(mSinkBuffer, 0, mSinkBufferSize);

}

mSleepTimeUs = 0;

ALOGV_IF(mBytesWritten == 0 && (mMixerStatus == MIXER_TRACKS_ENABLED),

"anticipated start");

}

mIdleTimeOffsetUs = 0;

// TODO add standby time extension fct of effect tail

}

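If mSleepTimeUs == 0, a new sleep time is computed, so the thread sleeps in step9. The next time this function is entered, mSleepTimeUs != 0, so (if there is an active track, or playback was paused after data had been written) the whole mixer buffer is zeroed and mSleepTimeUs is set back to 0, which makes step7 write the zeros to the HAL.

Consider one case: at the very start of playback, after the thread has been woken up but before any track is active, mSleepTimeUs is never reset to 0, so the thread keeps sleeping until a track becomes active.

How the sleep time is updated:

- With no active track, the sleep time is mIdleSleepTimeUs, which is half the duration of the mixer buffer.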

uint32_t AudioFlinger::MixerThread::idleSleepTimeUs() const

{

return (uint32_t)(((mNormalFrameCount * 1000) / mSampleRate) * 1000) / 2;

}

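- With an active track, and mNormalSink != mPipeSink:

The sleep time is mActiveSleepTimeUs shifted right by sleepTimeShift, and sleepTimeShift is incremented each time up to a maximum of 2. On a deep-buffer thread with a persistent underrun, the sleep time goes 40 ms, 20 ms, 10 ms and then stays at 10 ms.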

static const uint32_t kMaxThreadSleepTimeShift = 2;

static const uint32_t kMinThreadSleepTimeUs = 5000;

mSleepTimeUs = mActiveSleepTimeUs >> sleepTimeShift;

if (mSleepTimeUs < kMinThreadSleepTimeUs) {

mSleepTimeUs = kMinThreadSleepTimeUs;

}

// reduce sleep time in case of consecutive application underruns to avoid

// starving the audio HAL. As activeSleepTimeUs() is larger than a buffer

// duration we would end up writing less data than needed by the audio HAL if

// the condition persists.

if (sleepTimeShift < kMaxThreadSleepTimeShift) {

sleepTimeShift++;

}

}

The purpose is to shorten the sleep so that data is consumed promptly once the app writes again.
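The two sleep-time rules can be checked with a few lines of arithmetic. The sketch below reuses the constants and formulas quoted above; the function names and the 48 kHz / 1920-frame (40 ms) deep-buffer configuration are assumptions chosen for illustration, and the alternation with zero writes in the real thread loop is not modeled.

```cpp
#include <cstdint>
#include <vector>

constexpr uint32_t kMaxThreadSleepTimeShift = 2;
constexpr uint32_t kMinThreadSleepTimeUs = 5000;

// Same arithmetic as MixerThread::idleSleepTimeUs(): half a mix period, in us.
uint32_t idleSleepTimeUs(uint32_t normalFrameCount, uint32_t sampleRate) {
    return (uint32_t)(((normalFrameCount * 1000) / sampleRate) * 1000) / 2;
}

// Sleep times chosen on `count` consecutive underruns, starting with shift 0.
std::vector<uint32_t> underrunSleepTimesUs(uint32_t activeSleepTimeUs, int count) {
    std::vector<uint32_t> out;
    uint32_t shift = 0;
    for (int i = 0; i < count; ++i) {
        uint32_t sleepUs = activeSleepTimeUs >> shift;
        if (sleepUs < kMinThreadSleepTimeUs) {
            sleepUs = kMinThreadSleepTimeUs;
        }
        if (shift < kMaxThreadSleepTimeShift) {
            ++shift;  // halve the sleep again on the next underrun
        }
        out.push_back(sleepUs);
    }
    return out;
}
```

Under these assumptions, `idleSleepTimeUs(1920, 48000)` gives 20000 us, and `underrunSleepTimesUs(40000, 4)` gives 40000, 20000, 10000, 10000, matching the 40 ms, 20 ms, 10 ms progression described above.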

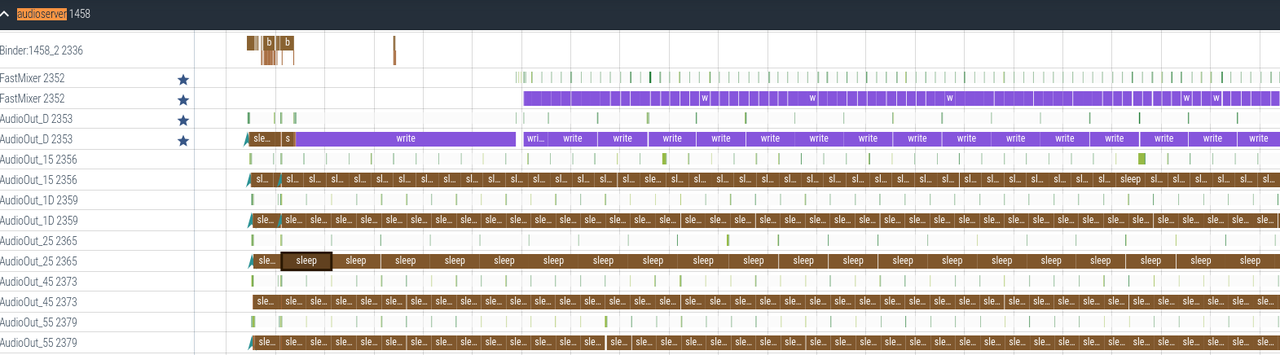

To recap, let us use what we know so far to analyze the start of playback:

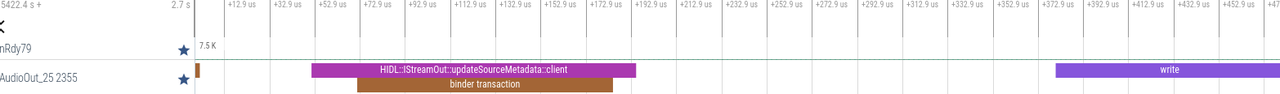

Output thread 0xb400007cd17e8d00, name AudioOut_25, tid 2355, type 0 (MIXER):

I/O handle: 37

Standby: no

2 Tracks of which 1 are active

Type Id Active Client Session Port Id S Flags Format Chn mask SRate ST Usg CT G db L dB R dB VS dB Server FrmCnt FrmRdy F Underruns Flushed Latency

85 yes 13136 337 69 A 0x000 00000001 00000003 44100 3 1 2 -30 0 0 0 00017418 7072 7072 A 0 0 305.39 t

83 no 2175 321 67 P 0x600 00000001 00000003 44100 3 1 2 -inf 0 0 0 00718F8C 45968 18168 A 0 28328 538.40 k

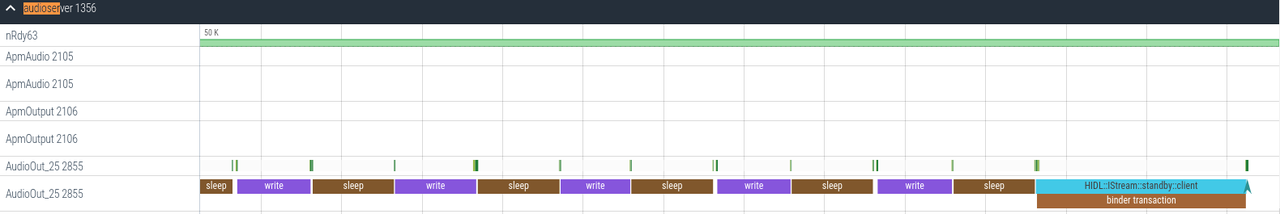

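At first there is no active track, the mixer status is IDLE, and the thread sleeps with a 20 ms period. When the track is added to the active tracks, nRdy does not yet show enough data, so the thread writes its first buffer of zeros (20 ms long) to the lower layer. The first write makes the HAL open the output path, so it takes relatively long. During that time the app fills the shared buffer, after which the mixer status becomes READY and the thread starts consuming real data.

For a VoIP call, which uses the direct path, threadLoop_sleepTime() never sets mSleepTimeUs to 0. When data is short the thread sleeps but does not write zeros. The sleep time differs slightly depending on whether there is an active track.

mActiveSleepTimeUs is normally the duration of the mix buffer.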

void AudioFlinger::DirectOutputThread::threadLoop_sleepTime()

{

// do not write to HAL when paused

if (mHwPaused || (usesHwAvSync() && mStandby)) {

mSleepTimeUs = mIdleSleepTimeUs;

return;

}

if (mMixerStatus == MIXER_TRACKS_ENABLED) {

mSleepTimeUs = mActiveSleepTimeUs;

} else {

mSleepTimeUs = mIdleSleepTimeUs;

}

// Note: In S or later, we do not write zeroes for

// linear or proportional PCM direct tracks in underrun.

}

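step 7

Write the data. The return value is the amount of data actually written.

step8

Throttle logic. It ensures that the output thread writes data no faster than twice the expected rate. This matters mostly right at the start of playback. Some apps only allocate a buffer of the minimum buffer size, while the kernel has several buffers of its own. At startup those buffers are written to the kernel very quickly, and if the app cannot deliver data fast enough, AudioFlinger pads with zeros. The write rate of the output thread is therefore limited here.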

ret = threadLoop_write();

const int64_t lastIoEndNs = systemTime();

writePeriodNs = lastIoEndNs - mLastIoEndNs;

mLastIoEndNs = lastIoEndNs;

if (mThreadThrottle

&& mMixerStatus == MIXER_TRACKS_READY // we are mixing (active tracks)

&& writePeriodNs > 0) { // we have write period info

const int32_t deltaMs = writePeriodNs / NANOS_PER_MILLISECOND;

const int32_t throttleMs = (int32_t)mHalfBufferMs - deltaMs;

if ((signed)mHalfBufferMs >= throttleMs && throttleMs > 0) {

usleep(throttleMs * 1000);

mLastIoEndNs = systemTime(); // we fetch the write end time again.

} else {

}

}
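A minimal sketch of the throttle arithmetic above, pulled out into a pure function so the numbers are easy to follow. `throttleMsAfterWrite` is a hypothetical helper, not an AudioFlinger function; it only reproduces the computation of `throttleMs` and the condition guarding `usleep()`.

```cpp
#include <cstdint>

// Returns how many milliseconds to sleep after a write, or 0 for no throttling.
// halfBufferMs:  half of the mix buffer duration (mHalfBufferMs in the excerpt).
// writePeriodNs: time elapsed between the end of the last two writes.
int32_t throttleMsAfterWrite(uint32_t halfBufferMs, int64_t writePeriodNs) {
    if (writePeriodNs <= 0) {
        return 0;  // no write period information yet
    }
    const int32_t deltaMs = (int32_t)(writePeriodNs / 1000000);
    const int32_t throttleMs = (int32_t)halfBufferMs - deltaMs;
    if ((int32_t)halfBufferMs >= throttleMs && throttleMs > 0) {
        return throttleMs;  // the write returned faster than half a buffer period
    }
    return 0;
}
```

For example, with a 40 ms mix buffer (halfBufferMs = 20), a write that completes 5 ms after the previous one is followed by a 15 ms sleep, while a write that took 25 ms is not throttled at all.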

step9

Sleep for a while.

mWaitWorkCV.waitRelative(mLock, microseconds((nsecs_t)mSleepTimeUs));

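The thread sleeps for at most mSleepTimeUs. It may be woken earlier, for example when a config event is sent to it.

step10

Remove the inactive tracks. A question to consider: if an app stops writing data because of a performance problem and its track is removed from the active tracks, how does playback resume when the app writes data again?

To answer it, look back at prepareTracks_l(): when a track is added to tracksToRemove, track->disable() is called.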

void AudioFlinger::PlaybackThread::Track::disable()

{

// TODO(b/142394888): the filling status should also be reset to filling

signalClientFlag(CBLK_DISABLED);

}

void AudioFlinger::PlaybackThread::Track::signalClientFlag(int32_t flag)

{

// FIXME should use proxy, and needs work

audio_track_cblk_t* cblk = mCblk;

android_atomic_or(flag, &cblk->mFlags);

android_atomic_release_store(0x40000000, &cblk->mFutex);

// client is not in server, so FUTEX_WAKE is needed instead of FUTEX_WAKE_PRIVATE

(void) syscall(__NR_futex, &cblk->mFutex, FUTEX_WAKE, INT_MAX);

}

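When the app writes data again, obtainBuffer returns NOT_ENOUGH_DATA, which leads to restartIfDisabled() being called to put the track back into the active tracks.

Now let us revisit the three situations mentioned earlier:

- No active track

Normally, when there is no active track the output thread enters standby and sleeps. But when an app needs to talk to AudioFlinger, it ends up calling AudioFlinger::registerClient, which wakes up all threads. Having no active track, these threads then sleep repeatedly, mIdleSleepTimeUs at a time, and write no zeros (see the threadLoop_sleepTime discussion above).

When the app pauses:

- There is an active track, but it does not have enough data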

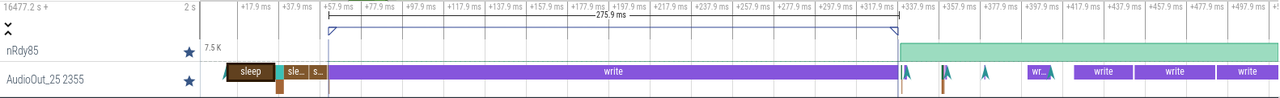

The figure below shows one of the most typical glitch cases.

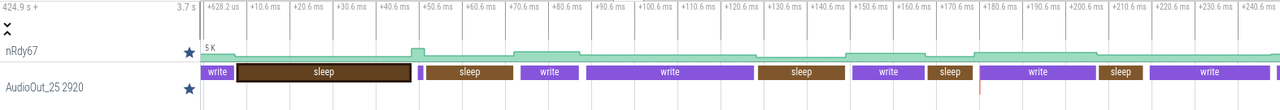

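2920 is the deep-buffer thread, and nRdy67 is the amount of data in the shared buffer of the AudioTrack with track ID 67. So which write in this figure produces the glitch? The answer: the last write in the figure, which writes zeros.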

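Producer behavior

The producer and the consumer exchange data through a shared buffer. The shared buffer has a control block in which mFront is the consumer's read pointer and mRear is the producer's write pointer. In the accompanying figure, blue represents the filled size and white the available size.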
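The relationship between the two pointers can be sketched as follows. This is an illustration under simplifying assumptions: `Fill` and `fillLevel` are hypothetical names, and the counters are modeled as free-running unsigned 32-bit values so that the subtraction stays well defined when they wrap.

```cpp
#include <cstdint>

struct Fill {
    int32_t filled;  // frames written by the producer and not yet consumed
    int32_t avail;   // frames the producer may still write
};

// front: consumer read counter, rear: producer write counter.
// Both only ever increase and are allowed to wrap around 2^32.
Fill fillLevel(uint32_t front, uint32_t rear, uint32_t bufferFrames) {
    // Unsigned subtraction gives the right distance even after wrap-around.
    int32_t filled = (int32_t)(rear - front);
    int32_t avail = (int32_t)bufferFrames - filled;
    if (avail < 0) {
        avail = 0;
    }
    return {filled, avail};
}
```

With a 1024-frame buffer, front = 0xFFFFFF00 and rear = 0x100, the filled size is 512 frames even though rear is numerically smaller than front, and 512 frames remain available to the producer.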

The shared buffer has two important parameters: frameCount (the size of the shared buffer) and notificationFrames (how often the app is asked for data).

* frameCount: Minimum size of track PCM buffer in frames. This defines the

* application's contribution to the

* latency of the track. The actual size selected by the AudioTrack could be

* larger if the requested size is not compatible with current audio HAL

* configuration. Zero means to use a default value.

* notificationFrames: The callback function is called each time notificationFrames PCM

* frames have been consumed from track input buffer by server.

* Zero means to use a default value, which is typically:

* - fast tracks: HAL buffer size, even if track frameCount is larger

* - normal tracks: 1/2 of track frameCount

* A positive value means that many frames at initial source sample rate.

* A negative value for this parameter specifies the negative of the

* requested number of notifications (sub-buffers) in the entire buffer.

* For fast tracks, the FastMixer will process one sub-buffer at a time.

* The size of each sub-buffer is determined by the HAL.

* To get "double buffering", for example, one should pass -2.

* The minimum number of sub-buffers is 1 (expressed as -1),

* and the maximum number of sub-buffers is 8 (expressed as -8).

* Negative is only permitted for fast tracks, and if frameCount is zero.

* TODO It is ugly to overload a parameter in this way depending on

* whether it is positive, negative, or zero. Consider splitting apart.

frameCount:

For a fast track, the app usually specifies frameCount = 0 and a negative notificationFrames, and lets the system compute frameCount.

wilhelm/src/android/AudioPlayer_to_android.cpp

if ((policy & AUDIO_OUTPUT_FLAG_FAST) != 0) {

// negative notificationFrames is the number of notifications (sub-buffers) per track

// buffer for details see the explanation at frameworks/av/include/media/AudioTrack.h

notificationFrames = -pAudioPlayer->mBufferQueue.mNumBuffers;

} else {

notificationFrames = 0;

}

android::AudioTrack* pat = new android::AudioTrack(

pAudioPlayer->mStreamType, // streamType

sampleRate, // sampleRate

sles_to_android_sampleFormat(df_pcm), // format

channelMask, // channel mask

0, // frameCount

policy, // flags

audioTrack_callBack_pullFromBuffQueue, // callback

(void *) pAudioPlayer, // user

notificationFrames, // see comment above

pAudioPlayer->mSessionId);

AudioTrack.cpp

mNotificationFramesReq = 0;

const uint32_t minNotificationsPerBuffer = 1;

const uint32_t maxNotificationsPerBuffer = 8;

mNotificationsPerBufferReq = min(maxNotificationsPerBuffer,

max((uint32_t) -notificationFrames, minNotificationsPerBuffer));

minFrameCount = mFrameCount * notificationsPerBuffer;

So games that create their player through the OpenSL ES interface basically all end up with frameCount = 1536 = 192 x 8.
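The 1536 figure follows from the clamp in the AudioTrack.cpp excerpt. The sketch below reproduces that arithmetic; the helper names are hypothetical, and the 192-frame HAL buffer (4 ms at 48 kHz) is an assumed configuration consistent with the numbers in the text.

```cpp
#include <algorithm>
#include <cstdint>

// Number of sub-buffers requested through a negative notificationFrames,
// clamped to [1, 8] as in the AudioTrack.cpp excerpt.
// Only meaningful for notificationFrames < 0.
uint32_t notificationsPerBuffer(int32_t notificationFrames) {
    const uint32_t minNotificationsPerBuffer = 1;
    const uint32_t maxNotificationsPerBuffer = 8;
    return std::min(maxNotificationsPerBuffer,
                    std::max((uint32_t)(-notificationFrames), minNotificationsPerBuffer));
}

// Minimum track frame count for a fast track: one HAL buffer per sub-buffer.
uint32_t fastTrackMinFrameCount(uint32_t halFrameCount, int32_t notificationFrames) {
    return halFrameCount * notificationsPerBuffer(notificationFrames);
}
```

A request for 8 sub-buffers (notificationFrames = -8) on a 192-frame HAL buffer yields 192 x 8 = 1536 frames, and a request for more than 8 sub-buffers is clamped to the same value.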

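For a non-fast track, frameCount is usually specified by the app, and the system computes a minimum: max(period count * period size / mNormalFrameCount, 2) × (mNormalFrameCount + 1 + 1)

notificationFrameCount:

For a fast track:

If the track is successfully created as a fast track, the value is the size of the output thread's mix buffer: 4 ms.

If the fast track request is denied, the value is the buffer size corresponding to 20 ms.

For a non-fast track:

When the shared buffer size is 3 times the output thread's buffer size, the value is shared buffer size / 3; otherwise it is shared buffer size / 2.

Obtaining a buffer: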

status_t ClientProxy::obtainBuffer(Buffer* buffer, const struct timespec *requested,

struct timespec *elapsed)

{

for (;;) {

int32_t front;

int32_t rear;

if (mIsOut) {

front = android_atomic_acquire_load(&cblk->u.mStreaming.mFront);

rear = cblk->u.mStreaming.mRear;

} else {

}

// write to rear, read from front

ssize_t filled = audio_utils::safe_sub_overflow(rear, front);

ssize_t adjustableSize = (ssize_t) getBufferSizeInFrames();

ssize_t avail = (mIsOut) ? adjustableSize - filled : filled;

if (avail < 0) {

avail = 0;

} else if (avail > 0) {

// 'avail' may be non-contiguous, so return only the first contiguous chunk

size_t part1;

if (mIsOut) {

// cases 1 and 2 in the figure above

rear &= mFrameCountP2 - 1;

part1 = mFrameCountP2 - rear;

} else {

}

// case 3 in the figure above

if (part1 > (size_t)avail) {

part1 = avail;

}

// part1 is larger than what the client asked for

if (part1 > buffer->mFrameCount) {

part1 = buffer->mFrameCount;

}

// the returned frame count equals part1

buffer->mFrameCount = part1;

// return the start pointer of the buffer

buffer->mRaw = part1 > 0 ?

&((char *) mBuffers)[(mIsOut ? rear : front) * mFrameSize] : NULL;

buffer->mNonContig = avail - part1;

mUnreleased = part1;

status = NO_ERROR;

break;

}

// reaching here means avail == 0

struct timespec remaining;

const struct timespec *ts;

switch (timeout) {

// do not wait

case TIMEOUT_ZERO:

status = WOULD_BLOCK;

goto end;

case TIMEOUT_INFINITE:

ts = NULL;

break;

case TIMEOUT_FINITE:

timeout = TIMEOUT_CONTINUE;

if (MAX_SEC == 0) {

ts = requested;

break;

}

FALLTHROUGH_INTENDED;

case TIMEOUT_CONTINUE:

// FIXME we do not retry if requested < 10ms? needs documentation on this state machine

if (!measure || requested->tv_sec < total.tv_sec ||

(requested->tv_sec == total.tv_sec && requested->tv_nsec <= total.tv_nsec)) {

status = TIMED_OUT;

goto end;

}

break;

default:

LOG_ALWAYS_FATAL("obtainBuffer() timeout=%d", timeout);

ts = NULL;

break;

}

int32_t old = android_atomic_and(~CBLK_FUTEX_WAKE, &cblk->mFutex);

if (!(old & CBLK_FUTEX_WAKE)) {

errno = 0;

(void) syscall(__NR_futex, &cblk->mFutex,

mClientInServer ? FUTEX_WAIT_PRIVATE : FUTEX_WAIT, old & ~CBLK_FUTEX_WAKE, ts);

status_t error = errno; // clock_gettime can affect errno

// update total elapsed time spent waiting

switch (error) {

case 0: // normal wakeup by server, or by binderDied()

case EWOULDBLOCK: // benign race condition with server

case EINTR: // wait was interrupted by signal or other spurious wakeup

case ETIMEDOUT: // time-out expired

// FIXME these error/non-0 status are being dropped

break;

default:

status = error;

ALOGE("%s unexpected error %s", __func__, strerror(status));

goto end;

}

}

}

end:

if (status != NO_ERROR) {

buffer->mFrameCount = 0;

buffer->mRaw = NULL;

buffer->mNonContig = 0;

mUnreleased = 0;

}

if (elapsed != NULL) {

*elapsed = total;

}

if (requested == NULL) {

requested = &kNonBlocking;

}

return status;

}

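buffer is an in/out parameter. Its mFrameCount field means:

On input: the requested buffer size

On output: the size of the returned buffer

Client-side logic:

- Return as soon as avail > 0. When the free space is not contiguous (case 2 in the figure above), only the contiguous part is returned, and the size of the remainder is recorded in mNonContig:

buffer->mFrameCount = part1;

buffer->mNonContig = avail - part1;

- If avail == 0, there are three cases:

The client does not wait: return status = WOULD_BLOCK immediately.

The client waits for at most a fixed time: it sleeps through the __NR_futex system call with ts = requested. In this mode the app can also pass an extra elapsed parameter to measure how long obtainBuffer waited.

The client waits until buffer space becomes available: it sleeps through the __NR_futex system call with ts = NULL, which means sleeping indefinitely.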
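The contiguous-chunk computation on the client side can be sketched in isolation. This is a simplified model of the playback path only: `Chunk` and `clientChunk` are hypothetical names, the counters are taken as unsigned 32-bit values, and the waiting logic for the avail == 0 case is left out.

```cpp
#include <cstddef>
#include <cstdint>

struct Chunk {
    std::size_t offset;     // frame index in the buffer where writing starts
    std::size_t frames;     // size of the contiguous chunk handed to the client
    std::size_t nonContig;  // remaining free frames beyond the chunk
};

// frameCountP2 is the buffer size rounded up to a power of two.
Chunk clientChunk(uint32_t front, uint32_t rear, std::size_t bufferFrames,
                  std::size_t frameCountP2, std::size_t wanted) {
    const int64_t filled = (int32_t)(rear - front);
    const int64_t avail = (int64_t)bufferFrames - filled;
    if (avail <= 0) {
        return {0, 0, 0};  // buffer full: the real code waits or returns WOULD_BLOCK
    }
    const std::size_t rearIndex = rear & (frameCountP2 - 1);
    std::size_t part1 = frameCountP2 - rearIndex;  // frames up to the end of the buffer
    if (part1 > (std::size_t)avail) {
        part1 = (std::size_t)avail;
    }
    if (part1 > wanted) {
        part1 = wanted;
    }
    return {rearIndex, part1, (std::size_t)avail - part1};
}
```

With a 1024-frame buffer, front = 100 and rear = 1000, there are 124 free frames, but only 24 of them are contiguous before the end of the buffer. A request for 200 frames therefore gets 24 frames at offset 1000, with the other 100 reported as non-contiguous.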

The client-side releaseBuffer only updates mRear:

void ClientProxy::releaseBuffer(Buffer* buffer)

{

size_t stepCount = buffer->mFrameCount;

audio_track_cblk_t* cblk = mCblk;

// Both of these barriers are required

if (mIsOut) {

int32_t rear = cblk->u.mStreaming.mRear;

android_atomic_release_store(stepCount + rear, &cblk->u.mStreaming.mRear);

} else {

}

}

Next, the server side:

status_t ServerProxy::obtainBuffer(Buffer* buffer, bool ackFlush)

{

{

audio_track_cblk_t* cblk = mCblk;

// compute number of frames available to write (AudioTrack) or read (AudioRecord),

// or use previous cached value from framesReady(), with added barrier if it omits.

int32_t front;

int32_t rear;

// See notes on barriers at ClientProxy::obtainBuffer()

if (mIsOut) {

flushBufferIfNeeded(); // might modify mFront

rear = getRear();

front = cblk->u.mStreaming.mFront;

} else {

}

ssize_t filled = audio_utils::safe_sub_overflow(rear, front);

// don't allow filling pipe beyond the nominal size

size_t availToServer;

if (mIsOut) {

availToServer = filled;

mAvailToClient = mFrameCount - filled;

} else {

availToServer = mFrameCount - filled;

mAvailToClient = filled;

}

// 'availToServer' may be non-contiguous, so return only the first contiguous chunk

size_t part1;

if (mIsOut) {

front &= mFrameCountP2 - 1;

part1 = mFrameCountP2 - front;

} else {

}

// cases 1 and 2

if (part1 > availToServer) {

part1 = availToServer;

}

size_t ask = buffer->mFrameCount;

// at most the requested amount

if (part1 > ask) {

part1 = ask;

}

// is assignment redundant in some cases?

buffer->mFrameCount = part1;

buffer->mRaw = part1 > 0 ?

&((char *) mBuffers)[(mIsOut ? front : rear) * mFrameSize] : NULL;

buffer->mNonContig = availToServer - part1;

return part1 > 0 ? NO_ERROR : WOULD_BLOCK;

}

}

The server side never blocks. If there is no data it returns WOULD_BLOCK; otherwise it returns the part1 chunk.

void ServerProxy::releaseBuffer(Buffer* buffer)

{

LOG_ALWAYS_FATAL_IF(buffer == NULL);

size_t stepCount = buffer->mFrameCount;

audio_track_cblk_t* cblk = mCblk;

if (mIsOut) {

int32_t front = cblk->u.mStreaming.mFront;

android_atomic_release_store(stepCount + front, &cblk->u.mStreaming.mFront);

} else {

}

cblk->mServer += stepCount;

mReleased += stepCount;

size_t half = mFrameCount / 2;

if (half == 0) {

half = 1;

}

size_t minimum = (size_t) cblk->mMinimum;

if (minimum == 0) {

minimum = mIsOut ? half : 1;

} else if (minimum > half) {

minimum = half;

}

// FIXME AudioRecord wakeup needs to be optimized; it currently wakes up client every time

if (!mIsOut || (mAvailToClient + stepCount >= minimum)) {

ALOGV("mAvailToClient=%zu stepCount=%zu minimum=%zu", mAvailToClient, stepCount, minimum);

int32_t old = android_atomic_or(CBLK_FUTEX_WAKE, &cblk->mFutex);

if (!(old & CBLK_FUTEX_WAKE)) {

(void) syscall(__NR_futex, &cblk->mFutex,

mClientInServer ? FUTEX_WAKE_PRIVATE : FUTEX_WAKE, 1);

}

}

buffer->mFrameCount = 0;

buffer->mRaw = NULL;

buffer->mNonContig = 0;

}

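The server-side releaseBuffer updates mFront. If the total free buffer space reaches minimum, it wakes the client. Here cblk->mMinimum is set when the AudioTrack is created; it is the notificationFrames value mentioned earlier. minimum is capped at half of the shared buffer.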
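The wake-up condition can be isolated into a small predicate. This sketch covers only the playback (mIsOut) path; `shouldWakeClient` is a hypothetical helper that repeats the clamping of `minimum` and the comparison from the `ServerProxy::releaseBuffer()` excerpt.

```cpp
#include <cstddef>

// frameCount:    size of the shared buffer in frames
// cblkMinimum:   cblk->mMinimum, 0 meaning "use the default"
// availToClient: free frames before this release
// stepCount:     frames released by the server in this call
bool shouldWakeClient(std::size_t frameCount, std::size_t cblkMinimum,
                      std::size_t availToClient, std::size_t stepCount) {
    std::size_t half = frameCount / 2;
    if (half == 0) {
        half = 1;
    }
    std::size_t minimum = cblkMinimum;
    if (minimum == 0) {
        minimum = half;       // default: half of the shared buffer
    } else if (minimum > half) {
        minimum = half;       // never more than half of the shared buffer
    }
    return availToClient + stepCount >= minimum;
}
```

For a 1024-frame buffer with the default minimum (512), releasing 100 frames when 400 were already free does not wake the client, while releasing 112 frames does. The practical consequence is that a blocked writer is not woken on every consumed mix period, only when enough free space has accumulated.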

status_t AudioTrack::createTrack_l()

{

status = audioFlinger->createTrack(VALUE_OR_FATAL(input.toAidl()), response);

mNotificationFramesAct = (uint32_t)output.notificationFrameCount;

mProxy->setMinimum(mNotificationFramesAct);

}

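To summarize:

On the client side only obtainBuffer matters: it returns as soon as space is available; if the space is not contiguous it returns only the first part; when the buffer is full it either returns immediately, waits until space is available, or waits for a fixed time, depending on the arguments passed in.

On the server side only releaseBuffer matters: it wakes the client once the free space reaches minimum.

The actual flow is somewhat more complex than this. AudioTrack's write function is relatively simple: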

ssize_t AudioTrack::write(const void* buffer, size_t userSize, bool blocking)

{

size_t written = 0;

Buffer audioBuffer;

while (userSize >= mFrameSize) {

audioBuffer.frameCount = userSize / mFrameSize;

status_t err = obtainBuffer(&audioBuffer,

blocking ? &ClientProxy::kForever : &ClientProxy::kNonBlocking);

if (err < 0) {

if (written > 0) {

break;

}

if (err == TIMED_OUT || err == -EINTR) {

err = WOULD_BLOCK;

}

return ssize_t(err);

}

size_t toWrite = audioBuffer.size;

memcpy(audioBuffer.i8, buffer, toWrite);

buffer = ((const char *) buffer) + toWrite;

userSize -= toWrite;

written += toWrite;

releaseBuffer(&audioBuffer);

}

return written;

}

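Apps usually pass blocking = true, so every obtainBuffer waits until space is available and returns early only on error. Some apps, however, such as BiliBili, pass non-blocking. In that case write returns the amount of data actually written, or WOULD_BLOCK if not a single byte was written.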
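For the non-blocking case, the caller has to cope with partial writes. The sketch below shows one way an app-side loop might do that. `WriteFn` is a hypothetical stand-in for a non-blocking write call (it is not the AudioTrack API), and `writeUntilWouldBlock` is an illustrative helper.

```cpp
#include <cstddef>
#include <functional>

// Hypothetical stand-in for a non-blocking write: returns the number of bytes
// accepted, or a negative value when nothing could be written.
using WriteFn = std::function<long(const char* data, std::size_t size)>;

// Pushes as much of [data, data + size) as the sink accepts right now and
// returns the number of bytes consumed. The caller keeps the remainder and
// retries later instead of dropping it.
std::size_t writeUntilWouldBlock(const WriteFn& write, const char* data, std::size_t size) {
    std::size_t done = 0;
    while (done < size) {
        const long n = write(data + done, size - done);
        if (n <= 0) {
            break;  // sink is full for now
        }
        done += static_cast<std::size_t>(n);
    }
    return done;
}
```

The key point is that the unwritten remainder must be resubmitted in time. If the app waits a full fixed period before retrying, the shared buffer can drain in the meantime, which is the write-mode glitch scenario listed at the beginning.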

nsecs_t AudioTrack::processAudioBuffer()

{

struct timespec timeout;

const struct timespec *requested = &ClientProxy::kForever;

// only reached when the app has explicitly set an update period or a marker position

if (ns != NS_WHENEVER) {

timeout.tv_sec = ns / 1000000000LL;

timeout.tv_nsec = ns % 1000000000LL;

ALOGV("%s(%d): timeout %ld.%03d",

__func__, mPortId, timeout.tv_sec, (int) timeout.tv_nsec / 1000000);

requested = &timeout;

}

while (mRemainingFrames > 0) {

Buffer audioBuffer;

audioBuffer.frameCount = mRemainingFrames;

size_t nonContig;

status_t err = obtainBuffer(&audioBuffer, requested, NULL, &nonContig);

LOG_ALWAYS_FATAL_IF((err != NO_ERROR) != (audioBuffer.frameCount == 0),

"%s(%d): obtainBuffer() err=%d frameCount=%zu",

__func__, mPortId, err, audioBuffer.frameCount);

// switch to non-blocking mode

requested = &ClientProxy::kNonBlocking;

size_t avail = audioBuffer.frameCount + nonContig;

ALOGV("%s(%d): obtainBuffer(%u) returned %zu = %zu + %zu err %d",

__func__, mPortId, mRemainingFrames, avail, audioBuffer.frameCount, nonContig, err);

// reaching here means no buffer space is available; retry after sleeping 1 ms

if (err != NO_ERROR) {

if (err == TIMED_OUT || err == WOULD_BLOCK || err == -EINTR ||

(isOffloaded() && (err == DEAD_OBJECT))) {

// FIXME bug 25195759

return 1000000;

}

ALOGE("%s(%d): Error %d obtaining an audio buffer, giving up.",

__func__, mPortId, err);

return NS_NEVER;

}

// reaching here means buffer space was obtained; on a fresh processAudioBuffer call, mRetryOnPartialBuffer == true

if (mRetryOnPartialBuffer && audio_has_proportional_frames(mFormat)) {

mRetryOnPartialBuffer = false;

if (avail < mRemainingFrames) {

if (ns > 0) { // account for obtain time

const nsecs_t timeNow = systemTime();

ns = max((nsecs_t)0, ns - (timeNow - timeAfterCallbacks));

}

// delayNs is first computed by the additional frames required in the buffer.

nsecs_t delayNs = framesToNanoseconds(

mRemainingFrames - avail, sampleRate, speed);

// afNs is the AudioFlinger mixer period in ns.

const nsecs_t afNs = framesToNanoseconds(mAfFrameCount, mAfSampleRate, speed);

// If the AudioTrack is double buffered based on the AudioFlinger mixer period,

// we may have a race if we wait based on the number of frames desired.

// This is a possible issue with resampling and AAudio.

//

// The granularity of audioflinger processing is one mixer period; if

// our wait time is less than one mixer period, wait at most half the period.

if (delayNs < afNs) {

delayNs = std::min(delayNs, afNs / 2);

}

// adjust our ns wait by delayNs.

if (ns < 0 /* NS_WHENEVER */ || delayNs < ns) {

ns = delayNs;

}

return ns;

}

}

// from here on, fill whatever space is available

size_t reqSize = audioBuffer.size;

if (mTransfer == TRANSFER_SYNC_NOTIF_CALLBACK) {

// when notifying client it can write more data, pass the total size that can be

// written in the next write() call, since it's not passed through the callback

audioBuffer.size += nonContig;

}

mCbf(mTransfer == TRANSFER_CALLBACK ? EVENT_MORE_DATA : EVENT_CAN_WRITE_MORE_DATA,

mUserData, &audioBuffer);

size_t writtenSize = audioBuffer.size;

// Validate on returned size

if (ssize_t(writtenSize) < 0 || writtenSize > reqSize) {

ALOGE("%s(%d): EVENT_MORE_DATA requested %zu bytes but callback returned %zd bytes",

__func__, mPortId, reqSize, ssize_t(writtenSize));

return NS_NEVER;

}

if (writtenSize == 0) {

if (mTransfer == TRANSFER_SYNC_NOTIF_CALLBACK) {

// The callback EVENT_CAN_WRITE_MORE_DATA was processed in the JNI of

// android.media.AudioTrack. The JNI is not using the callback to provide data,

// it only signals to the Java client that it can provide more data, which

// this track is read to accept now.

// The playback thread will be awaken at the next ::write()

return NS_WHENEVER;

}

// The callback is done filling buffers

// Keep this thread going to handle timed events and

// still try to get more data in intervals of WAIT_PERIOD_MS

// but don't just loop and block the CPU, so wait

// mCbf(EVENT_MORE_DATA, ...) might either

// (1) Block until it can fill the buffer, returning 0 size on EOS.

// (2) Block until it can fill the buffer, returning 0 data (silence) on EOS.

// (3) Return 0 size when no data is available, does not wait for more data.

//

// (1) and (2) occurs with AudioPlayer/AwesomePlayer; (3) occurs with NuPlayer.

// We try to compute the wait time to avoid a tight sleep-wait cycle,

// especially for case (3).

//

// The decision to support (1) and (2) affect the sizing of mRemainingFrames

// and this loop; whereas for case (3) we could simply check once with the full

// buffer size and skip the loop entirely.

nsecs_t myns;

if (audio_has_proportional_frames(mFormat)) {

// time to wait based on buffer occupancy

const nsecs_t datans = mRemainingFrames <= avail ? 0 :

framesToNanoseconds(mRemainingFrames - avail, sampleRate, speed);

// audio flinger thread buffer size (TODO: adjust for fast tracks)

// FIXME: use mAfFrameCountHAL instead of mAfFrameCount below for fast tracks.

const nsecs_t afns = framesToNanoseconds(mAfFrameCount, mAfSampleRate, speed);

// add a half the AudioFlinger buffer time to avoid soaking CPU if datans is 0.

myns = datans + (afns / 2);

} else {

// FIXME: This could ping quite a bit if the buffer isn't full.

// Note that when mState is stopping we waitStreamEnd, so it never gets here.

myns = kWaitPeriodNs;

}

if (ns > 0) { // account for obtain and callback time

const nsecs_t timeNow = systemTime();

ns = max((nsecs_t)0, ns - (timeNow - timeAfterCallbacks));

}

if (ns < 0 /* NS_WHENEVER */ || myns < ns) {

ns = myns;

}

return ns;

}

size_t releasedFrames = writtenSize / mFrameSize;

audioBuffer.frameCount = releasedFrames;

mRemainingFrames -= releasedFrames;

if (misalignment >= releasedFrames) {

misalignment -= releasedFrames;

} else {

misalignment = 0;

}

releaseBuffer(&audioBuffer);

writtenFrames += releasedFrames;

// FIXME here is where we would repeat EVENT_MORE_DATA again on same advanced buffer

// if callback doesn't like to accept the full chunk

if (writtenSize < reqSize) {

continue;

}

// There could be enough non-contiguous frames available to satisfy the remaining request

if (mRemainingFrames <= nonContig) {

continue;

}

}

mRemainingFrames = notificationFrames;

mRetryOnPartialBuffer = true;

// A lot has transpired since ns was calculated, so run again immediately and re-calculate

return 0;

}
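The two sleep intervals computed in the function above can be reproduced in isolation. The sketch below is a simplified stand-in, not the AOSP code: `framesToNs`, `partialBufferWaitNs`, and `emptyCallbackWaitNs` are hypothetical names, playback speed is fixed at 1.0 by default, the adjustment for time already spent in obtainBuffer and the callback is omitted, and the 10 ms fallback is an assumed value standing in for `kWaitPeriodNs`, whose value is not shown in the quoted source.

```cpp
#include <algorithm>
#include <cassert>
#include <cstdint>

using nsecs_t = int64_t;  // nanoseconds, like Android's nsecs_t

// Assumed stand-in for kWaitPeriodNs (value not shown in the quoted source).
const nsecs_t kAssumedWaitPeriodNs = 10 * 1000000LL;

// Playback time of `frames` frames at `sampleRate` Hz and speed `speed`, in ns.
inline nsecs_t framesToNs(int64_t frames, uint32_t sampleRate, double speed = 1.0) {
    return static_cast<nsecs_t>(frames * 1000000000.0 / (sampleRate * speed));
}

// Fresh pass with avail < remainingFrames: sleep for the missing frames, but
// at most half a mixer period when the shortfall is under one mixer period.
// ns < 0 stands for NS_WHENEVER.
inline nsecs_t partialBufferWaitNs(nsecs_t ns, int64_t remainingFrames, int64_t avail,
                                   uint32_t sampleRate, int64_t afFrameCount,
                                   uint32_t afSampleRate) {
    nsecs_t delayNs = framesToNs(remainingFrames - avail, sampleRate);
    const nsecs_t afNs = framesToNs(afFrameCount, afSampleRate);
    if (delayNs < afNs) {
        delayNs = std::min(delayNs, afNs / 2);
    }
    return (ns < 0 || delayNs < ns) ? delayNs : ns;
}

// Callback returned 0 bytes: wait for the missing frames plus half a mixer
// period (PCM-like formats), or a fixed period for other formats.
inline nsecs_t emptyCallbackWaitNs(nsecs_t ns, bool proportionalFrames,
                                   int64_t remainingFrames, int64_t avail,
                                   uint32_t sampleRate, int64_t afFrameCount,
                                   uint32_t afSampleRate) {
    nsecs_t myNs = kAssumedWaitPeriodNs;
    if (proportionalFrames) {
        const nsecs_t dataNs = remainingFrames <= avail
                ? 0 : framesToNs(remainingFrames - avail, sampleRate);
        myNs = dataNs + framesToNs(afFrameCount, afSampleRate) / 2;
    }
    return (ns < 0 || myNs < ns) ? myNs : ns;
}
```

For example, with a 1920-frame mixer period at 48 kHz (40 ms), `partialBufferWaitNs(-1, 1536, 96, 48000, 1920, 48000)` sees a shortfall of 1440 frames (30 ms) and returns 20 ms, because a wait shorter than one mixer period is capped at half the period.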

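Normally, the first obtainBuffer call in a pass uses a request of forever; after that, the request is switched to non-blocking. Pay close attention to this: when avail == 0, the client's obtainBuffer call may either wait or return immediately, depending on which request is in effect.

Also, mRemainingFrames and mRetryOnPartialBuffer are refreshed only after a full mRemainingFrames worth of data has been consumed. Note that processAudioBuffer has many return points, so seeing a processAudioBuffer slice in systrace does not mean that mRemainingFrames frames were processed in that slice.

When a fresh pass begins and the shared buffer has fewer frames available than requested, the thread sleeps for a time derived from the duration of the missing frames.

On the next pass, even if the available frames are still insufficient, the function no longer returns at this point and fills whatever is available.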
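The interaction between mRemainingFrames and mRetryOnPartialBuffer described above can be illustrated with a small model. This is a heavily simplified sketch under stated assumptions: `CallbackPassModel` and `runPass` are hypothetical names, the callback is assumed to always fill every frame it is offered, and timeouts, error handling, and the real obtainBuffer semantics are left out.

```cpp
#include <algorithm>
#include <cassert>
#include <cstdint>

// Hypothetical, simplified model of one callback-mode track.
struct CallbackPassModel {
    int64_t notificationFrames;
    int64_t remainingFrames;
    bool retryOnPartialBuffer = true;

    explicit CallbackPassModel(int64_t frames)
        : notificationFrames(frames), remainingFrames(frames) {}

    // One processAudioBuffer-like pass with `avail` writable frames.
    // Returns true only if the whole notification period was consumed.
    bool runPass(int64_t avail) {
        if (retryOnPartialBuffer) {
            retryOnPartialBuffer = false;      // only a fresh pass may back off
            if (avail < remainingFrames) {
                return false;                  // early return: sleep, nothing written
            }
        }
        const int64_t written = std::min(avail, remainingFrames);
        remainingFrames -= written;            // fill whatever space there is
        if (remainingFrames > 0) {
            return false;                      // early return: period not finished
        }
        remainingFrames = notificationFrames;  // refresh for the next period
        retryOnPartialBuffer = true;
        return true;
    }
};
```

With a 960-frame notification period and only 480 frames available each time, the first `runPass(480)` writes nothing, the second writes 480 frames, and only the third completes the period. Three slices would appear in a trace for a single period's worth of data.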

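Scenario analysis

Before analyzing a problem, first analyze the app's behavior. Use dumpsys to find the playback thread, client PID, track ID, frame count, and other key parameters, and use debuggerd to find the app's render thread or callback thread.

League of Legends (英雄联盟):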

Output thread 0xb400007ceed31ac0, name AudioOut_D, tid 2335, type 0 (MIXER):

I/O handle: 13

Standby: no

Fast tracks: sMaxFastTracks=8 activeMask=0x7

Index Active Full Partial Empty Recent Ready Written

0 yes 251 0 44 full 1536 671071104

1 yes 7 0 146 full 1536 2719104

2 yes 134 0 608 full 1536 1889664

7 Tracks of which 2 are active

Type Id Active Client Session Port Id S Flags Format Chn mask SRate ST Usg CT G db L dB R dB VS dB Server FrmCnt FrmRdy F Underruns Flushed Latency

S 67 no 16160 193 47 S 0x600 00000001 00000003 44100 2 6 4 -11 -0.35 -0.35 0 0003F000 16128 0 f 0 0 62.92 k

S 68 no 16160 201 48 S 0x600 00000001 00000003 44100 2 6 4 -11 -0.35 -0.35 0 00037200 16128 0 f 0 0 56.22 k

S 69 no 16160 209 49 S 0x600 00000001 00000003 44100 2 6 4 -11 -0.35 -0.35 0 0003B100 16128 0 f 0 0 62.61 k

F2 105 yes 18213 537 89 A 0x000 00000001 00000003 48000 3 1 0 -26 0 0 0 001CD7C0 1536 1536 f 0 0 53.00 t

F1 104 yes 18213 529 88 A 0x000 00000001 00000003 48000 3 1 0 -26 0 0 0 00297FC0 1536 1536 f 0 0 53.00 t

106 no 18213 553 90 I 0x000 00000001 00000001 16000 3 1 0 -inf 0 0 0 00000000 972 0 I 0 0 new

S 66 no 16160 185 46 S 0x600 00000001 00000003 44100 2 6 4 -11 -0.35 -0.35 0 00013B00 16128 0 f 0 0 61.75 k

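The Type column at the start of each row:

S: static track

P: patch track

F: fast track (the digit that follows is the fast track index)

There are two active fast tracks (F1 and F2) and no active normal track.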

How do we analyze the app's behavior?

adb shell debuggerd -b 18213 | grep -iE "processAudioBuffer|obtainbuffer"

"AudioTrack" sysTid=18386

#00 pc 00000000000881b4 /apex/com.android.runtime/lib64/bionic/libc.so (syscall+36) (BuildId: aec8135f170446601ecfbb3a8984afdb)

#01 pc 000000000008ca7c /apex/com.android.runtime/lib64/bionic/libc.so (__futex_wait_ex(void volatile*, bool, int, bool, timespec const*)+148) (BuildId: aec8135f170446601ecfbb3a8984afdb)

#02 pc 00000000000f1ec4 /apex/com.android.runtime/lib64/bionic/libc.so (NonPI::MutexLockWithTimeout(pthread_mutex_internal_t*, bool, timespec const*)+224) (BuildId: aec8135f170446601ecfbb3a8984afdb)

#03 pc 0000000000097c60 /apex/com.android.art/lib64/libc++.so (std::__1::mutex::lock()+12) (BuildId: 71a87721940ed09a5ab1722dc94fd181)

#04 pc 0000000000024ecc /apex/com.android.art/lib64/libartbase.so (std::__1::__function::__func<art::InitLogging(char**, void (&)(char const*))::LogdLoggerLocked, std::__1::allocator<art::InitLogging(char**, void (&)(char const*))::LogdLoggerLocked>, void (android::base::LogId, android::base::LogSeverity, char const*, char const*, unsigned int, char const*)>::operator()(android::base::LogId&&, android::base::LogSeverity&&, char const*&&, char const*&&, unsigned int&&, char const*&&)+84) (BuildId: 48e56efbc2457a65b2dc6d8f35e72c06)

#05 pc 0000000000016a50 /apex/com.android.art/lib64/libbase.so (android::base::SetLogger(std::__1::function<void (android::base::LogId, android::base::LogSeverity, char const*, char const*, unsigned int, char const*)>&&)::$_2::__invoke(__android_log_message const*)+196) (BuildId: 96895dcea0300fc102132e9762aef6bc)

#06 pc 00000000000069bc /system/lib64/liblog.so (__android_log_write_log_message+164) (BuildId: 1e8986717d5458a9de68bbea05d55866)

#07 pc 0000000000006c60 /system/lib64/liblog.so (__android_log_print+228) (BuildId: 1e8986717d5458a9de68bbea05d55866)

#08 pc 0000000000098464 /system/lib64/libaudioclient.so (android::AudioTrack::releaseBuffer(android::AudioTrack::Buffer const*)+404) (BuildId: 38c9c9d3c08f399501cc7e73994f18a3)

#09 pc 0000000000097124 /system/lib64/libaudioclient.so (android::AudioTrack::processAudioBuffer()+2764) (BuildId: 38c9c9d3c08f399501cc7e73994f18a3)

"AudioTrack" sysTid=18689

#00 pc 00000000000cbc48 /apex/com.android.runtime/lib64/bionic/libc.so (__vfprintf+684) (BuildId: aec8135f170446601ecfbb3a8984afdb)

#01 pc 00000000000eb2c8 /apex/com.android.runtime/lib64/bionic/libc.so (vsnprintf+196) (BuildId: aec8135f170446601ecfbb3a8984afdb)

#02 pc 0000000000006c38 /system/lib64/liblog.so (__android_log_print+188) (BuildId: 1e8986717d5458a9de68bbea05d55866)

#03 pc 0000000000096fc0 /system/lib64/libaudioclient.so (android::AudioTrack::processAudioBuffer()+2408) (BuildId: 38c9c9d3c08f399501cc7e73994f18a3)

#04 pc 00000000000961f0 /system/lib64/libaudioclient.so (android::AudioTrack::AudioTrackThread::threadLoop()+296) (BuildId: 38c9c9d3c08f399501cc7e73994f18a3)

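As shown, League of Legends has two active tracks that write data through the callback mechanism. The two fast tracks occupy fast track indices 1 and 2, both have a frame count of 1536, and their track IDs are 104 and 105. The track ID and the fast track index correspond to the nrdy counters in systrace.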
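The FrmCnt and SRate columns of the dump can be turned into a buffer duration, which gives a rough idea of how long a producer may stall before a full buffer drains. The helper below is a minimal sketch; `bufferDurationUs` is a hypothetical name, not an Android API.

```cpp
#include <cassert>
#include <cstdint>

// Duration of a track buffer in microseconds: frameCount / sampleRate.
inline int64_t bufferDurationUs(int64_t frameCount, uint32_t sampleRateHz) {
    return frameCount * 1000000LL / sampleRateHz;
}
```

For the two fast tracks, `bufferDurationUs(1536, 48000)` gives 32000 us, so each track buffer holds 32 ms of audio. The static tracks with 16128 frames at 44100 Hz hold about 365.7 ms.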

The following are some typical problems we have encountered, together with their systrace analysis.

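Problem type 1: in write mode, the render thread does not deliver data in time

QQ Video playback noise: systrace analysis

Problem type 4: the callback thread's callbacks are too slow

Screen recording noise: systrace analysis

Problem type 2: in callback mode, the callback thread does not deliver data in time

Honor of Kings (王者荣耀) background noise: systrace analysis

Problem type 3: the native thread does not deliver data in time

Noise when plugging or unplugging a headset during gameplay: systrace analysis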
