k8s资源调度

Scheduler调度器做为Kubernetes三大核心组件之一, 承载着整个集群资源的调度功能,其根据特定调度算法和策略,将Pod调度到最优工作节点上,从而更合理与充分的利用集群计算资源。其作用是根据特定的调度算法和策略将Pod调度到指定的计算节点(Node)上,其做为单独的程序运行,启动之后会一直监听API Server,获取PodSpec.NodeName为空的Pod,对每个Pod都会创建一个绑定。默认情况下,k8s的调度器采用扩散策略,将同一集群内部的pod对象调度到不同的Node节点,以保证资源的均衡利用。

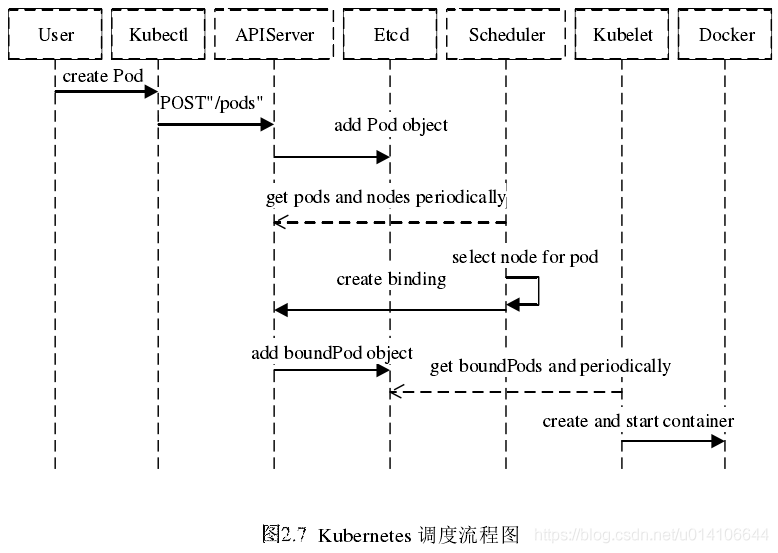

具体的调度过程,一般如下:

-

首先,客户端通过API Server的REST API/kubectl/helm创建pod/service/deployment/job等,支持类型主要为JSON/YAML/

-

接下来,API Server收到用户请求,存储到相关数据到etcd。、

-

调度器通过API Server查看未调度(bind)的Pod列表,循环遍历地为每个Pod分配节点,尝试为Pod分配节点。调度过程分为2个阶段:

- 第一阶段:预选过程,过滤节点,调度器用一组规则过滤掉不符合要求的主机。比如Pod指定了所需要的资源量,那么可用资源比Pod需要的资源量少的主机会被过滤掉。

- 第二阶段:优选过程,节点优先级打分,对第一步筛选出的符合要求的主机进行打分,在主机打分阶段,调度器会考虑一些整体优化策略,比如把容一个Replication Controller的副本分布到不同的主机上,使用最低负载的主机等。

-

选择主机:选择打分最高的节点,进行binding操作,结果存储到etcd中。

-

所选节点对于的kubelet根据调度结果执行Pod创建操作。

nodeSelector

nodeSelector是最简单的约束方式。nodeSelector是pod.spec的一个字段

通过--show-labels可以查看指定node的labels

[root@master ~]# kubectl get node node1 --show-labels

NAME STATUS ROLES AGE VERSION LABELS

node1 NotReady <none> 4d21h v1.20.0 beta.kubernetes.io/arch=amd64,beta.kubernetes.io/os=linux,kubernetes.io/arch=amd64,kubernetes.io/hostname=node1,kubernetes.io/os=linux

如果没有额外添加 nodes labels,那么看到的如上所示的默认标签。我们可以通过 kubectl label node 命令给指定 node 添加 labels:

[root@master ~]# kubectl label node node1 disktype=ssd

node/node1 labeled

[root@master ~]# kubectl get node node1 --show-labels

NAME STATUS ROLES AGE VERSION LABELS

node1 NotReady <none> 4d21h v1.20.0 beta.kubernetes.io/arch=amd64,beta.kubernetes.io/os=linux,disktype=ssd,kubernetes.io/arch=amd64,kubernetes.io/hostname=node1,kubernetes.io/os=linux

当然,你也可以通过 kubectl label node 删除指定的 labels(标签 key 接 - 号即可)

[root@master ~]# kubectl label node node1 disktype-

node/node1 labeled

[root@master ~]# kubectl get node node1 --show-labels

NAME STATUS ROLES AGE VERSION LABELS

node1 NotReady <none> 4d21h v1.20.0 beta.kubernetes.io/arch=amd64,beta.kubernetes.io/os=linux,kubernetes.io/arch=amd64,kubernetes.io/hostname=node1,kubernetes.io/os=linux

创建测试 pod 并指定 nodeSelector 选项绑定节点:

[root@master mainfest]# kubectl label node node1 disktype=ssd

node/node1 labeled

[root@master mainfest]# kubectl get nodes node1 --show-labels

NAME STATUS ROLES AGE VERSION LABELS

node1 Ready <none> 4d21h v1.20.0 beta.kubernetes.io/arch=amd64,beta.kubernetes.io/os=linux,disktype=ssd,kubernetes.io/arch=amd64,kubernetes.io/hostname=node1,kubernetes.io/os=linux

[root@master mainfest]# vim test.yaml

apiVersion: v1

kind: Pod

metadata:

name: test

labels:

env: test

spec:

containers:

- name: test

image: nginx

imagePullPolicy: IfNotPresent

nodeSelector:

disktype: ssd

查看pod调度的节点

[root@master mainfest]# kubectl get pod -o wide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

t1 1/1 Running 0 73s 10.244.1.31 node1 <none> <none>

test 1/1 Running 0 6s 10.244.1.32 node1 <none> <none>

可以看出,test这个pod被强制调度到了有disktype=ssd这个label的node上。

nodeAffinity

nodeAffinity意为node亲和性调度策略。是用于替换nodeSelector的全新调度策略。目前有两种节点亲和性表达:

- RequiredDuringSchedulingIgnoredDuringExecution:

必须满足制定的规则才可以调度pode到Node上。相当于硬限制 - PreferredDuringSchedulingIgnoreDuringExecution:

强调优先满足制定规则,调度器会尝试调度pod到Node上,但并不强求,相当于软限制。多个优先级规则还可以设置权重值,以定义执行的先后顺序。

IgnoredDuringExecution的意思是:

如果一个pod所在的节点在pod运行期间标签发生了变更,不在符合该pod的节点亲和性需求,则系统将忽略node上lable的变化,该pod能机选在该节点运行。

NodeAffinity 语法支持的操作符包括:

- In:label 的值在某个列表中

- NotIn:label 的值不在某个列表中

- Exists:某个 label 存在

- DoesNotExit:某个 label 不存在

- Gt:label 的值大于某个值

- Lt:label 的值小于某个值

nodeAffinity规则设置的注意事项如下:

- 如果同时定义了nodeSelector和nodeAffinity,name必须两个条件都得到满足,pod才能最终运行在指定的node上。

- 如果nodeAffinity指定了多个nodeSelectorTerms,那么其中一个能够匹配成功即可。

- 如果在nodeSelectorTerms中有多个matchExpressions,则一个节点必须满足所有matchExpressions才能运行该pod。

apiVersion: v1

kind: Pod

metadata:

name: test1

labels:

app: nginx

spec:

containers:

- name: test1

image: nginx

imagePullPolicy: IfNotPresent

affinity:

nodeAffinity:

requiredDuringSchedulingIgnoredDuringExecution: //硬策略

nodeSelectorTerms:

- matchExpressions:

- key: disktype

values:

- ssd

operator: In

preferredDuringSchedulingIgnoredDuringExecution: //软策略

- weight: 10

preference:

matchExpressions:

- key: name

values:

- test

operator: In

给node2打上disktype=ssd的标签

[root@master mainfest]# kubectl label node node2 disktype=ssd

node/node2 labeled

[root@master mainfest]# kubectl get node node2 --show-labels

NAME STATUS ROLES AGE VERSION LABELS

node2 Ready <none> 4d22h v1.20.0 beta.kubernetes.io/arch=amd64,beta.kubernetes.io/os=linux,disktype=ssd,kubernetes.io/arch=amd64,kubernetes.io/hostname=node2,kubernetes.io/os=linux

给node1打上name=test的label和删除name=test的label并测试查看结果

[root@master ~]# kubectl label node node1 name=test

node/node1 labeled

[root@master ~]# kubectl get node node1 --show-labels

NAME STATUS ROLES AGE VERSION LABELS

node1 Ready <none> 4d22h v1.20.0 beta.kubernetes.io/arch=amd64,beta.kubernetes.io/os=linux,disktype=ssd,kubernetes.io/arch=amd64,kubernetes.io/hostname=node1,kubernetes.io/os=linux,name=test

[root@master mainfest]# kubectl apply -f test1.yaml

pod/test1 created

[root@master mainfest]# kubectl get pod -o wide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

t1 1/1 Running 0 36m 10.244.1.31 node1 <none> <none>

test 1/1 Running 0 35m 10.244.1.32 node1 <none> <none>

test1 1/1 Running 0 3s 10.244.1.33 node1 <none> <none>

删除node1上name=test的label

[root@master mainfest]# kubectl label node node1 name-

node/node1 labeled

[root@master mainfest]# kubectl get node node1 --show-labels

NAME STATUS ROLES AGE VERSION LABELS

node1 Ready <none> 4d22h v1.20.0 beta.kubernetes.io/arch=amd64,beta.kubernetes.io/os=linux,disktype=ssd,kubernetes.io/arch=amd64,kubernetes.io/hostname=node1,kubernetes.io/os=linux

[root@master mainfest]# kubectl apply -f test1.yaml

pod/test1 created

[root@master mainfest]# kubectl get pod -o wide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

t1 1/1 Running 0 40m 10.244.1.31 node1 <none> <none>

test 1/1 Running 0 39m 10.244.1.32 node1 <none> <none>

test1 1/1 Running 0 7s 10.244.2.44 node2 <none> <none>

上面这个pod首先是要求要运行在有disktype=ssd这个label的node上,如果有多个node上都有这个label,则优先在有name=test这个label上创建

taint

使用kubeclt taint命令可以给某个node设置五点,node被设置污点之后就和pod之间存在了一种相斥的关系,可以让node拒绝pod的调度执行,甚至将node上本已存在的pod驱逐出去

Taints:避免Pod调度到特定Node上

Tolerations:允许Pod调度到持有Taints的Node上

应用场景:

- 专用节点:根据业务线将Node分组管理,希望在默认情况下不调度该节点,只有配置了污点容忍才允许分配

- 配备特殊硬件:部分Node配有SSD硬盘、GPU,希望在默认情况下不调度该节点,只有配置了污点容忍才允许分配

- 基于Taint的驱逐

#Taint(污点)

[root@master haproxy]# kubectl describe node master

Name: master.example.com

Roles: control-plane,master

Labels: beta.kubernetes.io/arch=amd64

beta.kubernetes.io/os=linux

kubernetes.io/arch=amd64

kubernetes.io/hostname=master.example.com

kubernetes.io/os=linux

node-role.kubernetes.io/control-plane=

node-role.kubernetes.io/master=

node.kubernetes.io/exclude-from-external-load-balancers=

Annotations: flannel.alpha.coreos.com/backend-data: {"VNI":1,"VtepMAC":"8e:50:ba:7a:30:2b"}

flannel.alpha.coreos.com/backend-type: vxlan

flannel.alpha.coreos.com/kube-subnet-manager: true

flannel.alpha.coreos.com/public-ip: 192.168.240.30

kubeadm.alpha.kubernetes.io/cri-socket: /var/run/dockershim.sock

node.alpha.kubernetes.io/ttl: 0

volumes.kubernetes.io/controller-managed-attach-detach: true

CreationTimestamp: Sun, 19 Dec 2021 02:41:49 -0500

Taints: node-role.kubernetes.io/master:NoSchedule #aints:避免Pod调度到特定Node上

Unschedulable: false

#Tolerations(污点容忍)

[root@master ~]# kubectl describe pod httpd1-57c7b6f7cb-sk86h

Name: httpd1-57c7b6f7cb-sk86h

Namespace: default

·····

Tolerations: node.kubernetes.io/not-ready:NoExecute op=Exists for 300s

node.kubernetes.io/unreachable:NoExecute op=Exists for 300s ##Tolerations(污点容忍)允许Pod调度到持有Taints的Node上

Events:

Type Reason Age From Message

---- ------ ---- ---- -------

Warning FailedCreatePodSandBox 12m kubelet Failed to create pod sandbox: rpc error: code = Unknown desc = failed to set up sandbox container "2126da4117ba6ce45ddff8a2e0b9de59bac65f05c7be343249d50edea2cacf37" network for pod "httpd1-57c7b6f7cb-sk86h": networkPlugin cni failed to set up pod "httpd1-57c7b6f7cb-sk86h_default" network: open /run/flannel/subnet.env: no such file or directory

Warning FailedCreatePodSandBox 12m kubelet Failed to create pod "best2001/httpd"

Normal Pulled 11m kubelet Successfully pulled image "best2001/httpd" in 16.175310708s

Normal Created 11m kubelet Created container httpd1

Normal Started 11m kubelet Started container httpd1

节点添加污点

格式: kubectl taint node [node] key=value:[effect]

例如: kubectl taint node k8s-node1 gpu=yes:NoSchedule验证: kubectl describe node k8s-node1 |grep Taint

其中[effect]可取值:

- NoSchedule :一定不能被调度

- PreferNoSchedule:尽量不要调度,非必须配置容忍

- NoExecute:不仅不会调度,还会驱逐Node上已有的Pod

添加污点容忍(tolrations)字段到Pod配置中

#添加污点disktype

[root@master haproxy]# kubectl taint node node1.example.com disktype:NoSchedule

node/node1.example.com tainted

#查看

[root@master haproxy]# kubectl describe node node1.example.com

Name: node1.example.com

Roles: <none>

Labels: beta.kubernetes.io/arch=amd64

beta.kubernetes.io/os=linux

kubernetes.io/arch=amd64

kubernetes.io/hostname=node1.example.com

kubernetes.io/os=linux

Annotations: flannel.alpha.coreos.com/backend-data: {"VNI":1,"VtepMAC":"12:9e:43:99:21:bd"}

flannel.alpha.coreos.com/backend-type: vxlan

flannel.alpha.coreos.com/kube-subnet-manager: true

flannel.alpha.coreos.com/public-ip: 192.168.240.50

kubeadm.alpha.kubernetes.io/cri-socket: /var/run/dockershim.sock

node.alpha.kubernetes.io/ttl: 0

volumes.kubernetes.io/controller-managed-attach-detach: true

CreationTimestamp: Sun, 19 Dec 2021 03:27:16 -0500

Taints: disktype:NoSchedule #污点添加成功

#测试创建一个容器

[root@master haproxy]# kubectl apply -f nginx.yml

deployment.apps/nginx1 created

service/nginx1 created

[root@master haproxy]# kubectl get pods -o wide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

nginx1-7cf8bc594f-8j8tv 1/1 Running 0 14s 10.244.1.92 node2.example.com <none> <none> #因为node1上面有污点,所以创建的容器会在node2上面跑

去掉污点:

kubectl taint node [node] key:[effect]-

[root@master haproxy]# kubectl taint node node1.example.com disktype-

node/node1.example.com untainted

[root@master haproxy]# kubectl describe node node1.example.com

Name: node1.example.com

Roles: <none>

Labels: beta.kubernetes.io/arch=amd64

beta.kubernetes.io/os=linux

kubernetes.io/arch=amd64

kubernetes.io/hostname=node1.example.com

kubernetes.io/os=linux

Annotations: flannel.alpha.coreos.com/backend-data: {"VNI":1,"VtepMAC":"12:9e:43:99:21:bd"}

flannel.alpha.coreos.com/backend-type: vxlan

flannel.alpha.coreos.com/kube-subnet-manager: true

flannel.alpha.coreos.com/public-ip: 192.168.240.50

kubeadm.alpha.kubernetes.io/cri-socket: /var/run/dockershim.sock

node.alpha.kubernetes.io/ttl: 0

volumes.kubernetes.io/controller-managed-attach-detach: true

CreationTimestamp: Sun, 19 Dec 2021 03:27:16 -0500

Taints: <none> #污点已经删除成功

Unschedulable: false

tolerations

设置了污点的Node将根据taint的effect:NoSchedule、PreferNoSchedule、NoExecute和Pod之间产生互斥的关系,Pod将在一定程度上不会被调度到Node上。

但我们可以在Pod上设置容忍 (Toleration),意思是设置了容忍的Pod将可以容忍污点的存在,可以被调度到存在污点的Node上

tolerations:

- key: name

value: test

operator: Equal

effect: NoSchedule

其中key,vaule,effect要与Node上设置的taint保持一致

operator的值为Exists将会忽略value值

tolerationSeconds 用于描述当 Pod 需要被驱逐时可以在 Pod 上继续保留运行的时间

[root@master mainfest]# kubectl taint node node2 name=test:NoSchedule

node/node2 tainted

[root@master mainfest]# kubectl describe node node2|grep -i taint

Taints: name=test:NoSchedule

[root@master mainfest]# kubectl apply -f test2.yaml

pod/test2 created

[root@master mainfest]# kubectl get pod -o wide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

t1 1/1 Running 0 6h38m 10.244.1.31 node1 <none> <none>

test 1/1 Running 0 6h37m 10.244.1.32 node1 <none> <none>

test1 1/1 Running 0 21m 10.244.2.45 node2 <none> <none>

test2 1/1 Running 0 7s 10.244.2.49 node2 <none> <none>

可以看到,虽然我们在node2上设置了污点,但因为我们在资源清单中定义了污点容忍,所以pod依旧能创建在node2上。

本文深入探讨Kubernetes资源调度机制,包括Scheduler组件的工作原理、调度流程、节点选择策略及Pod如何通过nodeSelector、nodeAffinity和taints/tolerations机制绑定到特定节点。

本文深入探讨Kubernetes资源调度机制,包括Scheduler组件的工作原理、调度流程、节点选择策略及Pod如何通过nodeSelector、nodeAffinity和taints/tolerations机制绑定到特定节点。

1833

1833

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?