Version information:

dss:1.1.1

linkis:1.1.1

hadoop:3.1.3

hive:3.1.2

spark:3.0.0

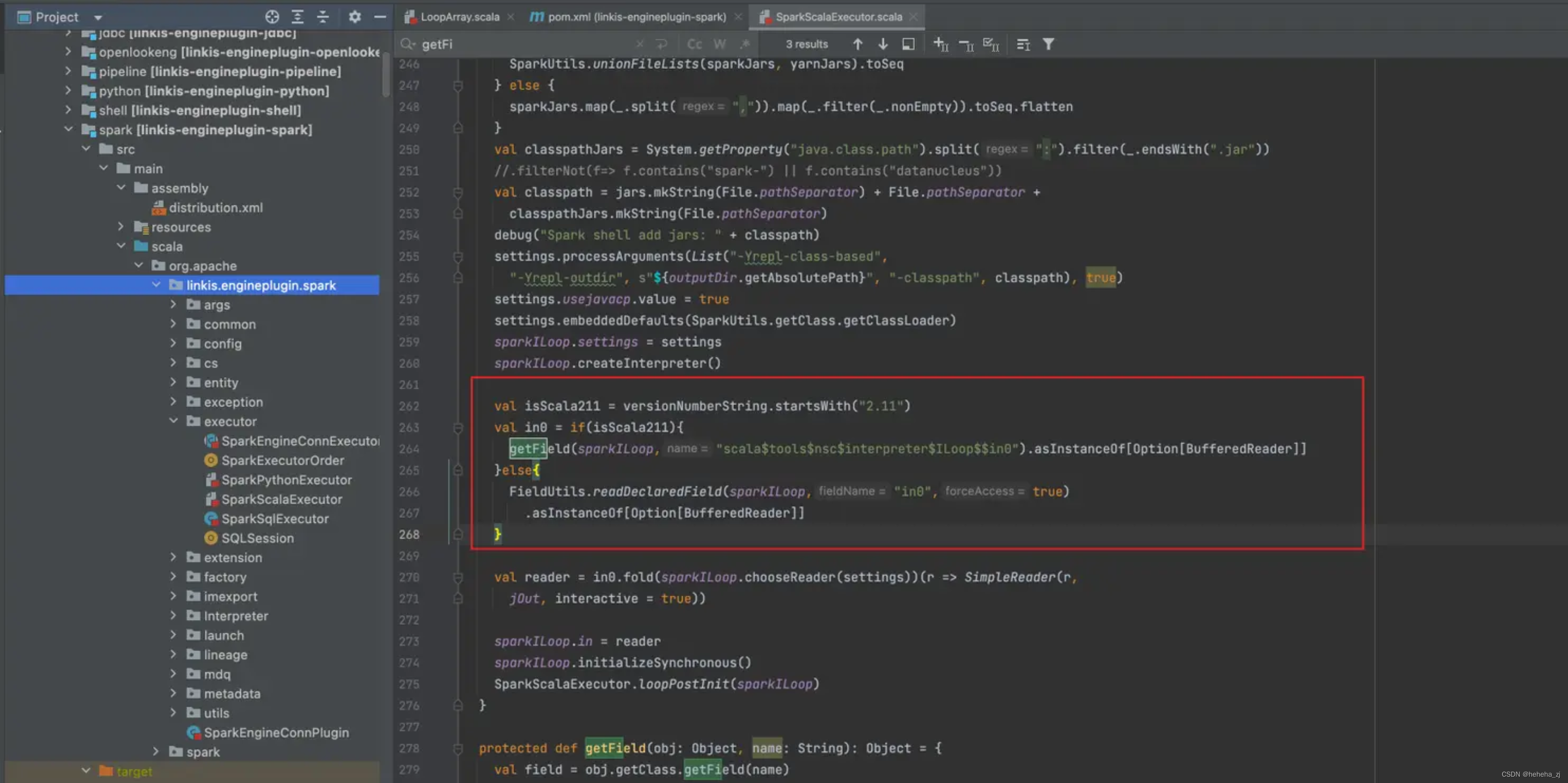

File to modify: SparkScalaExecutor.scala

The change is as follows (the part marked in red in the image above):

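    // Assumes versionNumberString comes from scala.util.Properties and
    // FieldUtils from org.apache.commons.lang3.reflect.
    // Spark 3.0.0 is built against Scala 2.12, so the Scala 2.11 field name does not apply to it.
    val isScala211 = versionNumberString.startsWith("2.11")
    val in0 = if (isScala211) {
      // Scala 2.11: look the field up by its name-mangled identifier
      getField(sparkILoop, "scala$tools$nsc$interpreter$ILoop$$in0").asInstanceOf[Option[BufferedReader]]
    } else {
      // Other Scala versions (e.g. 2.12): read the declared field in0 directly
      FieldUtils.readDeclaredField(sparkILoop, "in0", true)
        .asInstanceOf[Option[BufferedReader]]
    }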
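
Which branch of the check above runs depends on the Scala version that Spark ships with. The following is a minimal sketch for confirming that version on the server, assuming a standard Spark binary layout in which `jars/` contains a `scala-library-<version>.jar`; the function name `spark_scala_version` is hypothetical and not part of Spark or Linkis.

```shell
# Print the Scala binary version that a Spark distribution bundles,
# inferred from the scala-library jar name under <spark_home>/jars.
# Assumption: the jar is named scala-library-<major>.<minor>.<patch>.jar.
spark_scala_version() {
  spark_home="$1"
  for jar in "$spark_home"/jars/scala-library-*.jar; do
    [ -e "$jar" ] || continue                # glob did not match anything
    name=$(basename "$jar" .jar)             # e.g. scala-library-2.12.10
    version=${name#scala-library-}           # e.g. 2.12.10
    echo "$version" | cut -d. -f1,2          # keep major.minor only
    return 0
  done
  echo "no scala-library jar found under $spark_home/jars" >&2
  return 1
}
```

Calling `spark_scala_version "$SPARK_HOME"` on a Spark 3.0.0 installation should report a 2.12 release, which is why the `else` branch is the one that matters here.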

Rebuild the package, then upload it to the corresponding Linkis directory and replace the existing jar.

Jar path:

/ddhome/bin/dss_linkis/linkis/lib/linkis-engineconn-plugins/spark/dist/v3.0.0/lib
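
Before overwriting the jar it can be worth keeping a copy of the original so the change is easy to roll back. A minimal sketch, assuming the rebuilt jar has the same file name as the one already in the lib directory; `replace_jar` and the backup directory are hypothetical helpers, not part of Linkis.

```shell
# Replace a jar in a lib directory, keeping a timestamped backup of the old one.
# usage: replace_jar <new_jar> <lib_dir> <backup_dir>
# The backup is stored outside <lib_dir> so it cannot end up on the classpath.
replace_jar() {
  new_jar="$1"
  lib_dir="$2"
  backup_dir="$3"
  [ -f "$new_jar" ] || { echo "missing jar: $new_jar" >&2; return 1; }
  [ -d "$lib_dir" ] || { echo "missing dir: $lib_dir" >&2; return 1; }
  jar_name=$(basename "$new_jar")
  target="$lib_dir/$jar_name"
  if [ -f "$target" ]; then
    mkdir -p "$backup_dir" || return 1
    # Copy the current jar aside, suffixed with the current timestamp
    cp -p "$target" "$backup_dir/$jar_name.$(date +%Y%m%d%H%M%S)" || return 1
  fi
  cp "$new_jar" "$target"
}
```

With the path from this article, the second argument would be `/ddhome/bin/dss_linkis/linkis/lib/linkis-engineconn-plugins/spark/dist/v3.0.0/lib`; the first argument is wherever your build wrote the rebuilt jar.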

Restart the service:

./sbin/linkis-daemon.sh restart cg-engineplugin

Verify with the following command:

sh ./bin/linkis-cli -engineType spark-3.0.0 -codeType scala -code "val l = 1 ; print(l);" -submitUser hadoop -proxyUser hadoop
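
The restart and the verification can be chained so that the check only runs when the restart succeeded. This is a rough sketch under two assumptions that the article does not confirm: that both scripts return a non-zero exit status on failure, and that the service is ready to accept jobs as soon as the restart script returns (in practice a short wait may be needed). `restart_and_verify` is a hypothetical helper name.

```shell
# Restart the engine plugin service, then submit a trivial Scala job.
# usage: restart_and_verify <linkis_home>
# Assumption: both scripts signal failure through a non-zero exit status.
restart_and_verify() {
  linkis_home="$1"
  (
    # Run in a subshell so the caller's working directory is left unchanged
    cd "$linkis_home" || exit 1
    ./sbin/linkis-daemon.sh restart cg-engineplugin || exit 1
    sh ./bin/linkis-cli -engineType spark-3.0.0 -codeType scala \
      -code "val l = 1 ; print(l);" -submitUser hadoop -proxyUser hadoop
  )
}
```

The function returns the exit status of the last command that ran, so it can be used directly in an `if` or followed by `|| echo "verification failed"`.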

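This article describes how to modify SparkScalaExecutor.scala in a specific version environment (dss 1.1.1, linkis 1.1.1, hadoop 3.1.3, hive 3.1.2, spark 3.0.0), in particular the logic that separates the Scala 2.11 case from other Scala versions. After the change, rebuild the jar and upload it to the designated Linkis directory to replace the original jar. Then run the restart script to apply the change, and use the linkis-cli verification command to check that the update succeeded.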
