Building a DNN Architecture

a^[l]: activation (output) of layer l

w^[l], b^[l]: parameters of layer l

x^(i): the i-th training example

a^[l]_i: activation of the i-th neuron in layer l
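To make the notation concrete, here is a minimal sketch of how these symbols map onto NumPy arrays when the m training examples are stacked as columns. The layer sizes, the random data, and the seed are made up for illustration and are not values from this article.

```python
import numpy as np

rng = np.random.default_rng(0)   # hypothetical seed, only for reproducibility

n_x, n_1, m = 3, 4, 5            # made-up sizes: 3 inputs, 4 units in layer 1, 5 examples

X = rng.standard_normal((n_x, m))             # column i-1 holds the example x^(i)
W1 = rng.standard_normal((n_1, n_x)) * 0.01   # w^[1], shape (n_1, n_x)
b1 = np.zeros((n_1, 1))                       # b^[1], broadcast across the m columns

Z1 = W1 @ X + b1                 # linear part of layer 1, shape (n_1, m)
A1 = np.maximum(0, Z1)           # a^[1]: ReLU activations of layer 1

x_2 = X[:, 1]                    # x^(2): the second training example
a1_3 = A1[2, :]                  # a^[1]_3: third neuron of layer 1, for every example

print(Z1.shape, A1.shape, x_2.shape, a1_3.shape)
```

Stacking examples as columns means one matrix product evaluates a whole layer for all examples at once.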

Packages

numpy

matplotlib

dnn_utils

import numpy as np
import h5py
import matplotlib.pyplot as plt
from testCases_v2 import *
from dnn_utils_v2 import sigmoid, sigmoid_backward, relu, relu_backward

%matplotlib inline
plt.rcParams['figure.figsize'] = (5.0, 4.0)  # set default size of plots
plt.rcParams['image.interpolation'] = 'nearest'
plt.rcParams['image.cmap'] = 'gray'

%load_ext autoreload
%autoreload 2

np.random.seed(1)

Assignment Outline

Build a 2-layer NN and an L-layer NN.

1. Initialize the parameters

2. Implement forward propagation

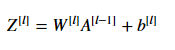

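Implement the linear part (computing Z^[l])

Implement the activation functions (ReLU/sigmoid)

Combine the previous two steps into a [LINEAR->ACTIVATION] forward step

Repeat the [LINEAR->ACTIVATION] forward step L-1 times, then use a [LINEAR->sigmoid] forward step for the final layer

3. Compute the cost function

4. Implement backward propagation

Implement the linear part of backward propagation

Compute the derivatives of the activation functions (relu_backward/sigmoid_backward)

Combine the previous two steps into a [LINEAR->ACTIVATION] backward step

Use a [LINEAR->sigmoid] backward step for the final layer, and repeat the [LINEAR->ACTIVATION] backward step L-1 times for the remaining layers

5. Update the parameters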
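Steps 3 and 5 of the outline can be sketched as follows. This is a minimal sketch under assumptions the outline does not state: the cost is taken to be binary cross-entropy (a common match for a sigmoid output), the update is plain gradient descent, and the names compute_cost, update_parameters, grads, and learning_rate, as well as the "dW1"/"db1" key convention, are illustrative.

```python
import numpy as np

def compute_cost(AL, Y):
    """Binary cross-entropy averaged over the m examples (assumed cost).

    AL: sigmoid outputs of the last layer, shape (1, m)
    Y:  0/1 labels, shape (1, m)
    """
    m = Y.shape[1]
    cost = -np.sum(Y * np.log(AL) + (1 - Y) * np.log(1 - AL)) / m
    return float(cost)

def update_parameters(parameters, grads, learning_rate):
    """One gradient-descent step; returns a new dict and leaves the input untouched.

    grads is assumed to hold "dW1", "db1", ..., "dWL", "dbL".
    """
    L = len(parameters) // 2  # each layer contributes one W and one b
    updated = {}
    for l in range(1, L + 1):
        updated["W" + str(l)] = parameters["W" + str(l)] - learning_rate * grads["dW" + str(l)]
        updated["b" + str(l)] = parameters["b" + str(l)] - learning_rate * grads["db" + str(l)]
    return updated

# Usage with made-up numbers
AL = np.array([[0.8, 0.9, 0.4]])
Y = np.array([[1, 1, 0]])
print("cost =", compute_cost(AL, Y))
```

Note that this compute_cost does not guard against AL being exactly 0 or 1, where the logarithm is undefined; clipping AL slightly away from those values is one way to handle that.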

Initialization

2-layer NN

import numpy as np

def initialize_parameters(n_x, n_h, n_y):
    '''
    input:
    n_x: input layer size
    n_h: hidden layer size
    n_y: output layer size

    output:
    parameters: dict combining W1, b1, W2, b2
        W1, b1: 1st layer parameters
            W1.shape = (n_h, n_x)
            b1.shape = (n_h, 1)
        W2, b2: 2nd layer parameters
            W2.shape = (n_y, n_h)
            b2.shape = (n_y, 1)
    '''
    np.random.seed(1)  # fixed seed so the printed values are reproducible

    # small random weights break the symmetry between units; biases start at zero
    W1 = np.random.randn(n_h, n_x) * 0.01
    W2 = np.random.randn(n_y, n_h) * 0.01
    b1 = np.zeros((n_h, 1))
    b2 = np.zeros((n_y, 1))

    assert (W1.shape == (n_h, n_x))
    assert (W2.shape == (n_y, n_h))
    assert (b1.shape == (n_h, 1))
    assert (b2.shape == (n_y, 1))

    parameters = {
        "W1": W1,
        "W2": W2,
        "b1": b1,
        "b2": b2}

    return parameters

parameters = initialize_parameters(2, 2, 1)
print("W1 = " + str(parameters["W1"]))
print("b1 = " + str(parameters["b1"]))
print("W2 = " + str(parameters["W2"]))
print("b2 = " + str(parameters["b2"]))

L-layer NN

import numpy as np

def initialize_parameters_deep(layer_dims):
    '''
    input:
    layer_dims: python list containing the dimensions of each layer in the network
        example: layer_dims = [2, 4, 1] means a 2-layer NN whose input layer has 2 units,
        hidden layer has 4 units, and output layer has 1 unit

    output:
    parameters: dict combining W1, b1, W2, b2, ..., WL, bL
        W1.shape = (n1, n0), b1.shape = (n1, 1)
        W2.shape = (n2, n1), b2.shape = (n2, 1)
        ...
        WL.shape = (nL, nL-1), bL.shape = (nL, 1)
    '''
    np.random.seed(3)
    parameters = {}
    L = len(layer_dims)  # number of entries in layer_dims, including the input layer

    for i in range(1, L):
        # layer i maps layer_dims[i-1] inputs to layer_dims[i] units
        parameters["W" + str(i)] = np.random.randn(layer_dims[i], layer_dims[i-1]) * 0.01
        parameters["b" + str(i)] = np.zeros((layer_dims[i], 1))

        assert (parameters["W" + str(i)].shape == (layer_dims[i], layer_dims[i-1]))
        assert (parameters["b" + str(i)].shape == (layer_dims[i], 1))

    return parameters

parameters = initialize_parameters_deep([5, 4, 3])
print("W1 = " + str(parameters["W1"]))
print("b1 = " + str(parameters["b1"]))
print("W2 = " + str(parameters["W2"]))
print("b2 = " + str(parameters["b2"]))

Forward Propagation
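Following the outline (a linear part, an activation, a combined [LINEAR->ACTIVATION] step repeated L-1 times, then a final [LINEAR->sigmoid] step), a forward pass could be sketched as below. The function names linear_forward, linear_activation_forward, and L_model_forward, the choice of ReLU for the hidden layers, and the cache layout are assumptions for illustration. The sigmoid and relu defined here are local stand-ins and may not match the signatures of the helpers imported from dnn_utils_v2.

```python
import numpy as np

def sigmoid(Z):
    # local stand-in, not the dnn_utils_v2 helper
    return 1.0 / (1.0 + np.exp(-Z))

def relu(Z):
    # local stand-in, not the dnn_utils_v2 helper
    return np.maximum(0, Z)

def linear_forward(A_prev, W, b):
    """Linear part of a layer: Z = W A_prev + b."""
    return W @ A_prev + b

def linear_activation_forward(A_prev, W, b, activation):
    """One [LINEAR->ACTIVATION] step; the cache keeps what a backward pass would need."""
    Z = linear_forward(A_prev, W, b)
    if activation == "sigmoid":
        A = sigmoid(Z)
    elif activation == "relu":
        A = relu(Z)
    else:
        raise ValueError("activation must be 'sigmoid' or 'relu'")
    cache = (A_prev, W, b, Z)
    return A, cache

def L_model_forward(X, parameters):
    """[LINEAR->RELU] for layers 1..L-1, then [LINEAR->sigmoid] for layer L."""
    caches = []
    A = X
    L = len(parameters) // 2  # one W and one b per layer
    for l in range(1, L):
        A, cache = linear_activation_forward(
            A, parameters["W" + str(l)], parameters["b" + str(l)], "relu")
        caches.append(cache)
    AL, cache = linear_activation_forward(
        A, parameters["W" + str(L)], parameters["b" + str(L)], "sigmoid")
    caches.append(cache)
    return AL, caches

# Usage with made-up sizes: 5 inputs, hidden layers of 4 and 3 units, 1 output, 10 examples
rng = np.random.default_rng(0)
layer_dims = [5, 4, 3, 1]
parameters = {}
for l in range(1, len(layer_dims)):
    parameters["W" + str(l)] = rng.standard_normal((layer_dims[l], layer_dims[l - 1])) * 0.01
    parameters["b" + str(l)] = np.zeros((layer_dims[l], 1))

X = rng.standard_normal((5, 10))
AL, caches = L_model_forward(X, parameters)
print(AL.shape, len(caches))  # (1, 10) 3
```

Returning a cache per layer is a design choice that keeps the intermediate values available, so a backward pass does not have to recompute them.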

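This article walks through building a deep neural network (DNN) architecture. Starting from the initial setup, it covers parameter initialization for 2-layer and L-layer networks, then the forward propagation process, in particular the linear part and the activation functions. It also covers the cost function, the backpropagation algorithm, and the parameter update step.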
