Introduction

This is one of the most frequently asked questions, and many different solutions have been offered for it. I had earlier published a little tip/trick on the subject. With the simple class shown there, it is certainly possible to maintain just one instance, but that is only part of the desired behavior. Have you noticed how Firefox keeps adding tabs to its running instance whenever you open more pages from the disk? That happens because the other instances, before closing, tell the running instance to open those files. Similar behavior can be incorporated into any application by extending the class shown in the tip/trick. The extension is a bit involved and warranted a full-fledged article.

In this day when C# on .NET is the number one choice for Windows UI programming, I don't know if this C++ class that uses the Win32 API holds any value; nonetheless, I want to share it. All the development was done on MSVC6 and tested on WinXP SP3, but I expect it to run on all Win32 platforms.

In the course of this article, I might come across as wordy and pedantic. I choose to be so because there is a lot of system programming involved with which many people might not be familiar. Yet, I don't intend to replace any book or MSDN. I'll just briefly explain the things relevant to the subject and leave the rest to MSDN or the web. If you happen to be at ease with these concepts, you may skim through.

The Usage

The class SingleInstanceGuard provides a simple and intuitive interface.

    class SingleInstanceGuard
    {
    public:
        class Exception
        {
        public:
            enum REASON
            {
                DIFFERENT_GUARDS,
                UNABLE_TO_CHECK,
                UNABLE_TO_READ,
                UNABLE_TO_WRITE,
                UNABLE_TO_ALERT,
                SHARING_WITH_SELF,
                DATA_TOO_BIG,
                OPERATION_NOT_SUPPORTED
            };
            REASON m_r;
            Exception(REASON r) : m_r(r) {}
        };

        // This is the alert message that would be sent to the
        // running instance by the other instances
        static UINT SIGM_ALERT;

        SingleInstanceGuard(const char *pID, long nSharedDataSize = -1);
        ~SingleInstanceGuard();

        void SetTargetWindow(HWND h);
        bool AlreadyRunning();

        template<class DATA>
        SingleInstanceGuard& operator<<(const DATA &d) { return Write((void*)&d, sizeof(d)); }
        SingleInstanceGuard& operator<<(const std::string &s);

        template<class DATA>
        bool operator>>(DATA &d) { return Read((void*)&d, sizeof(d)); }
        bool operator>>(std::string &s);

        void AlertTheRunningInstance();

    private:
        SingleInstanceGuard& Write(void *pData, int nSize);
        bool Read(void *pData, int nSize);
    };

The first parameter of the constructor is the ID for the guard, and you have to make sure it is unique. The rest of the interface should be largely self-explanatory. The new points of interest here are the overloaded operators and AlertTheRunningInstance(), which together let the other instances communicate with the running one. To demonstrate this, three examples are included: a console application, a Win32 application and an MFC application.

I must make it clear at the outset that any application that wishes to incorporate this behavior must be either event driven or multi-threaded. This is because the application must be capable of queuing or interrupting its jobs so that the alert message issued by other instances can be processed. Among the examples given, the Win32 and MFC ones are inherently event driven while the console one is not, so the console example is made into a multi-threaded application. Note that what the running instance does with the shared data and/or the alert message is left to the application logic. Each of these examples does something different.

I'll begin explaining the usage with the console application.

    #define TAG "05B6DEE0-D3BB-11DF-9F2A-3D55DFD72085"

    struct P { int n; char **params; };

    HANDLE hStay = NULL;

    unsigned long _stdcall Monitor(void *p);
    BOOL WINAPI Handler(DWORD d);

    int main(int n, char **params)
    {
        SetConsoleCtrlHandler(Handler, TRUE);
        hStay = CreateEvent(NULL, FALSE, FALSE, NULL);

        // pack the command line for the monitor thread, which deletes it when done
        P *p = new P;
        p->n = n;
        p->params = params;

        DWORD tid;
        CreateThread(NULL, 0, Monitor, p, 0, &tid);

        // keep the process alive until the monitor thread or the Ctrl+C handler sets the event
        WaitForSingleObject(hStay, INFINITE);
        return 0;
    }

    unsigned long _stdcall Monitor(void *p)
    {
        P *pp = (P*)p;
        SingleInstanceGuard sig(TAG);

        if (sig.AlreadyRunning())
        {
            // another instance: hand the arguments over to the running one and quit
            for (int k = 1; k < pp->n; k++)
            {
                string s = pp->params[k];
                sig << s;
            }
            sig.AlertTheRunningInstance();
            SetEvent(hStay);
            delete pp;
            return 0;
        }

        // first instance: wait for alerts posted to this thread
        MSG msg;
        while (::GetMessage(&msg, NULL, 0, 0))
        {
            if (SingleInstanceGuard::SIGM_ALERT == msg.message)
            {
                cout << "Received these from other instance: ";
                string s;
                while (sig >> s)
                    cout << s.c_str() << " ";
                cout << endl;
            }
        }
        delete pp;
        return 0;
    }

    BOOL WINAPI Handler(DWORD d)
    {
        if (d == CTRL_C_EVENT)
        {
            cout << "Press 'c' to continue and any other key to exit: ";
            char c;
            cin >> c;
            if ('c' != c) SetEvent(hStay);
            return TRUE;
        }
        return FALSE;
    }

The parameters received by main() are packed into a structure and passed to the Monitor thread as its parameter. The call to WaitForSingleObject(...) in main() keeps it from returning immediately, which would end the application. After unpacking the parameters, the Monitor thread checks whether there is a running instance. If there is, it writes the parameters into the guard, requests that the running instance be alerted, and returns. Before returning, it sets the event, which brings main() out of its wait and thereby closes the application. This is fine, since the second (or any later) instance need not run any more now that the data is shared.

If, however, there was no running instance, making this the first one, the thread enters a message loop. Upon receiving SingleInstanceGuard::SIGM_ALERT, which is posted as a result of another instance calling AlertTheRunningInstance(), the running instance reads back the shared data and prints it. It then goes back to waiting for further alerts. This instance keeps running until either the console window is closed or main() comes out of its wait. To make the latter possible, a handler for Ctrl+C is installed; it is called when the key combination is pressed and prompts the user for input. Typing anything other than 'c' sets the event, main() comes out of its wait and the application ends. A small batch file is provided with the example to test the scenario where multiple instances start up.

The key thing to take away from this example is the ability to tell which code runs in the running instance and which runs in the others. This remains important irrespective of the application model.

The Win32 example is a simple one. There is no data to be shared; the running instance just flashes and comes to the front.

    #define TAG "SingleInstanceGuardTest_Win32"

    LRESULT CALLBACK WndProc(HWND hwnd, UINT message, WPARAM wParam, LPARAM lParam)
    {
        // SIGM_ALERT is registered at run time, so it cannot be a case label
        if (SingleInstanceGuard::SIGM_ALERT == message)
        {
            FlashWindow(hwnd, TRUE);
            ::SetForegroundWindow(hwnd);
            return 0;
        }

        switch (message)
        {
        case WM_DESTROY:
            PostQuitMessage(0);
            return 0;
        }
        return DefWindowProc(hwnd, message, wParam, lParam);
    }

    int WINAPI WinMain(HINSTANCE hInstance, HINSTANCE hPrevInstance,
                       PSTR szCmdLine, int iCmdShow)
    {
        SingleInstanceGuard sig(TAG);
        if (sig.AlreadyRunning())
        {
            sig.AlertTheRunningInstance();
            return 0;
        }

        static TCHAR szAppName[] = TEXT("HelloWin");
        HWND hwnd;
        MSG msg;
        WNDCLASS wndclass;

        wndclass.style = CS_HREDRAW | CS_VREDRAW;
        wndclass.lpfnWndProc = WndProc;
        wndclass.cbClsExtra = 0;
        wndclass.cbWndExtra = 0;
        wndclass.hInstance = hInstance;
        wndclass.hIcon = LoadIcon(NULL, IDI_APPLICATION);
        wndclass.hCursor = LoadCursor(NULL, IDC_ARROW);
        wndclass.hbrBackground = (HBRUSH)GetStockObject(WHITE_BRUSH);
        wndclass.lpszMenuName = NULL;
        wndclass.lpszClassName = szAppName;

        if (!RegisterClass(&wndclass))
        {
            MessageBox(NULL, TEXT("This program requires Windows NT!"),
                       szAppName, MB_ICONERROR);
            return 0;
        }

        hwnd = CreateWindow(szAppName,                 // window class name
                            TEXT("The Hello Program"), // window caption
                            WS_OVERLAPPEDWINDOW,       // window style
                            CW_USEDEFAULT,             // initial x position
                            CW_USEDEFAULT,             // initial y position
                            CW_USEDEFAULT,             // initial x size
                            CW_USEDEFAULT,             // initial y size
                            NULL,                      // parent window handle
                            NULL,                      // window menu handle
                            hInstance,                 // program instance handle
                            NULL);                     // creation parameters

        ShowWindow(hwnd, iCmdShow);
        UpdateWindow(hwnd);

        sig.SetTargetWindow(hwnd);

        while (GetMessage(&msg, NULL, 0, 0))
        {
            TranslateMessage(&msg);
            DispatchMessage(&msg);
        }
        return (int)msg.wParam;
    }

SetTargetWindow(...) takes as its parameter the window in the running instance that is to receive the alert message. This function is relevant to GUI applications, which must always call it. Its importance is explained later.

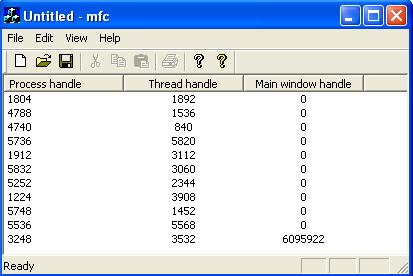

The MFC example follows the same lines as the other two. However, to make it a bit more interesting, the MFC application logs (latest first) the process ID, the thread ID and the active window handle shared by other instances. Because MFC application source code is spread across several files, only the parts relevant to the discussion are shown below:

    /////////////////////////////////////////////////////////////////////////////
    // CMfcApp initialization

    #define TAG "SingleInstanceGuardTest_MFC"

    // ** IMPORTANT **
    // This must be a non-local object. Otherwise, say when local to InitInstance(),
    // the object will be destroyed after the function returns, which removes the guard event,
    // effectively rendering the whole effort worthless since every instance IS going to
    // create a brand new event.
    SingleInstanceGuard g_sig(TAG);

    void Fill(DWORD dwProcess, DWORD dwThread, DWORD window)
    {
        CListView *pView = (CListView*)((CFrameWnd*)theApp.m_pMainWnd)->GetActiveView();
        CListCtrl &listCtrl = pView->GetListCtrl();

        // DWORD is an unsigned long, hence %lu
        CString str;
        str.Format("%lu", dwProcess);
        listCtrl.InsertItem(0, str);
        str.Format("%lu", dwThread);
        listCtrl.SetItem(0, 1, LVIF_TEXT, str, 0, 0, 0, 0);
        str.Format("%lu", window);
        listCtrl.SetItem(0, 2, LVIF_TEXT, str, 0, 0, 0, 0);
    }

    BOOL CMfcApp::OnSIGAlert(WPARAM, LPARAM)
    {
        // read in the same order in which the other instance wrote
        DWORD dwProcess, dwThread, window;
        g_sig >> dwProcess;
        g_sig >> dwThread;
        g_sig >> window;
        Fill(dwProcess, dwThread, window);
        m_pMainWnd->SetForegroundWindow();
        return TRUE;
    }

    BOOL CMfcApp::InitInstance()
    {
        DWORD dwProcess = ::GetCurrentProcessId();
        DWORD dwThread = ::GetCurrentThreadId();

        if (g_sig.AlreadyRunning())
        {
            HWND h = m_pMainWnd->GetSafeHwnd();
            g_sig << dwProcess << dwThread << (DWORD)h;
            g_sig.AlertTheRunningInstance();
            return FALSE;
        }

        // other code

        HWND h = m_pMainWnd->GetSafeHwnd();
        g_sig.SetTargetWindow(h);
        Fill(dwProcess, dwThread, (DWORD)h);
        return TRUE;
    }

    /////////////////////////////////////////////////////////////////////////////
    // CMainFrame

    IMPLEMENT_DYNCREATE(CMainFrame, CFrameWnd)

    BEGIN_MESSAGE_MAP(CMainFrame, CFrameWnd)
        //{{AFX_MSG_MAP(CMainFrame)
            // NOTE - the ClassWizard will add and remove mapping macros here.
            //    DO NOT EDIT what you see in these blocks of generated code !
        ON_WM_CREATE()
        //}}AFX_MSG_MAP
        ON_REGISTERED_MESSAGE(SingleInstanceGuard::SIGM_ALERT, OnSIGAlert)
    END_MESSAGE_MAP()

    BOOL CMainFrame::OnSIGAlert(WPARAM w, LPARAM l)
    {
        return ((CMfcApp*)AfxGetApp())->OnSIGAlert(w, l);
    }

This is a simple SDI application with a CListView-derived class for the view. The ON_REGISTERED_MESSAGE macro takes care of calling OnSIGAlert(...) when the alert message arrives from the other instances. The rest of the snippet is just about logging the shared information. The following screenshot shows what it looks like:

How Does It Work?

There are two aspects to the SingleInstanceGuard class. One is checking if an instance is already running and the other is sharing data with the running instance.

The checking is done in essentially the same way as described in the earlier tip/trick.

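    bool IsAlreadyRunning(const char *pid)
    {
        // creating a named event that already exists succeeds, but
        // GetLastError() then reports ERROR_ALREADY_EXISTS
        g_hGuardEvent = CreateEvent(NULL, FALSE, FALSE, pid);
        return ERROR_ALREADY_EXISTS == GetLastError();
    }

    bool SingleInstanceGuard::AlreadyRunning()
    {
        return g_bRunning;
    }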

The flag g_bRunning is initialized in the constructor.

The sharing part can be divided into two aspects: sharing the data and alerting the running instance. The second parameter the constructor takes is the desired size of the data to be shared. It is 4KB by default and never less than that, which should be sufficient for almost all scenarios. To understand how the sharing is done, we need to dig a bit into the details.

You have to know that each process gets its own virtual memory space in which to run. What this means is that, in normal circumstances, although any two processes might use the exact same addresses within their memory spaces, the data present there is not accessible to the other. This applies to multiple instances of one application as well (for the OS, it hardly matters if more than one process was created from the same .exe file on the disk). So the only way to share data across processes is to put it in some place from where all the processes can access it. A simple and naïve way would be to put it in a file, say in the temporary folder, but that is not good as the file could be deleted from there or even get corrupted, for a variety of reasons. One way to avoid this would be to lock the file. This, however, leaves no process other than the one that locked it with permission to access it, effectively defeating the purpose.

This is where a memory mapped file helps us. A memory mapped file (MMF) is a kernel object that deals with memory. The MMF serves as the underlying implementation of the other cross-process data sharing techniques, including WM_COPYDATA. As the name suggests, an MMF is a file that is mapped into a memory location. Once the file is mapped, its contents can be accessed as a stream of bytes, much like an array. This greatly simplifies data manipulation. Also, the file can be locked by a process (usually the one that creates it) and yet other processes can map its contents into memory and access them. This implies that we would first have to create a disk file and then map it. But when the size of the data to be shared is not very big, as in this case, we can avoid the chore of creating (and later deleting) a separate disk file and use the system paging file instead. The paging file is the disk file maintained by the OS to implement virtual memory.

Any kernel object can be created named or unnamed, and a named one can be shared across processes if all of them know the name. So a named MMF is what we will use to share the data. Although the data is successfully shared through the MMF, there is still a chance of corruption due to simultaneous reading and writing by several instances, so like any other shared data it must be protected. The obvious way to achieve this is through a mutex, but I am going to use two event objects; I'll explain why in a bit. Note that these event objects, too, must be shared across the instances.
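Since everything hinges on all instances agreeing on the object names, here is a small sketch of how the names can be derived from the guard ID. The suffixes are the ones the constructor appends; the `GuardNames` struct and the `MakeGuardNames` function are hypothetical helpers invented for this illustration and are not part of the class. The backslash check reflects the Win32 rule that an object name may not contain a backslash apart from a `Global\` or `Local\` namespace prefix, which this sketch simply does not support.

```cpp
#include <stdexcept>
#include <string>

// Hypothetical helper, not part of SingleInstanceGuard: the set of names
// that every instance must derive identically from the guard ID.
struct GuardNames {
    std::string guard;     // the guard event uses the ID as-is
    std::string mmf;       // ID + "MMF"
    std::string canRead;   // ID + "CanRead"
    std::string canWrite;  // ID + "CanWrite"
    std::string alert;     // ID + "AlertMessage" (registered window message)
};

GuardNames MakeGuardNames(const std::string& id)
{
    // Assumption of this sketch: reject IDs that cannot be plain object names.
    if (id.empty() || id.find('\\') != std::string::npos)
        throw std::invalid_argument("guard ID must be non-empty and free of backslashes");

    GuardNames n;
    n.guard = id;
    n.mmf = id + "MMF";
    n.canRead = id + "CanRead";
    n.canWrite = id + "CanWrite";
    n.alert = id + "AlertMessage";
    return n;
}
```

Because every name is a pure function of the ID, two applications that pick the same ID would end up sharing all of these objects, which is why a GUID makes a good ID.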

There are some ground rules to access our MMF. The first instance only reads the data and the other instances only write the data. Writing or reading can happen only when the MMF is free. At any given point of time when the MMF is in use, either a write operation or a read operation will be taking place.

Before I add more words, let us look at the constructor, which should make things clearer.

    SingleInstanceGuard::SingleInstanceGuard(const char *pID, long nSharedDataSize/*-1*/)
    {
        g_pid = pID;
        if (nSharedDataSize > MINIMUM_MMF_SIZE)
            g_nSharedDataSize = nSharedDataSize;

        g_bRunning = IsAlreadyRunning(g_pid);

        /* create/open the other kernel objects */
        string strMMF = g_pid;
        strMMF += "MMF";
        string strCanRead = g_pid;
        strCanRead += "CanRead";
        string strCanWrite = g_pid;
        strCanWrite += "CanWrite";
        string strAlert = g_pid;
        strAlert += "AlertMessage";

        if (g_bRunning)
        {
            /* Open the MMF */
            g_hMMF = OpenFileMapping(FILE_MAP_READ | FILE_MAP_WRITE, FALSE, strMMF.c_str());

            /* Read out the communication information */
            char *pBytes = (char*)MapViewOfFile(g_hMMF, FILE_MAP_ALL_ACCESS, 0, 0, 0);
            if (0 == pBytes)
                throw Exception(Exception::UNABLE_TO_CHECK);

            long nSize;
            memcpy((void*)&nSize, pBytes, sizeof(long));
            if (nSize != g_nSharedDataSize)
                throw Exception(Exception::DIFFERENT_GUARDS);
            g_nBytesRW += sizeof(long);
            UnmapViewOfFile(pBytes);

            /* open the control kernel objects */
            g_hCanReadData = CreateEvent(NULL, FALSE, FALSE, strCanRead.c_str());
            g_hCanWriteData = CreateEvent(NULL, FALSE, FALSE, strCanWrite.c_str());
        }
        else
        {
            /* register the alert message */
            SIGM_ALERT = ::RegisterWindowMessage(strAlert.c_str());

            /* Create the control kernel objects */
            g_hCanReadData = CreateEvent(NULL, FALSE, FALSE, strCanRead.c_str());
            g_hCanWriteData = CreateEvent(NULL, FALSE,
                                          TRUE, // allow the first copy to proceed
                                                // to write without waiting
                                          strCanWrite.c_str());

            /* create the MMF (backed by the system paging file) */
            long nMMFSize = sizeof(long)        // space for the said size
                                                // value itself, to verify later
                          + sizeof(long)        // size of data actually shared
                          + g_nSharedDataSize;  // the said size itself
            g_hMMF = CreateFileMapping(INVALID_HANDLE_VALUE,
                                       NULL, PAGE_READWRITE, 0, nMMFSize, strMMF.c_str());

            /* Write out the communication information */
            char *pBytes = (char*)MapViewOfFile(g_hMMF, FILE_MAP_ALL_ACCESS, 0, 0, 0);
            if (0 == pBytes)
                throw Exception(Exception::UNABLE_TO_CHECK);

            memcpy(pBytes + g_nBytesRW, (void*)&g_nSharedDataSize,
                   sizeof(g_nSharedDataSize));
            g_nBytesRW += sizeof(g_nSharedDataSize);

            long W = 0; // written size
            memcpy(pBytes + g_nBytesRW, (void*)&W, sizeof(W));
            g_nBytesRW += sizeof(W);
            UnmapViewOfFile(pBytes);

            /* mark the thread id and create the alert monitor */
            g_dwFirstInstanceThreadId = GetCurrentThreadId();
            DWORD tid;
            CreateThread(NULL, 0, AlertMonitor, NULL, 0, &tid);
        }
        g_nBytesRW = 0;
    }

The variable g_bRunning holds a flag indicating whether an instance is already running. This check is needed throughout, and the flag avoids repeated calls to CreateEvent(...). If an instance is running, the current (other) instance opens the events and the MMF. If not, the first instance creates them. There are two events, g_hCanWriteData and g_hCanReadData, which guard the writing and reading of the MMF respectively. The first parameter CreateFileMapping(...) takes is the disk file handle. Passing INVALID_HANDLE_VALUE indicates that we intend to use the paging file. The size of our MMF is more than just the data size. Apart from the data, it also contains the intended size of the data and the size of the data actually written. The former is a check to avoid using a different guard's MMF. This is not particularly useful in regular cases, but when you need to share more than 4KB of data in some apps and mistakenly use the same name in more than one app, it will come in handy. The latter, the size of data actually written, is useful in extracting data from the MMF. This is what the MMF stream looks like:

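[Figure: layout of the MMF stream. Intended data size (long), size of data actually written (long), shared data (up to the intended size)]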
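The same layout can be modelled without any Win32 calls. The following is a minimal sketch in which a plain byte vector stands in for the mapped view; `SharedBlockModel` and its member names are made up for this illustration. One deliberate simplification: the model updates the written-size slot on every append, whereas the class itself writes that slot only once, in AlertTheRunningInstance().

```cpp
#include <cassert>
#include <cstddef>
#include <cstring>
#include <stdexcept>
#include <vector>

// Illustrative model only. Layout of m_bytes:
// [capacity : long][written size : long][payload bytes ...]
class SharedBlockModel {
public:
    explicit SharedBlockModel(long capacity)
        : m_bytes(2 * sizeof(long) + static_cast<std::size_t>(capacity), 0)
    {
        assert(capacity >= 0);
        std::memcpy(m_bytes.data(), &capacity, sizeof(capacity)); // first slot
        // the second slot (written size) starts out as 0
    }

    long Capacity() const { return ReadLong(0); }
    long Written() const { return ReadLong(sizeof(long)); }

    // Appends n raw bytes to the payload and updates the written-size slot.
    void Append(const void* p, long n)
    {
        long w = Written();
        if (n < 0 || w + n > Capacity())
            throw std::length_error("data too big for the shared block");
        std::memcpy(m_bytes.data() + 2 * sizeof(long) + w, p, static_cast<std::size_t>(n));
        w += n;
        std::memcpy(m_bytes.data() + sizeof(long), &w, sizeof(w));
    }

    // Copies n payload bytes starting at offset; false if they were never written.
    bool Fetch(long offset, void* p, long n) const
    {
        if (offset < 0 || n < 0 || offset + n > Written())
            return false;
        std::memcpy(p, m_bytes.data() + 2 * sizeof(long) + offset, static_cast<std::size_t>(n));
        return true;
    }

private:
    long ReadLong(std::size_t at) const
    {
        long v;
        std::memcpy(&v, m_bytes.data() + at, sizeof(v));
        return v;
    }

    std::vector<char> m_bytes;
};
```

Keeping both sizes inside the block is what lets a reader that knows nothing but the object name validate the block and find out how much of the payload is meaningful.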

The functions MapViewOfFile(...) and UnmapViewOfFile(...) do the mapping and unmapping of the file respectively. It is important to unmap the file when the job is done. The first instance also registers the alert message, records its thread ID and creates the monitor thread. As you can see, appropriate exceptions are thrown when things fail, so it is advisable to use this class within a try/catch block (although I haven't done so in the simple examples).
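As a rough idea of what such a try/catch block might do, the sketch below turns each REASON into a readable message. The `Exception` struct here is a trimmed stand-alone copy of the nested class so that the snippet compiles on its own; `DescribeReason` and `RunGuarded` are hypothetical helpers, and the message texts are my reading of where each reason is thrown in the code shown in this article.

```cpp
#include <string>

// Trimmed stand-alone copy of SingleInstanceGuard::Exception for this sketch.
struct Exception {
    enum REASON {
        DIFFERENT_GUARDS, UNABLE_TO_CHECK, UNABLE_TO_READ, UNABLE_TO_WRITE,
        UNABLE_TO_ALERT, SHARING_WITH_SELF, DATA_TOO_BIG, OPERATION_NOT_SUPPORTED
    };
    REASON m_r;
    Exception(REASON r) : m_r(r) {}
};

// Hypothetical helper: maps a reason to a message for logs or message boxes.
const char* DescribeReason(Exception::REASON r)
{
    switch (r) {
    case Exception::DIFFERENT_GUARDS:        return "a guard with the same ID uses a different shared data size";
    case Exception::UNABLE_TO_CHECK:         return "the shared memory could not be mapped during construction";
    case Exception::UNABLE_TO_READ:          return "the shared memory could not be mapped for reading";
    case Exception::UNABLE_TO_WRITE:         return "the shared memory could not be mapped for writing";
    case Exception::UNABLE_TO_ALERT:         return "the shared memory could not be mapped while alerting";
    case Exception::SHARING_WITH_SELF:       return "the first instance may only read and the others may only write";
    case Exception::DATA_TOO_BIG:            return "the data does not fit into the shared data size";
    case Exception::OPERATION_NOT_SUPPORTED: return "only the first instance may set the target window";
    }
    return "unknown reason";
}

// Hypothetical helper: runs any callable and reports the outcome as text.
template <class Action>
std::string RunGuarded(Action action)
{
    try {
        action();
        return "ok";
    } catch (const Exception& e) {
        return DescribeReason(e.m_r);
    }
}
```

In a real application the callable would wrap the guard's construction and the read or write calls; whether a failure should abort startup or merely be logged is an application decision.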

    unsigned long _stdcall AlertMonitor(void *)
    {
        while (1)
        {
            // sleep until another instance signals that there is something to read
            WaitForSingleObject(g_hCanReadData, INFINITE);

            g_nBytesRW = 0;
            g_nWrittenDataSize = -1;

            if (NULL != g_hWndFirstInstanceWindow)
                PostMessage(g_hWndFirstInstanceWindow,
                            SingleInstanceGuard::SIGM_ALERT, 0, 0);
            else
                PostThreadMessage(g_dwFirstInstanceThreadId,
                                  SingleInstanceGuard::SIGM_ALERT, 0, 0);
        }
        return 0;
    }

The function WaitForSingleObject(...) is crucial to the implementation of this class and must be understood. The first parameter is the handle of a kernel object and the second is a timeout in milliseconds. The function makes the calling thread wait until the object is signaled or the timeout elapses, whichever comes first. Passing INFINITE means there is no timeout, so the thread waits until the kernel object is signaled. There are other reasons for a thread to come out of the wait state, but those don't apply here.

This thread is very simple. It waits until it is allowed to read from the MMF. It then resets the count of bytes read and the size of data to be read. The variable g_nBytesRW indicates the bytes read or the bytes written, depending on the instance. The variable g_nWrittenDataSize is valid only in the reading instance, the first one, and helps in reading data from the MMF. The thread then posts the alert message. Remember, we are in the first instance here. It is in response to this message that the first instance reads the data.

The window handle g_hWndFirstInstanceWindow is set by the first instance through SetTargetWindow(...) . There is a reason why the message is posted to the window if it is set. When a message is posted to a GUI thread as opposed to a window, it can be lost while a window owned by the thread is being moved, resized, etc. or when in a modal loop. The former case is less likely to happen in a single user session than the latter. Even if a GUI client is not going to have any modal loops, it is best to set the target window to avoid subtle bugs.

    void SingleInstanceGuard::SetTargetWindow(HWND h)
    {
        if (g_bRunning) // only the first instance needs this
            throw Exception(Exception::OPERATION_NOT_SUPPORTED);
        g_hWndFirstInstanceWindow = h;
    }

The overloaded operators used to read and write data simply call the Read(...) and Write(...) functions.

    bool SingleInstanceGuard::Read(void *pData, int nSize)
    {
        // This is called only after a successful wait on g_hCanReadData
        // from the monitor thread.
        // So no need to wait here
        if (g_bRunning) // only first instance must read
            throw Exception(Exception::SHARING_WITH_SELF);

        if (g_nBytesRW == g_nWrittenDataSize)
            return false; // no more read

        if (g_nBytesRW + nSize > g_nSharedDataSize)
            throw Exception(Exception::DATA_TOO_BIG);

        char *pBytes = (char*)MapViewOfFile(g_hMMF, FILE_MAP_ALL_ACCESS, 0, 0, 0);
        if (0 == pBytes)
            throw Exception(Exception::UNABLE_TO_READ);

        if (g_nWrittenDataSize < 0)
        {
            g_nWrittenDataSize = *(long*)(pBytes + sizeof(long));
            // reset so that an empty alert after a shared
            // data alert won't read wrong data
            *(long*)(pBytes + sizeof(long)) = 0;
        }

        if (0 == g_nWrittenDataSize)
        {
            UnmapViewOfFile(pBytes);   // release the view on this early return too
            SetEvent(g_hCanWriteData); // let others write
            return false;
        }

        memcpy(pData, pBytes + sizeof(long) + sizeof(long) + g_nBytesRW, nSize);
        g_nBytesRW += nSize;
        UnmapViewOfFile(pBytes);

        if (g_nBytesRW == g_nWrittenDataSize)
            SetEvent(g_hCanWriteData); // let others write
        return true;
    }

This function returns true/false to indicate whether the read succeeded, which lets operator >> serve as the condition of a while loop. When it finds that there is no data to be read, or that all the data has been read, it signals that the MMF is free for writing. Any waiting instance will then go on to write.

    SingleInstanceGuard& SingleInstanceGuard::Write(void *pData, int nSize)
    {
        if (!g_bRunning) // first instance needn't write anything
            throw Exception(Exception::SHARING_WITH_SELF);

        // wait only once per batch of writes; later writes already own the MMF
        if (!g_bHasWrittenPreviously)
            WaitForSingleObject(g_hCanWriteData, INFINITE);
        g_bHasWrittenPreviously = true;

        if (g_nBytesRW + nSize > g_nSharedDataSize)
            throw Exception(Exception::DATA_TOO_BIG);

        char *pBytes = (char*)MapViewOfFile(g_hMMF, FILE_MAP_ALL_ACCESS, 0, 0, 0);
        if (0 == pBytes)
            throw Exception(Exception::UNABLE_TO_WRITE);

        memcpy(pBytes + sizeof(long) + sizeof(long) + g_nBytesRW, pData, nSize);
        g_nBytesRW += nSize;
        UnmapViewOfFile(pBytes);
        return *this;
    }

Write(...) waits until it is allowed to write. Note that, as per the rules, this happens only in the other instances. You might have noticed in the first-instance part of the constructor that the event g_hCanWriteData is created signaled, unlike g_hCanReadData. This is done so that the second instance need not wait for any signal to write. If it had to wait, it would wait forever, since the only time this event is signaled is when a read operation has been attempted and completed by the first instance. That would lead to a deadlock whenever instances have to share data. Note that even if you start several instances at the exact same time, one of them, as chosen by the OS, will end up becoming the second one. The others will be queued.

This brings me to why I chose events over a mutex. It isn't sufficient to allow access to the MMF in sequence; access must also be allowed only when it is appropriate. A mutex merely helps queue the accesses, whereas events help time them. With judicious use of events, mutex-like queuing can be achieved as well. The variable g_bHasWrittenPreviously helps in this regard.
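To make the timing argument concrete, here is a minimal single-threaded model of the two auto-reset events. It is only a sketch: `HandshakeModel` and its members are invented for this illustration, two booleans stand in for the events, and waiting writers are released in arrival order, which is a simplification because Windows does not promise any particular wake-up order for threads waiting on an event.

```cpp
#include <cstddef>
#include <deque>
#include <string>

// Illustrative model, not the real class: m_canWrite and m_canRead play the
// roles of g_hCanWriteData and g_hCanReadData.
class HandshakeModel {
public:
    // CanWrite starts signaled so the second instance never waits forever.
    HandshakeModel() : m_canWrite(true), m_canRead(false) {}

    // A later instance wants to share `data`; it stays parked until
    // CanWrite is signaled.
    void Arrive(const std::string& data)
    {
        m_waiting.push_back(data);
        ReleaseOneWriter();
    }

    // The first instance handles one alert: it reads the shared data and
    // only then signals CanWrite for the next writer.
    bool HandleAlert(std::string& out)
    {
        if (!m_canRead)
            return false;   // nothing was signaled
        m_canRead = false;  // auto-reset: the wait consumed the signal
        out = m_shared;
        m_canWrite = true;  // reading is complete, writing may resume
        ReleaseOneWriter();
        return true;
    }

    std::size_t Waiting() const { return m_waiting.size(); }

private:
    void ReleaseOneWriter()
    {
        if (!m_canWrite || m_waiting.empty())
            return;
        m_canWrite = false;           // auto-reset: exactly one waiter gets through
        m_shared = m_waiting.front(); // the writer fills the shared block
        m_waiting.pop_front();
        m_canRead = true;             // the writer alerts the first instance
    }

    bool m_canWrite;
    bool m_canRead;
    std::string m_shared;
    std::deque<std::string> m_waiting;
};
```

With a plain mutex, the second writer could overwrite the shared block as soon as the first writer released the lock, before the first instance had read anything. In the model, a writer gets through only after HandleAlert has copied the previous data out.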

The alerting is simple. It sets the g_hCanReadData event, which wakes the AlertMonitor(...) thread of the first instance. But before that, it records the size of the data actually written, if any writing was attempted.

    void SingleInstanceGuard::AlertTheRunningInstance()
    {
        if (!g_bHasWrittenPreviously)
            WaitForSingleObject(g_hCanWriteData, INFINITE);
        else
        {
            // write the number of bytes written into the MMF into the MMF
            char *pBytes = (char*)MapViewOfFile(g_hMMF, FILE_MAP_ALL_ACCESS, 0, 0, 0);
            if (0 == pBytes)
                throw Exception(Exception::UNABLE_TO_ALERT);
            *(long*)(pBytes + sizeof(long)) = g_nBytesRW;
            UnmapViewOfFile(pBytes);
        }
        g_bHasWrittenPreviously = false;

        // alert
        SetEvent(g_hCanReadData);
    }

The destructor closes all the handles. Any kernel object created or opened must be closed when no longer needed. Every kernel object has a usage counter which is incremented on every creation/opening and decremented on every closing. Only when the counter reaches zero is the object destroyed, so these kernel objects are destroyed only after the first instance exits. In the other instances, when no data was shared, the destructor also sets g_hCanWriteData, which allows the queued instances to write their data.

    SingleInstanceGuard::~SingleInstanceGuard()
    {
        // This is necessary when other instances won't share data and simply alert.
        // g_nBytesRW is the written bytes wrt other instance
        if (g_bRunning && (0 == g_nBytesRW))
            SetEvent(g_hCanWriteData);

        CloseHandle(g_hGuardEvent);
        CloseHandle(g_hCanWriteData);
        CloseHandle(g_hCanReadData);
        CloseHandle(g_hMMF);
    }

Notes

- There are a total of three event objects involved in the implementation. One is a dummy object and the other two actually serve the original purpose of events, timing a thread's resumption.

- Both overloaded operators are explicitly disabled for char*. I have forced clients to use std::string, as it would be a chore, especially, to read back a char*, which might lead to unsuspected memory leaks.

- You may incorporate overloads for other data structures like vector, map, etc. if your application demands it. The implementation for std::string should serve as a starting point.

- Each application must incorporate its own data format for sharing. In simple terms, the read and write must match.

- It is extremely important to make sure the name you pass as the first parameter is unique. It would be a disaster if more than one application used the same name, say "MyApp". Use a GUID for the name.

- Although globals are used for the implementation, this should be all right as each set of them lives in a separate process.

- If you inspect the code a bit closely, you will realize that a class was not necessary. This could as well have been done with just a couple of C style functions, as even the implementation itself depends on global variables and functions. True, but I chose a C++ style class mainly for ease of use, especially for the convenience of the overloaded operators. With C style functions, one would have had to use "ugly" syntax similar to Read(...) and Write(...), but with the operators, things look clean and simple. I tend to mix these two programming paradigms in situations like these.

- Use this class within a try/catch block just to be safe (although I haven't done so in these simple examples).
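The std::string overloads themselves are not listed in this article, so the following is only a sketch of one format that satisfies the "read and write must match" rule: each string is stored as its length followed by its characters. A byte vector stands in for the shared data, and `PutString` and `GetString` are hypothetical names chosen for this illustration; the class may well encode strings differently.

```cpp
#include <cstddef>
#include <cstring>
#include <string>
#include <vector>

// Appends s to the stream as [length : long][characters].
void PutString(std::vector<char>& stream, const std::string& s)
{
    long len = static_cast<long>(s.size());
    const char* p = reinterpret_cast<const char*>(&len);
    stream.insert(stream.end(), p, p + sizeof(len));
    stream.insert(stream.end(), s.begin(), s.end());
}

// Reads the string that starts at pos and advances pos past it.
// Returns false when the stream is exhausted or truncated, which makes the
// function usable as a while-loop condition, like operator >> in the class.
bool GetString(const std::vector<char>& stream, std::size_t& pos, std::string& s)
{
    long len = 0;
    if (pos + sizeof(len) > stream.size())
        return false;
    std::memcpy(&len, stream.data() + pos, sizeof(len));
    if (len < 0)
        return false;
    const std::size_t n = static_cast<std::size_t>(len);
    if (pos + sizeof(len) + n > stream.size())
        return false;
    s.assign(stream.data() + pos + sizeof(len), n);
    pos += sizeof(len) + n;
    return true;
}
```

The length prefix spares the reader from scanning for a terminator and lets empty strings round-trip. An overload for vector or map could follow the same pattern: an element count first, then each element in turn.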

Changes in Version 1.1

Added a public function void SetTargetWindow(HWND h) to fix a nasty bug. In version 1.0, the alert message was always posted to the thread of the first instance. That mechanism would crash and burn in a GUI app, especially when the app was in a modal loop and data was supposed to be shared across the instances. While a GUI app is in a modal loop, messages that have no target window (such as those posted to the thread) are eaten and not delivered. Other instances waiting to write data would then go into limbo because the write permission is never given: when data is shared, the first instance grants that permission only after it has finished reading in response to the alert message, but the message never reaches the thread. The instances would remain in memory (although they won't appear on screen).

Thanks to Victor Nijegorodov for pointing out that PostThreadMessage(...) must not be used with GUI threads.

You may test this scenario with the updated MFC app. It has a white question mark button on the toolbar, and clicking it opens a modal message box. Modify the source code and compare. In the app class, comment out the SetTargetWindow(...) call and add ON_REGISTERED_MESSAGE(SingleInstanceGuard::SIGM_ALERT, OnSIGAlert) to the message map. When the modal box is up and you attempt to fire up another instance, that instance won't appear but it will remain in memory, waiting for a write permission that never comes. Open the Windows Task Manager while testing and you will see the app name being added to the list in the Processes tab again and again. Not sharing any data (with the modified code) won't cause this but, as I said, it is best to set the target window in GUI apps.

History

- 12th October, 2010: Version 1.0

- 20th October, 2010: Version 1.1

License

This article, along with any associated source code and files, is licensed under The Code Project Open License (CPOL)

About the Author: bleedingfingers

I am Vinay MS (male). I am from Bangalore, yup, the (in)famous city that has lent its name to that (in)famous verb that helped a half-Kenyan become the world's most powerful man.
