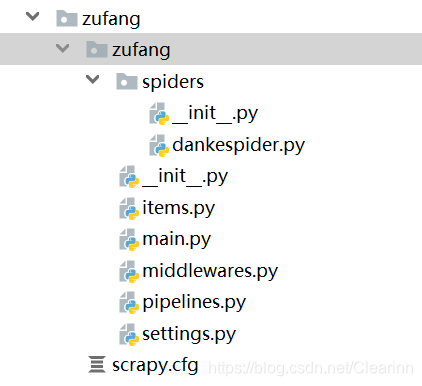

一。项目结构

二。模块划分

1.dankespider

import scrapy

from ..items import ZufangItem

headers = {

'user-agent': 'Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/74.0.3729.131 Safari/537.36'

}

class Danke_Spider(scrapy.Spider):

name = 'danke'

def start_requests(self):

url = 'https://www.danke.com/room/sh'

yield scrapy.Request(url=url,headers=headers)

def parse(self, response):

house = response.xpath('//div[@class="r_lbx_cena"]/a/text()').getall()

money = response.xpath('//div[@class="r_lbx_moneya"]/span/text()').getall()

status = response.xpath('//div[@class="r_lbx_cenb"]').xpath('string(.)').getall()

print(house,money,status)

print(len(house),len(money),len(status))

print(type(house),type(money),type(status))

for i in range(len(house)):

info = ZufangItem()

#清洗数据

house[i] = house[i].replace(' ','')

status[i] = status[i].replace(' ','')

info['house'] = house[i]

info['money'] = money[i]

info['status'] = status[i]

yield info

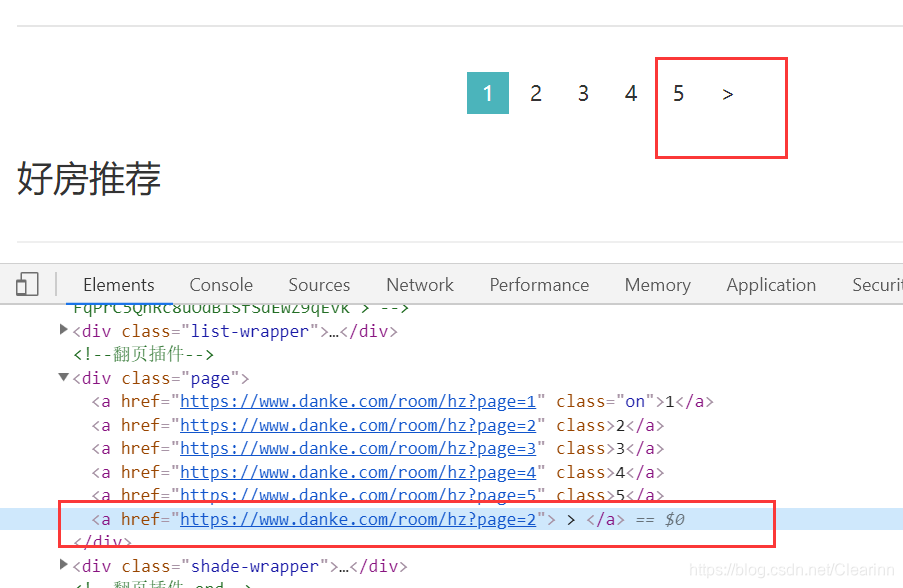

#下一页

next_page = response.xpath('/html/body/div[3]/div/div[6]/div[3]/a/@href').getall()[-1]

print(next_page)

if next_page is not None:

print('进入下一页了啊')

next_page = response.urljoin(next_page)

yield scrapy.Request(next_page,callback=self.parse)

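Analysis: first collect the href values of all a tags inside the pagination div as a list, then take the last one (the link the spider follows as the next page), that is:

response.xpath('/html/body/div[3]/div/div[6]/div[3]/a/@href').getall()[-1]

Because getall() returns an empty list rather than None when the XPath matches nothing, check that the list is non-empty before indexing it with [-1]; otherwise this expression raises an IndexError.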
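The list handling in parse can be checked without Scrapy or network access. The minimal sketch below uses only the standard library and makes two assumptions explicit: build_rows pairs the three parallel lists with zip and strips whitespace from each field, and next_page_url approximates response.urljoin by resolving the last pagination href against the current page URL with urllib.parse.urljoin (Scrapy's own method also honors a base tag on HTML pages). Both function names are hypothetical, and the sample values are made up, not Danke's real data or URL format.

```python
from typing import Dict, List, Optional
from urllib.parse import urljoin


def build_rows(house: List[str], money: List[str], status: List[str]) -> List[Dict[str, str]]:
    """Hypothetical helper: pair three parallel lists into dicts and strip whitespace."""
    rows = []
    # zip() stops at the shortest list, so a missing field cannot raise IndexError
    for h, m, s in zip(house, money, status):
        rows.append({
            'house': ''.join(h.split()),   # drop all spaces, tabs and newlines
            'money': m.strip(),            # trim leading/trailing whitespace only
            'status': ''.join(s.split()),
        })
    return rows


def next_page_url(current_url: str, hrefs: List[str]) -> Optional[str]:
    """Hypothetical helper: absolute URL of the last pagination href, or None if there is none."""
    if not hrefs:  # an empty list means no pagination links were found
        return None
    return urljoin(current_url, hrefs[-1])


# Made-up sample values, for illustration only
print(build_rows([' Room A 1 '], [' 2890 '], ['\n available \n']))
# → [{'house': 'RoomA1', 'money': '2890', 'status': 'available'}]
print(next_page_url('https://www.danke.com/room/sh', ['/room/sh?page=1', '/room/sh?page=2']))
# → https://www.danke.com/room/sh?page=2
```

Returning None instead of indexing blindly is what lets the caller skip the follow-up request on a page without pagination links.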

2. items

# -*- coding: utf-8 -*-
# Define here the models for your scraped items
#
# See documentation in:
# https://docs.scrapy.org/en/latest/topics/items.html
import scrapy


class ZufangItem(scrapy.Item):
    # define the fields for your item here like:
    # name = scrapy.Field()
    house = scrapy.Field()   # listing title
    money = scrapy.Field()   # rent
    status = scrapy.Field()  # text of the r_lbx_cenb block

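3. pipelines

The items are stored in MongoDB (database test, collection zufang, on localhost:27017).

# -*- coding: utf-8 -*-
# Define your item pipelines here
#
# Don't forget to add your pipeline to the ITEM_PIPELINES setting
# See: https://docs.scrapy.org/en/latest/topics/item-pipeline.html
import pymongo


class ZufangPipeline(object):

    def open_spider(self, spider):
        # Open one connection when the spider starts instead of at import time
        self.conn = pymongo.MongoClient(host='localhost', port=27017)
        self.mydb = self.conn.test
        self.myset = self.mydb.zufang

    def close_spider(self, spider):
        # Close the connection when the crawl ends
        self.conn.close()

    def process_item(self, item, spider):
        print('Inserting item')
        informations = {
            'house': item['house'],
            'money': item['money'],
            'status': item['status'],
        }
        # Collection.insert() was deprecated in PyMongo 3 and removed in PyMongo 4; use insert_one()
        self.myset.insert_one(informations)
        return item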
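To check the process_item logic without a running MongoDB server, the collection can be passed in instead of created by the pipeline. In this minimal sketch FakeCollection and ZufangPipelineSketch are hypothetical names: FakeCollection only imitates the insert_one method of a pymongo Collection, and a plain dict stands in for a ZufangItem (Scrapy items support the same item['field'] access).

```python
from typing import Any, Dict, List, Mapping


class FakeCollection:
    """Hypothetical in-memory stand-in for a pymongo Collection (insert_one only)."""

    def __init__(self) -> None:
        self.docs: List[Dict[str, Any]] = []

    def insert_one(self, document: Dict[str, Any]) -> None:
        # Store a copy so later changes to the dict do not alter what was "saved"
        self.docs.append(dict(document))


class ZufangPipelineSketch:
    """Hypothetical pipeline with the same process_item logic, but an injected collection."""

    FIELDS = ('house', 'money', 'status')

    def __init__(self, collection: Any) -> None:
        self.collection = collection

    def process_item(self, item: Mapping[str, Any], spider: Any = None) -> Mapping[str, Any]:
        # Build the MongoDB document from the three item fields
        informations = {key: item[key] for key in self.FIELDS}
        self.collection.insert_one(informations)
        # Return the item so any later pipeline still receives it
        return item


# Made-up sample item, for illustration only
coll = FakeCollection()
pipeline = ZufangPipelineSketch(coll)
item = {'house': 'RoomA', 'money': '2890', 'status': 'available'}
pipeline.process_item(item)
```

Returning the item matters in Scrapy: process_item must return the item (or raise DropItem), otherwise pipelines with a higher ITEM_PIPELINES value never see it.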

4. settings

# -*- coding: utf-8 -*-

# Scrapy settings for zufang project
#
# For simplicity, this file contains only settings considered important or
# commonly used. You can find more settings consulting the documentation:
#
#     https://docs.scrapy.org/en/latest/topics/settings.html
#     https://docs.scrapy.org/en/latest/topics/downloader-middleware.html
#     https://docs.scrapy.org/en/latest/topics/spider-middleware.html

BOT_NAME = 'zufang'

SPIDER_MODULES = ['zufang.spiders']
NEWSPIDER_MODULE = 'zufang.spiders'

# Crawl responsibly by identifying yourself (and your website) on the user-agent
#USER_AGENT = 'zufang (+http://www.yourdomain.com)'

# Obey robots.txt rules
ROBOTSTXT_OBEY = False

# Configure maximum concurrent requests performed by Scrapy (default: 16)
#CONCURRENT_REQUESTS = 32

# Configure a delay for requests for the same website (default: 0)
# See https://docs.scrapy.org/en/latest/topics/settings.html#download-delay
# See also autothrottle settings and docs
#DOWNLOAD_DELAY = 3
# The download delay setting will honor only one of:
#CONCURRENT_REQUESTS_PER_DOMAIN = 16
#CONCURRENT_REQUESTS_PER_IP = 16

# Disable cookies (enabled by default)
#COOKIES_ENABLED = False

# Disable Telnet Console (enabled by default)
#TELNETCONSOLE_ENABLED = False

# Override the default request headers:
#DEFAULT_REQUEST_HEADERS = {
#   'Accept': 'text/html,application/xhtml+xml,application/xml;q=0.9,*/*;q=0.8',
#   'Accept-Language': 'en',
#}

# Enable or disable spider middlewares
# See https://docs.scrapy.org/en/latest/topics/spider-middleware.html
#SPIDER_MIDDLEWARES = {
#    'zufang.middlewares.ZufangSpiderMiddleware': 543,
#}

# Enable or disable downloader middlewares
# See https://docs.scrapy.org/en/latest/topics/downloader-middleware.html
#DOWNLOADER_MIDDLEWARES = {
#    'zufang.middlewares.ZufangDownloaderMiddleware': 543,
#}

# Enable or disable extensions
# See https://docs.scrapy.org/en/latest/topics/extensions.html
#EXTENSIONS = {
#    'scrapy.extensions.telnet.TelnetConsole': None,
#}

# Configure item pipelines
# See https://docs.scrapy.org/en/latest/topics/item-pipeline.html
ITEM_PIPELINES = {
    'zufang.pipelines.ZufangPipeline': 300,
}

# Enable and configure the AutoThrottle extension (disabled by default)
# See https://docs.scrapy.org/en/latest/topics/autothrottle.html
#AUTOTHROTTLE_ENABLED = True
# The initial download delay
#AUTOTHROTTLE_START_DELAY = 5
# The maximum download delay to be set in case of high latencies
#AUTOTHROTTLE_MAX_DELAY = 60
# The average number of requests Scrapy should be sending in parallel to
# each remote server
#AUTOTHROTTLE_TARGET_CONCURRENCY = 1.0
# Enable showing throttling stats for every response received:
#AUTOTHROTTLE_DEBUG = False

# Enable and configure HTTP caching (disabled by default)
# See https://docs.scrapy.org/en/latest/topics/downloader-middleware.html#httpcache-middleware-settings
#HTTPCACHE_ENABLED = True
#HTTPCACHE_EXPIRATION_SECS = 0
#HTTPCACHE_DIR = 'httpcache'
#HTTPCACHE_IGNORE_HTTP_CODES = []
#HTTPCACHE_STORAGE = 'scrapy.extensions.httpcache.FilesystemCacheStorage'
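In the ITEM_PIPELINES setting, the integer assigned to each pipeline sets its run order: Scrapy passes every item through the enabled pipelines from the lowest value to the highest, and by convention the values lie in the 0 to 1000 range. This plain-Python sketch only mimics that ordering rule; CleanPipeline is a hypothetical second pipeline added for illustration and is not part of this project.

```python
from typing import Dict, List

# Mirrors the shape of ITEM_PIPELINES; CleanPipeline is hypothetical, added only to show ordering
ITEM_PIPELINES: Dict[str, int] = {
    'zufang.pipelines.ZufangPipeline': 300,
    'zufang.pipelines.CleanPipeline': 100,
}


def pipeline_order(item_pipelines: Dict[str, int]) -> List[str]:
    """Return pipeline paths in run order: ascending by their integer value."""
    return [path for path, _ in sorted(item_pipelines.items(), key=lambda kv: kv[1])]


print(pipeline_order(ITEM_PIPELINES))
# → ['zufang.pipelines.CleanPipeline', 'zufang.pipelines.ZufangPipeline']
```

With these values a cleaning step at 100 would run before the MongoDB pipeline at 300, so the database would only ever receive cleaned items.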

5. main

from scrapy import cmdline

# Equivalent to running "scrapy crawl danke" from the project directory
cmdline.execute(['scrapy', 'crawl', 'danke'])
