https://colab.research.google.com/drive/1L7Dp08UmepHif5XCfN1z6uD3OZ8mN0nR?usp=sharing

Above is the shared Colab notebook; the code in it can be run directly.

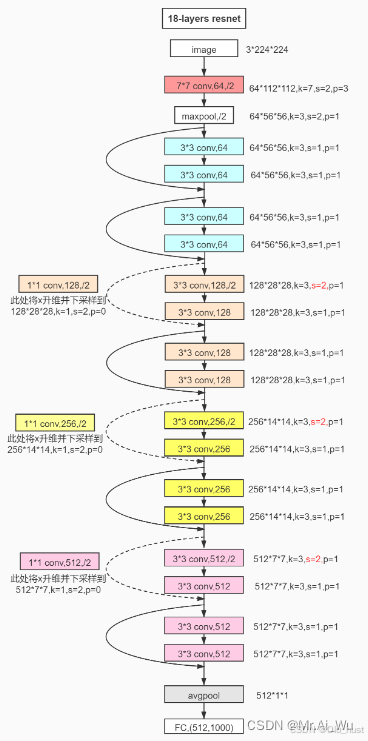

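Today I studied how ResNet-18 is implemented. Many libraries now let you load this model with a single call, but I still wanted to build it myself to learn how its layers are organized.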

For the theory, see the CSDN blog post "ResNet-18超详细介绍!!!!" (a very detailed introduction to ResNet-18).
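As a short reminder of the theory, here is what each `BasicBlock` in the code below computes, written in the usual residual-learning notation. This is my own summary of the standard formulation, not a quote from the linked post: $\sigma$ is ReLU, $*$ is convolution, $W_1, W_2$ are the two 3×3 convolutions, and $S$ is the shortcut.

$$
\mathcal{F}(\mathbf{x}) = \mathrm{BN}_2\!\left(W_2 * \sigma\!\left(\mathrm{BN}_1(W_1 * \mathbf{x})\right)\right), \qquad
\mathbf{y} = \sigma\!\left(\mathcal{F}(\mathbf{x}) + S(\mathbf{x})\right)
$$

$$
S(\mathbf{x}) =
\begin{cases}
\mathbf{x}, & \text{stride} = 1 \text{ and } C_{\text{in}} = C_{\text{out}} \\
\mathrm{BN}_s(W_s * \mathbf{x}), & \text{otherwise, with } W_s \text{ a } 1\times 1 \text{ conv of the same stride}
\end{cases}
$$

The "18" counts the weighted layers on the main path (the 1×1 shortcut convolutions and the pooling layers are not counted):

$$
\underbrace{1}_{\text{conv1}} + \underbrace{4 \times 2 \times 2}_{\text{stages} \times \text{blocks} \times \text{convs}} + \underbrace{1}_{\text{fc}} = 18
$$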

```python
import torch
import torch.nn as nn
import torch.nn.functional as F


class BasicBlock(nn.Module):
    def __init__(self, in_channels, out_channels, stride=1):
        super(BasicBlock, self).__init__()
        # First convolution (may downsample via stride)
        self.conv1 = nn.Conv2d(in_channels, out_channels, kernel_size=3, stride=stride, padding=1, bias=False)
        self.bn1 = nn.BatchNorm2d(out_channels)
        # Second convolution
        self.conv2 = nn.Conv2d(out_channels, out_channels, kernel_size=3, stride=1, padding=1, bias=False)
        self.bn2 = nn.BatchNorm2d(out_channels)
        # Shortcut (skip connection): an empty Sequential is the identity
        self.shortcut = nn.Sequential()
        if stride != 1 or in_channels != out_channels:
            # Projection shortcut: a 1x1 conv matches the channel count and spatial size
            self.shortcut = nn.Sequential(
                nn.Conv2d(in_channels, out_channels, kernel_size=1, stride=stride, bias=False),
                nn.BatchNorm2d(out_channels)
            )

    def forward(self, x):
        out = F.relu(self.bn1(self.conv1(x)))
        out = self.bn2(self.conv2(out))
        out += self.shortcut(x)  # add the residual connection
        out = F.relu(out)
        return out


class ResNet(nn.Module):
    def __init__(self, block, num_blocks, num_classes=1000):
        super(ResNet, self).__init__()
        self.in_channels = 64
        # First convolution and pooling
        self.conv1 = nn.Conv2d(3, 64, kernel_size=7, stride=2, padding=3, bias=False)
        self.bn1 = nn.BatchNorm2d(64)
        self.maxpool = nn.MaxPool2d(kernel_size=3, stride=2, padding=1)
        # Stacked residual blocks
        self.layer1 = self._make_layer(block, 64, num_blocks[0], stride=1)
        self.layer2 = self._make_layer(block, 128, num_blocks[1], stride=2)
        self.layer3 = self._make_layer(block, 256, num_blocks[2], stride=2)
        self.layer4 = self._make_layer(block, 512, num_blocks[3], stride=2)
        # Global average pooling and the final fully connected layer
        self.avgpool = nn.AdaptiveAvgPool2d((1, 1))
        self.fc = nn.Linear(512, num_classes)

    def _make_layer(self, block, out_channels, num_blocks, stride):
        layers = []
        # Only the first block of a stage uses the given stride (downsampling)
        layers.append(block(self.in_channels, out_channels, stride))
        self.in_channels = out_channels
        for _ in range(1, num_blocks):
            layers.append(block(self.in_channels, out_channels))
        return nn.Sequential(*layers)

    def forward(self, x):
        x = self.maxpool(F.relu(self.bn1(self.conv1(x))))
        x = self.layer1(x)
        x = self.layer2(x)
        x = self.layer3(x)
        x = self.layer4(x)
        x = self.avgpool(x)
        x = torch.flatten(x, 1)  # flatten to (N, 512)
        x = self.fc(x)
        return x


# Create the ResNet-18 model
model = ResNet(BasicBlock, [2, 2, 2, 2], num_classes=10)
```

The first convolution (under `# First convolution and pooling`) expects 3 input channels by default; change the first argument of `self.conv1 = nn.Conv2d(3, 64, kernel_size=7, stride=2, padding=3, bias=False)` to match your data. For example, I used MNIST, which is grayscale, so I changed it to 1 and set the corresponding number of classes to `num_classes=10`.
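Why is it enough to change only the input channels (and `num_classes`) for 28×28 MNIST images? The minimal pure-Python sketch below assumes square inputs and the layer hyperparameters from the code above. It traces the feature-map side length with the standard formula ⌊(n + 2p − k) / s⌋ + 1 and also counts the trainable parameters. `conv_out`, `trace_resnet18` and `count_params` are hypothetical helpers, not part of PyTorch.

```python
def conv_out(size: int, kernel: int, stride: int, padding: int) -> int:
    """Side length after a conv/pool layer: floor((size + 2*padding - kernel) / stride) + 1."""
    return (size + 2 * padding - kernel) // stride + 1


def trace_resnet18(size: int) -> dict[str, int]:
    """Feature-map side length after the stem and after each residual stage."""
    sizes = {}
    size = conv_out(size, kernel=7, stride=2, padding=3)  # conv1
    sizes["conv1"] = size
    size = conv_out(size, kernel=3, stride=2, padding=1)  # maxpool
    sizes["maxpool"] = size
    for name, stride in [("layer1", 1), ("layer2", 2), ("layer3", 2), ("layer4", 2)]:
        # The first 3x3 conv of each stage carries the stride; the 1x1 shortcut
        # (padding 0) gives the same size, and the later convs keep it unchanged.
        size = conv_out(size, kernel=3, stride=stride, padding=1)
        sizes[name] = size
    return sizes


def count_params(in_channels: int = 3, num_classes: int = 1000) -> int:
    """Trainable parameters of ResNet(BasicBlock, [2, 2, 2, 2]) as defined above."""

    def conv(c_in: int, c_out: int, k: int) -> int:
        return c_in * c_out * k * k  # every conv uses bias=False

    def bn(c: int) -> int:
        return 2 * c  # BatchNorm2d weight and bias

    total = conv(in_channels, 64, 7) + bn(64)  # stem
    c_in = 64
    for c_out, stride in [(64, 1), (128, 2), (256, 2), (512, 2)]:
        for block in range(2):  # two BasicBlocks per stage
            s = stride if block == 0 else 1
            total += conv(c_in, c_out, 3) + bn(c_out) + conv(c_out, c_out, 3) + bn(c_out)
            if s != 1 or c_in != c_out:  # projection shortcut
                total += conv(c_in, c_out, 1) + bn(c_out)
            c_in = c_out
    return total + 512 * num_classes + num_classes  # fc weight + bias


print(trace_resnet18(224))  # {'conv1': 112, 'maxpool': 56, 'layer1': 56, 'layer2': 28, 'layer3': 14, 'layer4': 7}
print(trace_resnet18(28))   # {'conv1': 14, 'maxpool': 7, 'layer1': 7, 'layer2': 4, 'layer3': 2, 'layer4': 1}
print(count_params())                               # 11689512
print(count_params(in_channels=1, num_classes=10))  # 11175370
```

Even for MNIST the feature map is still 1×1 at `layer4`, so `AdaptiveAvgPool2d((1, 1))` and the 512-input `fc` work unchanged. The count covers only parameters, not buffers such as BatchNorm running statistics. It should therefore agree with `sum(p.numel() for p in model.parameters())` for the model above.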

The complete code is in the Colab notebook:

https://colab.research.google.com/drive/1L7Dp08UmepHif5XCfN1z6uD3OZ8mN0nR?usp=sharing

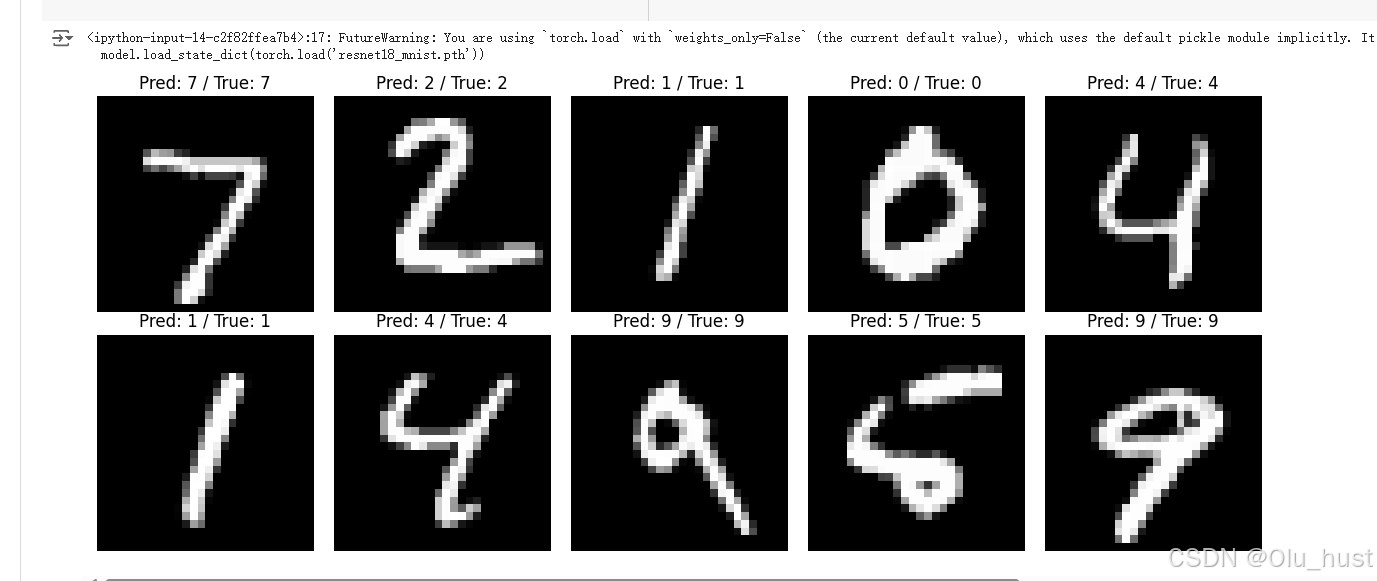

Result: after just one epoch of training on MNIST, the accuracy reached 96%.
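The training and evaluation code lives in the Colab notebook and is not shown here. A common way to measure such an accuracy figure is sketched below. `evaluate_accuracy` is a hypothetical helper name, and the sketch assumes a `DataLoader` that yields `(images, labels)` batches, as torchvision's MNIST dataset does.

```python
import torch
from torch import nn
from torch.utils.data import DataLoader, TensorDataset


@torch.no_grad()  # gradients are not needed when only measuring accuracy
def evaluate_accuracy(model: nn.Module, loader: DataLoader, device: str = "cpu") -> float:
    """Fraction of samples whose highest-scoring class equals the label."""
    model.eval()  # BatchNorm uses its running statistics instead of batch statistics
    correct, total = 0, 0
    for images, labels in loader:
        images, labels = images.to(device), labels.to(device)
        logits = model(images)          # shape (N, num_classes)
        preds = logits.argmax(dim=1)    # predicted class index for each sample
        correct += (preds == labels).sum().item()
        total += labels.size(0)
    return correct / total


if __name__ == "__main__":
    # Toy check: nn.Identity returns the one-hot rows unchanged, so row i is
    # predicted as class i; 3 of the 4 labels agree with that.
    data = TensorDataset(torch.eye(4), torch.tensor([0, 1, 2, 0]))
    print(evaluate_accuracy(nn.Identity(), DataLoader(data, batch_size=2)))  # 0.75
```

Because the helper switches the model to `eval()` mode, call `model.train()` before resuming training.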
