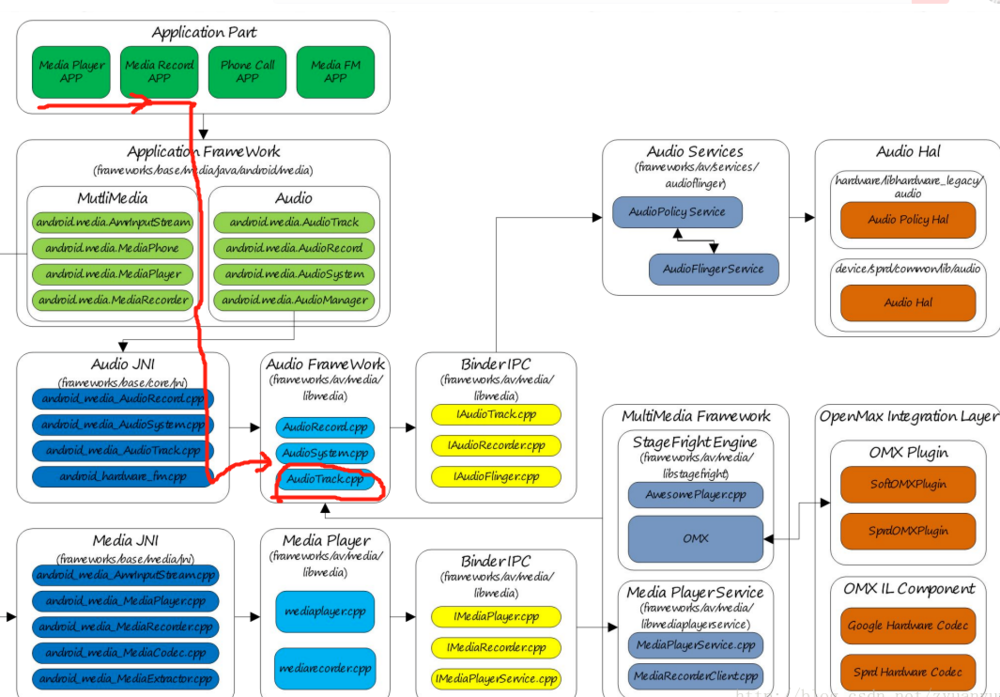

Now that we have covered the two APIs above, let's look at the architecture diagram of the Android audio system.

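From the audio system architecture diagram above (see the part marked with red lines), we can see that when an application uses MediaPlayer or MediaRecorder to play or record audio, the call goes down into the framework layer, and for playback it ends up in the file AudioTrack.cpp.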

So, back to the main point of this article: while a video is playing, we need to capture its audio stream in real time. That capture work can be done inside AudioTrack.cpp.
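To make the idea concrete, here is a minimal sketch of what "tapping" a write path could look like. It is not AOSP code: `PcmTap`, `writeWithTap`, and the `sink` vector are hypothetical names introduced only for illustration, and the real output path is replaced by a plain byte vector. The assumption is simply that every PCM buffer passes through one write-style function, so a copy can be taken there before the data is forwarded unchanged.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <vector>

// Hypothetical helper, not part of AOSP: keeps a copy of every PCM chunk
// that passes through a write()-style call.
class PcmTap {
public:
    // maxBytes caps memory use. Once the cap is reached, further data is
    // ignored (a deliberate simplification for this sketch).
    explicit PcmTap(size_t maxBytes) : mMaxBytes(maxBytes) {
        assert(maxBytes > 0);
    }

    // Copies up to `size` bytes from `buffer`; returns the bytes stored.
    size_t capture(const void* buffer, size_t size) {
        if (buffer == nullptr || size == 0) return 0;
        const size_t room = mMaxBytes - mData.size();
        const size_t n = size < room ? size : room;
        const uint8_t* p = static_cast<const uint8_t*>(buffer);
        mData.insert(mData.end(), p, p + n);
        return n;
    }

    const std::vector<uint8_t>& data() const { return mData; }

private:
    size_t mMaxBytes;
    std::vector<uint8_t> mData;
};

// Stand-in for a write path such as AudioTrack::write(): the tap sees the
// data first, then the buffer is forwarded unchanged.
// `sink` is a hypothetical destination standing in for the audio output.
inline long writeWithTap(PcmTap& tap, std::vector<uint8_t>& sink,
                         const void* buffer, size_t size) {
    if (buffer == nullptr && size != 0) return -1;  // reject invalid input
    if (size == 0) return 0;
    tap.capture(buffer, size);
    const uint8_t* p = static_cast<const uint8_t*>(buffer);
    sink.insert(sink.end(), p, p + size);
    return static_cast<long>(size);
}
```

In this sketch the tap never changes what reaches `sink`, so playback is unaffected even when the capture buffer is full. A real implementation would also need to consider threading and the cost of copying on the audio path.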

Let's look at one of the more important methods in AudioTrack.cpp:

    ssize_t AudioTrack::write(const void* buffer, size_t userSize, bool blocking)
    {
        if (mTransfer != TRANSFER_SYNC) {
            return INVALID_OPERATION;
        }

        if (isDirect()) {
            AutoMutex lock(mLock);
            int32_t flags = android_atomic_and(
                                ~(CBLK_UNDERRUN | CBLK_LOOP_CYCLE | CBLK_LOOP_FINAL | CBLK_BUFFER_END),
                                &mCblk->mFlags);
            if (flags & CBLK_INVALID) {
                return DEAD_OBJECT;
            }
        }

        if (ssize_t(userSize) < 0 || (buffer == NULL && userSize != 0)) {
            // Sanity-check: user is most-likely passing an error code, and it would
            // make the return value ambiguous (actualSize vs error).
            ALOGE("AudioTrack::write(buffer=%p, size=%zu (%zd)", buffer, userSize, userSize);
            return BAD_VALUE;
        }

        size_t written = 0;
        Buffer audioBuffer;

        while (userSize >= m
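The sanity check in the excerpt above can be illustrated in isolation. The sketch below assumes a POSIX platform (for `ssize_t`). `kBadValue` and `looksInvalid` are hypothetical names used only here, and the value -22 is an arbitrary stand-in rather than AOSP's actual `BAD_VALUE` definition. It shows why casting the size to a signed type catches a caller who accidentally passes a negative error code as the size.

```cpp
#include <cassert>
#include <cstddef>
#include <sys/types.h>  // ssize_t (POSIX)

// Hypothetical error constant for illustration only.
constexpr ssize_t kBadValue = -22;

// Returns true when the arguments would be rejected by a check of the same
// shape as the one in the excerpt: either the size is really a negative
// error code reinterpreted as an unsigned value, or the buffer is null while
// the size claims there is data.
inline bool looksInvalid(const void* buffer, size_t userSize) {
    return ssize_t(userSize) < 0 || (buffer == nullptr && userSize != 0);
}
```

For example, `looksInvalid(buf, static_cast<size_t>(kBadValue))` is true for any `buf`, because the negative value becomes a huge unsigned number that turns negative again when cast back. A null buffer with size 0 is accepted.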
