
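1 k8s network communication

1.1 Overall k8s communication architecture

- k8s implements networking by plugging in third-party plugins through the CNI interface. Popular plugins include flannel and calico.

- CNI configuration files live under /etc/cni/net.d/; for flannel: # cat /etc/cni/net.d/10-flannel.conflist

- Plugins use one of the following approaches:

  - Virtual bridge: each container gets a virtual NIC (veth) attached to a shared virtual bridge, and containers communicate through that bridge.

  - Multiplexing: MacVLAN, where multiple containers share one physical NIC.

  - Hardware switching: SR-IOV, where one physical NIC is virtualized into multiple interfaces. This gives the best performance.

- Container-to-container communication:

  - Containers in the same pod share a network namespace and communicate over lo.

  - Pods on the same node exchange packets through the cni bridge.

  - Pods on different nodes need the network plugin to carry the traffic.

- Pod-to-service communication: implemented with iptables or ipvs. In kube-proxy's ipvs mode, ipvs does the load balancing but does not fully replace iptables, which is still used for tasks such as MASQUERADE (SNAT) and packet filtering.

- Pod-to-external-network communication: iptables MASQUERADE.

- Service communication with clients outside the cluster: ingress, nodeport, loadbalancer.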
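The three container-to-container cases in the list above can be sketched as a small decision function. This is only an illustrative Python model: `Endpoint`, `transport_path`, and the returned strings are hypothetical names chosen here, not part of any Kubernetes API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Endpoint:
    """Where a container lives (illustrative fields only)."""
    pod: str
    node: str


def transport_path(src: Endpoint, dst: Endpoint) -> str:
    """Return which mechanism from the list carries traffic from src to dst."""
    if src.pod == dst.pod:
        # Same pod: shared network namespace, traffic stays on lo.
        return "loopback"
    if src.node == dst.node:
        # Different pods on one node: forwarded by the cni bridge.
        return "cni-bridge"
    # Different nodes: the network plugin (flannel, calico, ...) is needed.
    return "network-plugin"


web = Endpoint(pod="web", node="k8s-node")
print(transport_path(web, Endpoint(pod="db", node="k8s-node2")))
```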

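1.2 The flannel network plugin

Plugin components:

| Component | Function |
| --- | --- |
| VXLAN | Virtual Extensible LAN, a network virtualization technology supported natively by Linux. VXLAN performs encapsulation and decapsulation entirely in kernel space and uses a "tunnel" mechanism to build an overlay network |
| VTEP | VXLAN Tunnel End Point. In flannel the default VNI is 1, which is why the VTEP device on every host is named flannel.1 |
| cni0 | Bridge device. A veth pair is created for every pod: one end is eth0 inside the pod, the other end is a port (NIC) on the cni0 bridge |
| flannel.1 | VXLAN device (a virtual NIC acting as the VTEP) that encapsulates and decapsulates vxlan packets. Pod traffic between nodes is sent through this overlay device to the peer as a tunnel |
| flanneld | flannel runs flanneld as an agent on every host. It obtains a small subnet for the host from the cluster network address space, and every container IP on that host is allocated from it. flanneld also watches the k8s cluster datastore and supplies flannel.1 with the MAC, IP, and other network data needed for encapsulation |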

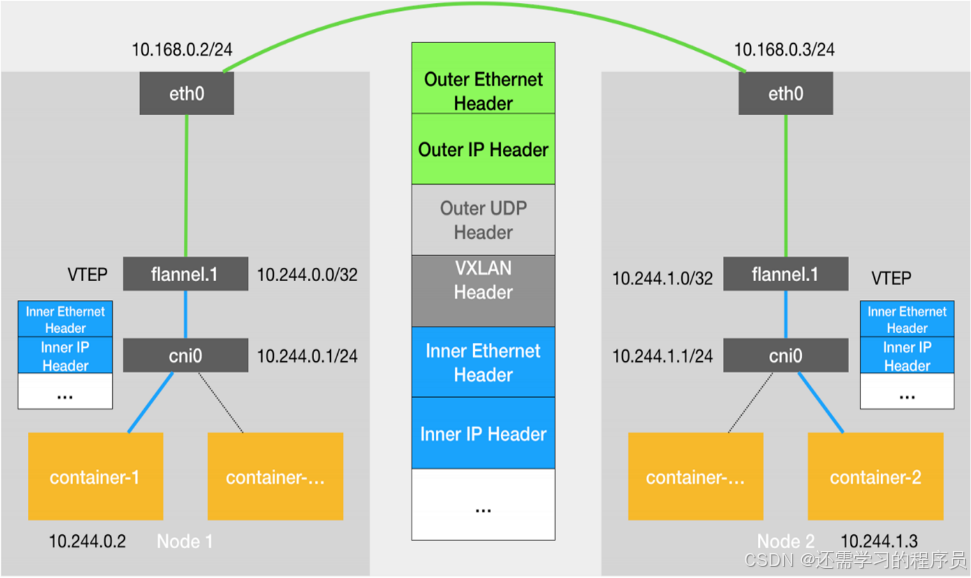
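The per-host subnet that flanneld obtains can be illustrated with Python's standard `ipaddress` module. This is a minimal sketch, assuming /24 leases carved from 10.244.0.0/16 and handed out in order; `carve_node_subnets` and `bridge_address` are hypothetical helpers, and a real flanneld does not necessarily assign leases sequentially.

```python
import ipaddress
from typing import Dict, List


def carve_node_subnets(cluster_cidr: str, node_names: List[str],
                       new_prefix: int = 24) -> Dict[str, ipaddress.IPv4Network]:
    """Give each node the next unused subnet of the cluster network, in order."""
    subnets = ipaddress.ip_network(cluster_cidr).subnets(new_prefix=new_prefix)
    return {name: next(subnets) for name in node_names}


def bridge_address(subnet: ipaddress.IPv4Network) -> ipaddress.IPv4Address:
    """First usable address of the subnet, used here as the cni0 address."""
    return subnet.network_address + 1


leases = carve_node_subnets("10.244.0.0/16", ["k8s-master", "k8s-node", "k8s-node2"])
for node, subnet in leases.items():
    print(node, subnet, bridge_address(subnet))
```

Every pod IP on a node then comes out of that node's subnet, which is what lets other hosts route a whole /24 toward one peer.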

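1.2.1 How flannel carries traffic across hosts

- When a container sends an IP packet, the packet travels over the veth pair to the cni bridge and is then routed to the local flannel.1 device for processing.

- VTEP devices talk to each other with layer-2 frames. The source VTEP receives the original IP packet, prepends an Ethernet header carrying the destination VTEP's MAC address, and so builds an inner frame addressed to the destination VTEP.

- The inner frame cannot travel on the hosts' layer-2 network as is. The Linux kernel has to encapsulate it further into an ordinary host frame, which carries the inner frame out through the host's eth0.

- Linux prepends a VXLAN header to the inner frame. The header contains an important field, the VNI, which a VTEP uses to decide whether a frame is meant for it.

- The flannel.1 device knows only the MAC address of the remote flannel.1 device, not the address of the host it lives on. In the Linux kernel, a network device forwards according to the FDB (forwarding database); the FDB entries for flannel.1 are maintained by the flanneld process.

- After the outer UDP and IP headers are added, the kernel prepends an outer Ethernet header and fills in the MAC address of the target node, which it looks up in the host's ARP table.

- flannel.1 can now send the frame out through eth0, and it crosses the host network to eth0 on the target node. The target host's network stack sees that the frame carries a VXLAN header with VNI 1, so the kernel unwraps it, extracts the inner frame, and hands it to the local flannel.1 device according to the VNI. flannel.1 decapsulates it and, following the routing table, forwards the packet to the cni bridge, from which it reaches the target container.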
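The VXLAN header step can be made concrete with a short Python sketch. It follows the 8-byte header layout from RFC 7348 (one flags byte with the VNI-valid bit, 24 reserved bits, a 24-bit VNI, 8 reserved bits) and deliberately leaves out the outer UDP, IP, and Ethernet headers. The function names are hypothetical, and the kernel does this work itself; the code only illustrates the layout.

```python
import struct

VXLAN_FLAG_VNI_VALID = 0x08  # the "I" flag: the VNI field is valid


def build_vxlan_header(vni: int) -> bytes:
    """Pack the 8-byte VXLAN header for a given VNI."""
    if not 0 <= vni < 2 ** 24:
        raise ValueError("VNI must fit in 24 bits")
    # Word 1: flags (8 bits) + 24 reserved bits.
    # Word 2: VNI (24 bits) + 8 reserved bits.
    return struct.pack("!II", VXLAN_FLAG_VNI_VALID << 24, vni << 8)


def encapsulate(inner_frame: bytes, vni: int = 1) -> bytes:
    """Prepend the VXLAN header to an inner Ethernet frame."""
    return build_vxlan_header(vni) + inner_frame


def parse_vni(packet: bytes) -> int:
    """Read the VNI back, as the receiving VTEP does to pick the device."""
    flags_word, vni_word = struct.unpack("!II", packet[:8])
    if not (flags_word >> 24) & VXLAN_FLAG_VNI_VALID:
        raise ValueError("VNI flag not set")
    return vni_word >> 8


payload = encapsulate(b"inner-ethernet-frame", vni=1)
print(payload[:8].hex(), parse_vni(payload))
```

With VNI 1, the value flannel uses by default, the receiving side reads 1 back and hands the inner frame to flannel.1.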

# Default routes for pod traffic

[root@k8s-master ~]# ip route

default via 192.168.10.2 dev eth0 proto static metric 100

10.244.0.0/24 dev cni0 proto kernel scope link src 10.244.0.1

10.244.1.0/24 via 10.244.1.0 dev flannel.1 onlink

10.244.2.0/24 via 10.244.2.0 dev flannel.1 onlink

172.17.0.0/16 dev docker0 proto kernel scope link src 172.17.0.1 linkdown

192.168.10.0/24 dev eth0 proto kernel scope link src 192.168.10.100 metric 100

# Bridge forwarding database (FDB)

[root@k8s-master ~]# bridge fdb

01:00:5e:00:00:01 dev eth0 self permanent

33:33:00:00:00:01 dev eth0 self permanent

01:00:5e:00:00:fb dev eth0 self permanent

33:33:ff:be:ab:e6 dev eth0 self permanent

33:33:00:00:00:fb dev eth0 self permanent

33:33:00:00:00:01 dev docker0 self permanent

01:00:5e:00:00:6a dev docker0 self permanent

33:33:00:00:00:6a dev docker0 self permanent

01:00:5e:00:00:01 dev docker0 self permanent

01:00:5e:00:00:fb dev docker0 self permanent

02:42:9e:43:f0:86 dev docker0 vlan 1 master docker0 permanent

02:42:9e:43:f0:86 dev docker0 master docker0 permanent

33:33:00:00:00:01 dev kube-ipvs0 self permanent

fe:81:a8:41:83:8e dev flannel.1 dst 192.168.10.10 self permanent

7e:c0:50:f7:ce:22 dev flannel.1 dst 192.168.10.20 self permanent

33:33:00:00:00:01 dev cni0 self permanent

01:00:5e:00:00:6a dev cni0 self permanent

33:33:00:00:00:6a dev cni0 self permanent

01:00:5e:00:00:01 dev cni0 self permanent

33:33:ff:74:bd:86 dev cni0 self permanent

01:00:5e:00:00:fb dev cni0 self permanent

33:33:00:00:00:fb dev cni0 self permanent

e6:2f:5f:74:bd:86 dev cni0 vlan 1 master cni0 permanent

e6:2f:5f:74:bd:86 dev cni0 master cni0 permanent

0e:89:86:cb:6d:1c dev vethf4599562 master cni0

e2:9a:62:7e:c0:17 dev vethf4599562 vlan 1 master cni0 permanent

e2:9a:62:7e:c0:17 dev vethf4599562 master cni0 permanent

33:33:00:00:00:01 dev vethf4599562 self permanent

01:00:5e:00:00:01 dev vethf4599562 self permanent

33:33:ff:7e:c0:17 dev vethf4599562 self permanent

33:33:00:00:00:fb dev vethf4599562 self permanent

be:95:df:26:af:e5 dev veth9add31bd master cni0

ce:2a:19:31:7b:d7 dev veth9add31bd vlan 1 master cni0 permanent

ce:2a:19:31:7b:d7 dev veth9add31bd master cni0 permanent

33:33:00:00:00:01 dev veth9add31bd self permanent

01:00:5e:00:00:01 dev veth9add31bd self permanent

33:33:ff:31:7b:d7 dev veth9add31bd self permanent

33:33:00:00:00:fb dev veth9add31bd self permanent

# ARP table

[root@k8s-master ~]# arp -n

Address HWtype HWaddress Flags Mask Iface

192.168.10.1 ether 00:50:56:c0:00:08 C eth0

192.168.10.20 ether 00:0c:29:25:3b:0b C eth0

192.168.10.130 ether 00:0c:29:68:9a:74 C eth0

10.244.0.2 ether 0e:89:86:cb:6d:1c C cni0

10.244.1.0 ether fe:81:a8:41:83:8e CM flannel.1

10.244.2.0 ether 7e:c0:50:f7:ce:22 CM flannel.1

192.168.10.2 ether 00:50:56:f1:37:f4 C eth0

10.244.0.3 ether be:95:df:26:af:e5 C cni0

192.168.10.10 ether 00:0c:29:19:ba:9c C eth0

[root@k8s-master ~]#
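The three listings above fit together as a lookup chain: the route selects the next hop, the ARP entry gives the remote flannel.1 MAC, and the FDB entry maps that MAC to the remote host. The sketch below replays that chain in Python with the values copied from the output above; `resolve_tunnel` is a hypothetical helper and the dictionaries are a simplified stand-in for the kernel tables.

```python
import ipaddress

# Pod subnet -> (next hop, device), from `ip route`.
ROUTES = {
    "10.244.1.0/24": ("10.244.1.0", "flannel.1"),
    "10.244.2.0/24": ("10.244.2.0", "flannel.1"),
}
# Next-hop IP -> MAC of the remote flannel.1, from `arp -n`.
ARP = {
    "10.244.1.0": "fe:81:a8:41:83:8e",
    "10.244.2.0": "7e:c0:50:f7:ce:22",
}
# Remote flannel.1 MAC -> remote host IP, from `bridge fdb`.
FDB = {
    "fe:81:a8:41:83:8e": "192.168.10.10",
    "7e:c0:50:f7:ce:22": "192.168.10.20",
}


def resolve_tunnel(dst_pod_ip: str):
    """Return the tunnel parameters for a remote pod IP, or None if local."""
    addr = ipaddress.ip_address(dst_pod_ip)
    for cidr, (next_hop, device) in ROUTES.items():
        if addr in ipaddress.ip_network(cidr):
            inner_dst_mac = ARP[next_hop]      # inner frame destination
            outer_dst_ip = FDB[inner_dst_mac]  # outer packet destination
            return {"device": device,
                    "inner_dst_mac": inner_dst_mac,
                    "outer_dst_ip": outer_dst_ip}
    return None  # not a remote pod subnet, e.g. 10.244.0.0/24 on cni0


print(resolve_tunnel("10.244.1.5"))
```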

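1.2.2 Backend modes supported by flannel

| Backend mode | Function |
| --- | --- |
| vxlan | Packet encapsulation; the default mode |
| DirectRouting | Direct routing, an option of the vxlan backend: vxlan is used across subnets and host-gw style routes within the same subnet |
| host-gw | Host gateway. Performs well but works only when all nodes share a layer-2 network; it cannot cross networks. With thousands of pods it is prone to broadcast storms, so it is not recommended |
| UDP | Poor performance; not recommended |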
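The DirectRouting row amounts to a per-peer choice between a plain route and a vxlan tunnel. Below is a minimal Python sketch of that choice, assuming "same subnet" can stand in for "same layer-2 segment" and a /24 host network as in this lab; `pick_forwarding` and its return strings are hypothetical.

```python
import ipaddress


def pick_forwarding(local_host_ip: str, remote_host_ip: str,
                    host_prefix: int = 24, direct_routing: bool = True) -> str:
    """Choose how traffic for a remote node's pod subnet is forwarded."""
    local_net = ipaddress.ip_interface(f"{local_host_ip}/{host_prefix}").network
    same_subnet = ipaddress.ip_address(remote_host_ip) in local_net
    if direct_routing and same_subnet:
        # Peer is directly reachable: route via its host IP, no encapsulation.
        return "direct-route"
    # Otherwise fall back to vxlan encapsulation.
    return "vxlan"


print(pick_forwarding("192.168.10.100", "192.168.10.10"))
print(pick_forwarding("192.168.10.100", "192.168.20.10"))
```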

Change flannel's default backend mode by editing Backend.Type in net-conf.json (host-gw in this example):

[root@k8s-master ~]# kubectl -n kube-flannel edit cm kube-flannel-cfg

apiVersion: v1
data:
  cni-conf.json: |
    {
      "name": "cbr0",
      "cniVersion": "0.3.1",
      "plugins": [
        {
          "type": "flannel",
          "delegate": {
            "hairpinMode": true,
            "isDefaultGateway": true
          }
        },
        {
          "type": "portmap",
          "capabilities": {
            "portMappings": true
          }
        }
      ]
    }
  net-conf.json: |
    {
      "Network": "10.244.0.0/16",
      "EnableNFTables": false,
      "Backend": {
        "Type": "host-gw"
      }
    }

# Backend.Type above is the value that was changed. Restart the flannel pods so that it takes effect

[root@k8s-master ~]# kubectl -n kube-flannel delete pod --all

pod "kube-flannel-ds-9vds2" deleted

pod "kube-flannel-ds-bf7bj" deleted

pod "kube-flannel-ds-wxvfx" deleted

[root@k8s-master ~]# ip route

default via 192.168.10.2 dev eth0 proto static metric 100

10.244.0.0/24 dev cni0 proto kernel scope link src 10.244.0.1

10.244.1.0/24 via 192.168.10.10 dev eth0

10.244.2.0/24 via 192.168.10.20 dev eth0

172.17.0.0/16 dev docker0 proto kernel scope link src 172.17.0.1 linkdown

192.168.10.0/24 dev eth0 proto kernel scope link src 192.168.10.100 metric 100

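1.3 The calico network plugin

Official documentation:

https://docs.projectcalico.org/getting-started/kubernetes/self-managed-onprem/onpremises

1.3.1 Introduction to calico

- Pure layer-3 forwarding with no NAT or overlay in between, which makes forwarding very efficient.

- Calico depends only on layer-3 reachability. Having so few dependencies lets it fit VM, container, white-box, and mixed environments.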

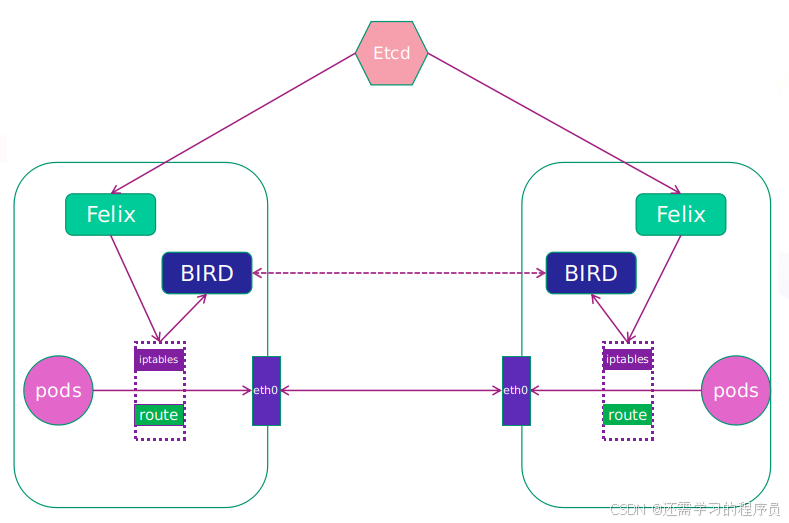

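1.3.2 calico network architecture

- Felix: watches the etcd datastore for events. After a user creates a pod, Felix sets up its NIC, IP, and MAC, then writes an entry in the kernel routing table stating that this IP is reached through that interface. Likewise, if the user defines isolation policies, Felix programs them into ACLs to enforce the isolation.

- BIRD: a standard routing daemon. It learns from the kernel which IP routes have changed and propagates them to all other hosts over the standard BGP protocol, so that every host knows the IP lives here and routes traffic for it to this host.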

1.3.3 Deploying calico

Remove the flannel plugin

[root@k8s-master ~]# kubectl delete -f kube-flannel.yml

namespace "kube-flannel" deleted

serviceaccount "flannel" deleted

clusterrole.rbac.authorization.k8s.io "flannel" deleted

clusterrolebinding.rbac.authorization.k8s.io "flannel" deleted

configmap "kube-flannel-cfg" deleted

daemonset.apps "kube-flannel-ds" deleted

Delete the flannel configuration file on every node to avoid conflicts

[root@k8s-master & node & node2 ~]# rm -rf /etc/cni/net.d/10-flannel.conflist

Download the deployment manifest

[root@k8s-master ~]# mkdir calico

[root@k8s-master ~]# cd calico/

[root@k8s-master calico]# curl https://raw.githubusercontent.com/projectcalico/calico/v3.28.1/manifests/calico-typha.yaml -o calico.yaml

Download the images and push them to the registry:

[root@k8s-master calico]# docker load -i calico-3.28.1.tar

6b2e64a0b556: Loading layer 3.69MB/3.69MB

38ba74eb8103: Loading layer 205.4MB/205.4MB

5f70bf18a086: Loading layer 1.024kB/1.024kB

Loaded image: calico/cni:v3.28.1

3831744e3436: Loading layer 366.9MB/366.9MB

Loaded image: calico/node:v3.28.1

4f27db678727: Loading layer 75.59MB/75.59MB

Loaded image: calico/kube-controllers:v3.28.1

993f578a98d3: Loading layer 67.61MB/67.61MB

Loaded image: calico/typha:v3.28.1

[root@k8s-master calico]# docker tag calico/cni:v3.28.1 reg.timinglee.org/calico/cni:v3.28.1

[root@k8s-master calico]# docker tag calico/node:v3.28.1 reg.timinglee.org/calico/node:v3.28.1

[root@k8s-master ~]# docker tag calico/kube-controllers:v3.28.1 reg.timinglee.org/calico/kube-controllers:v3.28.1

[root@k8s-master calico]# docker tag calico/typha:v3.28.1 reg.timinglee.org/calico/typha:v3.28.1

[root@k8s-master calico]# docker push reg.timinglee.org/calico/cni:v3.28.1

The push refers to repository [reg.timinglee.org/calico/cni]

5f70bf18a086: Mounted from library/calico/cni

38ba74eb8103: Mounted from library/calico/cni

6b2e64a0b556: Mounted from library/calico/typha

v3.28.1: digest: sha256:4bf108485f738856b2a56dbcfb3848c8fb9161b97c967a7cd479a60855e13370 size: 946

[root@k8s-master calico]# docker push reg.timinglee.org/calico/node:v3.28.1

The push refers to repository [reg.timinglee.org/calico/node]

3831744e3436: Mounted from library/calico/node

v3.28.1: digest: sha256:f72bd42a299e280eed13231cc499b2d9d228ca2f51f6fd599d2f4176049d7880 size: 530

[root@k8s-master ~]# docker push reg.timinglee.org/calico/kube-controllers:v3.28.1

The push refers to repository [reg.timinglee.org/calico/kube-controllers]

4f27db678727: Pushed

6b2e64a0b556: Mounted from calico/typha

v3.28.1: digest: sha256:8579fad4baca75ce79644db84d6a1e776a3c3f5674521163e960ccebd7206669 size: 740

[root@k8s-master calico]# docker push reg.timinglee.org/calico/typha:v3.28.1

The push refers to repository [reg.timinglee.org/calico/typha]

993f578a98d3: Mounted from library/calico/typha

6b2e64a0b556: Mounted from calico/cni

v3.28.1: digest: sha256:093ee2e785b54c2edb64dc68c6b2186ffa5c47aba32948a35ae88acb4f30108f size: 740

Edit the manifest settings

[root@k8s-master calico]# vim calico.yaml

# Point every image at the private registry (the cni and node images are each listed twice, once per occurrence)
image: reg.timinglee.org/calico/cni:v3.28.1
image: reg.timinglee.org/calico/cni:v3.28.1
image: reg.timinglee.org/calico/node:v3.28.1
image: reg.timinglee.org/calico/node:v3.28.1
image: reg.timinglee.org/calico/kube-controllers:v3.28.1
- image: reg.timinglee.org/calico/typha:v3.28.1

# calico-node environment variables
- name: CALICO_IPV4POOL_VXLAN
  value: "Never"
- name: CALICO_IPV4POOL_CIDR
  value: "10.244.0.0/16"
- name: IP_AUTODETECTION_METHOD
  value: "interface=eth0"

[root@k8s-master calico]# kubectl apply -f calico.yaml

poddisruptionbudget.policy/calico-kube-controllers created

poddisruptionbudget.policy/calico-typha created

serviceaccount/calico-kube-controllers created

serviceaccount/calico-node created

serviceaccount/calico-cni-plugin created

configmap/calico-config created

customresourcedefinition.apiextensions.k8s.io/bgpconfigurations.crd.projectcalico.org created

customresourcedefinition.apiextensions.k8s.io/bgpfilters.crd.projectcalico.org created

customresourcedefinition.apiextensions.k8s.io/bgppeers.crd.projectcalico.org created

customresourcedefinition.apiextensions.k8s.io/blockaffinities.crd.projectcalico.org created

customresourcedefinition.apiextensions.k8s.io/caliconodestatuses.crd.projectcalico.org created

customresourcedefinition.apiextensions.k8s.io/clusterinformations.crd.projectcalico.org created

customresourcedefinition.apiextensions.k8s.io/felixconfigurations.crd.projectcalico.org created

customresourcedefinition.apiextensions.k8s.io/globalnetworkpolicies.crd.projectcalico.org created

customresourcedefinition.apiextensions.k8s.io/globalnetworksets.crd.projectcalico.org created

customresourcedefinition.apiextensions.k8s.io/hostendpoints.crd.projectcalico.org created

customresourcedefinition.apiextensions.k8s.io/ipamblocks.crd.projectcalico.org created

customresourcedefinition.apiextensions.k8s.io/ipamconfigs.crd.projectcalico.org created

customresourcedefinition.apiextensions.k8s.io/ipamhandles.crd.projectcalico.org created

customresourcedefinition.apiextensions.k8s.io/ippools.crd.projectcalico.org created

customresourcedefinition.apiextensions.k8s.io/ipreservations.crd.projectcalico.org created

customresourcedefinition.apiextensions.k8s.io/kubecontrollersconfigurations.crd.projectcalico.org created

customresourcedefinition.apiextensions.k8s.io/networkpolicies.crd.projectcalico.org created

customresourcedefinition.apiextensions.k8s.io/networksets.crd.projectcalico.org created

clusterrole.rbac.authorization.k8s.io/calico-kube-controllers created

clusterrole.rbac.authorization.k8s.io/calico-node created

clusterrole.rbac.authorization.k8s.io/calico-cni-plugin created

clusterrolebinding.rbac.authorization.k8s.io/calico-kube-controllers created

clusterrolebinding.rbac.authorization.k8s.io/calico-node created

clusterrolebinding.rbac.authorization.k8s.io/calico-cni-plugin created

service/calico-typha created

daemonset.apps/calico-node created

deployment.apps/calico-kube-controllers created

deployment.apps/calico-typha created

[root@k8s-master calico]# kubectl -n kube-system get pods

NAME READY STATUS RESTARTS AGE

calico-kube-controllers-b9c79b596-wnrvp 1/1 Running 0 12m

calico-node-9t2pp 1/1 Running 0 12m

calico-node-d88bf 1/1 Running 0 12m

calico-node-ljrsn 1/1 Running 0 12m

calico-typha-55df74b8b4-dqwgb 1/1 Running 0 12m

coredns-647dc95897-6w6cc 1/1 Running 8 (45h ago) 34d

coredns-647dc95897-twvqh 1/1 Running 8 (45h ago) 34d

etcd-k8s-master 1/1 Running 9 (45h ago) 34d

kube-apiserver-k8s-master 1/1 Running 1 (45h ago) 3d3h

kube-controller-manager-k8s-master 1/1 Running 13 (16m ago) 34d

kube-proxy-7q8qw 1/1 Running 2 (45h ago) 3d3h

kube-proxy-fkn62 1/1 Running 2 (45h ago) 3d3h

kube-proxy-nnk6v 1/1 Running 2 (45h ago) 3d3h

kube-scheduler-k8s-master 1/1 Running 15 (16m ago) 34d

Test:

[root@k8s-master calico]# kubectl run web --image reg.timinglee.org/library/myapp:v1

pod/web created

[root@k8s-master calico]# kubectl get pods -o wide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

web 1/1 Running 0 13s 10.244.169.129 k8s-node2 <none> <none>

[root@k8s-master calico]# curl 10.244.169.129

Hello MyApp | Version: v1 | <a href="hostname.html">Pod Name</a>

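2 k8s scheduling

2.1 The role of scheduling in Kubernetes

- Scheduling is the process of automatically assigning unscheduled Pods to nodes in the cluster.

- The scheduler uses the kubernetes watch mechanism to discover newly created Pods that have not yet been scheduled to a Node.

- The scheduler places every unscheduled Pod it discovers on a suitable Node.

2.2 How scheduling works

- Create the Pod

The user creates a Pod object through the Kubernetes API, specifying the Pod's resource requests, container image, and other information.

- The scheduler watches Pods

The Kubernetes scheduler watches for unscheduled Pod objects in the cluster and selects the best node for each one.

- Select a node

The scheduler picks the best node algorithmically and binds the Pod to it. Its decision takes into account node resource usage, the Pod's resource requests, affinity and anti-affinity rules, and so on.

- Bind the Pod to the node

The scheduler submits the Pod-to-node binding to the API server, which persists it in etcd so that the node can learn of the assignment.

- The node starts the Pod

The kubelet on each node watches the API server for Pods bound to its node and starts them. If a node fails or runs short of resources, a workload controller such as a Deployment creates replacement Pods, and the scheduler binds them to other nodes.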
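The select-and-bind steps above can be sketched as a filter phase followed by a scoring phase. The Python below is a toy model, not the kube-scheduler algorithm: it considers only CPU and memory, the score (most resources left over) is an arbitrary choice made for illustration, and `Node`, `PodRequest`, and `schedule` are hypothetical names.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Node:
    name: str
    free_cpu_m: int   # free CPU in millicores
    free_mem_mi: int  # free memory in MiB


@dataclass
class PodRequest:
    name: str
    cpu_m: int
    mem_mi: int


def schedule(pod: PodRequest, nodes: List[Node]) -> Optional[str]:
    """Filter out nodes that cannot fit the pod, then pick the best-scoring one."""
    feasible = [n for n in nodes
                if n.free_cpu_m >= pod.cpu_m and n.free_mem_mi >= pod.mem_mi]
    if not feasible:
        return None  # no node fits: the pod stays Pending

    def score(node: Node) -> int:
        # Toy score: prefer the node with the most resources left afterwards.
        return (node.free_cpu_m - pod.cpu_m) + (node.free_mem_mi - pod.mem_mi)

    best = max(feasible, key=score)
    # "Bind": reserve the resources on the chosen node.
    best.free_cpu_m -= pod.cpu_m
    best.free_mem_mi -= pod.mem_mi
    return best.name


cluster = [Node("k8s-node", 500, 512), Node("k8s-node2", 2000, 4096)]
print(schedule(PodRequest("web", 250, 256), cluster))
```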

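2.3 Types of scheduler

- Default Scheduler:

The default scheduler in Kubernetes. It schedules newly created Pods and places them on suitable nodes.

- Custom Scheduler:

A scheduler implementation of your own, in which scheduling policies and rules are defined to suit actual needs, giving more flexible and varied scheduling.

- Extended Scheduler:

A scheduler implementation that supports scheduler extenders, through which custom scheduling rules and policies can be added to the existing scheduler.

- kube-scheduler is the default scheduler in kubernetes; once kubernetes is running, it runs automatically on the control-plane node.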

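2.4 Common scheduling methods

2.4.1 nodeName

- nodeName is the simplest form of node selection constraint, but it is generally not recommended.

- If nodeName is specified in the PodSpec, it takes precedence over the other node selection methods.

- Limitations of selecting nodes with nodeName:

  - If the named node does not exist, the pod will not run.

  - If the named node does not have the resources to accommodate the pod, the pod fails.

  - Node names in cloud environments are not always predictable or stable.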

Example:

# Create the pod manifest

[root@k8s-master ~]# kubectl run testpod --image reg.timinglee.org/library/myapp:v1 --dry-run=client -o yaml > pod.yml

# Set the scheduling constraint

[root@k8s-master ~]# vim pod.yml

apiVersion: v1
kind: Pod
metadata:
  labels:
    run: testpod
  name: testpod
spec:
  nodeName: k8s-node2
  containers:
  - image: reg.timinglee.org/library/myapp:v1
    name: testpod

# Create the pod

[root@k8s-master ~]# kubectl apply -f pod.yml

pod/testpod created

[root@k8s-master ~]# kubectl get pods -o wide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

testpod 1/1 Running 0 12s 10.244.169.130 k8s-node2 <none> <none>

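Note:

nodeName: k8s3 # if the node cannot be found, the pod stays Pending; nodeName has the highest priority, so the other scheduling methods have no effect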

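2.4.2 nodeSelector (selecting nodes by label)

- nodeSelector is the simplest recommended form of node selection constraint.

- Add a label to the chosen node:

kubectl label nodes k8s-node lab=timinglee

- The same label can be set on multiple nodes.

Example:

# View node labels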

[root@k8s-master ~]# kubectl get nodes --show-labels

NAME STATUS ROLES AGE VERSION LABELS

k8s-master Ready control-plane 34d v1.30.0 beta.kubernetes.io/arch=amd64,beta.kubernetes.io/os=linux,kubernetes.io/arch=amd64,kubernetes.io/hostname=k8s-master,kubernetes.io/os=linux,node-role.kubernetes.io/control-plane=,node.kubernetes.io/exclude-from-external-load-balancers=

k8s-node Ready <none> 27d v1.30.0 beta.kubernetes.io/arch=amd64,beta.kubernetes.io/os=linux,kubernetes.io/arch=amd64,kubernetes.io/hostname=k8s-node,kubernetes.io/os=linux

k8s-node2 Ready <none> 27d v1.30.0 beta.kubernetes.io/arch=amd64,beta.kubernetes.io/os=linux,kubernetes.io/arch=amd64,kubernetes.io/hostname=k8s-node2,kubernetes.io/os=linux

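# Set the node label (the pod below selects lab=timinglee, so run kubectl label nodes k8s-node lab=timinglee first), then check the node's labels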

[root@k8s-master ~]# kubectl get nodes k8s-node --show-labels

NAME STATUS ROLES AGE VERSION LABELS

k8s-node Ready <none> 27d v1.30.0 beta.kubernetes.io/arch=amd64,beta.kubernetes.io/os=linux,kubernetes.io/arch=amd64,kubernetes.io/hostname=k8s-node,kubernetes.io/os=linux

# Scheduling settings

[root@k8s-master ~]# vim pod2.yml

apiVersion: v1
kind: Pod
metadata:
  labels:
    run: testpod
  name: testpod
spec:
  nodeSelector:
    lab: timinglee
  containers:
  - image: reg.timinglee.org/library/myapp:v1
    name: testpod

[root@k8s-master ~]# kubectl apply -f pod2.yml

pod/testpod created

[root@k8s-master ~]# kubectl get pod -o wide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

testpod 1/1 Running 0 8s 10.244.113.130 k8s-node <none> <none>

Note: the same node label can be applied to any number of nodes
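The nodeSelector rule is simple enough to state in a few lines of Python: a node qualifies only if it carries every key/value pair in the selector. This is an illustrative sketch with a hypothetical function name; the label sets are abbreviated from the listings above.

```python
from typing import Dict


def matches_node_selector(node_labels: Dict[str, str],
                          node_selector: Dict[str, str]) -> bool:
    """True when the node has every label in the selector with the same value."""
    return all(node_labels.get(key) == value
               for key, value in node_selector.items())


k8s_node = {"kubernetes.io/hostname": "k8s-node", "lab": "timinglee"}
k8s_node2 = {"kubernetes.io/hostname": "k8s-node2"}
selector = {"lab": "timinglee"}

print(matches_node_selector(k8s_node, selector))
print(matches_node_selector(k8s_node2, selector))
```

An empty selector matches every node, which is why a pod without nodeSelector can land anywhere.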

2.5 affinity

Official documentation:

https://kubernetes.io/zh/docs/concepts/scheduling-eviction/assign-pod-node

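2.5.1 Affinity and anti-affinity

- nodeSelector provides a very simple way to constrain pods to nodes with particular labels. The affinity/anti-affinity feature greatly expands the kinds of constraints you can express.

- Inter-pod affinity constrains placement using the labels of pods already running on a node rather than the labels of the node itself, which controls which pods may or may not be placed together.

2.5.2 nodeAffinity (node affinity)

- The pod runs on whichever node satisfies the specified conditions.

- requiredDuringSchedulingIgnoredDuringExecution: must be satisfied, but does not affect pods that are already scheduled.

- preferredDuringSchedulingIgnoredDuringExecution: preferred; the pod is still scheduled when the condition cannot be met.

IgnoredDuringExecution means that if a Node's labels change while the Pod is running so that the affinity rule is no longer satisfied, the Pod keeps running.

- nodeAffinity also supports several matching operators: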

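| Operator | Function |
| --- | --- |
| In | The label value is in the list |
| NotIn | The label value is not in the list |
| Gt | The label value is greater than the given value; not supported for pod affinity |
| Lt | The label value is less than the given value; not supported for pod affinity |
| Exists | The given label exists |
| DoesNotExist | The given label does not exist |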
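The operators in the table can be modelled with one small Python function. This is a sketch under stated assumptions rather than the scheduler's code: `match_expression` is a hypothetical name, Gt and Lt compare the label value and the single given value as integers, and NotIn is treated as satisfied when the label is absent.

```python
from typing import Dict, Sequence


def match_expression(labels: Dict[str, str], key: str, operator: str,
                     values: Sequence[str] = ()) -> bool:
    """Evaluate one matchExpressions entry against a set of labels."""
    present = key in labels
    if operator == "In":
        return present and labels[key] in values
    if operator == "NotIn":
        return not present or labels[key] not in values
    if operator == "Exists":
        return present
    if operator == "DoesNotExist":
        return not present
    if operator in ("Gt", "Lt"):
        if not present:
            return False
        try:
            actual, bound = int(labels[key]), int(values[0])
        except (ValueError, IndexError):
            return False  # non-numeric label or missing comparison value
        return actual > bound if operator == "Gt" else actual < bound
    raise ValueError(f"unknown operator: {operator}")


ssd_node = {"disk": "ssd"}
plain_node: Dict[str, str] = {}
print(match_expression(ssd_node, "disk", "In", ["ssd"]))
print(match_expression(plain_node, "disk", "NotIn", ["ssd"]))
```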

nodeAffinity example

# Example 1

[root@k8s-master ~]# mkdir scheduler

[root@k8s-master ~]# cd scheduler/

[root@k8s-master scheduler]# kubectl label nodes k8s-node2 disk=ssd

node/k8s-node2 labeled

[root@k8s-master scheduler]# kubectl get nodes k8s-node2 --show-labels

NAME STATUS ROLES AGE VERSION LABELS

k8s-node2 Ready <none> 27d v1.30.0 beta.kubernetes.io/arch=amd64,beta.kubernetes.io/os=linux,disk=ssd,kubernetes.io/arch=amd64,kubernetes.io/hostname=k8s-node2,kubernetes.io/os=linux

[root@k8s-master scheduler]# vim pod.yml

apiVersion: v1
kind: Pod
metadata:
  name: node-affinity
spec:
  containers:
  - name: nginx
    image: reg.timinglee.org/library/nginx:latest
  affinity:
    nodeAffinity:
      requiredDuringSchedulingIgnoredDuringExecution:
        nodeSelectorTerms:
        - matchExpressions:
          - key: disk
            operator: In    # or NotIn; the two give opposite results, use one of them
            values:
            - ssd

# Result with operator: In

[root@k8s-master scheduler]# kubectl apply -f pod.yml

pod/node-affinity created

[root@k8s-master scheduler]# kubectl get pod -o wide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

node-affinity 1/1 Running 0 21s 10.244.169.132 k8s-node2 <none> <none>

# Result with operator: NotIn

[root@k8s-master scheduler]# kubectl apply -f pod.yml

pod/node-affinity created

[root@k8s-master scheduler]# kubectl get pod -o wide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

node-affinity 1/1 Running 0 7s 10.244.113.131 k8s-node <none> <none>

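2.5.3 podAffinity (pod affinity)

- The pod runs on whichever node already hosts pods that match the conditions.

- podAffinity addresses which pods a pod may be deployed with on the same node.

- podAntiAffinity addresses which pods a pod must not be deployed with on the same node. Both deal with relationships between pods inside the Kubernetes cluster.

- Inter-pod affinity and anti-affinity are even more useful when combined with higher-level collections such as ReplicaSets, StatefulSets, and Deployments.

- Inter-pod affinity and anti-affinity require substantial processing, which can significantly slow scheduling in large clusters.

podAffinity example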

[root@k8s-master scheduler]# vim example.yml

apiVersion: apps/v1
kind: Deployment
metadata:
  name: nginx-deployment
  labels:
    app: nginx
spec:
  replicas: 3
  selector:
    matchLabels:
      app: nginx
  template:
    metadata:
      labels:
        app: nginx
    spec:
      containers:
      - name: nginx
        image: reg.timinglee.org/library/nginx:latest
      affinity:
        podAffinity:
          requiredDuringSchedulingIgnoredDuringExecution:
          - labelSelector:
              matchExpressions:
              - key: app
                operator: In
                values:
                - nginx
            topologyKey: "kubernetes.io/hostname"

[root@k8s-master scheduler]# kubectl apply -f example.yml

deployment.apps/nginx-deployment created

[root@k8s-master scheduler]# kubectl get pods -o wide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

nginx-deployment-84ff85c6d7-4xtsf 1/1 Running 0 12s 10.244.169.133 k8s-node2 <none> <none>

nginx-deployment-84ff85c6d7-d6hsl 1/1 Running 0 12s 10.244.169.134 k8s-node2 <none> <none>

nginx-deployment-84ff85c6d7-nff6t 1/1 Running 0 12s 10.244.169.135 k8s-node2 <none> <none>

2.5.4 podAntiAffinity (pod anti-affinity)

podAntiAffinity example

[root@k8s-master scheduler]# vim example2.yml

apiVersion: apps/v1
kind: Deployment
metadata:
  name: nginx-deployment
  labels:
    app: nginx
spec:
  replicas: 3
  selector:
    matchLabels:
      app: nginx
  template:
    metadata:
      labels:
        app: nginx
    spec:
      containers:
      - name: nginx
        image: reg.timinglee.org/library/nginx:latest
      affinity:
        podAntiAffinity:    # anti-affinity
          requiredDuringSchedulingIgnoredDuringExecution:
          - labelSelector:
              matchExpressions:
              - key: app
                operator: In
                values:
                - nginx
            topologyKey: "kubernetes.io/hostname"

[root@k8s-master scheduler]# kubectl apply -f example2.yml

deployment.apps/nginx-deployment created

[root@k8s-master scheduler]# kubectl get pods -o wide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

nginx-deployment-6f5dbf5d49-bgq4w 0/1 Pending 0 13s <none> <none> <none> <none>

nginx-deployment-6f5dbf5d49-wpclg 1/1 Running 0 13s 10.244.169.136 k8s-node2 <none> <none>

nginx-deployment-6f5dbf5d49-znnt7 1/1 Running 0 13s 10.244.113.132 k8s-node <none> <none>
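The two outcomes above (all replicas on one node with podAffinity, one replica left Pending with podAntiAffinity) follow directly from using the hostname as the topology key. The Python sketch below reproduces them with a toy placement loop. It assumes only the two worker nodes are schedulable, lets the first replica land on the first candidate, and uses the hypothetical name `place_replicas`; it is not how kube-scheduler picks among candidates.

```python
from typing import List, Optional


def place_replicas(replicas: int, nodes: List[str], mode: str) -> List[Optional[str]]:
    """Place identical replicas under a required affinity or anti-affinity rule.

    mode is "affinity" or "anti-affinity"; the topology domain is one node.
    A None entry stands for a replica that stays Pending.
    """
    count = {node: 0 for node in nodes}
    placement: List[Optional[str]] = []
    for _ in range(replicas):
        if mode == "anti-affinity":
            # Only nodes that do not yet run a matching pod are allowed.
            candidates = [n for n in nodes if count[n] == 0]
        else:
            # Must join a node that already runs a matching pod;
            # the very first replica has no peers, so any node is allowed.
            occupied = [n for n in nodes if count[n] > 0]
            candidates = occupied or list(nodes)
        if not candidates:
            placement.append(None)
            continue
        chosen = candidates[0]
        count[chosen] += 1
        placement.append(chosen)
    return placement


workers = ["k8s-node2", "k8s-node"]
print(place_replicas(3, workers, "affinity"))
print(place_replicas(3, workers, "anti-affinity"))
```

With three replicas and two eligible nodes, the required anti-affinity rule cannot be met for the third replica, which matches the Pending pod in the output above.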

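2.6 Taints (taint mode, preventing scheduling)

- A taint is a property of a Node. Once a taint is set, Kubernetes by default does not schedule Pods onto that Node.

- If a Pod declares Tolerations that cover the taints on a Node, Kubernetes ignores those taints and may (but is not required to) schedule the Pod there.

- Use the kubectl taint command to add a taint to a node:

$ kubectl taint nodes <nodename> key=string:effect # general form

$ kubectl taint nodes node1 key=value:NoSchedule # add

$ kubectl describe nodes node1 | grep Taints # query

$ kubectl taint nodes node1 key- # remove

Possible values of [effect]:

| effect value | Meaning |
| --- | --- |
| NoSchedule | Pods are not scheduled onto the tainted node |
| PreferNoSchedule | The soft version of NoSchedule: the scheduler tries to avoid the node |
| NoExecute | Pods already running on the node without a matching toleration are evicted, and new Pods without one are not scheduled there |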

2.6.1 Taints example

# Create the controller and run it

[root@k8s-master scheduler]# vim example3.yml

apiVersion: apps/v1
kind: Deployment
metadata:
  labels:
    app: web
  name: web
spec:
  replicas: 2
  selector:
    matchLabels:
      app: web
  template:
    metadata:
      labels:
        app: web
    spec:
      containers:
      - image: reg.timinglee.org/library/nginx:latest
        name: nginx

[root@k8s-master scheduler]# kubectl apply -f example3.yml

deployment.apps/web created

[root@k8s-master scheduler]# kubectl get pod -o wide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

web-5c48c8964d-r9r5r 1/1 Running 0 8s 10.244.113.133 k8s-node <none> <none>

web-5c48c8964d-zpspg 1/1 Running 0 8s 10.244.169.137 k8s-node2 <none> <none>

# Set a NoSchedule taint

[root@k8s-master scheduler]# kubectl taint node k8s-node name=lee:NoSchedule

node/k8s-node tainted

[root@k8s-master scheduler]# kubectl describe nodes k8s-node | grep Tain

Taints: name=lee:NoSchedule

# Add pods through the controller (increase replicas in example3.yml, then re-apply)

[root@k8s-master scheduler]# kubectl apply -f example3.yml

deployment.apps/web configured

[root@k8s-master scheduler]# kubectl get pod -o wide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

web-5c48c8964d-9gz52 1/1 Running 0 4s 10.244.169.139 k8s-node2 <none> <none>

web-5c48c8964d-gv7gm 1/1 Running 0 4s 10.244.169.140 k8s-node2 <none> <none>

web-5c48c8964d-r2rt7 1/1 Running 0 4s 10.244.169.138 k8s-node2 <none> <none>

web-5c48c8964d-r9r5r 1/1 Running 0 3m31s 10.244.113.133 k8s-node <none> <none>

web-5c48c8964d-zpspg 1/1 Running 0 3m31s 10.244.169.137 k8s-node2 <none> <none>

# Set the taint to NoExecute

[root@k8s-master scheduler]# kubectl taint node k8s-node name=lee:NoExecute

node/k8s-node tainted

[root@k8s-master scheduler]# kubectl describe nodes k8s-node | grep Tain

Taints: name=lee:NoExecute

[root@k8s-master scheduler]# kubectl apply -f example3.yml

deployment.apps/web configured

[root@k8s-master scheduler]# kubectl get pods -o wide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

web-5c48c8964d-9gz52 1/1 Running 0 3m6s 10.244.169.139 k8s-node2 <none> <none>

web-5c48c8964d-b667m 1/1 Running 0 9s 10.244.169.144 k8s-node2 <none> <none>

web-5c48c8964d-gv7gm 1/1 Running 0 3m6s 10.244.169.140 k8s-node2 <none> <none>

web-5c48c8964d-r2rt7 1/1 Running 0 3m6s 10.244.169.138 k8s-node2 <none> <none>

web-5c48c8964d-tx77t 1/1 Running 0 77s 10.244.169.142 k8s-node2 <none> <none>

web-5c48c8964d-zpspg 1/1 Running 0 6m33s 10.244.169.137 k8s-node2 <none> <none>

# Remove the taint

[root@k8s-master scheduler]# kubectl taint node k8s-node name-

node/k8s-node untainted

[root@k8s-master scheduler]# kubectl describe nodes k8s-node | grep Tain

Taints: <none>

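tolerations (tolerating taints)

- The key, value, and effect defined in tolerations must be consistent with the taint set on the node:

  - If operator is Equal, both the key and the value must equal those of the taint.

  - If operator is Exists, value can be omitted.

  - If operator is not specified, it defaults to Equal.

- There are two special cases:

  - An unspecified key combined with Exists matches every key and value, so all taints are tolerated.

  - An unspecified effect matches every effect.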
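The matching rules above can be written down as a short Python model. It is a sketch of the rules as listed here, not the Kubernetes source: `Taint`, `Toleration`, `tolerates`, and `schedulable` are hypothetical names, and PreferNoSchedule is treated as non-blocking because it is only a soft preference.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class Taint:
    key: str
    value: str
    effect: str


@dataclass(frozen=True)
class Toleration:
    key: Optional[str] = None
    operator: str = "Equal"       # Equal is the default operator
    value: Optional[str] = None
    effect: Optional[str] = None  # None matches every effect


def tolerates(tol: Toleration, taint: Taint) -> bool:
    """Apply the matching rules from the list above to one taint."""
    if tol.effect is not None and tol.effect != taint.effect:
        return False
    if tol.key is None:
        # No key: only meaningful together with Exists, matches every taint.
        return tol.operator == "Exists"
    if tol.key != taint.key:
        return False
    if tol.operator == "Exists":
        return True
    return tol.operator == "Equal" and (tol.value or "") == taint.value


def schedulable(tolerations: List[Toleration], taints: List[Taint]) -> bool:
    """A pod can be scheduled if every blocking taint is tolerated."""
    blocking = [t for t in taints if t.effect in ("NoSchedule", "NoExecute")]
    return all(any(tolerates(tol, t) for tol in tolerations) for t in blocking)


node_taints = [Taint("name", "lee", "NoExecute")]
node2_taints = [Taint("nodetype", "bad", "NoSchedule")]
only_noschedule = [Toleration(operator="Exists", effect="NoSchedule")]
print(schedulable(only_noschedule, node_taints), schedulable(only_noschedule, node2_taints))
```

A toleration list containing just `Toleration(operator="Exists")` passes for both nodes, which is the "tolerate all taints" case.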

Toleration example:

# Set taints on the nodes

[root@k8s-master scheduler]# kubectl taint node k8s-node name=lee:NoExecute

node/k8s-node tainted

[root@k8s-master scheduler]# kubectl taint node k8s-node2 nodetype=bad:NoSchedule

node/k8s-node2 tainted

[root@k8s-master scheduler]# vim example4.yml

apiVersion: apps/v1
kind: Deployment
metadata:
  labels:
    app: web
  name: web
spec:
  replicas: 6
  selector:
    matchLabels:
      app: web
  template:
    metadata:
      labels:
        app: web
    spec:
      containers:
      - image: reg.timinglee.org/library/nginx:latest
        name: nginx
      # Variant 1: tolerate all taints
      tolerations:
      - operator: Exists
      # Variant 2: tolerate every taint whose effect is NoSchedule
      # tolerations:
      # - operator: Exists
      #   effect: NoSchedule
      # Variant 3: tolerate the NoSchedule taint with the given key and value
      # tolerations:
      # - key: nodetype
      #   value: bad
      #   effect: NoSchedule

# Result with the three tolerations merged together:

[root@k8s-master scheduler]# kubectl apply -f example4.yml

deployment.apps/web created

[root@k8s-master scheduler]# kubectl get pod -o wide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

web-86bbd7676-85pjd 1/1 Running 0 3m20s 10.244.113.135 k8s-node <none> <none>

web-86bbd7676-gsf5r 1/1 Running 0 3m20s 10.244.235.194 k8s-master <none> <none>

web-86bbd7676-kth94 1/1 Running 0 3m20s 10.244.235.195 k8s-master <none> <none>

web-86bbd7676-p9lgf 1/1 Running 0 3m20s 10.244.169.146 k8s-node2 <none> <none>

web-86bbd7676-qcgdb 1/1 Running 0 3m20s 10.244.169.145 k8s-node2 <none> <none>

web-86bbd7676-r9gsc 1/1 Running 0 3m20s 10.244.113.134 k8s-node <none> <none>

# Remove the node taints

[root@k8s-master auth]# kubectl taint node k8s-node name-

node/k8s-node untainted

[root@k8s-master auth]# kubectl describe nodes k8s-node | grep Tain

Taints: <none>

[root@k8s-master auth]# kubectl taint node k8s-node2 nodetype-

node/k8s-node2 untainted

[root@k8s-master auth]# kubectl describe nodes k8s-node2 | grep Tain

Taints: <none>

Note: when testing, write only one of the three toleration variants at a time
