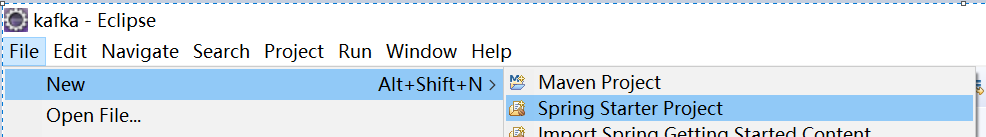

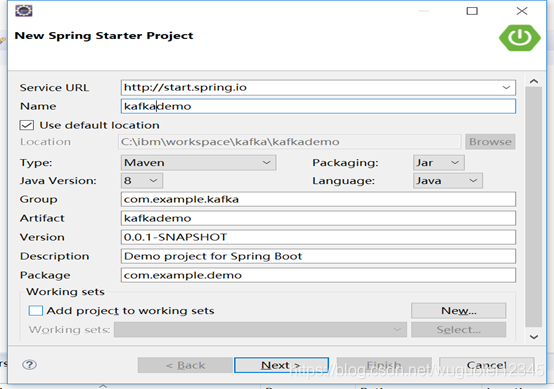

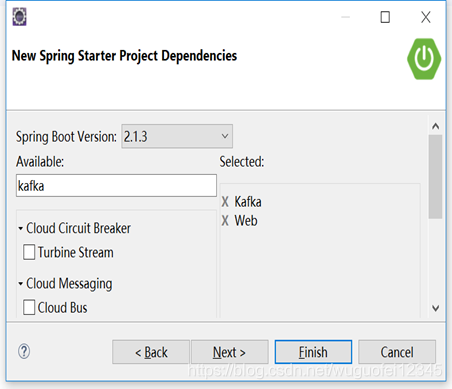

1. Create a Spring Boot project

2. Add the plain Kafka Maven dependency to pom.xml

<dependency>

<groupId>org.apache.kafka</groupId>

<artifactId>kafka_2.12</artifactId>

<version>2.1.1</version>

</dependency>

3. Create a Kafka producer, KafkaProducerDemo

package com.example.demo.kafka;

import java.util.Properties;

import org.apache.kafka.clients.producer.KafkaProducer;

import org.apache.kafka.clients.producer.Producer;

import org.apache.kafka.clients.producer.ProducerRecord;

public class KafkaProducerDemo {

private Producer<String, String> producer;

public void start() {

initKafkaProducer();

sendMsgToKafka();

}

private void sendMsgToKafka() {

ProducerRecord<String, String> record = new ProducerRecord<>("test_kafka_topic", "TestKafkaKey", "this is the test message");

producer.send(record);

producer.close();

}

private void initKafkaProducer() {

Properties props = new Properties();

// Kafka cluster (bootstrap servers)

props.put("bootstrap.servers", "localhost:9092");

// Serializer class for the record value

props.put("value.serializer", "org.apache.kafka.common.serialization.StringSerializer");

// Serializer class for the record key

props.put("key.serializer", "org.apache.kafka.common.serialization.StringSerializer");

producer = new KafkaProducer<>(props);

}

public static void main(String[] args) {

new KafkaProducerDemo().start();

}

}
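The producer above sets only the three required properties. As a minimal sketch of how the same `Properties` object could carry a few delivery-related settings, the helper below builds it with the JDK alone. The class name `ProducerConfigSketch`, the method `reliableProducerProps`, and the values chosen for `retries` and `linger.ms` are assumptions for illustration and are not part of the demo project; `acks`, `retries`, and `linger.ms` are standard Kafka producer configuration keys.

```java
import java.util.Properties;

// Sketch only: ProducerConfigSketch and reliableProducerProps are hypothetical names.
class ProducerConfigSketch {

    // Builds producer properties like initKafkaProducer(), plus a few optional settings.
    static Properties reliableProducerProps(String bootstrapServers) {
        Properties props = new Properties();
        props.put("bootstrap.servers", bootstrapServers);
        props.put("key.serializer", "org.apache.kafka.common.serialization.StringSerializer");
        props.put("value.serializer", "org.apache.kafka.common.serialization.StringSerializer");
        // Wait until all in-sync replicas have acknowledged each record.
        props.put("acks", "all");
        // Retry transient send failures a few times (assumed value).
        props.put("retries", "3");
        // Let the producer batch records for up to 5 ms (assumed value).
        props.put("linger.ms", "5");
        return props;
    }

    public static void main(String[] args) {
        Properties props = reliableProducerProps("localhost:9092");
        System.out.println("acks = " + props.getProperty("acks"));
    }
}
```

The returned object could be passed to `new KafkaProducer<>(props)` in place of the properties built in `initKafkaProducer()`.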

4. Create a Kafka consumer, KafkaConsumerDemo

package com.example.demo.kafka;

import java.time.Duration;

import java.util.Arrays;

import java.util.Properties;

import org.apache.kafka.clients.consumer.ConsumerRecord;

import org.apache.kafka.clients.consumer.ConsumerRecords;

import org.apache.kafka.clients.consumer.KafkaConsumer;

public class KafkaConsumerDemo {

private KafkaConsumer<String, String> consumer;

public void start() {

initKafkaConsumer();

getMessageFromKafka();

}

private void getMessageFromKafka() {

while (true) {

ConsumerRecords<String, String> records = consumer.poll(Duration.ofSeconds(1));

for (ConsumerRecord<String, String> record : records) {

System.out.println("-------------------------------------------------------------------");

System.out.printf("partition = %d, offset = %d, key = %s, value = %s%n", record.partition(), record.offset(), record.key(), record.value());

}

}

}

private void initKafkaConsumer() {

Properties props = new Properties();

// Kafka cluster (bootstrap servers)

props.put("bootstrap.servers", "localhost:9092");

// Deserializer classes; they must match the producer's serializers

props.put("key.deserializer", "org.apache.kafka.common.serialization.StringDeserializer");

props.put("value.deserializer", "org.apache.kafka.common.serialization.StringDeserializer");

props.put("group.id", "test.group");

consumer = new KafkaConsumer<>(props);

// Topic to consume messages from

consumer.subscribe(Arrays.asList("test_kafka_topic"));

}

public static void main(String[] args) {

new KafkaConsumerDemo().start();

}

}

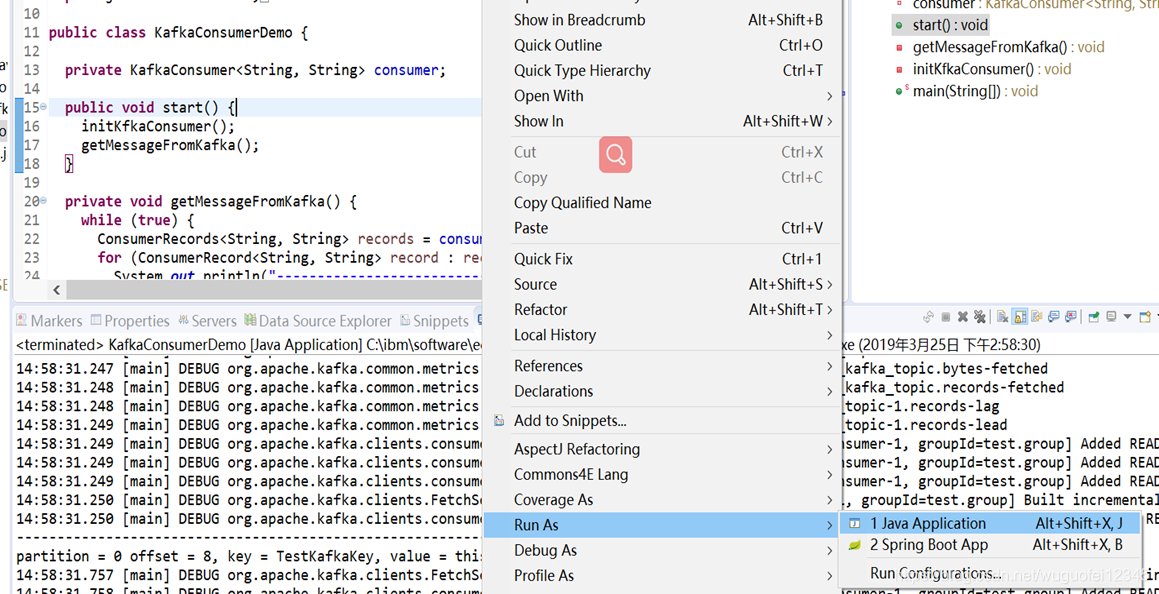

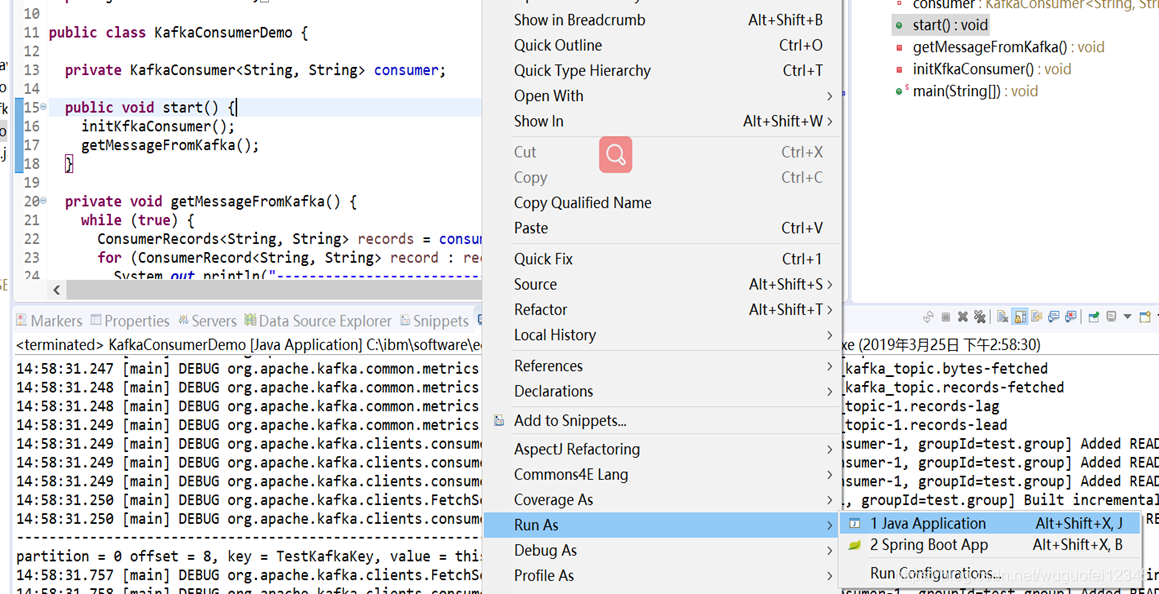

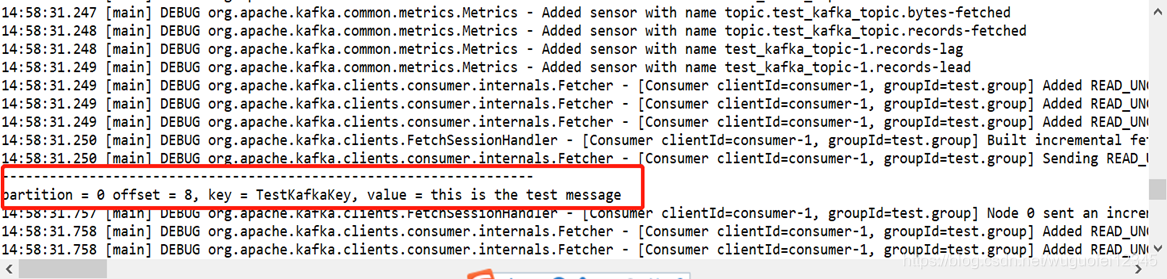

5. Start KafkaConsumerDemo

6. Start KafkaProducerDemo

7. The consumer prints the message sent by the producer, as shown in the figure
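The start order in steps 5 and 6 matters: a consumer group with no committed offsets begins at the end of the topic by default (`auto.offset.reset` defaults to `latest`), so a message sent before the consumer first starts would not be printed. The sketch below shows one way to relax that by setting `auto.offset.reset` to `earliest`. The class name `ConsumerConfigSketch` and the method `consumerPropsFromBeginning` are hypothetical and not part of the demo project.

```java
import java.util.Properties;

// Sketch only: ConsumerConfigSketch and consumerPropsFromBeginning are hypothetical names.
class ConsumerConfigSketch {

    // Builds consumer properties like initKfkaConsumer(), plus auto.offset.reset.
    static Properties consumerPropsFromBeginning(String bootstrapServers, String groupId) {
        Properties props = new Properties();
        props.put("bootstrap.servers", bootstrapServers);
        props.put("key.deserializer", "org.apache.kafka.common.serialization.StringDeserializer");
        props.put("value.deserializer", "org.apache.kafka.common.serialization.StringDeserializer");
        props.put("group.id", groupId);
        // When the group has no committed offset, start from the oldest available record.
        props.put("auto.offset.reset", "earliest");
        return props;
    }

    public static void main(String[] args) {
        Properties props = consumerPropsFromBeginning("localhost:9092", "test.group");
        System.out.println("auto.offset.reset = " + props.getProperty("auto.offset.reset"));
    }
}
```

This setting only applies when the group has no committed offsets. Once the group `test.group` has committed a position, the consumer resumes from there regardless of this value.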

Demo GitHub repository: https://github.com/wuguofei/kafkademo.git

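This article shows how to use Kafka from a Spring Boot project: it configures a Kafka producer and a consumer, and demonstrates sending and receiving a message with sample code.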
