This setup writes data into a fixed MySQL table. It needs a custom Flume sink that inserts the values into fixed columns.

First, create a Maven project for the custom jar:

pom.xml

```xml
<?xml version="1.0" encoding="UTF-8"?>
<project xmlns="http://maven.apache.org/POM/4.0.0"
         xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance"
         xsi:schemaLocation="http://maven.apache.org/POM/4.0.0 http://maven.apache.org/xsd/maven-4.0.0.xsd">
    <modelVersion>4.0.0</modelVersion>

    <groupId>com.jz.flume</groupId>
    <artifactId>flumeMysql</artifactId>
    <version>1.0-SNAPSHOT</version>

    <properties>
        <maven.compiler.target>1.8</maven.compiler.target>
        <maven.compiler.source>1.8</maven.compiler.source>
        <version.flume>1.8.0</version.flume>
    </properties>

    <dependencies>
        <dependency>
            <groupId>org.apache.flume</groupId>
            <artifactId>flume-ng-core</artifactId>
            <version>${version.flume}</version>
        </dependency>
        <dependency>
            <groupId>org.apache.flume</groupId>
            <artifactId>flume-ng-configuration</artifactId>
            <version>${version.flume}</version>
        </dependency>
        <!-- https://mvnrepository.com/artifact/mysql/mysql-connector-java -->
        <dependency>
            <groupId>mysql</groupId>
            <artifactId>mysql-connector-java</artifactId>
            <version>8.0.15</version>
        </dependency>
    </dependencies>
</project>
```

Write a Java bean that maps to the MySQL table:

Info.java

```java
package com.jz.flume;

// One row of flume_test: content -> content, createBy -> create_by
public class Info {

    private String content;
    private String createBy;

    public String getContent() {
        return content;
    }

    public void setContent(String content) {
        this.content = content;
    }

    public String getCreateBy() {
        return createBy;
    }

    public void setCreateBy(String createBy) {
        this.createBy = createBy;
    }
}
```
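The sink fills these two fields by splitting each event body at its first comma. That rule can be checked on its own without Flume or MySQL. The sketch below mirrors the sink's logic without changing it. The class `InfoBodyParser` and its small `Parsed` holder, which stands in for `Info`, are hypothetical names used only for illustration.

```java
public class InfoBodyParser {

    // Hypothetical stand-in for the Info bean, so this sketch compiles on its own
    static final class Parsed {
        final String content;
        final String createBy;

        Parsed(String content, String createBy) {
            this.content = content;
            this.createBy = createBy;
        }
    }

    // Same rule as MysqlSink.process(): split at the FIRST comma only.
    // Whitespace around the comma is kept; a body without a comma
    // leaves createBy null, which is written to MySQL as NULL.
    static Parsed parse(String body) {
        int comma = body.indexOf(',');
        if (comma < 0) {
            return new Parsed(body, null);
        }
        return new Parsed(body.substring(0, comma), body.substring(comma + 1));
    }

    public static void main(String[] args) {
        Parsed p = parse("exec tail1 , abel");
        System.out.println("[" + p.content + "][" + p.createBy + "]"); // [exec tail1 ][ abel]
    }
}
```

Nothing is trimmed. A body such as `exec tail1 , abel` therefore yields content `"exec tail1 "` and createBy `" abel"`, with the surrounding spaces included. If you do not want those spaces stored, call `trim()` on both substrings.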

Next, write the sink that Flume calls, MysqlSink.java:

```java
// Same package as Info and as the type configured in the Flume conf (com.jz.flume.MysqlSink)
package com.jz.flume;

import com.google.common.base.Preconditions;
import com.google.common.collect.Lists;
import org.apache.flume.*;
import org.apache.flume.conf.Configurable;
import org.apache.flume.sink.AbstractSink;
import org.slf4j.Logger;
import org.slf4j.LoggerFactory;

import java.nio.charset.StandardCharsets;
import java.sql.Connection;
import java.sql.DriverManager;
import java.sql.PreparedStatement;
import java.sql.SQLException;
import java.util.List;

public class MysqlSink extends AbstractSink implements Configurable {

    private static final Logger LOG = LoggerFactory.getLogger(MysqlSink.class);

    private String hostname;
    private String port;
    private String databaseName;
    private String tableName;
    private String user;
    private String password;
    private PreparedStatement preparedStatement;
    private Connection conn;
    private int batchSize;

    public MysqlSink() {
        LOG.info("MysqlSink start...");
    }

    @Override
    public void configure(Context context) {
        hostname = context.getString("hostname");
        Preconditions.checkNotNull(hostname, "hostname must be set!!");
        port = context.getString("port");
        Preconditions.checkNotNull(port, "port must be set!!");
        databaseName = context.getString("databaseName");
        Preconditions.checkNotNull(databaseName, "databaseName must be set!!");
        tableName = context.getString("tableName");
        Preconditions.checkNotNull(tableName, "tableName must be set!!");
        user = context.getString("user");
        Preconditions.checkNotNull(user, "user must be set!!");
        password = context.getString("password");
        Preconditions.checkNotNull(password, "password must be set!!");
        batchSize = context.getInteger("batchSize", 100);
        // checkArgument evaluates the condition; checkNotNull on a boolean never fails
        Preconditions.checkArgument(batchSize > 0, "batchSize must be a positive number!!");
    }

    @Override
    public void start() {
        super.start();
        try {
            // Load the JDBC driver; Connector/J 8.x uses com.mysql.cj.jdbc.Driver
            Class.forName("com.mysql.cj.jdbc.Driver");
        } catch (ClassNotFoundException e) {
            throw new FlumeException("MySQL JDBC driver not found in Flume's lib directory", e);
        }
        String url = "jdbc:mysql://" + hostname + ":" + port + "/" + databaseName;
        try {
            // Get a Connection from DriverManager and commit manually, once per batch
            conn = DriverManager.getConnection(url, user, password);
            conn.setAutoCommit(false);
            // Prepare the INSERT for the fixed columns
            preparedStatement = conn.prepareStatement("insert into " + tableName +
                    " (content,create_by) values (?,?)");
        } catch (SQLException e) {
            // Fail this sink instead of calling System.exit() on the whole agent
            throw new FlumeException("Could not connect to " + url, e);
        }
    }

    @Override
    public void stop() {
        super.stop();
        if (preparedStatement != null) {
            try {
                preparedStatement.close();
            } catch (SQLException e) {
                LOG.warn("Failed to close PreparedStatement", e);
            }
        }
        if (conn != null) {
            try {
                conn.close();
            } catch (SQLException e) {
                LOG.warn("Failed to close Connection", e);
            }
        }
    }

    @Override
    public Status process() throws EventDeliveryException {
        Status result = Status.READY;
        Channel channel = getChannel();
        Transaction transaction = channel.getTransaction();
        List<Info> infos = Lists.newArrayList();
        transaction.begin();
        try {
            for (int i = 0; i < batchSize; i++) {
                Event event = channel.take();
                if (event == null) {
                    // Channel is empty: write what we have, then ask the runner to back off
                    result = Status.BACKOFF;
                    break;
                }
                // The event body looks like "exec tail$i , abel"
                // (assumes the producer writes UTF-8; change the charset if it does not)
                String content = new String(event.getBody(), StandardCharsets.UTF_8);
                Info info = new Info();
                int comma = content.indexOf(",");
                if (comma >= 0) {
                    // Text before the first comma goes to content
                    info.setContent(content.substring(0, comma));
                    // Text after it goes to create_by (+1 skips the comma itself)
                    info.setCreateBy(content.substring(comma + 1));
                } else {
                    info.setContent(content);
                }
                infos.add(info);
            }
            if (!infos.isEmpty()) {
                preparedStatement.clearBatch();
                for (Info temp : infos) {
                    preparedStatement.setString(1, temp.getContent());
                    preparedStatement.setString(2, temp.getCreateBy());
                    preparedStatement.addBatch();
                }
                preparedStatement.executeBatch();
                conn.commit();
            }
            transaction.commit();
        } catch (Exception e) {
            // Undo the partial MySQL batch as well as the Flume transaction
            try {
                conn.rollback();
            } catch (SQLException e1) {
                LOG.error("MySQL rollback failed.", e1);
            }
            try {
                transaction.rollback();
            } catch (Exception e2) {
                LOG.error("Exception in rollback. Rollback might not have been " +
                        "successful.", e2);
            }
            LOG.error("Failed to commit transaction. " +
                    "Transaction rolled back.", e);
            throw new EventDeliveryException("Failed to write events to MySQL", e);
        } finally {
            transaction.close();
        }
        return result;
    }
}
```
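process() follows the usual Flume cycle. It takes up to batchSize events inside one channel transaction, writes them to MySQL, and then commits both. If anything fails, it rolls back so the events stay in the channel. The sketch below shows that cycle without Flume. A `Deque` stands in for the channel and a `Consumer` stands in for the JDBC batch plus `conn.commit()`. `BatchDrainSketch` and `processOnce` are hypothetical names, and the rollback only roughly models what the memory channel does with taken events.

```java
import java.util.ArrayDeque;
import java.util.ArrayList;
import java.util.Deque;
import java.util.List;
import java.util.function.Consumer;

public class BatchDrainSketch {

    enum Status { READY, BACKOFF }

    // One simulated process() call: the Deque plays the channel,
    // the writer plays executeBatch() + conn.commit().
    static Status processOnce(Deque<String> channel, int batchSize,
                              Consumer<List<String>> writer) {
        List<String> taken = new ArrayList<>();
        Status status = Status.READY;
        for (int i = 0; i < batchSize; i++) {
            String event = channel.pollFirst();
            if (event == null) {
                // Channel drained: still write what was taken, then back off
                status = Status.BACKOFF;
                break;
            }
            taken.add(event);
        }
        try {
            if (!taken.isEmpty()) {
                writer.accept(taken);
            }
            return status; // corresponds to transaction.commit()
        } catch (RuntimeException e) {
            // Rollback: put the taken events back at the head, preserving their order
            for (int i = taken.size() - 1; i >= 0; i--) {
                channel.addFirst(taken.get(i));
            }
            throw e;
        }
    }

    public static void main(String[] args) {
        Deque<String> channel = new ArrayDeque<>();
        channel.add("e1");
        channel.add("e2");
        channel.add("e3");
        List<String> table = new ArrayList<>();
        System.out.println(processOnce(channel, 2, table::addAll)); // READY
        System.out.println(processOnce(channel, 2, table::addAll)); // BACKOFF
        System.out.println(table); // [e1, e2, e3]
    }
}
```

Because MySQL is committed first and the channel second, a failure in `transaction.commit()` after a successful `conn.commit()` causes the same events to be delivered again. The table can therefore receive duplicates (at-least-once delivery).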

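Next, build the Maven project into a jar (for example with `mvn package`, which produces target/flumeMysql-1.0-SNAPSHOT.jar). Copy that jar into Flume's lib directory, along with the mysql-connector-java jar.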

Then write the Flume configuration file, conf/kafka_mysql.conf:

```properties
agent1.sources = s1
agent1.sinks = mysqlSink
agent1.channels = c1

agent1.sources.s1.type = org.apache.flume.source.kafka.KafkaSource
agent1.sources.s1.channels = c1
agent1.sources.s1.batchSize = 100
agent1.sources.s1.batchDurationMillis = 1000
agent1.sources.s1.kafka.bootstrap.servers = 888.888.88.8:8888
agent1.sources.s1.kafka.topics = katest
agent1.sources.s1.kafka.consumer.group.id = ka1
agent1.sources.s1.inputCharset = GBK

agent1.sinks.mysqlSink.type = com.jz.flume.MysqlSink
agent1.sinks.mysqlSink.hostname=88.888.88.8
agent1.sinks.mysqlSink.port=88888
agent1.sinks.mysqlSink.databaseName=etltest
agent1.sinks.mysqlSink.tableName=flume_test
agent1.sinks.mysqlSink.user=8888
agent1.sinks.mysqlSink.password=88888888
agent1.sinks.mysqlSink.channel = c1

agent1.channels.c1.type = memory
agent1.channels.c1.capacity = 1000
agent1.channels.c1.transactionCapacity = 100
```
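Some of these sizes must be consistent with each other. The memory channel rejects a transactionCapacity larger than its capacity. A source or sink that moves more events in one transaction than transactionCapacity fails with a `ChannelException`. A key the channel does not read, such as a misspelled one, is silently ignored and the default applies. Flume reads this file as a Java properties file, so `java.util.Properties` can load it unchanged. The offline check below is a sketch that assumes the agent and component names used above and Flume 1.8's documented defaults (memory channel capacity and transactionCapacity of 100, Kafka source batchSize of 1000). `FlumeConfigCheck` and its list of recognized channel keys are hypothetical.

```java
import java.io.IOException;
import java.io.Reader;
import java.nio.file.Files;
import java.nio.file.Paths;
import java.util.ArrayList;
import java.util.Arrays;
import java.util.HashSet;
import java.util.List;
import java.util.Properties;
import java.util.Set;

public class FlumeConfigCheck {

    private static final String CHANNEL_PREFIX = "agent1.channels.c1.";

    // Assumed list of memory-channel keys; anything else under c1 is reported
    private static final Set<String> MEMORY_CHANNEL_KEYS = new HashSet<>(Arrays.asList(
            "type", "capacity", "transactionCapacity", "keep-alive",
            "byteCapacityBufferPercentage", "byteCapacity"));

    static List<String> check(Properties p) {
        List<String> problems = new ArrayList<>();
        int capacity = intProp(p, CHANNEL_PREFIX + "capacity", 100);
        int txCapacity = intProp(p, CHANNEL_PREFIX + "transactionCapacity", 100);
        int sourceBatch = intProp(p, "agent1.sources.s1.batchSize", 1000);
        int sinkBatch = intProp(p, "agent1.sinks.mysqlSink.batchSize", 100); // MysqlSink default
        if (txCapacity > capacity) {
            problems.add("transactionCapacity " + txCapacity + " > capacity " + capacity);
        }
        if (sourceBatch > txCapacity) {
            problems.add("source batchSize " + sourceBatch + " > transactionCapacity " + txCapacity);
        }
        if (sinkBatch > txCapacity) {
            problems.add("sink batchSize " + sinkBatch + " > transactionCapacity " + txCapacity);
        }
        for (String key : p.stringPropertyNames()) {
            if (key.startsWith(CHANNEL_PREFIX)
                    && !MEMORY_CHANNEL_KEYS.contains(key.substring(CHANNEL_PREFIX.length()))) {
                problems.add("unknown channel key (ignored, default used): " + key);
            }
        }
        return problems;
    }

    private static int intProp(Properties p, String key, int defaultValue) {
        String value = p.getProperty(key);
        // Properties keeps trailing spaces in values, so trim before parsing
        return value == null ? defaultValue : Integer.parseInt(value.trim());
    }

    public static void main(String[] args) throws IOException {
        Properties p = new Properties();
        try (Reader r = Files.newBufferedReader(Paths.get(args[0]))) {
            p.load(r);
        }
        List<String> problems = check(p);
        System.out.println(problems.isEmpty() ? "sizes look consistent" : String.join("\n", problems));
    }
}
```

Pass the config path as the first argument, for example `java FlumeConfigCheck conf/kafka_mysql.conf`.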

Create the table in MySQL:

```sql
create table flume_test (
    id integer primary key auto_increment,
    content varchar(20),
    create_by text
);
```
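MysqlSink inserts into the columns `content` and `create_by` of this table. It concatenates tableName from the config directly into the INSERT statement, because JDBC `?` placeholders can bind only values, not table names. The sketch below shows one way to guard that concatenation by accepting only plain identifiers of up to 64 characters (MySQL's identifier length limit) and backtick-quoting them. `InsertSqlBuilder` and `insertSql` are hypothetical names, and the pattern is deliberately stricter than what MySQL allows.

```java
import java.util.regex.Pattern;

public class InsertSqlBuilder {

    // Letters, digits and underscore only, not starting with a digit, at most 64 chars
    private static final Pattern IDENTIFIER = Pattern.compile("[A-Za-z_][A-Za-z0-9_]{0,63}");

    static String insertSql(String tableName) {
        if (!IDENTIFIER.matcher(tableName).matches()) {
            throw new IllegalArgumentException("unsafe table name: " + tableName);
        }
        // Backticks quote the identifier in MySQL; the column list matches flume_test
        return "insert into `" + tableName + "` (content,create_by) values (?,?)";
    }

    public static void main(String[] args) {
        System.out.println(insertSql("flume_test"));
    }
}
```

Note also that `content` is `varchar(20)`. Under MySQL's strict SQL mode (the default since 5.7), a longer value makes the batch insert fail instead of being truncated.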

Start Flume:

```shell
./bin/flume-ng agent -c /data/flume/apache-flume-1.8.0-bin/conf -f /data/flume/apache-flume-1.8.0-bin/conf/kafka_mysql.conf -n agent1 -Dflume.root.logger=INFO,console
```

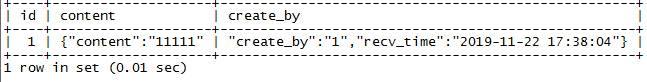

Send some messages to Kafka and check whether they arrive in MySQL:

The data was stored successfully.

Reference:

https://blog.youkuaiyun.com/u012373815/article/details/54098581
