本次的作业要求来自:https://edu.cnblogs.com/campus/gzcc/GZCC-16SE2/homework/2941

爬取网页的URL是:http://news.gzcc.cn/html/2005/xiaoyuanxinwen_0710/2.html

源代码如下:

#!/usr/bin/env python # _*_ coding:utf-8 _* import pandas import requests from bs4 import BeautifulSoup from datetime import datetime import re import pandas as pd import sqlite3 def click(url): id=re.findall('(\d{1,5})',url)[-1] clickUrl='http://oa.gzcc.cn/api.php?op=count&id={}&modelid=80'.format(id) resClick=requests.get(clickUrl) newsClick=int(resClick.text.split('.html')[-1].lstrip("('").rstrip("');")) return newsClick def newsdt(showinfo): newsDate=showinfo.split()[0].split(":")[1] newsTime=showinfo.split()[1] newsDT=newsDate+' '+newsTime dt=datetime.strptime(newsDT,'%Y-%m-%d %H:%M:%S') return dt def anews(url): newsDetail={} res=requests.get(url) res.encoding='utf-8' soup=BeautifulSoup(res.text,'html.parser') newsDetail['nenewsTitle']=soup.select('.show-title')[0].text showinfo=soup.select('.show-info')[0].text newsDetail['newsDT']=newsdt(showinfo) newsDetail['newsClick']=click(newsUrl) return newsDetail def alist(url): res=requests.get(listUrl) res.encoding='utf-8' soup = BeautifulSoup(res.text,'html.parser') newsList=[] for news in soup.select('li'): if len(news.select('.news-list-title'))>0: newsUrl=news.select('a')[0]['href'] newsDesc=news.select('.news-list-description')[0].text newsDict=anews(newsUrl) newsDict['description']=newsDesc newsList.append(newsDict) return newsList newsUrl='http://news.gzcc.cn/html/2005/xiaoyuanxinwen_0710/2.html' anews(newsUrl) res = requests.get('http://news.gzcc.cn/html/xiaoyuanxinwen/') res.encoding='utf-8' soup = BeautifulSoup(res.text,'html.parser') for news in soup.select('li'): if len(news.select('.news-list-title'))>0: newsUrl=news.select('a')[0]['href'] print(anews(newsUrl)) listUrl='http://news.gzcc.cn/html/xiaoyuanxinwen/' res=requests.get(listUrl) res.encoding='utf-8' soup = BeautifulSoup(res.text,'html.parser') newsList=[] for news in soup.select('li'): if len(news.select('.news-list-title'))>0: newsUrl=news.select('a')[0]['href'] newsDesc=news.select('.news-list-description')[0].text newsDict=anews(newsUrl) newsDict['description']=newsDesc newsList.append(newsDict) print(newsList) alist(listUrl) allnews=[] for i in range(73,83): listUrl='http://news.gzcc.cn/html/xiaoyuanxinwen/{}.html'.format(i) allnews.extend(alist(listUrl)) len(allnews) newsdf = pd.DataFrame(allnews) newsdf.to_csv(r'D:\office\gzcc.csv') with sqlite3.connect('gzccnewsdb.sqlite') as db: newsdf.to_sql('gzccnewsdb',db)

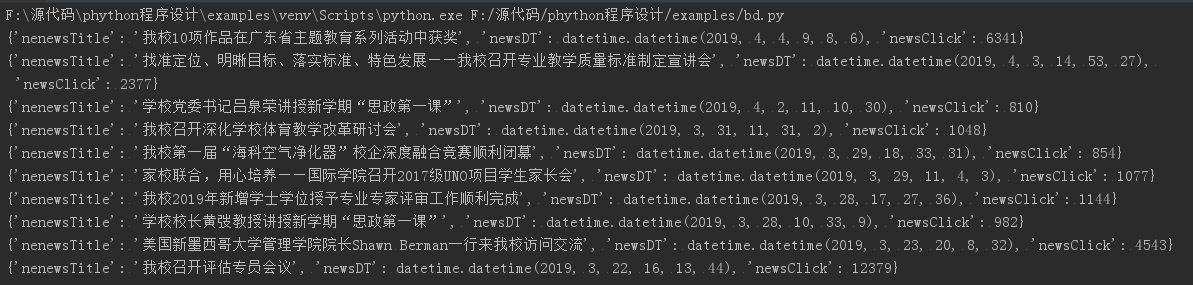

.从新闻url获取新闻详情: 字典,anews。运行结果如下:

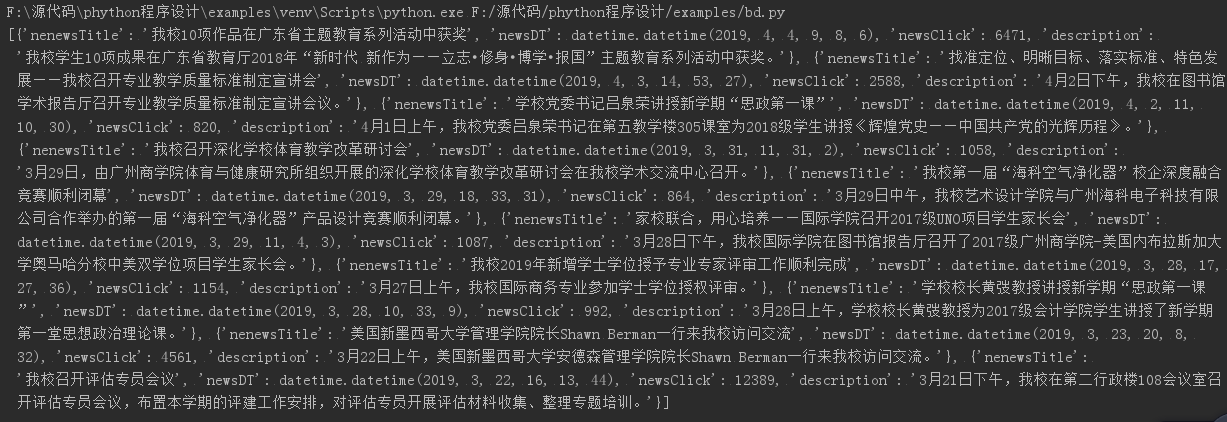

从列表页的url获取新闻url:列表append(字典) alist。运行结果如下:

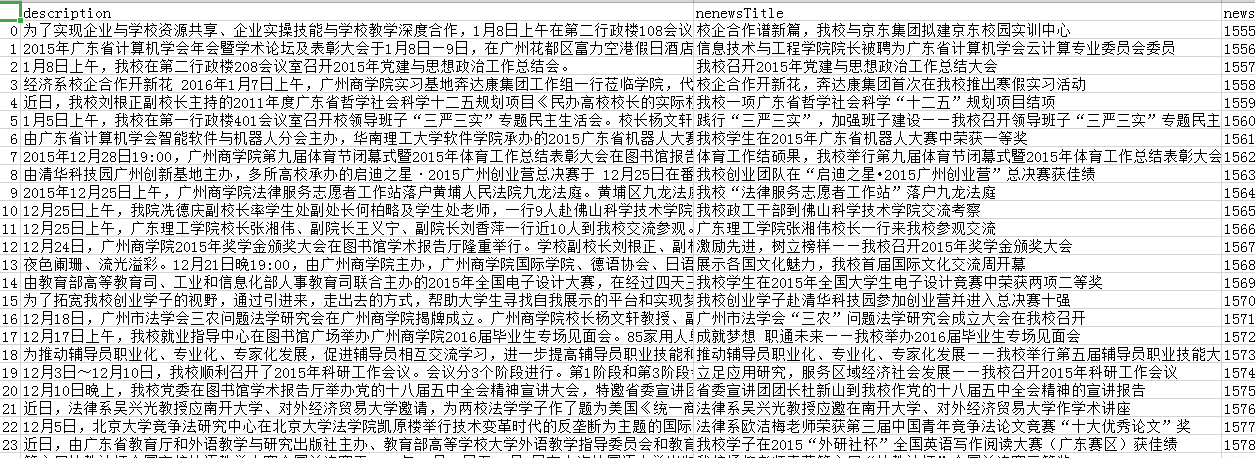

生成所页列表页的url并获取全部新闻 :列表extend(列表) allnews,保存到csv文件。运行结果如下:

本文详细介绍了使用Python进行网络爬虫的实际操作,通过BeautifulSoup和requests库,从广州城市职业学院网站抓取新闻列表及详情,包括标题、发布时间、点击数等信息,并将数据整理为DataFrame,最终保存为CSV文件和SQLite数据库。

本文详细介绍了使用Python进行网络爬虫的实际操作,通过BeautifulSoup和requests库,从广州城市职业学院网站抓取新闻列表及详情,包括标题、发布时间、点击数等信息,并将数据整理为DataFrame,最终保存为CSV文件和SQLite数据库。

136

136

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?