文章目录

安装scrapy_redis

修改start_urls,settings就能实现简单分布式爬虫

pip install scrapy_redis

简单分布式,主机redis实现request去重、数据存储;虚拟机爬取、解析数据

spider修改

主要是不需要start_urls,改为redis_key = 'fang:start'

在主机端Redis中lpush fang:start https://www.fang.com/SoufunFamily.htm,部署的云端虚拟机就开始爬取信息了

# -*- coding: utf-8 -*-

import scrapy

import re

from Fang.items import FangItem

from urllib import request

from scrapy_redis.spiders import RedisSpider

class SfwSpider(RedisSpider):

name = 'sfw'

allowed_domains = ['fang.com']

#在主机端Redis中lpush fang:start https://www.fang.com/SoufunFamily.htm

#部署的云端虚拟机就开始爬取信息了

redis_key = 'fang:start'

def parse(self, response):

trs = response.xpath('//div[@class="outCont"]//tr')

province = None

#解析省份

for tr in trs:

tds = tr.xpath(".//td[2]//text()").extract()

if '\xa0' not in tds:

province_td = tds[0]

province = province_td

else:

province_td = province

#海外不要

if province == '其它':

continue

#分别解析城市和城市链接

citys = tr.xpath(".//td[3]/a")

for city_a in citys:

city = city_a.xpath("./text()").extract()[0]

city_href = city_a.xpath("./@href").extract()[0]

print(province_td,city,city_href)

# 构建新房、二手房链接

url_base = city_href.split('.')

scheme = url_base[0]

domain = url_base[1] + '.' + url_base[2]

#北京特殊

if 'bj' in scheme:

newhouse_url = 'https://newhouse.fang.com/house/s/'

esf_url = 'https://esf.fang.com/'

else:

newhouse_url = scheme + '.' + 'newhouse' + '.' + domain + 'house/s/'

esf_url = scheme + '.' + 'esf' + '.' + domain

print(newhouse_url,esf_url)

yield scrapy.Request(url=newhouse_url,callback=self.parse_newhouse,meta={'info':(province_td,city)})

break

break

#详情页解析

def parse_newhouse(self,response):

province,city = response.meta.get('info')

lis = response.xpath("//div[@class='nl_con clearfix']/ul/li")

for li in lis:

name_mid = li.xpath('.//div[@class="nlcd_name"]/a/text()').extract()

#数据清洗,去除干扰数据

if name_mid != []:

name = name_mid[0].strip()

# print(name)

price_mid = li.xpath('.//div[@class="nhouse_price"]//text()').extract()

if price_mid != []:

price = ''.join(price_mid)

price = re.sub(r'广告|\s','',price)

print(price)

jushi_mid = li.xpath('.//div[@class="house_type clearfix"]/a/text()').extract()

if jushi_mid != []:

jushi = jushi_mid

jushi = list(map(lambda x:re.sub(r'\s','',x),jushi))

jushi = list(filter(lambda x:x.endswith('居'),jushi))

# print(jushi)

area_mid = li.xpath('.//div[@class="house_type clearfix"]/text()').extract()

if area_mid != []:

area = area_mid

area = list(map(lambda x:re.sub(r'-|/|\s','',x),area))

area = list(filter(lambda x:x.endswith('平米'),area))

# print(area)

address_mid = li.xpath('.//div[@class="address"]/a/@title').extract()

if address_mid != []:

address = address_mid[0]

# print(address)

onsale_mid = li.xpath('.//div[@class="fangyuan"]/span/text()').extract()

if onsale_mid != []:

onsale = onsale_mid[0]

# print(onsale)

origin_mid = li.xpath('.//div[@class="nlcd_name"]/a/@href').extract()

if origin_mid != []:

origin = origin_mid[0]

print(origin)

# print(province,city,jushi,area,address,onsale,origin)

item = FangItem(province=province,city=city,jushi=jushi,area=area,address=address,onsale=onsale,origin=origin)

yield item

next_url = response.xpath('//div[@class="page"]//a[@class="next"]/@href').get()

next_url = request.urljoin(response.url,next_url)

if next_url:

yield scrapy.Request(url=next_url,callback=self.parse_newhouse,meta={'info':(province_td,city)})

items

import scrapy

class FangItem(scrapy.Item):

province = scrapy.Field()

city = scrapy.Field()

name = scrapy.Field()

price = scrapy.Field()

jushi = scrapy.Field()

area = scrapy.Field()

address = scrapy.Field()

onsale = scrapy.Field()

origin = scrapy.Field()

中间件随机请求头

import random

class FangDownloaderMiddleware(object):

def process_request(self, request, spider):

#随机请求头

USER_AGENT = [

'Mozilla/4.0 (compatible; MSIE 10.0; Windows NT 6.1; Trident/5.0)',

'Mozilla/1.22 (compatible; MSIE 10.0; Windows 3.1)',

'Mozilla/5.0 (Windows; U; MSIE 9.0; WIndows NT 9.0; en-US))',

'Mozilla/5.0 (Windows; U; MSIE 9.0; Windows NT 9.0; en-US)',

'Mozilla/5.0 (compatible; MSIE 9.0; Windows NT 7.1; Trident/5.0)'

]

user_agent = random.choice(USER_AGENT)

request.headers['User-Agent'] = user_agent

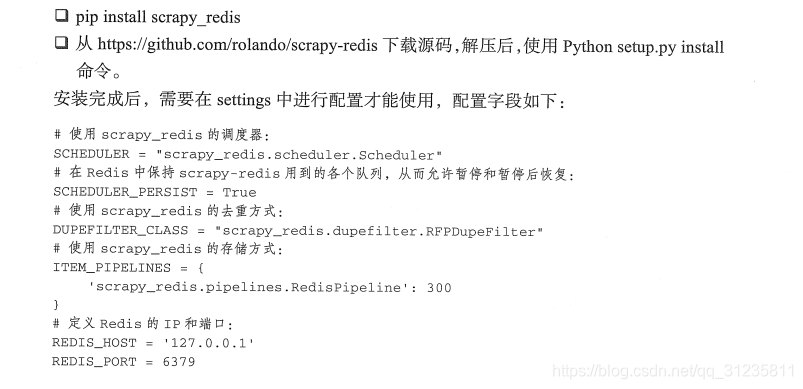

修改settings,将普通Scrapy改为分布式爬虫

#机器人协议

ROBOTSTXT_OBEY = False

DEFAULT_REQUEST_HEADERS = {

'Accept': 'text/html,application/xhtml+xml,application/xml;q=0.9,*/*;q=0.8',

'Accept-Language': 'en',

}

#启用随机请求头

DOWNLOADER_MIDDLEWARES = {

'Fang.middlewares.FangDownloaderMiddleware': 543,

}

# Scrapy-Redis相关配置

# 启用redis的调度器

SCHEDULER = "scrapy_redis.scheduler.Scheduler"

# 确保所有爬虫共享相同的去重指纹

DUPEFILTER_CLASS = "scrapy_redis.dupefilter.RFPDupeFilter"

# 启用RedisPipeline,item数据存储到redis数据库

ITEM_PIPELINES = {

'scrapy_redis.pipelines.RedisPipeline': 300

}

# 在redis中保持scrapy-redis用到的队列,停止爬取不会清理redis中的队列,从而可以实现暂停和恢复的功能。

SCHEDULER_PERSIST = True

# 设置连接redis信息

REDIS_HOST = '主机IP地址'

REDIS_PORT = 6379

本文介绍如何使用Scrapy-Redis实现分布式爬虫,通过主机的Redis进行request去重及数据存储,云端虚拟机负责抓取和解析数据。文章详细展示了代码修改过程,包括Spider类的调整、中间件的实现及settings配置。

本文介绍如何使用Scrapy-Redis实现分布式爬虫,通过主机的Redis进行request去重及数据存储,云端虚拟机负责抓取和解析数据。文章详细展示了代码修改过程,包括Spider类的调整、中间件的实现及settings配置。

5727

5727

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?