Kubernetes 全面学习指南:从部署到高级应用(10000+字)

前言

容器化技术的浪潮势不可挡,而Kubernetes(K8s)作为容器编排领域的领导者,已成为现代软件开发和运维的基石。本篇博客旨在超越基础概念,为您提供一份深入、全面、详尽的Kubernetes学习指南。我们将从集群的核心架构开始,逐步深入到Pod的生命周期管理、各种控制器的自动化能力、微服务的网络暴露、复杂的数据持久化,直至集群网络、调度和安全机制的深层原理。本指南包含丰富的理论剖析和实战代码,旨在帮助您真正掌握Kubernetes的精髓,而非仅仅停留在表面使用。

第一章:Kubernetes概述与部署

1.1 什么是Kubernetes?为什么需要它?

在没有Kubernetes的时代,管理大规模的容器化应用面临诸多挑战:如何高效地部署数百个容器?如果某个容器崩溃了如何自动重启?如何为容器提供统一的服务访问入口和负载均衡?这些问题,正是Kubernetes旨在解决的。

Kubernetes是一个可移植、可扩展的开源平台,用于管理容器化工作负载和服务。其核心思想是声明式配置:您只需描述期望的集群状态(例如,我需要三个Nginx容器副本),Kubernetes就会持续监控集群的当前状态,并自动采取行动以使其达到期望状态。这种“自愈”能力和自动化特性,极大地简化了运维复杂度,提升了应用的弹性和可用性。

1.2 Kubernetes核心架构与组件解析

一个典型的Kubernetes集群由一个**主节点(Control Plane)和多个工作节点(Worker Nodes)**组成。

主节点(Control Plane)组件

-

API Server:作为集群的唯一入口,所有对集群的操作(如创建Pod、查询状态)都必须通过API Server。它是一个RESTful API服务器,负责处理和验证所有API请求,并协调集群内各组件的通信。API Server的安全性至关重要,它通过认证、授权和准入控制机制来保护集群。

-

etcd:一个高可用的、强一致性的键值存储数据库。它保存了整个集群的所有状态数据,包括Pod、Service、ConfigMap、Secret等所有资源对象的定义和状态。etcd是Kubernetes集群的“大脑”,其稳定性和可用性直接决定了整个集群的健康。

-

Scheduler(调度器):负责将新创建的、未分配节点的Pod调度到最佳工作节点上。调度器通过一个复杂的过程来做出决策,包括:

-

过滤(Filtering):排除所有不满足Pod需求的节点(例如,资源不足、特定硬件要求)。

-

打分(Scoring):对剩下的可用节点进行打分,得分最高的节点被选中。打分规则包括资源利用率、节点亲和性/反亲和性等。

-

-

Controller Manager(控制器管理器):负责运行各种控制器。每个控制器都负责一个特定的任务,例如:

-

Deployment Controller:负责管理Deployment和ReplicaSet的生命周期。

-

ReplicaSet Controller:确保Pod的副本数量始终符合期望。

-

Endpoint Controller:填充Service和Pod之间的连接信息。

-

工作节点(Worker Node)组件

-

Kubelet:运行在每个工作节点上的代理。它接收API Server的指令,负责管理该节点上的Pod和容器的生命周期。Kubelet会定期向API Server汇报节点和Pod的状态,确保API Server中的状态是最新的。

-

Kube-Proxy:一个网络代理,负责为Service提供负载均衡和服务发现。它监听API Server中Service和Endpoint的变化,并根据这些变化配置节点上的网络规则(如iptables或IPVS),将流向Service IP的流量转发到后端Pod。

-

Container Runtime:负责真正运行容器的软件,例如Docker、containerd或CRI-O。Kubelet通过CRI(Container Runtime Interface)与容器运行时进行通信。

K8S 的 常用名词感念

Master:集群控制节点,每个集群需要至少一个master节点负责集群的管控Node:工作负载节点,由master分配容器到这些node工作节点上,然后node节点上的

Pod:kubernetes的最小控制单元,容器都是运行在pod中的,一个pod中可以有1个或者多个容器

Controller:控制器,通过它来实现对pod的管理,比如启动pod、停止pod、伸缩pod的数量等等

Service:pod对外服务的统一入口,下面可以维护者同一类的多个pod

Label:标签,用于对pod进行分类,同一类pod会拥有相同的标签

NameSpace:命名空间,用来隔离pod的运行环境

k8s 环境部署说明

| 主机名 | IP | 角色 |

| harbor | 172.25.254.200 | harbor仓库 |

| master | 172.25.254.100 | master,k8s集群控制节点 |

| node1 | 172.25.254.101 | worker,k8s集群工作节点 |

| node2 | 172.25.254.102 | worker,k8s集群工作节点 |

安装kubernetes集群

基础环境设置

- 所有节点时间同步

[root@master ~]#ntpdata ntp1.aliyun.com

## 如果没有ntpdate命令,则需要安装ntp

- 所有节点关闭防火墙

## 关闭防火墙

[root@master ~]# systemctl stop firewalld

[root@master ~]# systemctl disable firewalld

Removed symlink /etc/systemd/system/multi-user.target.wants/firewalld.service.

Removed symlink /etc/systemd/system/dbus-org.fedoraproject.FirewallD1.service.

[root@master ~]#

- 所有节点关闭selinux

## 关闭selinux

[root@master ~]# getenforce

Enforcing

[root@master ~]#

[root@master ~]# setenforce 0

[root@master ~]# getenforce

Permissive

[root@master ~]# sed -i 's/SELINUX=enforcing/SELINUX=permissive/g' /etc/selinux/config

- 所有节点关闭swap

## 临时关闭

[root@master ~]# free -m

total used free shared buff/cache available

Mem: 1819 201 1050 9 567 1457

Swap: 2047 0 2047

[root@master ~]# swapoff -a

[root@master ~]# free -m

total used free shared buff/cache available

Mem: 1819 200 1051 9 567 1457

Swap: 0 0 0

[root@master ~]#

## 永久关闭挂载

[root@master ~]#sed -i '/swap/ s/^/#/' /etc/fstab

- 所有节点修改内核参数,开启ip_fword

[root@master ~]#echo "net.ipv4.ip_forward = 1" >> /etc/sysctl.conf

[root@master ~]#sysctl -p

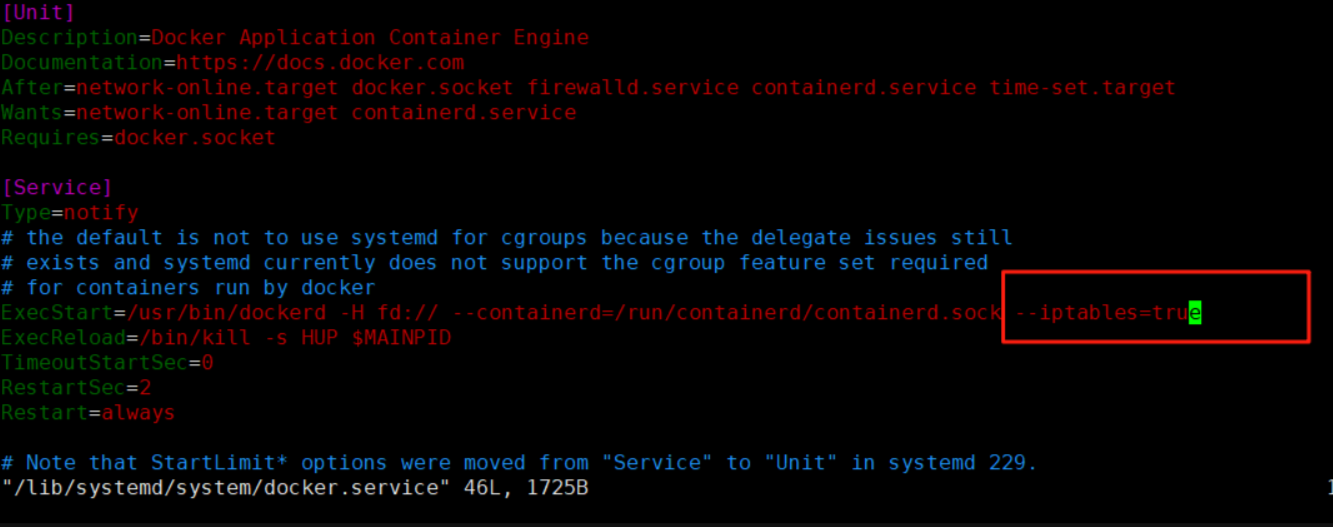

- 所有节点按照docker

dnf install *.rpm -y --allowerasing

vim /lib/systemd/system/docker.service

--iptables=true

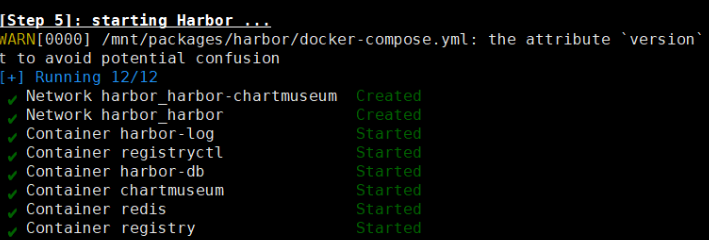

systemctl start docker解压harbor包

tar xzf harbor-offline-installer-v2.5.4.tgz 创建证书存放目录:

mkdir -p /data/certs生成证书:

openssl req -newkey rsa:4096 \

-nodes -sha256 -keyout /data/certs/rin.key \

-addext "subjectAltName = DNS:www.rin.com" \

-x509 -days 365 -out /data/certs/rin.crt编辑harbor仓库配置文件:

cp harbor.yml.tmpl harbor.yml

vim harbor.yml# The IP address or hostname to access admin UI and registry service.

# DO NOT use localhost or 127.0.0.1, because Harbor needs to be accessed by external clients.

hostname: www.rin.com

# https related config

https:

# https port for harbor, default is 443

port: 443

# The path of cert and key files for nginx

certificate: /data/certs/rin.crt

private_key: /data/certs/rin.key

# The initial password of Harbor admin

# It only works in first time to install harbor

# Remember Change the admin password from UI after launching Harbor.

harbor_admin_password: rin

# The default data volume

data_volume: /data

安装

./install.sh --with-chartmuseum

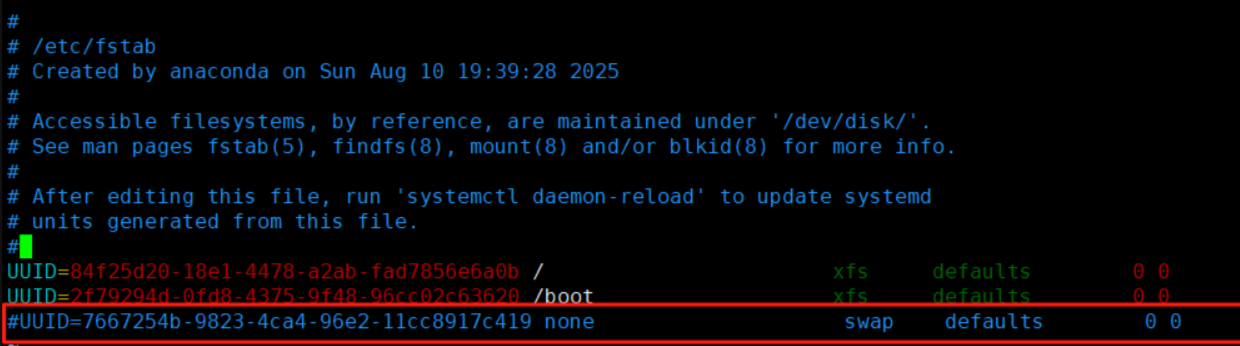

禁用swap

master和node配置:

systemctl mask swap.target

vim /etc/fstab

重新加载

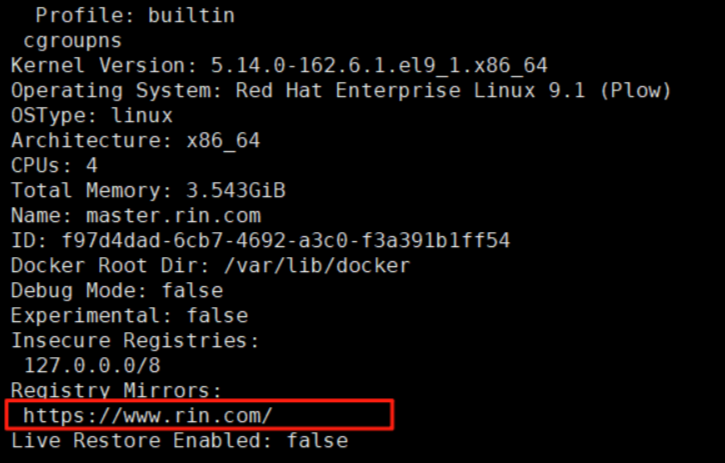

systemctl daemon-reload设置docker默认库

vim /etc/docker/daemon.json

{

"registry-mirrors": ["https://www.rin.com"]

}

覆盖

for i in 10 20 100;

do scp -r /etc/docker/daemon.json root@172.25.254.$i:/etc/docker/daemon.json;

done启动各节点的docker:

for i in 10 20 100;

do ssh root@172.25.254.$i "systemctl start docker";

done

配置解析

vim /etc/hosts

127.0.0.1 localhost localhost.localdomain localhost4 localhost4.localdomain4

::1 localhost localhost.localdomain localhost6 localhost6.localdomain6

172.25.254.100 master.rin.com

172.25.254.200 harbor.rin.com

172.25.254.10 node1.rin.com

172.25.254.20 node2.rin.com将解析分发覆盖各节点:

for i in 10 20 100;

do scp -r /etc/hosts root@172.25.254.$i:/etc/hosts;

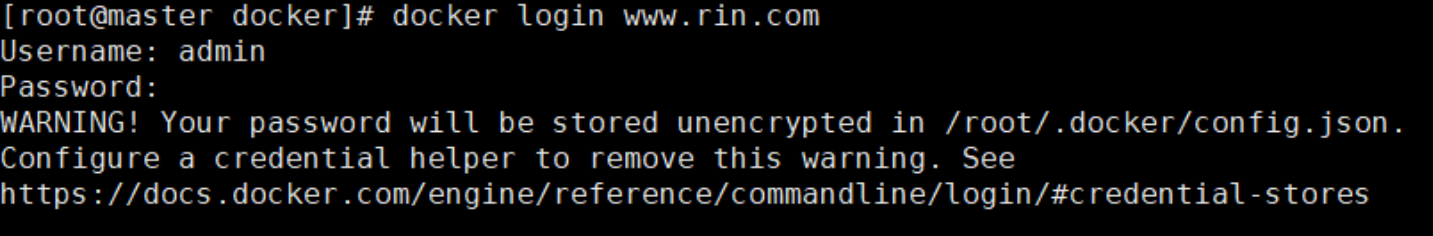

done登录harbor仓库:

安装K8S部署工具

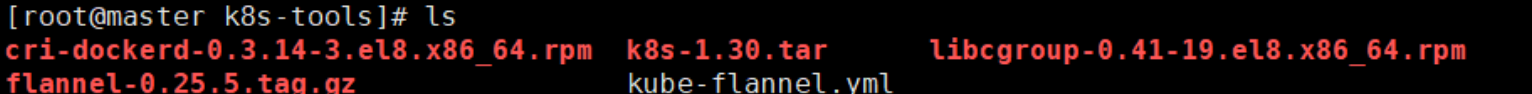

在所节点安装cri-docker

将tools目录传输到各节点:

for i in 10 20 100;

do scp -r /mnt/k8s-tools root@172.25.254.$i:/mnt;

done安装rpm包并配置

dnf install *.rpm -y

vim /lib/systemd/system/cri-docker.service

--network-plugin=cni --pod-infra-container-image=www.rin.com/k8s/pause:3.9分发覆盖

for i in 10 20 100;

do scp -r /lib/systemd/system/cri-docker.service

root@172.25.254.$i:/lib/systemd/system/cri-docker.service;

done启动cri插件

systemctl start dockersystemctl enable --now cri-docker.servicesystemctl start cri-docker.socket

systemctl start cri-docker

systemctl status cri-docker安装依赖

dnf install libnetfilter_conntrack -y安装解压好的所有rpm包

[root@master k8s-1.30]# ls

k8s-1.30.tar.gz

[root@master k8s-1.30]# tar -xzf k8s-1.30.tar.gz

[root@master k8s-1.30]# ls

conntrack-tools-1.4.7-2.el9.x86_64.rpm kubernetes-cni-1.4.0-150500.1.1.x86_64.rpm

cri-dockerd-0.3.14-3.el8.x86_64.rpm libcgroup-0.41-19.el8.x86_64.rpm

cri-tools-1.30.1-150500.1.1.x86_64.rpm libnetfilter_conntrack-1.0.9-1.el9.x86_64.rpm

k8s-1.30.tar.gz libnetfilter_cthelper-1.0.0-22.el9.x86_64.rpm

kubeadm-1.30.0-150500.1.1.x86_64.rpm libnetfilter_cttimeout-1.0.0-19.el9.x86_64.rpm

kubectl-1.30.0-150500.1.1.x86_64.rpm libnetfilter_queue-1.0.5-1.el9.x86_64.rpm

kubelet-1.30.0-150500.1.1.x86_64.rpm socat-1.7.4.1-5.el9.x86_64.rpm安装目录内的所有rpm包

dnf install *.rpm -y集群初始化

启动kubelet服务

systemctl enable --now kubelet.service

初始化:

kubeadm init

--pod-network-cidr=10.244.0.0/16

--image-repository www.rin.com/k8s

--kubernetes-version v1.30.0

--cri-socket=unix:///var/run/cri-dockerd.sock

指定集群配置文件变量

echo "export KUBECONFIG=/etc/kubernetes/admin.conf" >> ~/.bash_profile

export KUBECONFIG=/etc/kubernetes/admin.conf

echo $KUBECONFIG

kubectl get nodes

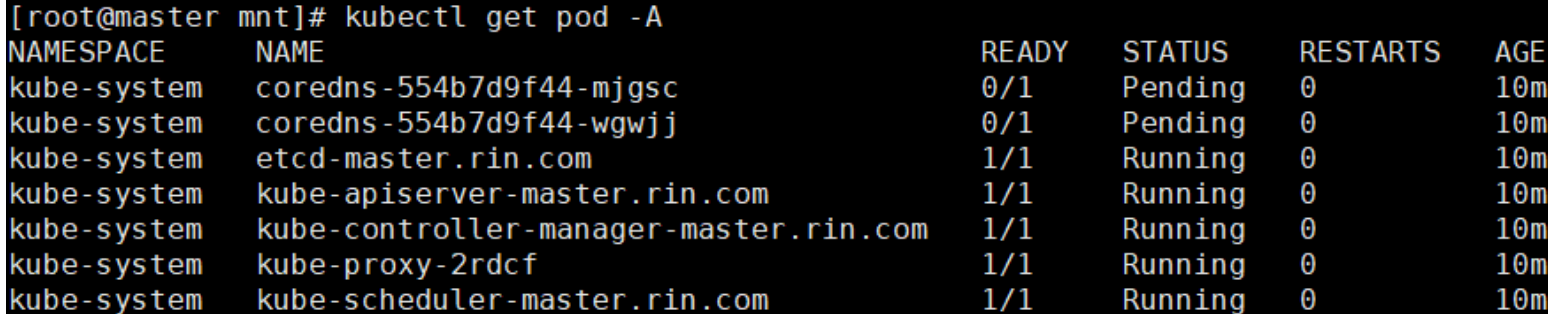

安装flannel网络插件

下载镜像:

#上传镜像到仓库

[root@k8s-master ~]# docker tag flannel/flannel:v0.25.5 \

reg.wjy.org/flannel/flannel:v0.25.5

[root@k8s-master ~]# docker push reg.wjy.org/flannel/flannel:v0.25.5

[root@k8s-master ~]# docker tag flannel/flannel-cni-plugin:v1.5.1-flannel1 \

reg.wjy.org/flannel/flannel-cni-plugin:v1.5.1-flannel1

[root@k8s-master ~]# docker push reg.wjy.org/flannel/flannel-cni-plugin:v1.5.1-flannel1

#编辑kube-flannel.yml 修改镜像下载位置

[root@k8s-master ~]# vim kube-flannel.yml

#需要修改以下几行

[root@k8s-master ~]# grep -n image kube-flannel.yml

146: image: reg.wjy.org/flannel/flannel:v0.25.5

173: image: reg.wjy.org/flannel/flannel-cni-plugin:v1.5.1-flannel1

184: image: reg.wjy.org/flannel/flannel:v0.25.5

#安装flannel网络插件

[root@k8s-master ~]# kubectl apply -f kube-flannel.yml节点扩容

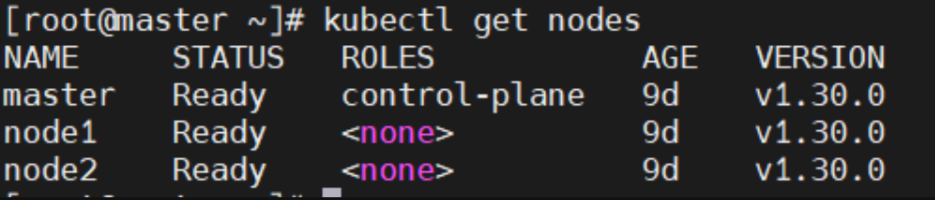

kubeadm join 172.25.254.100:6443 --token a4l2fc.ahmfiubi738p3iup --discovery-token-ca-cert-hash sha256:b99fd92564901bbe4c29b376a8616224ebc05279bf4212f775aaa985592cf169 --cri-socket=unix:///var/run/cri-dockerd.sock测试

[root@master k8s-tools]# kubectl get nodes

NAME STATUS ROLES AGE VERSION

master.rin.com Ready control-plane 41m v1.30.0

node1.rin.com Ready <none> 3m7s v1.30.0

node2.rin.com Ready <none> 2m27s v1.30.0查看所有node的状态

[root@k8s-node1 & 2 ~]# kubeadm join 172.25.254.100:6443 --token 5hwptm.zwn7epa6pvatbpwf --discovery-token-ca-cert-hash sha256:52f1a83b70ffc8744db5570288ab51987ef2b563bf906ba4244a300f61e9db23 --cri-socket=unix:///var/run/cri-dockerd.sock

pod

Pod 是 Kubernetes 部署应用程序的基本单元,也是 Kubernetes 中最小的可部署对象。一个 Pod 封装了一个或多个应用程序容器(例如 Docker),以及存储资源、唯一的网络 IP 和控制这些容器如何运行的选项。

2.1 什么是资源

在 Kubernetes 中,所有的内容都抽象为资源,用户需要通过操作资源来管理 Kubernetes。

-

Kubernetes 的最小管理单元是 Pod 而不是容器,只能将容器放在

Pod中。 -

Kubernetes 一般不会直接管理 Pod,而是通过

Pod 控制器来管理 Pod 的。 -

Pod 中服务的访问是由 Kubernetes 提供的

Service资源来实现。 -

Pod 中程序的数据需要持久化是由 Kubernetes 提供的各种存储系统来实现。

2.2 Pod 生命周期与状态

Pod 的状态包括 Pending、Running、Succeeded、Failed 和 Unknown。这些状态由镜像拉取、调度、kubelet 和容器运行时共同决定。

2.3 健康检查

健康检查确保应用的可用性,主要包括三种探针:

-

livenessProbe:判活,失败后重启容器。

-

readinessProbe:判就绪,失败后摘除 Service 流量。

-

startupProbe:为启动较慢的应用提供“缓冲期”。

2.4 资源管理与 QoS

-

requests:决定 Pod 调度的资源下限。

-

limits:设定 Pod 资源的上限。

根据 requests 和 limits 的配置,Pod 的 QoS(服务质量)等级分为:Guaranteed(requests=limits)、Burstable(部分设置)和 BestEffort(未设置)。

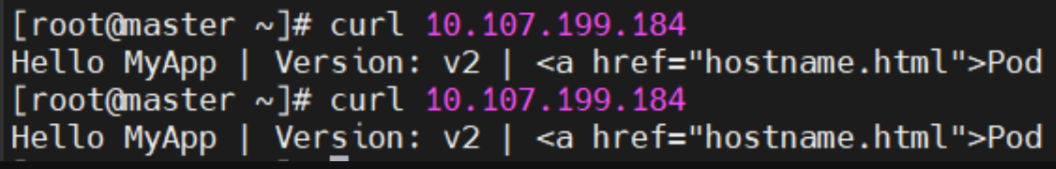

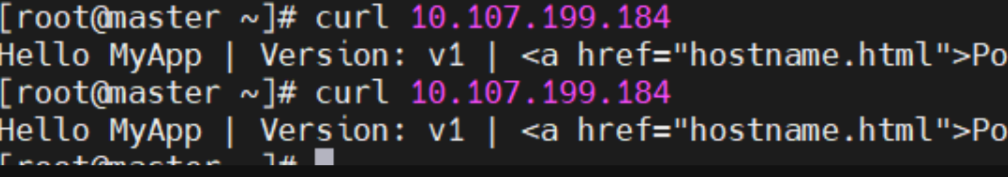

应用版本更新回滚

kubectl create deployment timinglee --image myapp:v1 --replicas 2kubectl expose deployment timinglee --port 80 --target-port 80

历史更新

kubectl rollout history deployment timinglee

更新控制器镜像版本

kubectl set image deployments/timinglee myapp=myapp:v2访问内容测试

版本回滚

kubectl rollout undo deployment timinglee --to-revision 1

2.5 资源管理方式

Kubernetes 资源管理有三种方式:

-

命令式对象管理:直接使用命令操作资源。适用于测试环境,简单但不易审计。

kubectl run nginx-pod --image=nginx:latest --port=80 -

命令式对象配置:通过命令和配置文件操作资源。适用于开发环境,可审计,但配置文件多时操作麻烦。

kubectl create/patch -f nginx-pod.yaml -

声明式对象配置:通过

apply命令和配置文件操作资源。支持目录操作,是生产环境的推荐方式。kubectl apply -f nginx-pod.yaml

2.6运行简单的单个容器pod

用命令获取yaml模板

[root@k8s-master ~]# kubectl run timinglee --image myapp:v1 --dry-run=client -o yaml > pod.yml

[root@k8s-master ~]# vim pod.yml

apiVersion: v1

kind: Pod

metadata:

labels:

run: timing #pod标签

name: timinglee #pod名称

spec:

containers:

- image: myapp:v1 #pod镜像

name: timinglee #容器名称2.7运行多个容器pod

注意:如果多个容器运行在一个pod中,资源共享的同时在使用相同资源时也会干扰,比如端口

#一个端口干扰示例:

[root@k8s-master ~]# vim pod.yml

apiVersion: v1

kind: Pod

metadata:

labels:

run: timing

name: timinglee

spec:

containers:

- image: nginx:latest

name: web1

- image: nginx:latest

name: web2

[root@k8s-master ~]# kubectl apply -f pod.yml

pod/timinglee created

[root@k8s-master ~]# kubectl get pods

NAME READY STATUS RESTARTS AGE

timinglee 1/2 Error 1 (14s ago) 18s

#查看日志

[root@k8s-master ~]# kubectl logs timinglee web2

2024/08/31 12:43:20 [emerg] 1#1: bind() to [::]:80 failed (98: Address already in use)

nginx: [emerg] bind() to [::]:80 failed (98: Address already in use)

2024/08/31 12:43:20 [notice] 1#1: try again to bind() after 500ms

2024/08/31 12:43:20 [emerg] 1#1: still could not bind()

nginx: [emerg] still could not bind()在一个pod中开启多个容器时一定要确保容器彼此不能互相干扰

[root@k8s-master ~]# vim pod.yml

[root@k8s-master ~]# kubectl apply -f pod.yml

pod/timinglee created

apiVersion: v1

kind: Pod

metadata:

labels:

run: timing

name: timinglee

spec:

containers:

- image: nginx:latest

name: web1

- image: busybox:latest

name: busybox

command: ["/bin/sh","-c","sleep 1000000"]

[root@k8s-master ~]# kubectl get pods

NAME READY STATUS RESTARTS AGE

timinglee 2/2 Running 0 19s2.8理解pod间的网络整合

同在一个pod中的容器公用一个网络

[root@k8s-master ~]# vim pod.yml

apiVersion: v1

kind: Pod

metadata:

labels:

run: timinglee

name: test

spec:

containers:

- image: myapp:v1

name: myapp1

- image: busyboxplus:latest

name: busyboxplus

command: ["/bin/sh","-c","sleep 1000000"]

[root@k8s-master ~]# kubectl apply -f pod.yml

pod/test created

[root@k8s-master ~]# kubectl get pods

NAME READY STATUS RESTARTS AGE

test 2/2 Running 0 8s

[root@k8s-master ~]# kubectl exec test -c busyboxplus -- curl -s localhost

Hello MyApp | Version: v1 | <a href="hostname.html">Pod Name</a>

2.9端口映射

[root@k8s-master ~]# vim pod.yml

apiVersion: v1

kind: Pod

metadata:

labels:

run: timinglee

name: test

spec:

containers:

- image: myapp:v1

name: myapp1

ports:

- name: http

containerPort: 80

hostPort: 80

protocol: TCP

#测试

[root@k8s-master ~]# kubectl apply -f pod.yml

pod/test created

[root@k8s-master ~]# kubectl get pods -o wide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

test 1/1 Running 0 12s 10.244.1.2 k8s-node1.timinglee.org <none> <none>

[root@k8s-master ~]# curl k8s-node1.timinglee.org

Hello MyApp | Version: v1 | <a href="hostname.html">Pod Name</a>

2.10如何设定环境变量

[root@k8s-master ~]# vim pod.yml

apiVersion: v1

kind: Pod

metadata:

labels:

run: timinglee

name: test

spec:

containers:

- image: busybox:latest

name: busybox

command: ["/bin/sh","-c","echo $NAME;sleep 3000000"]

env:

- name: NAME

value: timinglee

[root@k8s-master ~]# kubectl apply -f pod.yml

pod/test created

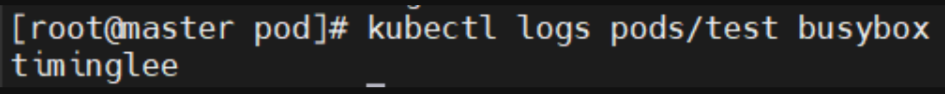

[root@k8s-master ~]# kubectl logs pods/test busybox

timinglee

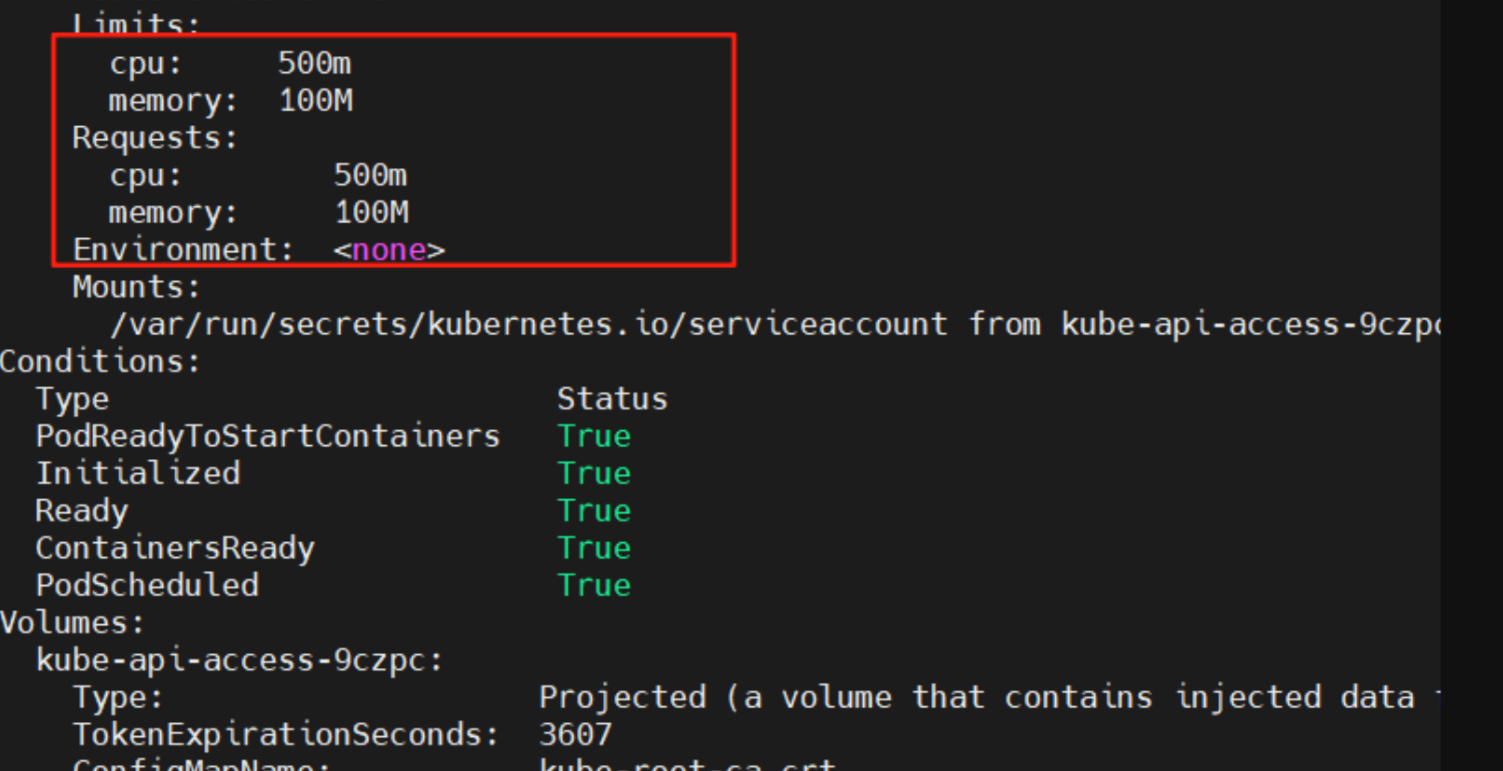

2.11资源限制

资源限制会影响pod的Qos Class资源优先级,资源优先级分为Guaranteed > Burstable > BestEffort

QoS(Quality of Service)即服务质量

| 资源设定 | 优先级类型 |

|---|---|

| 资源限定未设定 | BestEffort |

| 资源限定设定且最大和最小不一致 | Burstable |

| 资源限定设定且最大和最小一致 | Guaranteed |

[root@k8s-master ~]# vim pod.yml

apiVersion: v1

kind: Pod

metadata:

labels:

run: timinglee

name: test

spec:

containers:

- image: myapp:v1

name: myapp

resources:

limits: #pod使用资源的最高限制

cpu: 500m

memory: 100M

requests: #pod期望使用资源量,不能大于limits

cpu: 500m

memory: 100M

root@k8s-master ~]# kubectl apply -f pod.yml

pod/test created

[root@k8s-master ~]# kubectl get pods

NAME READY STATUS RESTARTS AGE

test 1/1 Running 0 3s

[root@k8s-master ~]# kubectl describe pods test

Limits:

cpu: 500m

memory: 100M

Requests:

cpu: 500m

memory: 100M

QoS Class: Guaranteed

2.12容器启动管理

[root@k8s-master ~]# vim pod.yml

apiVersion: v1

kind: Pod

metadata:

labels:

run: timinglee

name: test

spec:

restartPolicy: Always

containers:

- image: myapp:v1

name: myapp

[root@k8s-master ~]# kubectl apply -f pod.yml

pod/test created

[root@k8s-master ~]# kubectl get pods -o wide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

test 1/1 Running 0 6s 10.244.2.3 k8s-node2 <none> <none>

[root@k8s-node2 ~]# docker rm -f ccac1d64ea812.13选择运行节点

[root@k8s-master ~]# vim pod.yml

apiVersion: v1

kind: Pod

metadata:

labels:

run: timinglee

name: test

spec:

nodeSelector:

kubernetes.io/hostname: k8s-node1

restartPolicy: Always

containers:

- image: myapp:v1

name: myapp

[root@k8s-master ~]# kubectl apply -f pod.yml

pod/test created

[root@k8s-master ~]# kubectl get pods -o wide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

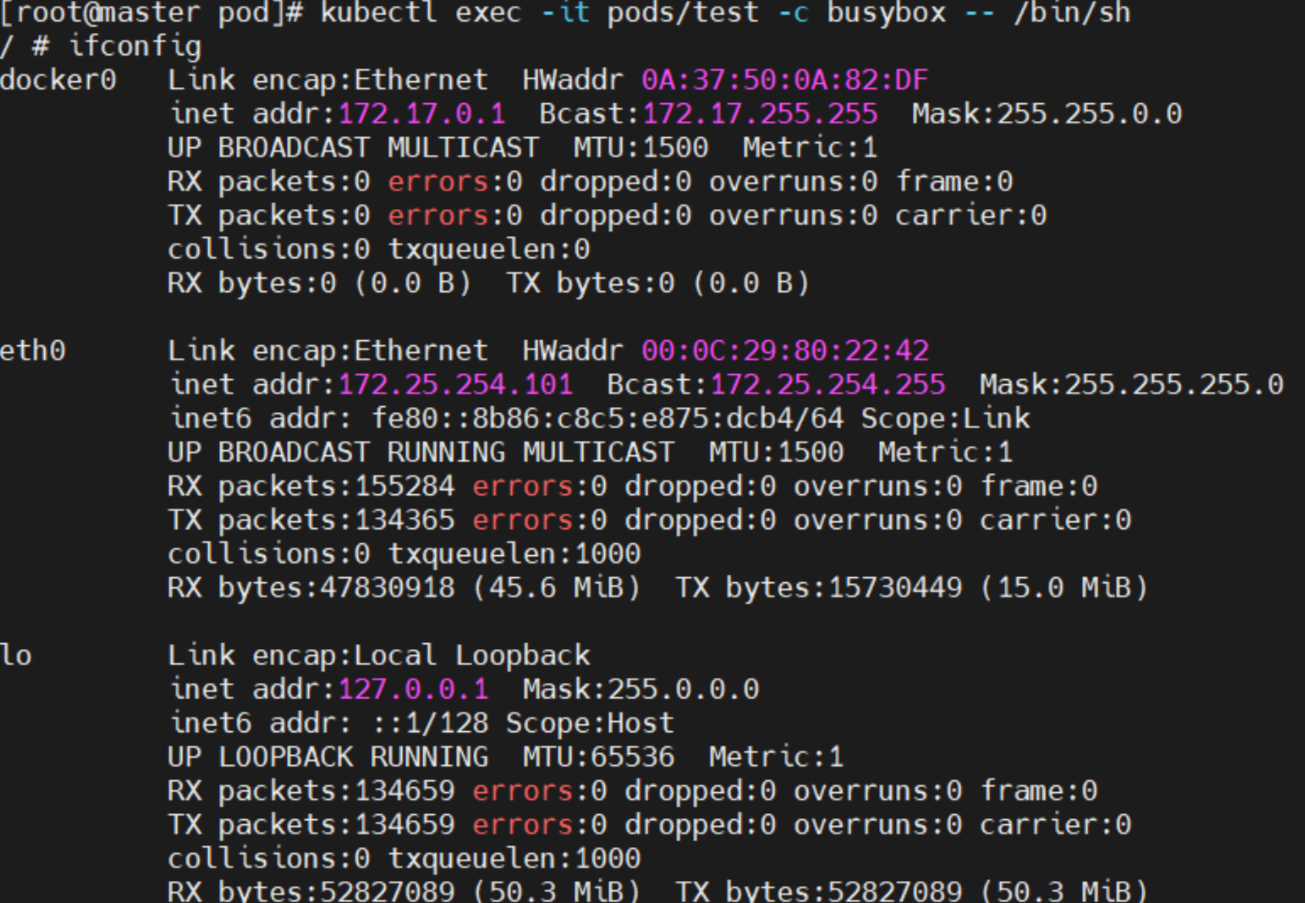

test 1/1 Running 0 21s 10.244.1.5 k8s-node1 <none> <none>2.14共享宿主机网络

[root@k8s-master ~]# vim pod.yml

apiVersion: v1

kind: Pod

metadata:

labels:

run: timinglee

name: test

spec:

hostNetwork: true

restartPolicy: Always

containers:

- image: busybox:latest

name: busybox

command: ["/bin/sh","-c","sleep 100000"]

[root@k8s-master ~]# kubectl apply -f pod.yml

pod/test created

[root@k8s-master ~]# kubectl exec -it pods/test -c busybox -- /bin/sh

/ # ifconfig

cni0 Link encap:Ethernet HWaddr E6:D4:AA:81:12:B4

inet addr:10.244.2.1 Bcast:10.244.2.255 Mask:255.255.255.0

inet6 addr: fe80::e4d4:aaff:fe81:12b4/64 Scope:Link

UP BROADCAST RUNNING MULTICAST MTU:1450 Metric:1

RX packets:6259 errors:0 dropped:0 overruns:0 frame:0

TX packets:6495 errors:0 dropped:0 overruns:0 carrier:0

collisions:0 txqueuelen:1000

RX bytes:506704 (494.8 KiB) TX bytes:625439 (610.7 KiB)

docker0 Link encap:Ethernet HWaddr 02:42:99:4A:30:DC

inet addr:172.17.0.1 Bcast:172.17.255.255 Mask:255.255.0.0

UP BROADCAST MULTICAST MTU:1500 Metric:1

RX packets:0 errors:0 dropped:0 overruns:0 frame:0

TX packets:0 errors:0 dropped:0 overruns:0 carrier:0

collisions:0 txqueuelen:0

RX bytes:0 (0.0 B) TX bytes:0 (0.0 B)

eth0 Link encap:Ethernet HWaddr 00:0C:29:6A:A8:61

inet addr:172.25.254.20 Bcast:172.25.254.255 Mask:255.255.255.0

inet6 addr: fe80::8ff3:f39c:dc0c:1f0e/64 Scope:Link

UP BROADCAST RUNNING MULTICAST MTU:1500 Metric:1

RX packets:27858 errors:0 dropped:0 overruns:0 frame:0

TX packets:14454 errors:0 dropped:0 overruns:0 carrier:0

collisions:0 txqueuelen:1000

RX bytes:26591259 (25.3 MiB) TX bytes:1756895 (1.6 MiB)

flannel.1 Link encap:Ethernet HWaddr EA:36:60:20:12:05

inet addr:10.244.2.0 Bcast:0.0.0.0 Mask:255.255.255.255

inet6 addr: fe80::e836:60ff:fe20:1205/64 Scope:Link

UP BROADCAST RUNNING MULTICAST MTU:1450 Metric:1

RX packets:0 errors:0 dropped:0 overruns:0 frame:0

TX packets:0 errors:0 dropped:40 overruns:0 carrier:0

collisions:0 txqueuelen:0

RX bytes:0 (0.0 B) TX bytes:0 (0.0 B)

lo Link encap:Local Loopback

inet addr:127.0.0.1 Mask:255.0.0.0

inet6 addr: ::1/128 Scope:Host

UP LOOPBACK RUNNING MTU:65536 Metric:1

RX packets:163 errors:0 dropped:0 overruns:0 frame:0

TX packets:163 errors:0 dropped:0 overruns:0 carrier:0

collisions:0 txqueuelen:1000

RX bytes:13630 (13.3 KiB) TX bytes:13630 (13.3 KiB)

veth9a516531 Link encap:Ethernet HWaddr 7A:92:08:90:DE:B2

inet6 addr: fe80::7892:8ff:fe90:deb2/64 Scope:Link

UP BROADCAST RUNNING MULTICAST MTU:1450 Metric:1

RX packets:6236 errors:0 dropped:0 overruns:0 frame:0

TX packets:6476 errors:0 dropped:0 overruns:0 carrier:0

collisions:0 txqueuelen:0

RX bytes:592532 (578.6 KiB) TX bytes:622765 (608.1 KiB)

/ # exit

三 pod的生命周期

3.1 INIT 容器

官方文档:Pod | Kubernetes

-

Pod 可以包含多个容器,应用运行在这些容器里面,同时 Pod 也可以有一个或多个先于应用容器启动的 Init 容器。

-

Init 容器与普通的容器非常像,除了如下两点:

-

它们总是运行到完成

-

init 容器不支持 Readiness,因为它们必须在 Pod 就绪之前运行完成,每个 Init 容器必须运行成功,下一个才能够运行。

-

-

如果Pod的 Init 容器失败,Kubernetes 会不断地重启该 Pod,直到 Init 容器成功为止。但是,如果 Pod 对应的 restartPolicy 值为 Never,它不会重新启动。

3.1.1 INIT 容器的功能

-

Init 容器可以包含一些安装过程中应用容器中不存在的实用工具或个性化代码。

-

Init 容器可以安全地运行这些工具,避免这些工具导致应用镜像的安全性降低。

-

应用镜像的创建者和部署者可以各自独立工作,而没有必要联合构建一个单独的应用镜像。

-

Init 容器能以不同于Pod内应用容器的文件系统视图运行。因此,Init容器可具有访问 Secrets 的权限,而应用容器不能够访问。

-

由于 Init 容器必须在应用容器启动之前运行完成,因此 Init 容器提供了一种机制来阻塞或延迟应用容器的启动,直到满足了一组先决条件。一旦前置条件满足,Pod内的所有的应用容器会并行启动。

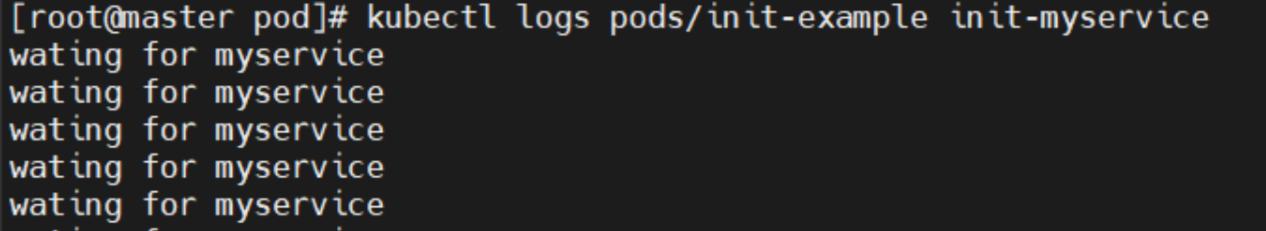

3.1.2 INIT 容器示例

[root@k8s-master ~]# vim pod.yml

apiVersion: v1

kind: Pod

metadata:

labels:

name: initpod

name: initpod

spec:

containers:

- image: myapp:v1

name: myapp

initContainers:

- name: init-myservice

image: busybox

command: ["sh","-c","until test -e /testfile;do echo wating for myservice; sleep 2;done"]

[root@k8s-master ~]# kubectl apply -f pod.yml

pod/initpod created

[root@k8s-master ~]# kubectl get pods

NAME READY STATUS RESTARTS AGE

initpod 0/1 Init:0/1 0 3s

[root@k8s-master ~]# kubectl logs pods/initpod init-myservice

wating for myservice

wating for myservice

wating for myservice

wating for myservice

wating for myservice

wating for myservice

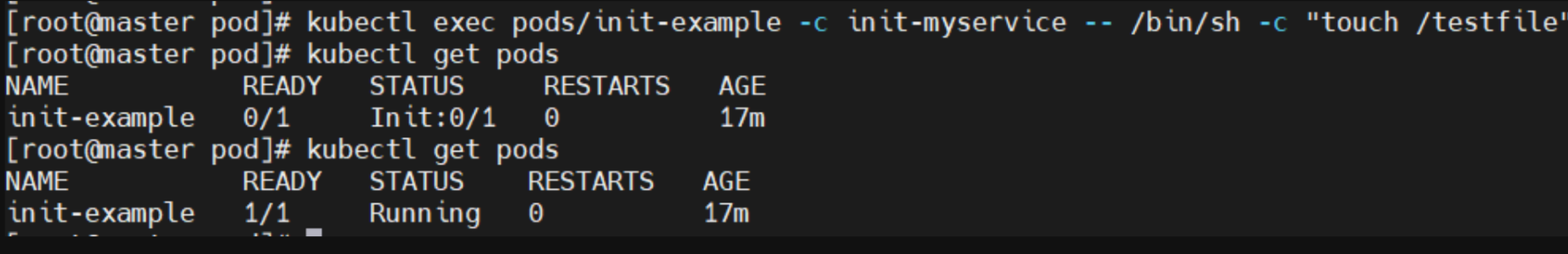

[root@k8s-master ~]# kubectl exec pods/initpod -c init-myservice -- /bin/sh -c "touch /testfile"

[root@k8s-master ~]# kubectl get pods NAME READY STATUS RESTARTS AGE

initpod 1/1 Running 0 62sapiVersion: v1

kind: Pod

metadata:

labels:

run: init-example

name: init-example

spec:

containers:

- image: myapp:v1

name: init-example

initContainers:

- name: init-myservice

image: busybox:latest

command: ["sh","-c","until test -e /testfile;do echo wating for myservice; sleep 2;done"]

kubectl exec pods/initpod -c init-myservice -- /bin/sh -c "touch /testfile"

探针实例

3.2存活探针示例

apiVersion: v1

kind: Pod

metadata:

labels:

run: liveness-example

name: liveness-example

spec:

containers:

- image: myapp:v1

name: myapp

livenessProbe:

tcpSocket: #检测端口存在性

port: 8080

initialDelaySeconds: 3 #容器启动后要等待多少秒后就探针开始工作,默认是 0

periodSeconds: 1 #执行探测的时间间隔,默认为 10s

timeoutSeconds: 1 #探针执行检测请求后,等待响应的超时时间,默认为 1s

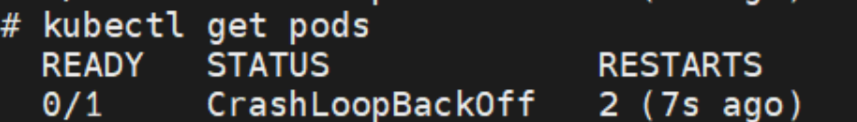

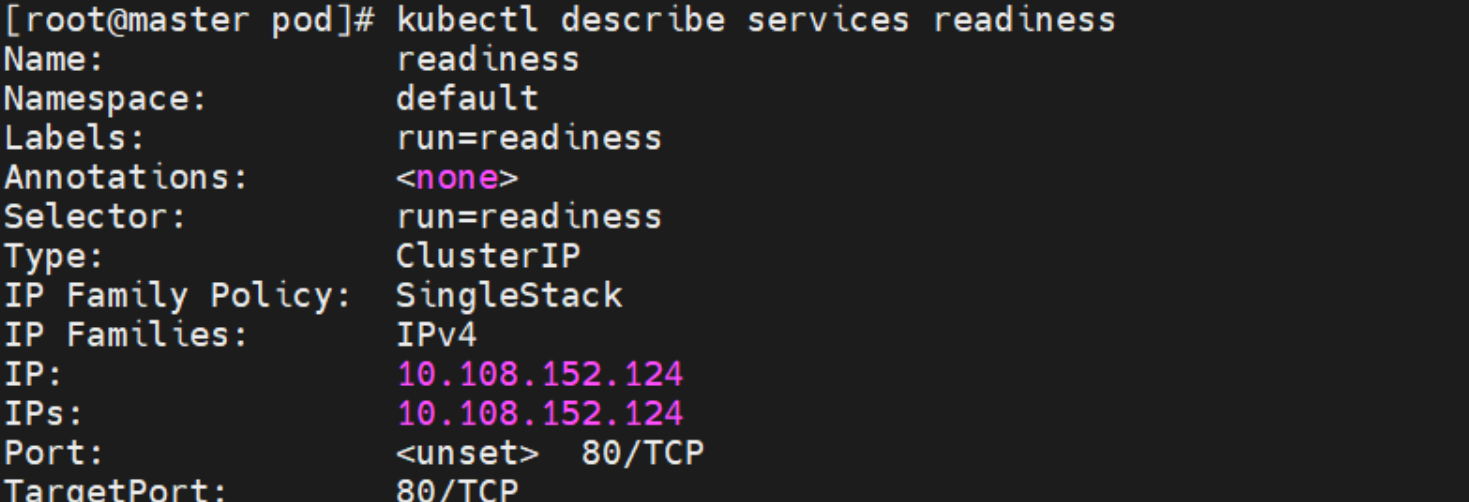

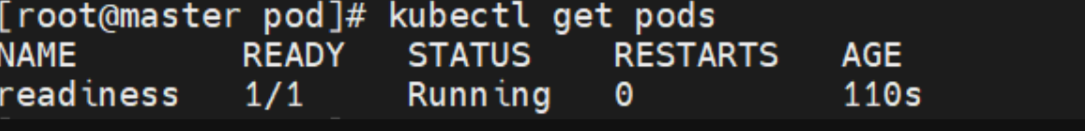

3.3就绪探针示例

apiVersion: v1

kind: Pod

metadata:

labels:

run: readiness

name: readiness

spec:

containers:

- image: myapp:v1

name: myapp

readinessProbe:

httpGet: #指定使用 HTTP GET请求来执行探测

path: /test.html #表示探测时发送 HTTP GET请求的路径,即对/test.html路径的请求

port: 80 #指定请求发送到容器的 80 端口

initialDelaySeconds: 1 #表示容器启动后,延迟 1 秒再开始执行第一次探测

periodSeconds: 3 #设置探测的时间间隔为 3 秒

timeoutSeconds: 1 #定义了每次探测的超时时间为 1 秒

控制器

控制器常用类型

| 控制器名称 | 控制器用途 |

|---|---|

| Replication Controller | 比较原始的pod控制器,已经被废弃,由ReplicaSet替代 |

| ReplicaSet | ReplicaSet 确保任何时间都有指定数量的 Pod 副本在运行 |

| Deployment | 一个 Deployment 为 Pod 和 ReplicaSet 提供声明式的更新能力 |

| DaemonSet | DaemonSet 确保全指定节点上运行一个 Pod 的副本 |

| StatefulSet | StatefulSet 是用来管理有状态应用的工作负载 API 对象。 |

| Job | 执行批处理任务,仅执行一次任务,保证任务的一个或多个Pod成功结束 |

| CronJob | Cron Job 创建基于时间调度的 Jobs。 |

| HPA全称Horizontal Pod Autoscaler | 根据资源利用率自动调整service中Pod数量,实现Pod水平自动缩放 |

replicaset控制器

3.1 replicaset功能

-

ReplicaSet 是下一代的 Replication Controller,官方推荐使用ReplicaSet

-

ReplicaSet和Replication Controller的唯一区别是选择器的支持,ReplicaSet支持新的基于集合的选择器需求

-

ReplicaSet 确保任何时间都有指定数量的 Pod 副本在运行

-

虽然 ReplicaSets 可以独立使用,但今天它主要被Deployments 用作协调 Pod 创建、删除和更新的机制

3.2 replicaset参数说明

| 参数名称 | 字段类型 | 参数说明 |

|---|---|---|

| spec | Object | 详细定义对象,固定值就写Spec |

| spec.replicas | integer | 指定维护pod数量 |

| spec.selector | Object | Selector是对pod的标签查询,与pod数量匹配 |

| spec.selector.matchLabels | string | 指定Selector查询标签的名称和值,以key:value方式指定 |

| spec.template | Object | 指定对pod的描述信息,比如lab标签,运行容器的信息等 |

| spec.template.metadata | Object | 指定pod属性 |

| spec.template.metadata.labels | string | 指定pod标签 |

| spec.template.spec | Object | 详细定义对象 |

| spec.template.spec.containers | list | Spec对象的容器列表定义 |

| spec.template.spec.containers.name | string | 指定容器名称 |

| spec.template.spec.containers.image | string | 指定容器镜像 |

replicaset 示例:

#生成yml文件

[root@master ~]# kubectl create deployment replicaset --image myapp:v1 --dry-run=client -o yaml > replicaset.yml

#编辑配置文件

[root@master ~]# cat replicaset.yml

apiVersion: apps/v1

kind: ReplicaSet

metadata:

name: replicaset

spec:

replicas: 2

selector:

matchLabels:

app: myapp

template:

metadata:

labels:

app: myapp

spec:

containers:

- image: myapp:v1

name: myapp

#创建了一个名为 replicaset 的 ReplicaSet 资源

[root@master ~]# kubectl apply -f replicaset.yml

replicaset.apps/replicaset created

#查看pod列表

[root@master ~]# kubectl get pods --show-labels

NAME READY STATUS RESTARTS AGE LABELS

replicaset-gq8q7 1/1 Running 0 7s app=myapp

replicaset-zpln9 1/1 Running 0 7s app=myapp

#将其中一个 replicaset的标签名字覆盖为rin

[root@master ~]# kubectl label pod replicaset-gq8q7 app=rin --overwrite

pod/replicaset-gq8q7 labeled

#查看pod列表,检测到名字不同,会自动生成一个新的 replicaset,标签为app=myapp

[root@master ~]# kubectl get pods --show-labels

NAME READY STATUS RESTARTS AGE LABELS

replicaset-gq8q7 1/1 Running 0 73s app=rin

replicaset-zdhwr 1/1 Running 0 1s app=myapp

replicaset-zpln9 1/1 Running 0 73s app=myapp

#将刚刚改为rin的标签名字更改回来

[root@master ~]# kubectl label pod replicaset-gq8q7 app=myapp --overwrite

pod/replicaset-gq8q7 labeled

#发现会自动将多的pod删除

[root@master ~]# kubectl get pods --show-labels

NAME READY STATUS RESTARTS AGE LABELS

replicaset-gq8q7 1/1 Running 0 109s app=myapp

replicaset-zpln9 1/1 Running 0 109s app=myapp

#随机一个pod删除

[root@master ~]# kubectl delete pods replicaset-gq8q7

pod "replicaset-gq8q7" deleted

#系统会自动补充pod

[root@master ~]# kubectl get pods --show-labels

NAME READY STATUS RESTARTS AGE LABELS

replicaset-bwcqc 1/1 Running 0 6s app=myapp

replicaset-zpln9 1/1 Running 0 2m30s app=myapp

#回收资源

[root@master ~]# kubectl delete -f replicaset.yml

replicaset.apps "replicaset" deleteddeployment 控制器

deployment控制器的功能

-

为了更好的解决服务编排的问题,kubernetes在V1.2版本开始,引入了Deployment控制器。

-

Deployment控制器并不直接管理pod,而是通过管理ReplicaSet来间接管理Pod

-

Deployment管理ReplicaSet,ReplicaSet管理Pod

-

Deployment 为 Pod 和 ReplicaSet 提供了一个申明式的定义方法

-

在Deployment中ReplicaSet相当于一个版本

典型的应用场景:

-

用来创建Pod和ReplicaSet

-

滚动更新和回滚

-

扩容和缩容

-

暂停与恢复

deployment控制器示例

[root@master ~]# kubectl create deployment deployment --image myapp:v1 --dry-run=client -o yaml > deployment.yml

[root@master ~]# cat deployment.yml

apiVersion: apps/v1

kind: Deployment

metadata:

name: deployment

spec:

replicas: 4

selector:

matchLabels:

app: myapp

template:

metadata:

labels:

app: myapp

spec:

containers:

- image: myapp:v1

name: myapp

[root@master ~]# kubectl apply -f deployment.yml

deployment.apps/deployment created

[root@master ~]# kubectl get pods --show-labels

NAME READY STATUS RESTARTS AGE LABELS

deployment-5d886954d4-f5r2c 1/1 Running 0 5s app=myapp,pod-template-hash=5d886954d4

deployment-5d886954d4-gnjh4 1/1 Running 0 5s app=myapp,pod-template-hash=5d886954d4

deployment-5d886954d4-lxfdv 1/1 Running 0 5s app=myapp,pod-template-hash=5d886954d4

deployment-5d886954d4-vbngd 1/1 Running 0 5s app=myapp,pod-template-hash=5d886954d4版本迭代

[root@master ~]# kubectl get pods -o wide

NAME READY STATUS RESTARTS AGE ES

deployment-5d886954d4-f5r2c 1/1 Running 0 102s

deployment-5d886954d4-gnjh4 1/1 Running 0 102s

deployment-5d886954d4-lxfdv 1/1 Running 0 102s

deployment-5d886954d4-vbngd 1/1 Running 0 102s

#pod运行容器版本为v1

[root@master ~]# curl 10.244.1.8

Hello MyApp | Version: v1 | <a href="hostname.html">Pod Name</a

[root@master ~]# kubectl describe deployments.apps deployment

Name: deployment

Namespace: default

CreationTimestamp: Tue, 12 Aug 2025 23:35:58 -0400

Labels: <none>

Annotations: deployment.kubernetes.io/revision: 1

Selector: app=myapp

Replicas: 4 desired | 4 updated | 4 total | 4 ava

StrategyType: RollingUpdate

MinReadySeconds: 0

RollingUpdateStrategy: 25% max unavailable, 25% max surge

Pod Template:

Labels: app=myapp

Containers:

myapp:

Image: myapp:v1

Port: <none>

Host Port: <none>

Environment: <none>

Mounts: <none>

Volumes: <none>

Node-Selectors: <none>

Tolerations: <none>

Conditions:

Type Status Reason

---- ------ ------

Available True MinimumReplicasAvailable

Progressing True NewReplicaSetAvailable

OldReplicaSets: <none>

NewReplicaSet: deployment-5d886954d4 (4/4 replicas created)

Events:

Type Reason Age From Mess

---- ------ ---- ---- ----

Normal ScalingReplicaSet 2m44s deployment-controller Scal

#更新容器运行版本

[root@master ~]# vim deployment.yml

[root@master ~]# cat deployment.yml

apiVersion: apps/v1

kind: Deployment

metadata:

name: deployment

spec:

minReadySeconds: 5 #最小就绪时间5秒

replicas: 4

selector:

matchLabels:

app: myapp

template:

metadata:

labels:

app: myapp

spec:

containers:

- image: myapp:v2

name: myapp

[root@master ~]# kubectl apply -f deployment.yml

版本回滚

[root@master ~]# cat deployment.yml

apiVersion: apps/v1

kind: Deployment

metadata:

name: deployment

spec:

replicas: 4

selector:

matchLabels:

app: myapp

template:

metadata:

labels:

app: myapp

spec:

containers:

- image: myapp:v1 #回滚到之前版本

name: myapp

[root@master ~]# kubectl apply -f deployment.yml

#测试回滚效果

[root@master ~]# curl 10.244.1.8

Hello MyApp | Version: v1 | <a href="hostname.html">Pod Name</a>滚动更新策略

[root@master ~]# cat deployment.yml

apiVersion: apps/v1

kind: Deployment

metadata:

name: deployment

spec:

minReadySeconds: 5

replicas: 4

strategy:

rollingUpdate:

maxSurge: 1

maxUnavailable: 0

selector:

matchLabels:

app: myapp

template:

metadata:

labels:

app: myapp

spec:

containers:

- image: myapp:v1

name: myapp

[root@master ~]# kubectl apply -f deployment.yml 暂停及恢复

[root@k8s2 pod]# kubectl rollout pause deployment deployment-example

[root@k8s2 pod]# vim deployment-example.yaml

apiVersion: apps/v1

kind: Deployment

metadata:

name: deployment-example

spec:

minReadySeconds: 5

strategy:

rollingUpdate:

maxSurge: 1

maxUnavailable: 0

replicas: 6

selector:

matchLabels:

app: myapp

template:

metadata:

labels:

app: myapp

spec:

containers:

- name: myapp

image: nginx

resources:

limits:

cpu: 0.5

memory: 200Mi

requests:

cpu: 0.5

memory: 200Mi

[root@k8s2 pod]# kubectl apply -f deployment-example.yaml

#调整副本数,不受影响

[root@k8s-master ~]# kubectl describe pods deployment-7f4786db9c-8jw22

Name: deployment-7f4786db9c-8jw22

Namespace: default

Priority: 0

Service Account: default

Node: k8s-node1/172.25.254.10

Start Time: Mon, 02 Sep 2024 00:27:20 +0800

Labels: app=myapp

pod-template-hash=7f4786db9c

Annotations: <none>

Status: Running

IP: 10.244.1.31

IPs:

IP: 10.244.1.31

Controlled By: ReplicaSet/deployment-7f4786db9c

Containers:

myapp:

Container ID: docker://01ad7216e0a8c2674bf17adcc9b071e9bfb951eb294cafa2b8482bb8b4940c1d

Image: myapp:v2

Image ID: docker-pullable://myapp@sha256:5f4afc8302ade316fc47c99ee1d41f8ba94dbe7e3e7747dd87215a15429b9102

Port: <none>

Host Port: <none>

State: Running

Started: Mon, 02 Sep 2024 00:27:21 +0800

Ready: True

Restart Count: 0

Environment: <none>

Mounts:

/var/run/secrets/kubernetes.io/serviceaccount from kube-api-access-mfjjp (ro)

Conditions:

Type Status

PodReadyToStartContainers True

Initialized True

Ready True

ContainersReady True

PodScheduled True

Volumes:

kube-api-access-mfjjp:

Type: Projected (a volume that contains injected data from multiple sources)

TokenExpirationSeconds: 3607

ConfigMapName: kube-root-ca.crt

ConfigMapOptional: <nil>

DownwardAPI: true

QoS Class: BestEffort

Node-Selectors: <none>

Tolerations: node.kubernetes.io/not-ready:NoExecute op=Exists for 300s

node.kubernetes.io/unreachable:NoExecute op=Exists for 300s

Events:

Type Reason Age From Message

---- ------ ---- ---- -------

Normal Scheduled 6m22s default-scheduler Successfully assigned default/deployment-7f4786db9c-8jw22 to k8s-node1

Normal Pulled 6m22s kubelet Container image "myapp:v2" already present on machine

Normal Created 6m21s kubelet Created container myapp

Normal Started 6m21s kubelet Started container myapp

#但是更新镜像和修改资源并没有触发更新

[root@k8s2 pod]# kubectl rollout history deployment deployment-example

deployment.apps/deployment-example

REVISION CHANGE-CAUSE

3 <none>

4 <none>

#恢复后开始触发更新

[root@k8s2 pod]# kubectl rollout resume deployment deployment-example

[root@k8s2 pod]# kubectl rollout history deployment deployment-example

deployment.apps/deployment-example

REVISION CHANGE-CAUSE

3 <none>

4 <none>

5 <none>

#回收

[root@k8s2 pod]# kubectl delete -f deployment-example.yamldaemonset控制器

daemonset功能

DaemonSet 确保全部(或者某些)节点上运行一个 Pod 的副本。当有节点加入集群时, 也会为他们新增一个 Pod ,当有节点从集群移除时,这些 Pod 也会被回收。删除 DaemonSet 将会删除它创建的所有 Pod

DaemonSet 的典型用法:

-

在每个节点上运行集群存储 DaemonSet,例如 glusterd、ceph。

-

在每个节点上运行日志收集 DaemonSet,例如 fluentd、logstash。

-

在每个节点上运行监控 DaemonSet,例如 Prometheus Node Exporter、zabbix agent等

-

一个简单的用法是在所有的节点上都启动一个 DaemonSet,将被作为每种类型的 daemon 使用

-

一个稍微复杂的用法是单独对每种 daemon 类型使用多个 DaemonSet,但具有不同的标志, 并且对不同硬件类型具有不同的内存、CPU 要求

daemonset 示例

[root@k8s2 pod]# cat daemonset-example.yml

apiVersion: apps/v1

kind: DaemonSet

metadata:

name: daemonset-example

spec:

selector:

matchLabels:

app: nginx

template:

metadata:

labels:

app: nginx

spec:

tolerations: #对于污点节点的容忍

- effect: NoSchedule

operator: Exists

containers:

- name: nginx

image: nginx

[root@k8s-master ~]# kubectl get pods -o wide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

daemonset-87h6s 1/1 Running 0 47s 10.244.0.8 k8s-master <none> <none>

daemonset-n4vs4 1/1 Running 0 47s 10.244.2.38 k8s-node2 <none> <none>

daemonset-vhxmq 1/1 Running 0 47s 10.244.1.40 k8s-node1 <none> <none>

#回收

[root@k8s2 pod]# kubectl delete -f daemonset-example.ymljob 控制器

job控制器功能

Job,主要用于负责批量处理(一次要处理指定数量任务)短暂的一次性(每个任务仅运行一次就结束)任务

Job特点如下:

-

当Job创建的pod执行成功结束时,Job将记录成功结束的pod数量

-

当成功结束的pod达到指定的数量时,Job将完成执行

job 控制器示例

[root@k8s2 pod]# vim job.yml

apiVersion: batch/v1

kind: Job

metadata:

name: pi

spec:

completions: 6 #一共完成任务数为6

parallelism: 2 #每次并行完成2个

template:

spec:

containers:

- name: pi

image: perl:5.34.0

command: ["perl", "-Mbignum=bpi", "-wle", "print bpi(2000)"] 计算Π的后2000位

restartPolicy: Never #关闭后不自动重启

backoffLimit: 4 #运行失败后尝试4重新运行

[root@k8s2 pod]# kubectl apply -f job.ymlcronjob 控制器

cronjob 控制器功能

-

Cron Job 创建基于时间调度的 Jobs。

-

CronJob控制器以Job控制器资源为其管控对象,并借助它管理pod资源对象,

-

CronJob可以以类似于Linux操作系统的周期性任务作业计划的方式控制其运行时间点及重复运行的方式。

-

CronJob可以在特定的时间点(反复的)去运行job任务。

cronjob 控制器示例

[root@k8s2 pod]# vim cronjob.yml

apiVersion: batch/v1

kind: CronJob

metadata:

name: hello

spec:

schedule: "* * * * *"

jobTemplate:

spec:

template:

spec:

containers:

- name: hello

image: busybox

imagePullPolicy: IfNotPresent

command:

- /bin/sh

- -c

- date; echo Hello from the Kubernetes cluster

restartPolicy: OnFailure

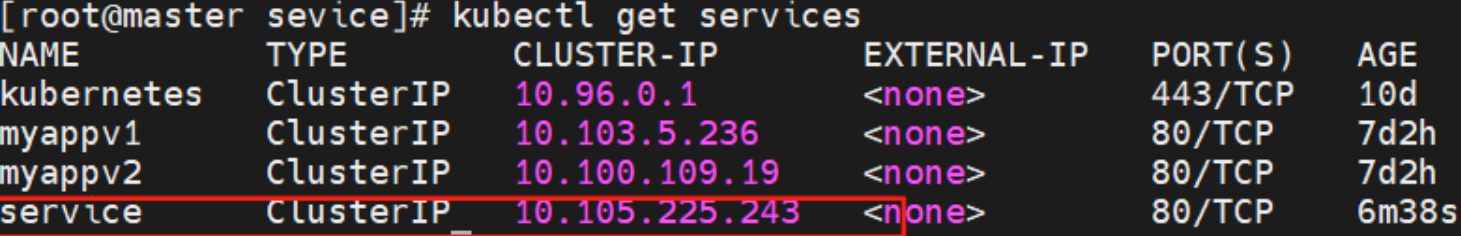

[root@k8s2 pod]# kubectl apply -f cronjob.yml微服务

用控制器来完成集群的工作负载,那么应用如何暴漏出去?需要通过微服务暴漏出去后才能被访问

-

Service是一组提供相同服务的Pod对外开放的接口。

-

借助Service,应用可以实现服务发现和负载均衡。

-

service默认只支持4层负载均衡能力,没有7层功能。(可以通过Ingress实现)

微服务的类型

| 微服务类型 | 作用描述 |

|---|---|

| ClusterIP | 默认值,k8s系统给service自动分配的虚拟IP,只能在集群内部访问 |

| NodePort | 将Service通过指定的Node上的端口暴露给外部,访问任意一个NodeIP:nodePort都将路由到ClusterIP |

| LoadBalancer | 在NodePort的基础上,借助cloud provider创建一个外部的负载均衡器,并将请求转发到 NodeIP:NodePort,此模式只能在云服务器上使用 |

| ExternalName | 将服务通过 DNS CNAME 记录方式转发到指定的域名(通过 spec.externlName 设定 |

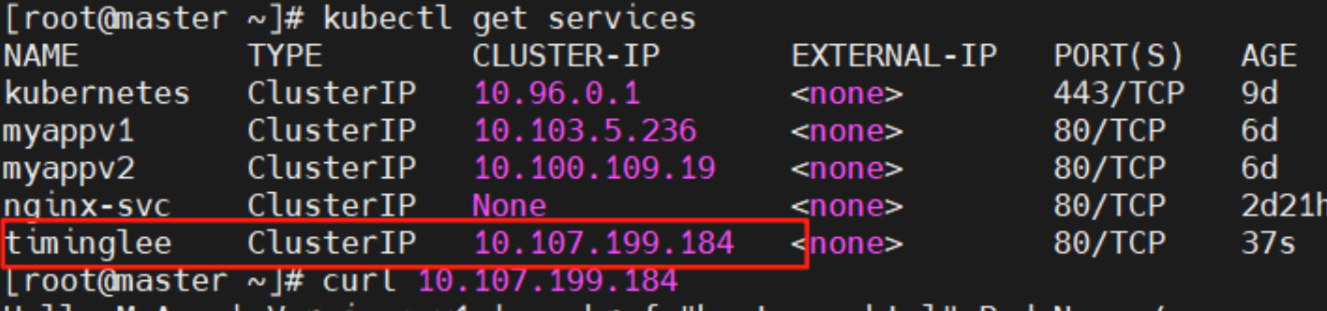

#生成控制器文件并建立控制器

[root@k8s-master ~]# kubectl create deployment timinglee --image myapp:v1 --replicas 2 --dry-run=client -o yaml > timinglee.yaml

#生成微服务yaml追加到已有yaml中

[root@k8s-master ~]# kubectl expose deployment timinglee --port 80 --target-port 80 --dry-run=client -o yaml >> timinglee.yaml

[root@k8s-master ~]# vim timinglee.yaml

apiVersion: apps/v1

kind: Deployment

metadata:

labels:

app: timinglee

name: timinglee

spec:

replicas: 2

selector:

matchLabels:

app: timinglee

template:

metadata:

creationTimestamp: null

labels:

app: timinglee

spec:

containers:

- image: myapp:v1

name: myapp

--- #不同资源间用---隔开

apiVersion: v1

kind: Service

metadata:

labels:

app: timinglee

name: timinglee

spec:

ports:

- port: 80

protocol: TCP

targetPort: 80

selector:

app: timinglee

[root@k8s-master ~]# kubectl apply -f timinglee.yaml

deployment.apps/timinglee created

service/timinglee created

[root@k8s-master ~]# kubectl get services

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

kubernetes ClusterIP 10.96.0.1 <none> 443/TCP 19h

timinglee ClusterIP 10.99.127.134 <none> 80/TCP 16s

微服务默认使用iptables调度

```bash

[root@k8s-master ~]# kubectl get services -o wide

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE SELECTOR

kubernetes ClusterIP 10.96.0.1 <none> 443/TCP 19h <none>

timinglee ClusterIP 10.99.127.134 <none> 80/TCP 119s app=timinglee #集群内部IP 134

#可以在火墙中查看到策略信息

[root@k8s-master ~]# iptables -t nat -nL

KUBE-SVC-I7WXYK76FWYNTTGM 6 -- 0.0.0.0/0 10.99.127.134 /* default/timinglee cluster IP */ tcp dpt:80

```

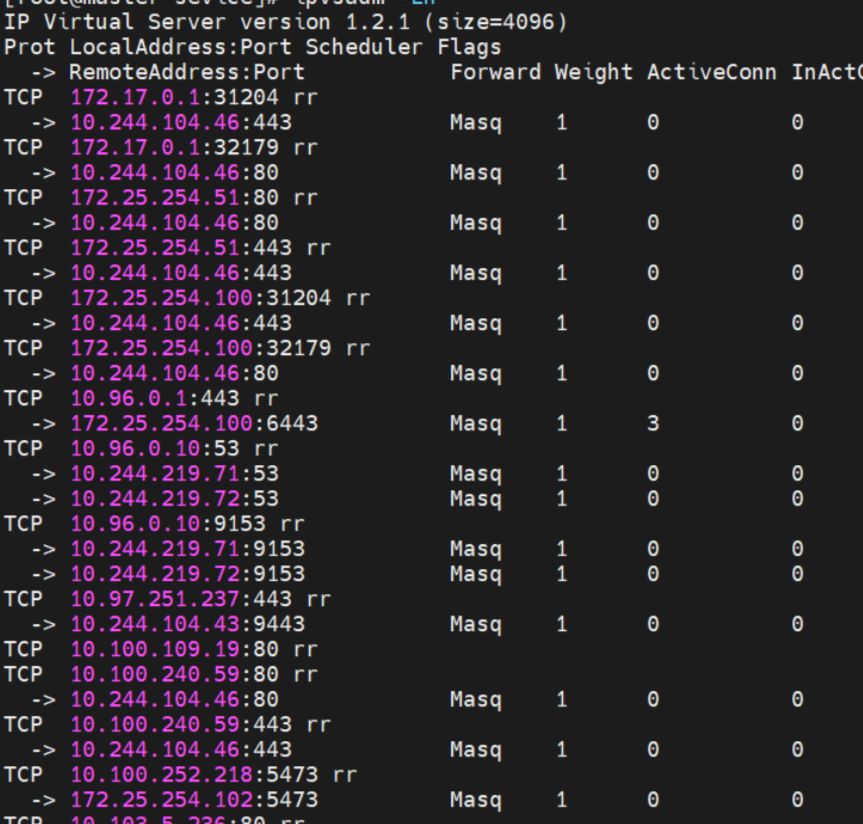

ipvs模式

-

Service 是由 kube-proxy 组件,加上 iptables 来共同实现的

-

kube-proxy 通过 iptables 处理 Service 的过程,需要在宿主机上设置相当多的 iptables 规则,如果宿主机有大量的Pod,不断刷新iptables规则,会消耗大量的CPU资源

-

IPVS模式的service,可以使K8s集群支持更多量级的Pod

配置方式

1.在所有节点中安装ipvsadm

[root@k8s-所有节点 pod]yum install ipvsadm –y2 .修改master节点的代理配置

[root@k8s-master ~]# kubectl -n kube-system edit cm kube-proxy3.重启pod

在pod运行时配置文件中采用默认配置,当改变配置文件后已经运行的pod状态不会变化,所以要重启pod

[root@master sevice]# kubectl -n kube-system get pods | awk '/kube-proxy/{system("kubectl -n kube-system delete pods "$1)}'

pod "kube-proxy-6k6t6" deleted

pod "kube-proxy-99n6f" deleted

pod "kube-proxy-flfnm" deleted

微服务类型

clusterip

clusterip模式只能在集群内访问,并对集群内的pod提供健康检测和自动发现功能

[root@k8s2 service]# vim myapp.yml

---

apiVersion: v1

kind: Service

metadata:

labels:

app: timinglee

name: timinglee

spec:

ports:

- port: 80

protocol: TCP

targetPort: 80

selector:

app: timinglee

type: ClusterIP

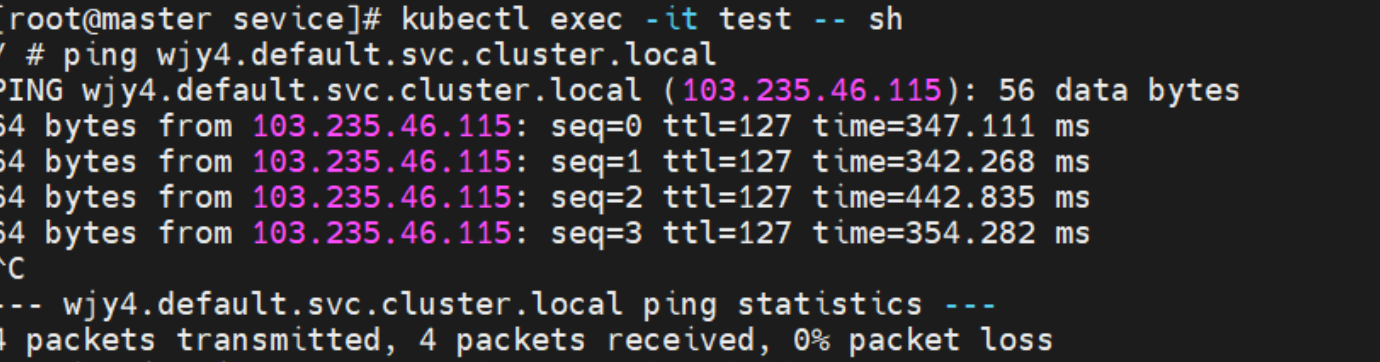

ClusterIP中的特殊模式headless

headless(无头服务)

对于无头 Services 并不会分配 Cluster IP,kube-proxy不会处理它们, 而且平台也不会为它们进行负载均衡和路由,集群访问通过dns解析直接指向到业务pod上的IP,所有的调度有dns单独完成

[root@k8s-master ~]# vim timinglee.yaml

---

apiVersion: v1

kind: Service

metadata:

labels:

app: timinglee

name: timinglee

spec:

ports:

- port: 80

protocol: TCP

targetPort: 80

selector:

app: timinglee

type: ClusterIP

clusterIP: None

[root@k8s-master ~]# kubectl delete -f timinglee.yaml

[root@k8s-master ~]# kubectl apply -f timinglee.yaml

deployment.apps/timinglee created

#测试

[root@k8s-master ~]# kubectl get services timinglee

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

timinglee ClusterIP None <none> 80/TCP 6s

[root@k8s-master ~]# dig timinglee.default.svc.cluster.local @10.96.0.10

; <<>> DiG 9.16.23-RH <<>> timinglee.default.svc.cluster.local @10.96.0.10

;; global options: +cmd

;; Got answer:

;; WARNING: .local is reserved for Multicast DNS

;; You are currently testing what happens when an mDNS query is leaked to DNS

;; ->>HEADER<<- opcode: QUERY, status: NOERROR, id: 51527

;; flags: qr aa rd; QUERY: 1, ANSWER: 2, AUTHORITY: 0, ADDITIONAL: 1

;; WARNING: recursion requested but not available

;; OPT PSEUDOSECTION:

; EDNS: version: 0, flags:; udp: 4096

; COOKIE: 81f9c97b3f28b3b9 (echoed)

;; QUESTION SECTION:

;timinglee.default.svc.cluster.local. IN A

;; ANSWER SECTION:

timinglee.default.svc.cluster.local. 20 IN A 10.244.2.14 #直接解析到pod上

timinglee.default.svc.cluster.local. 20 IN A 10.244.1.18

;; Query time: 0 msec

;; SERVER: 10.96.0.10#53(10.96.0.10)

;; WHEN: Wed Sep 04 13:58:23 CST 2024

;; MSG SIZE rcvd: 178

#开启一个busyboxplus的pod测试

[root@k8s-master ~]# kubectl run test --image busyboxplus -it

If you don't see a command prompt, try pressing enter.

/ # nslookup timinglee-service

Server: 10.96.0.10

Address 1: 10.96.0.10 kube-dns.kube-system.svc.cluster.local

Name: timinglee-service

Address 1: 10.244.2.16 10-244-2-16.timinglee-service.default.svc.cluster.local

Address 2: 10.244.2.17 10-244-2-17.timinglee-service.default.svc.cluster.local

Address 3: 10.244.1.22 10-244-1-22.timinglee-service.default.svc.cluster.local

Address 4: 10.244.1.21 10-244-1-21.timinglee-service.default.svc.cluster.local

/ # curl timinglee-service

Hello MyApp | Version: v1 | <a href="hostname.html">Pod Name</a>

/ # curl timinglee-service/hostname.html

timinglee-c56f584cf-b8t6mnodeport

通过ipvs暴漏端口从而使外部主机通过master节点的对外ip:<port>来访问pod业务

其访问过程为:

[root@k8s-master ~]# vim timinglee.yaml

---

apiVersion: v1

kind: Service

metadata:

labels:

app: timinglee-service

name: timinglee-service

spec:

ports:

- port: 80

protocol: TCP

targetPort: 80

selector:

app: timinglee

type: NodePort

[root@k8s-master ~]# kubectl apply -f timinglee.yaml

deployment.apps/timinglee created

service/timinglee-service created

[root@k8s-master ~]# kubectl get services timinglee-service

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

timinglee-service NodePort 10.98.60.22 <none> 80:31771/TCP 8

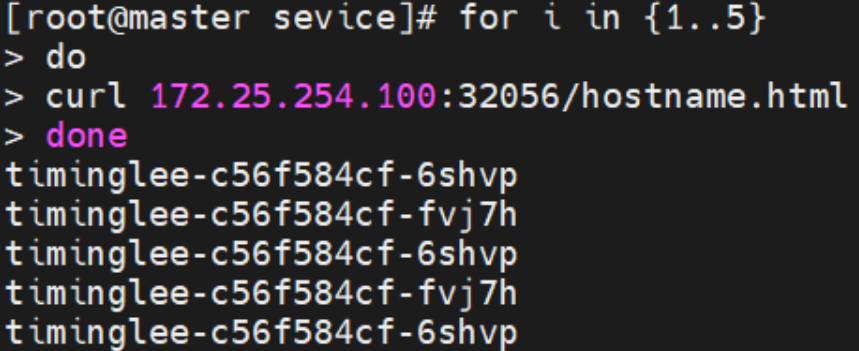

nodeport在集群节点上绑定端口,一个端口对应一个服务

[root@k8s-master ~]# for i in {1..5}

> do

> curl 172.25.254.100:31771/hostname.html

> done

timinglee-c56f584cf-fjxdk

timinglee-c56f584cf-5m2z5

timinglee-c56f584cf-z2w4d

timinglee-c56f584cf-tt5g6

timinglee-c56f584cf-fjxdk测试

loadbalancer

云平台会为我们分配vip并实现访问,如果是裸金属主机那么需要metallb来实现ip的分配

[root@k8s-master ~]# vim timinglee.yaml

---

apiVersion: v1

kind: Service

metadata:

labels:

app: timinglee-service

name: timinglee-service

spec:

ports:

- port: 80

protocol: TCP

targetPort: 80

selector:

app: timinglee

type: LoadBalancer

[root@k8s2 service]# kubectl apply -f myapp.yml

默认无法分配外部访问IP

[root@k8s2 service]# kubectl get svc

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

kubernetes ClusterIP 10.96.0.1 <none> 443/TCP 4d1h

myapp LoadBalancer 10.107.23.134 <pending> 80:32537/TCP 4s

LoadBalancer模式适用云平台,裸金属环境需要安装metallb提供支持metalLB

官网:Installation :: MetalLB, bare metal load-balancer for Kubernetes

metalLB功能

为LoadBalancer分配vip

1.设置ipvs模式

[root@k8s-master ~]# kubectl edit cm -n kube-system kube-proxy

apiVersion: kubeproxy.config.k8s.io/v1alpha1

kind: KubeProxyConfiguration

mode: "ipvs"

ipvs:

strictARP: true

[root@k8s-master ~]# kubectl -n kube-system get pods | awk '/kube-proxy/{system("kubectl -n kube-system delete pods "$1)}'2.下载部署文件

[root@k8s2 metallb]# wget https://raw.githubusercontent.com/metallb/metallb/v0.13.12/config/manifests/metallb-native.yaml3.修改文件中镜像地址,与harbor仓库路径保持一致

[root@k8s-master ~]# vim metallb-native.yaml

...

image: metallb/controller:v0.14.8

image: metallb/speaker:v0.14.8

4.上传镜像到harbor

[root@k8s-master ~]# docker pull quay.io/metallb/controller:v0.14.8

[root@k8s-master ~]# docker pull quay.io/metallb/speaker:v0.14.8

[root@k8s-master ~]# docker tag quay.io/metallb/speaker:v0.14.8 reg.timinglee.org/metallb/speaker:v0.14.8

[root@k8s-master ~]# docker tag quay.io/metallb/controller:v0.14.8 reg.timinglee.org/metallb/controller:v0.14.8

[root@k8s-master ~]# docker push reg.timinglee.org/metallb/speaker:v0.14.8

[root@k8s-master ~]# docker push reg.timinglee.org/metallb/controller:v0.14.8

部署服务

[root@k8s2 metallb]# kubectl apply -f metallb-native.yaml

[root@k8s-master ~]# kubectl -n metallb-system get pods

NAME READY STATUS RESTARTS AGE

controller-65957f77c8-25nrw 1/1 Running 0 30s

speaker-p94xq 1/1 Running 0 29s

speaker-qmpct 1/1 Running 0 29s

speaker-xh4zh 1/1 Running 0 30s配置分配地址段

[root@k8s-master ~]# vim configmap.yml

apiVersion: metallb.io/v1beta1

kind: IPAddressPool

metadata:

name: first-pool #地址池名称

namespace: metallb-system

spec:

addresses:

- 172.25.254.50-172.25.254.99 #修改为自己本地地址段

--- #两个不同的kind中间必须加分割

apiVersion: metallb.io/v1beta1

kind: L2Advertisement

metadata:

name: example

namespace: metallb-system

spec:

ipAddressPools:

- first-pool #使用地址池

[root@k8s-master ~]# kubectl apply -f configmap.yml

ipaddresspool.metallb.io/first-pool created

l2advertisement.metallb.io/example created

[root@k8s-master ~]# kubectl get services

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

kubernetes ClusterIP 10.96.0.1 <none> 443/TCP 21h

timinglee-service LoadBalancer 10.109.36.123 172.25.254.50 80:31595/TCP 9m9s

#通过分配地址从集群外访问服务

[root@reg ~]# curl 172.25.254.50

Hello MyApp | Version: v1 | <a href="hostname.html">Pod Name</a>externalname

-

开启services后,不会被分配IP,而是用dns解析CNAME固定域名来解决ip变化问题

-

一般应用于外部业务和pod沟通或外部业务迁移到pod内时

-

在应用向集群迁移过程中,externalname在过度阶段就可以起作用了。

-

集群外的资源迁移到集群时,在迁移的过程中ip可能会变化,但是域名+dns解析能完美解决此问题

[root@k8s-master ~]# vim timinglee.yaml

---

apiVersion: v1

kind: Service

metadata:

labels:

app: timinglee-service

name: timinglee-service

spec:

selector:

app: timinglee

type: ExternalName

externalName: www.timinglee.org

[root@k8s-master ~]# kubectl apply -f timinglee.yaml

[root@k8s-master ~]# kubectl get services timinglee-service

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

timinglee-service ExternalName <none> www.timinglee.org <none> 2m58s

Ingress-nginx

官网:

Installation Guide - Ingress-Nginx Controller

ingress-nginx功能

-

一种全局的、为了代理不同后端 Service 而设置的负载均衡服务,支持7层

-

Ingress由两部分组成:Ingress controller和Ingress服务

-

Ingress Controller 会根据你定义的 Ingress 对象,提供对应的代理能力。

-

业界常用的各种反向代理项目,比如 Nginx、HAProxy、Envoy、Traefik 等,都已经为Kubernetes 专门维护了对应的 Ingress Controller。

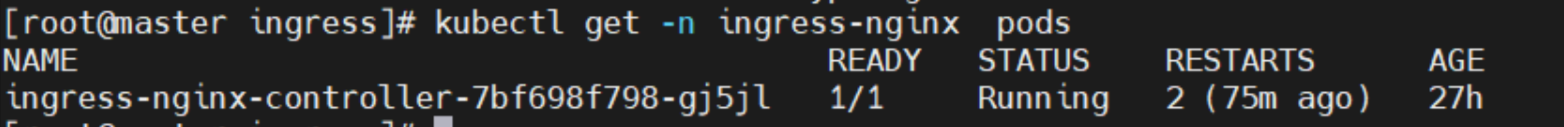

部署ingress

下载部署文件

[root@k8s-master ~]# wget https://raw.githubusercontent.com/kubernetes/ingress-nginx/controller-v1.11.2/deploy/static/provider/baremetal/deploy.yaml上传ingress所需镜像到harbor

[root@k8s-master ~]# docker tag registry.k8s.io/ingress-nginx/controller:v1.11.2@sha256:d5f8217feeac4887cb1ed21f27c2674e58be06bd8f5184cacea2a69abaf78dce reg.timinglee.org/ingress-nginx/controller:v1.11.2

[root@k8s-master ~]# docker tag registry.k8s.io/ingress-nginx/kube-webhook-certgen:v1.4.3@sha256:a320a50cc91bd15fd2d6fa6de58bd98c1bd64b9a6f926ce23a600d87043455a3 reg.timinglee.org/ingress-nginx/kube-webhook-certgen:v1.4.3

[root@k8s-master ~]# docker push reg.timinglee.org/ingress-nginx/controller:v1.11.2

[root@k8s-master ~]# docker push reg.timinglee.org/ingress-nginx/kube-webhook-certgen:v1.4.3安装

[root@k8s-master ~]# vim deploy.yaml

445 image: ingress-nginx/controller:v1.11.2

546 image: ingress-nginx/kube-webhook-certgen:v1.4.3

599 image: ingress-nginx/kube-webhook-certgen:v1.4.3

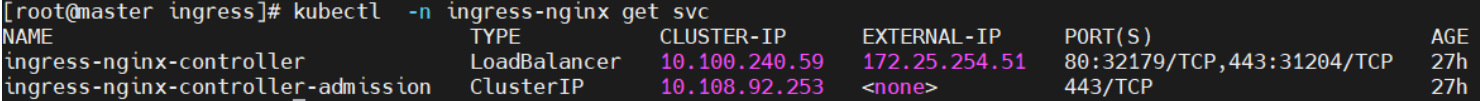

修改微服务

[root@k8s-master ~]# kubectl -n ingress-nginx edit svc ingress-nginx-controller

type: LoadBalanceringress 的高级用法

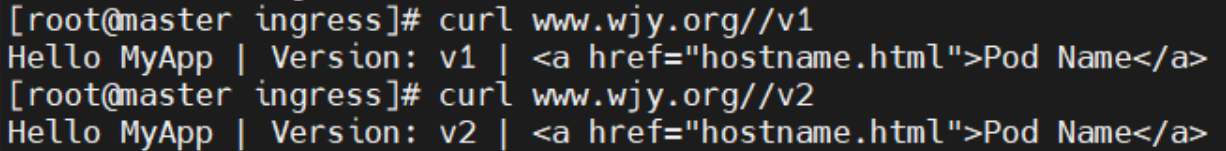

基于路径的访问

1.建立用于测试的控制器myapp

[root@k8s-master app]# kubectl create deployment myapp-v1 --image myapp:v1 --dry-run=client -o yaml > myapp-v1.yaml

[root@k8s-master app]# kubectl create deployment myapp-v2 --image myapp:v2 --dry-run=client -o yaml > myapp-v2.yaml

[root@k8s-master app]# vim myapp-v1.yaml

apiVersion: apps/v1

kind: Deployment

metadata:

labels:

app: myapp-v1

name: myapp-v1

spec:

replicas: 1

selector:

matchLabels:

app: myapp-v1

strategy: {}

template:

metadata:

labels:

app: myapp-v1

spec:

containers:

- image: myapp:v1

name: myapp

---

apiVersion: v1

kind: Service

metadata:

labels:

app: myapp-v1

name: myapp-v1

spec:

ports:

- port: 80

protocol: TCP

targetPort: 80

selector:

app: myapp-v1

[root@k8s-master app]# vim myapp-v2.yaml

apiVersion: apps/v1

kind: Deployment

metadata:

labels:

app: myapp-v2

name: myapp-v2

spec:

replicas: 1

selector:

matchLabels:

app: myapp-v2

template:

metadata:

labels:

app: myapp-v2

spec:

containers:

- image: myapp:v2

name: myapp

---

apiVersion: v1

kind: Service

metadata:

labels:

app: myapp-v2

name: myapp-v2

spec:

ports:

- port: 80

protocol: TCP

targetPort: 80

selector:

app: myapp-v2

[root@k8s-master app]# kubectl expose deployment myapp-v1 --port 80 --target-port 80 --dry-run=client -o yaml >> myapp-v1.yaml

[root@k8s-master app]# kubectl expose deployment myapp-v2 --port 80 --target-port 80 --dry-run=client -o yaml >> myapp-v1.yaml

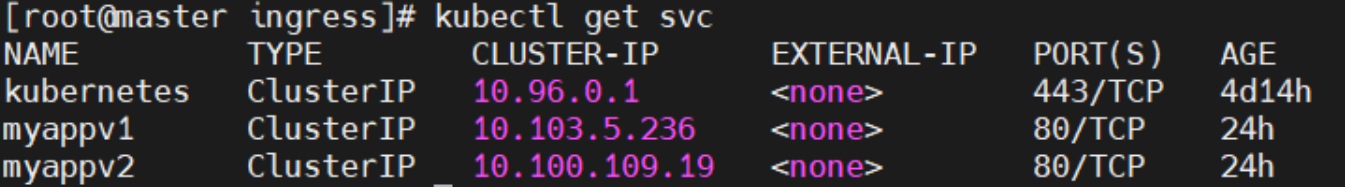

[root@k8s-master app]# kubectl get services

建立ingress的yaml

[root@k8s-master app]# vim ingress1.yml

apiVersion: networking.k8s.io/v1

kind: Ingress

metadata:

annotations:

nginx.ingress.kubernetes.io/rewrite-target: / #访问路径后加任何内容都被定向到/

name: ingress1

spec:

ingressClassName: nginx

rules:

- host: www.timinglee.org

http:

paths:

- backend:

service:

name: myapp-v1

port:

number: 80

path: /v1

pathType: Prefix

- backend:

service:

name: myapp-v2

port:

number: 80

path: /v2

pathType: Prefix

测试

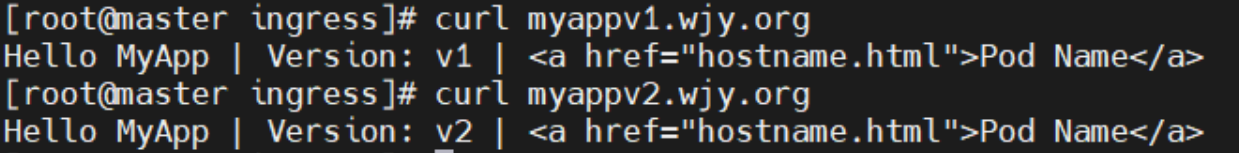

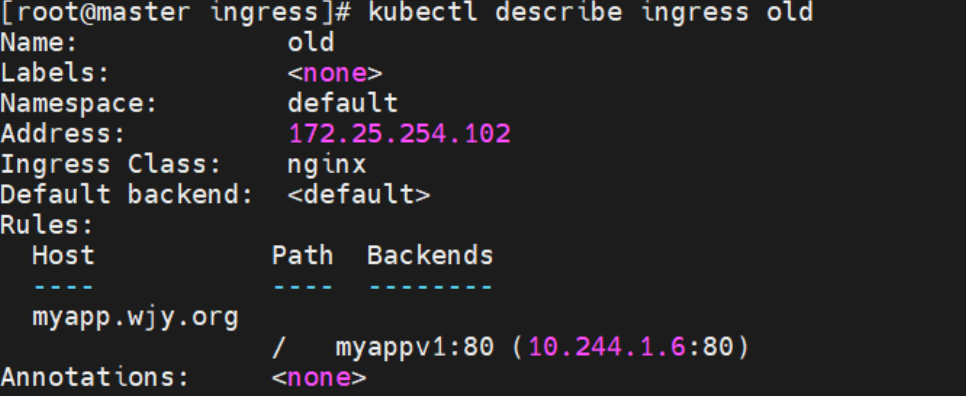

基于域名的访问

在测试主机中设定解析

[root@reg ~]# vim /etc/hosts

127.0.0.1 localhost localhost.localdomain localhost4 localhost4.localdomain4

::1 localhost localhost.localdomain localhost6 localhost6.localdomain6

172.25.254.250 reg.timinglee.org

172.25.254.50 www.timinglee.org myappv1.timinglee.org myappv2.timinglee.org

建立基于域名的yml文件

[root@k8s-master app]# vim ingress2.yml

apiVersion: networking.k8s.io/v1

kind: Ingress

metadata:

annotations:

nginx.ingress.kubernetes.io/rewrite-target: /

name: ingress2

spec:

ingressClassName: nginx

rules:

- host: myappv1.timinglee.org

http:

paths:

- backend:

service:

name: myapp-v1

port:

number: 80

path: /

pathType: Prefix

- host: myappv2.timinglee.org

http:

paths:

- backend:

service:

name: myapp-v2

port:

number: 80

path: /

pathType: Prefix

#利用文件建立ingress

[root@k8s-master app]# kubectl apply -f ingress2.yml

ingress.networking.k8s.io/ingress2 created

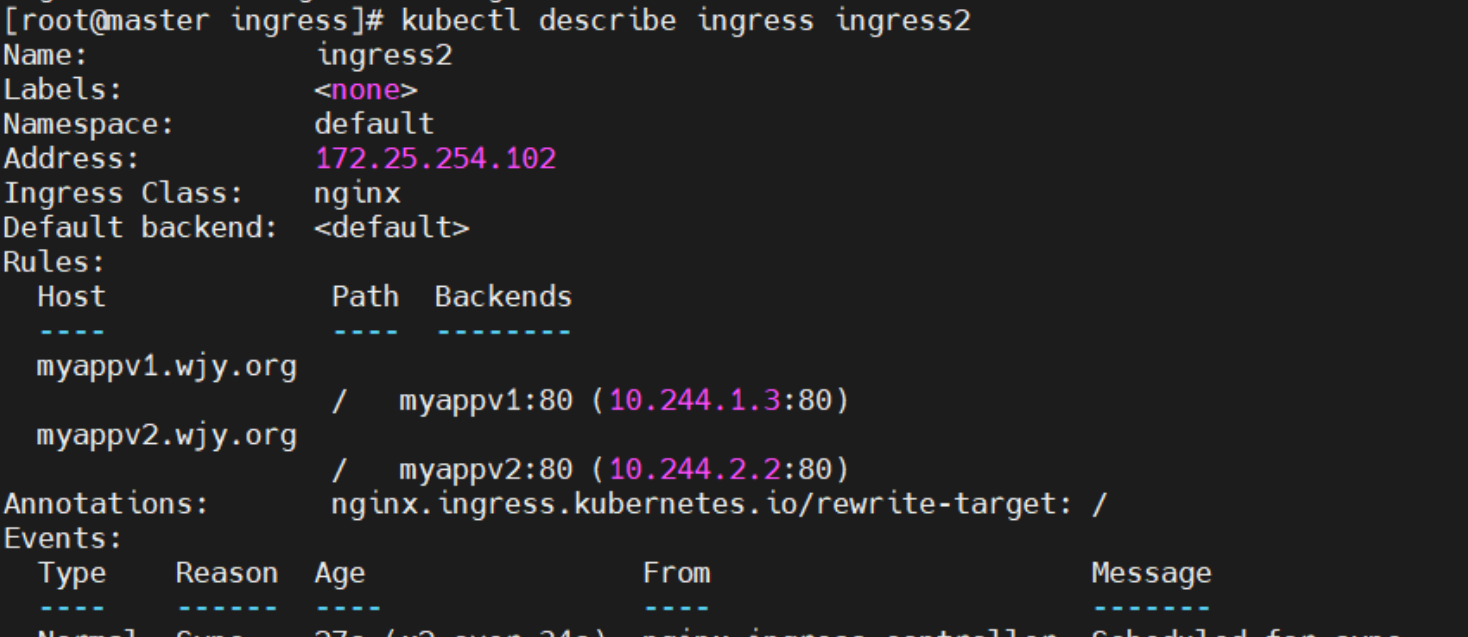

[root@k8s-master app]# kubectl describe ingress ingress2

Name: ingress2

Labels: <none>

Namespace: default

Address:

Ingress Class: nginx

Default backend: <default>

Rules:

Host Path Backends

---- ---- --------

myappv1.timinglee.org

/ myapp-v1:80 (10.244.2.31:80)

myappv2.timinglee.org

/ myapp-v2:80 (10.244.2.32:80)

Annotations: nginx.ingress.kubernetes.io/rewrite-target: /

Events:

Type Reason Age From Message

---- ------ ---- ---- -------

Normal Sync 21s nginx-ingress-controller Scheduled for sync

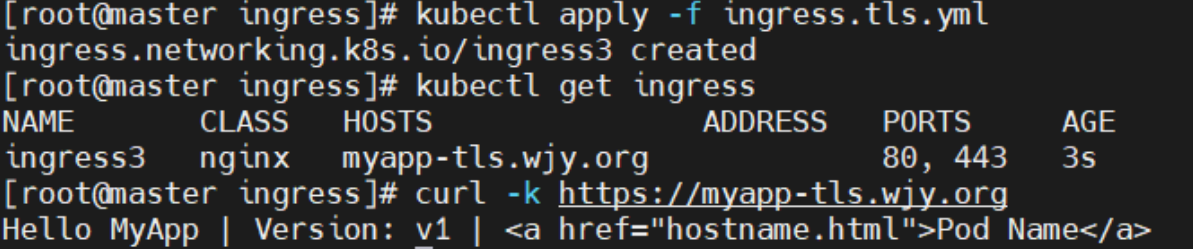

建立tls加密

建立证书

[root@k8s-master app]# openssl req -newkey rsa:2048 -nodes -keyout tls.key -x509 -days 365 -subj "/CN=nginxsvc/O=nginxsvc" -out tls.crt建立加密资源类型secret

[root@k8s-master app]# kubectl create secret tls web-tls-secret --key tls.key --cert tls.crt

secret/web-tls-secret created

[root@k8s-master app]# kubectl get secrets

NAME TYPE DATA AGE

web-tls-secret kubernetes.io/tls 2 6s建立ingress3基于tls认证的yml文件

[root@k8s-master app]# vim ingress3.yml

apiVersion: networking.k8s.io/v1

kind: Ingress

metadata:

annotations:

nginx.ingress.kubernetes.io/rewrite-target: /

name: ingress3

spec:

tls:

- hosts:

- myapp-tls.timinglee.org

secretName: web-tls-secret

ingressClassName: nginx

rules:

- host: myapp-tls.timinglee.org

http:

paths:

- backend:

service:

name: myapp-v1

port:

number: 80

path: /

pathType: Prefix

#测试

[root@reg ~]# curl -k https://myapp-tls.timinglee.org

Hello MyApp | Version: v1 | <a href="hostname.html">Pod Name</a>

建立auth认证

建立认证文件

[root@k8s-master app]# dnf install httpd-tools -y

[root@k8s-master app]# htpasswd -cm auth lee

New password:

Re-type new password:

Adding password for user lee

[root@k8s-master app]# cat auth

lee:$apr1$BohBRkkI$hZzRDfpdtNzue98bFgcU10建立认证类型资源

[root@k8s-master app]# kubectl create secret generic auth-web --from-file auth

root@k8s-master app]# kubectl describe secrets auth-web

Name: auth-web

Namespace: default

Labels: <none>

Annotations: <none>

Type: Opaque

Data

====

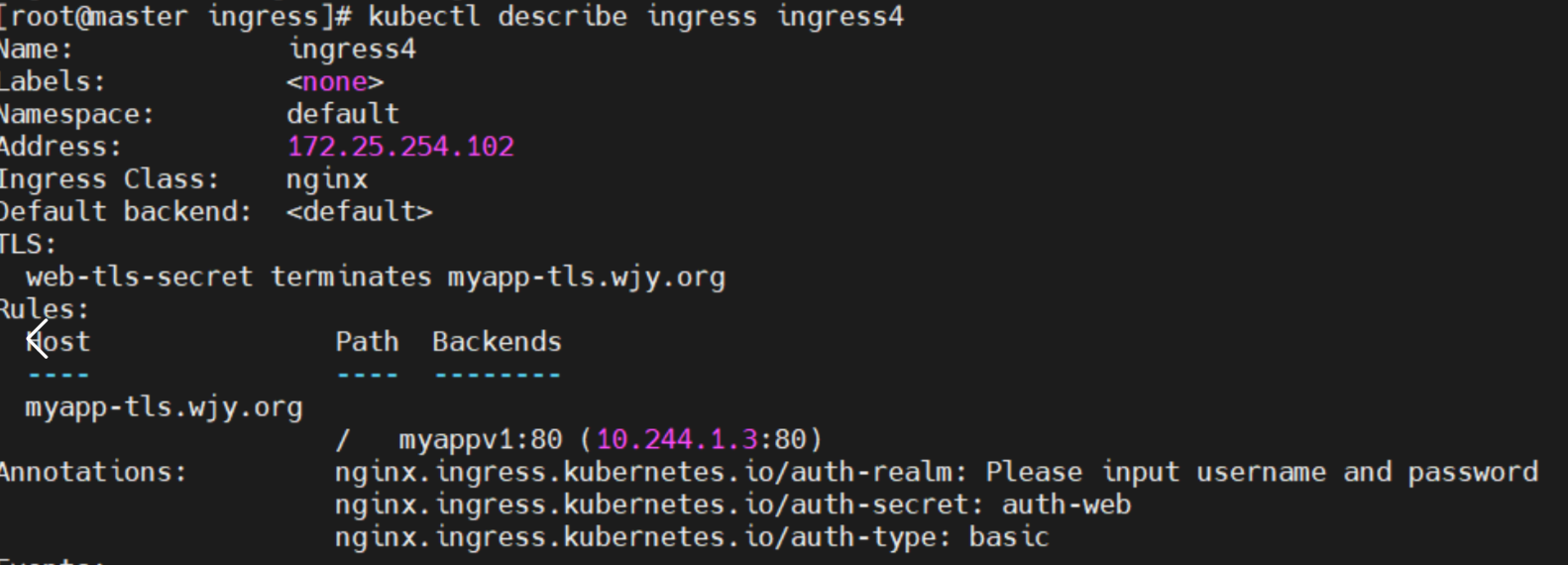

auth: 42 bytes建立ingress4基于用户认证的yaml文件

[root@k8s-master app]# vim ingress4.yml

apiVersion: networking.k8s.io/v1

kind: Ingress

metadata:

annotations:

nginx.ingress.kubernetes.io/auth-type: basic

nginx.ingress.kubernetes.io/auth-secret: auth-web

nginx.ingress.kubernetes.io/auth-realm: "Please input username and password"

name: ingress4

spec:

tls:

- hosts:

- myapp-tls.timinglee.org

secretName: web-tls-secret

ingressClassName: nginx

rules:

- host: myapp-tls.timinglee.org

http:

paths:

- backend:

service:

name: myapp-v1

port:

number: 80

path: /

pathType: Prefix建立ingress4

[root@k8s-master app]# kubectl apply -f ingress4.yml

ingress.networking.k8s.io/ingress4 created

[root@k8s-master app]# kubectl describe ingress ingress4

Name: ingress4

Labels: <none>

Namespace: default

Address:

Ingress Class: nginx

Default backend: <default>

TLS:

web-tls-secret terminates myapp-tls.timinglee.org

Rules:

Host Path Backends

---- ---- --------

myapp-tls.timinglee.org

/ myapp-v1:80 (10.244.2.31:80)

Annotations: nginx.ingress.kubernetes.io/auth-realm: Please input username and password

nginx.ingress.kubernetes.io/auth-secret: auth-web

nginx.ingress.kubernetes.io/auth-type: basic

Events:

Type Reason Age From Message

---- ------ ---- ---- -------

Normal Sync 14s nginx-ingress-controller Scheduled for sync

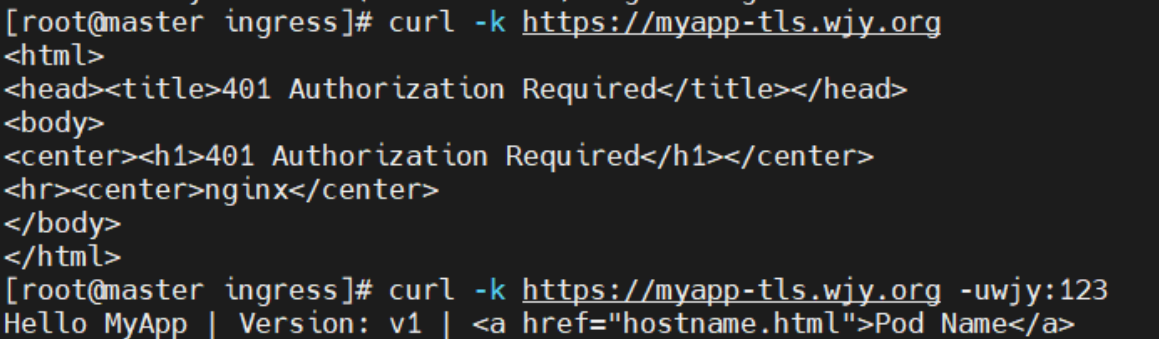

#测试:

[root@reg ~]# curl -k https://myapp-tls.timinglee.org

<html>

<head><title>401 Authorization Required</title></head>

<body>

<center><h1>401 Authorization Required</h1></center>

<hr><center>nginx</center>

</body>

</html>

[root@reg ~]# curl -k https://myapp-tls.timinglee.org -ulee:lee

Hello MyApp | Version: v1 | <a href="hostname.html">Pod Name</a>

测试

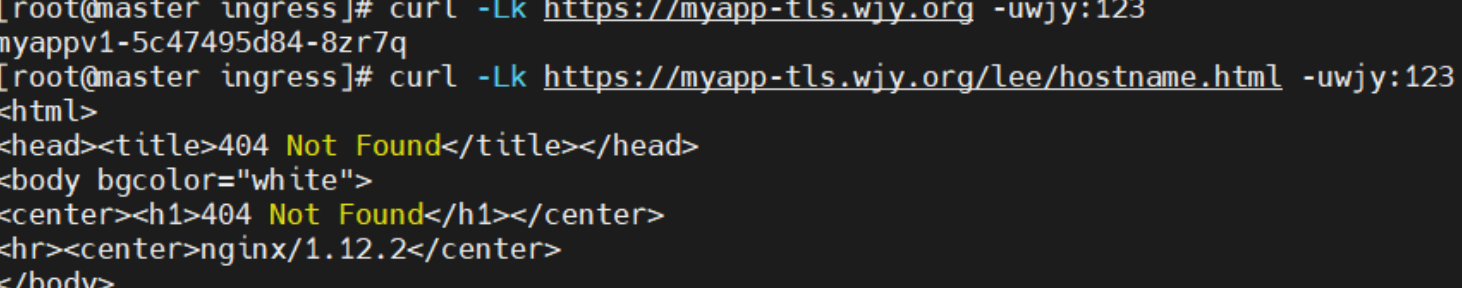

rewrite重定向

指定默认访问的文件到hostname.html上

[root@k8s-master app]# vim ingress5.yml

apiVersion: networking.k8s.io/v1

kind: Ingress

metadata:

annotations:

nginx.ingress.kubernetes.io/app-root: /hostname.html

nginx.ingress.kubernetes.io/auth-type: basic

nginx.ingress.kubernetes.io/auth-secret: auth-web

nginx.ingress.kubernetes.io/auth-realm: "Please input username and password"

name: ingress5

spec:

tls:

- hosts:

- myapp-tls.timinglee.org

secretName: web-tls-secret

ingressClassName: nginx

rules:

- host: myapp-tls.timinglee.org

http:

paths:

- backend:

service:

name: myapp-v1

port:

number: 80

path: /

pathType: Prefix

[root@k8s-master app]# kubectl apply -f ingress5.yml

ingress.networking.k8s.io/ingress5 created

[root@k8s-master app]# kubectl describe ingress ingress5

Name: ingress5

Labels: <none>

Namespace: default

Address: 172.25.254.10

Ingress Class: nginx

Default backend: <default>

TLS:

web-tls-secret terminates myapp-tls.timinglee.org

Rules:

Host Path Backends

---- ---- --------

myapp-tls.timinglee.org

/ myapp-v1:80 (10.244.2.31:80)

Annotations: nginx.ingress.kubernetes.io/app-root: /hostname.html

nginx.ingress.kubernetes.io/auth-realm: Please input username and password

nginx.ingress.kubernetes.io/auth-secret: auth-web

nginx.ingress.kubernetes.io/auth-type: basic

Events:

Type Reason Age From Message

---- ------ ---- ---- -------

Normal Sync 2m16s (x2 over 2m54s) nginx-ingress-controller Scheduled for sync

测试

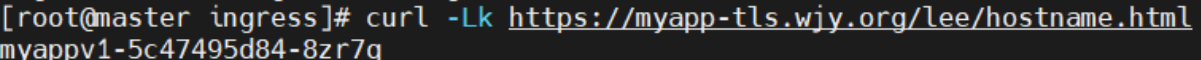

重定向问题

apiVersion: networking.k8s.io/v1

kind: Ingress

metadata:

annotations:

nginx.ingress.kubernetes.io/rewrite-target: /$2

nginx.ingress.kubernetes.io/use-regex: "true"

nginx.ingress.kubernetes.io/auth-type: basic

nginx.ingress.kubernetes.io/auth-secret: auth-web

nginx.ingress.kubernetes.io/auth-realm: "Please input username and password"

name: ingress6

spec:

tls:

- hosts:

- myapp-tls.timinglee.org

secretName: web-tls-secret

ingressClassName: nginx

rules:

- host: myapp-tls.timinglee.org

http:

paths:

- backend:

service:

name: myapp-v1

port:

number: 80

path: /

pathType: Prefix

- backend:

service:

name: myapp-v1

port:

number: 80

path: /lee(/|$)(.*) #正则表达式匹配/lee/,/lee/abc

pathType: ImplementationSpecific

Canary金丝雀发布

金丝雀发布(Canary Release)也称为灰度发布,是一种软件发布策略。

主要目的是在将新版本的软件全面推广到生产环境之前,先在一小部分用户或服务器上进行测试和验证,以降低因新版本引入重大问题而对整个系统造成的影响。

是一种Pod的发布方式。金丝雀发布采取先添加、再删除的方式,保证Pod的总量不低于期望值。并且在更新部分Pod后,暂停更新,当确认新Pod版本运行正常后再进行其他版本的Pod的更新。

Canary发布方式

基于header(http包头)灰度

-

通过Annotaion扩展

-

创建灰度ingress,配置灰度头部key以及value

-

灰度流量验证完毕后,切换正式ingress到新版本

-

之前我们在做升级时可以通过控制器做滚动更新,默认25%利用header可以使升级更为平滑,通过key 和vule 测试新的业务体系是否有问题。

建立版本1的ingress

[root@k8s-master app]# vim ingress7.yml

apiVersion: networking.k8s.io/v1

kind: Ingress

metadata:

annotations:

name: myapp-v1-ingress

spec:

ingressClassName: nginx

rules:

- host: myapp.timinglee.org

http:

paths:

- backend:

service:

name: myapp-v1

port:

number: 80

path: /

pathType: Prefix

[root@k8s-master app]# kubectl describe ingress myapp-v1-ingress

Name: myapp-v1-ingress

Labels: <none>

Namespace: default

Address: 172.25.254.10

Ingress Class: nginx

Default backend: <default>

Rules:

Host Path Backends

---- ---- --------

myapp.timinglee.org

/ myapp-v1:80 (10.244.2.31:80)

Annotations: <none>

Events:

Type Reason Age From Message

---- ------ ---- ---- -------

Normal Sync 44s (x2 over 73s) nginx-ingress-controller Scheduled for sync

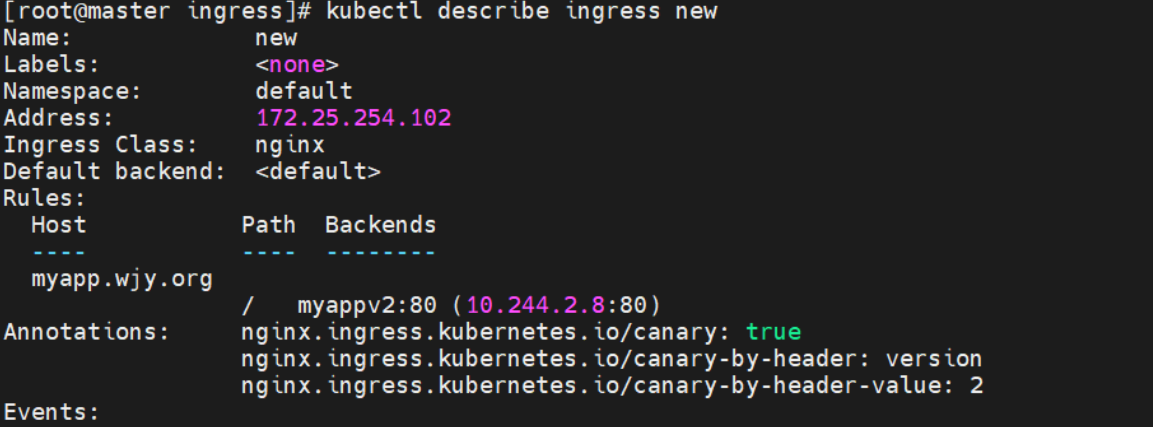

建立基于header的ingress

[root@k8s-master app]# vim ingress8.yml

apiVersion: networking.k8s.io/v1

kind: Ingress

metadata:

annotations:

nginx.ingress.kubernetes.io/canary: "true"

nginx.ingress.kubernetes.io/canary-by-header: “version”

nginx.ingress.kubernetes.io/canary-by-header-value: ”2“

name: myapp-v2-ingress

spec:

ingressClassName: nginx

rules:

- host: myapp.timinglee.org

http:

paths:

- backend:

service:

name: myapp-v2

port:

number: 80

path: /

pathType: Prefix

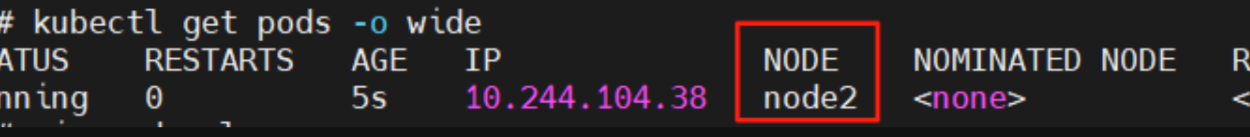

[root@k8s-master app]# kubectl apply -f ingress8.yml

ingress.networking.k8s.io/myapp-v2-ingress created

[root@k8s-master app]# kubectl describe ingress myapp-v2-ingress

Name: myapp-v2-ingress

Labels: <none>

Namespace: default

Address:

Ingress Class: nginx

Default backend: <default>

Rules:

Host Path Backends

---- ---- --------

myapp.timinglee.org

/ myapp-v2:80 (10.244.2.32:80)

Annotations: nginx.ingress.kubernetes.io/canary: true

nginx.ingress.kubernetes.io/canary-by-header: version

nginx.ingress.kubernetes.io/canary-by-header-value: 2

Events:

Type Reason Age From Message

---- ------ ---- ---- -------

Normal Sync 21s nginx-ingress-controller Scheduled for sync

基于权重的灰度发布

-

通过Annotaion拓展

-

创建灰度ingress,配置灰度权重以及总权重

-

灰度流量验证完毕后,切换正式ingress到新版本

[root@k8s-master app]# vim ingress8.yml

apiVersion: networking.k8s.io/v1

kind: Ingress

metadata:

annotations:

nginx.ingress.kubernetes.io/canary: "true"

nginx.ingress.kubernetes.io/canary-weight: "10" #更改权重值

nginx.ingress.kubernetes.io/canary-weight-total: "100"

name: myapp-v2-ingress

spec:

ingressClassName: nginx

rules:

- host: myapp.timinglee.org

http:

paths:

- backend:

service:

name: myapp-v2

port:

number: 80

path: /

pathType: Prefix

[root@k8s-master app]# kubectl apply -f ingress8.yml

ingress.networking.k8s.io/myapp-v2-ingress created

#测试:

[root@reg ~]# vim check_ingress.sh

#!/bin/bash

v1=0

v2=0

for (( i=0; i<100; i++))

do

response=`curl -s myapp.timinglee.org |grep -c v1`

v1=`expr $v1 + $response`

v2=`expr $v2 + 1 - $response`

done

echo "v1:$v1, v2:$v2"

[root@reg ~]# sh check_ingress.sh

v1:90, v2:10

#更改完毕权重后继续测试可观察变化

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?