First, download Scala from the official website and configure its environment variables, the same way you would for Java.

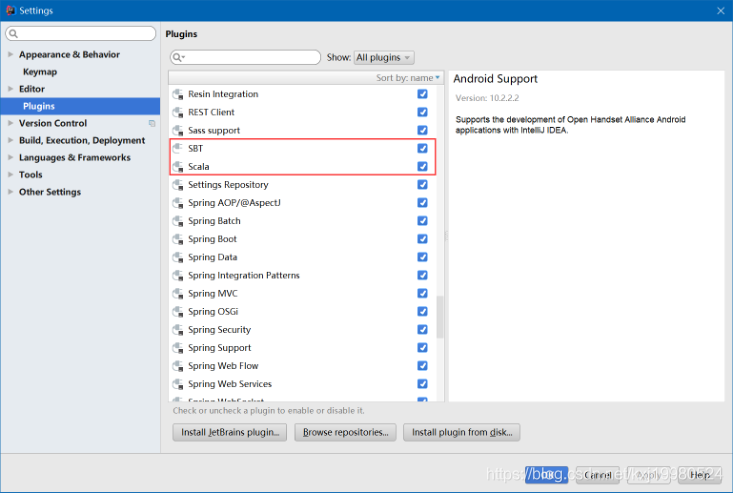
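A quick way to confirm the setup is to print what the running JVM actually sees. This is only a minimal sketch: the variable names `JAVA_HOME` and `SCALA_HOME` are assumptions (the post does not name the variables it sets), and `CheckScalaEnv` and `describe` are hypothetical names used for illustration.

```scala
object CheckScalaEnv {
  /** Describes whether a variable is set, given a lookup function (hypothetical helper). */
  def describe(name: String, lookup: String => Option[String]): String =
    lookup(name) match {
      case Some(value) if value.nonEmpty => s"$name = $value"
      case _                             => s"$name is not set"
    }

  def main(args: Array[String]): Unit = {
    // Version of the Scala library this program is running on.
    println(s"Running Scala ${scala.util.Properties.versionNumberString}")
    // Assumed variable names; adjust them to whatever you configured.
    Seq("JAVA_HOME", "SCALA_HOME").foreach { name =>
      println(describe(name, key => sys.env.get(key)))
    }
  }
}
```

If a variable shows up as not set, reopen the terminal (or restart IDEA) so the new environment is picked up before changing anything else.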

Then open IDEA and install these two plugins:

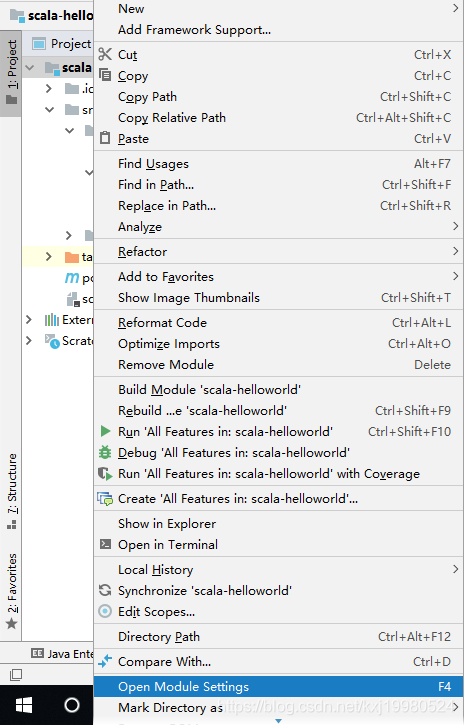

Next, create a plain Maven project:

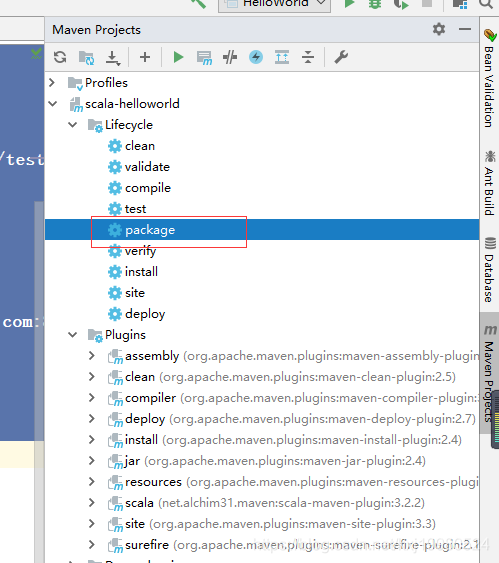

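Add the dependencies below to `pom.xml`. The plugins in the `<build>` section are required for packaging; the only thing you need to change is `mainClass`.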

    <?xml version="1.0" encoding="UTF-8"?>
    <project xmlns="http://maven.apache.org/POM/4.0.0"
             xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance"
             xsi:schemaLocation="http://maven.apache.org/POM/4.0.0 http://maven.apache.org/xsd/maven-4.0.0.xsd">
        <modelVersion>4.0.0</modelVersion>

        <groupId>com.buba</groupId>
        <artifactId>scala-helloworld</artifactId>
        <version>1.0-SNAPSHOT</version>
        <packaging>jar</packaging>

        <properties>
            <scala.version>2.11.8</scala.version>
            <spark.version>2.1.1</spark.version>
        </properties>

        <dependencies>
            <dependency>
                <groupId>org.scala-lang</groupId>
                <artifactId>scala-library</artifactId>
                <version>${scala.version}</version>
                <scope>provided</scope>
            </dependency>
            <!-- https://mvnrepository.com/artifact/org.apache.spark/spark-core -->
            <dependency>
                <groupId>org.apache.spark</groupId>
                <artifactId>spark-core_2.11</artifactId>
                <version>${spark.version}</version>
                <scope>provided</scope>
            </dependency>
        </dependencies>

        <build>
            <plugins>
                <plugin>
                    <groupId>org.apache.maven.plugins</groupId>
                    <artifactId>maven-compiler-plugin</artifactId>
                    <version>3.6.1</version>
                    <configuration>
                        <source>1.8</source>
                        <target>1.8</target>
                    </configuration>
                </plugin>
                <plugin>
                    <groupId>net.alchim31.maven</groupId>
                    <artifactId>scala-maven-plugin</artifactId>
                    <version>3.2.2</version>
                    <executions>
                        <execution>
                            <goals>
                                <goal>compile</goal>
                                <goal>testCompile</goal>
                            </goals>
                        </execution>
                    </executions>
                </plugin>
                <plugin>
                    <groupId>org.apache.maven.plugins</groupId>
                    <artifactId>maven-assembly-plugin</artifactId>
                    <version>3.0.0</version>
                    <executions>
                        <execution>
                            <id>make-assembly</id>
                            <phase>package</phase>
                            <goals>
                                <goal>single</goal>
                            </goals>
                        </execution>
                    </executions>
                    <configuration>
                        <archive>
                            <manifest>
                                <mainClass>com.buba.WordCount</mainClass>
                            </manifest>
                        </archive>
                        <descriptorRefs>
                            <descriptorRef>jar-with-dependencies</descriptorRef>
                        </descriptorRefs>
                    </configuration>
                </plugin>
            </plugins>
        </build>
    </project>

The Scala class, WordCount:

package com.buba

import org.apache.spark.{SparkConf, SparkContext}

object WordCount extends App {

val sparkConf = new SparkConf().setAppName("WordCount")

val sparkContext = new SparkContext(sparkConf)

val file = sparkContext.textFile("hdfs://hadoop-senior01.buba.com:8020/test.txt")

val words = file.flatMap(_.split(" "))

val wordsTuple = words.map((_,1))

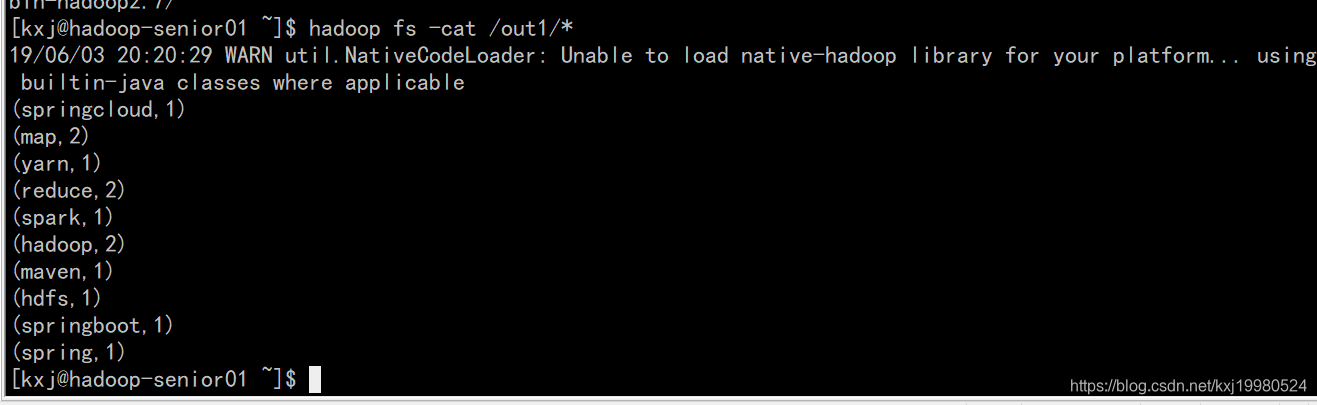

wordsTuple.reduceByKey(_+_).saveAsTextFile("hdfs://hadoop-senior01.buba.com:8020/out1")

sparkContext.stop()

}
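To see what the `flatMap`, `map` and `reduceByKey` steps compute without a cluster, the same pipeline can be sketched on an ordinary in-memory `Seq`. This is an illustration only, not the Spark job itself: `LocalWordCount`, `countWords` and the sample lines are hypothetical, and `groupBy` plus a sum stands in for Spark's `reduceByKey`.

```scala
object LocalWordCount {
  /** Counts words in memory using the same split -> pair -> sum-by-key steps. */
  def countWords(lines: Seq[String]): Map[String, Int] =
    lines
      .flatMap(_.split(" "))   // split each line on single spaces, as in the job
      .map((_, 1))             // pair each word with a count of 1
      .groupBy(_._1)           // group the pairs by word
      .map { case (word, pairs) => word -> pairs.map(_._2).sum } // sum counts per word

  def main(args: Array[String]): Unit = {
    // Made-up sample input standing in for the lines of test.txt.
    val sample = Seq("hello spark", "hello scala")
    countWords(sample).toSeq.sortBy(_._1).foreach { case (word, count) =>
      println(s"$word\t$count")
    }
  }
}
```

For the two sample lines this gives a count of 2 for `hello` and 1 each for `scala` and `spark`. Like the job, the sketch splits on a single space, so consecutive spaces in the input would produce empty-string "words".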

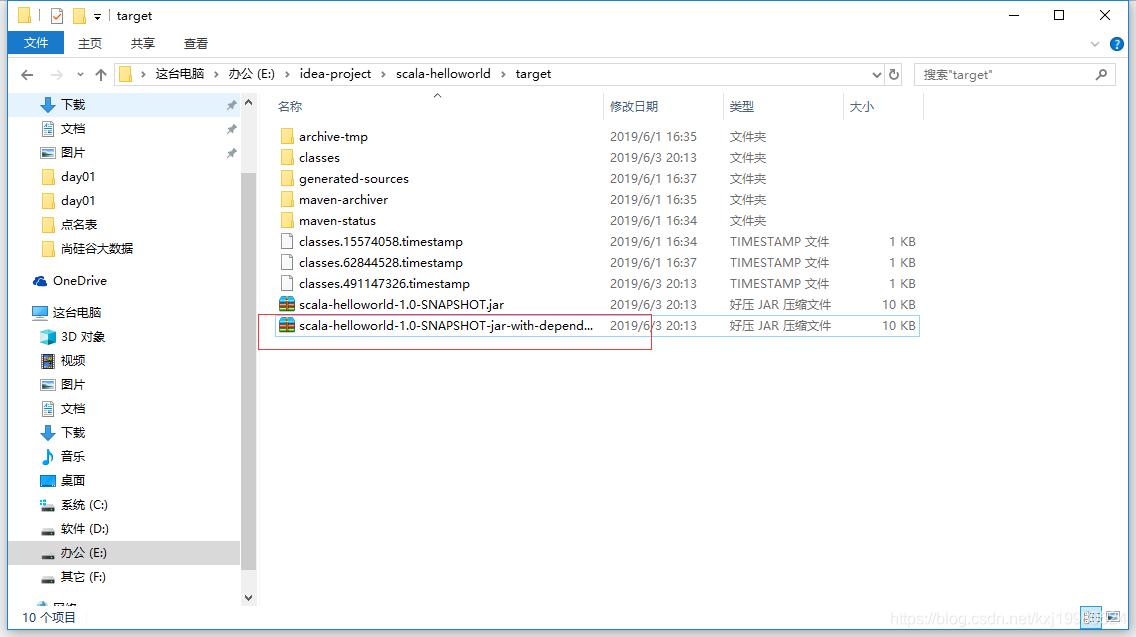

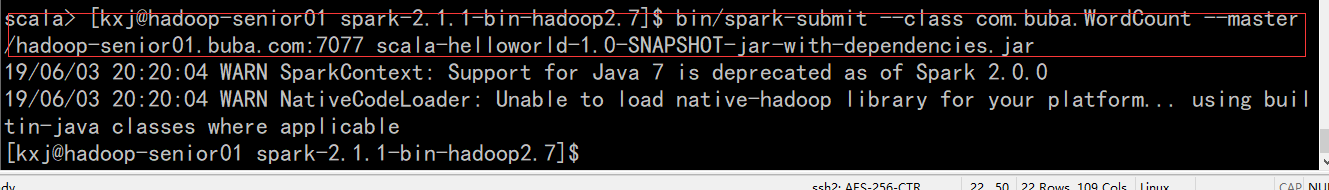

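After packaging, upload the resulting jar (with this pom, `target/scala-helloworld-1.0-SNAPSHOT-jar-with-dependencies.jar`) to the Spark cluster, where it can be run with `spark-submit`.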
