[Convolutional Neural Networks] (3) VGG

1. Introduction

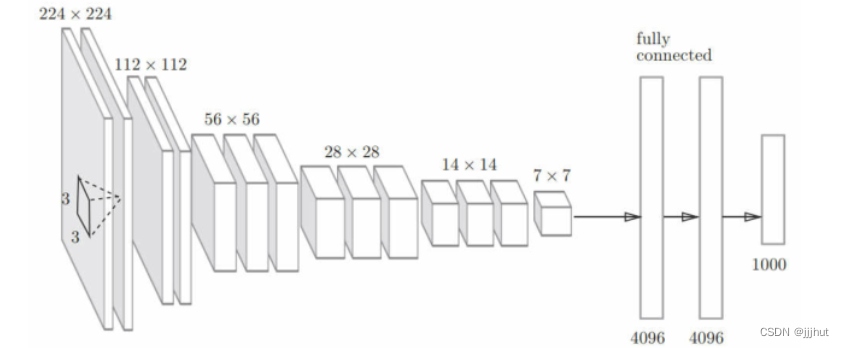

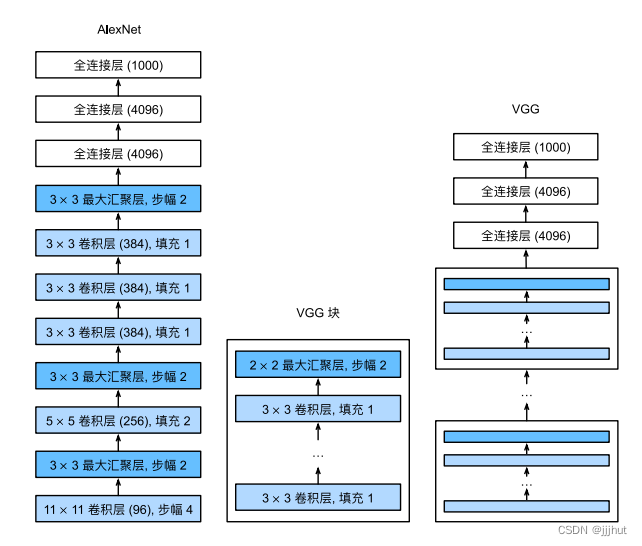

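VGG is a basic CNN built from convolutional layers and pooling layers. As the figure shows, its distinguishing feature is depth: the layers that carry weights (convolutional or fully connected) are stacked to 16 layers (or 19).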

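Points to note about VGG:

(1) Convolutional layers with small 3×3 filters are applied consecutively. As the figure above shows, the network repeats the pattern "stack two to four convolutional layers, then halve the spatial size with a pooling layer", and finally produces its output through fully connected layers.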
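A common rationale for stacking small filters, which the point above does not spell out, is that several stride-1 3×3 convolutions cover the same receptive field as one larger filter while using fewer weights. The sketch below is plain arithmetic rather than anything from the post; the function names and the channel count of 64 are illustrative assumptions, and biases are ignored.

```python
def stacked_receptive_field(num_layers, kernel_size=3):
    """Receptive field (one side) of num_layers stride-1 convolutions in sequence."""
    return 1 + num_layers * (kernel_size - 1)


def conv_weights(kernel_size, channels, num_layers=1):
    """Weight count, biases ignored, with `channels` input and output channels per layer."""
    return num_layers * kernel_size * kernel_size * channels * channels


channels = 64  # illustrative value, not taken from the post
for n in (2, 3, 4):
    k = stacked_receptive_field(n)
    stacked = conv_weights(3, channels, n)
    single = conv_weights(k, channels)
    print(f"{n} stacked 3x3 convs: receptive field {k}x{k}, "
          f"{stacked} weights vs {single} for one {k}x{k} conv")
```

Each extra 3×3 layer also adds another nonlinearity between the input and the output, which a single large filter does not.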

Comparing the network structures of AlexNet and VGG:

Similarity: both are, in essence, block-based designs.

VGG-11 in PyTorch

Building the model

VGG-11 consists of 8 convolutional layers and 3 fully connected layers.
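The layer count can be checked directly from a per-block configuration of `(num_convs, out_channels)` pairs. In the sketch below, the VGG-11 tuple is the one used in this post; the VGG-16 and VGG-19 tuples are not part of the post and are the standard configurations, added here only for comparison. `count_weight_layers` is a hypothetical helper name.

```python
# (num_convs, out_channels) for each of the five blocks
vgg11_arch = ((1, 64), (1, 128), (2, 256), (2, 512), (2, 512))  # used in this post
vgg16_arch = ((2, 64), (2, 128), (3, 256), (3, 512), (3, 512))  # assumption: standard VGG-16
vgg19_arch = ((2, 64), (2, 128), (4, 256), (4, 512), (4, 512))  # assumption: standard VGG-19

NUM_FC_LAYERS = 3  # every variant ends with three fully connected layers


def count_weight_layers(conv_arch, num_fc=NUM_FC_LAYERS):
    """Number of layers with weights: all conv layers plus the FC layers."""
    return sum(num_convs for num_convs, _ in conv_arch) + num_fc


for name, arch in (("VGG-11", vgg11_arch), ("VGG-16", vgg16_arch), ("VGG-19", vgg19_arch)):
    print(name, count_weight_layers(arch))
```

Pooling, ReLU, and dropout layers have no weights, so they do not count toward the number in the model name.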

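    import torch
    from torch import nn
    from d2l import torch as d2l

    # (num_convs, out_channels) for each of the five VGG blocks
    conv_arch = ((1, 64), (1, 128), (2, 256), (2, 512), (2, 512))

    # vgg_block is called below but is not defined in the original listing;
    # this definition follows the d2l book: num_convs 3x3 convolutions
    # (padding 1) with ReLU, then a 2x2 max pool that halves height and width.
    def vgg_block(num_convs, in_channels, out_channels):
        layers = []
        for _ in range(num_convs):
            layers.append(nn.Conv2d(in_channels, out_channels,
                                    kernel_size=3, padding=1))
            layers.append(nn.ReLU())
            in_channels = out_channels
        layers.append(nn.MaxPool2d(kernel_size=2, stride=2))
        return nn.Sequential(*layers)

    def vgg(conv_arch):
        conv_blks = []
        in_channels = 1  # single-channel (grayscale) input
        # Convolutional part
        for (num_convs, out_channels) in conv_arch:
            conv_blks.append(vgg_block(num_convs, in_channels, out_channels))
            in_channels = out_channels
        return nn.Sequential(
            *conv_blks, nn.Flatten(),
            # Fully connected part
            nn.Linear(out_channels * 7 * 7, 4096), nn.ReLU(), nn.Dropout(0.5),
            nn.Linear(4096, 4096), nn.ReLU(), nn.Dropout(0.5),
            nn.Linear(4096, 10))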

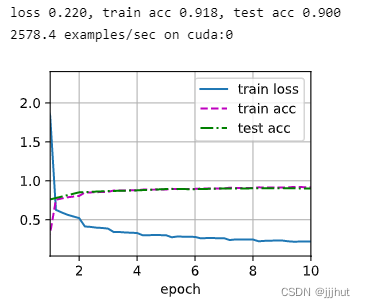
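The factor `7 * 7` in the first `nn.Linear` depends on the input size. The sketch below traces the feature-map shape block by block using only arithmetic, so it runs without PyTorch. It assumes a 224×224 input (the `resize` value used in the training code), 3×3 convolutions with padding 1, and 2×2 max pooling with stride 2, as in the d2l version of `vgg_block`. The helper names `conv_out`, `pool_out`, and `trace_shapes` are made up for this illustration.

```python
def conv_out(size, kernel=3, padding=1, stride=1):
    """Output side length of a convolution (standard floor formula)."""
    return (size + 2 * padding - kernel) // stride + 1


def pool_out(size, kernel=2, stride=2):
    """Output side length of an unpadded max pool."""
    return (size - kernel) // stride + 1


def trace_shapes(conv_arch, size=224):
    """Return (channels, height, width) after each VGG block."""
    shapes = []
    for num_convs, out_channels in conv_arch:
        for _ in range(num_convs):
            size = conv_out(size)   # 3x3 conv with padding 1 keeps the size
        size = pool_out(size)       # 2x2 pool with stride 2 halves it
        shapes.append((out_channels, size, size))
    return shapes


conv_arch = ((1, 64), (1, 128), (2, 256), (2, 512), (2, 512))
for i, shape in enumerate(trace_shapes(conv_arch), start=1):
    print(f"block {i}: {shape}")
```

Five halvings take 224 down to 7, so the flattened vector has `512 * 7 * 7` entries. Feeding a different input size without changing the first `nn.Linear` would cause a shape mismatch.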

Training the model

    # Divide every block's channel count by `ratio` to get a smaller network
    ratio = 4
    small_conv_arch = [(pair[0], pair[1] // ratio) for pair in conv_arch]
    net = vgg(small_conv_arch)

    lr, num_epochs, batch_size = 0.05, 10, 128
    # Resize the images to 224x224 so the feature map is 7x7 before nn.Flatten
    train_iter, test_iter = d2l.load_data_fashion_mnist(batch_size, resize=224)
    d2l.train_ch6(net, train_iter, test_iter, num_epochs, lr, d2l.try_gpu())
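To get a feel for what `ratio = 4` buys, the parameter counts of the full and reduced networks can be estimated by hand. This is a rough sketch, not part of the original post: it assumes 3×3 convolutions with biases, a 7×7 final feature map, 4096 hidden units, and 10 classes, matching the model code above. `vgg_param_count` is a hypothetical helper.

```python
def vgg_param_count(conv_arch, in_channels=1, fc_hidden=4096,
                    num_classes=10, final_hw=7):
    """Count weights and biases of the conv layers and the three FC layers."""
    total = 0
    for num_convs, out_channels in conv_arch:
        for _ in range(num_convs):
            total += in_channels * out_channels * 3 * 3 + out_channels
            in_channels = out_channels
    flat = in_channels * final_hw * final_hw
    fc_sizes = ((flat, fc_hidden), (fc_hidden, fc_hidden), (fc_hidden, num_classes))
    for n_in, n_out in fc_sizes:
        total += n_in * n_out + n_out
    return total


conv_arch = ((1, 64), (1, 128), (2, 256), (2, 512), (2, 512))
ratio = 4
small_conv_arch = [(n, c // ratio) for n, c in conv_arch]

full = vgg_param_count(conv_arch)
small = vgg_param_count(small_conv_arch)
print(f"full: {full:,} parameters, reduced: {small:,} parameters")
```

Under these assumptions most of the parameters sit in the fully connected layers, whose 4096-unit width is unchanged, so the total shrinks by much less than the convolutional part does.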

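This post introduced the VGG network, whose defining feature is depth built from consecutive convolutional layers with small 3×3 filters. After a brief comparison with AlexNet, it walked through building VGG-11 in PyTorch (8 convolutional layers and 3 fully connected layers) and gave a training example.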
