Official HBase-MapReduce

List the dependencies required by HBase MapReduce jobs

bin/hbase mapredcp

Export the environment variables

$ export HBASE_HOME=/opt/module/hbase-1.3.1

$ export HADOOP_CLASSPATH=`${HBASE_HOME}/bin/hbase mapredcp`

Run the official MapReduce jobs

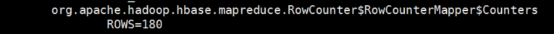

Example 1: count the number of rows in a table (the command below counts the table ns_ct:calllog)

$ /opt/module/hadoop-2.8.4/bin/yarn jar lib/hbase-server-1.3.1.jar rowcounter ns_ct:calllog

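Example 2: use MapReduce to import local data into HBase

(1) Create a local TSV file named city.tsv. Write the rows yourself and separate the fields with tab characters (\t)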

1001 BeiJing China

1002 New York TUS

1003 ShangHai China

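Important: do not copy the data above directly from a Word document. The formatting will be wrong, because the tab separators are not preserved.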
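The reason the separator matters can be shown with a small stand-alone sketch. It is not part of HBase; the class and method names (TsvFieldCheck, splitOnTabs, splitOnWhitespace) are made up for illustration. It splits the second sample row once with real tabs and once after the tabs have been turned into spaces.

```java
import java.util.Arrays;
import java.util.List;

// Illustrative helper (hypothetical, not an HBase class).
final class TsvFieldCheck {

    // Split on tab characters only; the -1 limit keeps trailing empty fields.
    static List<String> splitOnTabs(String line) {
        return Arrays.asList(line.split("\t", -1));
    }

    // Split on any run of whitespace, which is the only option left
    // once the tabs have been replaced by spaces.
    static List<String> splitOnWhitespace(String line) {
        return Arrays.asList(line.trim().split("\\s+"));
    }

    public static void main(String[] args) {
        String withTabs = "1002\tNew York\tTUS"; // real tabs between the fields
        String withSpaces = "1002 New York TUS"; // tabs lost, only spaces remain

        System.out.println(splitOnTabs(withTabs));            // [1002, New York, TUS]
        System.out.println(splitOnTabs(withSpaces).size());   // 1
        System.out.println(splitOnWhitespace(withSpaces));    // [1002, New, York, TUS]
    }
}
```

With tabs the row yields three fields. Without them it is either one field or four, because "New York" itself contains a space, so the file can no longer be mapped to three columns.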

(2) Create the HBase table

hbase(main):001:0> create 'city','cf'

(3) Create the /hbase_test directory in HDFS and upload city.tsv to it

$ /opt/module/hadoop-2.8.4/bin/hdfs dfs -mkdir /hbase_test/

$ /opt/module/hadoop-2.8.4/bin/hdfs dfs -put city.tsv /hbase_test/

(4) Run the MapReduce import into the HBase city table

$ /opt/module/hadoop-2.8.4/bin/yarn jar lib/hbase-server-1.3.1.jar importtsv \
-Dimporttsv.columns=HBASE_ROW_KEY,cf:name,cf:countries city \
hdfs://bigdata11:9000/hbase_test
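The -Dimporttsv.columns option pairs column names with the tab-separated fields by position, and HBASE_ROW_KEY marks the field that becomes the row key. The stand-alone sketch below only models that positional pairing. It is not ImportTsv's actual implementation, and ImportTsvColumnsSketch and mapColumns are hypothetical names.

```java
import java.util.LinkedHashMap;
import java.util.Map;

// Hypothetical model of the positional column mapping (not HBase code).
final class ImportTsvColumnsSketch {

    // Pair the i-th entry of the column spec with the i-th tab-separated field.
    static Map<String, String> mapColumns(String columnsSpec, String line) {
        String[] columns = columnsSpec.split(",");
        String[] fields = line.split("\t", -1);
        if (columns.length != fields.length) {
            throw new IllegalArgumentException(
                "expected " + columns.length + " fields but found " + fields.length);
        }
        Map<String, String> mapped = new LinkedHashMap<>();
        for (int i = 0; i < columns.length; i++) {
            mapped.put(columns[i], fields[i]);
        }
        return mapped;
    }

    public static void main(String[] args) {
        String spec = "HBASE_ROW_KEY,cf:name,cf:countries";
        System.out.println(mapColumns(spec, "1001\tBeiJing\tChina"));
        // {HBASE_ROW_KEY=1001, cf:name=BeiJing, cf:countries=China}
    }
}
```

In this sketch a row whose field count does not match the column spec is rejected.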

(5) Use the scan command to check the imported data

hbase(main):001:0> scan 'city'

HBase2HBase

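Goal: use MapReduce to migrate part of the data in the city table into the city_mr table.

Step-by-step implementation:

(1) Build the HBaseMapper class, which reads data from the city table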

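import java.io.IOException;

import org.apache.hadoop.hbase.Cell;
import org.apache.hadoop.hbase.CellUtil;
import org.apache.hadoop.hbase.client.Put;
import org.apache.hadoop.hbase.client.Result;
import org.apache.hadoop.hbase.io.ImmutableBytesWritable;
import org.apache.hadoop.hbase.mapreduce.TableMapper;
import org.apache.hadoop.hbase.util.Bytes;

public class HBaseMapper extends TableMapper<ImmutableBytesWritable, Put> {
    @Override
    protected void map(ImmutableBytesWritable key, Result value, Context context) throws IOException, InterruptedException {
        // Copy the cf:name cell of each city row into a Put with the same row key.
        Put put = new Put(key.get());
        // Iterate over every cell in the row
        for (Cell cell : value.rawCells()) {
            // Keep only cells in column family cf
            if ("cf".equals(Bytes.toString(CellUtil.cloneFamily(cell)))) {
                // Keep only the column name
                if ("name".equals(Bytes.toString(CellUtil.cloneQualifier(cell)))) {
                    // Add the cell to the Put
                    put.add(cell);
                }
            }
        }
        // Emit the row read from city as the map output.
        // Rows without a cf:name cell are skipped, because an empty Put cannot be written.
        if (!put.isEmpty()) {
            context.write(key, put);
        }
    }
}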
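The selection logic in the mapper can be tried without a cluster. The sketch below is a plain-Java model of it under simplifying assumptions: SimpleCell is a hypothetical stand-in for an HBase Cell holding strings instead of byte arrays, and keepColumn is an illustrative helper, not an HBase API.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;

// Plain-Java model of the family/qualifier filter (hypothetical names).
final class CellFilterSketch {

    // Hypothetical stand-in for an HBase Cell.
    static final class SimpleCell {
        final String family;
        final String qualifier;
        final String value;

        SimpleCell(String family, String qualifier, String value) {
            this.family = family;
            this.qualifier = qualifier;
            this.value = value;
        }
    }

    // Return only the cells whose family and qualifier both match.
    static List<SimpleCell> keepColumn(List<SimpleCell> row, String family, String qualifier) {
        List<SimpleCell> kept = new ArrayList<>();
        for (SimpleCell cell : row) {
            if (family.equals(cell.family) && qualifier.equals(cell.qualifier)) {
                kept.add(cell);
            }
        }
        return kept;
    }

    public static void main(String[] args) {
        List<SimpleCell> row = Arrays.asList(
            new SimpleCell("cf", "name", "BeiJing"),
            new SimpleCell("cf", "countries", "China"));
        List<SimpleCell> kept = keepColumn(row, "cf", "name");
        System.out.println(kept.size() + " cell kept: " + kept.get(0).value); // 1 cell kept: BeiJing
    }
}
```

A row with no matching cell gives an empty list here. In the real mapper that case corresponds to a Put with no columns.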

(2) Build the HBaseReduce class, which writes the data read from the city table into the city_mr table

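import java.io.IOException;

import org.apache.hadoop.hbase.client.Put;
import org.apache.hadoop.hbase.io.ImmutableBytesWritable;
import org.apache.hadoop.hbase.mapreduce.TableReducer;
import org.apache.hadoop.io.NullWritable;

public class HBaseReduce extends TableReducer<ImmutableBytesWritable, Put, NullWritable> {
    @Override
    protected void reduce(ImmutableBytesWritable key, Iterable<Put> values, Context context) throws IOException, InterruptedException {
        // Write every Put produced by the map phase to the output table
        for (Put put : values) {
            context.write(NullWritable.get(), put);
        }
    }
}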

(3) Build CityToCity_mr, which extends Configured and implements Tool, to assemble and run the job

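import org.apache.hadoop.conf.Configuration;
import org.apache.hadoop.conf.Configured;
import org.apache.hadoop.hbase.HBaseConfiguration;
import org.apache.hadoop.hbase.client.Put;
import org.apache.hadoop.hbase.client.Scan;
import org.apache.hadoop.hbase.io.ImmutableBytesWritable;
import org.apache.hadoop.hbase.mapreduce.TableMapReduceUtil;
import org.apache.hadoop.mapreduce.Job;
import org.apache.hadoop.util.Tool;
import org.apache.hadoop.util.ToolRunner;

public class CityToCity_mr extends Configured implements Tool {
    @Override
    public int run(String[] strings) throws Exception {
        // Get the configuration
        Configuration conf = this.getConf();
        // Create the job
        Job job = Job.getInstance(conf, getClass().getSimpleName());
        job.setJarByClass(CityToCity_mr.class);
        // Create the scanner
        Scan scan = new Scan();
        // Set up the mapper. Import TableMapReduceUtil from the mapreduce package,
        // not from mapred, which is the old API.
        TableMapReduceUtil.initTableMapperJob(
            // Table to read from (table names are case-sensitive)
            "city",
            // Scanner
            scan,
            // Mapper class
            HBaseMapper.class,
            // Map output key and value types
            ImmutableBytesWritable.class,
            Put.class,
            // Job to configure
            job
        );
        // Set up the reducer
        TableMapReduceUtil.initTableReducerJob(
            // Table to write to
            "city_mr",
            // Reducer class
            HBaseReduce.class,
            // Job to configure
            job
        );
        // Number of reduce tasks, at least 1
        job.setNumReduceTasks(1);
        boolean status = job.waitForCompletion(true);
        return status ? 0 : 1;
    }

    public static void main(String[] args) throws Exception {
        Configuration conf = HBaseConfiguration.create();
        int status = ToolRunner.run(conf, new CityToCity_mr(), args);
        System.exit(status);
    }
}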

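Package and run the job

Important: if the target table does not exist, create it before running the job (for example, create 'city_mr','cf' in the HBase shell; the column family must match the cf cells the mapper emits).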

HDFS2HBase

Goal: write data stored in HDFS into an HBase table.

Step-by-step implementation:

Build HDFS2HBaseMapper to read the file data from HDFS

import org.apache.hadoop.hbase.client.Put;
import org.apache.hadoop.hbase.io.ImmutableBytesWritable;
import org.apache.hadoop.hbase.util.Bytes;
import org.apache.hadoop.io.LongWritable;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.Mapper;

import java.io.IOException;

public class HDFS2HBaseMapper extends Mapper<LongWritable, Text, ImmutableBytesWritable, Put> {
    @Override
    protected void map(LongWritable key, Text value, Context context) throws IOException, InterruptedException {
        // One line of the HDFS input file
        String lineValue = value.toString();
        // Split the line on \t into a String array
        String[] values = lineValue.split("\t");
        // Skip malformed lines that do not have all three fields
        if (values.length < 3) {
            return;
        }
        // Pick the fields by meaning, e.g. 1001 apple red
        String rowkey = values[0];
        String name = values[1];
        String color = values[2];
        // Build the row key
        ImmutableBytesWritable immutableBytesWritable = new ImmutableBytesWritable(Bytes.toBytes(rowkey));
        // Build the Put
        Put put = new Put(Bytes.toBytes(rowkey));
        // Arguments: column family, column qualifier, value (the parsed field, not a string literal)
        put.addColumn(Bytes.toBytes("info"), Bytes.toBytes("name"), Bytes.toBytes(name));
        put.addColumn(Bytes.toBytes("info"), Bytes.toBytes("color"), Bytes.toBytes(color));
        context.write(immutableBytesWritable, put);
    }
}

Build the HDFS2HBaseReduce class

import org.apache.hadoop.hbase.client.Put;
import org.apache.hadoop.hbase.io.ImmutableBytesWritable;
import org.apache.hadoop.hbase.mapreduce.TableReducer;
import org.apache.hadoop.io.NullWritable;

import java.io.IOException;

public class HDFS2HBaseReduce extends TableReducer<ImmutableBytesWritable, Put, NullWritable> {
    @Override
    protected void reduce(ImmutableBytesWritable key, Iterable<Put> values, Context context) throws IOException, InterruptedException {
        // Write every row that was read into the fruit_hdfs table
        for (Put put : values) {
            context.write(NullWritable.get(), put);
        }
    }
}

Create HDFS2HBase to assemble the job

import org.apache.hadoop.conf.Configuration;
import org.apache.hadoop.conf.Configured;
import org.apache.hadoop.fs.Path;
import org.apache.hadoop.hbase.HBaseConfiguration;
import org.apache.hadoop.hbase.client.Put;
import org.apache.hadoop.hbase.io.ImmutableBytesWritable;
import org.apache.hadoop.hbase.mapreduce.TableMapReduceUtil;
import org.apache.hadoop.mapreduce.Job;
import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;
import org.apache.hadoop.util.Tool;
import org.apache.hadoop.util.ToolRunner;

import java.io.IOException;

public class HDFS2HBase extends Configured implements Tool {
    @Override
    public int run(String[] strings) throws Exception {
        // Input file in HDFS
        String inputPath = "hdfs://bigdata11:9000/input_fruit/fruit.tsv";
        // Get the Configuration
        Configuration conf = this.getConf();
        // Create the job
        Job job = Job.getInstance(conf, getClass().getSimpleName());
        job.setJarByClass(HDFS2HBase.class);
        Path path = new Path(inputPath);
        FileInputFormat.addInputPath(job, path);
        // Set up the mapper (the HDFS mapper, not the HBaseMapper from HBase2HBase)
        job.setMapperClass(HDFS2HBaseMapper.class);
        job.setMapOutputKeyClass(ImmutableBytesWritable.class);
        job.setMapOutputValueClass(Put.class);
        // Set up the reducer
        TableMapReduceUtil.initTableReducerJob("fruit_hdfs", HDFS2HBaseReduce.class, job);
        // Number of reduce tasks
        job.setNumReduceTasks(1);
        boolean isSuccess = job.waitForCompletion(true);
        if (!isSuccess) {
            throw new IOException("Job running with error");
        }
        return isSuccess ? 0 : 1;
    }

    public static void main(String[] args) throws Exception {
        Configuration conf = HBaseConfiguration.create();
        int status = ToolRunner.run(conf, new HDFS2HBase(), args);
        System.exit(status);
    }
}

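Package and run

Important: if the target table does not exist, create it before running the job (for example, create 'fruit_hdfs','info' in the HBase shell; the column family must match the info family the mapper writes).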
