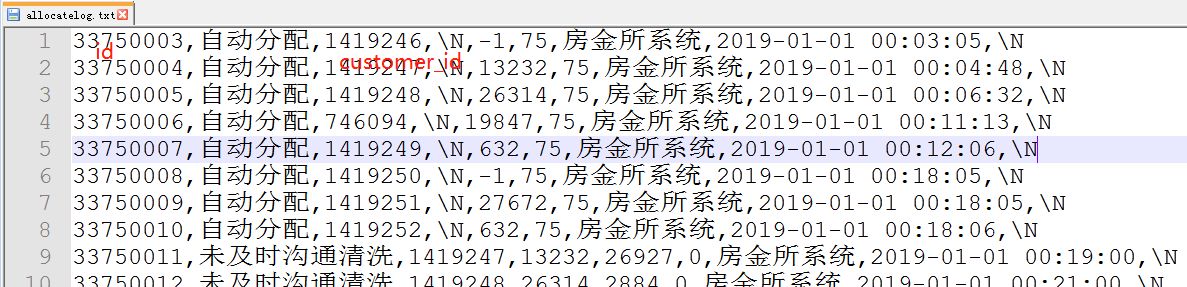

Data file format

MapReduce code written for the data file above

package first.first_maven;

import java.io.IOException;

import org.apache.hadoop.conf.Configuration;
import org.apache.hadoop.fs.Path;
import org.apache.hadoop.io.IntWritable;
import org.apache.hadoop.io.LongWritable;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.Job;
import org.apache.hadoop.mapreduce.Mapper;
import org.apache.hadoop.mapreduce.Reducer;
import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;
import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;

/*
 * Emulates Hive's rank function and keeps only the first row per key.
 */

public class AllocateLog {

    public static class MyMapper extends Mapper<LongWritable, Text, IntWritable, IntWritable> {
        @Override
        protected void map(LongWritable key, Text value, Context context)
                throws IOException, InterruptedException {
            String[] words = value.toString().split(",");
            // Emit customer_id (column 2) as the key and the allocation id (column 0) as the value.
            context.write(new IntWritable(Integer.parseInt(words[2])),
                    new IntWritable(Integer.parseInt(words[0])));
        }
    }

    public static class MyReducer extends Reducer<IntWritable, IntWritable, IntWritable, IntWritable> {
        @Override
        protected void reduce(IntWritable key, Iterable<IntWritable> values, Context context)
                throws IOException, InterruptedException {
            // Pick the first (smallest) allocation id for each customer_id.
            // Integer.MAX_VALUE is a safe starting point for any int id.
            int min = Integer.MAX_VALUE;
            for (IntWritable v : values) {
                if (v.get() < min) {
                    min = v.get();
                }
            }
            context.write(key, new IntWritable(min));
        }
    }

    public static void main(String[] args) throws Exception {
        Configuration conf = new Configuration();
        Job job = Job.getInstance(conf, "myjob");
        // Locate the job jar from this driver class.
        job.setJarByClass(AllocateLog.class);

        // Map phase: customer_id -> allocation id.
        job.setMapperClass(MyMapper.class);
        job.setMapOutputKeyClass(IntWritable.class);
        job.setMapOutputValueClass(IntWritable.class);
        FileInputFormat.addInputPath(job, new Path(args[0]));

        // Reduce phase: customer_id -> smallest allocation id.
        job.setReducerClass(MyReducer.class);
        job.setOutputKeyClass(IntWritable.class);
        job.setOutputValueClass(IntWritable.class);
        FileOutputFormat.setOutputPath(job, new Path(args[1]));

        // Exit with 0 on success and 1 on failure.
        int exitCode = job.waitForCompletion(true) ? 0 : 1;
        System.exit(exitCode);
    }
}
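
To check the logic without a cluster, the same map, group, and reduce steps can be sketched in plain Java. This is only an illustration: the class name `FirstAllocationSketch`, the method `firstAllocation`, and the sample rows are hypothetical, and the middle column is assumed to be irrelevant because the job never reads it.

```java
import java.util.Arrays;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

// Hadoop-free sketch of the job's logic; all names here are hypothetical.
class FirstAllocationSketch {

    // Key = column 2 (customer_id), value = smallest column 0 (allocation id).
    static Map<Integer, Integer> firstAllocation(List<String> lines) {
        Map<Integer, Integer> result = new TreeMap<>();
        for (String line : lines) {
            String[] words = line.split(",");
            int allocationId = Integer.parseInt(words[0]);
            int customerId = Integer.parseInt(words[2]);
            // merge keeps the smaller id, playing the role of the reducer loop.
            result.merge(customerId, allocationId, Integer::min);
        }
        return result;
    }

    public static void main(String[] args) {
        // Hypothetical rows: allocation id, an unused column, customer_id.
        List<String> lines = Arrays.asList(
                "5,a,100",
                "3,b,100",
                "9,c,200",
                "4,d,200",
                "7,e,300");
        System.out.println(firstAllocation(lines)); // prints {100=3, 200=4, 300=7}
    }
}
```

Because taking a minimum is associative and commutative, the same reducer class could probably also be registered as a combiner via `job.setCombinerClass(MyReducer.class)` to reduce shuffle traffic; the original job does not do this.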

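This article shows one way to emulate Hive's rank function with the MapReduce programming model: for each customer_id, it selects the first (smallest) allocation id. Using custom Mapper and Reducer classes, it demonstrates how to parse the input data, apply the business logic, and configure and run the MapReduce job.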
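
The parsing step assumes every line has at least three comma-separated fields with integers in columns 0 and 2, so a header row or blank line would raise an exception and fail the task. One possible guard is sketched below; `LineParserSketch` and `parse` are hypothetical names, and whether bad rows should be skipped or should fail the job depends on the data set.

```java
import java.util.Optional;

// Defensive line parsing sketch; names are hypothetical, not from the original job.
class LineParserSketch {

    // Returns {customerId, allocationId}, or empty when the line cannot be parsed.
    static Optional<int[]> parse(String line) {
        if (line == null) {
            return Optional.empty();
        }
        String[] words = line.split(",");
        if (words.length < 3) {
            return Optional.empty(); // too few columns
        }
        try {
            int allocationId = Integer.parseInt(words[0].trim());
            int customerId = Integer.parseInt(words[2].trim());
            return Optional.of(new int[] {customerId, allocationId});
        } catch (NumberFormatException e) {
            return Optional.empty(); // header row or non-numeric field
        }
    }

    public static void main(String[] args) {
        System.out.println(parse("5,a,100").isPresent());             // prints true
        System.out.println(parse("id,name,customer_id").isPresent()); // prints false
    }
}
```

Inside the mapper, a call such as `parse(value.toString())` could replace the direct `Integer.parseInt` calls, writing to the context only when a result is present.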
