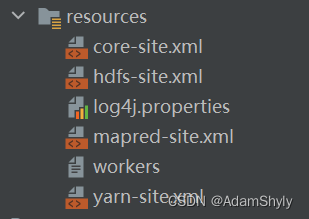

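In this post I ran the main function of an MR program directly from IDEA on my local machine, instead of packaging it as a jar and copying it to the server by hand, and got the following error message: No job jar file set. I also found no jar for this project under /tmp on HDFS. This suggests that after the job was submitted to YARN, the project was never packaged into a jar and sent to the ResourceManager locally, so the Mapper and Reducer classes could not be found.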
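One likely explanation for the message, based on how Hadoop's `Job.setJarByClass` behaves: if the driver calls `job.setJarByClass(...)`, as WordCount drivers usually do, Hadoop looks for the jar that contains that class. When the class is loaded from a directory such as IDEA's `target/classes` instead of from a jar, nothing is found and the job jar stays unset. The pure-JDK sketch below imitates that lookup so you can check where your driver class is loaded from. It is a simplified model of the idea behind Hadoop's internal `ClassUtil.findContainingJar`, not a copy of it, and the class name `JarLocator` is hypothetical.

```java
import java.io.IOException;
import java.net.URL;
import java.net.URLDecoder;
import java.nio.charset.StandardCharsets;
import java.util.Enumeration;

public class JarLocator {

    // Turns the path part of a jar URL, e.g. "file:/opt/app.jar!/com/x/Foo.class",
    // into the jar's file path, e.g. "/opt/app.jar".
    static String jarPathFromUrlPath(String urlPath) {
        String path = urlPath.startsWith("file:") ? urlPath.substring("file:".length()) : urlPath;
        path = URLDecoder.decode(path, StandardCharsets.UTF_8); // %20 -> space, etc.
        return path.replaceAll("!.*$", "");                    // drop "!/entry/inside/jar"
    }

    // Returns the jar containing cls, or null if the class was loaded from a
    // plain directory such as target/classes (the IDEA case).
    static String findContainingJar(Class<?> cls) throws IOException {
        ClassLoader loader = cls.getClassLoader();
        if (loader == null) { // bootstrap classes have no ClassLoader object
            loader = ClassLoader.getSystemClassLoader();
        }
        String classFile = cls.getName().replace('.', '/') + ".class";
        Enumeration<URL> urls = loader.getResources(classFile);
        while (urls.hasMoreElements()) {
            URL url = urls.nextElement();
            if ("jar".equals(url.getProtocol())) {
                return jarPathFromUrlPath(url.getPath());
            }
        }
        return null; // no jar found, so there would be nothing to ship to YARN
    }

    public static void main(String[] args) throws IOException {
        // From IDEA this prints null; from a packaged jar it prints the jar's path.
        System.out.println(findContainingJar(JarLocator.class));
    }
}
```

If this prints null for your driver class, Hadoop most likely has no jar to upload either, which is consistent with the missing jar under /tmp on HDFS.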

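So the overall idea is to package the project into a jar with Maven, then add a mapreduce.job.jar setting to the XML configuration that points to where that jar is stored locally. Note that this path must not be absolute; it must be relative (with the project directory as the root). Otherwise the submission still fails: the No job jar file set message goes away, but the classes still cannot be found.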
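Before submitting, it can help to fail fast when the jar has not been built yet or when an absolute path slips in, matching the observation above that only a path relative to the project root worked. This is a hypothetical pure-JDK helper: `checkJobJar` and the jar name `target/wordcount-1.0-SNAPSHOT.jar` are assumptions. Relative paths resolve against the JVM's working directory (`user.dir`), which in an IDEA run configuration is typically the project root. The returned value is meant for `mapreduce.job.jar`, set either in the XML configuration as described above or, equivalently, in code via Hadoop's `Configuration.set`.

```java
import java.nio.file.Files;
import java.nio.file.Path;
import java.nio.file.Paths;

public class JobJarCheck {

    // Checks that a relative job-jar path exists under the working directory
    // and returns it unchanged, ready to be used as mapreduce.job.jar.
    public static String checkJobJar(String relativeJar) {
        Path jar = Paths.get(relativeJar);
        if (jar.isAbsolute()) {
            throw new IllegalArgumentException(
                    "Use a path relative to the project root, not: " + relativeJar);
        }
        // Relative paths resolve against user.dir, the run configuration's working directory.
        Path resolved = Paths.get(System.getProperty("user.dir")).resolve(jar);
        if (!Files.isRegularFile(resolved)) {
            throw new IllegalStateException(
                    "Job jar not found at " + resolved + "; has `mvn package` been run?");
        }
        return relativeJar;
    }

    public static void main(String[] args) {
        // Hypothetical artifact name; use whatever `mvn package` produces for your project.
        String jar = checkJobJar("target/wordcount-1.0-SNAPSHOT.jar");
        System.out.println("mapreduce.job.jar = " + jar);
        // In a Hadoop driver this value would go into: conf.set("mapreduce.job.jar", jar);
    }
}
```

Returning the relative string unchanged (instead of the resolved absolute path) keeps the value in the form that worked here.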

```java
package com.atguigu.mapreduce.wordcount;

import org.apache.hadoop.conf.Configuration;
import org.apache.hadoop.fs.Path;
import org.apache.hadoop.io.IntWritable;
import org.apache.hadoop.io.LongWritable;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.Job;
import org.apache.hadoop.mapreduce.Mapper;
import org.apache.hadoop.mapreduce.Reducer;
import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;
import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;
```
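The imports above are those of a typical WordCount job: the Mapper usually reads (LongWritable offset, Text line) and emits (Text word, IntWritable 1), and the Reducer sums the counts per word. As a Hadoop-free illustration of what such a Mapper and Reducer compute, the sketch below simulates the map, shuffle, and reduce steps in plain Java. It is not the author's driver and uses no Hadoop types; the class and method names are hypothetical.

```java
import java.util.AbstractMap;
import java.util.ArrayList;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

public class WordCountSimulation {

    // Map step: like a WordCount Mapper, emit (word, 1) for every word in the line.
    static List<Map.Entry<String, Integer>> map(String line) {
        List<Map.Entry<String, Integer>> out = new ArrayList<>();
        for (String word : line.split("\\s+")) {
            if (!word.isEmpty()) {
                out.add(new AbstractMap.SimpleEntry<>(word, 1));
            }
        }
        return out;
    }

    // Shuffle step: group values by key; TreeMap keeps keys sorted, as Hadoop sorts map output keys.
    static Map<String, List<Integer>> shuffle(List<Map.Entry<String, Integer>> pairs) {
        Map<String, List<Integer>> grouped = new TreeMap<>();
        for (Map.Entry<String, Integer> p : pairs) {
            grouped.computeIfAbsent(p.getKey(), k -> new ArrayList<>()).add(p.getValue());
        }
        return grouped;
    }

    // Reduce step: like a WordCount Reducer, sum the 1s for each word.
    static Map<String, Integer> reduce(Map<String, List<Integer>> grouped) {
        Map<String, Integer> counts = new TreeMap<>();
        grouped.forEach((word, ones) ->
                counts.put(word, ones.stream().mapToInt(Integer::intValue).sum()));
        return counts;
    }

    static Map<String, Integer> wordCount(List<String> lines) {
        List<Map.Entry<String, Integer>> pairs = new ArrayList<>();
        for (String line : lines) {
            pairs.addAll(map(line));
        }
        return reduce(shuffle(pairs));
    }

    public static void main(String[] args) {
        // Prints {hadoop=1, hello=2, yarn=1}
        System.out.println(wordCount(List.of("hello hadoop", "hello yarn")));
    }
}
```

On a real cluster these steps run in separate task JVMs, which is exactly why YARN needs the jar containing the Mapper and Reducer classes.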

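Summary: this article describes the No job jar file set error encountered when running a MapReduce program directly in IDEA. The cause is that the job is not packaged into a jar and submitted to the ResourceManager. The solution is to package the project into a jar with Maven and set mapreduce.job.jar in the configuration to the jar's relative path. Even after the No job jar file set problem is solved, however, a class-not-found error may still occur. The article provides a complete WordCountDriver code example showing how to set up the Job and its Mapper and Reducer classes.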
